What exactly transforms communication platforms into attention capture machines — defining the problem space

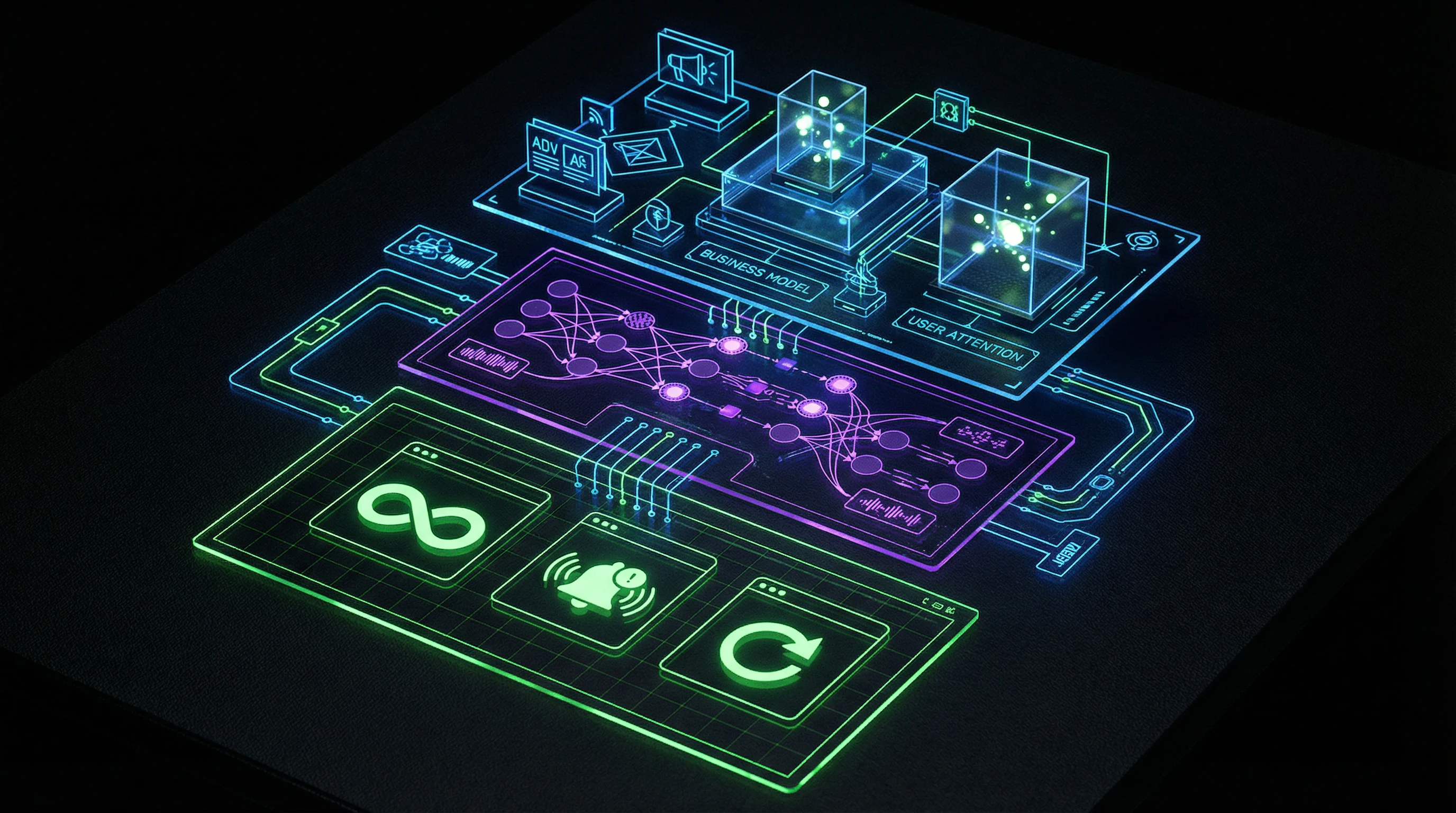

The term "social media" masks a fundamental transformation of the platforms' business model. Initially positioned as tools for maintaining connections, modern platforms function as behavioral modification systems in which the product is not the communication service but the user's attention itself, packaged and sold to advertisers. More details in the Logic and Probability section.

The key distinction between a "social network" and an "attention capture platform" lies in the architecture of incentives: the former optimizes for the quality of connections, the latter for engagement metrics, regardless of their impact on user wellbeing.

⚠️ Why "just don't use it" doesn't work — asymmetry between user and system

A common misconception is that the social media problem can be solved by individual willpower. This position ignores a fundamental asymmetry. On one side are billions of dollars, thousands of engineers, and petabytes of behavioral data optimizing every interface pixel for attention retention; on the other is an individual with limited cognitive resources, evolutionarily unadapted to resisting such stimuli.

Even awareness of manipulation doesn't neutralize its effect — knowing about a cognitive bias doesn't prevent its automatic activation (S004).

🧱 Three levels of addiction architecture

- Interface level: Infinite scroll, variable reinforcement (content unpredictability), minimized friction for content consumption and maximized friction for exiting the app. More on the mechanics of this process in the article "Infinite Scroll and the Dopamine Trap."

- Algorithm level: Personalized recommendations optimized not for user interests, but for maximizing usage time; prioritization of emotionally charged content that generates more interactions.

- Business model level: Monetization through advertising creates a direct financial incentive to retain users as long as possible, turning their behavioral data into a commodity for targeted advertising.

🔎 The boundary between tool and manipulation

The critical distinction lies between design that helps users achieve their goals, and design that substitutes the user's goals with the platform's goals. A tool expands agency — a person's ability to act in accordance with their intentions.

| Tool | Manipulative system |
|---|---|
| Expands user agency | Reduces agency, creating a gap between intentions and behavior |
| Serves user goals | Substitutes user goals with platform goals |
| Transparent in its mechanisms | Uses "dark patterns" (interface decisions that deceive or manipulate) |

The gap between stated intentions ("I'll check notifications and close") and actual behavior (40 minutes of aimless scrolling) is not personal weakness but the result of deliberate engineering design.

Seven Arguments Defending the Current Social Media Model — Steelmanning the Industry Position

Objective analysis requires presenting the opposing position in its most convincing form. The steelman approach excludes straw man fallacies. Learn more in the Epistemology section.

💎 The Argument from Freedom of Choice and Personal Responsibility

Industry defenders argue: no one is forced to use platforms, install apps, or spend time on them. Every interaction results from a voluntary decision.

The problem, according to this position, lies not in design but in lack of self-control among individual users. The solution belongs to the realm of personal responsibility and digital literacy, not regulation of business models. The argument appeals to libertarian values of individual autonomy.

🌐 The Argument from Social Value and Democratization of Communication

Social networks have provided unprecedented opportunities for global communication, organizing social movements, and accessing information. The Arab Spring, the #MeToo movement, coordination of humanitarian aid during crises — all became possible thanks to platforms.

Negative effects, according to this position, are the inevitable price for fundamental social goods. Attempts to limit engagement mechanisms could undermine the very functionality of platforms.

⚙️ The Argument from Technological Necessity of Personalization

Under conditions of information overload, algorithmic content curation is not manipulation but a necessity. Without personalized recommendations, users would drown in a chaos of irrelevant information.

Engagement metrics (usage time, interactions) serve as proxies for user satisfaction: people spend time on platforms because they derive value from it.

The current model is presented as a technical solution to a real problem of scale, not as a manipulation tool.

📈 The Argument from Economic Sustainability of Free Services

The advertising model enables providing complex technological services free to billions of users, including those who cannot afford paid subscriptions.

Alternative models (subscriptions, micropayments) would create digital inequality, where access to communication tools is determined by ability to pay. Changing the business model would require either charging fees or government funding — each carries its own problems.

🔬 The Argument from Absence of Definitive Proof of Harm

Critics often cite correlations between social media use and negative psychological effects. But correlation does not prove causation (S003, S004).

Perhaps people predisposed to depression more frequently use social networks as a coping mechanism, not the other way around. Long-term randomized controlled studies yield mixed results. In the absence of irrefutable evidence of direct harm, radical changes are premature.

- Correlation between usage and psychological problems is established, but the direction of causality is unclear.

- Studies show mixed results regarding direct harm.

- Alternative explanations (user selection, coping behavior) are not excluded.

- Regulation based on hypothetical risks may eliminate real benefits.

🛠️ The Argument from Control Tools Already Provided to Users

Modern platforms offer numerous tools for managing experience: privacy settings, content filters, usage timers, notification disabling, blocking and unfollowing.

If people don't use available control mechanisms, this indicates either insufficient motivation to change or satisfaction with the current experience. The problem is not absence of tools but their insufficient use.

🌍 The Argument from Comparative Perspective with Other Media

Moral panics around new media technologies are a historically recurring pattern. Similar concerns were expressed about novels in the 18th century, radio in the 1920s, television in the 1950s, and video games in the 1990s.

In each case, society adapted, developed usage norms, and catastrophic predictions did not materialize. Social networks are simply the next iteration of this cycle. Current criticism reflects not unique properties of platforms but general discomfort with technological change.

| Media Technology | Panic Period | Predicted Harm | Actual Outcome |
|---|---|---|---|
| Novels | 18th century | Mental degradation, moral corruption | Adaptation, usage norms |
| Radio | 1920s | Destruction of family life, mass manipulation | Adaptation, usage norms |
| Television | 1950s | Passivity, degradation of critical thinking | Adaptation, usage norms |
| Video Games | 1990s | Aggression, addiction, social isolation | Adaptation, usage norms |

However, this position overlooks a qualitative difference: infinite scroll and dopamine traps are built into platform architecture intentionally, whereas earlier media were consumed passively. Moreover, algorithmic amplification lets modern myths spread at speeds unattainable for traditional media.

Evidence Base: What Research Shows About Social Platform Mechanisms and Effects

Moving from arguments to empirical data, we need to systematically examine scientific evidence about social media's impact on user behavior, psychological state, and cognitive processes. More details in the Epistemology Basics section.

📊 Neurobiological Research on Dopamine Loops and Variable Reinforcement

Unpredictable stimuli activate the dopamine system significantly more strongly than predictable rewards (S004). This explains the effectiveness of variable reinforcement in social media: users don't know whether the next feed refresh contains something interesting, creating constant motivation to check.

The mechanism is identical to that used in slot machines, where unpredictability of winning creates stronger addiction than guaranteed rewards. Neuroimaging studies show activation of the same brain regions (ventral tegmental area, nucleus accumbens) when receiving social feedback online as when using psychoactive substances.

Variable reinforcement in social media works not as a side effect of design, but as its central mechanism — exactly like in casinos.
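One way to make the role of unpredictability concrete is a reward prediction error model, where the "surprise" on each trial is the difference between the reward received and the reward expected. The sketch below is a textbook-style simplification, not a model taken from the cited studies; the function name `mean_late_surprise`, the learning rate, and the trial counts are illustrative assumptions.

```python
import random

def mean_late_surprise(reward_prob, trials=500, alpha=0.1, seed=0):
    """Average absolute prediction error over the last 100 trials.

    reward_prob: chance that a single "feed refresh" pays off (assumed).
    alpha: learning rate for updating the expectation (assumed).
    """
    rng = random.Random(seed)
    expected = 0.0
    errors = []
    for _ in range(trials):
        reward = 1.0 if rng.random() < reward_prob else 0.0
        error = reward - expected      # prediction error ("surprise")
        expected += alpha * error      # expectation moves toward the outcome
        errors.append(abs(error))
    late = errors[-100:]
    return sum(late) / len(late)

predictable = mean_late_surprise(1.0)  # reward arrives on every check
variable = mean_late_surprise(0.5)     # reward arrives about half the time

print(f"predictable reward: late surprise = {predictable:.4f}")
print(f"variable reward:    late surprise = {variable:.4f}")
```

Under these assumptions, the surprise signal fades to almost nothing when the reward is guaranteed, because the expectation catches up with it. With an unpredictable reward, the expectation can never match individual outcomes, so the signal stays large on every check.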

🧪 Experimental Data on Psychological Well-Being Impact

Correlational studies don't prove causation, but experimental work with more rigorous design paints a different picture. When participants are randomly assigned to groups with limited and unlimited social media access, reduced usage leads to measurable improvement in well-being indicators, decreased depression and anxiety symptoms, and better sleep quality (S002, S004).

The effect is especially pronounced in users with initially high usage levels. This data points to a causal relationship, not just correlation.

📉 Research on Information Diversity and Echo Chambers

Traffic source analysis shows that algorithmic recommendations systematically narrow the spectrum of consumed information (S001). Users relying on personalized feeds demonstrate less source diversity compared to those actively seeking content.

Algorithms optimize for engagement, which in practice means prioritizing content that confirms users' existing beliefs, since such content generates more interactions. This creates an echo chamber effect not as a byproduct, but as a direct consequence of the algorithm's optimization function.
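The narrowing effect can be illustrated with a toy ranking function. This is a minimal sketch under a strong assumption: predicted engagement falls off linearly as a post's stance moves away from the user's own. The `Post` class, the stance scale, and the scoring rule are hypothetical and do not describe any real platform's ranking system.

```python
from dataclasses import dataclass

@dataclass
class Post:
    title: str
    stance: float  # hypothetical position on an issue, from -1.0 to 1.0

def predicted_engagement(user_stance, post):
    # Assumption: the closer a post is to the user's stance,
    # the more likely the user is to interact with it.
    return 1.0 - abs(user_stance - post.stance) / 2.0

def rank_by_engagement(user_stance, posts, k):
    # Keep only the k posts with the highest predicted engagement.
    ranked = sorted(posts,
                    key=lambda p: predicted_engagement(user_stance, p),
                    reverse=True)
    return ranked[:k]

def stance_spread(posts):
    # Range of viewpoints present in a set of posts.
    stances = [p.stance for p in posts]
    return max(stances) - min(stances)

posts = [Post(f"post {i}", s)
         for i, s in enumerate([-1.0, -0.6, -0.2, 0.2, 0.6, 1.0])]
feed = rank_by_engagement(user_stance=0.8, posts=posts, k=3)

print("spread of all available posts:", stance_spread(posts))
print("spread of the ranked feed:    ", stance_spread(feed))
```

Nothing in the objective mentions diversity, so the feed narrows without anyone choosing that outcome. A ranking that wanted to preserve breadth would need an explicit term for it.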

🧾 Data on Usage Patterns and Intention-Behavior Gap

User self-report studies reveal a systematic gap between intentions and actual behavior. Most users report wanting to reduce social media time and dissatisfaction with the amount of time they actually spend on platforms.

This gap is a key indicator that usage is not fully voluntary in the sense of matching users' reflective preferences. Objective usage data (time in app, check frequency) systematically exceeds users' subjective estimates, indicating automated, habitual interaction patterns.

| Metric | Subjective Estimate | Objective Data | Gap |
|---|---|---|---|
| Time in app | "About an hour a day" | 2–3 hours per day | 2–3x underestimation |
| Check frequency | "A few times" | 50–100+ times per day | 10–20x underestimation |
| Intention to reduce | "Want to use less" | Usage increases | Behavior contradicts intention |

🔍 Attention and Cognitive Effects Research

Constant attention switching between tasks and checking notifications creates cognitive load that reduces capacity for concentration and deep work (S002). Even the presence of a smartphone in the visual field (without active use) reduces available cognitive resources — an effect known as "brain drain."

Chronic social media use is associated with changes in sustained attention capacity and increased susceptibility to distraction. This relates to infinite scrolling and its impact on attention architecture.

📱 Data on Design Patterns and Their Effectiveness in User Retention

Internal documents from tech companies made available through leaks and legal proceedings confirm deliberate use of psychological principles to maximize usage time. A/B testing of different interface solutions systematically selects variants that increase engagement metrics, regardless of their impact on user well-being.

Companies are aware of their products' negative effects on certain user groups (especially adolescents), but prioritize growth and engagement over safety.

The documents show that design choices are not accidental but the consequence of targeted optimization for metrics that run directly against user interests. This creates a collective digital unconscious, where individual algorithmic decisions compound into systemic effects.
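The selection dynamic described here can be sketched as a variant picker whose objective contains only an engagement metric. Everything in the example is hypothetical: the `Variant` fields, the variant names, and all numbers are invented for illustration and are not drawn from any leaked document or real experiment.

```python
from dataclasses import dataclass

@dataclass
class Variant:
    name: str
    minutes_per_day: float  # engagement metric the test optimizes (invented)
    wellbeing_score: float  # recorded, but never read by the selector (invented)

def pick_winner(variants):
    # The objective sees engagement only; wellbeing never enters the decision.
    return max(variants, key=lambda v: v.minutes_per_day)

variants = [
    Variant("paginated feed", 31.0, 6.8),
    Variant("infinite scroll", 47.0, 5.9),
    Variant("infinite scroll + autoplay", 58.0, 5.1),
]

winner = pick_winner(variants)
print(f"shipped: {winner.name} (wellbeing score {winner.wellbeing_score})")
```

With these made-up numbers, the variant that ships is also the one with the lowest wellbeing score. No step in the process selects for harm; the metric is simply absent from the objective, so repeated rounds of testing can drift in that direction.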

Mechanism of Impact: How Algorithms Modify Behavior at the Neurobiological Level

Understanding the mechanism is critical for distinguishing causality from correlation. It's necessary to explain not only what happens, but how exactly platform architecture creates the observed effects. More details in the section AI Errors and Biases.

🔁 The Habit Loop: Trigger, Routine, Reward

Social networks exploit the basic neurobiological architecture of habit formation. Trigger (boredom, anxiety, anticipation) → routine (opening the app, scrolling) → reward (interesting content, social validation) → strengthening of the connection between trigger and routine.

After sufficient repetitions, the process becomes automated: the trigger directly activates the routine without a conscious decision. Variable reward (sometimes content is interesting, sometimes not) strengthens the loop and makes it more resistant to extinction, the same mechanism that underlies infinite scroll and the dopamine trap.

Users often find themselves in the app without remembering a conscious decision to open it. This isn't forgetfulness—it's automaticity built into the architecture.

🧬 Social Comparison and Status Anxiety

Evolutionarily, humans are sensitive to social status and comparison with the group—a mechanism critical for survival in small hunter-gatherer bands. Social networks exploit this mechanism, creating a constant stream of information about others' achievements, appearance, and lifestyle.

The critical distinction: in traditional social contexts, comparison occurs with a limited, relevant group; on social networks, it occurs with carefully curated, unrepresentative images of thousands of people. Algorithms amplify the effect by prioritizing content that generates strong emotional reactions, including envy and feelings of inadequacy.

| Context | Comparison Group | Representativeness | Effect on Perception |
|---|---|---|---|
| Traditional society | Neighbors, colleagues, friends | High (real people) | Adequate perception of norm |
| Social networks | Thousands of curated profiles | Low (selected moments) | Systematic distortion of norm |

⚡ Cognitive Overload and Lowered Threshold for Impulsive Decisions

The constant stream of information and notifications creates a state of chronic cognitive overload. Research shows that under overload conditions, people switch from reflective (slow, analytical) to impulsive (fast, heuristic) thinking (S004).

This reduces the ability for critical evaluation of information and increases susceptibility to manipulation. Social network design systematically minimizes friction for impulsive actions (one-tap like, video autoplay) and maximizes friction for reflective ones (complex privacy settings, absence of simple tools for tracking usage time).

- Reflective Thinking: Slow, analytical, requires cognitive resources. Includes critical evaluation, fact-checking, conscious decision-making. Shuts down first under overload.

- Impulsive Thinking: Fast, heuristic, based on patterns and emotions. Conserves resources but is vulnerable to manipulation and errors. Activates under cognitive overload.

- Platform Architectural Design: Deliberately creates conditions for impulsive thinking, minimizing opportunity for reflection. This isn't a bug; it's a feature.

🕳️ FOMO Effect and Anxiety of Missing Out

Fear of missing out (FOMO) is a psychological phenomenon amplified by social network architecture. The constant stream of updates creates the illusion that important events are happening continuously and that being offline means exclusion from social life.

Checking the platform relieves this anxiety temporarily but strengthens long-term dependence. The mechanism is analogous to negative reinforcement in behavioral psychology: behavior is strengthened not by a reward but by the removal of an unpleasant state. The parallel with gambling mechanics is direct: both systems rely on variable reinforcement and anxiety as primary drivers.

FOMO works not because events are actually important, but because platform architecture creates a condition where absence of information is perceived as a threat to social status.

Conflicting Data and Areas of Uncertainty — Where Research Diverges

Scientific integrity requires acknowledgment: there are areas where data is ambiguous or directly contradictory. More details in the Science News section.

🔀 Direction of Causality: Use as Cause or Consequence

The central methodological problem: separating the effect of social media use from the effect of predisposition. People with low self-esteem or social anxiety may use platforms more — and then observed correlations reflect selection rather than causal influence.

Experimental studies with restricted access partially solve this problem (S003), but not completely: the effect may be temporary, and long-term consequences remain unclear. An ideal study would require random assignment of people to conditions with and without access over years, which is ethically and practically impossible.

Correlation ≠ causation. Even a statistically significant relationship may reflect a third factor or reverse direction of influence.
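The selection problem can be shown with a small simulation in which usage is given zero causal effect on wellbeing by construction. This is a sketch of the methodological point only, not a claim about the real size or direction of any effect; the variable names, the normal distributions, and the sample size are assumptions.

```python
import random

def pearson(xs, ys):
    # Plain Pearson correlation coefficient.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def simulate(randomized, n=2000, seed=1):
    rng = random.Random(seed)
    usage, wellbeing = [], []
    for _ in range(n):
        predisposition = rng.gauss(0, 1)  # hidden third factor
        if randomized:
            # Experimental design: usage is assigned independently of the person.
            u = rng.gauss(0, 1)
        else:
            # Observational data: predisposed people self-select into heavier use.
            u = predisposition + rng.gauss(0, 1)
        # Wellbeing depends on predisposition only; usage never enters.
        w = -predisposition + rng.gauss(0, 1)
        usage.append(u)
        wellbeing.append(w)
    return pearson(usage, wellbeing)

observational_r = simulate(randomized=False)
randomized_r = simulate(randomized=True)

print(f"observational correlation: {observational_r:.2f}")
print(f"randomized correlation:    {randomized_r:.2f}")
```

The observational data shows a clearly negative correlation even though usage does nothing in this toy world, while random assignment removes it. This is why restricted-access experiments carry more weight than surveys, and why a result that survives randomization is harder to attribute to selection.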

📐 Effect Size and Clinical Significance

Studies often show statistically significant effects, but their size is small. The question: is this clinically significant? Some researchers compare effects with other recognized risk factors for psychological well-being; others consider them too small for radical policy changes.

The problem is compounded by heterogeneity: the impact may be strong for adolescents or people predisposed to disorders and minimal for others (S004).

- Statistical Significance: An indication that a result at least as large as the one observed would be unlikely if there were no true effect. It does not give the probability that the finding is real, and it says nothing about the magnitude or practical importance of the effect.

- Effect Size: How large the difference between groups is. An effect can be statistically significant yet practically negligible.

- Clinical Significance: Whether the effect makes a difference in a person's real life. Judging it requires context and professional judgment.
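The difference between the first two concepts can be checked with a few lines of arithmetic. The sketch below uses a normal approximation for comparing two equal-sized groups with unit variance, where the test statistic is z = d * sqrt(n / 2) for a standardized effect size d. The function name `two_sided_p`, the effect size of 0.05, and the sample sizes are illustrative assumptions, not figures from the cited research.

```python
import math

def two_sided_p(cohens_d, n_per_group):
    # z statistic for a difference in means between two equal-sized groups
    # with unit variance (normal approximation).
    z = cohens_d * math.sqrt(n_per_group / 2)
    # Two-sided p-value under the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))

tiny_effect = 0.05  # a very small standardized difference (assumed)

p_small_sample = two_sided_p(tiny_effect, 50)
p_large_sample = two_sided_p(tiny_effect, 20_000)

print(f"n = 50 per group:     p = {p_small_sample:.3f}")
print(f"n = 20,000 per group: p = {p_large_sample:.2e}")
```

The effect size is identical in both cases; only the sample size changes. With enough participants, even a negligible difference clears the significance threshold, which is why a p-value alone cannot settle whether an effect matters.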

🌐 Cultural and Contextual Variability

Most research is conducted in Western, Educated, Industrialized, Rich, and Democratic (WEIRD) societies. The generalizability of results to other cultural contexts is unclear.

Social norms of technology use, platform structure, availability of alternative forms of interaction — all vary between cultures and may moderate effects. Studies from non-Western contexts yield mixed results (S005), pointing to the need for nuanced understanding of conditions under which effects manifest.

- Check which countries/cultures the research was conducted in.

- Assess how closely research conditions match your context.

- Look for local studies or data specific to your region.

- Consider cultural differences in attitudes toward technology and social interaction.

Honest summary: where data is contradictory, caution in conclusions is needed. This does not mean there is no problem — it means mechanisms and scales require further study.

Cognitive Anatomy of the Harmlessness Myth — Which Psychological Mechanisms Sustain the Illusion of Control

Why do people underestimate the influence of social media on their behavior? The answer lies not in lack of information, but in the architecture of perception itself.

🧩 The Illusion of Mental Transparency

People systematically overestimate the degree to which their behavior reflects conscious intentions, and underestimate the influence of automatic processes.

We believe we see ourselves from the inside completely. In reality, we only see what we've managed to become aware of — the rest remains off-screen.

When an algorithm suggests content, the user perceives the choice as their own. They don't notice they were choosing from a pre-filtered set — this is called the illusion of free choice.

Three Mechanisms That Sustain the Illusion of Control

- Self-attribution of causality. The user explains their behavior through internal motives ("I'm interested"), ignoring external stimuli (algorithm, design, time of day).

- Selective attention to successes. They remember the moments when control worked and forget those when it failed or was absent altogether.

- Post-hoc narrative. After an action, they construct a logical story about why they did it, even if the decision was impulsive.

These mechanisms work not because people are foolish, but because consciousness evolved for other tasks — it handles visible threats well, but is blind to invisible architectures.

The illusion of control intensifies when the platform gives users micro-level management (filter choices, privacy settings) while maintaining macro-level dependency (the algorithm remains a black box).

Research shows that even informed users who know about manipulation continue to fall for the same traps (S001). Knowledge ≠ protection if it doesn't translate into behavior change.

The gap between what a person thinks about their behavior and what they actually do isn't a bug in the perception system; it's a fundamental feature. Platforms have simply learned to exploit this gap.