What is digital physiognomy and why it didn't disappear along with phrenology

Physiognomy, the practice of determining character, abilities, and inclinations from facial features, has a millennia-long history. Its scientific version, phrenology, emerged in the early 19th century thanks to Franz Joseph Gall, who claimed that skull shape reflected the development of different brain regions and, consequently, personality traits.

By the end of the 19th century, phrenology was completely discredited: no correlations between skull shape and psychological characteristics were found. It seemed the story was over.

But the story wasn't over—it just dressed up in algorithms.

⚠️ How algorithms brought physiognomy back disguised as objective science

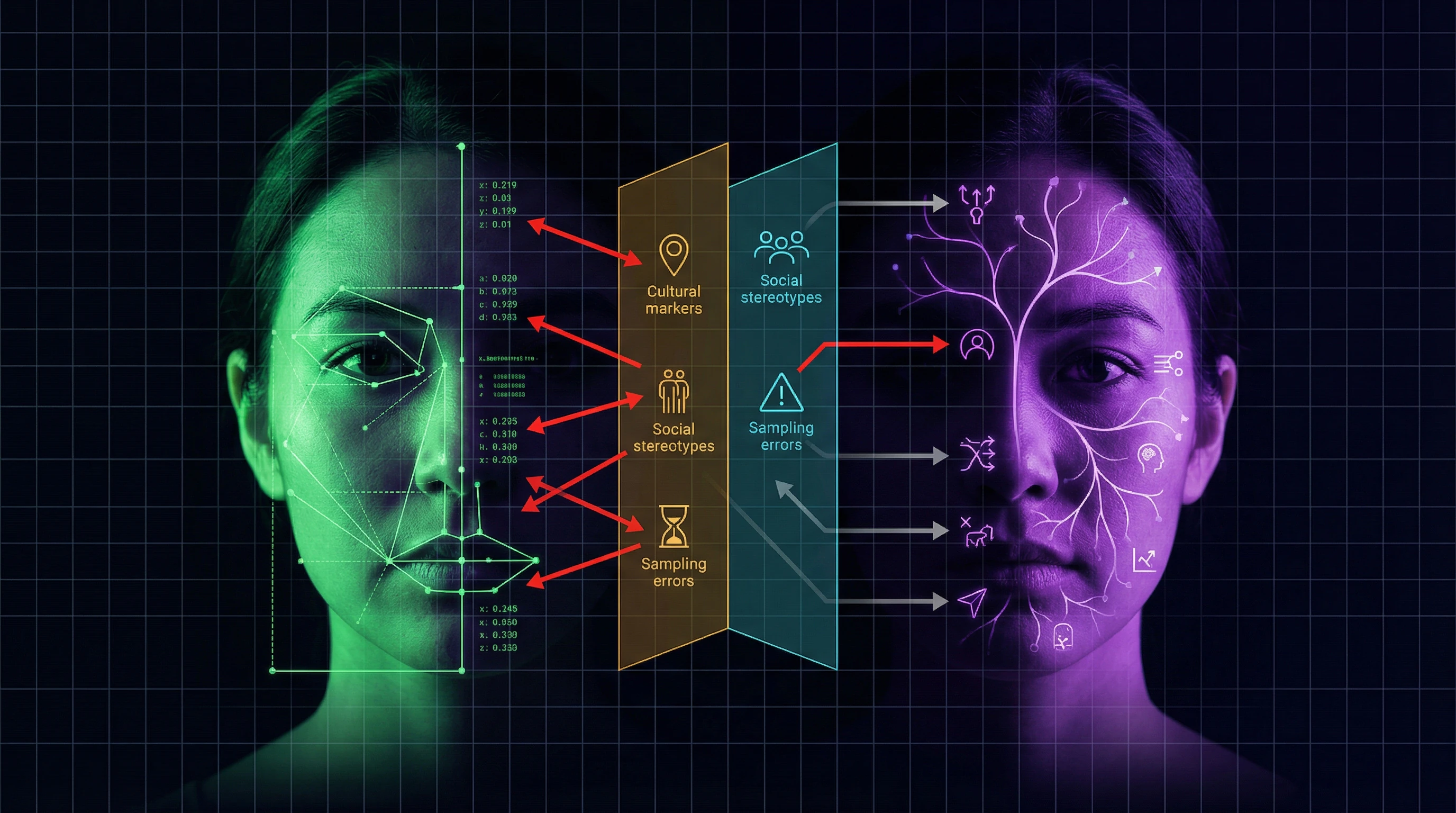

Modern AI physiognomy uses machine learning to analyze facial characteristics and claims it can predict personality traits, emotional states, sexual orientation, political views, and even criminal tendencies (S001).

Companies are developing systems for automated hiring that evaluate candidates through video interviews, analyzing microexpressions and facial structure. Law enforcement agencies in some countries use algorithms to "predict" criminal behavior based on photographs.

| 19th Century Phrenology | 21st Century AI Physiognomy |
|---|---|
| Manual skull measurement | Pixel analysis by neural networks |
| Theory: skull shape → brain development | Theory: facial features → psychological traits |
| Legitimacy: physician's authority | Legitimacy: statistical significance + big data |
| Outcome: discredited | Outcome: deployed in hiring and law enforcement systems |

The key difference is the use of big data and neural networks. Developers claim that algorithms find patterns inaccessible to human perception, and that statistical significance of correlations confirms the method's validity (S002).

However, these arguments ignore fundamental methodological problems: correlation does not imply causation, and statistical significance in large samples may reflect data artifacts rather than real patterns.
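The gap between statistical significance and practical meaning can be checked with a few lines of arithmetic. The sketch below uses a Fisher z-transform with a normal approximation; the correlation `r = 0.01` and the sample size of one million are hypothetical values chosen for illustration, not figures from any cited study.

```python
import math

def two_sided_p_for_correlation(r: float, n: int) -> float:
    """Approximate two-sided p-value for a Pearson correlation r at sample size n.

    Uses the Fisher z-transform with a normal approximation,
    which is reasonable for large n.
    """
    z = math.atanh(r) * math.sqrt(n - 3)
    return math.erfc(abs(z) / math.sqrt(2))

r = 0.01        # hypothetical, practically negligible correlation
n = 1_000_000   # hypothetical "big data" sample size

p_value = two_sided_p_for_correlation(r, n)
variance_explained = r ** 2  # share of variance in one variable linked to the other

print(f"p-value: {p_value:.2e}")
print(f"share of variance explained: {variance_explained:.4%}")
```

Under these assumptions the p-value is far below any conventional threshold, while the correlation accounts for one hundredth of a percent of the variance. Significance here says the sample is large, not that the effect matters.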

🧩 Three key misconceptions about the "scientific" nature of algorithmic physiognomy

- Misconception 1: statistical significance = real connection. If an algorithm shows correlation between facial features and behavior, this doesn't mean the connection is real. In large datasets, you can find correlations between anything; this is the problem of multiple testing and p-hacking. Without a theoretical model explaining the mechanism of connection, such correlations are meaningless.

- Misconception 2: machine learning is objective. Algorithms are trained on human-created data and reproduce social stereotypes encoded in that data. If the training sample contains systemic biases (racial, gender), the algorithm will amplify them, giving them the appearance of scientific legitimacy.

- Misconception 3: prediction accuracy proves validity. Accuracy depends on what exactly is being measured. If an algorithm predicts arrest, it may be accurate not because faces reflect criminality, but because police more frequently arrest people of certain appearances. This is a self-fulfilling prophecy, not a scientific discovery.
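The arrest example in the third misconception can be made concrete with a small calculation. All numbers below are hypothetical and exist only to show the mechanism: two appearance groups offend at the same rate, but an offense is more likely to end in arrest in one group than in the other.

```python
# Hypothetical numbers, chosen only to illustrate the mechanism.
offending_rate = 0.05  # identical true offending rate in both appearance groups

# Probability that an offense leads to an arrest differs by group
# (for example, because one group is policed more heavily).
arrest_prob_given_offense = {
    "group_a": 0.60,
    "group_b": 0.20,
}

def arrest_rate(group: str) -> float:
    """Share of a group that ends up with an 'arrested' label in the dataset."""
    return offending_rate * arrest_prob_given_offense[group]

rate_a = arrest_rate("group_a")
rate_b = arrest_rate("group_b")
ratio = rate_a / rate_b

print(f"arrest label rate, group A: {rate_a:.3f}")
print(f"arrest label rate, group B: {rate_b:.3f}")
print(f"ratio of arrest labels (A vs B): {ratio:.1f}")
```

A model trained on these labels would find a real, reproducible signal linking appearance to "arrest", even though the underlying offending rate is the same in both groups by construction. The signal describes policing, not faces.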

The connection between these misconceptions and historical phrenology is not coincidental. Both systems solve the same problem: give scientific appearance to social prejudices and make discrimination automatic. More on the mechanisms of this process in the section on confounders and causality.

To understand why these systems remain popular despite methodological problems, see the article on biometric facial recognition and analysis of physiognomic AI.

Steel-Manning the Arguments: Seven Reasons Why Proponents Believe in AI Physiognomy Validity

To honestly assess the problem, we must examine the strongest arguments from algorithmic physiognomy proponents. These arguments are not trivial and require serious analysis.

🧪 Argument One: Reproducible Correlations in Independent Studies

Proponents point out that certain correlations between facial features and behavioral characteristics are reproduced across different studies using different methodologies. For example, research shows statistically significant links between facial width-to-height ratio (fWHR) and aggressive behavior, between facial structure and perceived trustworthiness.

The problem with this argument lies in conflating correlation reproducibility with validity of causal interpretation. A correlation can be reproducible yet explained by third variables. For instance, fWHR correlates with testosterone levels during puberty, which in turn relates to socialization and cultural expectations of masculinity. The algorithm may be capturing not biological predisposition to aggression, but social patterns linked to gender stereotypes.

Reproducibility of correlation does not mean validity of causal interpretation. Third variables may fully explain the relationship.
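A short simulation illustrates how a third variable can produce a reproducible correlation with no direct link. This is a minimal sketch under stated assumptions: `confounder`, `face_measure`, and `behavior_score` are hypothetical variables, and the face and behavior scores are generated so that each depends only on the confounder plus independent noise.

```python
import math
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def partial_correlation(r_xy, r_xz, r_yz):
    """Correlation of x and y after removing the linear effect of z."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))

rng = random.Random(0)  # fixed seed so the run is repeatable
n = 20_000

# The confounder drives both variables; they never influence each other.
confounder = [rng.gauss(0, 1) for _ in range(n)]
face_measure = [z + rng.gauss(0, 1) for z in confounder]
behavior_score = [z + rng.gauss(0, 1) for z in confounder]

r_raw = pearson(face_measure, behavior_score)
r_partial = partial_correlation(
    r_raw,
    pearson(face_measure, confounder),
    pearson(behavior_score, confounder),
)

print(f"raw correlation:     {r_raw:.3f}")
print(f"partial correlation: {r_partial:.3f}")
```

The raw correlation is substantial and would replicate in any new sample drawn the same way, yet it shrinks to roughly zero once the confounder is controlled for. Replication confirms that the correlation is stable, not what causes it.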

📊 Argument Two: Algorithms Outperform Humans in Predicting Certain Characteristics

Research shows that machine learning algorithms can predict certain characteristics (such as sexual orientation from photographs) with accuracy exceeding random guessing and human judgment.

This argument ignores the problem of confounders and cultural markers. The algorithm may be capturing not biological features, but cultural signals: hairstyle, makeup, facial expression, clothing and accessory choices that correlate with identity in a specific cultural environment. The study showing high accuracy in predicting sexual orientation was criticized because the algorithm analyzed not facial structure, but cultural markers of self-presentation specific to dating site users in the United States.

- The algorithm may capture cultural markers rather than biological features

- High accuracy in one population does not guarantee generalizability to other cultures

- Lack of control for confounders makes result interpretation unreliable

🧬 Argument Three: Genetic and Hormonal Influences on Face and Brain Development

There are proven biological mechanisms linking the development of facial structures and the brain. For example, prenatal testosterone exposure affects the formation of both the facial skeleton and certain brain regions.

This argument contains a logical fallacy: from the fact that X influences Y and Z, it does not follow that Y predicts Z with sufficient accuracy for practical application. Hormonal influences are just one of many factors shaping both face and behavior. Within-group variability is enormous, and effects are small and overlapped by numerous other influences: genetic, epigenetic, environmental, cultural.

A common causal factor does not guarantee predictive power. Even if a theoretical link exists, its practical validity may be negligible.

🔁 Argument Four: Evolutionary Psychology and Adaptive Value of Face Assessment

Evolutionary psychologists argue that the ability to quickly assess others' intentions and characteristics by appearance had adaptive value in human evolutionary history.

The problem with this argument is conflating heuristic adaptiveness with its accuracy. Evolution optimizes not accuracy, but speed of decision-making under uncertainty. Quick "friend or foe" assessment by face could be adaptive even if it erred 40% of the time—what mattered was that it worked faster than alternatives. Modern algorithms trained on these heuristics reproduce not objective reality, but evolutionarily entrenched biases.

- Adaptiveness: optimization of decision speed, not accuracy. A heuristic can be adaptive at 60% accuracy if competing mechanisms work more slowly.

- Accuracy: correspondence of predictions to objective reality. Evolutionary mechanisms often contain systematic errors useful in ancient environments but harmful in modern ones.

⚙️ Argument Five: Successful Application in Adjacent Fields—Radiomics and Medical Diagnostics

In medicine, radiomics is actively developing—analysis of medical images using machine learning for disease diagnosis and treatment outcome prediction. Systematic reviews show that radiomics is effective in diagnosing brain glial tumors, predicting molecular markers, and forecasting therapy response (S007).

The key difference lies in the presence of a validated biological mechanism and clinical validation. Radiomics analyzes pathological tissue changes that have a direct connection to disease: tumors alter tissue structure, which is reflected in MRI images. These changes are validated by histological analysis and clinical outcomes (S007). In the case of physiognomy, such validation is absent: there is no biological mechanism linking nose shape to honesty, and no gold standard for verifying predictions.

Success in one field (radiomics) does not automatically transfer to another (physiognomy) if a validated mechanism and clinical gold standard are absent.

📈 Argument Six: Commercial Success and Widespread Technology Adoption

AI physiognomy systems are used by major companies for hiring, personnel assessment, and customer service. If the technology didn't work, companies wouldn't invest millions of dollars in it.

This argument ignores numerous reasons why ineffective technologies can be commercially successful. First, placebo effect and Hawthorne effect: the mere fact of using a "scientific" assessment system can change employee and candidate behavior. Second, systems may work due to other factors (such as hiring process structure), not facial analysis. Third, companies may continue using a system due to sunk costs, institutional inertia, or marketing advantages ("we use AI"), even if effectiveness is unproven.

| Reason for Commercial Success | Connection to Technology Validity |
|---|---|
| Placebo and Hawthorne effects | None: results achieved through behavior change, not algorithm accuracy |
| Process structure | None: improvement may result from standardization, not facial analysis |
| Sunk costs and inertia | None: company continues using system despite lack of evidence |
| Marketing advantage | None: marketing success does not mean technology validity |

🧾 Argument Seven: Meta-Analyses Show Positive AI Effects in Adjacent Fields

Systematic reviews and meta-analyses demonstrate that AI systems can outperform humans in some tasks requiring empathy and emotional understanding. For example, a meta-analysis showed that AI chatbots are perceived as more empathetic than healthcare workers in text-based scenarios (S003).

This argument conflates different types of tasks. Generating empathetic text is a natural language processing task that does not require facial characteristic analysis. The meta-analysis showing chatbot advantages evaluated text-based interactions where nonverbal cues were absent (S003). Moreover, the study identified serious methodological limitations: assessment was conducted through proxy raters rather than actual patients, and did not account for nonverbal communication aspects (S003). Success in one modality does not automatically transfer to another.

All seven arguments contain logical fallacies or methodological flaws, but they are not obvious at first glance. This is precisely why physiognomic AI continues to attract investment and attention despite lacking a valid evidence base.

Evidence Base: What Systematic Reviews and Meta-Analyses Say About Method Validity

Objective evaluation of AI physiognomy requires turning to systematic reviews and meta-analyses, the most reliable sources of scientific data. These studies aggregate results from multiple primary studies, assess methodological quality, and identify systematic errors.

📊 Radiomics as a Methodological Standard: When Image Analysis Works

A systematic review and meta-analysis of radiomics and machine learning applications in diagnosing glial brain tumors provides a control example (S007). Radiomics is effective for non-invasive diagnosis and subtyping of tumors based on MRI data, but the study revealed significant methodological heterogeneity: lack of unified standards for selecting regions of interest, size, and shape of analyzed areas.

The key difference between radiomics and physiognomy is the presence of a validated biological substrate. Radiomic features reflect real pathological changes in tissues, verifiable histologically. Algorithms analyze texture, density, vascularization — characteristics with direct links to tumor biology. In physiognomy, no such connection exists: there is no mechanism explaining why nose shape should correlate with honesty.

🧪 Methodological Standards: PRISMA and Evidence Quality Assessment

Modern systematic reviews follow strict standards such as PRISMA 2020 (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) (S007). Requirements include pre-registration of protocols, systematic literature searches, independent quality assessment by multiple reviewers, bias risk evaluation, and transparent presentation of results.

Most AI physiognomy studies fail to meet these standards. Typical problems: lack of pre-registration (opening opportunities for p-hacking and HARKing), use of convenience samples, absence of independent validation on external datasets, ignoring confounders.

| PRISMA Criterion | Radiomics (Brain Tumors) | AI Physiognomy |
|---|---|---|
| Protocol Pre-registration | Yes, in PROSPERO | Rare |
| Systematic Literature Search | Yes, with inclusion/exclusion criteria | Often selective |
| Independent Quality Assessment | Yes, multiple reviewers | Rare |
| External Data Validation | Mandatory | Often absent |
| Confounder Control | Systematic | Minimal |

🔁 Living Systematic Reviews: New Standards of Evidence

Scientific review methodology is evolving toward greater dynamism. The ALL-IN meta-analysis concept (Anytime Live and Leading INterim meta-analysis) proposes an approach where analysis updates as new data arrives, maintaining statistical validity (S002). This avoids accumulation of systematic errors and ensures continuous evidence evaluation.

The key advantage is the ability for retrospective and prospective application without predetermined sample sizes. Analysis becomes "living," updating in real-time as new data emerges, including interim results from ongoing studies, without changing testing criteria (S002).
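One common way to keep a test valid under continuous updating is to track a running likelihood ratio (an e-value) and reject the null hypothesis only when it reaches 1/α. The sketch below is a toy illustration of that general idea, not a reconstruction of the cited method, whose construction this article does not spell out. The binary outcome streams and the parameters `p_null` and `p_alt` are hypothetical.

```python
def e_process(outcomes, p_null=0.5, p_alt=0.7):
    """Running likelihood-ratio e-value for a stream of 0/1 outcomes.

    Under the null hypothesis (success probability p_null) the running
    product has expected value 1 at every step, which is what makes
    checking it after every new observation legitimate.
    """
    e_value = 1.0
    path = []
    for x in outcomes:
        likelihood_alt = p_alt if x == 1 else 1 - p_alt
        likelihood_null = p_null if x == 1 else 1 - p_null
        e_value *= likelihood_alt / likelihood_null
        path.append(e_value)
    return path

alpha = 0.05
threshold = 1 / alpha  # reject the null once the e-value reaches this level

# Hypothetical data streams, used only for illustration.
strong_signal = [1, 1, 1, 0, 1] * 6  # 24 successes in 30 observations
no_signal = [1, 0] * 15              # 15 successes in 30 observations

strong_path = e_process(strong_signal)
null_path = e_process(no_signal)

# Number of observations seen when the threshold is first reached, if ever.
first_crossing = next(
    (i + 1 for i, e in enumerate(strong_path) if e >= threshold), None
)

print(f"strong signal crosses the threshold after: {first_crossing} observations")
print(f"largest e-value on the no-signal stream:   {max(null_path):.2f}")
```

The design point is that the evidence measure is inspected after every observation without any correction for the number of looks, which is what a fixed-sample p-value does not allow.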

Applying such standards to AI physiognomy research would reveal fundamental problems: impossibility of independent replication due to closed algorithms and data, absence of pre-registered hypotheses, multiple testing without correction, ignoring negative results.

⚠️ The Problem of Systematic Errors in Mediation Meta-Analyses

Studies attempting to establish mechanisms linking facial features and behavior through mediating variables (such as hormone levels or brain structures) present particular complexity. Mediation analysis requires strict causal assumptions rarely met in observational studies.

- Unaccounted Confounding: third variables simultaneously influence mediator and outcome, creating spurious associations.

- Reverse Causality: outcome influences mediator rather than vice versa, reversing the causal chain.

- Measurement Error: differentially affects estimates of direct and indirect effects, biasing results.

In the physiognomy context, this means: even if a correlation is found between facial characteristics and behavior, and even if a potential mediator is identified (such as testosterone), this does not prove causation.

🧾 Meta-Analysis of AI Empathy: Methodological Lessons for Physiognomy

A systematic review comparing empathy of AI chatbots and healthcare workers provides important methodological lessons (S003). Analysis of 15 studies from 2023–2024 showed a standardized mean difference of 0.87 (95% CI, 0.54–1.20) favoring AI, equivalent to approximately two points on a 10-point scale.

However, authors identified critical limitations: all studies evaluated only text-based interactions, ignoring nonverbal cues critically important for empathy; empathy was assessed through proxy raters (independent evaluators) rather than actual patients; studies had high risk of bias on the ROBINS-I scale (S003). These limitations make results inapplicable to real clinical practice.

Similar problems characterize AI physiognomy research:

- Assessment in artificial conditions (static photographs instead of real interactions)

- Use of proxy metrics (self-reports or stereotypical assessments instead of objective behavioral measurements)

- High risk of systematic errors due to confounders and lack of control for alternative explanations

- Absence of validation on independent samples with different sociocultural characteristics

As a result, a link between facial features and personality traits identified in laboratory conditions does not transfer to real social interactions, where context, relationship history, and cultural norms determine behavior far more strongly than facial morphology.

Refer to the article on biometric facial recognition to understand the legal and ethical frameworks within which these methods are applied. Additional context on AI ethics and safety will help assess the systemic risks of such technologies.

Mechanisms and Confounders: Why Correlation Doesn't Mean Causation in Facial Analysis

A statistically significant correlation between facial features and behavior does not prove causal influence. A face may be a marker, but not a valid predictor of internal characteristics.

Alternative mechanisms often explain observed associations better than direct physiognomic hypotheses.

🧬 Genetic and Hormonal Confounders: Common Causes Without Direct Links

Genetics and prenatal hormones simultaneously influence facial and brain development. This creates correlation through a common cause, but does not validate physiognomy.

Prenatal testosterone, for example, affects digit ratio (2D:4D), facial structure, and some behavioral traits. The effect explains less than 5% of variability—predictive power for any specific individual is close to zero.

| Factor | Effect on Face | Effect on Behavior | Predictive Power |
|---|---|---|---|
| Prenatal testosterone | Structure, proportions | Aggression, risk tolerance | <5% of variance |
| Genetic background | Morphology | Cognitive abilities, temperament | Overlapped by multiple factors |

Using such markers in hiring or law enforcement is scientifically unfounded and ethically unacceptable (S001).
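The "less than 5% of variance" figure can be translated into individual-level accuracy. The sketch below assumes, for illustration only, that the facial marker and the behavioral trait follow a bivariate normal distribution and that the prediction task is the simplest possible one: guessing whether a person is above or below the median on the trait from whether they are above or below the median on the marker. Under that assumption the hit rate is 1/2 + arcsin(r)/π.

```python
import math

def median_split_accuracy(r: float) -> float:
    """Probability that two bivariate-normal variables with correlation r
    fall on the same side of their respective medians."""
    return 0.5 + math.asin(r) / math.pi

variance_explained = 0.05          # the upper bound quoted in the text
r = math.sqrt(variance_explained)  # corresponding correlation coefficient
accuracy = median_split_accuracy(r)

print(f"correlation r:               {r:.3f}")
print(f"above/below-median accuracy: {accuracy:.1%}")
print("coin-flip baseline:          50.0%")
```

Even at the upper bound of 5% explained variance, the prediction is only a few percentage points better than a coin flip, which is the sense in which predictive power for a specific individual is close to zero.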

🔁 Cultural Markers and Self-Presentation: Algorithms Read Style, Not Biology

People manage their appearance: makeup, hairstyle, facial expression, clothing. An algorithm may detect correlation between these cultural markers and behavior, but this isn't biology—it's social communication.

An algorithm trained on photographs may learn: "people with certain makeup smile more often on camera" or "people in business suits more often hold leadership positions." This doesn't mean facial features predict competence or honesty.

Social class, ethnic background, gender identity—all are encoded in self-presentation and can be mistakenly interpreted as biological signals (S002).

📊 Selection Bias: Which Faces End Up in the Dataset

Datasets for training AI contain faces of people who agreed to be photographed and annotated. This is not a random sample of the population.

- People with certain facial features may more often agree to be photographed (self-selection effect).

- Annotators may systematically err when labeling certain groups (annotation bias).

- Historical datasets reflect the prejudices of the era in which they were collected.

Result: the algorithm learns from a biased sample and reproduces these biases as supposedly objective patterns (S001).
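Selection alone can manufacture a correlation that does not exist in the population. In the minimal sketch below, `facial_feature` and `trait` are hypothetical variables generated independently, and the inclusion rule (a person enters the dataset only if the sum of the two exceeds a cutoff) is an assumption chosen to make the effect visible, not a description of any real dataset.

```python
import math
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

rng = random.Random(1)  # fixed seed so the run is repeatable
n = 50_000

# In the full population the feature and the trait are independent.
facial_feature = [rng.gauss(0, 1) for _ in range(n)]
trait = [rng.gauss(0, 1) for _ in range(n)]

# Hypothetical inclusion rule: both variables affect who gets into the dataset.
selected = [(f, t) for f, t in zip(facial_feature, trait) if f + t > 1.0]

r_population = pearson(facial_feature, trait)
r_dataset = pearson([f for f, _ in selected], [t for _, t in selected])

print(f"people in dataset:         {len(selected)} of {n}")
print(f"correlation in population: {r_population:.3f}")
print(f"correlation in dataset:    {r_dataset:.3f}")
```

The population correlation is close to zero, while the selected subset shows a clear negative correlation created entirely by the inclusion rule. An algorithm trained on the subset has no way to tell this artifact from a genuine pattern.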

🎭 Pygmalion Effect and Self-Fulfilling Prophecy

If a system says a person is "dangerous" based on their face, others may treat them differently. This can change their behavior and create the appearance of prediction validity.

- Mechanism: label → changed social treatment → behavioral adaptation → label confirmation.

- Danger: the system appears accurate, though it actually created what it predicted. This is especially dangerous in criminal justice and education (S002).

Correlation between face and behavior may be an artifact of the system's social impact, not biological reality.

🔍 Multiple Comparisons and P-Hacking: Statistical Illusion

If a researcher tests 100 hypotheses about links between facial features and behavior and none of the links is real, about 5 are still expected to come out "significant" at p < 0.05 purely by chance. When only the significant results get published, those chance findings are what readers see.

Without correction for multiple comparisons and pre-registration of hypotheses, the literature fills with false positives. This creates an illusion of physiognomy's validity (S003).

Verification: require pre-registration of studies, Bonferroni correction, and replication on independent samples.
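The effect of testing many hypotheses, and of the Bonferroni correction, can be simulated directly. The sketch below generates 100 pairs of pure-noise variables, so every "significant" result is a false positive by construction. The sample size of 200 per test and the Fisher z normal approximation for the p-value are assumptions made for illustration.

```python
import math
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def p_value(r: float, n: int) -> float:
    """Approximate two-sided p-value via the Fisher z-transform."""
    z = math.atanh(r) * math.sqrt(n - 3)
    return math.erfc(abs(z) / math.sqrt(2))

rng = random.Random(42)  # fixed seed so the run is repeatable
n_hypotheses = 100
n_people = 200
alpha = 0.05

p_values = []
for _ in range(n_hypotheses):
    # Both variables are independent noise: no real link exists.
    feature = [rng.gauss(0, 1) for _ in range(n_people)]
    behavior = [rng.gauss(0, 1) for _ in range(n_people)]
    p_values.append(p_value(pearson(feature, behavior), n_people))

naive_hits = sum(p < alpha for p in p_values)
bonferroni_hits = sum(p < alpha / n_hypotheses for p in p_values)

print(f"'significant' at p < {alpha}: {naive_hits} of {n_hypotheses}")
print(f"significant after Bonferroni correction: {bonferroni_hits}")
```

Bonferroni divides the significance threshold by the number of tests, which keeps the chance of even one false positive across the whole family at or below α. It is conservative, so replication on independent samples remains necessary to confirm any effect that survives.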