What is reward prediction error: when the brain calculates the difference between "expected" and "got"

Reward prediction error (RPE) is a fundamental computational mechanism working in your brain right now. Mathematically: RPE = Actual reward − Expected reward (S003, S005).

Positive error — you got more than expected. Negative — less. This signal is encoded by dopaminergic neurons in the ventral tegmental area (VTA) and transmitted to the striatum, where it serves as the foundation for reinforcement learning (S007).
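This is a minimal sketch of the definition. It assumes a single expected value updated by a Rescorla-Wagner-style delta rule with a hypothetical learning rate `alpha`; the source does not specify an update rule. As the reward becomes predictable, the error on each trial shrinks:

```python
def rpe(actual: float, expected: float) -> float:
    """Signed reward prediction error: actual reward minus expected reward."""
    return actual - expected


def learn(rewards, alpha=0.5, v0=0.0):
    """Delta-rule learning: nudge the expectation by alpha * RPE after each trial.

    Returns the RPE observed on each trial.
    """
    v = v0
    errors = []
    for r in rewards:
        delta = rpe(r, v)
        errors.append(delta)
        v += alpha * delta  # positive RPE raises the expectation, negative RPE lowers it
    return errors


print(learn([1.0] * 5))  # [1.0, 0.5, 0.25, 0.125, 0.0625]
```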

- VTA dopaminergic neurons

- Increase firing rate with positive error, decrease with negative. They encode not the reward itself, but the deviation from expectation (S003).

- Nucleus accumbens

- Receives dopaminergic projections from the VTA, and the dopamine released here modulates synaptic plasticity. The same reward triggers different dopaminergic responses depending on how predictable it is.

Signed vs Unsigned RPE: direction versus magnitude

Modern research distinguishes two types of prediction errors (S004).

| RPE Type | What it encodes | Function |
|---|---|---|
| Signed RPE | Direction of error (better/worse than expected) | Outcome evaluation, behavior reinforcement |
| Unsigned RPE | Absolute magnitude of deviation | Uncertainty processing, world model updating |

EEG studies show these two signal types are processed by partially independent neural systems. Unsigned RPE is linked to metacognitive monitoring of prediction accuracy.
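The distinction can be shown with one set of outcomes measured against a fixed expectation (all values hypothetical). A win and a loss of the same size produce opposite signed errors but identical unsigned errors:

```python
def prediction_errors(outcomes, expected):
    """Return (signed, unsigned) prediction errors for each outcome."""
    signed = [o - expected for o in outcomes]  # direction: better or worse than expected
    unsigned = [abs(d) for d in signed]        # magnitude only: how surprising it was
    return signed, unsigned


signed, unsigned = prediction_errors([1.0, 0.0, 0.5], expected=0.5)
print(signed)    # [0.5, -0.5, 0.0]
print(unsigned)  # [0.5, 0.5, 0.0]
```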

Temporal Difference Learning: how RPE updates expectations over time

RPE is embedded in the temporal difference (TD) learning algorithm, where predictions are updated at each time step, not just after the final outcome (S005).

When you see a signal predicting reward (doorbell before food delivery), dopaminergic neurons start responding to that signal, not the reward itself. The prediction error "migrates" backward in time to the earliest predictor.

- Dopaminergic response switches from reward to contextual cues preceding it

- Conditioned stimuli acquire motivational power

- Addictions become persistent: the brain reacts to the context, not just the substance
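The backward migration of the error can be reproduced with a toy TD(0) simulation. The sketch assumes a deterministic cue, delay, reward sequence, no discounting (`gamma = 1`), and a hypothetical learning rate. It is an illustration of the algorithm, not a model taken from the cited sources.

```python
def run_trials(n_trials, n_steps=3, alpha=0.3, gamma=1.0, reward=1.0):
    """Toy TD(0) on a fixed cue -> delay -> reward sequence.

    State 0 is the cue; the reward arrives on leaving the last state.
    The pre-cue baseline is held at value 0 because cue onset is unpredictable.
    Returns the TD errors of the final trial: [cue onset, step 0, ..., last step].
    """
    V = [0.0] * n_steps
    deltas = []
    for _ in range(n_trials):
        deltas = [gamma * V[0] - 0.0]  # error at cue onset: baseline -> cue state
        for t in range(n_steps):
            r = reward if t == n_steps - 1 else 0.0
            v_next = V[t + 1] if t + 1 < n_steps else 0.0  # terminal value is 0
            delta = r + gamma * v_next - V[t]  # TD error
            V[t] += alpha * delta
            deltas.append(delta)
    return deltas


print(run_trials(1))                           # [0.0, 0.0, 0.0, 1.0]: error at reward time
print([round(d, 3) for d in run_trials(200)])  # [1.0, 0.0, 0.0, 0.0]: error has moved to the cue
```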

This mechanism may also help explain why a relationship breakup can feel like a reward loss: the brain has learned to predict the partner's presence and registers a negative prediction error in their absence.

Five Arguments for the Central Role of RPE in Learning and Decision-Making

🔬 Argument 1: Cross-Species Conservation of the Mechanism

RPE mechanisms have been discovered in organisms from fruit flies to primates, indicating their fundamental evolutionary importance (S005). The species studied so far exhibit similar logic: neural systems using neuromodulators (dopamine in mammals; dopamine and octopamine in insects) encode deviations from expected outcomes and use these signals to modify behavior.

Conservation across hundreds of millions of years of evolution suggests that RPE solves a critically important adaptive problem: efficient learning in a variable environment with limited computational resources.

📊 Argument 2: Direct Correspondence Between Dopaminergic Activity and Behavioral Learning

Optogenetic experiments demonstrate a causal relationship: artificial stimulation of dopaminergic neurons at the moment of action increases the probability of repeating that action, even in the absence of actual reward (S007). The reverse is also true—suppression of dopaminergic activity impairs learning.

The magnitude of the dopaminergic response also correlates with learning speed: the larger the prediction error, the faster the behavioral policy is updated (S005). On its own this relationship is correlational, but combined with the optogenetic manipulations it supports the view that RPE is a causal mechanism of learning, not a mere correlate.

🧠 Argument 3: Computational Efficiency of TD-Learning

From a machine learning perspective, RPE-based algorithms (especially TD-learning) offer a favorable trade-off between learning speed and computational cost (S005). Unlike methods that require a complete model of the environment, RPE-based learning operates incrementally, updating its estimates after each experience.

- Incremental Updating

- Allows organisms to learn in real time without needing to store and process the complete history of interactions.

- Convergence to Optimal Solution

- Under standard conditions, TD-learning converges to accurate value estimates. That biological systems appear to implement a solution close to this optimum is consistent with the adaptive value of RPE mechanisms.
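The incremental-updating point can be made concrete. With a step size of 1/n, the same error-driven update reproduces the exact sample mean without storing past rewards. This sketch assumes a stationary reward source; a constant step size, as in TD-learning, would instead weight recent outcomes more heavily.

```python
def incremental_mean(rewards):
    """Running average via delta-rule updates; only the current estimate is stored."""
    estimate = 0.0
    for n, r in enumerate(rewards, start=1):
        estimate += (r - estimate) / n  # prediction error scaled by step size 1/n
    return estimate


rewards = [2.0, 4.0, 6.0, 8.0]
print(incremental_mean(rewards))    # 5.0
print(sum(rewards) / len(rewards))  # 5.0, the same answer computed from the full history
```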

🔎 Argument 4: Explanatory Power for Clinical Phenomena

The RPE framework explains a wide spectrum of psychiatric and neurological disorders (S008). In addiction, hypersensitivity to cues predicting the drug and blunted responses to natural rewards are observed—a pattern consistent with disrupted RPE signals.

In depression, anhedonia and reduced ability to learn from positive outcomes are characteristic, corresponding to blunted positive RPE. In schizophrenia, aberrant dopaminergic signaling may generate false prediction errors, leading to the formation of delusional beliefs (S008).

A unified theoretical framework explaining such diverse clinical phenomena possesses high explanatory power.
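Here is a toy illustration of what blunted positive-RPE learning could look like. It assumes separate learning rates for positive and negative errors; the rates and the reward schedule are hypothetical and are not taken from S008. Lowering only the positive-error rate drives the learned value of the same 50% schedule well below that of a symmetric learner:

```python
def learned_value(outcomes, alpha_pos, alpha_neg, v0=0.5):
    """Delta rule with separate learning rates for positive and negative RPEs."""
    v = v0
    for r in outcomes:
        delta = r - v
        alpha = alpha_pos if delta > 0 else alpha_neg
        v += alpha * delta
    return v


outcomes = [1.0, 0.0] * 50  # hypothetical 50% reward schedule
typical = learned_value(outcomes, alpha_pos=0.2, alpha_neg=0.2)
blunted = learned_value(outcomes, alpha_pos=0.05, alpha_neg=0.2)
print(round(typical, 2), round(blunted, 2))  # 0.44 0.17
```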

🧪 Argument 5: Convergence of Data from Multiple Methodologies

The role of RPE is confirmed by data from single-cell recordings in animals, fMRI in humans, EEG/ERP studies, pharmacological manipulations, genetic studies, and computational modeling (S003, S004, S005). When independent methods with different limitations and sources of systematic error converge on the same conclusion, this substantially increases confidence in its validity.

| Methodology | What It Measures | Result |
|---|---|---|
| Single-cell recordings | Activity of individual dopaminergic neurons | Real-time encoding of prediction error |
| fMRI | BOLD signal in ventral striatum | Correlation with computed RPE from behavioral models |
| EEG/ERP | Reward positivity component | Sensitivity to magnitude of prediction error |

The Attraction Effect: How Context Hijacks Neural RPE Computations

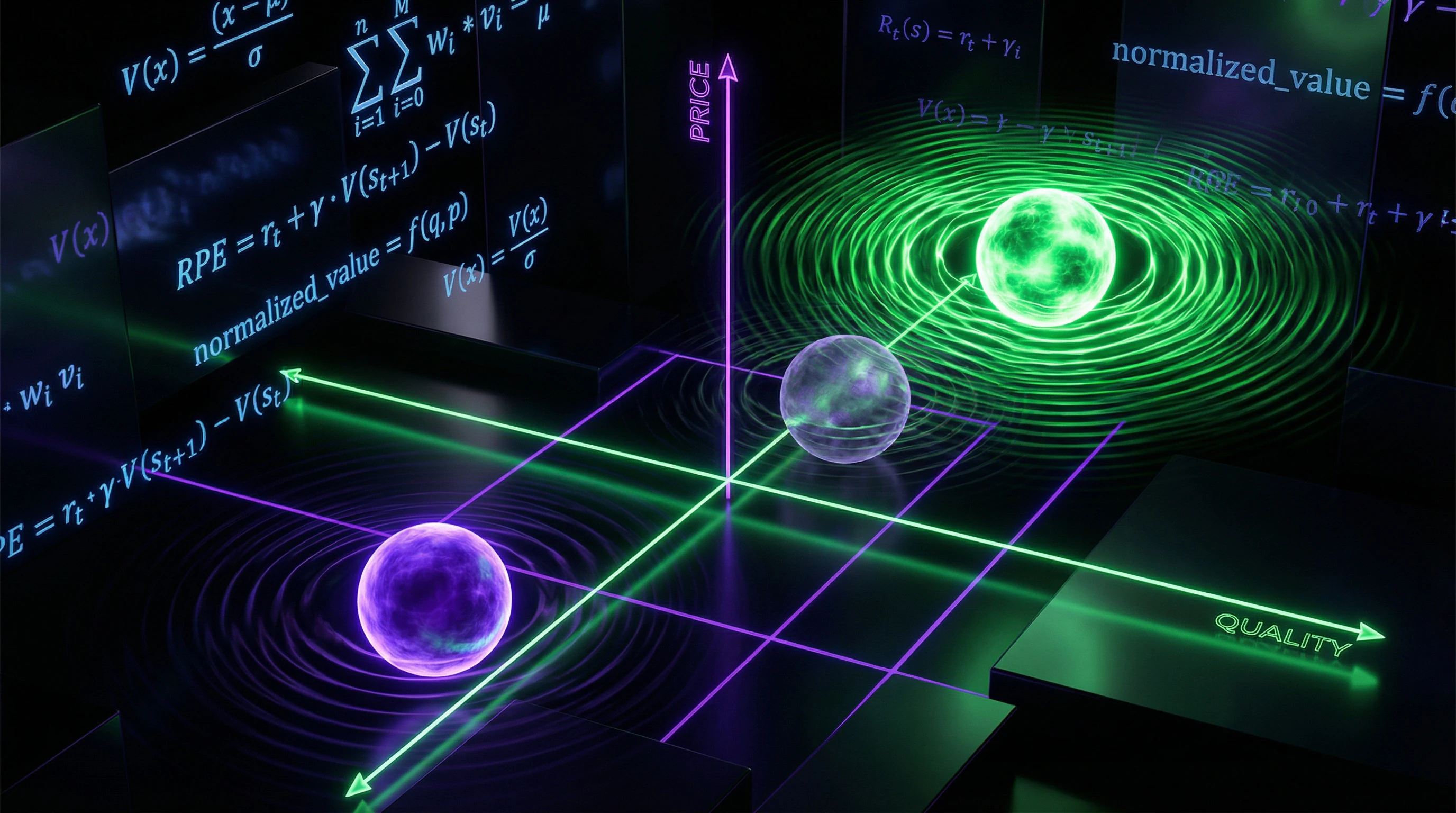

Classical RPE theory assumes that prediction errors are computed based on absolute reward values. However, research on the attraction effect demonstrates that choice context radically modulates these computations (S001, S002).

The attraction effect occurs when adding a third, asymmetrically dominated option (a decoy) increases the attractiveness of one of the two original options. If you are choosing between option A (high quality, high price) and option B (low quality, low price), adding option C (slightly lower quality and slightly higher price than A) increases the probability of choosing A, even though A's objective value has not changed.
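The dominance structure in this example can be checked mechanically. The sketch below uses hypothetical quality and price numbers, where higher quality and lower price are better:

```python
from typing import NamedTuple


class Option(NamedTuple):
    name: str
    quality: float  # higher is better
    price: float    # lower is better


def dominates(x: Option, y: Option) -> bool:
    """x dominates y if it is at least as good on both dimensions and strictly better on one."""
    at_least_as_good = x.quality >= y.quality and x.price <= y.price
    strictly_better = x.quality > y.quality or x.price < y.price
    return at_least_as_good and strictly_better


A = Option("A", quality=8, price=80)
B = Option("B", quality=4, price=40)
C = Option("C", quality=7, price=85)  # hypothetical decoy: slightly worse than A on both

print(dominates(A, C), dominates(B, C))  # True False: C is asymmetrically dominated
print(dominates(A, B), dominates(B, A))  # False False: A and B remain a genuine trade-off
```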

🧬 Neural Correlates of Contextual RPE Modulation

An fMRI study showed that the attraction effect modulates RPE signals in the ventral striatum and medial prefrontal cortex (S001, S002). When participants made choices in the presence of a decoy option, neural RPE signals for the target option were enhanced compared to contexts without a decoy, even with identical objective outcomes.

The brain computes prediction errors not in absolute units, but relative to choice context. This modulation occurs at the level of basic RPE signals, not just at the level of high-level decision-making.

📊 Temporal Dynamics: Intertemporal Choice Under Contextual Influence

The attraction effect influences intertemporal choice—decisions between smaller immediate and larger delayed rewards (S001, S002). The presence of a decoy option changed not only the choice itself, but also the subjective discounting of future rewards.

| Condition | Temporal Discounting | RPE Signal for Delayed Reward |
|---|---|---|
| Without decoy | High (low patience) | Weak |
| With decoy | Low (high patience) | Enhanced |

Participants demonstrated lower temporal discounting (greater "patience") for the target option in the presence of a decoy. The brain generated stronger positive prediction errors for delayed rewards in contexts that made them more attractive relative to alternatives.
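The source does not say which discount function was fitted. A common assumption is hyperbolic discounting, V = A / (1 + kD), where a smaller discount rate k means more patience. The sketch below uses hypothetical amounts and rates to show how lowering k alone flips the same intertemporal choice:

```python
def hyperbolic_value(amount: float, delay: float, k: float) -> float:
    """Subjective value of a reward delayed by `delay` under hyperbolic discounting."""
    return amount / (1.0 + k * delay)


def choose(small_now: float, large_later: float, delay: float, k: float) -> str:
    """Return whichever option has the higher subjective value."""
    now = hyperbolic_value(small_now, 0.0, k)
    later = hyperbolic_value(large_later, delay, k)
    return "later" if later > now else "now"


# Same offer (50 now vs 100 in 30 days) under two hypothetical discount rates
print(choose(50, 100, delay=30, k=0.05))  # now   (steep discounting, low patience)
print(choose(50, 100, delay=30, k=0.01))  # later (shallow discounting, high patience)
```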

⚙️ Mechanism: Value Normalization in Choice Context

The proposed mechanism involves divisive normalization: the subjective value of an option is divided by a term that grows with the summed value of all available options, so each option is evaluated relative to the choice set (S001). When a decoy is added to the choice set, it shifts the reference point against which other options are evaluated.

- The target option becomes more attractive not because its absolute value increased, but because it now dominates a larger number of alternatives in the choice space

- This contextual revaluation is reflected in enhanced RPE signals

- Enhanced signals drive learning and future preferences (S002)

This means that neural reward evaluation systems operate not as absolute counters, but as adaptive comparators, constantly calibrating expectations to the current choice context.
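Here is a minimal sketch of divisive normalization. It assumes the common form v_i / (sigma + sum of v_j), a hypothetical semi-saturation constant `sigma`, and made-up option values. It shows only how adding a decoy changes the shared denominator, and with it the scale on which every option is coded. In this simple form, normalization compresses the A-B difference rather than favoring A. Reproducing the attraction effect therefore depends on further modeling choices that the source does not spell out.

```python
def divisive_normalization(values, sigma=1.0):
    """Divide each option's value by sigma plus the summed value of the whole choice set."""
    denominator = sigma + sum(values.values())
    return {name: v / denominator for name, v in values.items()}


two = divisive_normalization({"A": 10.0, "B": 9.0})
three = divisive_normalization({"A": 10.0, "B": 9.0, "C": 8.0})  # C: hypothetical decoy
print({k: round(v, 3) for k, v in two.items()})    # {'A': 0.5, 'B': 0.45}
print({k: round(v, 3) for k, v in three.items()})  # {'A': 0.357, 'B': 0.321, 'C': 0.286}
```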

Evidence Base: What We Know About RPE with High Confidence

🔬 Dopamine Encodes Prediction Error, Not the Reward Itself

Dopaminergic neurons in the VTA encode prediction error, not the absolute magnitude of reward (S003, S007). Classic experiments by Schultz showed: with the first unexpected juice delivery, neurons demonstrate a burst of activity, but after learning, when the juice becomes predictable, the burst disappears.

Instead of responding to the reward itself, neurons begin responding to the conditioned stimulus predicting the juice. If the expected reward doesn't arrive, suppression of activity below baseline is observed—a negative prediction error (S003). This pattern precisely matches the mathematical definition of RPE and has been replicated in dozens of laboratories.

Dopamine responds to the difference between expectation and reality, not to reality itself. A completely predictable reward does not trigger a dopaminergic response.
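These three cases follow directly from the TD error once values have been learned. The sketch assumes that the cue's learned value equals the reward it predicts, that there is no discounting, and that the pre-cue baseline is 0 because the cue itself arrives unpredictably. The function name is hypothetical.

```python
def td_errors(v_cue: float, reward: float):
    """TD errors at cue onset and at the usual reward time, with values frozen after learning."""
    at_cue = v_cue - 0.0       # the cue arrives unpredictably, so the pre-cue value is 0
    at_reward = reward - v_cue  # the trial ends after the reward, so no future value is added
    return at_cue, at_reward


print(td_errors(v_cue=0.0, reward=1.0))  # (0.0, 1.0): before learning, burst at the reward
print(td_errors(v_cue=1.0, reward=1.0))  # (1.0, 0.0): after learning, burst moves to the cue
print(td_errors(v_cue=1.0, reward=0.0))  # (1.0, -1.0): reward omitted, dip below baseline
```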

📊 Ventral Striatum as a Computational Hub for RPE

BOLD signal in the ventral striatum, especially in the nucleus accumbens, correlates with computed prediction errors from behavioral models (S008). Meta-analyses show activation of this region during positive RPE across a wide range of tasks—from conditioned reflexes to complex economic decisions.

Critically: activation is specific to RPE, not to reward per se. It's stronger for unexpected rewards than for expected ones, even when the absolute magnitude of reward is identical (S008). Individual differences in the strength of these signals correlate with impulsivity and risk-taking propensity.

- Ventral striatum activates during positive prediction errors

- Activation depends on unexpectedness, not reward magnitude

- Individual differences in activation predict behavioral traits

🧾 Reward Positivity (RewP) as an Electrophysiological Marker of RPE

The reward positivity (RewP) component of the EEG is sensitive to reward prediction errors (S003). RewP is a positive deflection of the event-related potential occurring roughly 250–350 ms after feedback, with maximum amplitude at frontocentral electrodes.

RewP amplitude is larger for positive outcomes than for negative ones, and critically—it's sensitive to expectations: the difference between wins and losses is greater when the outcome is unexpected (S003). However, there's debate: does RewP reflect specifically reward prediction error or a more general salience prediction error—deviation from expectation regardless of valence.

🔎 RPE in Aversive Learning: Extending Beyond Reward

Similar mechanisms operate for aversive stimuli (S001). Following unconditioned aversive stimuli (unpleasant sounds, electric shocks), neural signals corresponding to punishment prediction errors are observed.

When an aversive stimulus is worse than expected, a negative prediction error is generated. These signals are used for avoidance learning and forming defensive responses. Neural substrates partially overlap with reward processing systems but include specific structures: the amygdala and periaqueductal gray.

| Stimulus Type | Positive RPE | Negative RPE | Neural Structures |
|---|---|---|---|
| Reward | Better than expected | Worse than expected | VTA, nucleus accumbens |
| Punishment | Less severe than expected | More severe than expected | Amygdala, periaqueductal gray |
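The sign convention in this table falls out of the same formula if punishments are coded as negative outcomes. The sketch below uses hypothetical severity units:

```python
def rpe(actual: float, expected: float) -> float:
    """Signed prediction error; punishments are coded as negative outcomes."""
    return actual - expected


expected_shock = -5.0  # hypothetical expected severity
print(rpe(-2.0, expected_shock))  # 3.0: milder than expected -> positive error
print(rpe(-8.0, expected_shock))  # -3.0: more severe than expected -> negative error
```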

⚙️ Value-Free Teaching Signals: A New Paradigm for Understanding Dopamine

A study published in Nature challenges the traditional view of dopamine as a value signal (S007): dopaminergic action prediction errors can serve as teaching signals that are free of value.

Dopaminergic neurons responded to the mismatch between expected and actual action regardless of whether that action led to reward or punishment (S007). This suggests the dopaminergic system encodes more abstract prediction errors—not just "how good is the outcome," but "how accurate is my model of the world."

Dopamine can signal an error in action prediction, regardless of whether that action is good or bad. This expands our understanding of dopamine beyond the reward system.

Mechanisms and Causality: What Actually Drives Behavioral Change

🧬 Synaptic Plasticity as Mediator Between RPE and Learning

RPE signals don't change behavior directly — they modulate synaptic plasticity in target structures (S005). Dopamine acts as a neuromodulator, altering the efficacy of synaptic transmission in the striatum.

Positive RPEs strengthen synapses through long-term potentiation (LTP), while negative RPEs weaken them through long-term depression (LTD). This process — dopamine-modulated spike-timing-dependent plasticity — provides the causal link between RPE signals and changes in behavioral policy (S005).

Plasticity depends on the temporal coincidence of three factors: presynaptic activity, postsynaptic activity, and dopaminergic signal. Without this triplet, the synapse doesn't change.
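The three-factor condition can be written as a multiplicative learning rule. This is a deliberately simplified sketch with a hypothetical rate `eta`. Real dopamine-modulated STDP also depends on spike timing and on eligibility traces that bridge the delay to the dopamine signal, and both are omitted here.

```python
def weight_change(pre: float, post: float, dopamine: float, eta: float = 0.1) -> float:
    """Simplified three-factor rule: the synaptic change is the product of all three signals."""
    return eta * pre * post * dopamine


print(weight_change(1.0, 1.0, +1.0))  # 0.1: coincident activity + positive RPE -> potentiation
print(weight_change(1.0, 1.0, -1.0))  # -0.1: coincident activity + negative RPE -> depression
print(weight_change(1.0, 0.0, +1.0))  # 0.0: no postsynaptic activity -> no change
```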

🔁 Correlation vs Causality: Optogenetic Evidence

Correlation between dopaminergic activity and learning doesn't prove causality. Optogenetics enabled direct testing of this relationship (S007).

Artificial activation of VTA dopaminergic neurons at the moment of action strengthened that action in the future, even without actual reward. Suppressing dopamine at the moment of receiving reward blocked learning. Dopaminergic RPE signals don't merely correlate with learning — they are necessary and sufficient for its occurrence (S007).

- Dopamine activation → action strengthening (even without reward)

- Dopamine suppression → learning blockade (despite reward)

- Conclusion: dopamine's causal role has been tested directly, not merely inferred from correlation
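One way to express these manipulations in the delta-rule framework is sketched below. This is an illustrative assumption, not the model used in S007: stimulation adds a fixed bonus to the prediction error, and silencing sets the gain on the natural error to zero. The function name and parameters are hypothetical.

```python
def simulate_action_value(trials, reward, stim=0.0, gain=1.0, alpha=0.2):
    """Delta-rule action value with an artificial dopamine term added to the RPE."""
    q = 0.0
    for _ in range(trials):
        delta = gain * (reward - q) + stim  # gain=0 models silencing the natural RPE
        q += alpha * delta
    return q


print(round(simulate_action_value(20, reward=0.0, stim=0.5), 2))  # 0.49: value rises with no reward
print(round(simulate_action_value(20, reward=1.0, gain=0.0), 2))  # 0.0: reward given, no learning
print(round(simulate_action_value(20, reward=1.0), 2))            # 0.99: ordinary learning
```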

🧩 Confounders: Attention, Motivation, and Cognitive Control

Interpretation of RPE signals is complicated by multiple confounders. Attention modulates reward processing: more salient stimuli generate stronger responses independent of RPE.

Motivational state influences subjective value: a hungry animal values food more highly, which changes baseline expectations and RPE. Cognitive control and working memory allow maintenance of complex expectations that may not conform to simple TD-learning models (S005).

| Confounder | Mechanism of Influence | How to Control |
|---|---|---|
| Attention | Amplifies neural response to salient stimuli | Equate stimulus complexity; measure attention separately |
| Motivation | Changes subjective reward value | Standardize state (hunger, thirst); vary rewards |
| Cognitive Control | Enables construction of complex expectations | Use simple tasks; measure working memory |

Individual differences in these processes create variability in RPE signals unrelated to the basic learning mechanism (S008).

🔬 Double Dissociation: Model-Free vs Model-Based Learning

RPE-based learning (model-free) isn't the only learning system. A model-based system exists in parallel, using an explicit model of environmental structure for planning (S005).

After changes in reward structure, the model-based system adapts immediately, while model-free requires repeated experience. Neuroimaging shows partial dissociation: ventral striatum is linked to model-free RPE, while dorsolateral prefrontal cortex and intraparietal sulcus are associated with model-based computations (S005).

- Model-free system

- Learns through RPE; slow adaptation to new conditions; ventral striatum.

- Model-based system

- Uses explicit environmental model; rapid adaptation; prefrontal cortex.

- Real behavior

- Combination of both strategies; complicates interpretation of neural signals.

Behavior in real tasks often represents a weighted combination of both systems, requiring more sophisticated models to explain observed activity patterns.
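The contrast between the two systems, and their weighted combination, can be sketched as follows. The task, the names, and the weight `w` are hypothetical, and the model-free values are assumed to have already converged before the change in reward structure.

```python
def q_model_based(transitions, state_reward):
    """Model-based values: recomputed on demand from the explicit model."""
    return {action: state_reward[state] for action, state in transitions.items()}


def q_hybrid(q_mf, q_mb, w):
    """Weighted mix of the two systems; w = 1 is purely model-based, w = 0 purely model-free."""
    return {a: w * q_mb[a] + (1 - w) * q_mf[a] for a in q_mf}


# Hypothetical task: each action leads deterministically to one outcome state.
transitions = {"left": "s1", "right": "s2"}
state_reward = {"s1": 1.0, "s2": 0.0}

# Model-free cache, assumed to have converged under the old reward structure.
q_mf = q_model_based(transitions, state_reward)

# The reward structure changes: s1 stops paying off, s2 starts.
state_reward = {"s1": 0.0, "s2": 1.0}
q_mb = q_model_based(transitions, state_reward)

print(q_mb)                         # {'left': 0.0, 'right': 1.0}: adapts at once
print(q_mf)                         # {'left': 1.0, 'right': 0.0}: stale until new RPEs arrive
print(q_hybrid(q_mf, q_mb, w=0.5))  # {'left': 0.5, 'right': 0.5}
```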

Conflicts in the Data: Where Sources Diverge and Why It Matters

🧩 Reward vs Salience Prediction Error: An Unresolved Debate

There is a fundamental debate about what exactly dopaminergic neurons encode. The traditional interpretation: dopamine encodes reward prediction error—the deviation from expected outcome value (S001). The alternative hypothesis: dopamine encodes salience prediction error—the deviation from expected event salience, regardless of its valence.

Research on reward positivity suggests that this component may reflect salience rather than reward specifically. The problem is that in most experiments the two signals are confounded: salient events often bring reward, while punishment is both salient and negative.

When variables correlate perfectly under laboratory conditions, it's impossible to separate their contributions to neural response. This isn't an experimenter error—it's a fundamental design problem.
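A small calculation shows why design matters. Assume a hypothetical gamble that pays +1 or -1. The two hypotheses make matching predictions on win trials but opposite ones on loss trials, so only designs that include unexpected losses (or aversive outcomes) can separate them:

```python
def hypotheses(outcome: float, p_win: float, win: float = 1.0, loss: float = -1.0):
    """Predicted response under each hypothesis for one trial of a win/loss gamble."""
    expected = p_win * win + (1 - p_win) * loss
    reward_pe = outcome - expected         # signed: sensitive to valence
    salience_pe = abs(outcome - expected)  # unsigned: sensitive only to surprise
    return round(reward_pe, 2), round(salience_pe, 2)


for p_win in (0.8, 0.2):
    for label, outcome in (("win", 1.0), ("loss", -1.0)):
        print(p_win, label, hypotheses(outcome, p_win))
# 0.8 win (0.4, 0.4)
# 0.8 loss (-1.6, 1.6)
# 0.2 win (1.6, 1.6)
# 0.2 loss (-0.4, 0.4)
```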

Contextual Modulation: Enhancement or Redefinition?

The attraction effect demonstrates that context modulates the RPE signal (S002). But the mechanism remains disputed: does context enhance the existing RPE code or completely redefine its logic?

Some studies suggest that the attraction effect rewrites option value in real time (S004). Other data point to parallel processing channels: the RPE itself remains unchanged, but its influence on behavior is modulated by a separate salience system.

| Interpretation | Prediction | Status |
|---|---|---|
| Context enhances RPE | Signal amplitude increases when a decoy is present | Confirmed in fMRI |
| Context redefines value | RPE is computed from a new baseline | Controversial; requires direct testing |
| Parallel channels | RPE and salience are independent but interact behaviorally | Theoretically attractive but difficult to test |

Age Differences: Normal Variation or Artifact?

Data on RPE across age groups are contradictory. Adolescents show enhanced response to reward prediction errors (S006), but interpretation varies: is this heightened sensitivity to errors or simply different system calibration?

In older adults, the RPE signal weakens, but pharmacologically boosting dopamine may restore it (S005). The question is whether the RPE mechanism itself degrades or only its neurochemical basis changes.

Age differences may reflect not different versions of the same mechanism, but fundamentally different learning strategies at different life stages.

Unity or Multiplicity?

The key question: do all dopaminergic neurons encode the same RPE signal, or are there subpopulations with different functions? One line of work suggests a common function (S007), while axiomatic modeling reveals deviations from the classical RPE hypothesis (S008).

If neurons are specialized, then "reward prediction error" is not a single mechanism but a family of related processes. This changes the entire logic of data interpretation.

- Why This Matters for Cognitive Immunology

- If RPE is not a universal code, then context manipulation doesn't work through a single "lever" but through multiple parallel channels. This complicates defense against cognitive traps, but also opens new intervention points.