🧠 Neuroscience

The Gold Standard of Scientific Evidence Synthesis in Medicine

Systematic reviews and meta-analyses represent the highest level of evidence, combining results from multiple studies through transparent, reproducible protocols to generate reliable clinical recommendations.

Overview

Systematic reviews and meta-analyses are fundamental tools of evidence-based medicine: they enable the systematic identification, selection, critical appraisal, and synthesis of all relevant research on a specific question. Unlike narrative reviews, they follow predetermined protocols, which minimizes systematic error and makes the results reproducible. Meta-analysis, the statistical component, combines quantitative data from independent studies, increasing statistical power and helping to resolve contradictions between individual studies. Modern standards such as PRISMA 2020 ensure transparent and complete reporting at every stage of a review.

🛡️ Laplace Protocol: The quality of a meta-analysis is determined by the quality of the included studies: combining weak studies does not create strong evidence. Critical appraisal of methodology and analysis of heterogeneity and publication bias are mandatory for correct interpretation of the results.

Deep Dive

Systematic Review Methodology: From Literature Chaos to Reproducible Protocol

Systematic reviews represent the highest tier in the hierarchy of scientific evidence. They differ from narrative reviews in their rigorous methodology: prospective protocol registration, exhaustive searches across multiple databases, and transparent documentation of every decision.

The key distinction is the minimization of systematic error through explicit inclusion and exclusion criteria established before the search begins. This prevents the subjective selection of sources that is hard to avoid in traditional literature reviews.
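Fixing the criteria before screening can be made concrete in code. The sketch below is a hypothetical illustration, not taken from any cited review: the `Criteria` and `Record` classes, their fields, and the example records are all assumptions. Freezing the dataclass mirrors the rule that criteria cannot change once screening starts, and every exclusion returns a reason that can be logged.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criteria:
    """Hypothetical eligibility criteria, frozen so they cannot change mid-screening."""
    designs: frozenset
    populations: frozenset
    min_followup_weeks: int


@dataclass
class Record:
    """A hypothetical candidate study as seen by the screener."""
    title: str
    design: str
    population: str
    followup_weeks: int


def screen(record: Record, criteria: Criteria) -> tuple[bool, str]:
    """Apply each predefined criterion in turn; return (included, reason)."""
    if record.design not in criteria.designs:
        return False, "ineligible study design"
    if record.population not in criteria.populations:
        return False, "ineligible population"
    if record.followup_weeks < criteria.min_followup_weeks:
        return False, "follow-up too short"
    return True, "meets all criteria"


# Criteria are fixed before any record is screened.
criteria = Criteria(
    designs=frozenset({"RCT"}),
    populations=frozenset({"adult"}),
    min_followup_weeks=4,
)
records = [
    Record("Trial A", "RCT", "adult", 12),
    Record("Cohort B", "cohort", "adult", 52),
    Record("Trial C", "RCT", "adolescent", 8),
    Record("Trial D", "RCT", "adult", 2),
]
decisions = [screen(r, criteria) for r in records]
for r, (included, reason) in zip(records, decisions):
    print(f"{r.title}: {'include' if included else 'exclude'} ({reason})")
```

The logged reasons are exactly what a flow diagram later reports as exclusion counts.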

Protocols and Registration: PRISMA-P as Insurance Against Post-Hoc Manipulation

Prospective protocol registration in registries such as PROSPERO is a critical mechanism for preventing selective reporting. PRISMA-P 2015 provides a 17-item checklist for developing the protocol before the review begins, covering the research question, selection criteria, search strategy, and synthesis methods.

Registration creates a public record of the researchers' intentions, so any change to primary outcomes or inclusion criteria made after seeing the results becomes visible to readers.

PRISMA 2020 keeps the 27-item structure of PRISMA 2009 but adds sub-items and more detailed requirements for abstracts, flow diagrams, protocol amendments, certainty of evidence assessment, and funding and competing interests. PRISMA compliance does not guarantee quality, but it ensures the minimum transparency needed to evaluate methodological rigor critically.

Search Strategies: From Inception to the Last Byte

A comprehensive search strategy requires systematic coverage of multiple databases. A typical protocol includes CENTRAL, MEDLINE, and Embase, each searched from database inception to a specified date.

- Search Term Transparency: complete search strings for each database, including Boolean operators and filters, must be published so the search can be reproduced.

- Expanded Search: manual screening of reference lists from key articles, contacting experts, and searching for unpublished data help minimize publication bias.

Systematic searching extends beyond electronic databases. It's a combined approach where each source is documented and justified in the protocol.
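Publishing complete search strings is easier when they are generated from explicit concept blocks. A minimal sketch, assuming a PubMed-style `[tiab]` (title/abstract) field tag; Embase and CENTRAL use their own syntax, so real strings need per-database translation. The `build_query` helper and the synonyms are illustrative only.

```python
def build_query(concepts: dict[str, list[str]], field: str = "tiab") -> str:
    """OR together the synonyms within each concept, then AND the concepts together."""
    blocks = []
    for synonyms in concepts.values():
        terms = " OR ".join(f'"{term}"[{field}]' for term in synonyms)
        blocks.append(f"({terms})")
    return " AND ".join(blocks)


# Illustrative PICO-style concept blocks (population and intervention only).
concepts = {
    "population": ["chronic pain", "persistent pain"],
    "intervention": ["pain neuroscience education", "pain education"],
}
query = build_query(concepts)
print(query)
```

Keeping `concepts` under version control alongside the protocol gives a reproducible record of every term used.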

Meta-Analysis and Statistical Synthesis: When Numbers Speak Louder Than Words

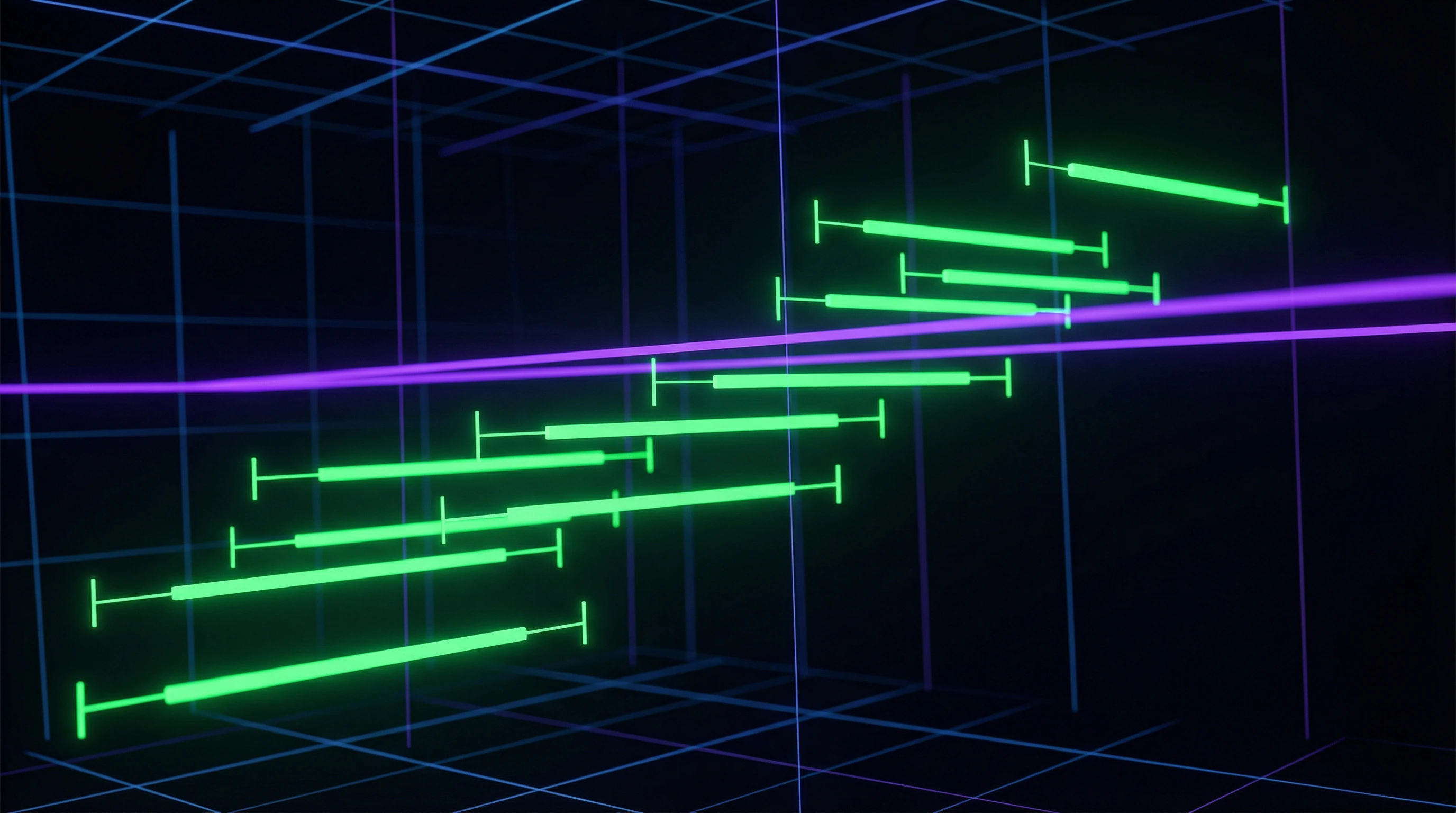

Meta-analysis is a statistical technique for combining quantitative data from multiple independent studies to obtain a single effect estimate with increased statistical power. Unlike a systematic review, which can be qualitative, meta-analysis is always quantitative and requires numerical data suitable for statistical pooling.

Critical advantage: resolving uncertainties when individual studies contradict each other, and detecting effects invisible in small samples.

Fixed and Random Effects Models: The Philosophy of Variability

The fixed effect model assumes that all included studies estimate one true effect, and differences between them are due only to random sampling error. The random effects model allows that the true effect varies between studies due to differences in populations, interventions, or design.

| Model | Assumption | Confidence Interval |
|---|---|---|
| Fixed Effect | One true effect; variation = random error | Narrower; can be misleadingly narrow when heterogeneity is present |
| Random Effects | True effect varies between studies | Wider when heterogeneity is present; reflects the additional between-study uncertainty |
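The difference between the two models shows up when the same data are pooled both ways. This sketch uses inverse-variance weights for the fixed-effect model and the DerSimonian-Laird estimator of between-study variance (τ²) for the random-effects model; DerSimonian-Laird is one common choice among several (REML and Paule-Mandel are alternatives). The effect sizes and variances are hypothetical log odds ratios.

```python
import math


def pool(effects, variances, z=1.96):
    """Return fixed-effect and random-effects estimates with 95% CIs, plus tau^2.

    Fixed effect: inverse-variance weights 1/v_i.
    Random effects: DerSimonian-Laird tau^2, weights 1/(v_i + tau^2).
    """
    if len(effects) < 2:
        raise ValueError("need at least two studies")
    w = [1 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    se_fixed = math.sqrt(1 / sum(w))

    # Cochran's Q measures dispersion around the fixed-effect estimate.
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)  # truncated at zero

    w_star = [1 / (v + tau2) for v in variances]
    rand = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se_rand = math.sqrt(1 / sum(w_star))

    fixed_ci = (fixed, fixed - z * se_fixed, fixed + z * se_fixed)
    random_ci = (rand, rand - z * se_rand, rand + z * se_rand)
    return fixed_ci, random_ci, tau2


# Hypothetical log odds ratios and their variances from four studies.
effects = [0.10, 0.30, 0.50, 0.80]
variances = [0.010, 0.020, 0.015, 0.030]
fixed_ci, random_ci, tau2 = pool(effects, variances)
print("fixed:  %.3f (%.3f to %.3f)" % fixed_ci)
print("random: %.3f (%.3f to %.3f)" % random_ci)
print("tau^2 = %.4f" % tau2)
```

With these numbers τ² is positive, so the random-effects interval is wider; when τ² is zero the two models give identical results.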

Meta-analysis of the association between BMI and breast cancer risk revealed opposite effects when stratified by menopausal status: increased risk in postmenopausal women and decreased risk in premenopausal women. A neuroscience study of pain learning showed that intervention duration significantly influenced effect size, explaining part of the heterogeneity between studies.

Heterogeneity and Publication Bias: Detective Work with Data

The I² statistic quantifies the proportion of variability between studies attributable to true heterogeneity: values of 25%, 50%, and 75% are interpreted as low, moderate, and high heterogeneity respectively. High heterogeneity does not disqualify meta-analysis, but requires investigation through subgroup and moderator analysis.

- Identify sources of variability between studies

- Conduct sensitivity analysis by excluding studies with high risk of bias

- Test robustness of conclusions to methodological quality
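The I² benchmarks quoted above are derived from Cochran's Q: I² = max(0, (Q − df)/Q) × 100%, the share of total variability not explained by sampling error. A minimal sketch with hypothetical data; the `label` helper simply encodes the 25/50/75% benchmarks from the text, which are rules of thumb rather than strict cut-offs.

```python
def heterogeneity(effects, variances):
    """Return Cochran's Q, its degrees of freedom, and I^2 (in percent)."""
    w = [1 / v for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - pooled) ** 2 for wi, yi in zip(w, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, df, i2


def label(i2):
    """Map I^2 to the 25/50/75% benchmarks (rules of thumb, not strict cut-offs)."""
    if i2 >= 75:
        return "high"
    if i2 >= 50:
        return "moderate"
    if i2 >= 25:
        return "low"
    return "below the 'low' benchmark"


q, df, i2 = heterogeneity([0.10, 0.30, 0.50, 0.80], [0.010, 0.020, 0.015, 0.030])
print(f"Q = {q:.2f} on {df} df, I^2 = {i2:.1f}% ({label(i2)})")
```

These hypothetical data give an I² of roughly 79%, which would call for the subgroup and sensitivity analyses listed above.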

Publication bias occurs when studies with positive results are published more often than those with negative results, which typically inflates the pooled effect estimate. Funnel plots visualize asymmetry in the distribution of effect sizes, while Egger's regression test and Begg's rank correlation test check for it formally. Asymmetry can also arise from other small-study effects, so a positive test does not by itself prove publication bias.

Including unpublished data through contact with researchers and searching clinical trial registries partially mitigates publication bias, but complete elimination is impossible.
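Egger's test is an ordinary least-squares regression of each study's standardized effect (effect/SE) on its precision (1/SE); an intercept far from zero suggests funnel-plot asymmetry. The sketch below returns the intercept and its t statistic (n − 2 degrees of freedom) using only the standard library; turning t into a p-value would need a t-distribution function (for example from SciPy), which is omitted. The `egger_test` name and the input values are hypothetical.

```python
import math


def egger_test(effects, std_errors):
    """Egger's regression test for funnel-plot asymmetry.

    Regresses effect/SE (standard normal deviate) on 1/SE (precision) by OLS.
    Returns (intercept, SE of intercept, t statistic with n - 2 df).
    """
    n = len(effects)
    if n < 3:
        raise ValueError("Egger's test needs at least three studies")
    x = [1 / se for se in std_errors]
    y = [e / se for e, se in zip(effects, std_errors)]
    x_mean, y_mean = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    sse = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    resid_var = sse / (n - 2)
    se_intercept = math.sqrt(resid_var * (1 / n + x_mean ** 2 / sxx))
    return intercept, se_intercept, intercept / se_intercept


# Hypothetical: the least precise studies report the largest effects.
b0, se_b0, t = egger_test([1.5, 1.0, 0.875, 0.7], [0.5, 0.25, 0.125, 0.1])
print(f"intercept = {b0:.2f}, SE = {se_b0:.2f}, t = {t:.2f} (df = 2)")
```

With only four studies the test has very little power, which is why funnel-plot tests are usually reserved for meta-analyses with around ten or more studies.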

Network Meta-Analysis: Multidimensional Chess Game of Interventions

Network meta-analysis extends traditional pairwise meta-analysis, allowing simultaneous comparison of multiple interventions even in the absence of direct head-to-head comparisons between all pairs. The methodology uses both direct evidence from studies directly comparing two interventions and indirect evidence through a common comparator, creating a coherent network of comparisons.

The critical advantage is the ability to rank all available interventions by efficacy and safety, informing clinical decisions in the context of multiple therapeutic options.

Indirect Comparisons and Transitivity: Logic of Transitive Inference

Indirect comparison of interventions A and C through a common comparator B combines the A-versus-B and B-versus-C effect estimates. It relies on the assumption of transitivity: intuitively, if A is superior to B and B is superior to C, then A should be superior to C, but this holds only when the studies behind each comparison are similar in effect modifiers, that is, characteristics that may influence the relative efficacy of interventions.
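The arithmetic behind an adjusted indirect comparison (the Bucher method) is simple once effects are on an additive scale such as log odds ratios: the A-versus-C effect is the sum of the A-versus-B and B-versus-C effects, and because the two sets of trials are independent their variances add. A minimal sketch with hypothetical inputs; the code cannot check transitivity, which remains a judgment about effect modifiers.

```python
import math


def indirect_estimate(d_ab, se_ab, d_bc, se_bc):
    """Bucher adjusted indirect comparison of A vs C through common comparator B.

    d_xy is the effect of X relative to Y on an additive scale (e.g. log OR).
    If trials report C vs B instead, pass d_bc = -d_cb (the SE is unchanged).
    """
    d_ac = d_ab + d_bc
    se_ac = math.sqrt(se_ab ** 2 + se_bc ** 2)
    return d_ac, se_ac


# Hypothetical: OR 0.80 for A vs B and OR 0.90 for B vs C.
d_ac, se_ac = indirect_estimate(math.log(0.80), 0.10, math.log(0.90), 0.20)
low, high = math.exp(d_ac - 1.96 * se_ac), math.exp(d_ac + 1.96 * se_ac)
print(f"indirect OR A vs C = {math.exp(d_ac):.2f} (95% CI {low:.2f} to {high:.2f})")
```

The indirect standard error is larger than either direct one, which is why indirect evidence is usually less precise than a head-to-head trial of similar size.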

Violation of transitivity occurs when studies comparing A to B systematically differ from studies comparing B to C in population, dosage, or concomitant interventions.

- Inconsistency Statistics: test the transitivity assumption by assessing agreement between direct and indirect evidence. A significant discrepancy signals a potential violation.

- Sensitivity Analysis: excludes network nodes at high risk of transitivity violation, testing the robustness of intervention rankings.
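For a single loop of evidence, agreement between direct and indirect estimates can be checked with a z-test on their difference, in the spirit of Bucher and colleagues; larger networks need network-wide approaches such as node-splitting. A minimal sketch with hypothetical log odds ratios and a normal approximation; `inconsistency_test` is an illustrative name.

```python
import math


def inconsistency_test(d_direct, se_direct, d_indirect, se_indirect):
    """Z-test for disagreement between direct and indirect estimates of one contrast.

    Returns (difference, SE of difference, two-sided p-value).
    """
    diff = d_direct - d_indirect
    se_diff = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    z = diff / se_diff
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return diff, se_diff, p_value


diff, se_diff, p_value = inconsistency_test(0.5, 0.3, 0.1, 0.4)
print(f"difference = {diff:.2f}, SE = {se_diff:.2f}, p = {p_value:.2f}")
```

A large p-value, as with these inputs, does not prove consistency: with few studies such tests have low power.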

The RAIN protocol (systematic Review and Artificial Intelligence Network meta-analysis) for COVID-19 demonstrates the application of network meta-analysis to a rapidly evolving evidence base with multiple therapeutic candidates.

Ranking Interventions: From Probabilities to Clinical Decisions

Network meta-analysis can rank interventions probabilistically using SUCRA (Surface Under the Cumulative RAnking curve), a metric on which 100% means an intervention is certain to be the best and 0% that it is certain to be the worst. Ranking accounts not only for point estimates of effect but also for uncertainty: an intervention with a moderate effect and a narrow confidence interval may rank higher than one with a larger effect but a wide interval.
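SUCRA is computed from the matrix of rank probabilities that a network meta-analysis (typically Bayesian) produces: for each intervention, average its cumulative probabilities of being in the top 1, top 2, and so on up to the top a − 1 positions, where a is the number of interventions. A minimal sketch; the rank probabilities below are hypothetical.

```python
def sucra(rank_probs):
    """SUCRA for each treatment.

    rank_probs[t][k] is the probability that treatment t has rank k + 1 (rank 1 = best).
    SUCRA_t = (sum over k = 1..a-1 of cumulative P(rank <= k)) / (a - 1).
    """
    a = len(rank_probs[0])
    scores = []
    for probs in rank_probs:
        cumulative = 0.0
        area = 0.0
        for k in range(a - 1):  # last rank excluded: its cumulative is always 1
            cumulative += probs[k]
            area += cumulative
        scores.append(area / (a - 1))
    return scores


# Hypothetical rank probabilities for three interventions (rows sum to 1).
probs = [
    [0.6, 0.3, 0.1],
    [0.3, 0.5, 0.2],
    [0.1, 0.2, 0.7],
]
for name, score in zip(["X", "Y", "Z"], sucra(probs)):
    print(f"{name}: SUCRA = {score:.0%}")
```

An equivalent form is SUCRA = (a − mean rank)/(a − 1), which shows that it summarizes the whole rank distribution rather than only the probability of being best.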

An intervention optimal on average across the network may be suboptimal for a specific patient subgroup. Stratification by clinical characteristics is critical for translating rankings into action.

A meta-analysis of anti-VEGF therapies for macular degeneration illustrates the clinical value: ranking by efficacy and safety simultaneously informs the choice between aflibercept, ranibizumab, and bevacizumab.

Integrating artificial intelligence into network meta-analysis, as proposed in the RAIN protocol, is intended to automate data extraction and risk of bias assessment, accelerating evidence synthesis during a pandemic.

The inositol study in polycystic ovary syndrome (PCOS) demonstrates the importance of stratification: myo-inositol showed superiority over D-chiro-inositol for reproductive outcomes, but the combination proved optimal for metabolic parameters.

PRISMA 2020 Reporting Standards: From Checklist to Synthesis Transparency

PRISMA 2020 is an updated set of guidelines that replaces the 2009 version. Its 27-item checklist covers every stage, from formulating the question (for example, with the PICO structure) to interpreting results in light of their limitations.

Key difference: expanded requirements for describing search methods, assessing certainty of evidence, and reporting data synthesis. This enhances reproducibility and allows readers to verify each step of the authors' logic.

27-Item Checklist and Data Flow Diagrams

The checklist is organized into sections: title, abstract, introduction, methods, results, discussion, and other information (registration, funding, competing interests, and data availability). Each section contains specific reporting requirements.

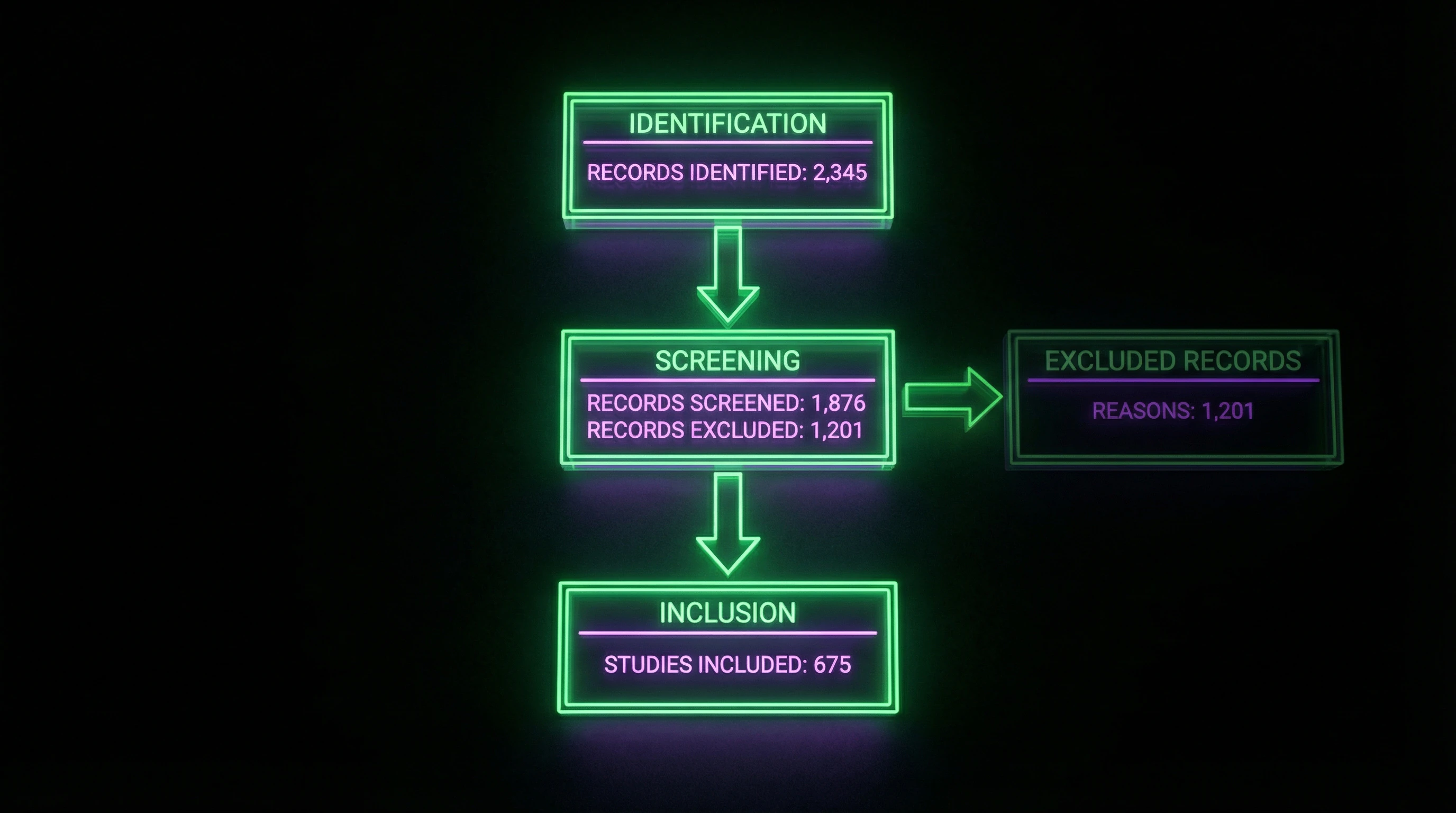

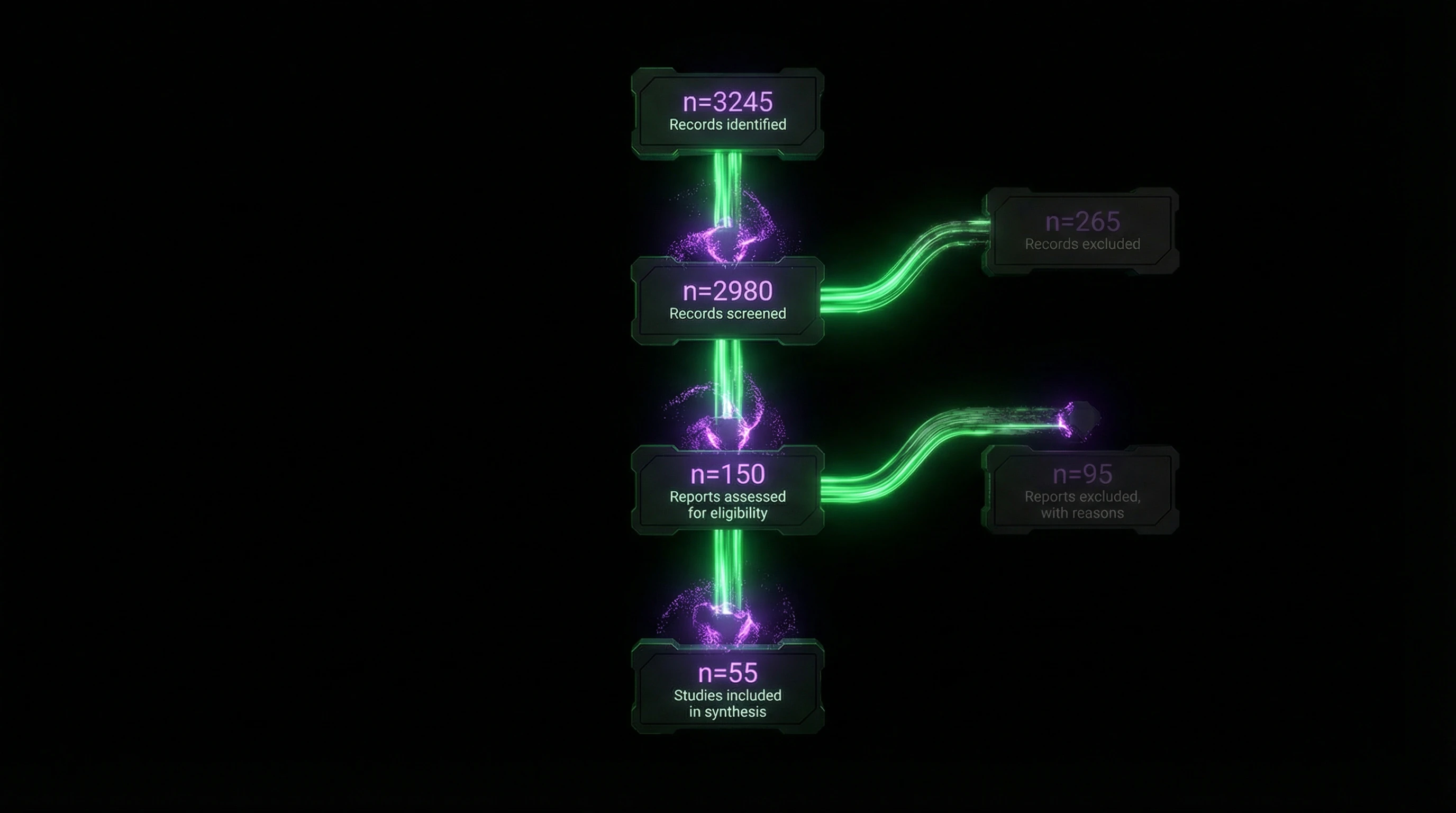

The flow diagram visualizes the selection process: number of records identified through databases → excluded at screening → assessed for eligibility → finally included in synthesis. Example: a neuroscience review on pain started with 6,850 records, but only 37 studies met inclusion criteria.

The flow diagram isn't decoration. It's a verification protocol: readers see where and why studies were filtered out, and can assess whether relevant work was lost.
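Because each box in the flow diagram is derived from the one before it, the counts can be checked mechanically. The sketch below is a simplified, hypothetical model (the PRISMA 2020 template has more boxes, such as reports not retrieved and records from other sources), and the numbers are illustrative rather than taken from the pain review.

```python
from dataclasses import dataclass, field


@dataclass
class FlowDiagram:
    """Simplified PRISMA-style flow; derived counts are computed, not typed in."""
    identified: int
    duplicates_removed: int
    excluded_at_screening: int
    full_text_exclusions: dict[str, int] = field(default_factory=dict)

    @property
    def screened(self) -> int:
        return self.identified - self.duplicates_removed

    @property
    def assessed_for_eligibility(self) -> int:
        return self.screened - self.excluded_at_screening

    @property
    def included(self) -> int:
        return self.assessed_for_eligibility - sum(self.full_text_exclusions.values())

    def validate(self) -> None:
        """Fail loudly if any stage would have a negative count."""
        for stage in ("screened", "assessed_for_eligibility", "included"):
            if getattr(self, stage) < 0:
                raise ValueError(f"negative count at stage: {stage}")


flow = FlowDiagram(
    identified=1000,
    duplicates_removed=200,
    excluded_at_screening=700,
    full_text_exclusions={"wrong population": 40, "no control group": 35},
)
flow.validate()
print(flow.screened, flow.assessed_for_eligibility, flow.included)
```

Recording full-text exclusions by reason is what lets readers judge whether relevant work was lost.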

A separate checklist for abstracts ensures brief but complete presentation of key review elements in structured format — critical for rapid reader screening.

Differences from PRISMA 2009 and Expanded Requirements

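PRISMA 2020 requires the full search strategies for all databases, registers, and websites searched, together with the date each source was last searched; PRISMA 2009 asked for the full strategy for only one database. This allows another researcher to reproduce the search or update the review.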

- Risk of Bias Assessment: authors must specify the tools and methods used for critical appraisal, including how many reviewers assessed each study and whether they worked independently, and present the results for each included study. Under PRISMA 2009, these methods were often described vaguely.

- Certainty of Evidence (GRADE): the new version requires an explicit statement of the assessment system used (e.g., GRADE) and discussion of limitations at both the individual-study and the overall-review level.

- PRISMA-P 2015: complements the main checklist with 17-item guidance for protocols and emphasizes pre-registering the methodology in databases such as PROSPERO, which helps guard against p-hacking and selective reporting.

Registering a protocol before starting a review is not bureaucracy. It is a safeguard that lets readers verify that the authors did not retroactively rewrite their methods to fit the results.

Assessing Risk of Bias: From Tools to Interpretation

Combining low-quality data does not produce high-quality evidence. Risk of bias is assessed across multiple domains: randomization, allocation concealment, blinding of participants and outcome assessors, completeness of data, and selective reporting.

In a review on pain neuroscience education, 78% of studies had high risk of bias due to the impossibility of blinding in educational interventions. Systematic documentation of assessment for each study allows readers to judge the reliability of conclusions.

Critical Appraisal Tools and Risk Domains

Cochrane Risk of Bias (RoB 2) structures assessment of randomized controlled trials across five domains: randomization process, deviations from intended interventions, missing outcome data, measurement of outcomes, and selective reporting.

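| Tool | Study Type | Key Domains |
|---|---|---|
| RoB 2 | Randomized controlled trials | Randomization process, deviations from intended interventions, missing outcome data, measurement of the outcome, selection of the reported result |
| ROBINS-I | Non-randomized studies of interventions | Confounding, selection of participants, classification of interventions, plus post-intervention domains |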

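In RoB 2, each domain is rated as low risk, some concerns, or high risk based on signaling questions, and the overall judgment generally reflects the worst domain; a trial may also be rated high risk overall when several domains raise some concerns. ROBINS-I accounts for additional sources of bias in non-randomized studies, such as confounding, and rates risk as low, moderate, serious, or critical.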
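The worst-domain rule for the overall RoB 2 judgment is easy to encode. A minimal sketch, assuming domain judgments are stored as plain strings; it deliberately does not automate the RoB 2 option of escalating a trial with several "some concerns" domains to high risk, which remains a reviewer's judgment. The function name and example trial are hypothetical.

```python
SEVERITY = {"low": 0, "some concerns": 1, "high": 2}

ROB2_DOMAINS = (
    "randomization process",
    "deviations from intended interventions",
    "missing outcome data",
    "measurement of the outcome",
    "selection of the reported result",
)


def overall_risk_of_bias(judgments: dict[str, str]) -> str:
    """Return the worst domain-level judgment as the overall rating."""
    missing = [d for d in ROB2_DOMAINS if d not in judgments]
    if missing:
        raise ValueError(f"unassessed domains: {missing}")
    unknown = set(judgments.values()) - set(SEVERITY)
    if unknown:
        raise ValueError(f"unrecognized judgments: {unknown}")
    return max(judgments.values(), key=SEVERITY.__getitem__)


# Hypothetical trial of an educational intervention (participants cannot be blinded).
trial = {
    "randomization process": "low",
    "deviations from intended interventions": "some concerns",
    "missing outcome data": "low",
    "measurement of the outcome": "low",
    "selection of the reported result": "low",
}
print(overall_risk_of_bias(trial))
```

Requiring every domain to be present prevents an unassessed domain from silently counting as low risk.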

Interpreting Results Considering Methodological Quality

High heterogeneity between studies is often explained by differences in methodological quality. Sensitivity analysis excluding high-risk studies reveals whether effects are overestimated.

In a meta-analysis of pain neuroscience education, the effect on pain intensity was attenuated when the analysis was restricted to studies at low risk of bias, indicating that lower-quality studies overestimated the effect.

The GRADE (Grading of Recommendations Assessment, Development and Evaluation) system integrates risk of bias assessment with inconsistency, indirectness, imprecision, and publication bias to determine overall certainty of evidence.

- Assess risk of bias across domains (randomization, blinding, data completeness)

- Conduct sensitivity analysis excluding high-risk studies

- Apply GRADE to integrate quality with other uncertainty factors

- Document limitations for clinicians and guideline developers
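The GRADE logic summarized in the list can be sketched as a small function: evidence from randomized trials starts at high certainty and observational evidence at low, and each of the five domains can lower it by one or two levels. This is a simplified, hypothetical encoding; real GRADE ratings are documented judgments, not arithmetic, and upgrading (for example, for a large effect) normally applies only to observational evidence.

```python
CERTAINTY = ["very low", "low", "moderate", "high"]
GRADE_DOMAINS = {"risk of bias", "inconsistency", "indirectness", "imprecision", "publication bias"}


def grade_certainty(randomized: bool, downgrades: dict[str, int], upgrades: int = 0) -> str:
    """Start at high (RCTs) or low (observational), subtract downgrades, add upgrades."""
    for domain, steps in downgrades.items():
        if domain not in GRADE_DOMAINS:
            raise ValueError(f"unknown GRADE domain: {domain}")
        if steps not in (0, 1, 2):
            raise ValueError("each domain downgrades by 0, 1 or 2 levels")
    level = (3 if randomized else 1) - sum(downgrades.values()) + upgrades
    return CERTAINTY[max(0, min(level, 3))]  # clamp to the four GRADE levels


# Hypothetical: RCT evidence downgraded for risk of bias and imprecision.
print(grade_certainty(True, {"risk of bias": 1, "imprecision": 1}))
```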

Practical Application and Limitations: From Statistics to Clinical Practice

Statistical significance in meta-analysis does not always correspond to clinical significance. Pooling large samples can detect minimal effects that lack practical value.

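In an educational review on pain neuroscience, a standardized mean difference (SMD) of −0.26 for pain intensity was statistically significant but did not reach the minimal clinically important difference (MCID) of 1.5 points on a 10-point scale. Comparing the two requires re-expressing the SMD in scale points by multiplying it by the standard deviation of the outcome.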

Intervention duration significantly influenced effect size: programs lasting more than 30 minutes showed clinically significant pain reduction, whereas brief interventions did not.

This underscores the need to interpret results in the context of minimal clinically important differences specific to each outcome and population.
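Comparing an SMD with an MCID expressed in scale points requires converting the SMD back to points, that is, multiplying by a standard deviation of the outcome. The sketch below assumes a hypothetical pooled SD of 2.0 points and a hypothetical 95% CI for the SMD (the review's actual values are not given here), and classifies the result against the 1.5-point MCID, treating negative values as pain reduction.

```python
def interpret_against_mcid(smd, ci_low, ci_high, pooled_sd, mcid):
    """Express an SMD and its CI in scale points (SMD x SD) and compare with the MCID.

    Assumes lower scores are better, so benefit appears as negative differences.
    """
    est, low, high = (v * pooled_sd for v in (smd, ci_low, ci_high))
    if high <= -mcid:
        verdict = "clinically important"  # entire CI at or beyond the MCID
    elif low > -mcid:
        verdict = "below MCID"  # entire CI short of the MCID
    else:
        verdict = "uncertain"  # CI straddles the MCID
    return est, low, high, verdict


# SMD from the text; the CI and the SD of 2.0 points are hypothetical.
est, low, high, verdict = interpret_against_mcid(-0.26, -0.45, -0.07, pooled_sd=2.0, mcid=1.5)
print(f"{est:.2f} points (95% CI {low:.2f} to {high:.2f}): {verdict}")
```

The same SMD corresponds to a larger point difference when the outcome's SD is larger, so the SD used for the conversion should always be reported.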

Clinical Significance Versus Statistical Significance

Confidence intervals of pooled effect estimates inform precision and clinical interpretation. Wide intervals crossing the clinical significance threshold indicate uncertainty about the intervention's practical value.

In a network meta-analysis of inositol for polycystic ovary syndrome, myo-inositol showed an odds ratio of 2.38 (95% CI 1.43–3.95) for ovulation restoration compared with placebo, an improvement that is both statistically and clinically significant.

| Outcome | Intervention | Effect | Interpretation |
|---|---|---|---|
| Ovulation restoration | Myo-inositol vs placebo | OR 2.38 (95% CI 1.43–3.95) | Statistically and clinically significant |
| Metabolic outcomes | Myo- + D-chiro-inositol (40:1) | Superiority confirmed | Requires stratification by outcome types |
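A reported confidence interval can be used to check a result or recover its standard error, provided the interval was computed symmetrically on the log scale, as is standard for odds ratios. A minimal sketch applied to the myo-inositol estimate above (OR 2.38, 95% CI 1.43–3.95); the function name is hypothetical and the p-value uses a normal approximation.

```python
import math


def se_from_ratio_ci(ratio, lower, upper, z_crit=1.96):
    """Back-calculate SE(log ratio), the z statistic, and a two-sided p-value from a 95% CI."""
    se = (math.log(upper) - math.log(lower)) / (2 * z_crit)
    z = math.log(ratio) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    return se, z, p_value


se, z, p_value = se_from_ratio_ci(2.38, 1.43, 3.95)
print(f"SE(log OR) = {se:.3f}, z = {z:.2f}, p = {p_value:.4f}")
```

This gives an SE of about 0.26 on the log scale and p < 0.001. The geometric midpoint of the interval, exp((ln 1.43 + ln 3.95)/2), is about 2.38, matching the reported point estimate, which is a quick consistency check.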

Heterogeneity of effects between subgroups (I² > 50%) requires caution in generalizing results and may indicate the need for individualized treatment approaches.

The Role of Artificial Intelligence in Automating Evidence Synthesis

Integration of artificial intelligence in systematic reviews automates labor-intensive stages: screening titles and abstracts, data extraction, and risk of bias assessment. Machine learning can reduce screening time by 30–70% while maintaining sensitivity above 95%.

Automation requires validation: algorithms learn from existing data and may reproduce biases in training sets or miss studies with non-standard terminology.

In a diagnostic meta-analysis of AI-assisted parathyroid gland identification, pooled sensitivity was 93.8%, but heterogeneity between studies (I² = 89%) indicated algorithm variability and the need for standardization.
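Claims about screening workload and sensitivity can be checked against a human-screened reference set. The sketch below computes recall (sensitivity), the share of records the tool removes from human screening, and work saved over sampling (WSS), a metric commonly used in the screening-automation literature. The function name and counts are hypothetical.

```python
def screening_metrics(tp, fn, fp, tn):
    """Evaluate an ML screening tool against human include/exclude decisions.

    tp/fn: relevant records the tool kept/discarded;
    fp/tn: irrelevant records the tool kept/discarded.
    """
    total = tp + fn + fp + tn
    recall = tp / (tp + fn)  # sensitivity: share of relevant records retained
    screened_out = (tn + fn) / total  # share of records humans no longer read
    wss = screened_out - (1 - recall)  # work saved over sampling
    return recall, screened_out, wss


recall, screened_out, wss = screening_metrics(tp=95, fn=5, fp=400, tn=500)
print(f"recall = {recall:.0%}, screened out = {screened_out:.1%}, WSS = {wss:.1%}")
```

Here the tool removes about half of the screening load but misses 5 of 100 relevant records, which is why recall must be validated before automated screening is trusted.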

- Living systematic reviews, continuously updated through AI monitoring of new publications, are a promising direction for evidence synthesis in rapidly evolving fields.

- Reviews must report transparently what role automation played at each stage of the process.

- Automation is especially valuable for rapid evidence synthesis during pandemics and other crises.

Frequently Asked Questions

A systematic review is a study that uses rigorous, explicit methods to search for, select, and analyze all relevant research on a specific question. Unlike a narrative review, it follows a predefined protocol and reporting standards (such as PRISMA), minimizes subjectivity, and ensures reproducibility. This places systematic reviews at the top of the hierarchy of evidence in medicine.

Meta-analysis is a statistical method for combining quantitative data from multiple independent studies to obtain a single effect estimate. It increases statistical power and helps resolve contradictions between individual studies. It is used in clinical medicine, epidemiology, and neuroscience to obtain more precise conclusions.

PRISMA 2020 is an updated reporting standard for systematic reviews, including a 27-item checklist, abstract checklist, and flow diagrams. It replaced the 2009 version and ensures transparency, completeness, and reproducibility of publications. Adherence to PRISMA increases the quality and trustworthiness of review results.

Systematic reviews are not free of systematic error, despite their rigorous methodology. Publication bias, low quality of included studies, and incomplete literature searches can distort their conclusions. Critical assessment of risk of bias and sensitivity analysis are therefore mandatory for proper interpretation.

High heterogeneity between studies requires careful interpretation. Moderator and subgroup analyses help identify sources of differences (such as dosage, duration, population characteristics). If heterogeneity is unexplained, pooling data may be inappropriate, and one should limit the analysis to qualitative synthesis.

Prospective protocol registration (for example, in PROSPERO) helps prevent selective reporting of results and post-hoc changes to methodology. This increases transparency and reduces the risk of data manipulation. PRISMA-P 2015 emphasizes developing a protocol before the review starts to ensure scientific rigor.

It is necessary to systematically search multiple databases (MEDLINE, Embase, CENTRAL) from their inception to a specified date. Search queries must be transparently documented, including keywords and filters. Additionally, reference lists of identified articles and gray literature should be checked for completeness of coverage.

Network meta-analysis allows simultaneous comparison of multiple interventions, even in the absence of direct comparisons between them. It uses indirect comparisons through a common comparator and allows ranking of treatment methods. It is used to optimize treatment selection when multiple options are available.

Standardized critical appraisal tools are used to identify methodological limitations (randomization, blinding, completeness of data). Risk of bias assessment informs interpretation of results and helps determine the reliability of conclusions. Studies with high risk may be excluded in sensitivity analysis.

The fixed-effect model assumes a single true effect across all studies, while the random-effects model allows the true effect to vary between studies. In the presence of heterogeneity, the random-effects model is more conservative and produces wider confidence intervals. The choice of model should rest mainly on whether a single common effect is plausible for the included studies, not only on a statistical test of heterogeneity, which has low power when studies are few.

A meta-analysis is not necessarily equivalent to a large RCT. It combines data from studies with different protocols, populations, and quality levels, which can introduce systematic bias. A well-designed large trial is often preferable, especially when the available data show high heterogeneity or low quality.

Statistical significance does not guarantee clinical significance. Large pooled samples in meta-analyses can detect minimal effects that lack practical importance, so effect size, confidence intervals, and clinical context must be evaluated to determine real-world benefit.

AI can automate labor-intensive stages such as title screening, data extraction, and risk of bias assessment. This accelerates the process and can reduce human error, but requires validation. Meta-analyses also evaluate AI tools themselves, such as AI-assisted identification of parathyroid glands and other automated diagnostic systems.

Moderator analysis examines how effect size depends on study characteristics (dosage, duration, population). For example, the effect of pain neuroscience education may vary based on intervention length. This helps optimize clinical guidelines and understand sources of heterogeneity.

Studies of different designs (RCTs and observational studies) can technically be combined, but this requires caution because their risk of bias differs. It is usually preferable to combine studies of the same type and to analyze different designs separately or as subgroups, since mixing can increase heterogeneity and complicate interpretation.

Publication bias (preferential publication of positive results) can inflate effect estimates. Funnel plots, Egger's test, and correction methods such as trim-and-fill are used to detect and adjust for it. When significant bias exists, conclusions should be stated cautiously, noting possible overestimation of the effect.