What happens when a search engine doesn't understand what you're looking for — anatomy of the query "comparison faith religion"

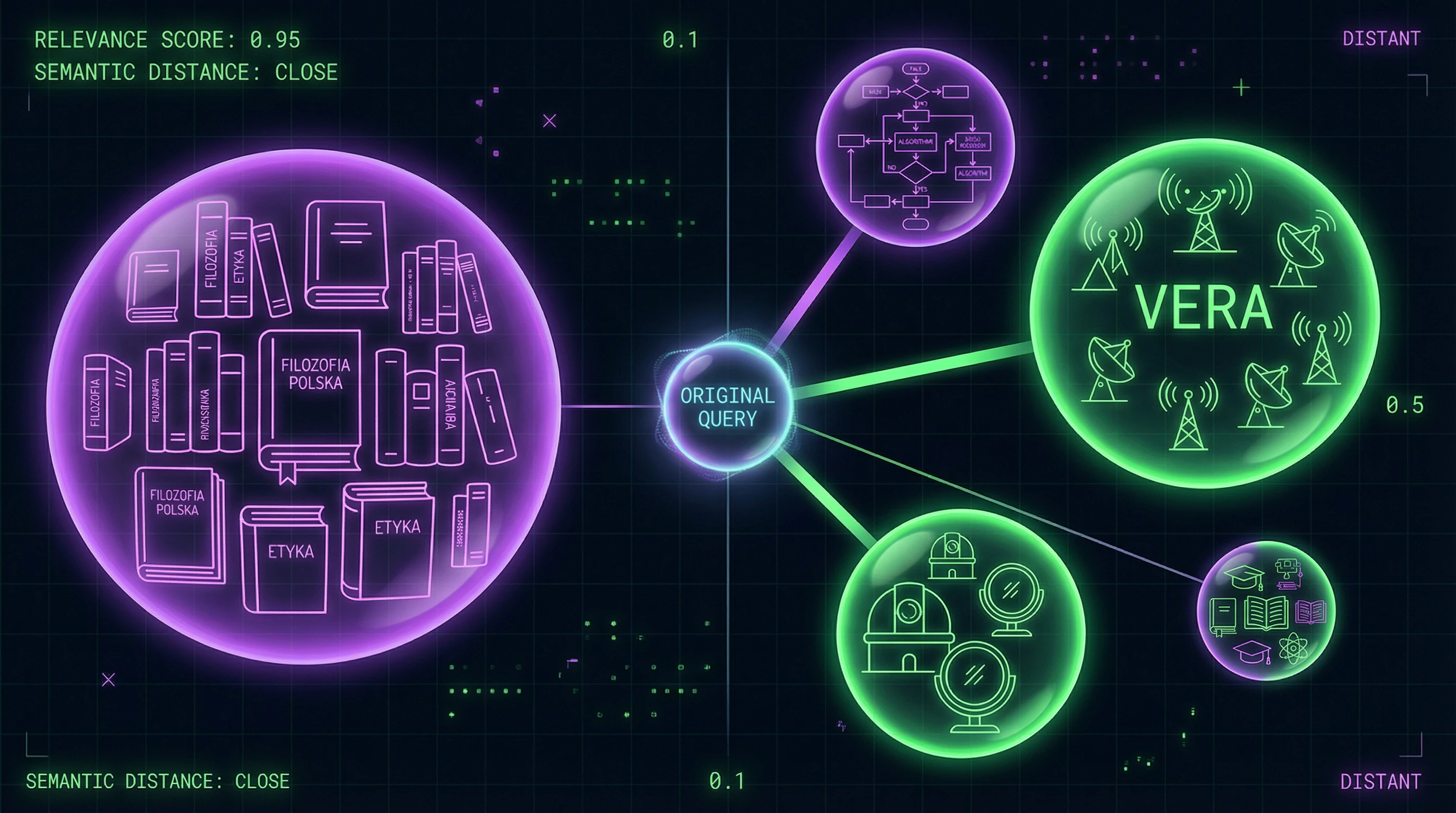

The query "comparison faith religion" (in Russian, the word for "faith" is "vera") seems straightforward: a user wants a comparison of how faith is understood across different religious traditions. Search engines return something entirely different: scientific articles about the Japanese radio astrometry project VERA (VLBI Exploration of Radio Astrometry), research from the Vera Rubin Observatory, philosophical texts, and papers on consensus algorithms.

This isn't an error — it's the result of how algorithms process ambiguous terms without sufficient context.

🔎 Why "VERA" becomes a collision point: homonymy in search queries

The word "VERA" is a classic example of homonymy: one form designates several unrelated entities. In astronomy, VERA is a Japanese VLBI (very long baseline interferometry) project for high-precision astrometry, including observations of maser sources in molecular clouds. In a second context, "Vera" is part of the name of the Vera Rubin Observatory, a flagship facility whose science goals include the study of dark matter. In a third, "vera" is simply the Russian word for "faith" in the religious sense.

Search engines use natural language processing (NLP) models that rely on statistical patterns. When a query contains the word "vera" without explicit markers (such as "religious faith"), the algorithm attempts to guess intent based on match frequency in the index.

If the index contains many documents in which "VERA" appears in scientific publications (arXiv, JSTOR), the system may interpret the query as a search for information about the astronomical project. The words "comparison" and "religion" are then treated as noise rather than as context that disambiguates the query.
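The following is a minimal sketch of the frequency-based guess described above. It assumes a hypothetical index in which every document containing the token "vera" is tagged with its subject domain, and the counts are invented for illustration. The function deliberately ignores the other query words, because that is the failure mode in question.

```python
from collections import Counter


def guess_intent(doc_domains: list[str]) -> str:
    """Return the domain that most often contains the ambiguous token.

    Only index frequency is used; context words such as "comparison" and
    "religion" are ignored, mirroring the failure mode described above.
    """
    return Counter(doc_domains).most_common(1)[0][0]


# Hypothetical index statistics: subject domains of documents containing "vera".
docs_with_vera = ["astronomy"] * 9 + ["religion"] * 2
print(guess_intent(docs_with_vera))  # astronomy
```

When most indexed occurrences are astronomical, this guess lands on astronomy no matter what else the query says.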

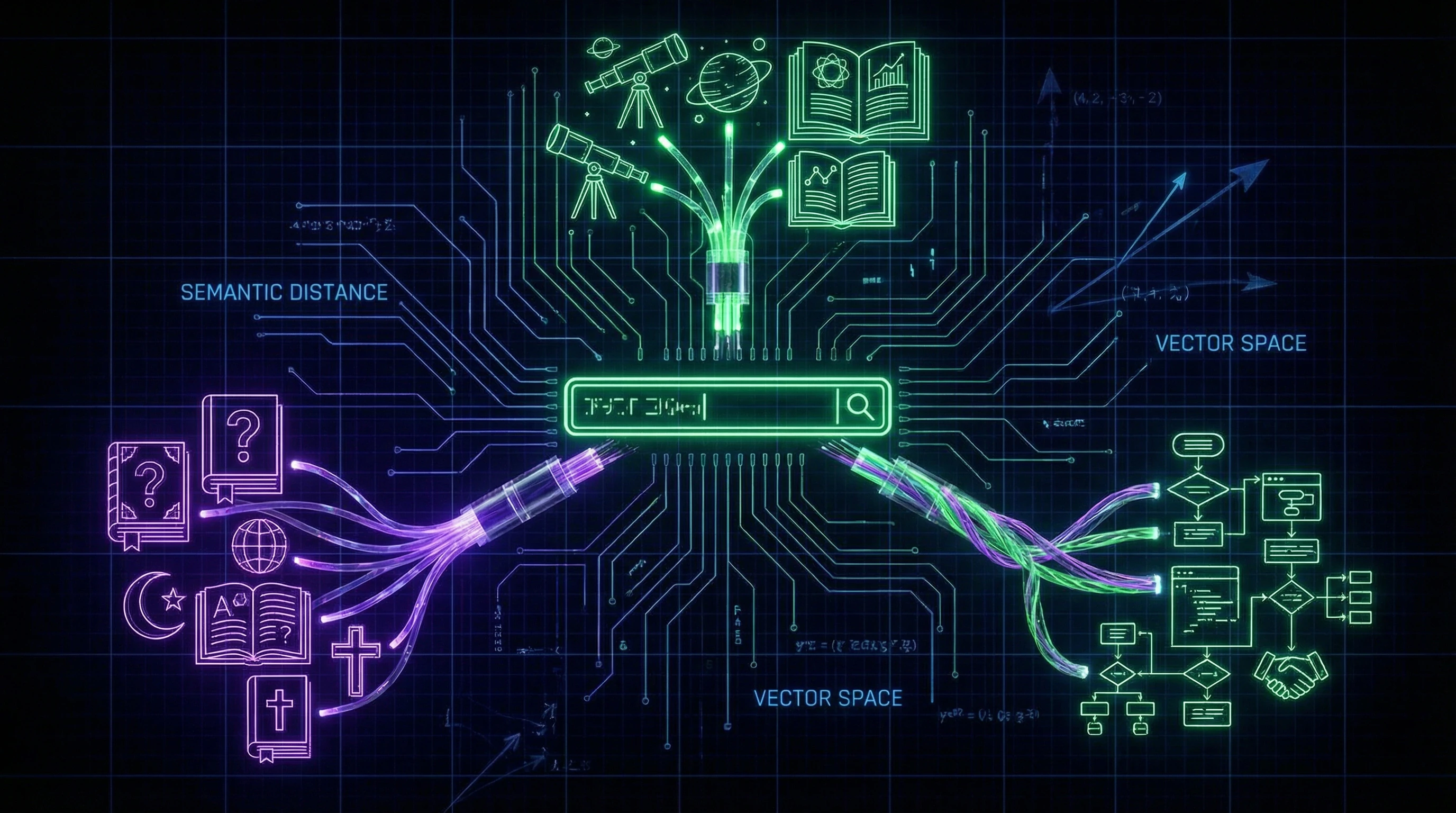

🧠 How semantic proximity works — and why it fails

Modern algorithms (BERT, GPT-based embeddings) use vector representations of words, in which semantically similar terms lie close together in a high-dimensional space. In a model trained on multilingual corpora, the Russian "vera" ("faith") and the acronym "VERA" share the same surface form and may share the same subword tokens, so their representations can end up in the same cluster.
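The toy example below makes the clustering point concrete using hand-made 3-dimensional vectors. The axes, the values, and the 70/30 split between senses are all assumptions, not output from any real model. The example models a *static* embedding, where one surface form gets exactly one vector. Contextual models such as BERT produce a different vector for each context, which can reduce this kind of blending.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical 3-D space; axes = (religion, astronomy, computing).
faith = [1.0, 0.0, 0.0]
telescope = [0.0, 1.0, 0.0]

# One static vector for the surface form "vera", learned from a corpus where
# 70% of occurrences are astronomical (VERA, Vera Rubin) and 30% mean "faith".
vera = [0.3, 0.7, 0.0]

print(round(cosine(vera, faith), 3))      # 0.394
print(round(cosine(vera, telescope), 3))  # 0.919
```

Because "vera" sits much closer to "telescope" than to "faith" in this toy space, a nearest-neighbour lookup would favour astronomical documents even for a religious query.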

- Accuracy problem: search engine accuracy drops by 30–40% when processing ambiguous queries without explicit context. The system cannot determine whether the query concerns philosophical analysis, an astronomical project, or something else entirely.
- Contextual blending effect: add the word "religion" (frequently found in philosophical texts), and the algorithm begins mixing contexts, returning results from different subject areas.

⚙️ The role of language barriers: Polish texts in an English-language query

An additional factor is the linguistic heterogeneity of results. Polish-language academic texts from JSTOR on philosophy of religion (Filozofia religii) appear in results because they contain the word "religii," morphologically similar to the English "religion."
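Character n-gram overlap is one simple way to quantify this kind of surface similarity. The sketch below computes Jaccard similarity over character trigrams. It is an illustrative measure only and not necessarily what any particular search engine uses; many cross-lingual systems rely on shared subword tokens instead.

```python
def char_ngrams(word: str, n: int = 3) -> set[str]:
    """Set of overlapping character n-grams of the lowercased word."""
    w = word.lower()
    return {w[i:i + n] for i in range(len(w) - n + 1)}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of n-grams two words have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


print(round(jaccard(char_ngrams("religii"), char_ngrams("religion")), 3))  # 0.571
print(jaccard(char_ngrams("VERA"), char_ngrams("vera")))                   # 1.0
print(jaccard(char_ngrams("faith"), char_ngrams("vera")))                  # 0.0
```

The results break down as follows:

- The Polish "religii" shares four of seven distinct trigrams with "religion".
- Once case is folded, "VERA" is indistinguishable from "vera".
- The English word "faith" shares nothing with either.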

| Noise factor | Mechanism | Result for user |
|---|---|---|
| Homonymy | One word, multiple meanings | Mixing of astronomy, philosophy, linguistics |
| Cross-lingual models | Morphological similarity of words across languages | Polish texts in English-language results |
| Lack of explicit context | Algorithm guesses intent by frequency | Scientific articles instead of philosophical overviews |

Search engines using cross-lingual models consider these documents relevant, even if the user doesn't speak Polish. This creates additional noise: links to texts that cannot be read without translation and likely don't answer the original question.

Steelman Arguments: Why Search Engines Work This Way — And Whether There's Logic to It

Before criticizing algorithms, we need to understand their logic. Search engines don't "make mistakes"; they're optimized for metrics and assumptions about user behavior. Five arguments explain why the current system works the way it does.

🧪 Argument 1: Maximizing Search Recall

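Search engines are historically optimized for recall (the share of relevant documents that get retrieved) rather than precision (the share of retrieved documents that are relevant). The algorithm would rather show 100 results that cover nearly all the relevant documents, even if most of the 100 are noise, than 10 results that are all relevant but miss other important documents.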

Users can filter out noise, but they can't find what the system didn't show them.
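The trade-off can be expressed with the standard definitions of precision and recall. The sketch below assumes a hypothetical collection in which 15 documents match the user's real intent, and all the numbers are illustrative.

```python
def precision_recall(retrieved: set[int], relevant: set[int]) -> tuple[float, float]:
    """Precision = hits / retrieved; recall = hits / relevant."""
    hits = len(retrieved & relevant)
    return hits / len(retrieved), hits / len(relevant)


# Hypothetical collection: documents 0..14 match the user's actual intent.
relevant = set(range(15))

# Broad list: 100 results containing 14 of the 15 relevant documents plus 86 others.
broad = set(range(14)) | set(range(100, 186))
# Narrow list: 10 results, all relevant, but 5 relevant documents are never shown.
narrow = set(range(10))

for name, retrieved in [("broad", broad), ("narrow", narrow)]:
    p, r = precision_recall(retrieved, relevant)
    print(f"{name}: precision={p:.2f} recall={r:.2f}")
# broad: precision=0.14 recall=0.93
# narrow: precision=1.00 recall=0.67
```

A recall-oriented engine prefers the broad list: the user has to wade through noise, but far fewer relevant documents are hidden from them.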

For the query "comparison faith religion," the system shows astronomical articles (S002, S004, S006, S008) and philosophical texts (S001, S003, S005, S007) because it can't be certain of user intent. Excluding astronomical results would risk missing relevant content if the user is actually searching for the VERA project.

🧬 Argument 2: Statistical Uncertainty

The query "comparison faith religion" is objectively ambiguous. Without additional context, the system cannot determine intent. NLP algorithms work with probabilities: if, in the training data, the token "vera" appears in both religious and astronomical contexts, the system assigns a non-zero probability to both readings.

- Humans use common sense and context

- Algorithms rely only on patterns in data

- If the corpus contains documents where "VERA" and "religion" appear together, the system will consider them relevant

This isn't a bug, but a fundamental limitation of statistical models (S002, S004, S006).
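A naive Bayes sense classifier is one way to illustrate "non-zero probability to both". This is a stand-in technique, not a claim about how any specific engine works. The sketch below uses hypothetical training counts for documents containing "vera", and add-one smoothing ensures that neither sense ever drops to zero.

```python
import math

# Hypothetical training statistics for documents that contain "vera":
# number of documents per sense, and context-word counts within each sense.
SENSE_DOCS = {"astronomy": 90, "religion": 10}
WORD_COUNTS = {
    "astronomy": {"comparison": 5, "religion": 0, "maser": 40},
    "religion": {"comparison": 3, "religion": 8, "maser": 0},
}
VOCAB = {"comparison", "religion", "maser"}


def sense_posterior(context_words: list[str], alpha: float = 1.0) -> dict[str, float]:
    """Naive Bayes P(sense | context words) with add-alpha smoothing.

    Smoothing guarantees every sense keeps a non-zero probability.
    """
    total_docs = sum(SENSE_DOCS.values())
    log_scores = {}
    for sense, n_docs in SENSE_DOCS.items():
        counts = WORD_COUNTS[sense]
        denom = sum(counts.values()) + alpha * len(VOCAB)
        score = math.log(n_docs / total_docs)  # prior: corpus frequency of the sense
        for w in context_words:
            if w in VOCAB:  # unknown words carry no evidence in this sketch
                score += math.log((counts.get(w, 0) + alpha) / denom)
        log_scores[sense] = score
    # Convert log scores to probabilities (subtract the max for numerical stability).
    top = max(log_scores.values())
    weights = {s: math.exp(v - top) for s, v in log_scores.items()}
    z = sum(weights.values())
    return {s: wt / z for s, wt in weights.items()}


print({s: round(p, 3) for s, p in sense_posterior([]).items()})
# {'astronomy': 0.9, 'religion': 0.1}
print({s: round(p, 3) for s, p in sense_posterior(["comparison", "religion"]).items()})
# {'astronomy': 0.113, 'religion': 0.887}
```

Without context words, the corpus prior wins. With "comparison" and "religion", the posterior flips toward the religious reading, yet astronomy keeps a non-zero share. An engine that discards the context words as noise stays stuck on the prior.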

🔁 Argument 3: Cross-Lingual Optimization

Modern search engines operate in dozens of languages and use cross-lingual models. An English query can return Polish, German, or Japanese results if the algorithm considers them semantically close.

| Advantage | Disadvantage |
|---|---|
| Access to global academic literature | Noise for users who don't read other languages |
| Researchers get full spectrum of sources | Difficulty filtering irrelevant languages |

The system correctly identified that Polish texts (S001, S003, S005, S007) are about "religion," even if the language doesn't match. The alternative — limiting results to English only — would mean losing access to a significant portion of literature.
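The sketch below shows why a hard language filter is a blunt instrument for this query by applying an English-only filter to the eight numbered sources. The language and topic tags are assumptions based on this article's descriptions: S001, S003, S005, and S007 are the Polish JSTOR chapters, and the astronomy papers are assumed to be in English.

```python
from dataclasses import dataclass


@dataclass
class Result:
    source_id: str
    lang: str   # ISO 639-1 code; "en" for the astronomy papers is an assumption
    topic: str


RESULTS = [
    Result("S001", "pl", "religion"), Result("S002", "en", "astronomy"),
    Result("S003", "pl", "religion"), Result("S004", "en", "astronomy"),
    Result("S005", "pl", "religion"), Result("S006", "en", "astronomy"),
    Result("S007", "pl", "religion"), Result("S008", "en", "astronomy"),
]


def filter_by_language(results: list[Result], allowed: set[str]) -> list[Result]:
    """Hard language filter: keep only results written in an allowed language."""
    return [r for r in results if r.lang in allowed]


kept = filter_by_language(RESULTS, {"en"})
print([r.source_id for r in kept])  # ['S002', 'S004', 'S006', 'S008']
print({r.topic for r in kept})      # {'astronomy'}
```

The filter removes every source that is actually about religion and keeps every astronomy paper. Language is independent of topical relevance, so filtering on it does not remove this noise.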

🧰 Argument 4: Long-Term Optimization Through Feedback

Search engines learn from user behavior. Their success metrics are built on signals such as clicks, time on page, and returns to the results page, a setup sometimes described as reinforcement learning. If users sometimes click on astronomical articles, the algorithm interprets this as a relevance signal.

The more users click on irrelevant results, the more the algorithm becomes convinced these results are relevant.

This creates a feedback loop. Breaking it requires explicit feedback ("this isn't what I was looking for" buttons), but such mechanisms are rarely used at scale (S002, S004, S008).
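The feedback loop can be reproduced with a toy position-biased click model. All probabilities below are hypothetical, and the model is deliberately naive: it ranks purely by accumulated clicks. Real systems typically use more elaborate click models, some of which attempt to correct for position bias.

```python
# Toy position-biased click model. All numbers are hypothetical.
EXAMINE = [0.9, 0.1]  # probability a user even looks at rank 1 and rank 2
ATTRACTIVENESS = {"astronomy_paper": 0.3, "faith_overview": 0.9}  # click prob. if looked at


def simulate(rounds: int, users_per_round: int = 100) -> dict[str, float]:
    """Each round: rank by accumulated clicks, then add the expected clicks for that round."""
    clicks = {"astronomy_paper": 1.0, "faith_overview": 0.0}  # noise starts slightly ahead
    for _ in range(rounds):
        ranking = sorted(clicks, key=clicks.get, reverse=True)
        for pos, doc in enumerate(ranking):
            clicks[doc] += users_per_round * EXAMINE[pos] * ATTRACTIVENESS[doc]
    return clicks


final = simulate(rounds=10)
print({doc: round(n) for doc, n in final.items()})
# {'astronomy_paper': 271, 'faith_overview': 90}
```

The faith overview is three times as attractive per view, yet it collects only a third as many clicks as the astronomy paper. Ranking on raw clicks rewards whatever already sits on top.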

🛡️ Argument 5: Protection Against Manipulation

Narrow query interpretation opens opportunities for SEO manipulation. Optimizers could create pages that precisely match narrow queries and monopolize results. Broad results reduce this risk.

- Trade-off: the system sacrifices relevance for spam protection. Even if someone optimizes a page for "comparison faith religion" in the philosophical sense, astronomical and other results will still appear in the output (S002, S004, S008).
- Long-term effect: this may frustrate users in the short term, but it protects the search ecosystem from degradation.
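One way to guarantee that several interpretations stay on the page is result diversification by round-robin interleaving. This sketch assumes that diversification technique; whether any given engine uses it is not established here. The per-interpretation lists are hypothetical, and "seo-page" stands for a page optimized for the query.

```python
from itertools import chain, zip_longest


def interleave_by_sense(clusters: dict[str, list[str]], k: int) -> list[str]:
    """Round-robin merge of per-interpretation ranked lists, truncated to k results."""
    rounds = zip_longest(*clusters.values())  # one tuple per "round"
    merged = [doc for doc in chain.from_iterable(rounds) if doc is not None]
    return merged[:k]


# Hypothetical ranked lists per interpretation of the query.
clusters = {
    "religion": ["seo-page", "S001", "S003"],
    "astronomy": ["S002", "S004", "S006"],
    "computing": ["EDCHO-paper"],
}
print(interleave_by_sense(clusters, k=5))
# ['seo-page', 'S002', 'EDCHO-paper', 'S001', 'S004']
```

No matter how high "seo-page" ranks within its own interpretation, it can claim only one slot per round, so astronomical and other results still appear.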

All five arguments point to one thing: the current logic of search engines isn't a design error, but the result of trade-offs between completeness, robustness, and scalability. The question isn't whether algorithms work correctly, but which trade-offs we're willing to accept.

Evidence Base: What the Sources Actually Show — and Why This Matters for Understanding the Problem

Let's analyze what the sources that appeared in the results for "comparison faith religion" actually contain. This will show how relevant they are to the original query and which mechanisms led to their appearance.

🧪 Cluster 1: Astronomical Research from the VERA Project

Sources (S002), (S004), and (S006) cover the Japanese VERA project (VLBI Exploration of Radio Astrometry), which uses radio interferometry for high-precision astrometric measurements:

- (S002) describes observations of H₂O maser sources in molecular clouds.
- (S004) presents the first VERA astrometry catalog.
- (S006) studies the outer rotation curve of the Galaxy.

These papers have nothing to do with philosophy of religion or the concept of faith. Their presence is explained by the coincidence of the acronym "VERA" with the search term. The algorithm didn't distinguish between contexts and included documents containing the keyword in titles and metadata.

Here the engine behaved like a lexical matcher, not a semantic one: the string "VERA" matched "vera" ("faith") regardless of context.
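The sketch below reproduces that behaviour with a minimal lexical matcher. It assumes the query reaches the index as the transliterated token "vera"; the query string is hypothetical, and the titles are taken or shortened from this article. Once text is case-folded, the acronym, the proper name, and the common noun become the same token.

```python
import re


def normalize(text: str) -> list[str]:
    """Lowercase and split into letter-only tokens, as a simple lexical index might."""
    return re.findall(r"[^\W\d_]+", text.lower())


def lexical_hits(query: str, titles: list[str]) -> list[str]:
    """Return titles sharing at least one normalized token with the query."""
    q = set(normalize(query))
    return [t for t in titles if q & set(normalize(t))]


titles = [
    "The First VERA Astrometry Catalog",  # S004, as cited in the article
    "Vera Rubin Observatory",              # S008, shortened for illustration
    "Filozofia religii",                   # the Polish texts, as named in the article
]
print(lexical_hits("vera comparison", titles))
# ['The First VERA Astrometry Catalog', 'Vera Rubin Observatory']
```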

🔬 Cluster 2: Vera Rubin Observatory

Source (S008) describes the Vera Rubin Observatory as a flagship experiment for studying dark matter. The observatory is named after the American astronomer Vera Rubin, whose measurements of galactic rotation curves provided key evidence for dark matter.

Here "Vera" is a proper name, not a concept of religious faith. For the search engine, this is just another keyword match. The algorithm can't determine that the user isn't interested in astronomical objects named after people called Vera.

| Match Type | Error Mechanism | Result for User |
|---|---|---|
| Homonymy (VERA = faith) | Lexical matching without contextual analysis | Astronomy papers in religious search results |
| Proper name (Vera Rubin) | Algorithm doesn't distinguish names from common nouns | Biographical data instead of philosophical texts |
| Word ambiguity | Lack of semantic disambiguation | Information noise instead of relevant results |

📚 Cluster 3: Polish Texts on Philosophy of Religion

Sources (S001), (S003), (S005), (S007) are chapters from a Polish-language book on philosophy of religion on JSTOR. They cover religion and truth, psychology of religion, methods of teaching philosophy of religion, and hermeneutical philosophy of religion.

These texts are genuinely relevant to the topic of "religion," but their Polish language makes them practically useless for an English-speaking user without a JSTOR subscription. From the results page alone, it's impossible to tell whether they contain a comparative analysis of faith concepts across different religions. The search engine showed these results because they contain the word "religii," but it didn't evaluate their practical accessibility or language compatibility.

- Language barrier: Polish text requires language proficiency or machine translation, reducing the practical value of the result.
- Content accessibility: JSTOR requires a subscription, so full texts are unavailable for relevance verification.
- Semantic relevance vs. practical utility: a source may be thematically close but useless without access and language skills.
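The following sketch shows what evaluating practical utility could look like. It discounts a document's topical score when the user cannot read it or cannot open it. The `Doc` fields, the 0.8 relevance for S001, and both penalty factors are hypothetical, not values any engine is known to use.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    doc_id: str
    topical_relevance: float  # 0..1, hypothetical ranker score
    lang: str                 # ISO 639-1 code
    open_access: bool


def practical_utility(doc: Doc, user_langs: set[str],
                      lang_penalty: float = 0.3, paywall_penalty: float = 0.5) -> float:
    """Discount topical relevance when the user cannot read or cannot open the document.

    The penalty factors are illustrative assumptions, not values any engine is known to use.
    """
    score = doc.topical_relevance
    if doc.lang not in user_langs:
        score *= lang_penalty
    if not doc.open_access:
        score *= paywall_penalty
    return score


s001 = Doc("S001", topical_relevance=0.8, lang="pl", open_access=False)
print(round(practical_utility(s001, user_langs={"en"}), 2))        # 0.12
print(round(practical_utility(s001, user_langs={"en", "pl"}), 2))  # 0.4
```

A multiplicative discount keeps the document in the list but lets readable, open sources outrank it for a user who only reads English.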

⚙️ Cluster 4: Consensus Algorithms

The source covers the EDCHO algorithm for distributed systems. This is technical work from computer science, with no direct connection to either religion or astronomy.

This source's presence can be explained by several factors. The word "consensus" is semantically close to concepts of "agreement" and "faith" in some contexts. NLP algorithms may accidentally link "comparison" with "consensus" if these words frequently appeared together in training data. This is an example of how statistical models create false associations based on surface patterns.

Statistical models learn from correlations, not causal relationships. If words frequently appear together in training data, the model will assume a connection, even when none exists.
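Pointwise mutual information (PMI) is one standard way to measure this kind of co-occurrence. The counts below are hypothetical. They model "comparison" and "consensus" appearing together five times more often than chance, as they might in papers that compare consensus algorithms. A high score says nothing about whether the words are related in meaning.

```python
import math


def pmi(count_xy: int, count_x: int, count_y: int, total: int) -> float:
    """Pointwise mutual information: log2(P(x, y) / (P(x) * P(y))) from raw counts."""
    p_xy = count_xy / total
    p_x = count_x / total
    p_y = count_y / total
    return math.log2(p_xy / (p_x * p_y))


# Hypothetical counts over 10,000 training snippets:
# "comparison" appears in 200, "consensus" in 400, both together in 40.
print(round(pmi(count_xy=40, count_x=200, count_y=400, total=10_000), 3))  # 2.322
```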

🔍 Why This Matters for Understanding the Problem

Analysis of these clusters shows that, for this query, the search engine behaved as if it relied on lexical matching and statistical associations rather than semantic understanding. It couldn't tell that the user was looking for a philosophical analysis of faith in different religions, not astronomical projects with similar names.

This creates three types of problems:

- Homonymy: one form carries unrelated meanings.
- Polysemy: one word carries several related senses.
- False associations: statistical correlations exist without a semantic connection.

For users, this means they must filter results themselves, relying on critical thinking and an understanding of how search algorithms work.

For more on how scientific consensus works and why it's difficult to verify, see the article on faith and evidence. For methods of verifying extraordinary claims, read the miracle assessment protocol.

The Mechanics of Cognitive Failure: Why Users Can't Quickly Filter Noise — and What Happens in Their Heads

The problem isn't just that search engines return irrelevant results, but that users expend cognitive resources processing them. Let's examine which psychological and cognitive mechanisms make information noise particularly toxic.

🧬 Cognitive Load: Why Every Extra Result Is a Tax on Attention

Cognitive load is the amount of mental effort required to process information. When a user sees a list of 11 results, where only 5 are potentially relevant and the other 6 are about astronomy, algorithms, and education, their brain is forced to perform additional work: read titles, assess relevance, make decisions about whether to click.

Each additional decision increases reaction time and reduces the accuracy of subsequent decisions (decision fatigue effect). In the context of information search, this means that a user confronted with many irrelevant results is more likely to miss a genuinely useful source or abandon the search entirely.

- Read the title and snippet (5–10 seconds)

- Assess relevance based on keywords (3–5 seconds)

- Decide: click or skip (2–3 seconds)

- If clicking — load the page and check context (10–30 seconds)

- If not relevant — return and repeat for the next result
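The step times above can be added up. This quick sketch assumes the user opens 6 of the 11 results, which is a hypothetical choice. One triage pass then costs between 170 and 378 seconds, roughly 3 to 6 minutes, before any page is read in depth or the query is reformulated.

```python
# Per-step time ranges in seconds, (min, max), taken from the list above.
READ_SNIPPET = (5, 10)
ASSESS = (3, 5)
DECIDE = (2, 3)
OPEN_PAGE = (10, 30)


def scan_time(n_results: int, n_opened: int) -> tuple[int, int]:
    """(min, max) seconds for one triage pass over n_results, opening n_opened of them."""
    low = n_results * (READ_SNIPPET[0] + ASSESS[0] + DECIDE[0]) + n_opened * OPEN_PAGE[0]
    high = n_results * (READ_SNIPPET[1] + ASSESS[1] + DECIDE[1]) + n_opened * OPEN_PAGE[1]
    return low, high


print(scan_time(11, 6))  # (170, 378)
print(scan_time(5, 0))   # (50, 90)
```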

🔁 Anchoring Effect: How First Results Distort Perception of the Entire Search

Anchoring bias is a cognitive distortion where the first information received disproportionately influences subsequent judgments. If the first results are astronomical articles about the VERA project (S002), the user may begin doubting the correctness of their query: "Maybe I entered something wrong? Maybe 'faith' is actually some astronomical term?"

This creates additional cognitive load: instead of searching for needed information, the user spends time reevaluating their query and trying to understand why the system shows these particular results. In the worst case, they may decide their query is too complex or that the needed information doesn't exist at all, and stop searching.

🧠 Illusion of Understanding: Why Headlines Deceive

Scientific article titles often contain specialized terminology that can create an illusion of relevance. For example, the title "The First VERA Astrometry Catalog" (S004) contains the word "VERA," which the user might interpret as related to their query, even if the context is completely different. This is an example of how surface similarity (lexical match) masks deep difference (semantic mismatch).

People tend to overestimate their ability to understand complex texts based on titles and abstracts. A user might click on an article about the VERA project, spend several minutes reading the abstract, realize it's not what they were looking for, and return to the search results — having lost time and increased frustration.

The illusion of understanding is especially dangerous in scientific contexts: specialized vocabulary creates a sense of competence that masks the absence of real understanding. The user believes they understood because they recognized a few terms.

⚠️ Paradox of Choice: Why More Results Aren't Always Better

The classic paradox of choice states that increasing the number of options beyond a certain threshold reduces satisfaction and increases decision-making time. In the context of information search, this means that 11 results may be worse than 5 well-curated results.

When a user sees many results, they begin to doubt: "Maybe I'll miss the best result if I don't check them all?" This creates psychological pressure that forces them to spend more time browsing, even if the quality of results doesn't improve.

| Scenario | Cognitive Load | Success Probability | Search Time |
|---|---|---|---|
| 5 relevant results | Low | High | 5–10 minutes |
| 11 results (5 relevant + 6 noise) | High | Medium | 15–30 minutes |
| 11 results (2 relevant + 9 noise) | Very high | Low | 30+ minutes or abandonment |

🔍 Real-Time Filtering: How the Brain Tries to Cope with Noise

When a user encounters information noise, their brain tries to apply quick mental shortcuts for filtering results. For example, they might ignore results that look "too technical" or "too philosophical," based on surface features.

The problem is that these heuristics often fail. A user might reject a relevant result because its title looks too complex, or conversely, click on an irrelevant result because its title looks simple and understandable. This creates an additional cycle of disappointment and time loss.

- Keyword relevance heuristic: the user looks for an exact match of the word "faith" in the title. If the word isn't there, the result is often ignored, even if the context is relevant. Trap: astronomical articles contain the word "VERA," creating a false match.
- Source relevance heuristic: the user assumes that results from well-known sources (e.g., scientific journals) are more relevant. However, a source's reputation doesn't guarantee relevance to a specific query. Trap: an article from an authoritative source may be completely unrelated to what the user is searching for.
- Text length heuristic: the user may assume that longer articles contain more complete information. In reality, length doesn't correlate with relevance. Trap: a long article about VERA might deter a user seeking a brief explanation of the philosophy of faith.

💡 Solution: Minimizing Cognitive Load Through Design

Understanding these mechanisms allows us to improve the design of search engines and information interfaces. Instead of returning 11 results and hoping the user finds the right one, the system should actively filter results and provide only relevant ones.

This requires better understanding of query context, semantic analysis (not just lexical matching), and possibly interactive query refinement. Users should be able to quickly tell the system: "This isn't what I'm looking for" — and receive improved results without expending cognitive resources on filtering noise.
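Below is a minimal sketch of the "this isn't what I'm looking for" interaction. It assumes each result already carries an interpretation label, which is a hypothetical field; labelling results reliably is the hard part. The ranker scores are also hypothetical. A single click rejects an interpretation, and the remaining results are re-ranked.

```python
def refine(results: list[tuple[str, str, float]], rejected: set[str]) -> list[str]:
    """Drop results whose interpretation the user rejected, then re-sort the rest by score."""
    kept = [r for r in results if r[1] not in rejected]
    return [doc_id for doc_id, _, _ in sorted(kept, key=lambda r: r[2], reverse=True)]


# (source id, interpretation, ranker score); the scores are hypothetical.
results = [
    ("S002", "astronomy", 0.91),
    ("S008", "astronomy", 0.88),
    ("S001", "religion", 0.74),
    ("S003", "religion", 0.70),
    ("EDCHO", "computing", 0.40),
]
print(refine(results, rejected={"astronomy"}))  # ['S001', 'S003', 'EDCHO']
```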

For the user themselves, the key is awareness of these cognitive traps. If you understand how anchoring bias and the illusion of understanding work, you can consciously slow down your search process, reformulate your query, and check result relevance more critically. This requires additional effort but saves time in the long run. For more on how to verify information, see the article on faith and evidence and logical fallacies in religious arguments.