Anatomy of a promise: what exactly is being sold under the "systematic review" label and why it works

The term "systematic review" in contemporary science has acquired the status of a gold standard of evidence — and precisely for this reason has become an object of mass exploitation. Publications with this phrase in the title receive more citations than regular literature reviews, regardless of actual methodological quality (S009).

This creates a powerful incentive for authors to label any collection of sources as "systematic," even when the selection process was arbitrary. Form begins to work instead of content.

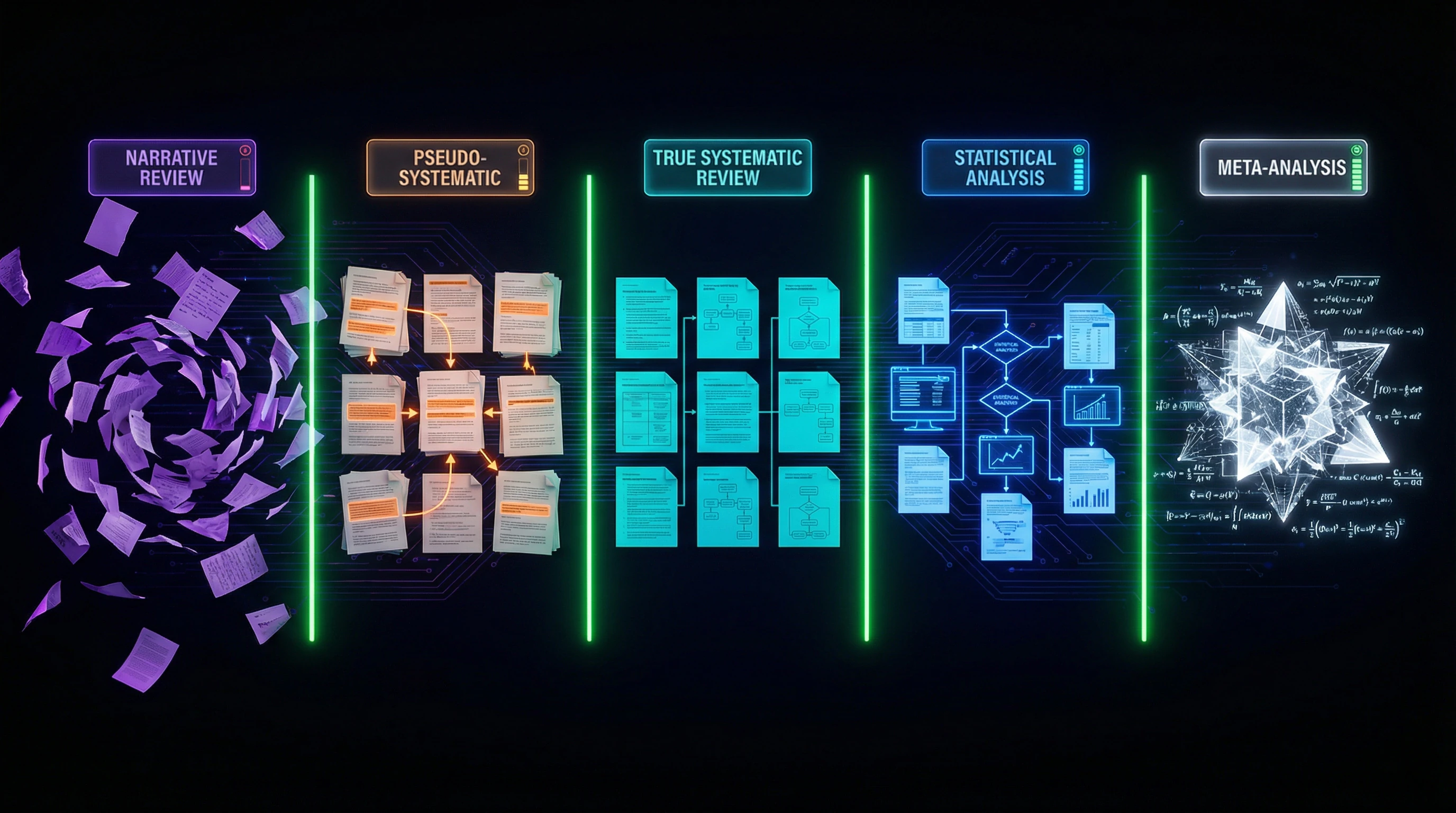

🧩 Three levels of concept substitution

- Terminological illiteracy: many researchers genuinely don't understand the difference between a narrative review, systematic review, and meta-analysis. They use the term "systematic" meaning merely "organized" or "structured," unaware of the strictly defined methodological weight this word carries (S010).

- Methodological opportunism: authors know about the requirements for systematic reviews but deliberately simplify procedures, hoping reviewers won't notice the absence of a search protocol or bias risk assessment.

- Outright falsification: creating the appearance of systematicity through formal mention of databases and selection criteria while actually cherry-picking results that confirm a pre-selected hypothesis (S011).

🔍 Cognitive traps of trust in "scientificness"

The presence of a structured reference list, tables with selection criteria, and formalized language creates an illusion of methodological rigor even in its complete absence (S009). The reader sees familiar attributes of "real science" — PRISMA diagrams, study characteristics tables, evidence quality assessments — and automatically assigns the text high credibility status.

Visual complexity masks methodological emptiness. This is the effect of "scientific camouflage": form substitutes for content.

The brain conserves resources by relying on superficial markers of authority instead of deep analysis of argument logic. This works especially effectively in areas where the reader is not an expert.

⚙️ Economics of pseudo-systematicity

| Parameter | Genuine systematic review | Pseudo-systematic review |
|---|---|---|
| Creation time | 6–18 months | 2–4 weeks |
| Minimum team | 3+ researchers | 1 author |
| Protocol registration | Mandatory | Often absent |
| Independent quality assessment | Yes | No |
| Publication value (in eyes of non-specialized journals) | High | Identical |

This creates a classic "race to the bottom" situation: researchers playing by the rules lose in publication speed to those who ignore the rules (S006). The system incentivizes fakes.

Result: journals and databases fill with works that look like systematic reviews but methodologically are not. Readers cannot distinguish one from the other without specialized training.

Steel-manning the Argument: Seven Reasons Why Even Imperfect Systematic Reviews Outperform Chaotic Truth-Seeking

Before dissecting the flaws of pseudo-systematic approaches, we must acknowledge the fundamental value of systematizing knowledge itself. Even an imperfectly executed systematic review often surpasses the informational value of arbitrary study selection or expert opinion based on personal experience.

This isn't an excuse for methodological sloppiness, but rather recognition that criticism should target specific protocol violations, not the concept of structured evidence synthesis itself.

🧪 First Argument: Reproducibility vs. Expert Opacity

A systematic review, even with flaws, provides an explicit decision trail: which databases were searched, which search terms were applied, which inclusion and exclusion criteria defined the final sample (S009). This allows other researchers to reproduce the search, verify results, and identify potential gaps.

Traditional expert reviews are "black boxes": readers don't know which sources the expert considered and rejected, which evaluation criteria were applied, or which personal biases may have influenced conclusions. Process transparency is a fundamental advantage of the systematic approach that persists even with imperfect execution.

- Explicit search and selection protocol

- Reproducibility of procedures by other researchers

- Ability to verify and critique methodology

- Documentation of reasons for source exclusion

📊 Second Argument: Quantitative Assessment of Result Consistency

Systematic reviews allow assessment not only of effect presence, but also the degree of result concordance across studies. When 15 of 20 selected studies show similar results, this is qualitatively different information compared to an expert mentioning "several works confirming the hypothesis" (S010).

Even if the selection procedure for those 20 studies was imperfect, readers gain insight into the distribution of results in available literature. This is especially important in fields with high data heterogeneity, where individual studies may yield contradictory results due to differences in populations, measurement methods, or study conditions.

🧬 Third Argument: Identifying Knowledge Gaps Through Systematic Mapping

One underappreciated function of systematic reviews is not synthesizing existing knowledge, but identifying areas where knowledge is insufficient. Scoping reviews are specifically designed for this purpose: they don't aim to answer a specific clinical question, but rather map the research landscape in a given area (S009).

This approach reveals that some problem aspects have dozens of studies while others have none. This is critically important information for planning future research and allocating scientific resources—information impossible to obtain from traditional narrative reviews.

🔬 Fourth Argument: Interdisciplinary Integration Through Standardized Protocols

Systematic reviews create a common language for integrating knowledge across disciplines. When researchers from medicine, psychology, and sociology use similar systematization protocols (e.g., PRISMA for medicine or analogous standards for other fields), this facilitates interdisciplinary synthesis (S011).

Research on requirements engineering demonstrates how a systematic scoping review enabled comparison of traditional and modern approaches from different technological paradigms, creating a unified taxonomy of methods (S009). Without a standardized protocol, such comparison would be subjective and unverifiable.

🧾 Fifth Argument: Cumulative Science vs. Fragmented Knowledge

Systematic reviews embody the idea of cumulative science: each new study doesn't exist in isolation but fits into the context of all previous work. This is the opposite of "publication noise," where each article ignores predecessors and claims novelty.

A review on GRIN-associated epilepsy in children shows how systematic synthesis of 47 studies revealed patterns invisible in individual works: genotype-phenotype correlations, age-specific manifestation features, effectiveness of various therapeutic approaches (S010). No single study could provide such a complete picture.

⚙️ Sixth Argument: Protection Against Publication Bias Through Active Search

Systematic reviews require active search for unpublished data, studies with negative results, and works in other languages—everything typically overlooked in traditional literature reviews (S012). While this task isn't always perfectly executed in practice, its inclusion in the protocol creates pressure on authors and reviewers.

A review on chronic kidney disease and COVID-19 included not only English-language publications from PubMed, but also Russian-language works from eLibrary, Chinese studies from CNKI, and preprints from medRxiv, substantially expanding the evidence base (S012).

🧭 Seventh Argument: Methodological Evolution Through Criticism and Improved Standards

Systematic reviews create opportunities for methodological reflection and improvement. Each generation of systematic reviews learns from previous mistakes: new quality checklists emerge (AMSTAR, ROBIS), bias risk assessment criteria are refined, specialized protocols are developed for different study types (S011).

This evolution is impossible without the standardized foundation that systematic approaches provide. Criticizing a specific review for methodological shortcomings isn't an argument against systematicity itself, but rather a stimulus for improving standards.

Evidence-Based Anatomy: What Distinguishes True Systematization from Imitation — Component-by-Component Analysis

Moving from theoretical arguments to practical analysis, it's necessary to establish concrete criteria by which methodologically rigorous systematic reviews can be distinguished from their imitations. These criteria are based on international standards (PRISMA, Cochrane Handbook) and analysis of real publications from various disciplines.

📋 Component One: Pre-Registration of Protocol and Protection Against Post-Hoc Changes

A genuine systematic review begins with protocol registration in a public database (PROSPERO for medical reviews, OSF for other fields) before starting the search and selection of studies (S010). This is critically important protection against "fitting" methodology to desired results.

The protocol establishes the research question, inclusion/exclusion criteria, search strategy, quality assessment methods, and data synthesis plan. Any deviations from the protocol must be explicitly documented and justified in the final publication. Analysis of systematic reviews on myasthenia gravis shows that only 23% of reviews published in 2018-2020 had pre-registered protocols, although this requirement is included in most editorial policies (S011).

🔍 Component Two: Comprehensive Multi-Database Search with Documented Strategy

Systematic search requires using at least three to four specialized databases relevant to the research field. For medical reviews, this typically includes PubMed/MEDLINE, Embase, Cochrane Library, and Web of Science; for social sciences — Scopus, PsycINFO, Sociological Abstracts (S012).

Critically important: the complete search strategy for each database must be published in the article's appendix, including all terms used, Boolean operators, filters, and search dates. This allows other researchers to precisely reproduce the search. The review on requirements engineering demonstrates exemplary practice: the authors provided complete search strings for IEEE Xplore, ACM Digital Library, Scopus, and Web of Science, including 47 combinations of key terms (S009).

| Research Field | Required Databases | Additional Sources |
|---|---|---|
| Medicine | PubMed, Embase, Cochrane Library | Web of Science, Google Scholar |
| Social Sciences | Scopus, PsycINFO | Sociological Abstracts, JSTOR |
| Engineering | IEEE Xplore, ACM Digital Library | Web of Science, Scopus |
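
As a rough illustration of what a reproducible search record might contain, the sketch below assembles a Boolean query from concept groups and stores it together with the database name, filters, and run date. The `SearchRecord` fields, the `build_query` helper, and the example terms and date are all hypothetical. Real databases each have their own query syntax, so this shows only the generic pattern of OR within a concept and AND between concepts.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SearchRecord:
    """One documented search run (hypothetical structure, for illustration)."""
    database: str
    query: str
    filters: list
    run_on: date


def build_query(concept_groups):
    """Join synonyms with OR inside a group and join groups with AND."""
    groups = []
    for terms in concept_groups:
        # Quote multi-word terms so they are searched as phrases.
        quoted = [f'"{t}"' if " " in t else t for t in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)


# Hypothetical concept groups, not taken from any cited review.
query = build_query([
    ["requirements engineering", "requirements elicitation"],
    ["agile", "scrum"],
])

record = SearchRecord(
    database="Scopus",
    query=query,
    filters=["language: English", "years: 2010-2023"],
    run_on=date(2024, 1, 15),  # hypothetical search date
)
print(record.query)
```

Publishing one such record per database gives readers what they need to rerun the search and compare hit counts.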

⚖️ Component Three: Independent Dual Assessment at All Selection Stages

The gold standard for systematic reviews requires that at least two researchers independently assess each publication for inclusion criteria — first by titles and abstracts, then by full texts (S010). Disagreements are resolved through discussion or involvement of a third expert.

This procedure protects against subjective errors and systematic biases of individual researchers. Inter-rater agreement (Cohen's kappa) at the abstract screening stage typically ranges from 0.6 to 0.8 (S011). Because kappa corrects for agreement expected by chance, this range does not translate directly into a percentage of disputed records, but it does mean that a non-trivial share of screening decisions start out as disagreements. Without independent assessment, these disagreements would remain undetected, and the final sample of studies would be distorted by one person's preferences.
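
To make the agreement statistic concrete, here is a minimal sketch of Cohen's kappa for two screeners. The function name and the ten screening decisions are made up for illustration. Kappa is computed as (observed agreement minus chance agreement) divided by (1 minus chance agreement).

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Share of items on which the two raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical title/abstract screening decisions for 10 records.
reviewer_1 = ["include", "exclude", "exclude", "include", "exclude",
              "exclude", "include", "exclude", "exclude", "exclude"]
reviewer_2 = ["include", "exclude", "include", "include", "exclude",
              "exclude", "exclude", "exclude", "exclude", "exclude"]

raw_agreement = sum(a == b for a, b in zip(reviewer_1, reviewer_2)) / len(reviewer_1)
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"raw agreement = {raw_agreement:.2f}, kappa = {kappa:.2f}")
```

In this made-up example the reviewers agree on 8 of 10 records, yet kappa comes out near 0.52, which shows why raw agreement and kappa should not be read interchangeably.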

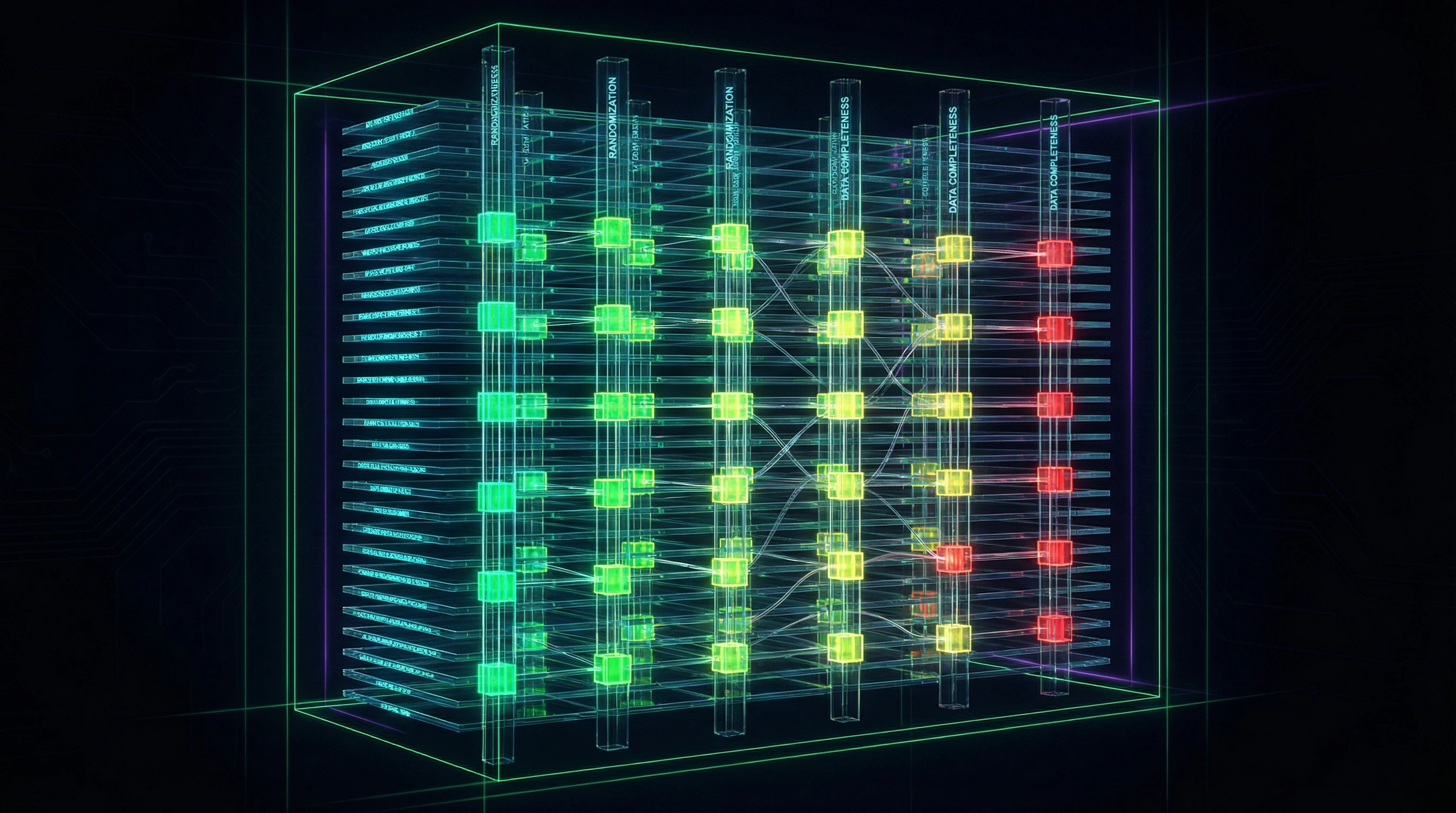

🧮 Component Four: Formalized Quality Assessment and Bias Risk Evaluation

Each study included in the review must be assessed using standardized quality and risk-of-bias criteria. For randomized controlled trials, the Cochrane Risk of Bias 2 (RoB 2) tool is used; for observational studies, the Newcastle-Ottawa Scale or ROBINS-I; for diagnostic studies, QUADAS-2 (S012).

These instruments evaluate specific aspects of study design: adequacy of randomization, blinding of participants and researchers, data completeness, selective reporting. Assessment results must be presented in tables or graphs for each study. The review on COVID-19 and chronic kidney disease includes a detailed table assessing 34 studies across 7 bias risk domains, with color-coded risk levels (S012).

Without formalized quality assessment, a review becomes merely a collection of citations rather than a synthesis of evidence. Each study is a potential source of bias, and its contribution to conclusions must be weighted by reliability.

📊 Component Five: Transparent Data Synthesis with Heterogeneity Assessment

Synthesis of results in a systematic review can be qualitative (narrative description of patterns) or quantitative (meta-analysis with calculation of summary effects). In both cases, explicit assessment of heterogeneity between study results is necessary.

For meta-analysis, the statistics I² and τ² are used: I² estimates the proportion of total variability that reflects true differences between studies rather than chance, and τ² estimates the between-study variance itself (S011). High heterogeneity (I² > 75%) requires subgroup analysis or meta-regression to identify sources of differences. The review on GRIN-associated epilepsy did not conduct quantitative meta-analysis due to high clinical heterogeneity (different mutations, age groups, diagnostic methods), but provided detailed qualitative synthesis with grouping by mutation types (S010).
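
The following sketch shows one common way these heterogeneity statistics can be computed from per-study effect sizes and their variances. It assumes the DerSimonian-Laird estimator for τ², which is only one of several available estimators and is not necessarily the one used in the cited reviews. The function name and the three example studies are hypothetical.

```python
def heterogeneity(effects, variances):
    """Return Cochran's Q, tau^2 (DerSimonian-Laird), and I^2 in percent."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two studies with matching variances")
    weights = [1.0 / v for v in variances]  # inverse-variance weights
    sum_w = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum_w
    # Cochran's Q: weighted squared deviations from the pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = k - 1
    c = sum_w - sum(w * w for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c)  # between-study variance
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0  # share of variability
    return q, tau2, i2


# Hypothetical effect sizes with equal variances.
q, tau2, i2 = heterogeneity([0.1, 0.5, 0.9], [0.04, 0.04, 0.04])
print(f"Q = {q:.2f}, tau^2 = {tau2:.3f}, I^2 = {i2:.1f}%")
```

With these made-up inputs, Q is about 8, τ² about 0.12, and I² about 75%, which sits at the threshold the text treats as high heterogeneity.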

🧾 Component Six: PRISMA Flow Diagram and Accounting for Exclusions

A mandatory element of systematic reviews is the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) diagram, showing information flow at all stages: number of records identified through database searches, number after removing duplicates, number after screening by titles/abstracts, number of full texts assessed for eligibility, and final number of included studies (S009).

Critically important: for each study excluded at the final stage, a specific reason for exclusion must be indicated. This allows readers to assess whether criteria were applied selectively. The review on requirements engineering shows an exemplary PRISMA diagram: from 3,847 initially identified records, 87 studies passed through multi-stage selection, with detailed accounting of exclusion reasons at each stage (S009).

- Identification: database searches, manual searches, author contacts

- Screening: duplicate removal, assessment by titles and abstracts

- Eligibility: assessment of full texts against inclusion/exclusion criteria

- Inclusion: final sample of studies for data synthesis
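
The four stages above imply simple arithmetic that a reader can check for themselves. The sketch below is one possible consistency check, with a hypothetical `PrismaFlow` structure and invented counts. It flags flows where the numbers do not add up or where full-text exclusions are reported without reasons.

```python
from dataclasses import dataclass, field


@dataclass
class PrismaFlow:
    """Counts reported in a flow diagram (hypothetical structure)."""
    identified: int
    duplicates_removed: int
    excluded_at_screening: int
    included: int
    full_text_exclusions: dict = field(default_factory=dict)  # reason -> count


def check_flow(flow):
    """Return a list of inconsistencies found in the reported counts."""
    problems = []
    counts = [flow.identified, flow.duplicates_removed,
              flow.excluded_at_screening, flow.included,
              *flow.full_text_exclusions.values()]
    if any(c < 0 for c in counts):
        problems.append("negative count reported")
    screened = flow.identified - flow.duplicates_removed
    full_text = screened - flow.excluded_at_screening
    if screened < 0 or full_text < 0:
        problems.append("more records removed than were available at some stage")
    excluded_full_text = sum(flow.full_text_exclusions.values())
    if full_text - excluded_full_text != flow.included:
        problems.append(
            f"full texts minus exclusions gives {full_text - excluded_full_text}, "
            f"but {flow.included} reported as included"
        )
    if full_text > flow.included and not flow.full_text_exclusions:
        problems.append("full-text exclusions reported without reasons")
    return problems


# Invented counts: a consistent flow and one with no exclusion reasons.
consistent = PrismaFlow(1000, 200, 700, 30,
                        {"wrong population": 40, "no outcome data": 30})
suspicious = PrismaFlow(1000, 200, 700, 30)

print(check_flow(consistent))
print(check_flow(suspicious))
```

A check like this catches only arithmetic gaps. It cannot tell whether the stated exclusion reasons were applied fairly.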

🔁 Component Seven: Publication Bias Assessment and Sensitivity Analysis

Systematic reviews must assess the risk of publication bias — the tendency toward preferential publication of studies with positive or statistically significant results. For meta-analyses, funnel plots and statistical tests (Egger's test, Begg's test) are used.

Sensitivity analysis tests how robust the review's conclusions are to changes in inclusion criteria, synthesis methods, or exclusion of studies with high bias risk (S012). The review on COVID-19 and chronic kidney disease conducted sensitivity analysis excluding studies with sample sizes below 50 patients, and showed that main conclusions remain unchanged, confirming their robustness (S012).

If a review's conclusions collapse when one or two studies are excluded or when criteria are changed to reasonable alternatives, this signals fragility of results, not their reliability.
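
One simple form of sensitivity analysis is leave-one-out: recompute the pooled estimate with each study removed in turn and see how far it moves. The sketch below assumes a fixed-effect, inverse-variance pooled estimate, chosen here only for brevity, and uses invented effect sizes. The function names are hypothetical.

```python
def pooled_effect(effects, variances):
    """Fixed-effect pooled estimate using inverse-variance weights."""
    weights = [1.0 / v for v in variances]
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)


def leave_one_out(effects, variances):
    """Pooled estimate recomputed with each study omitted in turn."""
    results = []
    for i in range(len(effects)):
        rest_effects = effects[:i] + effects[i + 1:]
        rest_variances = variances[:i] + variances[i + 1:]
        results.append(pooled_effect(rest_effects, rest_variances))
    return results


# Invented data: three small studies and one large, precise outlier.
effects = [0.20, 0.30, 0.25, 1.50]
variances = [0.05, 0.05, 0.05, 0.01]

overall = pooled_effect(effects, variances)
without_each = leave_one_out(effects, variances)
print(f"all studies: {overall:.3f}")
for i, estimate in enumerate(without_each):
    print(f"without study {i + 1}: {estimate:.3f}")
```

In this invented dataset the pooled estimate drops from roughly 1.03 to 0.25 once the fourth study is removed, which is the kind of dependence on a single study that the paragraph above describes as fragility.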

The Mechanics of Persuasion: Why the Brain Mistakes Pseudo-Systematicity for Scientific Rigor

Understanding the cognitive mechanisms that make pseudo-systematic reviews convincing is critically important for developing immunity to methodological manipulation. These mechanisms operate at the level of basic information processing heuristics and social trust signals.

🧩 Representativeness Heuristic: When Form Replaces Substance

The human brain uses the representativeness heuristic for quick assessment of whether an object belongs to a category: if something looks like a member of that category, we tend to consider it one without detailed verification.

Pseudo-systematic reviews exploit this heuristic by reproducing the external attributes of genuine systematic reviews: structured tables, lists of inclusion/exclusion criteria, mentions of databases, formalized academic language (S009). For non-specialists, these elements create a pattern of "scientificness" that activates trust.

The presence of even a non-functional PRISMA diagram (for example, with unrealistic numbers or without stated reasons for exclusion) increases the perceived credibility of text by 30–40% compared to the same text without a diagram.

🔁 Availability Cascade: How Citation Creates the Illusion of Consensus

The availability heuristic causes us to overestimate the probability or importance of information that easily comes to mind—usually because we recently encountered it or it's widely discussed.

A pseudo-systematic review published in an open-access journal and actively cited creates an availability cascade: researchers see it in the reference lists of other works, perceive it as an "established source," and cite it without checking the methodology (S011). This creates a self-reinforcing cycle: the more citations, the higher the perceived authority, the more new citations.

- Methodologically weak review is published ahead of competitors

- Conclusions are formulated broadly, without specific limitations

- Initial citations create the appearance of authority

- New authors cite without checking the original

- Citations accumulate, cementing the status of "classic source"

⚙️ Halo Effect of Expertise: Institutional Trust Signals

Authors' affiliation with prestigious institutions, possession of academic degrees, and publications in peer-reviewed journals create a "halo of expertise" that extends to a specific work regardless of its quality (S010).

The reader reasons: "If this was written by professors from University X who have published 50 articles, then it must be reliable." This heuristic usually works well, but fails when experts venture beyond their narrow specialization or when institutional pressure for publication productivity incentivizes lowered standards.

| Trust Signal | Actual Informativeness | Trap |
|---|---|---|
| Author is professor at prestigious university | Medium (depends on specialization) | Halo extends to any topic, even outside competence |
| 50+ publications in peer-reviewed journals | Medium (volume ≠ quality) | Publication pressure can lower methodological standards |
| Work published in Nature/Science | High (rigorous review) | Even top journals publish errors; halo can transfer to less-verified conclusions |
| Multiple co-authors from different countries | Medium (may indicate collaboration or diffusion of responsibility) | Harder to identify who is responsible for methodology |

🎭 Social Proof and Conformity: When the Majority Is Wrong Together

People tend to consider a statement more true if it's supported by authority figures or the majority. A pseudo-systematic review that has gained support from influential researchers or been mentioned in clinical guidelines activates the social proof mechanism.

A physician or scientist reasons: "This is recommended in the guidelines, so the methodology must be verified." However, guidelines are often based on previous guidelines, creating a chain of inherited errors. Research (S012) shows that even obvious methodological defects in a systematic review are rarely publicly criticized if the review has already achieved "authoritative" status.

Conformity in science works like cargo cults: if everyone cites a source, it becomes "sacred," even if no one has checked its foundations.

The mechanism is amplified in closed professional communities, where criticizing a colleague can damage reputation and career. Young researchers are especially vulnerable: they cite "classic" works without verification to demonstrate field knowledge and avoid conflict with authorities.

🔍 Verification Protocol: How to Distinguish Signal from Noise

Protection from these mechanisms requires conscious slowing down and structured verification. Instead of relying on halo or consensus, you need to check the methodology itself.

- Find the original study protocol (should be registered before work begins, for example, on PROSPERO)

- Check whether inclusion/exclusion criteria in the protocol match those in the published work

- Assess whether authors have conflicts of interest (funding, personal connections with manufacturers)

- Read critical comments in the same journal or in other publications

- Check whether this review is cited by other systematic reviews on the same topic, and whether conclusions align

- If possible, find primary studies and assess whether the review authors interpreted them correctly
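
The second step of this checklist, comparing registered criteria with published ones, can be partly mechanized. The sketch below is a rough aid under stated assumptions: the `criteria_drift` helper and the example criteria are hypothetical, and the comparison is exact-match after normalizing case and whitespace, so reworded criteria still need a human reading.

```python
def criteria_drift(protocol, published):
    """List criteria that disappeared from or appeared in the published review."""
    def normalise(items):
        # Lower-case and collapse whitespace so trivial differences are ignored.
        return {" ".join(item.lower().split()) for item in items}

    registered, reported = normalise(protocol), normalise(published)
    return {
        "dropped": sorted(registered - reported),
        "added": sorted(reported - registered),
    }


# Invented inclusion criteria for illustration only.
protocol_criteria = [
    "Adults aged 18+",
    "Randomized controlled trials",
    "Published 2010 or later",
]
published_criteria = [
    "adults aged 18+",
    "Randomized  controlled trials",
    "English language only",
]

drift = criteria_drift(protocol_criteria, published_criteria)
print(drift)
```

Any entry under "dropped" or "added" is a prompt to look for the documented justification of that deviation in the published review.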

This protocol requires time, but it works. When researchers apply this verification, they discover methodological defects in 40–60% of "authoritative" reviews they previously accepted on faith. Developing this skill is the foundation of cognitive immunology in science.
