What Constitutes a "Scientific Miracle" and Why Our Brains Accept Them So Easily: Defining the Boundaries of the Extraordinary

The term "scientific miracle" is an oxymoron that masks a misunderstanding of science's nature. Science doesn't deal with miracles; it deals with reproducible, testable phenomena explainable within existing or new theoretical models.

An extraordinary claim is an assertion that contradicts the established body of knowledge and requires revision of fundamental principles. For example, claims about instantaneous information transfer through quantum entanglement contradict special relativity and require extraordinary evidence (S001).

The human brain evolved not to evaluate statistical significance, but to make rapid decisions under uncertainty. We see patterns where none exist, attribute causal relationships to random correlations, and trust authorities more than data.

Even professional scientists are susceptible to cognitive biases when interpreting results, especially when working with p-values and statistical significance (S001), (S003).

🔎 Three Types of Extraordinary Claims

The first type extends existing theories without refuting them. The discovery of asymptotic freedom in quantum chromodynamics was extraordinary, but didn't contradict fundamental principles of quantum field theory.

The second type requires radical revision of fundamental laws: violation of thermodynamic laws, faster-than-light information transfer, macroscopic quantum effects in biology. Such claims require not just statistically significant results, but a theoretical mechanism explaining why all previous experiments failed to detect them.

The third type attempts to link modern science with ancient philosophical or religious texts. While historical analysis of philosophical ideas has value, presenting ancient texts as anticipating quantum mechanics is usually based on retrospective interpretation and ignores the context in which these ideas emerged.

- Apophenia: the tendency to perceive meaningful patterns in random data, a cognitive bias that is particularly dangerous when analyzing extraordinary claims.

- Extraordinary evidence: not merely a statistically significant result, but reproducible data, a theoretical mechanism, and integration into the existing body of knowledge.

🧱 The Line Between Skepticism and Dogmatism

Scientific history is full of examples where revolutionary ideas met resistance: heliocentrism, quantum mechanics. But these ideas prevailed not through their authors' charisma, but through reproducible experimental evidence and theoretical models that explained more phenomena than previous theories.

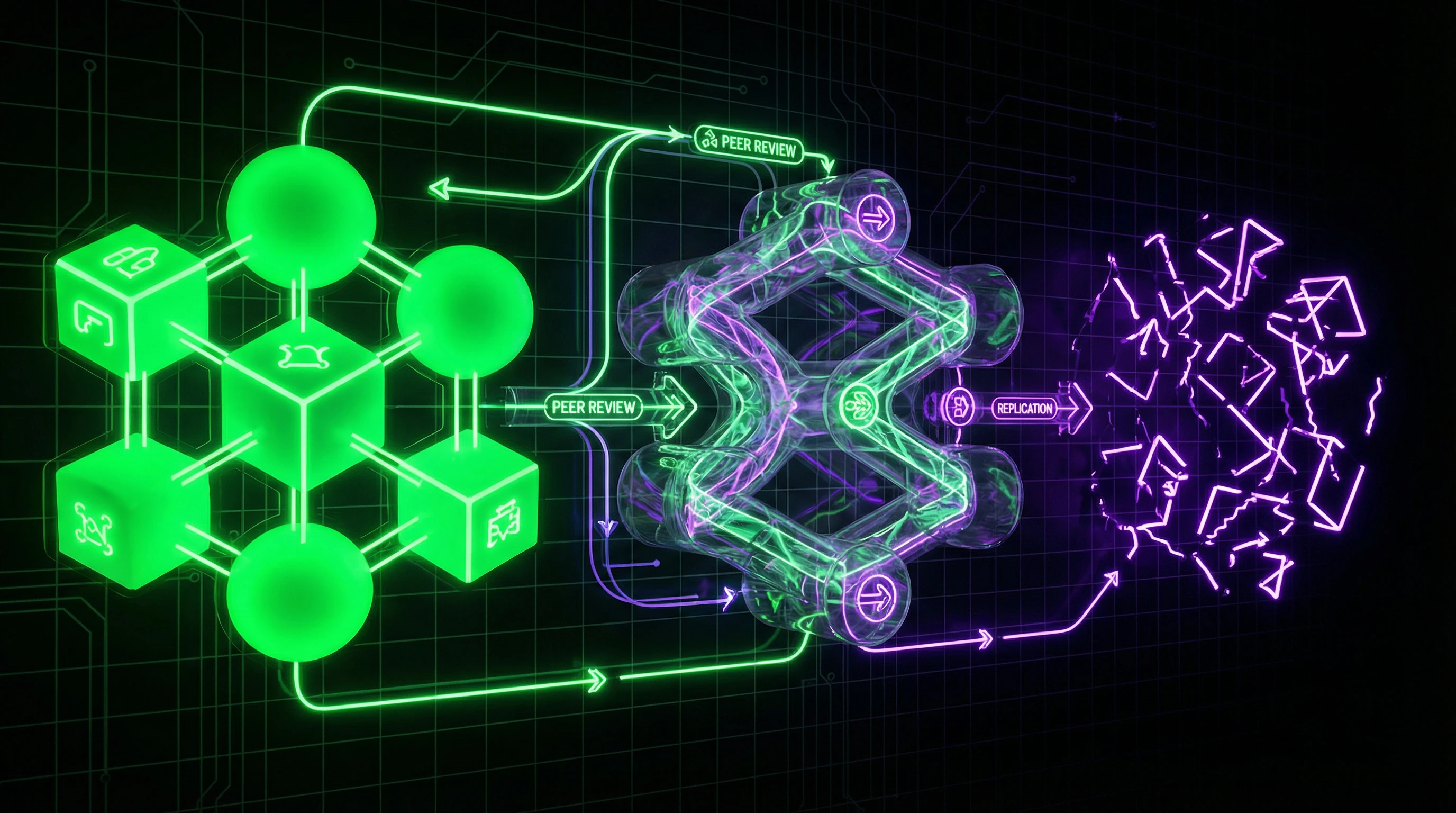

Key principle: an extraordinary claim must pass through multiple independent checks—replication in different laboratories, theoretical analysis, integration into the existing body of knowledge.

The peer review system, despite its flaws, remains the best available mechanism for filtering scientific claims (S006). Making the review process transparent, for example by disclosing reviewers' names, doesn't necessarily introduce systematic bias, though it creates new challenges.

| Sign of Healthy Skepticism | Sign of Dogmatism |
|---|---|
| Demands reproducible data | Rejects data without analysis |
| Seeks theoretical mechanism | Denies mechanism a priori |
| Verifies through independent sources | Relies on authority |

The connection between belief and evidence shows how scientific consensus functions when challenged. Understanding logical fallacies helps protect critical thinking from manipulation.

Steelmanning: The Seven Strongest Arguments for Extraordinary Claims and Why They Deserve Serious Consideration

Before dismantling extraordinary claims, we must construct their strongest version. This is the "steelman" principle, the opposite of a straw man. Only by refuting the most convincing form of an argument can we be confident in our conclusions. Let's examine seven categories of arguments most commonly used to support extraordinary claims.

🧪 The Reproducible Anomaly Argument: When the Experiment Repeats but Remains Unexplained

Among the strongest arguments for an extraordinary claim is a reproducible experimental anomaly. If multiple independent laboratories obtain the same unexpected result, it demands explanation. A cautionary example: the 2011 OPERA measurement of neutrinos that appeared to travel faster than light was traced to equipment faults, including a loose fiber-optic cable, and independent measurements did not confirm it. Important: reproducibility doesn't guarantee correct interpretation, but it makes random fluctuation an unlikely explanation.

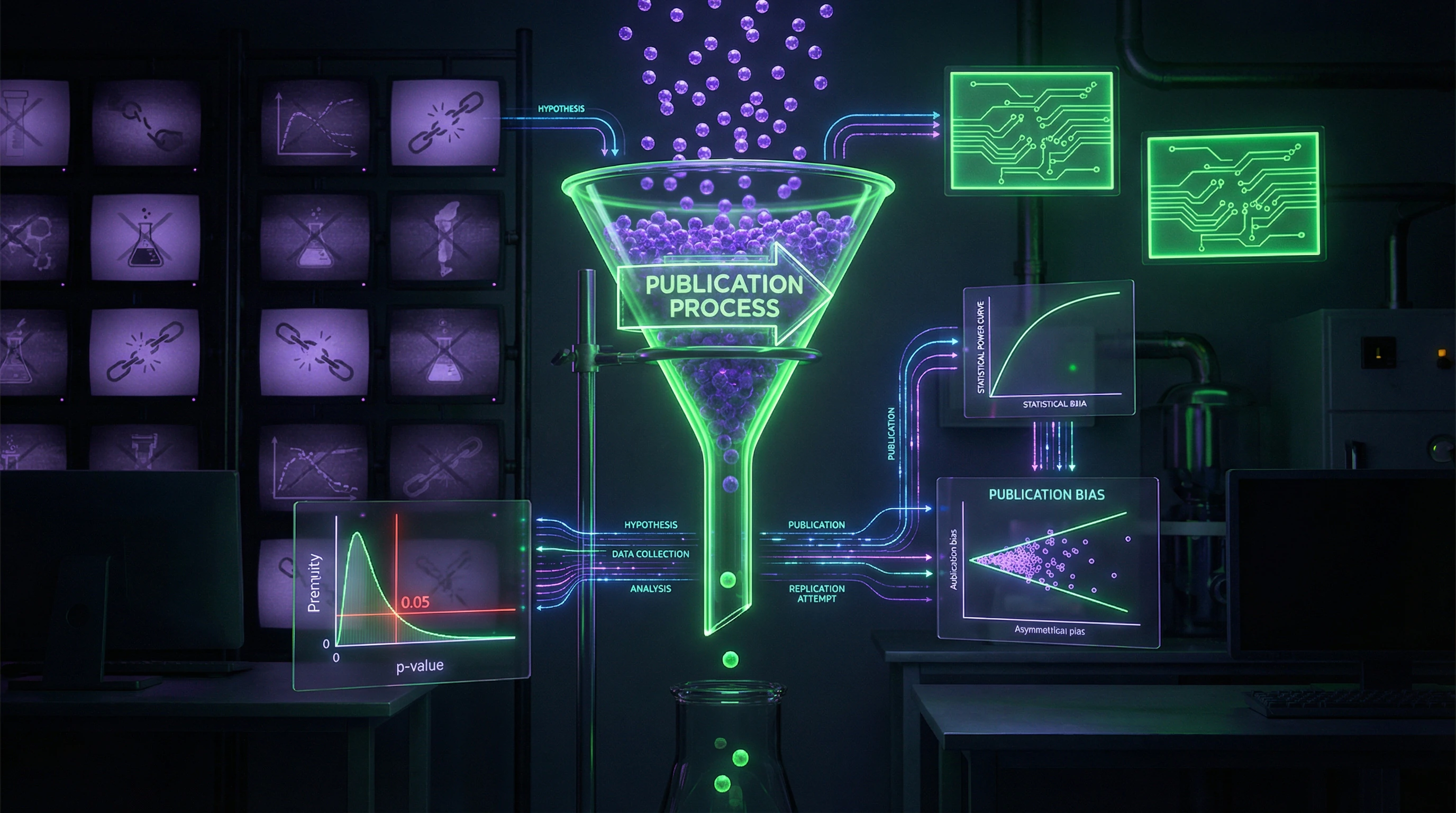

📊 The problem is that true reproducibility is rare. Research shows that in biology and other natural sciences, a significant portion of results fail to reproduce in repeated experiments (S001, S003). This stems not only from fraud but from subtler issues: p-hacking (trying alternative analyses until one reaches statistical significance), publication bias (publishing only positive results), and insufficient statistical power in experiments.

🧬 The Theoretical Elegance Argument: When a New Model Explains More with Less

The second strong argument is theoretical elegance and explanatory power. If a new theory explains everything the old one did, plus additional phenomena, and does so with fewer assumptions, it deserves serious consideration. Occam's razor works precisely this way: don't multiply entities without necessity.

An example from information biology: research on the optimal number of bases in the genetic code (S005) shows how an information-theoretic approach can explain why DNA uses exactly four bases, not more or fewer. This isn't an extraordinary claim in the strict sense, but it demonstrates how theoretical elegance can point to deep organizational principles in biological systems.

🔁 The Convergent Evidence Argument: When Different Methods Lead to the Same Conclusion

⚠️ The third argument is convergence of independent lines of evidence. If the same conclusion follows from different types of experiments, theoretical models, and observations in different contexts, this significantly strengthens its credibility. For example, the existence of dark matter is supported by gravitational lensing, galaxy rotation curves, cosmic microwave background anisotropy, and computer modeling of structure formation in the universe.

However, convergence can be illusory if all methods share the same systematic bias. A systematic review of context use in object detection (S008) shows how different machine learning algorithms can produce similar results not because they correctly model reality, but because they exploit the same artifacts in training data.

🧠 The Mechanistic Plausibility Argument: When There's a Theoretical Path from Cause to Effect

The fourth argument is the presence of a plausible mechanism. Even if experimental data is ambiguous, having a detailed theoretical mechanism explaining how cause leads to effect strengthens the claim. This is especially important in biology and medicine, where randomized controlled trials aren't always possible.

The problem: plausibility is subjective and depends on existing theoretical frameworks. What seems plausible within one paradigm may be absurd in another. A review of the partitional approach to quantum mechanics interpretation (S007) illustrates how alternative interpretations can be internally consistent yet radically different from mainstream views.

📊 The Statistical Power Argument: When Sample Size Rules Out Chance

🔬 The fifth argument is adequate statistical power. If an experiment has a large sample size and properly calculated statistical power, a significant result is less likely to be a false positive, because a well-powered study detects real effects more often while the false-positive rate stays fixed at the significance level. This is especially important in the context of science's reproducibility crisis, where many studies have insufficient power to detect real effects (S001, S003).

However, high statistical power doesn't protect against systematic errors. A large sample can precisely measure the wrong quantity if the experimental design contains systematic bias. Moreover, in the big data era, it's easy to find statistically significant but practically meaningless correlations.
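The relationship between power and false positives can be made concrete with a short calculation. The sketch below computes the share of significant results that reflect a real effect (the positive predictive value). The function name and the assumed prior of 10% true hypotheses are illustrative, not taken from the cited sources.

```python
def positive_predictive_value(prior: float, power: float, alpha: float = 0.05) -> float:
    """Share of statistically significant results that reflect a real effect.

    prior: assumed fraction of tested hypotheses that are actually true
    power: probability of detecting a real effect when it exists
    alpha: significance level, i.e. the false-positive rate under the null
    """
    true_positives = prior * power
    false_positives = (1.0 - prior) * alpha
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Hypothetical field where 1 in 10 tested hypotheses is true.
    for power in (0.2, 0.5, 0.8):
        ppv = positive_predictive_value(prior=0.1, power=power)
        print(f"power={power:.1f}  share of significant results that are real={ppv:.2f}")
```

Under these assumptions, raising power from 0.2 to 0.8 roughly doubles the share of significant findings that are real (from about 31% to 64%). The calculation says nothing about systematic errors, which power cannot fix.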

🧷 The Expert Consensus Argument: When Specialists in the Field Agree

The sixth argument is expert community consensus. If most specialists in the relevant field support a claim, that's a weighty argument in its favor. The Delphi method, used to achieve consensus in medical research (S009), shows how a structured process can help experts reach agreement on complex questions.

⚠️ But consensus isn't a guarantee of truth. The history of science knows many examples where consensus was wrong: from phlogiston theory to eugenics. Moreover, in some fields consensus can form under the influence of social, political, or economic factors unrelated to scientific evidence.

🔎 The Predictive Power Argument: When Theory Predicts New, Unexpected Phenomena

The seventh and strongest argument is predictive power. If a theory predicts new phenomena that are then discovered experimentally, this is powerful evidence in its favor. Classic examples: Einstein's prediction of light deflection in a gravitational field, Dirac's prediction of antiparticles, prediction of the Higgs boson.

An important distinction: postdictive explanations (when theory explains already known facts) are much weaker than predictions. It's easy to fit a model to existing data, but much harder to predict something no one has yet seen. This is precisely why preregistration of hypotheses and analysis plans is becoming standard in modern science.

Anatomy of Evidence: How to Evaluate Scientific Data Quality in an Era of Information Noise and Preprints

Now that we've built the steel man, it's time to take it apart. Evaluating the quality of scientific evidence requires a systematic approach that considers not only statistical significance, but also study design, potential biases, reproducibility, and theoretical integration.

📊 Hierarchy of Evidence: From Meta-Analyses to Anecdotes, and Why It's Not Absolute

The traditional hierarchy places systematic reviews and meta-analyses of randomized controlled trials (RCTs) at the top, followed by individual RCTs, cohort studies, case-control studies, and at the very bottom—case reports and expert opinions. This hierarchy is useful, but not absolute.

The quality of a systematic review depends on the quality of the included studies (S008). A meta-analysis of poorly designed experiments won't yield reliable conclusions, while a well-designed observational study can be more informative than a poor RCT.

- Systematic review — examination of all available studies on a topic with clear inclusion criteria

- Meta-analysis — statistical combination of results from multiple studies

- Randomized controlled trial — random assignment of participants to groups

- Cohort study — observation of a group of people with a common characteristic

- Case-control study — comparison of people with and without the outcome of interest

- Case report — detailed description of one or more patients
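The "statistical combination" step of a meta-analysis can be sketched with inverse-variance weighting, one common approach (a fixed-effect model). The study values below are hypothetical, and the function name is an illustration rather than any library's API.

```python
import math


def fixed_effect_pool(effects, std_errors):
    """Pool study estimates, weighting each by 1 / (standard error squared)."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


if __name__ == "__main__":
    # Hypothetical effect estimates and standard errors from three studies.
    effects = [0.30, 0.10, 0.25]
    std_errors = [0.20, 0.10, 0.15]
    pooled, pooled_se = fixed_effect_pool(effects, std_errors)
    print(f"pooled effect = {pooled:.3f} (standard error {pooled_se:.3f})")
```

Pooling shrinks the standard error, but it cannot remove a bias shared by the included studies, which is why the quality of the inputs matters more than their number.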

🧾 P-Values and Statistical Significance: Why p < 0.05 Doesn't Mean "Proven"

One of the most common mistakes is equating statistical significance with practical importance or with the truth of a hypothesis. A p-value is the probability of obtaining data at least as extreme as those observed, given that the null hypothesis is true.

A p-value is not the probability that the null hypothesis is true, nor the probability that the result arose by chance. It is conditional on the null hypothesis being true, so it cannot by itself say how likely that hypothesis is.
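One way to see what a p-value measures is a permutation test: if the null hypothesis is true, group labels are interchangeable, so we can shuffle them and count how often a difference at least as large as the observed one appears. This is a minimal sketch with made-up measurements; the function name and data are assumptions for illustration.

```python
import random
from statistics import fmean


def permutation_p_value(group_a, group_b, n_permutations=10_000, seed=0):
    """Two-sided permutation p-value for a difference in group means."""
    rng = random.Random(seed)
    observed = abs(fmean(group_a) - fmean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # relabel the observations at random
        diff = abs(fmean(pooled[:n_a]) - fmean(pooled[n_a:]))
        if diff >= observed:
            at_least_as_extreme += 1
    # Add one to numerator and denominator so the estimate is never exactly zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


if __name__ == "__main__":
    # Hypothetical measurements from a treated and an untreated group.
    treated = [5.1, 6.0, 5.8, 6.3, 5.5, 6.1]
    untreated = [5.0, 5.2, 4.9, 5.6, 5.3, 5.1]
    print(f"p = {permutation_p_value(treated, untreated):.4f}")
```

The number returned is a statement about the shuffled data under the null hypothesis, not the probability that the treatment works.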

The threshold p < 0.05 is an arbitrary convention, not a magical boundary between truth and falsehood (S001, S003). With multiple testing (when many hypotheses are tested simultaneously), the probability of false positive results increases sharply. Corrections like Bonferroni help, but don't completely solve the problem.
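The growth in false positives under multiple testing follows from simple arithmetic. The sketch below assumes the tests are independent and that every null hypothesis is true; the function names are illustrative.

```python
def family_wise_error_rate(n_tests: int, alpha: float = 0.05) -> float:
    """Probability of at least one false positive across n independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests


def bonferroni_threshold(n_tests: int, alpha: float = 0.05) -> float:
    """Per-test significance threshold under the Bonferroni correction."""
    return alpha / n_tests


if __name__ == "__main__":
    for n in (1, 5, 20, 100):
        uncorrected = family_wise_error_rate(n)
        corrected = family_wise_error_rate(n, alpha=bonferroni_threshold(n))
        print(f"{n:>3} tests: uncorrected={uncorrected:.2f}, with Bonferroni={corrected:.3f}")
```

With 20 tests the chance of at least one false positive is already about 64%. Bonferroni brings it back under 5%, but at the cost of a much stricter per-test threshold, which lowers the power to detect real effects.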

🔁 Reproducibility as the Gold Standard: The Replication Crisis and What It Means for Evaluating Claims

Reproducibility—the ability to obtain the same result when repeating an experiment—is considered the gold standard of the scientific method. The replication crisis of recent years has shown that a significant portion of published results cannot be reproduced, especially in psychology, medicine, and biology (S001, S003).

| Cause of Non-Reproducibility | Mechanism | How to Detect |
|---|---|---|
| Insufficient statistical power | Sample size too small to detect the effect | Check power calculation in methodology |
| Flexibility in data analysis | Researcher selects analysis that yields significant result | Compare preregistration with published analysis |
| Publication bias | Only significant results are published | Search for preprints and negative results |
| HARKing (hypothesizing after the results are known) | Hypothesis formulated after obtaining results | Check logic of hypothesis and design |

Non-reproducibility doesn't always mean fraud. Often it's the result of honest errors and structural problems in the scientific publication system.
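Checking a study's power calculation, as suggested in the table above, often comes down to a formula like the following. This sketch uses the normal approximation for a two-sided comparison of two equal-sized groups, with the effect expressed in standard deviations; the function name is an assumption for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate participants needed per group to detect a standardized effect."""
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1.0 - alpha / 2.0)  # two-sided critical value
    z_power = standard_normal.inv_cdf(power)
    return math.ceil(2.0 * ((z_alpha + z_power) / effect_size) ** 2)


if __name__ == "__main__":
    for d in (0.8, 0.5, 0.2):
        print(f"effect size {d}: about {sample_size_per_group(d)} participants per group")
```

A medium effect (0.5 standard deviations) needs about 63 participants per group under these assumptions, and a small effect (0.2) needs several hundred. A study claiming to detect a small effect with a few dozen participants deserves a closer look.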

🧷 Peer Review as a Filter: What It Can and Cannot Do, and Why Open Review Is Changing the Game

The peer review system is the primary quality control mechanism in science. Before publication, an article undergoes review by several experts who evaluate the methodology, data analysis, and conclusions. However, this system is far from perfect.

Open peer review (when reviewers' names are known) doesn't necessarily introduce systematic bias, but can change the dynamics of interaction (S006). It can reduce the aggressiveness of criticism and make the process more constructive. Important: peer review doesn't guarantee correctness of results, it only verifies that the methodology meets field standards.

🔬 Preprints and Post-Publication Review: The New Ecosystem of Scientific Communication and Its Risks

The traditional model of scientific publication—submission to a journal, peer review, publication—takes months or years. Preprints (versions of articles in open access before formal review) have revolutionized scientific communication, accelerating the dissemination of results.

However, preprints create new risks. Unvetted results can be picked up by media and presented as established facts. During the COVID-19 pandemic, this led to the spread of numerous erroneous claims based on low-quality preprints (S006). Post-publication review (when an article is discussed and critiqued after publication) partially solves this problem, but requires active participation from the scientific community.

🧭 Conflicts of Interest and Funding: How Money Distorts Scientific Conclusions, Even When Researchers Are Honest

Conflicts of interest are situations where a researcher has financial or personal incentives that could influence the design, conduct, or interpretation of a study. A classic example is research funded by pharmaceutical companies, which is more likely to show positive results for those companies' drugs.

A conflict of interest doesn't automatically mean the results are incorrect. But it requires heightened vigilance when evaluating evidence.

Funding transparency, preregistration of study protocols, and open access to data are mechanisms that help reduce the influence of conflicts of interest. A structured approach to achieving expert consensus can help minimize bias in the evidence evaluation process.

When evaluating extraordinary claims, pay attention to funding, author affiliations, and availability of open data. This doesn't prove error, but indicates the need for additional verification.

Mechanisms of Illusion: Why Correlation Doesn't Equal Causation, and How Confounders Create False Patterns

Even compelling data can hide the illusion of causation. This is critical when evaluating extraordinary claims, which often rely on observations rather than controlled experiments.

🔁 Correlation vs. Causation: Classic Traps and Modern Methods of Causal Inference

"Correlation doesn't imply causation" is a well-known principle, but the reasons behind it deserve a closer look. Setting aside chance and selection effects, two variables can correlate for three reasons: A causes B, B causes A, or a third variable C causes both.

Modern causal inference methods (instrumental variables, regression discontinuity design, synthetic control) allow drawing conclusions about causation from observational data. But they require strong assumptions that often cannot be tested. Randomized controlled trials remain the gold standard: random assignment balances both known and unknown confounders between groups on average, so any remaining differences are due to chance rather than systematic bias.

🧩 Confounders and Hidden Variables: How a Third Factor Creates Illusion

A confounder is a variable associated with both the presumed cause and the effect, creating a false appearance of a relationship between them. Classic example: the correlation between ice cream consumption and drownings. Both are caused by heat—a third factor.

A confounder works like an invisible director: it pushes both variables in the same direction, and the observer sees only their synchronized movement, mistaking it for causation.

In medicine, confounders are especially dangerous. Patients taking vitamins are often healthier not because of the vitamins, but because they already care about their health: they exercise, eat better, and get regular checkups. Health-consciousness drives both the vitamin-taking and the better health.

To control confounders, researchers use stratification (dividing into subgroups), regression analysis, or matching. But all these methods require that you know about the confounder in advance. Hidden variables—those you don't suspect—remain an invisible threat.
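The ice cream example can be simulated to show how adjusting for a known confounder removes a spurious correlation. In this sketch, heat drives both variables and neither affects the other. All numbers are synthetic, and the adjustment shown (correlating the residuals after regressing each variable on heat) is one simple form of regression control.

```python
import random
from statistics import fmean


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = fmean(x), fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def residuals(y, x):
    """Part of y left unexplained by a straight-line fit on x."""
    mx, my = fmean(x), fmean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    intercept = my - slope * mx
    return [b - (intercept + slope * a) for a, b in zip(x, y)]


def simulate(n=5000, seed=1):
    rng = random.Random(seed)
    heat = [rng.gauss(0, 1) for _ in range(n)]
    ice_cream = [h + rng.gauss(0, 1) for h in heat]  # depends only on heat
    drownings = [h + rng.gauss(0, 1) for h in heat]  # depends only on heat
    raw = pearson(ice_cream, drownings)
    adjusted = pearson(residuals(ice_cream, heat), residuals(drownings, heat))
    return raw, adjusted


if __name__ == "__main__":
    raw, adjusted = simulate()
    print(f"raw correlation: {raw:.2f}, after adjusting for heat: {adjusted:.2f}")
```

The adjustment only works because heat was measured. If the confounder is not in the dataset, the raw correlation is all the analyst sees.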

📊 Reverse Causation and Cyclical Relationships: When Effect Becomes Cause

Reverse causation occurs when the presumed effect is actually the cause. Depression correlates with low income, and the arrow can point either way: low income can cause depression, and depression can lead to job loss and reduced income.

| Scenario | Apparent Correlation | True Mechanism | How to Test |
|---|---|---|---|
| Vitamins and health | People taking vitamins are healthier | Healthy people take vitamins | Randomized experiment |
| Prayer and recovery | Those who pray recover more often | Less severe patients pray; doctors treat believers better | Control for severity, double-blind design |
| Social media and loneliness | Active users are lonelier | Lonely people seek comfort in networks | Longitudinal study with lag |

Cyclical relationships complicate the picture even further. Poverty causes stress, stress reduces cognitive abilities, which makes escaping poverty harder. The system self-reinforces, and it's impossible to point to a single cause.

🎯 Selection Bias: When Data Sampling Itself Creates Illusion

Selection bias occurs when the method of data selection systematically distorts results. If you study treatment effectiveness only among patients who completed it, you exclude those who quit due to side effects or ineffectiveness.

Patients who complete treatment make it look more effective than it actually is. This is a form of survivorship bias. With extraordinary claims, selection bias is especially powerful: people helped by miracle treatments talk about it; those it didn't help stay silent.

- Publication bias: studies with positive results are published more often than those with negative results. This creates the illusion that an effect is well established when many studies in fact found nothing.

- Recall bias: people better remember events that confirm their beliefs. If you believe in a miracle treatment, you'll remember the cases when it worked and forget the cases when it didn't.

- Multiple testing bias: if you test 100 hypotheses with no real effect behind them, about 5 will still come out "significant" purely by chance (at significance level 0.05). If you publish only those 5, readers see 100% success.
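The combined effect of chance findings and selective publication can be simulated. The sketch below runs many small studies of an effect that is truly zero and "publishes" only the significant ones. The study sizes, the known standard deviation of 1, and the function name are assumptions chosen to keep the example short.

```python
import random
from statistics import NormalDist, fmean


def run_studies(n_studies=2000, n_per_study=20, true_effect=0.0, seed=2):
    """Return all effect estimates and the subset that reached p < 0.05."""
    rng = random.Random(seed)
    critical_z = NormalDist().inv_cdf(0.975)  # two-sided 5% threshold
    all_effects, published = [], []
    for _ in range(n_studies):
        sample = [rng.gauss(true_effect, 1.0) for _ in range(n_per_study)]
        estimate = fmean(sample)
        z_score = estimate * n_per_study ** 0.5  # standard deviation assumed known (1)
        all_effects.append(estimate)
        if abs(z_score) > critical_z:
            published.append(estimate)
    return all_effects, published


if __name__ == "__main__":
    all_effects, published = run_studies()
    share = len(published) / len(all_effects)
    print(f"significant by chance: {share:.1%} of studies")
    print(f"mean |effect|, all studies: {fmean([abs(e) for e in all_effects]):.2f}")
    print(f"mean |effect|, published only: {fmean([abs(e) for e in published]):.2f}")
```

Roughly one study in twenty clears the threshold although nothing is there, and every published estimate looks sizable because only large chance deviations can reach significance in a small sample.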

To control selection bias, it's necessary to clearly define inclusion and exclusion criteria before starting the study, use intention-to-treat analysis, and register the study in open registries.
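The difference between analyzing only those who finished treatment and analyzing everyone who was assigned to it can be shown with a simulated trial of a treatment that does nothing. The dropout rule, the sample sizes, and the assumption that dropouts' outcomes are still recorded are all simplifications made for illustration.

```python
import random
from statistics import fmean


def simulate_trial(n_per_arm=5000, dropout_below=-0.5, seed=3):
    """Compare a completers-only estimate with an intention-to-treat estimate."""
    rng = random.Random(seed)
    # Change in health score; the treatment has no real effect in either arm.
    control = [rng.gauss(0, 1) for _ in range(n_per_arm)]
    treated = [rng.gauss(0, 1) for _ in range(n_per_arm)]
    # Treated patients who get noticeably worse quit the treatment.
    completers = [change for change in treated if change > dropout_below]
    completers_only = fmean(completers) - fmean(control)
    intention_to_treat = fmean(treated) - fmean(control)
    return completers_only, intention_to_treat


if __name__ == "__main__":
    completers_only, intention_to_treat = simulate_trial()
    print(f"completers-only estimate:    {completers_only:+.2f}")
    print(f"intention-to-treat estimate: {intention_to_treat:+.2f}")
```

The completers-only estimate shows a clear apparent benefit, while the intention-to-treat estimate stays near zero, which is the true effect in this simulation.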

🔍 How to Distinguish Causation from Illusion: Practical Checklist

- Is there an alternative explanation through a confounder? Name three possible third factors.

- Could there be reverse causation? Is it logically possible that the effect causes the cause?

- How was the data selected? Who's included, who's excluded, why?

- Is there a mechanism? If A causes B, there must be a biological or physical chain of events.

- Is it reproducible? Has it been found in different populations, countries, time periods?

- Is there a dose-response relationship? Does more A produce more B, or is the effect the same at any amount of A?

- Does it predict the future? If causation is real, it should work in new data.

Extraordinary claims often don't pass even the first three items on this list. This doesn't mean they're false, but it does mean the evidence is insufficient to conclude causation.