What is unfalsifiability and why it turns science into philosophy — defining the problem through Popper's criterion

Unfalsifiability is a property of a statement that makes it impossible to refute through empirical observation. Karl Popper proposed falsifiability as a criterion of demarcation between science and non-science: a theory is scientific if it allows predictions that can be compared with data and potentially shown to be false (S007).

Popper's criterion doesn't require a theory to be true, only that it be refutable. This is the distinction between a scientific statement ("all swans are white", refuted by a single black swan) and a non-scientific one ("an invisible dragon in the garage that leaves no traces"). The latter cannot be disproven: any absence of evidence is explained by the dragon's properties.

Why falsifiability matters more than truth

Science doesn't strive for absolute truth — it strives for refutable statements. A theory that explains everything explains nothing: it excludes no possible observations and carries no information about reality.

- Falsifiability: the ability of a theory to be refuted by empirical data. Distinguishes science from metaphysics.

- Unfalsifiability: a theory's compatibility with any possible outcome. Renders it scientifically sterile.
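The claim that a theory which excludes nothing carries no information can be illustrated with a toy model. This is a minimal sketch, not a formal measure from the literature: the finite outcome set, the uniform weighting, and the names `OUTCOMES`, `excluded`, and `information_bits` are all assumptions made for illustration.

```python
import math

# Hypothetical toy model: the space of possible observations is a small finite
# set, and a "theory" is represented by the subset of outcomes it permits.
OUTCOMES = {"white swan", "black swan", "red swan", "green swan"}

def excluded(theory_allows: set) -> set:
    """Outcomes whose observation would refute the theory."""
    return OUTCOMES - theory_allows

def information_bits(theory_allows: set) -> float:
    """log2(total outcomes / permitted outcomes), assuming uniform weighting."""
    return math.log2(len(OUTCOMES) / len(theory_allows))

all_swans_white = {"white swan"}   # forbids three of the four outcomes
anything_goes = set(OUTCOMES)      # forbids nothing

print(sorted(excluded(all_swans_white)))
print(information_bits(all_swans_white))  # 2.0
print(information_bits(anything_goes))    # 0.0
```

In this sketch the falsifiable theory has three potential refuters and carries two bits; the all-permitting theory has no refuters and carries zero.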

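Large-scale replication studies have shown that the reproducibility of published results is far from optimal (S003). Contributing factors include publication bias and questionable research practices (QRPs) such as p-hacking and HARKing (hypothesizing after the results are known). Such practices matter here because they shield a hypothesis from refutation: a failed prediction is quietly replaced by one that fits.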

The multiverse as the ultimate case of unfalsifiability

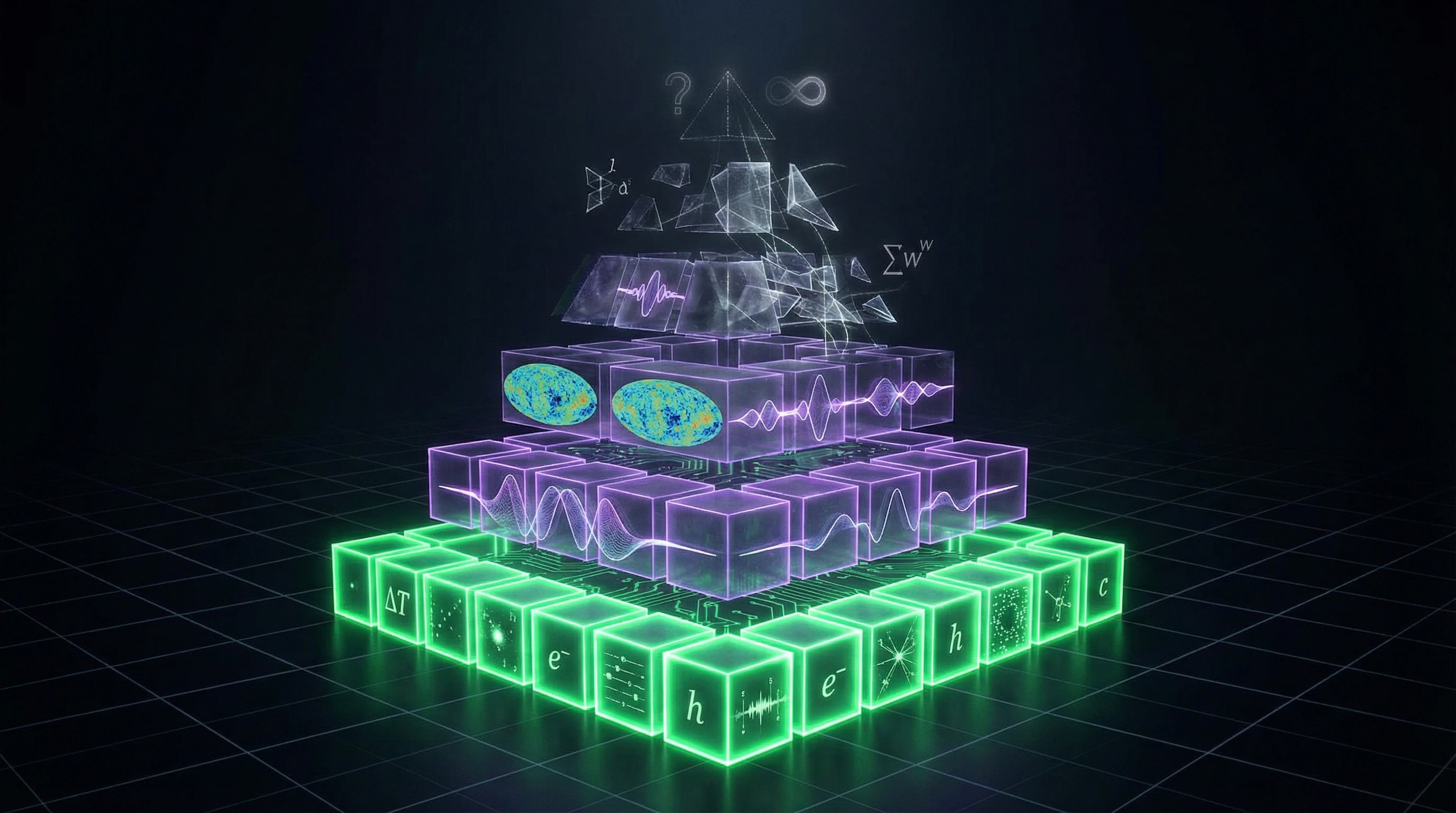

Multiverse theory claims that our Universe is one of a vast, possibly infinite, number of universes, which in many versions each have their own laws and constants. The idea arises in several contexts: eternal inflation, the string theory landscape of vacua, and the many-worlds interpretation of quantum mechanics.

The fundamental problem: other universes are causally separated from ours and fundamentally unobservable. How do you test a theory if it predicts unobservable objects?

Any observation in our Universe is compatible with both the existence of a multiverse and its absence. The theory generates no testable predictions that distinguish it from alternative explanations.

This creates an epistemological dead end: the theory becomes scientifically sterile. It may be logically coherent and mathematically elegant, but it remains a philosophical statement, not a scientific hypothesis. This is precisely where Popper's criterion exposes the boundary between what can be called science and what remains speculation.

Steelmanning the Multiverse — Seven Strongest Arguments for the Multiverse Theory and Why They Deserve Serious Consideration

Before criticizing multiverse theory for unfalsifiability, it's necessary to present it in its strongest form. This is called "steelmanning", the opposite of a strawman argument.

Multiverse theory didn't emerge from nowhere; it's a consequence of serious physical theories that successfully explain observed phenomena.

🌌 The Argument from Cosmological Inflation and Eternal Expansion

Inflationary cosmology, proposed by Alan Guth in the early 1980s, addresses several fundamental problems of the standard Big Bang model: the horizon problem, the flatness problem, and the magnetic monopole problem.

According to inflationary theory, the universe underwent a period of exponential expansion in the first fractions of a second after the Big Bang. Many versions of inflation predict "eternal inflation" — a process that never ends globally, creating an infinite number of "bubble universes" (S001).

| Element | Status |
|---|---|
| Inflation as a theory | Confirmed by cosmic microwave background observations |
| Eternal inflation | Mathematical consequence of inflation equations |
| Bubble universes | Each has its own physical laws |

If inflation is correct, then the multiverse may be an inevitable consequence, not speculation.

🎻 The Argument from String Theory and the Landscape of Vacua

String theory — one of the leading candidates for a theory of quantum gravity — predicts the existence of an enormous number of possible vacuum states, possibly 10^500 or more.

Each such state corresponds to a universe with different physical constants and laws. This is called the "string theory landscape." If string theory is correct and if all possible vacuum states are realized (which is natural in the context of eternal inflation), then the multiverse isn't just possible — it's necessary.

⚛️ The Argument from Quantum Mechanics and the Many-Worlds Interpretation

The many-worlds interpretation of quantum mechanics, proposed by Hugh Everett in 1957, asserts that all possible outcomes of quantum measurements are realized in different branches of the wave function.

This interpretation solves the wave function collapse problem without introducing a special measurement mechanism. The many-worlds interpretation is empirically equivalent to the standard Copenhagen interpretation: it makes the same predictions. Its proponents consider it more economical because it doesn't require an additional postulate about collapse.

If you take the unitary evolution of quantum mechanics seriously, the multiverse of quantum branches follows automatically.

🎯 The Argument from Fine-Tuning of Physical Constants

The fundamental physical constants of our universe (the strength of gravity, the electromagnetic coupling constant, the electron mass, etc.) have values that appear to be precisely calibrated for the emergence of complex structures and life (S002).

On this argument, even a slight change in any of these constants would make the universe unsuitable for life. There are three possible explanations: (1) an incredible coincidence, (2) an intelligent designer, (3) a multiverse with anthropic selection.

- The third explanation is scientifically preferable to the second because it doesn't introduce supernatural agents

- If there exists a vast number of universes with different constants, it's unsurprising that we find ourselves in one where the constants permit our existence

- This is simply the anthropic selection principle, not a miracle
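The selection effect described in the list above can be sketched as a small simulation. Everything in it is an assumption made for illustration, not physics: a single dimensionless "constant" drawn uniformly per universe, an arbitrary narrow life-permitting window, and the names `LIFE_WINDOW` and `permits_observers`.

```python
import random

random.seed(0)  # fixed seed so the sketch is repeatable

# Illustrative assumptions: one "constant" per universe, uniform on [0, 1),
# and observers can exist only inside a narrow window of values.
LIFE_WINDOW = (0.50, 0.51)
N_UNIVERSES = 100_000

def permits_observers(constant: float) -> bool:
    low, high = LIFE_WINDOW
    return low <= constant < high

constants = [random.random() for _ in range(N_UNIVERSES)]
inhabited = [c for c in constants if permits_observers(c)]

# Fraction of all universes that can host observers (rare by construction).
fraction_inhabited = len(inhabited) / N_UNIVERSES

# Fraction of observers who measure a life-permitting constant (all of them).
fraction_observers_in_window = (
    sum(permits_observers(c) for c in inhabited) / len(inhabited)
)

print(fraction_inhabited)
print(fraction_observers_in_window)
```

Life-permitting universes are rare in the ensemble, yet every observer finds a life-permitting value. The sketch shows why the observation is unsurprising given an ensemble; it says nothing about whether the ensemble exists.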

🔢 The Argument from Mathematical Inevitability and the Principle of Completeness

Some physicists and mathematicians, including Max Tegmark, propose a radical version of the multiverse: the mathematical universe. According to this hypothesis, all mathematically consistent structures physically exist.

This argument is based on the principle that physical reality and mathematical structure are one and the same. If this is true, then the question "why does this particular universe exist?" disappears — all possible universes exist, described by different mathematical structures.

🧬 The Argument from Successful Predictions of Underlying Theories

The theories that lead to the multiverse (inflation, string theory, quantum mechanics) weren't developed specifically to explain the multiverse. They were created to solve specific physical problems and made successful predictions in observable domains.

The multiverse emerges as a byproduct, not as the primary goal. This gives multiverse theory a certain scientific respectability — it's not an ad hoc hypothesis invented to explain one specific fact.

🌠 The Argument from Indirect Observational Consequences

Some versions of the multiverse may have indirect observational consequences. For example, if bubble universes can collide, this could leave characteristic imprints in the cosmic microwave background.

Researchers have searched for such signals, though so far unsuccessfully. Also, some versions of the multiverse make statistical predictions about the distribution of physical constants that can be tested through anthropic reasoning (S007).

Not all versions of multiverse theory are completely unfalsifiable. Some variants can generate testable predictions, albeit indirect ones.

Evidence Base and Its Limits — A Detailed Analysis of What We Actually Know About the Multiverse and Where Speculation Begins

Moving from arguments to evidence, it's essential to clearly distinguish what is empirically confirmed from what remains theoretical extrapolation. Modern science faces serious reproducibility problems, making critical analysis of the evidence base particularly important (S003).

🧪 Empirical Confirmations of Inflationary Cosmology

Inflationary theory has made several successful predictions confirmed by observations of the cosmic microwave background (CMB). The COBE, WMAP, and Planck satellites measured CMB temperature fluctuations that align with inflation's predictions: a nearly scale-invariant spectrum of perturbations, Gaussian distribution, and specific correlation functions.

However, these observations confirm only that something resembling inflation occurred in the early Universe. They do not confirm eternal inflation or the existence of other universes.

The leap from "inflation occurred in our observable region" to "inflation continues eternally, creating an infinite number of universes" is a theoretical extrapolation without direct empirical confirmation.

🎻 String Theory's Status as an Empirical Science

String theory, despite its mathematical elegance, has not yet made a single verified empirical prediction that distinguishes it from alternative quantum gravity theories. Its distinctive predictions typically involve energies near the Planck scale (about 10^19 GeV), roughly fifteen orders of magnitude beyond what current accelerators can reach.

The string theory landscape with its 10^500 possible vacua is a mathematical result, not an empirical fact. We don't know whether all these vacua are physically realized or whether string theory is even correct.

- The Speculative Nature of the String Theory Argument: when a theory contains 10^500 solutions, each potentially describing a separate universe, the selection criterion between them vanishes. This makes the argument from string theory to the multiverse extremely speculative: we cannot test which solution (if any) corresponds to reality.

⚛️ Quantum Mechanics: Interpretations Versus Facts

Quantum mechanics as a mathematical formalism has been confirmed by countless experiments with incredible precision. However, the many-worlds interpretation is precisely that—an interpretation, a philosophical position about what the mathematical formalism means.

The standard interpretations of quantum mechanics make identical predictions for every experiment performed to date. Choosing between them is therefore a matter of philosophical preference, not empirical data.

| Interpretation | Empirical Predictions | Multiverse Status |
|---|---|---|
| Copenhagen | Identical to others | Not required |
| Many-Worlds | Identical to others | Postulated |
| de Broglie-Bohm | Identical to others | Not required |
| Transactional | Identical to others | Not required |

The claim that the many-worlds interpretation proves the multiverse's existence is logically incorrect—it conflates interpretation with fact. See also the analysis of quantum consciousness myths, where similar confusion leads to pseudoscientific conclusions.

📊 The Fine-Tuning Problem: How Real Is It?

The fine-tuning argument rests on the assumption that physical constants could have had different values. But this assumption itself is not obvious.

Perhaps there exists a yet-unknown fundamental theory that uniquely determines all constants' values, making them necessary rather than contingent. In that case, the fine-tuning problem disappears.

Fine-tuning calculations often depend on assumptions about what forms of life are possible. We know only one example of life—carbon-based life on Earth. Perhaps there are entirely different forms of complexity that could emerge with other constant values.

This makes quantitative fine-tuning estimates extremely uncertain. The problem is compounded by our inability to conduct experiments with alternative constant sets.

🔍 Searches for Universe Collision Traces: Null Results

Several research groups have searched cosmic microwave background data for signs of collisions between our universe and other bubble universes. Such collisions could theoretically leave characteristic circular patterns in CMB temperature fluctuations.

However, all these searches have yielded null results—no convincing collision signatures have been found. This doesn't disprove the multiverse (collisions might be too rare or weak to detect), but it shows that even potentially testable consequences of the theory remain unconfirmed.

- Absence of evidence is not proof of absence

- But it's also not evidence of existence

- A null result is still information: in a Bayesian sense it should lower the hypothesis's probability, in proportion to how likely a detection would have been if the hypothesis were true
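How much a null result should move the probability depends on how likely a detection was in the first place. The following is a minimal sketch of that update using Bayes' rule; the function name `posterior_after_null`, the prior of 0.5, and the detection probabilities are hypothetical numbers chosen for illustration, not estimates from the CMB searches.

```python
def posterior_after_null(prior: float,
                         p_detect_if_true: float,
                         p_false_alarm: float = 0.0) -> float:
    """P(hypothesis | no signal found), by Bayes' rule.

    prior            -- P(hypothesis) before the search
    p_detect_if_true -- P(signal found | hypothesis true)
    p_false_alarm    -- P(signal found | hypothesis false)
    """
    p_null_given_h = 1.0 - p_detect_if_true
    p_null_given_not_h = 1.0 - p_false_alarm
    evidence = prior * p_null_given_h + (1.0 - prior) * p_null_given_not_h
    return prior * p_null_given_h / evidence

# Hypothetical scenarios: the search could never, rarely, or usually see a signal.
for p_detect in (0.0, 0.1, 0.9):
    print(p_detect, round(posterior_after_null(0.5, p_detect), 4))
```

When collisions would almost never be detectable, the null result barely shifts the prior; when detection would have been likely, the same null result counts heavily against the hypothesis.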

🧾 Bayesian Approach to Evaluating Multiverse Theory

Contemporary research proposes using a Bayesian approach to evaluate unfalsifiable claims (S007). Instead of Popper's binary criterion, the Bayesian approach considers how a theory changes our probability assessments in light of new data.

A theory is considered informative if the data it addresses significantly change posterior probabilities compared to priors. The problem with the multiverse in a Bayesian context is that it's compatible with virtually any observation in our Universe (through the anthropic principle), so observations only weakly update its probability.

A theory that predicts everything predicts nothing specific. Bayesian analysis shows that even with a more flexible criterion of scientificity, the multiverse remains problematic.
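The weak-update problem can be written in the odds form of Bayes' theorem. This is a sketch in notation chosen here for illustration: M stands for the multiverse hypothesis, \neg M for its negation, and D for any observation made in our Universe.

```latex
\frac{P(M \mid D)}{P(\neg M \mid D)}
  = \underbrace{\frac{P(D \mid M)}{P(D \mid \neg M)}}_{\text{Bayes factor}}
    \times \frac{P(M)}{P(\neg M)}
```

If the theory accommodates every possible D about as well as its rivals do, then P(D | M) is approximately equal to P(D | \neg M), the Bayes factor stays close to 1, and the posterior odds remain close to the prior odds whatever is observed.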

📈 Meta-Analysis and the Problem of Multiple Universes in Statistics

The term "multiverse" is also used in contemporary statistics, but in a completely different context. "Multiverse analysis" is a method for investigating result robustness to various analytical decisions (S003).

Researchers test whether conclusions remain stable across different data processing methods, variable selections, and analytical approaches. This statistical "multiverse" reflects a fundamental problem: with multiple possible analytical paths, researchers can (consciously or not) select those yielding desired results.

This connects to science's reproducibility crisis. Paradoxically, the multiplicity problem in statistical analysis is conceptually similar to the multiverse problem in cosmology: when too many possibilities exist, it's difficult to determine what's real.
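The statistical sense of "multiverse analysis" can be sketched in a few lines. This is an illustrative toy, not the procedure of any particular study: the synthetic data contain no true group difference, and the analytical choices (outlier cutoffs, mean versus median, sample size) are hypothetical examples of decisions a researcher might defend.

```python
import itertools
import random
import statistics

random.seed(1)  # fixed seed so the sketch is repeatable

# Synthetic data with NO true difference between groups (by construction).
group_a = [random.gauss(0.0, 1.0) for _ in range(40)]
group_b = [random.gauss(0.0, 1.0) for _ in range(40)]

# Hypothetical, individually defensible analytical choices.
outlier_cutoffs = [None, 3.0, 2.5, 2.0]
center_functions = {"mean": statistics.mean, "median": statistics.median}
sample_sizes = [20, 30, 40]

def trim(values, cutoff):
    """Drop values whose absolute size exceeds the cutoff (None keeps all)."""
    if cutoff is None:
        return values
    return [v for v in values if abs(v) <= cutoff]

# Run every combination of choices: the "multiverse" of analyses.
results = []
for cutoff, (name, center), n in itertools.product(
        outlier_cutoffs, center_functions.items(), sample_sizes):
    a = trim(group_a[:n], cutoff)
    b = trim(group_b[:n], cutoff)
    results.append(((cutoff, name, n), center(a) - center(b)))

effects = [effect for _, effect in results]
print(len(results), "specifications")
print("smallest estimated difference:", min(effects))
print("largest estimated difference:", max(effects))
```

Reporting the whole spread of estimates, rather than the single most favorable specification, is what makes the selection problem visible.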

Mechanisms of Causality vs. Correlation — Why Observed Patterns Don't Prove the Existence of Unobservable Universes

The fundamental problem with multiverse theory is the logical gap between observed patterns in our Universe and conclusions about the existence of other universes. This is a classic case of confusing correlation with causality, compounded by the impossibility of directly observing the supposed cause.

🔁 The Problem of Underdetermination of Theory by Data

In philosophy of science, there exists the principle of underdetermination of theory by data: any finite set of empirical data is compatible with an infinite number of different theories (S003). For any observation, multiple explanations can be constructed that equally fit the data but make different claims about unobservable aspects of reality.

The multiverse is an extreme case of this problem. All observations supposedly supporting the multiverse (fine-tuning of constants, success of inflation theory, quantum phenomena) are equally compatible with alternative explanations that don't require other universes (S001).

Fine-tuning could be explained by an unknown fundamental theory that makes the constants necessary — without invoking the multiverse.

🧷 The Anthropic Principle as Explanation or Abandonment of Explanation?

The anthropic principle states: we observe this particular universe with these constants because only in such a universe can observers exist. This sounds like an explanation, but it's actually a tautology.

We cannot observe a universe in which observers are impossible — this is a logically necessary truth that doesn't require a multiverse (S007). The anthropic principle becomes explanatory only in combination with the multiverse: if multiple universes exist with different constants, then anthropic selection explains why we're in this particular one.

But this is circular logic: the multiverse justifies the anthropic explanation, and the anthropic explanation serves as an argument for the multiverse.

⚙️ Confounders and Alternative Explanations

In causal analysis, a confounder is a variable that affects both the supposed cause and the effect, creating a false correlation. In the case of the multiverse, a potential confounder is our incomplete understanding of fundamental physics.

Perhaps there exists a deeper theory that explains the values of physical constants, quantum phenomena, and inflation without requiring a multiverse (S001). This hypothetical theory would be a confounder, obscuring the true cause of observed patterns.

- Observed pattern (fine-tuning, quantum anomalies)

- Supposed cause (multiverse)

- Hidden confounder (unknown fundamental theory)

- Alternative explanation (constants derive from deeper theory)
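The general mechanism of confounding, where a hidden variable produces a correlation between two quantities that do not influence each other, can be shown with a short simulation. This is a generic statistical sketch under assumed Gaussian noise, not a model of cosmology; the variable names `z`, `x`, and `y` and the helper `pearson` are chosen for illustration.

```python
import random

random.seed(42)  # fixed seed so the sketch is repeatable

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

N = 5000
z = [random.gauss(0, 1) for _ in range(N)]    # hidden confounder
x = [zi + random.gauss(0, 1) for zi in z]     # depends on z, not on y
y = [zi + random.gauss(0, 1) for zi in z]     # depends on z, not on x

r_xy = pearson(x, y)  # clearly positive although x and y never interact

# Subtract the confounder's contribution: only independent noise remains.
x_resid = [xi - zi for xi, zi in zip(x, z)]
y_resid = [yi - zi for yi, zi in zip(y, z)]
r_resid = pearson(x_resid, y_resid)

print("correlation of x and y:", round(r_xy, 3))
print("correlation after removing z:", round(r_resid, 3))
```

In the simulation the confounder can be measured and removed. The difficulty in the multiverse case is that neither the supposed cause nor the hypothetical deeper theory is available for that kind of check.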

🎯 The Distinction Between Explanation and Relabeling

The multiverse often functions as a relabeling of the problem rather than its solution. Instead of explaining why constants have these specific values, the theory says: "Because multiple universes exist with different values."

But this doesn't explain why this particular set of universes exists, why these specific laws of inflation operate, why the probability distribution of universes has this particular form. Each answer generates a new question, pushing the fundamental mystery one level higher.

- Explanation: reduction of the unknown to the known or to more fundamental principles; enables new predictions.

- Relabeling: replacement of one mystery with another; doesn't generate new testable consequences; stops investigation.

📊 The Problem of Multiple Hypotheses

For any observed pattern, an infinite number of unobservable causes can be constructed to explain it. The multiverse is one of them, but not the only one and not the simplest.

| Observation | Multiverse Explanation | Alternative Explanation | Testability |
|---|---|---|---|
| Fine-tuning of constants | Anthropic selection in multiverse | Unknown theory making constants necessary | Both unfalsifiable |
| Success of inflation theory | Inflation generates multiple universes | Inflation is local process in one universe | Both compatible with data |
| Quantum superpositions | Decoherence in parallel branches | Wave function collapse or other interpretation | Experimentally indistinguishable |

The key question: if two hypotheses equally explain all observed data and both are unfalsifiable, which is more scientific? Answer: neither. Both pass into the realm of philosophy and metaphysics, because evidence can favor one hypothesis over another only if the data could, in principle, distinguish between them.

🔍 Why Correlation Doesn't Prove Causality in the Context of the Unobservable

The classic rule: correlation doesn't prove causality. But in the case of the multiverse, the situation is even more critical — we have a correlation between observable patterns and a hypothesis about unobservable entities that are fundamentally inaccessible to verification.

This means that even if we found a perfect correlation between all known physical phenomena and multiverse predictions, it still wouldn't prove the existence of other universes. Establishing a causal connection requires not only correlation but also a mechanism and the ability to manipulate variables, and the last two are unavailable here (S001).

The multiverse explains the observable not because it's true but because it's flexible enough to accommodate any data.