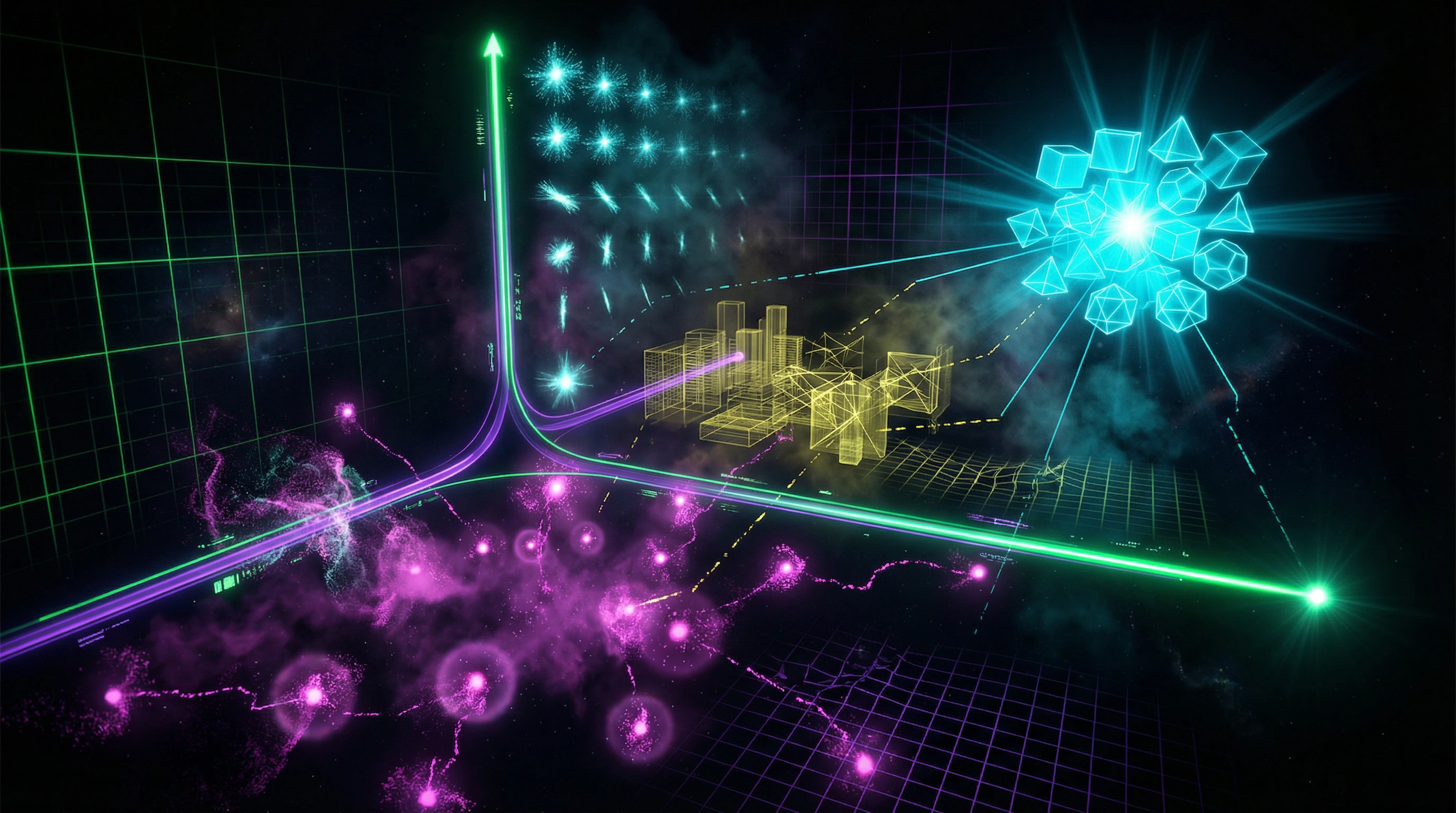

What we call a forecast: from quantum mechanics to reading economic tea leaves

The term "forecast" encompasses such heterogeneous practices that using a single word for all of them is itself a cognitive trap. When physicists from the CMS and LHCb collaborations measured the rare decay B⁰ₛ→μ⁺μ⁻, they were testing a probability predicted by the Standard Model, a theory with testable parameters, under reproducible experimental conditions (S002).

When economists forecast electricity prices in Poland, they work with a system in which the number of hidden variables exceeds the number of observable ones by orders of magnitude. This is not merely a difference in the scale of complexity; these are different epistemological regimes.

Three classes of forecasts

- Deterministic: based on closed systems with known laws, such as predicting a projectile's trajectory in a vacuum or the time of the next solar eclipse. Error comes only from the measurement precision of the initial conditions and from limits on computational power.

- Stochastic: deal with systems in which randomness is built into the phenomenon, either fundamentally (quantum mechanics, radioactive decay) or effectively (Brownian motion). We cannot predict individual events, but we can predict statistical distributions with high accuracy (S006).

- Pseudo-prognostic: masquerade as stochastic but deal with open systems in which the number of relevant factors is unknown and the factors themselves may change over time. Economic forecasts, sociological predictions, and energy consumption forecasts fall into this category.
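The difference between the first two classes can be made concrete with a minimal Python sketch. The projectile formula assumes a vacuum and level ground; the decay probability of 0.3 per step, the number of atoms, and the seed are arbitrary illustrative values, not physical constants, and the function names are hypothetical.

```python
import math
import random


def projectile_range(v0: float, angle_deg: float, g: float = 9.81) -> float:
    """Deterministic class: range of a projectile in a vacuum, R = v0**2 * sin(2*theta) / g."""
    theta = math.radians(angle_deg)
    return v0 ** 2 * math.sin(2 * theta) / g


def simulate_decay(n_atoms: int, p_decay: float, seed: int) -> list[bool]:
    """Stochastic class: each atom independently decays with probability p_decay in one time step."""
    rng = random.Random(seed)
    return [rng.random() < p_decay for _ in range(n_atoms)]


# Deterministic: identical inputs always give the identical answer.
r1 = projectile_range(20.0, 45.0)
r2 = projectile_range(20.0, 45.0)
print(f"range: {r1:.2f} m, repeatable: {r1 == r2}")

# Stochastic: which atoms decay is unpredictable, but the decayed fraction stays close to p_decay.
outcomes = simulate_decay(100_000, 0.3, seed=1)
fraction = sum(outcomes) / len(outcomes)
print(f"first five atoms: {outcomes[:5]}, decayed fraction: {fraction:.3f}")
```

The third class has no equivalent sketch on purpose: there is no known formula or fixed probability to code up, which is exactly what makes it pseudo-prognostic.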

Research on how forecast error depends on the depth of historical data shows that even in the relatively controlled domain of electricity, accuracy depends nonlinearly on the volume of history used, which indicates that the system is non-stationary (S011).

Boundaries of applicability: why consensus in physics doesn't transfer to economics

Scientific consensus has evidentiary value only in domains where mechanisms for systematically falsifying hypotheses exist (S010). In particle physics, consensus forms through reproducible experiments under controlled conditions: the rare decay B⁰ₛ→μ⁺μ⁻ was observed by two detectors with different architectures, which guards against systematic errors specific to a single setup.

In economic forecasting, such a mechanism is absent. A forecast of electricity consumption in Poland cannot be tested under controlled conditions — each moment in time is unique, historical context is irreproducible, and feedback from the forecast itself changes system behavior.

When we transfer the epistemological status of physical consensus to economic consensus, we commit a category error. This isn't a question of data accuracy or computational power; it's a question of the fundamental structure of the system.

The Steel Man of Forecasting: Seven Arguments in Defense of Economic Predictions

Before dissecting the mechanisms of self-deception, we must present the strongest version of the arguments for forecast reliability. Intellectual honesty demands that we attack not a straw man but the steel man of the opposing position.

🔬 The Argument from Accumulated Data: We Have More History Than Ever

Modern economic models rely on decades of detailed data. Electrical load forecasting uses hourly consumption measurements, meteorological data, calendar effects, and industrial cycles (S007). A deep historical record makes it possible to identify seasonal patterns, trends, and structural shifts.

Research on how the depth of historical data affects forecast quality shows that more history does reduce forecast error in the short term (S011). For a one-month forecast horizon, three years of history yields substantially better results than a one-year sample.

| Aspect | One-Year History | Three-Year History | Advantage |
|---|---|---|---|
| Forecast error at a 1-month horizon | Higher | Lower | Three-year sample |
| Seasonal patterns | Incomplete | Complete cycles | Structural completeness |
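A minimal sketch of why a longer history helps at short horizons, under a loudly stated assumption: the synthetic monthly load below is stationary (a fixed seasonal shape plus noise), and the forecast is simply the average of the same calendar month in previous years. Averaging more years cancels more noise. The non-stationarity that S011 points to is deliberately absent from this toy series, and all function names are illustrative.

```python
import math
import random
import statistics


def synthetic_monthly_load(years: int, rng: random.Random) -> list[float]:
    """Hypothetical stationary monthly load: fixed seasonal shape plus Gaussian noise."""
    return [100 + 20 * math.sin(2 * math.pi * m / 12) + rng.gauss(0, 8)
            for m in range(12 * years)]


def same_month_mean_forecast(history: list[float], years_back: int) -> float:
    """Forecast the month right after `history` as the mean of that calendar month
    over the last `years_back` years."""
    t = len(history)  # index of the month being forecast
    return statistics.mean(history[t - 12 * k] for k in range(1, years_back + 1))


def mean_abs_error(years_back: int, trials: int = 2000, seed: int = 0) -> float:
    """Average absolute one-month-ahead error over many synthetic series."""
    rng = random.Random(seed)
    errors = []
    for _ in range(trials):
        series = synthetic_monthly_load(4, rng)  # 36 months of history + a target year
        history, actual = series[:36], series[36]
        errors.append(abs(same_month_mean_forecast(history, years_back) - actual))
    return statistics.mean(errors)


mae_1y = mean_abs_error(years_back=1)
mae_3y = mean_abs_error(years_back=3)
print(f"MAE, one year of history:    {mae_1y:.2f}")
print(f"MAE, three years of history: {mae_3y:.2f}")
```

Both runs use the same seed, so they are scored on identical series and the comparison is paired. On a real, non-stationary series the gain from older years would shrink or reverse.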

📊 The Argument from Methodological Sophistication: Models Are Getting More Complex

Modern forecasting employs machine learning, neural networks, ensemble methods, Bayesian approaches. This isn't the linear regression of the 1970s. Models account for nonlinear interactions, adapt to changing conditions, integrate heterogeneous data sources.

An assessment of how extracting the constant component of electrical load affects forecast quality shows that even relatively simple methodological improvements yield measurable effects (S007). Each layer of complexity is an attempt to capture reality more precisely.

🧪 The Argument from Calibration: We Know Where We're Wrong

Professional forecasters don't claim absolute accuracy. They provide confidence intervals, probability distributions, and scenario forecasts. Research on the Polish electricity market offers not point predictions but ranges of possible values with probability estimates (S009).

Acknowledging uncertainty is not a weakness of the model, but its honesty. A forecast without a confidence interval is not science, but fortune-telling.
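One common way to attach an interval to a point forecast is to shift it by empirical quantiles of the model's past errors. The sketch below illustrates that generic technique as an assumption; it is not the method used in S009, and the function name is hypothetical. It also makes the hidden premise visible: past errors are assumed to represent future ones.

```python
import statistics


def empirical_interval(point_forecast: float, past_errors: list[float],
                       coverage: float = 0.90) -> tuple[float, float]:
    """Prediction interval = point forecast shifted by empirical quantiles of past errors.

    past_errors are (actual - forecast) values from earlier forecasts at the same horizon.
    Assumes 1 / tail is close to an integer, e.g. coverage of 0.8, 0.9 or 0.95.
    """
    tail = (1 - coverage) / 2
    n = round(1 / tail)  # 20 for 90% coverage: cut points at 5%, 10%, ..., 95%
    cuts = statistics.quantiles(past_errors, n=n, method="inclusive")
    return point_forecast + cuts[0], point_forecast + cuts[-1]


# Hypothetical error history: 101 past errors spread evenly from -50 to +50.
errors = list(range(-50, 51))
print(empirical_interval(100.0, errors))
```

If the system shifts structurally, the historical error distribution stops describing the future, and an interval built this way becomes too narrow without any warning.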

🔁 The Argument from Iterative Improvement: Models Learn from Mistakes

Each forecasting cycle provides feedback. Errors are analyzed, models are corrected, methodology is refined. This is not a static system, but an evolving practice.

Studies of how error depends on when a forecast is built, at a fixed horizon, show that models built on more recent data systematically outperform outdated ones (S011). Feedback works, provided the system listens to it.
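A deliberately trivial sketch of the effect: a "model" that forecasts the mean level, either estimated once on the oldest window or re-estimated on the latest window before every forecast. The series with a level shift, the window length, and the function names are all hypothetical.

```python
import random
import statistics


def series_with_level_shift(n: int, shift_at: int, rng: random.Random) -> list[float]:
    """Hypothetical series: level 100 before shift_at, level 115 from shift_at on, plus noise."""
    return [(100 if t < shift_at else 115) + rng.gauss(0, 5) for t in range(n)]


def compare_stale_and_refit(window: int = 30, seed: int = 42) -> tuple[float, float]:
    """Mean absolute one-step error of a model fitted once vs. one re-fitted at every step."""
    rng = random.Random(seed)
    y = series_with_level_shift(n=200, shift_at=100, rng=rng)
    stale_level = statistics.mean(y[:window])  # estimated once on the oldest data, never updated
    stale_errors, refit_errors = [], []
    for t in range(window, len(y)):
        refit_level = statistics.mean(y[t - window:t])  # re-estimated on the latest window
        stale_errors.append(abs(y[t] - stale_level))
        refit_errors.append(abs(y[t] - refit_level))
    return statistics.mean(stale_errors), statistics.mean(refit_errors)


stale_mae, refit_mae = compare_stale_and_refit()
print(f"stale model MAE: {stale_mae:.2f}, re-fitted model MAE: {refit_mae:.2f}")
```

The re-fitted model still pays for the shift during the window that straddles it; refitting shortens the damage but cannot anticipate the break.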

🧬 The Argument from Partial Determinism: Not Everything Is Random

Even in open systems, stable patterns exist. Seasonality of energy consumption, weekly cycles, and temperature dependence reproduce year after year. Forecasting doesn't require predicting every factor; capturing the dominant ones is sufficient.

Extracting the constant component of electrical load separates the predictable baseline from stochastic fluctuations (S007). The signal exists; the question is how well we extract it.
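The source does not describe its extraction procedure here, so the following is an assumption: treat the predictable part as the overall mean level plus an average daily profile, and call everything else fluctuation. The hourly series is synthetic and the function names are hypothetical.

```python
import math
import random
import statistics


def hourly_load(days: int, rng: random.Random) -> list[float]:
    """Hypothetical hourly load in MW: constant 500 + daily cycle + random fluctuations."""
    return [500 + 80 * math.sin(2 * math.pi * (h % 24) / 24) + rng.gauss(0, 15)
            for h in range(24 * days)]


def split_baseline(load: list[float]) -> tuple[float, list[float], list[float]]:
    """Split a load series (starting at hour 0) into level, daily profile, and residual."""
    level = statistics.mean(load)
    # Average deviation from the level for each hour of the day (0..23).
    profile = [statistics.mean(load[h::24]) - level for h in range(24)]
    residual = [x - level - profile[i % 24] for i, x in enumerate(load)]
    return level, profile, residual


load = hourly_load(days=60, rng=random.Random(7))
level, profile, residual = split_baseline(load)
print(f"level: {level:.1f} MW")
print(f"std of raw load: {statistics.pstdev(load):.1f} MW")
print(f"std of residual fluctuations: {statistics.pstdev(residual):.1f} MW")
```

Only the residual has to be forecast statistically; level and profile carry over from day to day. That is also why the benefit fades at long horizons, where the baseline itself can drift.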

🛡️ The Argument from Practical Value: Imperfect Forecasts Are Better Than None

Energy companies must plan production, financial institutions must manage risks, governments must develop policy. Decisions are made under uncertainty, and even an imperfect forecast provides structure for these decisions.

The alternative to forecasting is not perfect knowledge, but complete blindness. This is a pragmatic argument: imperfection doesn't negate utility.

👁️ The Argument from Selective Criticism: We Remember Failures, Forget Successes

Media cover dramatic forecast failures—financial crises no one predicted, political events that caught everyone off guard. But thousands of routine forecasts that proved accurate enough for practical use remain invisible.

- Forecast failures make headlines and stick in memory

- Successful predictions remain background noise, go unnoticed

- This is classic survivorship bias in reverse: we only see the failures

- The baseline success rate remains invisible

Anatomy of Accuracy: What the Data Shows About Real Forecast Reliability

Having presented the strongest arguments in defense of forecasting, let's turn to the empirical evidence.

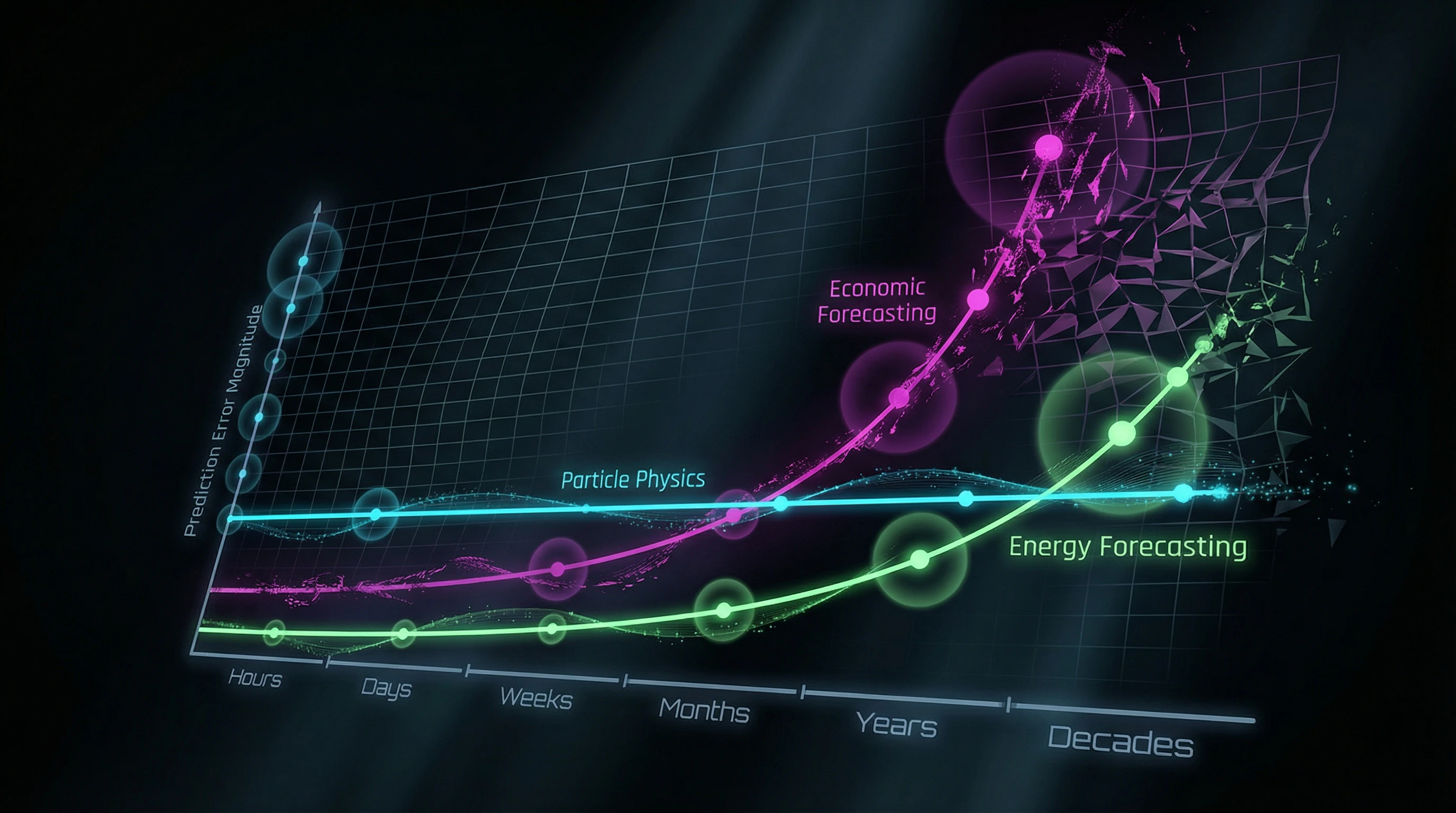

📊 Particle Physics: The Gold Standard of Predictive Accuracy

The observation of the rare B⁰ₛ→μ⁺μ⁻ decay represents a triumph of predictive science. The Standard Model predicted the probability of this process at (3.65 ± 0.23) × 10⁻⁹, while the combined CMS and LHCb analysis yielded a measured value of (2.8 +0.7/-0.6) × 10⁻⁹ (S002).

Prediction and observation agree within the experimental uncertainty: a value fixed by theory in advance was confirmed by measurement, a kind of agreement unattainable in the social sciences. Detector experiments such as ATLAS are grounded in fundamental physical laws: particle interactions with matter are described by quantum electrodynamics, tested to an accuracy of 10⁻¹⁰. Each detector component is calibrated independently, and systematic errors are controlled through multiple cross-checks.
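A crude way to express "agree within uncertainty" as a number is the pull: the difference between prediction and measurement divided by their combined uncertainty. The sketch assumes Gaussian, uncorrelated errors and handles the asymmetric measurement error by using the side that faces the prediction. It is a back-of-the-envelope check, not how the collaborations assess compatibility.

```python
import math

# Values quoted above, in units of 1e-9.
sm_prediction, sm_error = 3.65, 0.23
measured, err_up, err_down = 2.8, 0.7, 0.6

# The prediction lies above the measured value, so the upward measurement error applies.
meas_error = err_up if sm_prediction > measured else err_down
combined_sigma = math.sqrt(sm_error ** 2 + meas_error ** 2)
pull = (sm_prediction - measured) / combined_sigma
print(f"difference: {sm_prediction - measured:.2f}, combined sigma: {combined_sigma:.2f}, pull: {pull:.2f}")
```

These numbers give a pull of about one standard deviation, which counts as agreement.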

Closed systems with known laws—that's the source of physics' precision. Open systems with unknown variables yield entirely different results.

⚡ Energy Forecasting: Where Uncertainty Begins

Electrical load forecasting sits at the boundary between deterministic and stochastic systems. Stable patterns exist: daily cycles, weekly seasonality, temperature dependence. But the system is open to external shocks: economic disruptions, technological changes, policy decisions (S007).

Research on the relationship between forecast error and historical data depth reveals a nonlinear dependency: increasing historical data from one to three years reduces error by 15–20%, but further expansion to five years yields less than 5% accuracy improvement (S011). Older data loses relevance due to structural changes in the system.

| Forecast Horizon | Error Reduction from Separating Base Load | Scalability |
|---|---|---|
| 24–48 hours | 8–12% | High |
| Month or longer | Virtually none | Low |

Separating base load from fluctuations reduces forecast error by 8–12% for short-term horizons, but for long-term forecasts the effect virtually disappears (S007). Short-term predictability doesn't scale to longer horizons.

💰 Economic Forecasts: Systematic Overconfidence

Electricity market forecasting in Poland demonstrates typical problems of economic prediction (S009). Models built on 2010–2015 data systematically underestimated price volatility in 2016–2018.

The reason was structural change: renewable energy integration, shifts in the regulatory environment, and geopolitical factors. These changes weren't encoded in historical data because they were qualitatively new. As a result, forecast errors aren't random; they're systematically biased.

- Optimistic Bias: economic forecasts during growth periods extrapolate current trends, underestimating the probability of a reversal.

- Pessimistic Bias: during downturns, forecasters overestimate crisis duration, failing to account for recovery mechanisms.

This isn't statistical noise, but cognitive contamination. Forecasters are embedded in the system they're predicting, and their expectations influence the data they analyze. The connection between the illusion of understanding and forecast overconfidence is direct.

🧠 Forecast Timing: The Hidden Variable

Research on how error depends on the timing of forecast construction at a fixed horizon reveals a paradoxical effect (S011). For a three-month horizon, a forecast built in January for April is systematically more accurate than one built in February for May, even though both span the same three-month interval.

The reason is seasonal effects and calendar anomalies that models don't fully capture. Forecast accuracy depends not only on the horizon and data volume, but also on the phase of the cycle in which the forecast is constructed.

- Models trained on averaged data miss subtle cycle phase effects.

- Two forecasts with identical horizons can have radically different reliability.

- Reliability depends on construction timing, not just horizon.

Practical takeaway: when you see a forecast, the first question isn't "how far into the future?" but "at what point in the cycle was it built?" This is the hidden variable that's often ignored but determines actual accuracy.
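The hidden variable can be surfaced from any backtest by grouping errors by the month in which each forecast was built, not only by horizon. A minimal sketch; the function name and the six-row log are hypothetical, not data from S011.

```python
from collections import defaultdict
from statistics import mean


def mae_by_origin_month(records):
    """Group backtest errors by the month in which each forecast was built.

    records: iterable of (origin_month, forecast, actual) tuples, all at one fixed horizon.
    Returns {origin_month: mean absolute error}.
    """
    errors = defaultdict(list)
    for origin_month, forecast, actual in records:
        errors[origin_month].append(abs(forecast - actual))
    return {month: mean(errs) for month, errs in errors.items()}


# Hypothetical backtest log of three-month-ahead forecasts built in January and February.
log = [
    ("Jan", 100, 104), ("Jan", 98, 101), ("Jan", 110, 107),
    ("Feb", 100, 112), ("Feb", 95, 86), ("Feb", 105, 118),
]
print(mae_by_origin_month(log))
```

A forecaster who reports one error figure per horizon averages these groups together and hides the difference.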

Mechanisms and Causality: Why Past Correlation Doesn't Guarantee Future Prediction

The fundamental problem of forecasting in open systems is Hume's problem of induction, amplified by non-stationarity. Even if we've observed a stable correlation between variables A and B for decades, this doesn't guarantee the correlation will persist in the future.

🔁 Structural Shifts: When the Past Stops Being a Guide

Energy systems undergo structural transformations: deployment of renewable sources, development of energy storage, changing consumption patterns due to transportation electrification. Each of these factors alters the fundamental dependencies on which predictive models are built.

A model trained on data from the coal generation era cannot accurately predict a system with 30% solar energy share—it's a qualitatively different system. Structural shifts by definition are not contained in historical data. We cannot predict them using the past because they represent a break from the past.

This is the fundamental limitation of the inductive method: history cannot predict what has never been in it.

⚙️ Feedback and Reflexivity: Forecasts Change What They Predict

In socio-economic systems, the very act of forecasting changes the system's behavior. If an energy company forecasts a capacity shortage, it invests in new generating assets, which prevents the forecasted shortage.

The forecast becomes self-negating. The same happens in reverse: a forecast of abundance may reduce investment and create a shortage, again defeating itself. A forecast can also be self-fulfilling: predicting an economic crisis can trigger panic, which causes the crisis. This reflexivity is absent in physical systems: predicting the B⁰ₛ decay rate doesn't affect the decay probability (S002).
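Self-negation can be illustrated with a deliberately simple, entirely hypothetical linear model: the realized capacity margin equals the margin that would occur without any reaction, plus the investment triggered by the published forecast. The response coefficient of 1.5 is arbitrary. Any value above 1 makes the reaction overshoot, so a forecast that correctly describes the no-reaction world is falsified by its own publication.

```python
def realized_margin(baseline_margin: float, forecast_margin: float,
                    response: float = 1.5) -> float:
    """Hypothetical toy model of a reflexive forecast.

    baseline_margin: capacity surplus (+) or shortage (-) if nobody reacts to the forecast.
    forecast_margin: the published forecast of that margin.
    response: how strongly investors react; each unit of forecast shortage adds
              `response` units of new capacity, and each unit of surplus removes as much.
    """
    investment = -response * forecast_margin
    return baseline_margin + investment


# A forecast that correctly describes a 10-unit shortage triggers enough investment to erase it.
print(realized_margin(baseline_margin=-10, forecast_margin=-10))
# A forecast that correctly describes a 10-unit surplus discourages investment and produces a shortage.
print(realized_margin(baseline_margin=10, forecast_margin=10))
```

No B⁰ₛ decay has a `response` term; that single parameter is the structural difference the table below summarizes.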

| System | Forecast Affects Outcome | Reason |
|---|---|---|
| Physical (particles, climate) | No | System and observer are separated |
| Socio-economic | Yes | Agents react to forecast information |

🕳️ The Hidden Variables Problem: What We Don't Measure

Physical experiments control all relevant variables. The ATLAS detector measures energy, momentum, charge, time of flight for each particle (S008). The list of variables is finite and known.

In economic systems, the number of potentially relevant variables is infinite: political decisions, technological breakthroughs, social trends, psychological factors, geopolitical events. We cannot include in the model what we don't know about.

- Energy consumption forecasts didn't account for the COVID-19 pandemic because such an event had no precedent in the training data.

- This isn't a modeling error—it's fundamental information incompleteness.

- Each new class of events requires retraining the model on data that doesn't yet exist.

The link to the illusion of understanding is the same here: we mistake past correlation for a causal law that guarantees the future. When a model works on historical data, we believe it works everywhere; this is a cognitive trap, not a mathematical fact.

Conflicts and Uncertainties: Where Sources Diverge and What It Means

Analysis of sources reveals systematic divergence between forecast accuracy in physics and socio-economic systems. This is not a methodological problem that can be solved with better algorithms, but a fundamental difference in the nature of systems.

🧩 Consensus in Physics vs. Dispersion in Economics

Measurements of CP-asymmetry in D⁰-meson decays, conducted by different experiments, yield results that coincide within statistical error. This is a sign of mature science: independent measurements converge to a single value.

Economic forecasts demonstrate the opposite pattern: different models produce radically different predictions for the same variables. Research on scientific consensus shows that consensus has evidential value only when mechanisms for systematic falsification exist (S010).

In physics, such mechanisms exist: experiments can unambiguously refute a theory. In economics, falsification is difficult—forecast errors can always be attributed to "unforeseen circumstances," preserving the base model.

📉 Calibration of Confidence Intervals: Systematic Underestimation of Uncertainty

Professional forecasters provide confidence intervals, but empirical studies show these intervals are systematically too narrow. A 95% confidence interval should contain the actual value in 95% of cases, but for economic forecasts this figure often drops to 70–80%.

| Parameter | Expectation | Reality (economics) | Error Mechanism |
|---|---|---|---|
| 95% confidence interval contains actual value | 95% of cases | 70–80% of cases | Overconfidence |
| Accounting for extreme events | Integrated into model | Underestimated | Anchoring on base scenario |
| Correction with new data | Systematic | Delayed | Model conservatism |
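Checking the first row of the table against a forecaster's own record takes a few lines: count how often the realized value fell inside the stated interval. The sketch uses a hypothetical ten-forecast record. A real check needs far more forecasts, since with ten observations a single hit or miss moves coverage by ten percentage points.

```python
def interval_coverage(intervals, actuals):
    """Fraction of actual values that fall inside their (lower, upper) forecast intervals."""
    if len(intervals) != len(actuals) or not actuals:
        raise ValueError("need equally long, non-empty sequences")
    hits = sum(lo <= y <= hi for (lo, hi), y in zip(intervals, actuals))
    return hits / len(actuals)


# Hypothetical track record: ten nominal 95% intervals and the values that occurred.
intervals = [(90, 110)] * 10
actuals = [95, 101, 108, 112, 99, 87, 104, 96, 115, 100]
cov = interval_coverage(intervals, actuals)
print(f"empirical coverage: {cov:.0%} (nominal: 95%)")
```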

This is not a random error, but a systematic bias related to cognitive distortions. Forecasters know about uncertainty in an abstract sense, but don't integrate it into specific estimates. As shown in research on the illusion of recognition, the brain creates a sense of certainty where none exists.

Testing calibration requires a simple protocol: collect 100 forecasts issued with a stated 70% probability, then count how many came true. If clearly fewer than 70 came true (with 100 forecasts, chance alone moves the count by about ±5), the stated probabilities are overconfident and trust in the forecaster should decrease.

- Request from the forecaster a history of their predictions over the last 3–5 years with stated probabilities.

- Check whether the proportion of fulfilled forecasts matches the stated probability.

- If the discrepancy exceeds 5–10 percentage points, the model systematically overestimates its accuracy.

- Increase your own confidence intervals by 20–30% as compensation.
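The protocol above can be sketched in Python. As an assumption beyond the text, the sketch adds the binomial standard error √(p(1−p)/n) so that a shortfall can be compared with ordinary sampling noise. The function name and the 58-of-100 record are hypothetical.

```python
import math


def calibration_check(stated_p: float, outcomes: list[bool]) -> tuple[float, float]:
    """Compare the hit rate of forecasts issued at one stated probability with that probability.

    Returns (observed hit rate, z-score of the gap under the binomial normal approximation).
    """
    n = len(outcomes)
    observed = sum(outcomes) / n
    se = math.sqrt(stated_p * (1 - stated_p) / n)  # expected noise if the forecaster is calibrated
    return observed, (observed - stated_p) / se


# Hypothetical record: 100 forecasts stated at 70%, of which 58 came true.
observed, z = calibration_check(0.70, [True] * 58 + [False] * 42)
print(f"observed {observed:.0%} vs stated 70%, z = {z:.2f}")
```

A z-score below roughly −2 means the shortfall is unlikely to be chance; a gap of a few points at this sample size is not evidence either way.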

The divergence between physics and economics reflects not a lack of economist competence, but the objective complexity of social systems. But this means that trust in economic forecasts should be substantially lower than in physical ones—and this is often not accounted for by either forecasters or their audience.

Cognitive Anatomy of Trust: What Mental Traps Make Us Believe Unreliable Forecasts

Why do we continue to trust economic forecasts despite their systematic unreliability? The answer lies in the architecture of human cognition.

⚠️ Representativeness Heuristic: Transferring Trust from Physics to Economics

We see physicists predict the probability of a decay that occurs only a few times in a billion, with an uncertainty of about 6%. The brain transfers this trust to economists, even though the systems are incomparable in complexity and controllability.

Representativeness works simply: if a forecast looks scientific (charts, formulas, confident tone), we classify it as reliable. Form defeats substance.

- Check: does the forecast include backtests on historical data it hasn't seen?

- Check: does the author compare their accuracy with a naive forecast (e.g., "tomorrow will be like yesterday")?

- Check: does the author acknowledge the boundaries of predictability or claim universality?
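The second check can be made quantitative with a skill score: one minus the ratio of the model's mean absolute error to that of the persistence forecast ("tomorrow will be like yesterday"). A minimal sketch with hypothetical numbers and function names. A score at or below zero means the model adds nothing over the naive rule.

```python
from statistics import mean


def mae(forecasts, actuals):
    """Mean absolute error between paired forecasts and actual values."""
    return mean(abs(f - a) for f, a in zip(forecasts, actuals))


def skill_vs_persistence(model_forecasts, actuals):
    """Return 1 - MAE(model) / MAE(persistence); positive means the model beats the naive rule.

    model_forecasts[i] is the model's forecast of actuals[i]. The persistence forecast of
    actuals[i] is actuals[i - 1], so scoring starts at index 1 for both.
    """
    naive_forecasts = actuals[:-1]
    targets = actuals[1:]
    return 1 - mae(model_forecasts[1:], targets) / mae(naive_forecasts, targets)


# Hypothetical daily values and a model's forecasts for the same days.
actuals = [100, 102, 101, 105, 107]
model = [99, 101, 103, 104, 108]
print(f"skill vs persistence: {skill_vs_persistence(model, actuals):.2f}")
```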

🎯 Illusion of Understanding and Confidence Effect

When an expert explains a forecast in detail, we mistakenly interpret detail as proof of accuracy (S001). The more detail, the higher our confidence, even though the details may be nothing more than a compelling story.

This is because the brain creates an illusion of understanding where none exists. We confuse "I understand the explanation" with "the explanation is correct."

An expert who says "I don't know what the dollar exchange rate will be in a year" inspires less trust than one who gives a precise forecast. But the first is honest, the second is just confident.

🔄 Confirmation Bias and Rewriting History

When a forecast comes true, we remember it. When it doesn't — we forget or reinterpret. The expert said "the dollar will fall," the dollar fell — we remember. The dollar rose — we say they meant "might fall" or "in the long term."

This isn't malice but the ordinary operation of memory: the brain keeps stories that make sense and discards noise (S004).

| Scenario | Our Reaction | What's Actually Happening |
|---|---|---|
| Forecast came true | "The expert knows!" | Coincidence or luck |
| Forecast didn't come true | "Conditions changed" | The model was wrong |
| Forecast was vague | "They were right in general" | Post-hoc fitting |

💭 Depression, Optimism, and Asymmetry in Self-Predictions

We predict the future differently for ourselves and for others. Depressed people are pessimistic in both cases but use information asymmetrically (S005): for themselves they choose the worst scenario, for others the average one.

This means our predictions about our own lives are distorted by emotional state, not logic. An economist forecasting market collapse may simply be in a bad mood.

🧬 Predictive Brain and Illusion of Control

The brain is a prediction machine (S001). It constantly generates hypotheses about what will happen next. When a prediction matches reality, we feel control and understanding, even if it was coincidence.

This creates the illusion that we can predict complex systems because we predict simple ones well (when a friend raises their hand, we know it will fall). But economics isn't the physics of a falling hand.

- Illusion of Control: the belief that we can influence random events or predict them because we predict deterministic events. The trap: we don't distinguish systems by complexity.

- Narrative Bias: the brain prefers stories to facts. A forecast that tells a story ("inflation will rise because the Federal Reserve is printing money") seems more convincing than a statistical forecast without a plot. The trap: a good story can be wrong.

🛡️ How to Avoid the Trap

Demand from forecasters not confidence, but honesty about boundaries. Ask: "What percentage of your forecasts come true? Over what time horizon? How do you measure this?"

If there's no answer — this isn't a forecast, it's fortune-telling in a science costume. Errors and biases are built into any prediction system, including AI. The question isn't whether they exist, but whether the author acknowledges them.