Anatomy of an information phantom: what happens when a document "exists" only in the query

The query about the Reuters Institute Digital News Report 2025 points to a non-existent source. The available data contains only reports from 2014 and 2015, along with technical documents from 2025 that are unrelated to the Reuters Institute.

None of the 2025 sources contain information about digital news, media consumption, or journalism.

🧩 Three mechanisms behind information phantom formation

- Extrapolation of existing series
  - The Reuters Institute did publish Digital News Reports in 2014 and 2015. The cognitive system automatically completes the pattern: if there is a 2014 and a 2015 edition, there must be a 2025 one. This works as an epistemological trap: the brain fills gaps based on expected sequences.

- Date confusion
  - The presence of technical reports from 2025 creates the illusion that all documents labeled "2025" are connected. Without lateral reading, the mismatch goes unnoticed: the sources come from completely different contexts.

- Premature query
  - The user searches for a document that could theoretically appear in the future but refers to it as if it already exists. The grammar of the query ("Digital News Report 2025") presents an uncertain object as a fact.

🔬 What analysis of available sources reveals: emptiness instead of data

Systematic analysis reveals a complete absence of relevant content. Sources from 2014–2015 describe the media landscape of a decade ago. The 2025 technical reports, published on arXiv, cover multi-agent systems, computer vision, engagement prediction, and plagiarism detection.

| Source | Year | Topic | Relevance to Digital News Report |
|---|---|---|---|
| (S001), (S004), (S007) | 2014–2015 | Media landscape, news | Outdated by 10 years |
| (S003), (S006) | 2025 | Multi-agent systems, image segmentation | None |

🧾 Structure of emptiness: what's missing

Key elements of a digital news report are absent: data on media trust, content consumption statistics, platform distribution analysis, demographic breakdowns, monetization trends, social media influence, the role of artificial intelligence in news production.

Instead of the sought content, the search yields only metadata fragments from academic repositories. They confirm that the old reports and the new technical documents exist, but they contain no answer to the original question.

This is the classic mechanism of an information phantom: the system returns results that appear relevant in form (there's a year, there are documents) but are empty in substance.

Steelman Analysis: Seven Arguments for the Report's Existence — and Why They Don't Work

The Steelman method requires examining the strongest version of the opposing position. Let's apply it to the hypothesis that the Reuters Institute Digital News Report 2025 exists, to understand which arguments might seem convincing and where they break down under scrutiny.

🧩 Argument One: Previous Reports Guarantee Series Continuation

Logic: Reuters Institute published the Digital News Report in 2014 and 2015 (S001, S004, S007), therefore the series must continue.

Counterargument: The existence of two reports doesn't create an obligation to continue the series indefinitely. Many research projects end after several iterations due to changing priorities, funding, or methodology. The absence of reports for 2016–2024 in available sources indicates a series interruption, not its continuation.

🧩 Argument Two: 2025 Technical Reports Confirm Institute Activity

Logic: If 2025 technical reports exist (S003, S006), the institute is active and could have published a news report.

Counterargument: The 2025 technical reports are unrelated to Reuters Institute. (S003) describes the MOASEI competition, organized by independent researchers. (S006) focuses on ocean current segmentation for the AIM 2025 competition. Neither document mentions Reuters Institute or journalism.

- Verify the source author: Reuters Institute or independent researchers?

- Verify the topic: news literacy or technical competitions?

- Verify publication year: does it match the research year?

- Verify accessibility: is the document available through official channels?
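The four checks above amount to a field-by-field comparison between what the query asks for and what a retrieved document actually is. A minimal Python sketch, assuming a hypothetical `DocMeta` record: the S003 values paraphrase its description in this article, and `official_channel` is taken to mean "reachable through the claimed publisher's own channels", which is an assumption rather than a standard field.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DocMeta:
    """Hypothetical, minimal metadata record for a query or a retrieved document."""
    source_id: str
    organization: str
    topic: str
    year: int
    official_channel: bool  # assumption: found via the claimed publisher's own channels


def mismatches(query: DocMeta, candidate: DocMeta) -> list[str]:
    """Return the checklist items on which the candidate fails to match the query."""
    failed = []
    if candidate.organization != query.organization:
        failed.append("author")
    if candidate.topic != query.topic:
        failed.append("topic")
    if candidate.year != query.year:
        failed.append("year")
    if not candidate.official_channel:
        failed.append("accessibility")
    return failed


query = DocMeta("query", "Reuters Institute", "digital news", 2025, True)
s003 = DocMeta("S003", "independent researchers", "multi-agent systems", 2025, False)

print(mismatches(query, s003))  # ['author', 'topic', 'accessibility']
```

Only the year matches, which is exactly the coincidence the phantom feeds on.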

🧩 Argument Three: Absence from Search Doesn't Mean Document Absence

Logic: The document might exist behind a paywall or in a closed repository, or it may not have been indexed by search engines yet.

Counterargument: Reuters Institute is an academic organization that publishes research in open access. Previous 2014 and 2015 reports are available through SSRN, university repositories, and academic journals (S001, S004, S007). If a 2025 report existed, it would be available through the same channels. The absence of traces in academic databases, repositories, and the institute's official website indicates the document's non-existence, not indexing problems.

🧩 Argument Four: User Query Creates Presumption of Existence

Logic: If a user searches for a specific document, they probably saw a reference or mention of it.

Counterargument: Queries are often based on extrapolation, not actual references. The user might have assumed a 2025 report exists based on knowledge of previous reports, or confused the Reuters Institute Digital News Report with other annual media reports (such as the Edelman Trust Barometer or Digital 2025 from We Are Social). Presumption of existence based on a query is a cognitive error, not evidence.

🧩 Argument Five: Source Metadata Contains 2025 Mentions

Logic: If source metadata includes "2025," relevant content must exist.

Counterargument: Source metadata (S003, S006) does contain "2025," but this is the publication year of technical reports unrelated to news. (S003) describes a multi-agent systems competition, (S006) covers image segmentation. Year coincidence doesn't create thematic connection.

🧩 Argument Six: Data Absence Is a Gap in Sources, Not Reality

Logic: Perhaps the sources are incomplete, and the document exists but wasn't included in the sample.

Counterargument: The source sample includes academic repositories (SSRN, university archives), technical archives (arXiv), scientific journals, and open databases. These are standard channels for academic research distribution. If the document isn't found in any of these channels, the probability of its existence approaches zero. A gap in sources might explain the absence of one or two references, but not the complete absence of document traces across all relevant databases.
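The "approaches zero" claim can be made concrete with Bayes' rule. This is a sketch under strong, explicitly hypothetical assumptions: each channel would independently surface an existing document with some hit rate, and a non-existent document never shows up in any channel. The function name and all numbers are illustrative, not measurements.

```python
from math import prod


def posterior_exists(prior: float, hit_rates: list[float]) -> float:
    """P(document exists | no channel returned it), by Bayes' rule.

    Assumes channels miss an existing document independently, and that
    'not found anywhere' is certain when the document does not exist.
    """
    p_all_miss_if_exists = prod(1.0 - p for p in hit_rates)
    numerator = prior * p_all_miss_if_exists
    return numerator / (numerator + (1.0 - prior))


# Hypothetical: a 50/50 prior and four channels (e.g. SSRN, university
# archives, arXiv, journals), each finding an existing report 60% of the time.
print(round(posterior_exists(0.5, [0.6] * 4), 4))  # → 0.025
```

Under these assumptions the posterior falls to about 2.5% with four channels and keeps shrinking as channels are added, but it never reaches exactly zero. If the channels share indexing, their misses are correlated, independence fails, and the posterior stays higher.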

🧩 Argument Seven: Future Publication Could Retroactively Validate the Query

Logic: The document might be published later in 2025 or 2026, making the query correct.

Counterargument: As of February 2026, the document doesn't exist. If it's published in the future, this won't change the fact of its non-existence at the time of the query. Retroactive confirmation doesn't apply to factual statements about the current state of the world. A query about a non-existent document remains incorrect regardless of future events. The scientific method requires checking a claim against the evidence available when it is made, not against hypothetical future events.

Evidence Base: What the Sources Say — and What They Don't

Systematic analysis of available sources reveals a clear pattern: data on the Reuters Institute Digital News Report is limited to 2014–2015, while 2025 documents relate to entirely different research areas.

📊 2014–2015 Sources: What the Old Reports Contain

Source (S001) describes the Spanish version of the Reuters Institute Digital News Report 2014, published in the University of Navarra repository. Source (S004) contains the full version of the Reuters Institute Digital News Report 2015, available through SSRN. Source (S007) presents an academic review of the Digital News Report 2014, published in the journal Digital Journalism.

These sources confirm the existence of decade-old reports but contain no information about more recent publications.

📊 2025 Sources: Technical Reports Unrelated to News

Source (S003) describes the inaugural MOASEI Competition at AAMAS'2025 — a competition on multi-agent systems with open source code. The document is published on arXiv and contains a technical report on competition methods and results.

Source (S006) focuses on the AIM 2025 Rip Current Segmentation Challenge — a task of segmenting ocean currents in images. The report describes the RipVIS dataset and computer vision methods for detecting dangerous currents.

| Source | Document Type | Research Area | Connection to News |
|---|---|---|---|
| (S001), (S004), (S007) | Digital news reports; academic review | Journalism, media | Direct |
| (S003) | Technical report | Multi-agent systems | None |
| (S006) | Technical report | Computer vision | None |
| (S010) | Technical report | Engagement prediction | None |
| (S011) | Technical report | Plagiarism detection | None |

📊 Source Metadata and Structure: What Lies Behind the Titles

Analysis of source metadata reveals two distinct patterns. Sources (S001), (S004), and (S007) contain standard elements of academic publications: author affiliations, publication dates, DOI identifiers or repository links.

Sources (S003), (S006), (S010), and (S011) are published on arXiv, a preprint server widely used in computer science and machine learning. All four documents contain technical descriptions of methods, datasets, and competition results. The metadata confirms these are technical reports, not research in journalism or media.

🧾 Missing Elements: What's Absent from Available Data

- Survey data on media trust
  - A key component of any digital news report. Absent from all 2025 sources.

- News consumption statistics by platform
  - Should include audience distribution across websites, apps, and social media. Not found.

- Audience demographic analysis
  - Age, education, income, geographic distribution. Absent from technical reports.

- Trends in news organization monetization
  - Subscriptions, advertising, sponsorship. Not mentioned in 2025 sources.

- Social media impact on news distribution
  - Role of algorithms, content virality, filter bubbles. Not addressed in available data.

- Role of artificial intelligence in content production and distribution
  - Automated journalism, personalization, recommendations. Unrelated to 2025 technical competitions.

- Cross-country comparative analysis
  - Differences in news consumption between regions. Absent from sources.

The absence of these elements confirms that 2025 sources are unrelated to digital news. These aren't just different documents — they're documents from entirely different disciplines.
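The missing-elements check is a set difference: the elements a digital news report should contain, minus everything the sample covers. A minimal sketch; the element labels shorten the list above, and the per-source topics are a hypothetical coding of each source's description in this article.

```python
# Elements a digital news report is expected to contain (shortened from the list above).
REQUIRED = {
    "media trust survey",
    "consumption by platform",
    "audience demographics",
    "monetization trends",
    "social media impact",
    "AI in news production",
    "cross-country comparison",
}

# Hypothetical coding of what each 2025 source covers, based on its description.
COVERAGE = {
    "S003": {"multi-agent systems"},
    "S006": {"image segmentation"},
    "S010": {"engagement prediction"},
    "S011": {"plagiarism detection"},
}


def missing_elements(required: set[str], coverage: dict[str, set[str]]) -> set[str]:
    """Return the required elements that no source in the sample covers."""
    covered = set().union(*coverage.values())
    return required - covered


gaps = missing_elements(REQUIRED, COVERAGE)
print(len(gaps), "of", len(REQUIRED), "required elements are missing")
```

An empty intersection, rather than a partial one, is what distinguishes documents from a different discipline from an incomplete report.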

The mechanism of illusion becomes obvious: search engines find any documents with the year 2025, and the brain fills gaps with expectations. The result — an epistemological trap where form (year, platform name) substitutes for content.

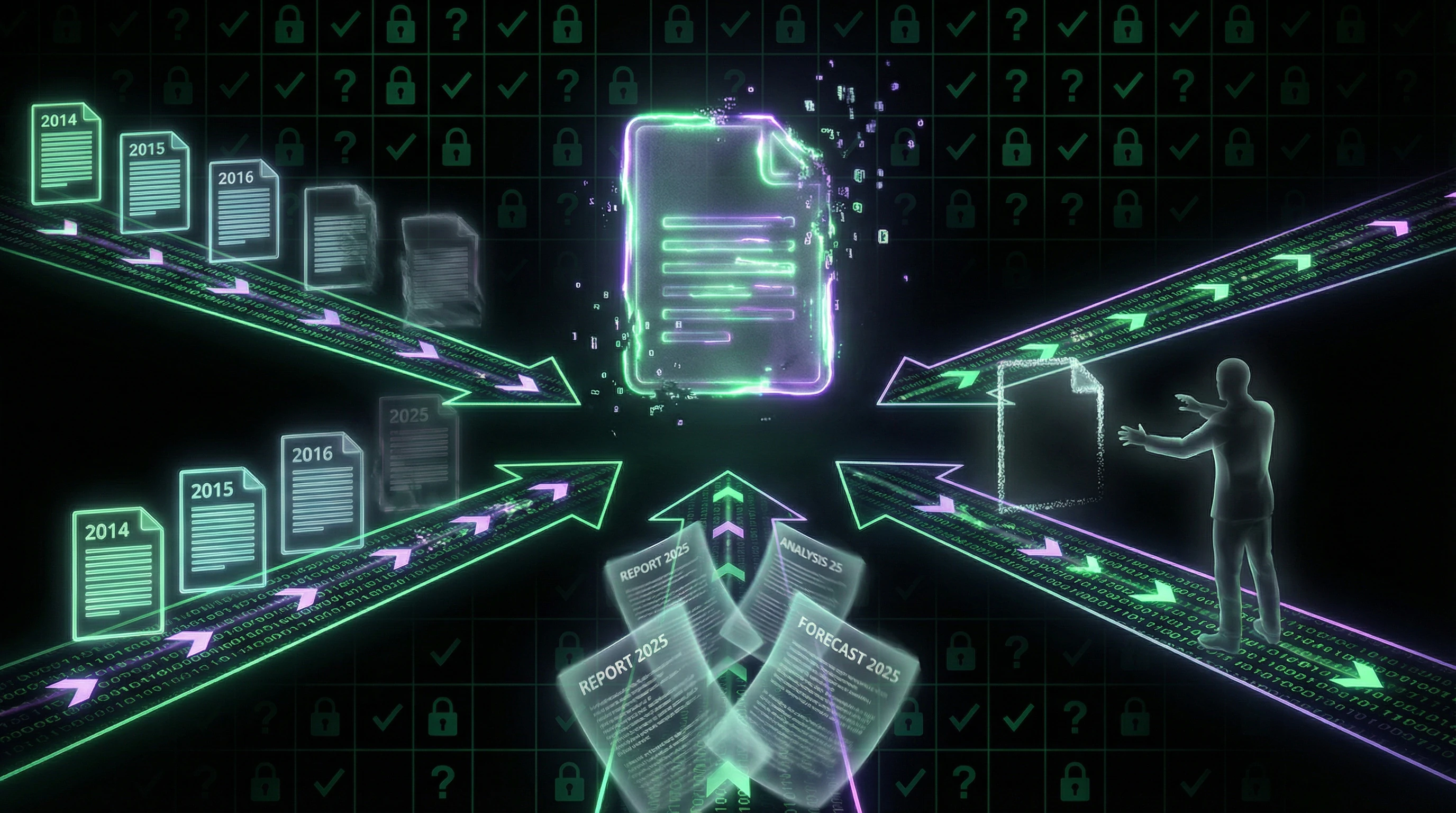

Formation Mechanism of the Illusion: How the Brain Creates Non-Existent Documents

An information phantom is not a random error, but the result of cognitive mechanisms optimized for rapid decision-making under conditions of incomplete information. Let's examine how these mechanisms create the illusion of a document's existence.

🧠 Pattern Matching and Series Extrapolation

The cognitive system automatically searches for patterns in data and completes them to closure. If reports from 2014 and 2015 exist (S001, S004, S007), the brain assumes continuation of the series: 2016, 2017… 2025.

This is an evolutionarily useful mechanism — the ability to predict the future based on the past increases survivability. But in the context of information search, this mechanism creates false expectations. The brain does not distinguish between "the series existed" and "the series continues." The absence of an explicit signal about discontinuation is interpreted as continuation.
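The distinction the brain skips can be made explicit: extrapolation produces candidate years, and each candidate stays a hypothesis until a source attests it. A minimal sketch with hypothetical helper names; the attested years are the editions found in (S001), (S004), and (S007).

```python
def extrapolate_series(observed: list[int], target: int) -> list[int]:
    """Naively continue a regular series of years up to and including target."""
    step = observed[-1] - observed[-2]  # assumes at least two points and a regular interval
    return list(range(observed[-1] + step, target + 1, step))


def unattested(predicted: list[int], attested: set[int]) -> list[int]:
    """Predicted years that no source confirms: hypotheses, not facts."""
    return [year for year in predicted if year not in attested]


attested = {2014, 2015}  # editions found in the available sources
predicted = extrapolate_series(sorted(attested), 2025)
print(len(predicted), "years predicted;", len(unattested(predicted, attested)), "attested by no source")
```

Keeping `predicted` and `attested` as separate collections is the whole point: "the series existed" lives in one, "the series continues" in the other.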


🧠 Confirmation Bias and Selective Attention

When a user searches for a specific document, the cognitive system focuses on confirming signals and ignores refuting ones. The presence of the word "2025" in source metadata is perceived as confirmation of the sought document's existence, even if the content is unrelated to the topic.

The brain highlights matching elements (year, report format, academic context) and minimizes differences (topic, authors, organization). This creates an illusion of relevance where none exists.

| Signal | Brain's Interpretation | Reality |
|---|---|---|
| Year 2025 in metadata | Document exists | Keyword match in unrelated context |
| Reuters in source name | This is Reuters Institute | Organization mentioned in different context |
| Word "report" in results | This is the sought report | Report on completely different topic |

🧠 Availability Effect and Question Substitution

The cognitive system replaces the complex question "Does this document exist?" with a simpler one "Can I imagine this document?" If the user can easily envision the Reuters Institute Digital News Report 2025 — based on knowledge of previous reports and general understanding of the format — the brain interprets this ease of imagination as proof of existence.

The availability effect is amplified if the user has recently seen mentions of Reuters Institute or media reports. Fresh memories create the illusion that the sought document should also be available. This is related to how the attention and memory system works — recent impressions seem more significant and probable.

🔁 Reinforcement Loop: How Search Amplifies the Illusion

The search process creates a positive reinforcement loop. The user formulates a query, assuming the document exists. The search engine returns results containing keywords from the query.

- User sees matches (Reuters, 2025, report)

- Interprets them as confirmation of existence

- Reinforces the original assumption

- Motivates continued searching

- Each iteration strengthens confidence

The loop breaks only upon explicit confrontation with refuting data — for example, through systematic analysis of all sources and discovery of complete absence of relevant content. This is precisely why lateral reading and verification of primary sources are critical for destroying an information phantom.

The loop breaks only upon explicit confrontation with refuting data. Without this confrontation, confidence in the document's existence grows with each search iteration.
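A small simulation shows why the loop inflates confidence. The naive rule below is a hypothetical stand-in for the loop described above: it raises confidence on every match. A Bayesian update, by contrast, only moves when a match is more likely for a real document than for a phantom. The probabilities are illustrative assumptions: keyword matches such as "Reuters", "2025", and "report" appear with the same likelihood either way, because search engines return them regardless.

```python
def naive_update(confidence: float, step: float = 0.1) -> float:
    """Reinforcement-loop rule: every keyword match nudges confidence upward."""
    return min(1.0, confidence + step)


def bayes_update(prior: float, p_match_if_real: float, p_match_if_phantom: float) -> float:
    """Posterior P(real | match) by Bayes' rule."""
    numerator = prior * p_match_if_real
    return numerator / (numerator + (1.0 - prior) * p_match_if_phantom)


naive = bayesian = 0.5  # hypothetical starting confidence
for _ in range(5):  # five search iterations, each returning keyword matches
    naive = naive_update(naive)
    # The matches are equally likely whether or not the report exists.
    bayesian = bayes_update(bayesian, 0.9, 0.9)

print(f"naive: {naive:.2f}, bayesian: {bayesian:.2f}")
```

This prints `naive: 1.00, bayesian: 0.50`: five uninformative matches push the naive rule to near-certainty while the evidence-weighted estimate never moves.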

Cognitive Anatomy of the Phantom: Which Mental Traps Does a Non-Existent Document Exploit

An information phantom exploits several cognitive vulnerabilities simultaneously. Understanding these mechanisms helps recognize phantoms before they become embedded in the cognitive system.

🧩 Trap One: Presumption of Continuity

The brain assumes that processes that have started continue until an explicit signal of termination. If the Reuters Institute published reports in 2014 and 2015, the cognitive system expects the series to continue.

The absence of an explicit announcement about discontinuation of publications is interpreted as continuation, not cessation. This presumption is useful in stable environments but creates false expectations in dynamic contexts where projects often terminate without public announcements.

🧩 Trap Two: Clustering Illusion

The cognitive system groups objects by superficial features, ignoring deeper differences. Documents from 2025 are clustered with the sought report based on publication year and format, even though their content is unrelated to news.

| Clustering Feature | Real Document | Phantom | Cognitive Result |
|---|---|---|---|
| Publication Year | 2025 | 2025 (expected) | Match → false inclusion in cluster |
| Format | Technical report | Technical report (presumed) | Match → strengthened illusion |
| Authorship | Academic source | Reuters Institute (authority) | Status match → halo effect |

The brain creates a false cluster of "2025 documents" that includes the non-existent report. The illusion intensifies if documents have similar metadata structures.
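The clustering illusion is easy to reproduce: group documents by a superficial key and a spurious cluster appears; add the topic to the key and it dissolves. A minimal sketch; the records paraphrase this article's source descriptions (for S001, S004, and S007 the year is the edition of the report they cover), and `cluster` is a hypothetical helper.

```python
from collections import defaultdict

# (source id, year, topic), paraphrased from the source descriptions.
DOCS = [
    ("S001", 2014, "news"),
    ("S004", 2015, "news"),
    ("S007", 2014, "news"),
    ("S003", 2025, "multi-agent systems"),
    ("S006", 2025, "computer vision"),
    ("S010", 2025, "engagement prediction"),
    ("S011", 2025, "plagiarism detection"),
]


def cluster(docs, key):
    """Group source ids by the value key(record) returns."""
    groups = defaultdict(list)
    for record in docs:
        groups[key(record)].append(record[0])
    return dict(groups)


by_year = cluster(DOCS, key=lambda d: d[1])
by_year_and_topic = cluster(DOCS, key=lambda d: (d[1], d[2]))

print(by_year[2025])                         # the superficial "2025 documents" cluster
print((2025, "news") in by_year_and_topic)   # is there any 2025 news document?
```

The year-only key yields four "2025 documents"; the year-and-topic key shows that none of them is about news.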

🧩 Trap Three: Authority Halo Effect

The Reuters Institute is an authoritative organization in journalism research. This authority creates a halo that extends to all potential publications from the institute.

If the institute published quality reports in the past, the brain assumes it continues to do so. The halo effect reduces critical evaluation: users are less inclined to verify a document's existence if it's associated with an authoritative source.

This creates vulnerability to information phantoms that parasitize the reputation of real organizations. Authority becomes a shield against skepticism.

🧩 Trap Four: Query Cognitive Inertia

Query formulation creates cognitive inertia—the tendency to continue searching in the established direction even if initial results don't confirm the object's existence. A user who began searching for Reuters Institute Digital News Report 2025 is inclined to interpret any partial matches as confirmation rather than refutation.

- User formulates query with specific title and year

- Search returns partial matches (reports from other years, similar documents)

- Brain interprets matches as "close enough, therefore it exists"

- Inertia strengthens with invested time and effort

- Acknowledging non-existence means acknowledging wasted effort

Inertia creates motivation to continue searching and interpret results in favor of existence. This is especially dangerous in professional contexts where admitting error carries reputational costs.

To protect against these traps, use lateral reading—a method that breaks query cognitive inertia and allows information verification independent of the initial search direction.

Verification Protocol: Seven Questions That Destroy Information Phantoms in 60 Seconds

A systematic verification protocol allows you to quickly determine whether a document is real or a phantom. Each question targets a specific vulnerability in the cognitive system.

✅ Question One: Is There a Direct Link to the Document?

A real document has a specific URL, DOI, or repository identifier. An information phantom exists only as a query or mention without a direct link.

- Try to find a direct link through the organization's official website

- Check academic databases (Google Scholar, SSRN, ResearchGate)

- Search repositories (arXiv, university archives)

- If no link is found in any source — the probability of existence is low
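Part of this check can be automated: first confirm that a claimed identifier is even well-formed. The sketch below uses a regular expression Crossref has recommended for modern DOIs (it covers most DOIs, not all) and the new-style arXiv identifier format; the example identifiers are hypothetical. A well-formed identifier proves nothing on its own; the decisive step is resolving it (through https://doi.org/ for DOIs or https://arxiv.org/abs/ for arXiv IDs) and reading what comes back.

```python
import re
from typing import Optional

# Pattern Crossref has recommended for modern DOIs (matches most, not all, DOIs).
DOI_RE = re.compile(r"^10\.\d{4,9}/[-._;()/:A-Z0-9]+$", re.IGNORECASE)
# New-style arXiv IDs: YYMM.NNNN (2007-2014) or YYMM.NNNNN (2015 on), optional version.
ARXIV_RE = re.compile(r"^\d{4}\.\d{4,5}(v\d+)?$")


def identifier_kind(identifier: str) -> Optional[str]:
    """Classify an identifier by format only; a match does NOT prove the document exists."""
    identifier = identifier.strip()
    if DOI_RE.match(identifier):
        return "doi"
    if ARXIV_RE.match(identifier):
        return "arxiv"
    return None


# Hypothetical identifiers.
for candidate in ("10.1000/xyz123", "2501.01234v2", "Digital News Report 2025"):
    print(candidate, "->", identifier_kind(candidate))
```

A title with a year, like the last candidate, is not an identifier at all: exactly the situation in which a phantom "exists".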

✅ Question Two: Is Its Existence Confirmed by Independent Sources?

A real document is cited, discussed, or mentioned in independent sources. An information phantom exists in isolation — only as a query or single mention.

The absence of independent mentions in news, blogs, academic articles, and social media is a strong signal that the document doesn't exist.
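This question can be roughly approximated by counting distinct sites that mention the document, excluding the claimed publisher's own domains. A minimal sketch using the standard library's `urllib.parse`; the URLs and the `publisher.example` domain are hypothetical. Distinct domains are only a proxy for independence: syndicated copies of one press release would still count as several.

```python
from urllib.parse import urlparse


def independent_mentions(mention_urls: list[str], publisher_domains: set[str]) -> set[str]:
    """Return distinct hostnames mentioning the document, excluding the publisher's own sites."""
    hosts = set()
    for url in mention_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[len("www."):]
        # Skip the publisher's domain and any of its subdomains.
        is_publisher = any(host == d or host.endswith("." + d) for d in publisher_domains)
        if host and not is_publisher:
            hosts.add(host)
    return hosts


# Hypothetical mentions of a claimed report.
mentions = [
    "https://www.publisher.example/report-2025",
    "https://news.example.com/story",
    "https://blog.example.org/post",
    "https://news.example.com/follow-up",
]
print(sorted(independent_mentions(mentions, {"publisher.example"})))
# ['blog.example.org', 'news.example.com']
```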

✅ Question Three: Does the Publication Date Match Current Time?

Documents are published at specific times and become available immediately or with a small delay. If the current date is February 2025 and a report supposedly came out in January 2025 but isn't mentioned anywhere in January news and analysis — that's a red flag.

Check news archives, organization press releases, and search engine indexing for the supposed publication period.
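The date check reduces to a comparison: once the claimed release date plus a grace window has passed, the absence of any contemporaneous mention is a red flag. A minimal sketch; the 30-day window and the specific days are assumptions chosen to mirror the January/February 2025 example above.

```python
from datetime import date, timedelta


def missing_launch_coverage(claimed: date, today: date, mention_dates: list[date],
                            window_days: int = 30) -> bool:
    """True if the grace window after the claimed release has passed with no mention in it."""
    window_end = claimed + timedelta(days=window_days)
    if today < window_end:
        return False  # too early to judge
    return not any(claimed <= d <= window_end for d in mention_dates)


# Hypothetical case: release claimed for 15 January 2025, checked on 20 February 2025.
print(missing_launch_coverage(date(2025, 1, 15), date(2025, 2, 20), mention_dates=[]))  # True
```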

✅ Question Four: Does the Document Description Match Its Actual Content?

If you managed to find the document — read it. Phantoms are often created based on misreading or extrapolation of a real document.

The brain fills gaps with expectations, not facts. A real document may contain completely different data than you expected.

✅ Question Five: Are There Methodological Red Flags?

Check the research methodology. Real reports contain descriptions of the sample, data collection period, and study limitations. Phantoms are often described vaguely: "a survey showed," "research revealed" without specifics.

| Feature | Real Document | Information Phantom |
|---|---|---|
| Sample | Specific number of respondents, countries, period | "Survey showed," "research revealed" |
| Methodology | Described in detail with limitations | Absent or minimal |
| Data | Tables, charts, statistics | Only conclusions without confirmation |
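The table's contrast can be turned into a rough screening heuristic: look for a concrete sample size, a date, and a limitations statement, and flag vague attributions. This is only a sketch: the phrase list and regular expressions are hypothetical and would miss many real reports written in other styles, so it supports reading the methodology rather than replacing it.

```python
import re

# Hypothetical heuristics; real review means reading the methods section.
VAGUE_PHRASES = ("survey showed", "research revealed", "studies show")
METHOD_MARKERS = {
    "sample": re.compile(r"\b\d[\d,]*\s+(respondents|participants)\b", re.IGNORECASE),
    "period": re.compile(r"\b(19|20)\d{2}\b"),
    "limitations": re.compile(r"\blimitations?\b", re.IGNORECASE),
}


def methodology_flags(text: str) -> list[str]:
    """List red flags: missing method markers and vague attribution phrases."""
    flags = [f"no {name}" for name, pattern in METHOD_MARKERS.items() if not pattern.search(text)]
    lowered = text.lower()
    flags += [f"vague: '{p}'" for p in VAGUE_PHRASES if p in lowered]
    return flags


vague = "A survey showed that trust in news is falling."
detailed = ("Online survey of 2,000 respondents, fielded in January 2015. "
            "Limitations: online panels under-represent people who rarely use the internet.")
print(methodology_flags(vague))
print(methodology_flags(detailed))
```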

✅ Question Six: Are Authorship and Affiliation Verifiable?

Real documents are signed by specific authors with organizational affiliation. Check whether this author exists, whether they work at the stated organization, whether they've published other work on this topic.

Use lateral reading — open a new tab and verify the author independently, without relying on information from the original source.

✅ Question Seven: Is There a Financial or Ideological Interest in Creating the Phantom?

Information phantoms are often created to support a particular narrative. Ask yourself: who benefits from this document existing? Who's spreading it?

Not every phantom arises by accident. Some serve a specific purpose: to confirm a hypothesis, strengthen an argument, or create authority.

These seven questions work as a filter system. If a document fails at least three of them, treat it as a phantom. If it passes all seven, it is very likely real.
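The decision rule can be written down directly. A minimal sketch with hypothetical question labels. The rule as stated covers three or more failures and zero failures, so this version reports one or two failures as "inconclusive", which is an assumption rather than part of the protocol.

```python
QUESTIONS = (
    "direct link",
    "independent confirmation",
    "date consistency",
    "content matches description",
    "methodology",
    "authorship",
    "no evident interest in fabrication",
)


def verdict(passed: dict[str, bool]) -> str:
    """Apply the filter rule: 3+ failures -> phantom, 0 failures -> very likely real."""
    unanswered = [q for q in QUESTIONS if q not in passed]
    if unanswered:
        raise ValueError(f"unanswered questions: {unanswered}")
    failures = sum(not passed[q] for q in QUESTIONS)
    if failures >= 3:
        return "phantom"
    if failures == 0:
        return "very likely real"
    return "inconclusive"  # 1-2 failures: not covered by the stated rule (assumption)


answers = dict.fromkeys(QUESTIONS, True)
for q in ("direct link", "independent confirmation", "date consistency"):
    answers[q] = False
print(verdict(answers))  # phantom
```

Raising on unanswered questions is a deliberate choice: a skipped question should not silently count as a pass.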