What is a conspiracy theory from a narrative structure perspective and why the classical definition no longer works

The traditional definition of conspiracy theory as "an explanation of events through secret collusion of powerful actors" doesn't capture modern conspiratorial narratives. The key feature is not the content of accusations, but a specific structure: linking unrelated domains through the concept of "hidden knowledge" (S004).

Pizzagate demonstrates this logic: a pizzeria, politicians, and pedophilia are connected by interpreting emails as coded messages. Because the narrative spans several domains, it cannot be refuted within any single subject area (S004).

Structural elements of conspiratorial narrative

- Hidden agents: Postulating the existence of actors with exceptional power and malevolent intentions (S007).

- Alternative epistemology: Absence of evidence is interpreted as evidence of concealment (S005). The logic closes: any fact becomes confirmation.

- Linking disparate events: Creating a common "hidden plan" generates an illusion of explanatory power (S001). Conspiratorial narratives function as subgraphs of a hidden network, where each post is a sample from a more extensive structure (S004).

COVID-19 as the perfect environment

The absence of authoritative scientific consensus about the virus, its transmission, and long-term consequences created an information vacuum (S006). Conspiracy theories filled it immediately.

Circulating narratives: 5G activates the virus, the pandemic is a hoax by a global cabal, the virus is a Chinese bioweapon, Bill Gates is using the pandemic for a global surveillance regime (S006). They spread faster than official information because they offered simple explanations under conditions of uncertainty.

The boundary between skepticism and conspiracism

The boundary is blurred: real conspiracies (Watergate, MKUltra) confirm that secret collusions exist (S005). The key distinction is not in the content of suspicions, but in the method of processing counter-evidence.

| Scientific skepticism | Conspiratorial thinking |
|---|---|
| Corrects hypothesis when receiving refuting data | Integrates any data as confirmation through the "they're hiding it" mechanism (S007) |

Five Arguments That Make Conspiracy Theories Convincing to Millions: Steelmanning Conspiratorial Logic

To understand the mechanisms of conspiracy narrative spread, we must reconstruct their internal logic in its strongest form. Steelmanning reveals the cognitive mechanisms that make conspiracy theories attractive.

⚠️ Argument from Historical Verification: Real Conspiracies as Proof of Possibility

Proponents of conspiratorial thinking point to documented cases of real conspiracies: Operation Northwoods (a proposed but never executed plan for false-flag attacks to justify invading Cuba), the MKUltra program (CIA mind-control experiments), and Watergate (political espionage at the highest level).

These historical precedents create an epistemological problem: if some "conspiracy theories" turned out to be true, how do we distinguish false from true ones before they're exposed? This argument exploits the real difficulty of differentiation and creates a presumption of possibility for any conspiratorial narrative (S005).

🧩 Argument from Explanatory Power: Conspiracy Theory as Universal Hermeneutic Key

Conspiratorial narratives offer a single explanation for multiple disparate events, creating an illusion of deep understanding. Instead of acknowledging randomness, complexity, and multiple causation, conspiracy theory reduces chaos to a simple "agent-intention-action" schema (S001).

This reduction is cognitively attractive: it reduces uncertainty and provides a sense of control through understanding. Pizzagate connected emails, a pizzeria, politicians, and pedophilia into a single narrative that, for believers, explained multiple "oddities" simultaneously.

🔁 Argument from Epistemological Asymmetry: Absence of Evidence as Evidence of Cover-Up

Conspiratorial thinking creates a self-sustaining epistemological system by inverting evidentiary logic. In the scientific method, the absence of expected evidence weakens a hypothesis; in conspiracism, the same absence is interpreted as proof of effective concealment, which strengthens the hypothesis (S007).

- If evidence is found — theory confirmed

- If not found — this proves the scale of the conspiracy

This inversion makes conspiracy theory unfalsifiable: any test result gets integrated into the narrative.
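One way to see why this inversion is incoherent is the law of total probability. The sketch below assumes that belief in a hypothesis $H$ is modeled as a probability and that $E$ denotes "evidence is found", with $0 < P(E) < 1$; both symbols are introduced here for illustration and do not come from the cited sources.

$$
P(H) = P(H \mid E)\,P(E) + P(H \mid \neg E)\,P(\neg E)
$$

The prior $P(H)$ is a weighted average of the two posteriors, so they cannot both exceed it: if finding evidence raises belief in $H$, failing to find it must lower that belief. A reasoner who treats both outcomes as confirmation is no longer updating on the test at all.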

🧠 Argument from Cognitive Economy: Simplicity vs. Complexity Under Information Overload

Under information overload, conspiratorial narratives offer a cognitively economical solution. Instead of processing multiple sources, evaluating research methodology, understanding statistical uncertainty, and acknowledging limits of knowledge, conspiracy theory provides a ready-made interpretive framework (S003).

The narrative "Bill Gates uses vaccines for microchipping" is cognitively simpler than understanding mRNA vaccine mechanisms, pharmacokinetics, immunology, and epidemiological modeling (S006). This explains why conspiratorial explanations spread faster than scientific ones.

👁️ Argument from Social Identity: Conspiracism as Marker of Belonging to the "Enlightened"

Accepting a conspiratorial narrative often functions as a marker of belonging to the group of "those who know the truth," in opposition to the "deceived masses" (S001). This identification provides social capital within the conspiratorial community and a sense of superiority through possession of "hidden knowledge."

Conspiratorial thinking becomes not merely a way of interpreting events, but an element of social identity, making abandonment of it psychologically painful, as it requires revision of group membership.

Methods for Automated Detection of Conspiratorial Content: From Narrative Structure Analysis to Domain Connection Monitoring

Researchers have developed computational methods for automatically detecting conspiratorial narratives in social media and news. These methods are based on analyzing structural features of conspiratorial discourse, rather than evaluating the truth value of claims.

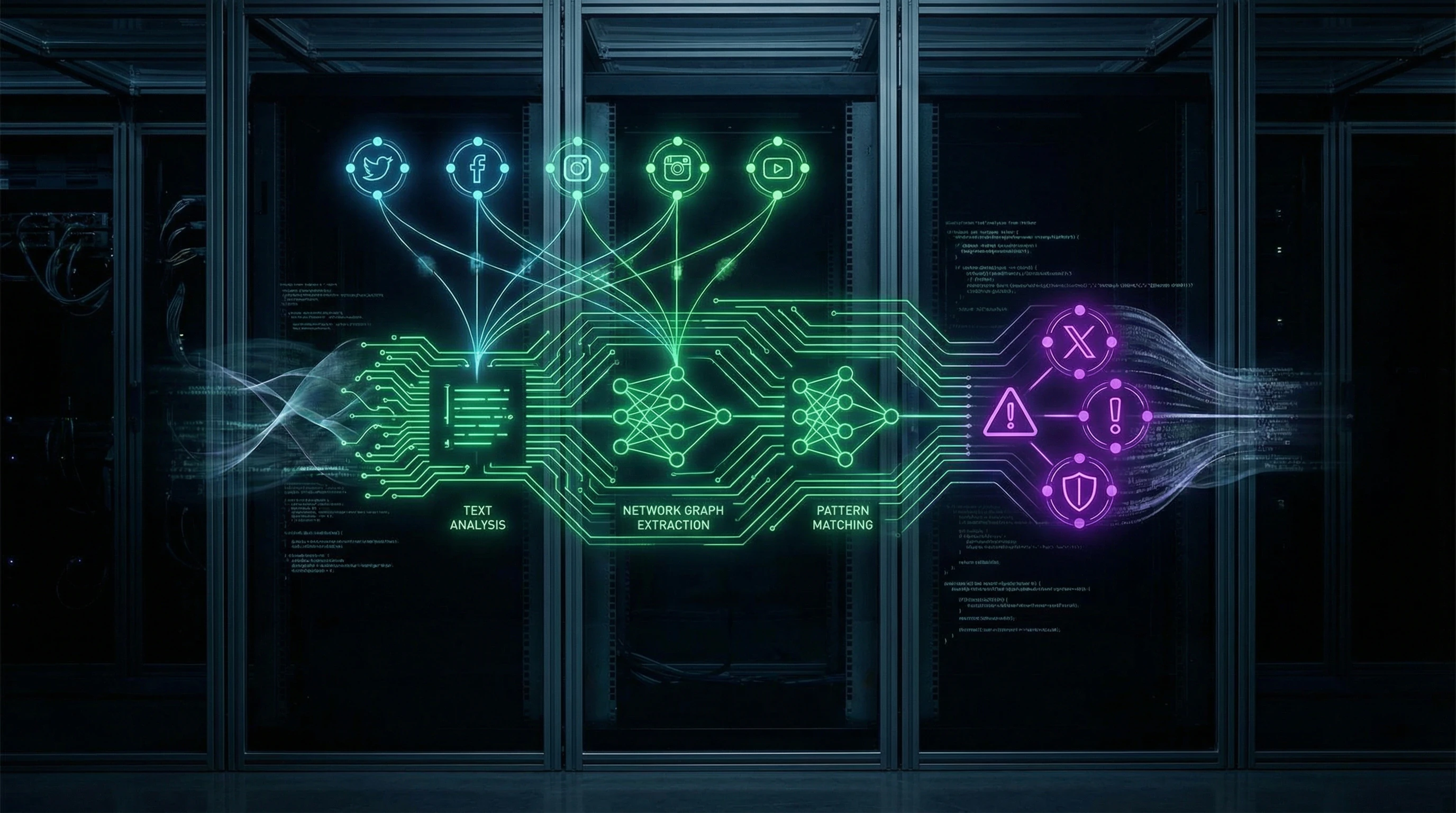

📊 Automated Pipeline for Detecting Conspiratorial Narrative Frameworks

The automated pipeline developed by the research team treats posts and news materials as samples of subgraphs from a hidden narrative network. The problem of reconstructing the underlying structure is formulated as a latent model estimation task (S004).

The method was successfully applied to analyze Bridgegate and Pizzagate, revealing that the Pizzagate conspiratorial framework relies on interpreting "hidden knowledge" to connect otherwise unrelated domains of human interaction. Researchers hypothesized that multi-domain focus is an important characteristic of conspiracy theories, distinguishing them from ordinary narratives (S004).

A conspiratorial narrative doesn't simply explain an event—it connects unrelated domains (politics, medicine, finance, technology) into a unified network of causality, where each element serves as evidence for the existence of others.
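The idea of treating each post as a fragment of a larger network, and of measuring how strongly that network bridges domains, can be illustrated with a toy example. This is a minimal sketch and not the latent-model estimation described in (S004): it simply unions co-mention edges. The actors, the domain labels, and the names `build_network` and `cross_domain_share` are all hypothetical.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical toy data: each actor is hand-labeled with a domain.
# None of these labels come from the cited study.
DOMAIN = {
    "pizzeria": "business",
    "campaign_chair": "politics",
    "emails": "communication",
    "trafficking_ring": "crime",
}

# Each post is modeled as the set of actors it mentions together.
posts = [
    {"emails", "campaign_chair"},
    {"emails", "pizzeria"},
    {"pizzeria", "trafficking_ring"},
]

def build_network(posts):
    """Union the co-mention edges of all posts into one weighted graph."""
    edges = defaultdict(int)
    for mentions in posts:
        for a, b in combinations(sorted(mentions), 2):
            edges[(a, b)] += 1
    return dict(edges)

def cross_domain_share(edges, domain):
    """Fraction of edges whose endpoints belong to different domains."""
    if not edges:
        return 0.0
    crossing = sum(1 for a, b in edges if domain[a] != domain[b])
    return crossing / len(edges)

network = build_network(posts)
share = cross_domain_share(network, DOMAIN)
print(len(network), share)
```

In this toy corpus no single post links the campaign chair to the trafficking ring, yet the aggregated network contains a path between them, and every edge crosses a domain boundary. A high cross-domain share is only a crude proxy for the multi-domain focus hypothesized in (S004).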

🧾 Detection of COVID-19 Conspiracy Theories in Social Media and News

A specialized automated detection system analyzes alignments and connections between news domains and conspiratorial sources (S002). These alignments, which can be monitored in near real-time, prove useful for identifying areas in news that are particularly vulnerable to reinterpretation by conspiracy theorists.

The system tracks how conspiratorial narratives about 5G-activated viruses, global cabals, biological weapons, and Bill Gates's plans spread across various platforms and adapt to local contexts (S002). This involves:

- Identifying "anchor" sources (domains that appear in conspiratorial texts earlier than in mainstream news)

- Tracking narrative transformation as it moves between platforms

- Analyzing the speed of spread and mutation of conspiratorial frameworks

- Mapping republication and citation networks between conspiratorial and legitimate sources
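The first item in the list, identifying "anchor" sources, reduces to a comparison of first-seen dates. The following is a minimal sketch under stated assumptions: the observation tuples, the `.test` domain names, and the rule that a domain never seen in mainstream news also counts as an anchor are all hypothetical choices, not details taken from (S002).

```python
from datetime import date

# Hypothetical observations: (cited domain, corpus label, date seen in a text).
observations = [
    ("example-blog.test", "conspiratorial", date(2020, 3, 1)),
    ("example-blog.test", "mainstream", date(2020, 3, 9)),
    ("example-news.test", "mainstream", date(2020, 3, 2)),
    ("example-news.test", "conspiratorial", date(2020, 3, 5)),
    ("example-forum.test", "conspiratorial", date(2020, 3, 4)),
]

def first_seen(observations):
    """Earliest date per (domain, corpus) pair."""
    earliest = {}
    for domain, corpus, seen in observations:
        key = (domain, corpus)
        if key not in earliest or seen < earliest[key]:
            earliest[key] = seen
    return earliest

def anchor_sources(observations):
    """Domains seen in conspiratorial texts before any mainstream appearance."""
    earliest = first_seen(observations)
    anchors = []
    for (domain, corpus), seen in earliest.items():
        if corpus != "conspiratorial":
            continue
        mainstream = earliest.get((domain, "mainstream"))
        # Assumption: a domain absent from mainstream news counts as an anchor.
        if mainstream is None or seen < mainstream:
            anchors.append(domain)
    return sorted(anchors)

anchors = anchor_sources(observations)
print(anchors)
```

Here `example-news.test` is excluded because mainstream coverage cited it first, while the other two domains qualify as anchors.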

🔎 Analysis of Discursive Markers: Linguistic Patterns of Conspiratorial Text

Discourse analysis of conspiratorial texts reveals specific linguistic patterns: increased frequency of epistemic modalities ("must be," "obviously," "actually"), use of interrogative constructions for implicit assertions ("Why isn't anyone talking about...?"), appeals to "common sense" against expert knowledge, and "just asking questions" rhetoric to avoid responsibility for claims (S003).

These discursive markers can be formalized for automated text classification. A key feature is the use of questions as assertion tools: instead of directly stating "X controls Y," conspiratorial text asks "Doesn't X control Y?", shifting the burden of disproof onto the reader.

| Marker | Example | Function in Conspiracy Theory |
|---|---|---|
| Epistemic modality | "Obviously..." | Simulating certainty without evidence |
| Rhetorical question | "Why is the media silent?" | Assertion through question, avoiding accountability |
| Appeal to intuition | "Common sense tells us..." | Opposing expertise, democratizing knowledge |
| Meta-speech | "I'm just asking questions" | Defense against criticism, innocent investigator position |
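To show how the markers in the table above could be formalized as classifier features, the sketch below counts regular-expression matches per marker. The patterns are illustrative only, built from the table's own examples; the names `MARKERS` and `marker_counts` are hypothetical, and a usable classifier would need a much larger, validated lexicon and a trained model on top of these counts.

```python
import re

# Illustrative patterns derived from the examples in the table.
MARKERS = {
    "epistemic_modality": re.compile(r"\b(obviously|must be|actually)\b", re.I),
    "rhetorical_question": re.compile(r"\bwhy (is|isn't|are|aren't)\b[^.?!]*\?", re.I),
    "appeal_to_intuition": re.compile(r"\bcommon sense\b", re.I),
    "meta_speech": re.compile(r"\bjust asking questions\b", re.I),
}

def marker_counts(text):
    """Count how often each marker pattern occurs in the text."""
    return {name: len(rx.findall(text)) for name, rx in MARKERS.items()}

sample = (
    "Obviously something is off. Why is the media silent? "
    "Common sense tells us the answer. I'm just asking questions."
)
counts = marker_counts(sample)
print(counts)
```

The resulting dictionary can serve as a feature vector. Raw counts say nothing about truth: a text that quotes these phrases in order to criticize them would score the same, which is one reason such features are usually combined with other signals.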

🧬 Narrative Analysis: Plot Structure as Diagnostic Criterion

The narratological approach focuses on plot structure: conspiratorial narratives typically follow the pattern "hidden threat — heroic revelation — struggle against concealment" (S007). Unlike scientific narratives, which allow for uncertainty and revision, conspiratorial narratives are constructed as detective stories with guaranteed exposure.

This structural feature can be formalized for automated classification: texts following the "mystery-revelation-struggle" pattern with high certainty in conclusions despite lacking direct evidence are classified as conspiratorial. Scientific narratives, by contrast, allow for multiple interpretations and require explicit acknowledgment of knowledge gaps.

- Narrative closure: Conspiratorial text assumes that all puzzle pieces are already known or can be found. Any contradiction is interpreted as part of the conspiracy, not as a sign of error in the hypothesis.

- Heroic author position: The author of a conspiratorial narrative positions themselves as a brave exposer confronting powerful forces. This position creates psychological reward for participating in text dissemination.

- Binary morality: The world is divided into those who know the truth and those who hide it or don't see it. Intermediate positions (skepticism, uncertainty) are interpreted as complicity or naivety.

Automated detection systems use these structural features to classify texts without needing to verify the factual truth of each claim. This approach allows monitoring of conspiratorial content to scale to millions of texts in real time (S002).

Cognitive Mechanisms of Conspiracy Theory Susceptibility: From Pattern Detection to Motivated Reasoning

Understanding the cognitive mechanisms that make people susceptible to conspiratorial narratives is critical for developing effective countermeasures. These mechanisms are not thinking defects, but rather normal cognitive processes exploited by the specific structure of conspiratorial discourse.

🧩 Hyperactive Pattern Detection: Evolutionary Legacy in the Information Environment

The human brain evolved to detect patterns under conditions of incomplete information, since false-positive threat detection (seeing a predator where there is none) had a lower evolutionary cost than false-negative detection (missing a real predator) (S001). In the modern information environment, this hypersensitivity to patterns leads to perceiving connections between random events.

Conspiratorial narratives exploit this mechanism by providing ready-made patterns for interpreting disparate data. The brain derives satisfaction from discovering order in chaos—even when that order is illusory.

False-positive alarm (panic without threat) is evolutionarily cheaper than false-negative (calm before danger). This asymmetry is built into our neurobiology and activated by conspiratorial content.

🔁 Confirmation Bias and Selective Information Processing

Confirmation bias—the tendency to seek, interpret, and remember information in ways that confirm preexisting beliefs—is amplified in the context of conspiratorial thinking (S005). Individuals who have adopted a conspiratorial narrative begin to selectively process information: confirming data is accepted uncritically, disconfirming data is rejected as part of the cover-up.

This mechanism creates a self-reinforcing cycle where each iteration of information processing strengthens the original belief.

- Individual encounters a conspiratorial narrative

- Narrative explains uncertainty or anxiety

- Brain begins searching for confirming signals

- Confirming signals are found (or constructed)

- Belief strengthens, search intensifies
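The self-reinforcing cycle above can be illustrated with a toy simulation. This is a sketch under strong assumptions, not a model from the cited literature: belief is tracked in log-odds, each piece of evidence shifts it by an arbitrary step of 0.5, and the hypothetical `discount` parameter shrinks the weight given to disconfirming evidence.

```python
import math

def update(log_odds, evidence, discount):
    """Shift belief by one piece of evidence.

    evidence is +1 (confirming) or -1 (disconfirming); disconfirming
    evidence is multiplied by `discount` (1.0 = unbiased, 0.0 = ignored).
    The step size 0.5 is an arbitrary illustrative choice.
    """
    weight = 1.0 if evidence > 0 else discount
    return log_odds + 0.5 * weight * evidence

def probability(log_odds):
    """Convert log-odds to a probability."""
    return 1.0 / (1.0 + math.exp(-log_odds))

def run(evidence_stream, discount):
    """Process a stream of evidence, starting undecided (probability 0.5)."""
    log_odds = 0.0
    for evidence in evidence_stream:
        log_odds = update(log_odds, evidence, discount)
    return probability(log_odds)

# Perfectly balanced evidence: ten confirming and ten disconfirming items.
stream = [+1, -1] * 10

unbiased = run(stream, discount=1.0)
biased = run(stream, discount=0.2)
print(round(unbiased, 3), round(biased, 3))
```

With balanced evidence the unbiased reasoner ends where it started, while the reasoner who discounts disconfirming items drifts toward near-certainty. The evidence itself is identical in both runs; only the weighting differs.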

⚠️ Illusion of Understanding and the Dunning-Kruger Effect in Complex Domains

Conspiratorial narratives create an illusion of understanding complex systems through reduction to simple cause-and-effect schemas (S007). The Dunning-Kruger effect—a cognitive bias where people with low competence in an area overestimate their understanding—is particularly pronounced in the context of complex technical and scientific questions.

An individual who has absorbed a conspiratorial narrative about 5G and COVID-19 may feel they understand telecommunications technology and virology better than experts, because they possess a "simple explanation," while experts are "lost in the details" (S006).

| Competence Level | Perception of Own Understanding | Perception of Experts |
|---|---|---|
| Low (conspiratorial narrative) | "I've figured out the essence" | "They're hiding the truth or confused" |
| Medium (initial learning) | "I don't know much" | "Experts know more" |
| High (expertise) | "I see complexity and limits of knowledge" | "Colleagues see the same" |

🧷 Motivated Reasoning: Defending Identity Through Defending Beliefs

Motivated reasoning—a process where the desired conclusion influences the evaluation of evidence—plays a central role in the persistence of conspiratorial beliefs (S001). When a conspiratorial narrative becomes part of social identity, its refutation is perceived as a threat to the self.

In this context, the individual is motivated to find ways to preserve the belief regardless of the quality of counter-evidence. Reasoning becomes not a tool for seeking truth, but a tool for defending identity.

- Motivated Reasoning: A cognitive process where emotional or social motivation influences logical analysis. Not an error, but a normal mechanism for defending beliefs, especially when they're connected to group belonging.

- Why This Is Dangerous in the Context of Conspiracy Theories: Transforms reasoning into a defensive tool rather than a search tool. Counter-evidence is interpreted as part of the conspiracy, creating a closed system invulnerable to facts.

- Where This Occurs: Political beliefs, religious narratives, group identities. Social media amplifies the effect, creating ecosystems where motivated reasoning becomes the norm.

When belief becomes identity, refutation of the belief is perceived as personal attack. The brain defends not truth, but the self.

European Commission Recommendations for Regulating AI-Based Disinformation Detection Systems: Between Effectiveness and Human Rights

The European Commission is developing regulatory frameworks for AI systems, including those used for detecting conspiracy content. The Montreal AI Ethics Institute provided 15 recommendations in response to the Commission's white paper (S002).

🛡️ Key Recommendations for Ethical Use of AI in Disinformation Detection

The recommendations cover three levels of action: the research and innovation community, EU member states, and the private sector. Alignment is required between trading partners' policies and EU policies, along with gap analysis between theoretical frameworks and practical approaches to building trustworthy AI (S002).

The central idea is creating a network of centers of excellence in AI research to strengthen research capacity. In parallel, mechanisms must be implemented to promote private and secure data sharing among ecosystem participants (S002).

- Policy coordination between the EU and trading partners

- Creation of a network of centers of excellence in AI research

- Mechanisms for secure data exchange between government, academia, and business

- Gap analysis between theory and practice in regulation

🧾 Transparency Requirements and Right to Appeal AI System Decisions

A critically important recommendation is adding nuance to the discussion about AI system opacity and creating an appeals process for individuals who contest AI system decisions or conclusions (S002). This is a particularly acute problem for conspiracy content detection systems: misclassification can lead to censorship of legitimate skepticism and suppression of critical thinking.

A system that doesn't allow a person to know why their content was blocked and provides no appeal mechanism is not regulation—it's a black box of power.

A further recommendation is to implement new rules and strengthen existing regulations so that all AI systems meet similar standards and mandatory requirements (S002). Algorithm transparency and availability of an appeals process are minimum conditions for the legitimacy of automated content control.

⛔ Ban on Facial Recognition Technologies and Biometric Identification Restrictions

The Institute recommends banning the use of facial recognition technology and ensuring that biometric identification systems serve exclusively the purpose for which they were implemented (S002). The risk is obvious: surveillance technologies can be deployed under the pretext of combating disinformation but used to suppress political opposition or monitor activists.

| Technology | Recommendation | Reason |
|---|---|---|
| Facial Recognition | Complete Ban | High risk of mass surveillance and political abuse |
| Biometric Identification | Purpose Limitation | Use only for stated purpose, without expansion to other tasks |
| Low-Risk Systems | Voluntary Labeling | Transparency for users and regulators |

The Institute also recommends appointing to the oversight process individuals who understand AI systems well and can communicate potential risks (S002). Oversight should not be a formality: oversight bodies must have technical competence and independence from political pressure.

Conflicts in Research and Areas of Uncertainty: Where the Scientific Community Has Not Reached Consensus

Despite progress in understanding conspiratorial thinking, significant areas of uncertainty and conflicting data interpretations exist.

⚠️ The Operationalization Problem: Where Skepticism Ends and Conspiracy Thinking Begins

A fundamental problem is the absence of a clear operational definition that distinguishes legitimate skepticism from conspiratorial thinking (S005).

Some researchers propose focusing on epistemological methods (how counterevidence is processed), while others emphasize content-based criteria (degree of deviation from scientific consensus).

This uncertainty creates a risk of false-positive classification of critical thinking as conspiracy thinking—and conversely, missing actual conspiratorial narratives disguised as legitimate skepticism.

🧪 Intervention Effectiveness: Contradictory Data on Debunking

Research on the effectiveness of debunking conspiratorial narratives yields contradictory results.

Some data suggest that direct refutation may strengthen belief through the backfire effect, while others indicate that methodologically sound debunking is effective (S003). Factors that may account for the divergence include:

- Type of narrative (local conspiracy vs. global system)

- Degree of audience engagement (passive consumption vs. active belief defense)

- Source of refutation (authority, peer, opponent)

- Methodology of counterevidence presentation (factual debunking vs. emotional appeal)

🔬 The Role of Social Media: Amplifier or Creator of Conspiracy Thinking

There is debate over whether social media merely amplifies preexisting conspiratorial tendencies or actively creates new forms of conspiratorial thinking through algorithmic curation and echo chambers (S006).

Some researchers argue that recommendation algorithms create radicalizing trajectories, while others contend that users actively seek conspiratorial content independently of algorithms.

This uncertainty is critical for developing regulatory measures: if social media is an amplifier, regulation should focus on content moderation; if it's a creator, a fundamental rethinking of algorithmic architecture is necessary.

Cognitive Self-Defense Protocol: Seven Questions for Identifying Conspiratorial Narratives

Developed through structural analysis of conspiratorial narratives, this protocol enables systematic evaluation of claims for conspiratorial characteristics (S004, S005). The protocol doesn't assess truth, only the architecture of thinking.

- Is there a hidden agent with unlimited power? Conspiracy thinking requires an enemy who is simultaneously omnipotent and invisible. If a claim relies on a figure who can foresee everything and conceal everything—red flag.

- Is contradictory evidence rejected as part of the conspiracy? (S003) When any counterargument is interpreted as enemy disinformation, the system becomes logically sealed. This is motivated reasoning, not analysis.

- Does understanding the "truth" require special knowledge? Conspiracy thinking often positions itself as esoteric knowledge accessible only to the initiated. Science, by contrast, strives for reproducibility and openness.

- Are disparate events connected into a unified network without causal mechanism? Coincidence ≠ causation. If a narrative connects events only through "they're behind everything," check the logic of the chain.

- Does the text appeal to emotions of fear, anger, or exceptionalism? (S001) Conspiracy thinking often activates threat and group identity. Fact-checking requires emotional pause.

- Does the narrative contain unverifiable claims about motives? "They want to control the population"—this is speculation about intention, not action. Only behaviors are verifiable.

- Does the source use selective fact-picking? (S007) Conspiracy thinking often takes real events but ignores context, alternative explanations, and scale. Lateral reading helps restore the complete picture.

If four or more of these questions are answered "yes" for a claim, this doesn't mean the claim is false, but it does indicate a conspiratorial architecture of thinking. Additional verification through independent sources and lateral reading is required.
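The scoring rule can be written down as a short checklist function. This is a minimal sketch: the question keys and the name `assess` are hypothetical labels for the seven questions above, and treating unanswered questions as "no" is an assumption, not part of the protocol.

```python
# Hypothetical short keys for the seven questions, in the order listed above.
QUESTIONS = (
    "hidden_omnipotent_agent",
    "counterevidence_absorbed",
    "requires_special_knowledge",
    "links_without_mechanism",
    "appeals_to_fear_or_anger",
    "unverifiable_motive_claims",
    "selective_fact_picking",
)

THRESHOLD = 4  # "four or more" from the protocol text

def assess(answers):
    """Return (yes_count, flagged) for a dict mapping question key to bool.

    Missing answers are treated as "no" (an assumption). The flag concerns
    the structure of the reasoning only, never whether the claim is true.
    """
    unknown = set(answers) - set(QUESTIONS)
    if unknown:
        raise ValueError(f"unknown questions: {sorted(unknown)}")
    yes_count = sum(1 for q in QUESTIONS if answers.get(q, False))
    return yes_count, yes_count >= THRESHOLD

example = {
    "hidden_omnipotent_agent": True,
    "counterevidence_absorbed": True,
    "links_without_mechanism": True,
    "unverifiable_motive_claims": True,
    "selective_fact_picking": False,
}
result = assess(example)
print(result)
```

A flagged result is a prompt for further checking, not a verdict on the claim.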

The protocol functions not as a verdict, but as a diagnostic tool. It helps separate critical thinking from patterns that make us vulnerable to manipulation.