The attention economy is a system in which human attention has become a scarce resource and commodity. In conditions of information abundance, companies, media, and authorities compete for every second of your focus, converting it into profit through advertising, data, and behavioral patterns. Surveillance capitalism is a business model that monetizes the observation of user behavior and prediction of their actions. Together, these phenomena create a system where your identity is fragmented, aggregated, and sold without your informed consent.

🖤 Every time you open your smartphone, scroll through a feed, or click on an ad, you're not just consuming content—you yourself become the product. Your attention, your behavioral patterns, your micro-reactions to stimuli are extracted, digitized, and sold at auctions whose existence you don't even suspect. 👁️ This is not a metaphor or conspiracy theory—it's a documented business model that over the past twenty years has transformed human consciousness into the most liquid asset of the information era. 💎 In this article, we'll dissect the mechanics of the attention economy and surveillance capitalism, relying exclusively on academic sources, empirical data, and critical analysis—without conspiracy theories, but also without illusions.

What is the attention economy: from information abundance to cognitive resource scarcity

The term "attention economy" is usually credited to Michael Goldhaber, an American writer trained as a theoretical physicist, who used it in 1997 (S008). He reconceptualized the "information economy," shifting focus from the production of information to its consumption, or more precisely to the limits of the human capacity to process it.

The paradox of abundance: more information = less attention

Information and goods have become more abundant, while the time available to process them has stayed the same (S001). Herbert Simon, later a Nobel laureate, formulated this in 1971: "In an information-rich world, the wealth of information means a dearth of something else: a scarcity of whatever it is that information consumes. What information consumes is rather obvious: it consumes the attention of its recipients" (S001).

The abundance of information creates a scarcity of attention and the need to allocate it efficiently among an overabundance of sources.

Attention as scarce resource and commodity

The attention economy is a system in which media, companies, and authorities compete for consumer attention as their most scarce resource (S001). Attention becomes not merely a psychological phenomenon, but an economic unit that can be measured, valued, and exchanged.

Smartphones and laptops have largely replaced books, face-to-face communication, and stores (S008), and every interaction generates data about where attention is directed, how long it is held, and what diverts it.

Boundaries of the concept: attention economy vs surveillance capitalism

- Attention economy: An analytical framework describing competition for cognitive resources. Can exist without total surveillance: in traditional advertising, public speaking, media.

- Surveillance capitalism: A specific business model for monetizing attention through collection, analysis, and prediction of behavior based on personal data. The technological infrastructure implementing the attention economy.

These two phenomena intersect but are not identical. The first is economic logic, the second is its concrete technical implementation. Understanding the distinction is critical for analyzing where exactly risks arise and where alternative models are possible. For more detail, see the section on Epistemology.

For deeper exploration of attention manipulation mechanisms, see the analysis of infinite scroll and dopamine traps.

The Steel Version of the Argument: Why the Attention Economy and Surveillance Capitalism Are Considered Inevitable

Before moving to critique, we need to present the most compelling arguments for why the attention economy and surveillance capitalism are a logical consequence of technological and economic development. This is the so-called "steel version" (steelman) — the strongest possible formulation of the position, which we will then test against facts. More details in the Statistics and Probability Theory section.

| Argument | Mechanism | Claimed Result |
|---|---|---|
| Targeting Efficiency | Algorithms match relevant offers instead of mass distribution | Reduced transaction costs, user time savings |
| Free Access | Advertisers subsidize services for billions of users | Inclusivity, reduced digital inequality |
| Innovation Race | Competition for attention drives R&D and UX improvements | Better interfaces, more accurate recommendations, new features |
| User Control | Privacy settings, GDPR, right to data deletion | Voluntary exchange of data for convenience |
| Economic Inevitability | Alternative models (subscriptions, decentralization) don't scale | The market chose the only viable option |
| Public Goods | Aggregated data used for epidemic forecasting, traffic optimization, forensics | Positive externalities outweigh privacy risks |
| Evolutionary Adaptation | People develop digital hygiene skills and critical thinking | Society adapts, as it adapted to literacy and TV |

Why These Arguments Sound Convincing

Each relies on real phenomena: targeted advertising does reduce noise, platforms are indeed free, innovations do happen. Proponents of the attention economy aren't fabricating facts — they're reinterpreting them through the lens of efficiency and inevitability.

The key move: they shift the question from "Is this good?" to "Is there an alternative?" If there are no alternatives, then criticism becomes moralizing rather than analysis.

This is a classic technique: if a system appears inevitable, its flaws stop being perceived as problems — they become simply "the price of progress." Panic around surveillance capitalism is declared a moral panic, analogous to fears about radio or comic books. Society will cope, as it always has.

Where the Traps Hide in This Logic

The steel version of the argument looks invulnerable, but contains several hidden assumptions that require verification. They're not false, but they're not obvious either.

- Assumption 1: "Users Consciously Agreed." This is legally true (there's a checkbox in Terms of Service), but psychologically questionable. Consent under pressure (either pay or give up the service) isn't quite voluntary choice. Moreover, most users don't read the terms and don't understand what data is collected and how it's used. Here we need data on actual levels of informed consent.

- Assumption 2: "Alternative Models Are Unviable." This is true for the current moment, but doesn't explain why. Maybe they're unviable because the market is captured by monopolists who use their power to suppress competition? Or because network effects create natural barriers to entry? This isn't an argument for inevitability, but an indication of structural market problems.

- Assumption 3: "Data Is Used for Public Good." This is true in isolated cases, but isn't the primary purpose of data collection. The primary purpose is profit. Public goods are a byproduct. We need to distinguish where data is actually used for society versus where it's just rhetoric.

All these assumptions require empirical verification. That's exactly what we'll do in the following sections — not rejecting the arguments, but testing them against data.

For now, what's important to understand: the steel version of the argument isn't truth, it's a hypothesis. It's logical, but logic doesn't guarantee correspondence with reality. The scientific method requires testing through facts, not through rhetorical persuasiveness.

A convincing argument and a correct argument are not the same thing. The steel version is convincing precisely because it ignores inconvenient details: the information asymmetry between platforms and users, network effects that block competition, and the difference between consent on paper and actual understanding of what you've agreed to.

The next step is to look at what the data says about each of these arguments. Do they confirm the hypothesis of efficiency and inevitability, or do they point to other mechanisms?

Evidence Base: What the Data Says About the Attention Economy and Surveillance Capitalism

Let's move to systematic analysis of the facts. Each claim is backed by a source; we distinguish between empirical data, theoretical models, and normative judgments. More details in the Scientific Method section.

📊 Defining Surveillance Capitalism: Shoshana Zuboff's Conceptual Framework

The term "surveillance capitalism" was systematized by Shoshana Zuboff starting in 2015. Zuboff defines it as an economic logic in which private human experience becomes free raw material for transformation into behavioral data (S010).

The portion of this data that exceeds what is needed to improve the service, which Zuboff calls "behavioral surplus," is processed through machine learning into "prediction products": predictions about users' future actions that are sold in behavioral futures markets (S010). The key difference from traditional advertising: what's monetized isn't the ad impression, but the probability of a specific action (click, purchase, vote).
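To make the shift from pricing impressions to pricing predicted actions concrete, here is a minimal Python sketch. It assumes a simple expected-value rule (predicted probability times payoff). The `Impression` class, the `bid_ceiling` function, and all numbers are hypothetical illustrations, not a description of any platform's actual auction.

```python
from dataclasses import dataclass


@dataclass
class Impression:
    """One ad slot shown to one user (all fields are hypothetical)."""
    p_action: float      # model's predicted probability of the target action
    action_value: float  # what the advertiser gains if the action happens


def bid_ceiling(imp: Impression) -> float:
    """Expected value of the slot: probability times payoff."""
    return imp.p_action * imp.action_value


# Same ad, same payoff, two users with different predicted behavior.
likely_buyer = Impression(p_action=0.08, action_value=50.0)
unlikely_buyer = Impression(p_action=0.01, action_value=50.0)

print(bid_ceiling(likely_buyer))    # the prediction, not the ad, sets the price
print(bid_ceiling(unlikely_buyer))
```

Under this assumption the two slots differ in value only because the predictions differ, which is one way to read the claim that the prediction itself is the product.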

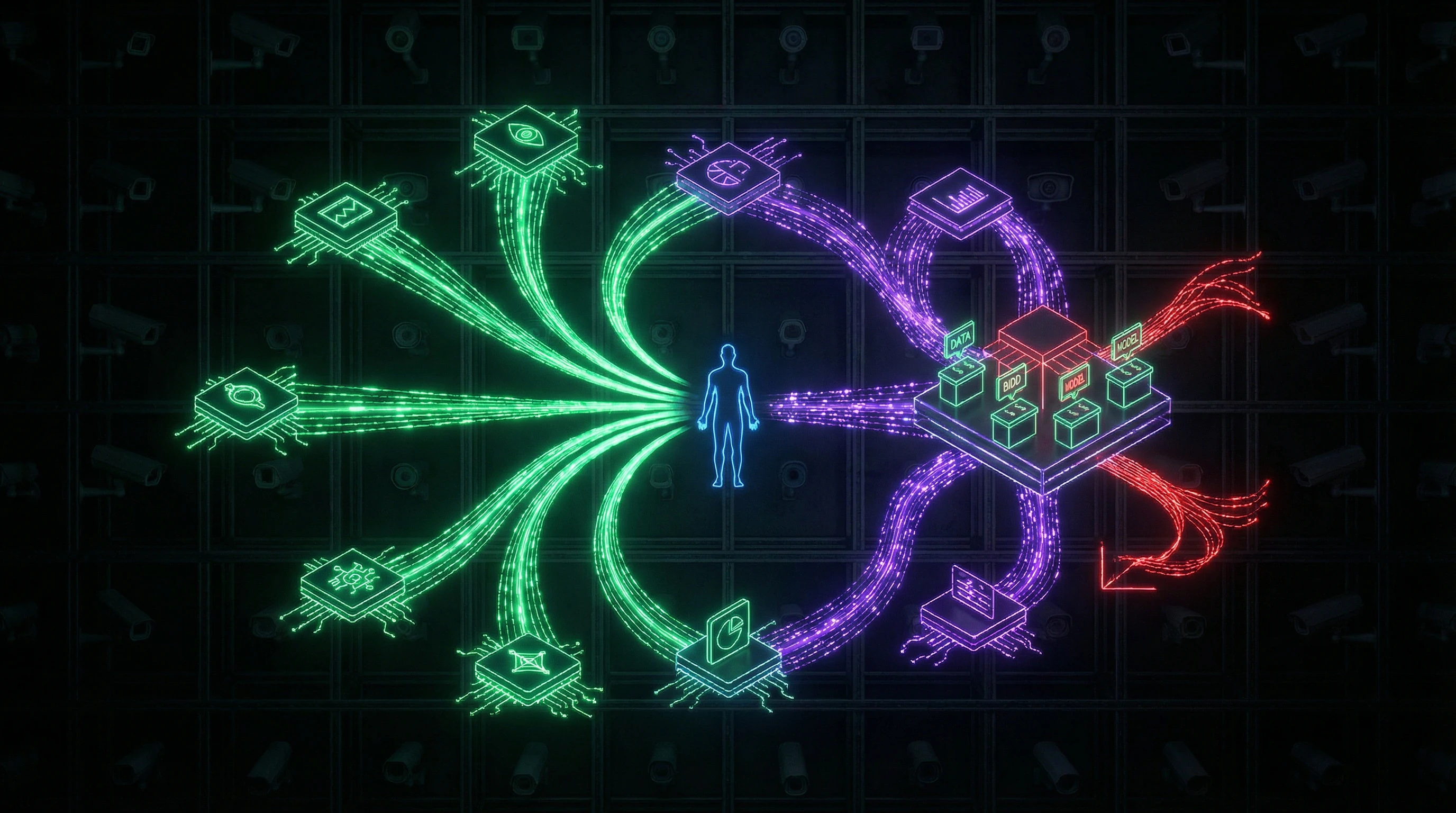

📊 The Value Extraction Mechanism: From Observation to Prediction

Surveillance is a process that observes behavior, recognizes attributes, and identifies individuals (S011). Individuals have multiple identities—real and virtual, used in different aspects of life (S011).

Most aspects of life are subject to some form of surveillance, and the observations tied to these identities are aggregated (S011). Data collected in one context (search queries) is combined with data from another (geolocation, purchases), creating a detailed profile that no single source could provide.
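The aggregation step can be sketched in a few lines of Python. The logs, the `dev-42` identifier, and the `aggregate` helper are invented for illustration. Real pipelines are far more complex, and this sketch only shows the basic idea of merging events from separate contexts on a shared identifier.

```python
from collections import defaultdict

# Hypothetical observations from three unrelated contexts, keyed by a shared
# identifier (here a made-up device ID).
search_log = [("dev-42", "query", "knee pain running")]
location_log = [("dev-42", "visited", "pharmacy"), ("dev-42", "visited", "gym")]
purchase_log = [("dev-42", "bought", "ibuprofen")]


def aggregate(*sources):
    """Merge per-context event lists into one profile per identifier."""
    profiles = defaultdict(lambda: defaultdict(list))
    for source in sources:
        for identifier, kind, value in source:
            profiles[identifier][kind].append(value)
    return profiles


profile = aggregate(search_log, location_log, purchase_log)["dev-42"]
print(dict(profile))
```

None of the three logs says much on its own. The merged profile supports an inference (a runner with a knee problem) that no single source contains.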

📊 Critical Theory of Surveillance in Informational Capitalism

Christian Fuchs in "Towards a Critical Theory of Surveillance in Informational Capitalism" (2012) argues that traditional surveillance theories are insufficient for studying internet surveillance (S012).

Fuchs proposes a typology of surveillance in informational capitalism based on critical political economy, enabling systematic analysis of surveillance in the spheres of production, circulation, and consumption (S012). His conclusion: overcoming surveillance requires political action (S012), because the problem cannot be solved by technological or individual means alone.

- Surveillance in production: control over labor and worker data

- Surveillance in circulation: tracking consumer behavior and preferences

- Surveillance in consumption: personalization and manipulation of choice

🧾 Empirical Data: The Scale of Data Collection

Precise figures about the scale of data collection by major platforms are trade secrets. Indirect evidence points to an unprecedented volume: Google is commonly estimated to process more than 8.5 billion search queries per day, each of which can be linked to a user profile or a unique device identifier.

Meta collects data not only about on-platform actions but also about visits to third-party sites through tracking pixels and "Share" buttons. Data includes explicit actions (clicks, likes) and implicit signals: time on page, scroll speed, cursor movements—everything indicating engagement level.

🧪 Experimental Data: The Impact of Targeting on Behavior

Personalized advertising is more effective than untargeted advertising, but this effectiveness is achieved not only through relevance but also through exploitation of cognitive vulnerabilities. Ads are shown at moments of maximum susceptibility (fatigue, stress, boredom) or use social proof ("your friends bought this"), creating an illusion of consensus.

These techniques go beyond informing and approach manipulation—deliberate influence on choice through exploitation of cognitive limitations rather than through information provision.

🔬 Information Asymmetry: Users Don't Know What's Known About Them

The key problem of surveillance capitalism is radical information asymmetry. Platforms know far more about users than users know about platforms. Even when requesting data under GDPR, users receive only a fraction: raw logs, but not the algorithms that interpret them, not the behavioral prediction models, not the prices at which these predictions are sold.

This makes "informed consent" a fiction: you cannot consent to what you don't understand. The connection between infinite scroll and the dopamine trap demonstrates how platform architecture amplifies this asymmetry through design, not just through data collection.

- Information Asymmetry: Platform knows: algorithms, models, prices, predictions. User knows: what they did, but not how it's interpreted.

- Cognitive Asymmetry: Platform designs interface to maximize engagement. User interacts with interface without seeing its logic.

- Legal Asymmetry: Terms of service are a one-sided contract. User agrees or doesn't use the service.

The problem isn't individual facts of data collection, but the systemic architecture that makes transparency impossible. Even the scientific method requires reproducibility and openness—surveillance capitalism is built on their opposite.

Mechanisms of Causality: How the Attention Economy Influences Behavior and Consciousness

Correlation between social media time and behavioral changes is not proof of causality. But there are specific mechanisms that indicate a direct link between platform architecture and consciousness transformation. More details in the Logical Fallacies section.

🧬 Variable Reinforcement and Habit Formation

Digital platforms use variable reinforcement — rewards (likes, notifications, interesting posts) arrive unpredictably. This creates stronger habits than fixed reinforcement because the brain remains in a state of anticipation.

The mechanism resembles that of gambling: uncertainty about when the "win" will come drives repeated feed checking. The design is not accidental: it is optimized to maximize time on platform, which correlates directly with the volume of data collected and the number of ads shown (S001).

Variable reinforcement works because it exploits an ancient resource-seeking mechanism: the brain cannot distinguish "maybe a reward" from "definitely a reward" and remains in maximum readiness mode.
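The difference between the two schedules can be illustrated with a small simulation. This is a sketch under stated assumptions: the "feed check" model, the 1-in-5 reward rate, and the `gaps_between_rewards` helper are hypothetical, and the code models only the timing of rewards, not any psychological response to them.

```python
import random
from statistics import mean, pstdev


def gaps_between_rewards(schedule, checks=10_000):
    """Count how many feed checks separate consecutive rewards."""
    gaps, since_last = [], 0
    for i in range(1, checks + 1):
        since_last += 1
        if schedule(i):
            gaps.append(since_last)
            since_last = 0
    return gaps


rng = random.Random(0)  # fixed seed so the sketch is reproducible

fixed = gaps_between_rewards(lambda i: i % 5 == 0)             # every 5th check
variable = gaps_between_rewards(lambda i: rng.random() < 0.2)  # 1-in-5 on average

# Print mean gap and spread of gaps for each schedule.
for name, gaps in (("fixed", fixed), ("variable", variable)):
    print(name, round(mean(gaps), 2), round(pstdev(gaps), 2))
```

Both schedules pay out at roughly the same average rate. Only the variable one makes the next reward unpredictable, so the next check could always be the one that pays off.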

🔁 Feedback Loops and the Echo Chamber Effect

Recommendation algorithms create closed loops: the more a user interacts with one type of content, the more similar content is shown. The result can be an echo chamber in which users see mostly confirmation of their existing beliefs.

Empirical data on the scale of the effect is contradictory (S003), but the mechanism itself exists and can amplify polarization. This is especially dangerous where conspiracy theories are concerned: the algorithm can turn a marginal idea into the dominant narrative for a specific user.
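The closed loop described above can be sketched as a toy simulation. It assumes a deliberately crude recommender that shows topics in proportion to past clicks and a user who clicks whatever is shown. The topic names and the `simulate_feed` function are hypothetical, and no real recommendation system is this simple.

```python
import random


def simulate_feed(steps=500, seed=1):
    """Toy recommender: show topics in proportion to past clicks."""
    rng = random.Random(seed)
    clicks = {"politics": 1, "sports": 1, "science": 1}  # no initial preference
    for _ in range(steps):
        topics = list(clicks)
        weights = [clicks[t] for t in topics]
        shown = rng.choices(topics, weights=weights)[0]
        clicks[shown] += 1  # assumption: the user clicks whatever is shown
    total = sum(clicks.values())
    return {t: c / total for t, c in clicks.items()}


# Identical users, identical starting point, different random histories.
for seed in range(3):
    print({t: round(s, 2) for t, s in simulate_feed(seed=seed).items()})
```

In a self-reinforcing process of this kind, early random clicks tend to get locked in, so users who start with no preference can end up with quite different feeds. Whether real platforms behave this way at scale is exactly the point on which the empirical evidence is mixed.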

- Echo Chamber: An information environment where the algorithm shows only content aligned with the user's previous behavior. The trap: it seems like your choice, but it's the result of engineering.

- Polarization: Amplification of opposing positions in society through isolation of groups in separate information spaces. Mechanism: each group sees only extreme versions of the opposing position.

🧷 Cognitive Overload and Deep Thinking Atrophy

Constant switching between notifications, messages, news, and ads creates cognitive overload. Multitasking reduces the effectiveness of each individual task and impairs concentration.

The habit of rapid consumption of short information fragments atrophies deep reading and critical analysis skills. This is not an individual problem — it's a change in society's cognitive ecology. The connection to infinite scroll and the dopamine trap shows how platform architecture directly affects the neurobiology of attention.

| Type of Cognitive Load | Mechanism | Result |
|---|---|---|
| Context Switching | Notifications interrupt current task | Reduced depth of information processing |
| Information Noise | Multiple sources simultaneously | Inability to extract signal |
| Consumption Speed | Optimization for rapid scrolling | Atrophy of analytical skills |

🧠 Erosion of Autonomy: From Choice to Nudging

Surveillance capitalism doesn't just observe — it actively modifies behavior through nudges. These are design decisions that guide action without formally restricting freedom of choice.

The "Buy Now" button is brighter than "Add to Wishlist." A countdown timer creates artificial urgency. These techniques work because they exploit cognitive heuristics and automatic reactions, bypassing rational deliberation (S006).

- Behavioral prediction: the algorithm determines which action is most likely

- Design intervention: the interface is optimized for that action

- Illusion of choice: the user thinks the decision was theirs

- Scaling: millions of people are nudged simultaneously

Nudging differs from coercion by leaving formal freedom of choice. But when choice is made through exploitation of cognitive errors, it's freedom in name only.

Conflicts and Uncertainties: Where Sources Diverge and What Remains Unclear

Researchers disagree on the threat posed by the attention economy and surveillance capitalism. The disagreements concern not the facts themselves, but their interpretation and normative conclusions. For more details, see the Reality Check section.

Persuasion vs. Manipulation: Where's the Line?

Critics argue that personalized advertising and algorithms manipulate users, stripping away autonomy (S001). Defenders counter: this is simply more effective persuasion, like traditional advertising.

Persuasion appeals to rational arguments and leaves room for disagreement. Manipulation exploits cognitive vulnerabilities, bypassing critical thinking. Modern targeting often falls between these poles—and this creates a problem: where exactly does harm begin?

- Persuasion: argument → critical thinking → choice

- Manipulation: trigger → cognitive vulnerability → action without reflection

- Targeting: data about you → prediction of weak points → action (status unclear)

Prediction Accuracy: The Myth of the All-Seeing Eye

Companies claim algorithmic precision, but independent research suggests this precision is overstated (S006). Models work well on aggregated data (group behavior) but poorly at the individual level.

Even inaccurate predictions cause harm: they feed into credit scoring, hiring, and insurance decisions and can produce discrimination. Their inaccuracy does, however, undermine the narrative of an "all-seeing eye" capable of reading minds.

The question remains open: how effective is control if models are wrong? Is 60% accuracy enough to manipulate millions?
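The gap between group-level and individual-level accuracy can be shown with a short simulation. It is a sketch under strong assumptions: every user is given the same hypothetical click probability of 0.6, and the "model" knows that probability exactly. The constants `P_CLICK` and `N_USERS` are illustrative and not drawn from any cited study.

```python
import random

rng = random.Random(7)
P_CLICK = 0.6      # assumed true click probability, the same for every user
N_USERS = 100_000

# Simulate what each user actually does.
clicked = [rng.random() < P_CLICK for _ in range(N_USERS)]

# The best a model can do per user, knowing only P_CLICK, is predict "click".
individual_accuracy = sum(clicked) / N_USERS

# At group level the same knowledge predicts the total number of clicks.
predicted_total = P_CLICK * N_USERS
actual_total = sum(clicked)
group_error = abs(predicted_total - actual_total) / actual_total

print(f"individual accuracy: {individual_accuracy:.3f}")
print(f"relative error on group total: {group_error:.4f}")
```

Under these assumptions the model is wrong about roughly four users in ten, yet its forecast of total clicks is close to exact. A buyer who pays for outcomes across millions of users needs only the second kind of accuracy.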

Privacy vs. Convenience: A Normative Split

A fundamental disagreement: is privacy an absolute value, or can it be traded for convenience and security?

- Libertarian Position: If users voluntarily consent to data collection, it's a legitimate transaction. The state shouldn't interfere.

- Communitarian Position: Privacy is a collective good, not just an individual right. Consent under pressure (choice: data or exclusion from service) isn't genuinely voluntary.

- Pragmatic Position: Information asymmetry makes "voluntary consent" a fiction: users don't know how their data is used, and companies hide this (S007).

Each position is logical within its own value system. The conflict isn't resolved by facts—only by political choice.

Scale of Harm: Individual vs. Systemic

One key disagreement: does surveillance capitalism harm specific individuals or only society as a whole?

| Level of Analysis | Supporters' Position | Critics' Position | Uncertainty |
|---|---|---|---|
| Individual | User receives relevant content, saves time | Manipulation, addiction, loss of autonomy (S003) | How to measure harm to a specific person? |
| Systemic | Market allocates resources more efficiently | Concentration of power, undermining democracy, inequality | Which harm outweighs the other? |

A critic may agree there's no individual harm but object to systemic effects. A defender may acknowledge systemic problems but argue they're solved through regulation, not abandoning the technology.

Regulation: Panacea or Illusion?

There's consensus that surveillance capitalism needs constraints. But disagreement exists about which ones and how effective they are.

- GDPR and similar laws: do they actually protect or just create the appearance of control?

- Bans on specific practices: are they technically feasible or will companies find workarounds?

- Algorithm transparency: will it help users or just complicate things without changing behavior?

- Separation of data and services: will it solve the problem or create new monopolies?

Each approach has supporters and critics. There's no empirical proof that any one works better than others.

What Remains Unclear

The attention economy exists. Surveillance capitalism is real. But the degree of harm, mechanisms of causality, and effectiveness of solutions remain subjects of honest disagreement, not established facts.

This doesn't mean all positions are equal. But it does mean that critical thinking requires acknowledging uncertainty rather than assuming one's own position is right. For a deeper understanding of manipulation mechanisms, see the analysis of dopamine traps and methods for verifying claims.

Counter-Position Analysis

⚖️ Critical Counterpoint

The attention economy is a real phenomenon, but it is often described through the lens of total control, ignoring contradictions, regional differences, and user adaptability. Here's what should be considered when critiquing this model.

Overestimation of Platform Agency

Tech companies do not possess total control over user behavior. Research shows that the effectiveness of behavioral predictions is often overestimated—users demonstrate unpredictability, algorithms make mistakes, and "personalization" is often based on crude correlations rather than deep understanding of personality. The focus on "omnipotent algorithms" distracts from more fundamental problems of capitalism: labor exploitation, inequality, concentration of power.

Underestimation of User Adaptation

The article emphasizes lock-in effects and architectural inevitability, but does not account for growing digital literacy and user resistance. Millions of people use ad blockers, VPNs, alternative platforms (Mastodon, Signal), and practice "digital detox." Perhaps the attention economy has already reached its peak, and we are witnessing the beginning of a reverse trend—conscious rejection of toxic platforms.

Lack of Cross-Cultural Analysis

Most research on surveillance capitalism is focused on the US and Western Europe. In other regions (China, Russia, India), surveillance and attention economy models work differently—through state control, different cultural norms of privacy, and alternative business models. Conclusions about Western surveillance capitalism may not be applicable to non-Western contexts.

The Problem of Alternatives

Criticism of surveillance capitalism often does not offer working alternatives. Regulation (GDPR) has shown limited effectiveness. Decentralized platforms do not scale. State control over data (as in China) creates even greater risks to freedom. Perhaps surveillance capitalism is not a bug, but an inevitable feature of the digital economy, and the task is not its destruction, but finding a compromise between convenience, profit, and rights.

Risk of Moral Panic

The focus on "manipulation" and "addiction" can devolve into technophobia, ignoring the real benefits of digital platforms—access to information, social connections, economic opportunities. Criticism sometimes sounds like nostalgia for the pre-digital era, which was no less problematic: limited access to knowledge, social isolation, media monopolies. The balance between criticism and recognition of benefits is a complex task that the article may not resolve.

Frequently Asked Questions