In 2019, The New York Times published an investigation about how YouTube's algorithms turned an ordinary user into an extremist. The story went viral worldwide, sparking a wave of panic about the "radicalization pipeline." But what if the most dangerous radicalization isn't happening on the platform, but in our heads, when we accept a compelling narrative as scientific fact? The largest quantitative study in 2019 analyzed over 2 million YouTube recommendations and found no substantial evidence of this "pipeline." We break down the anatomy of a misconception that has shaped platform policy and public opinion for years.

What Is "Algorithmic Radicalization" and Why This Term Became a Weapon in Information Wars

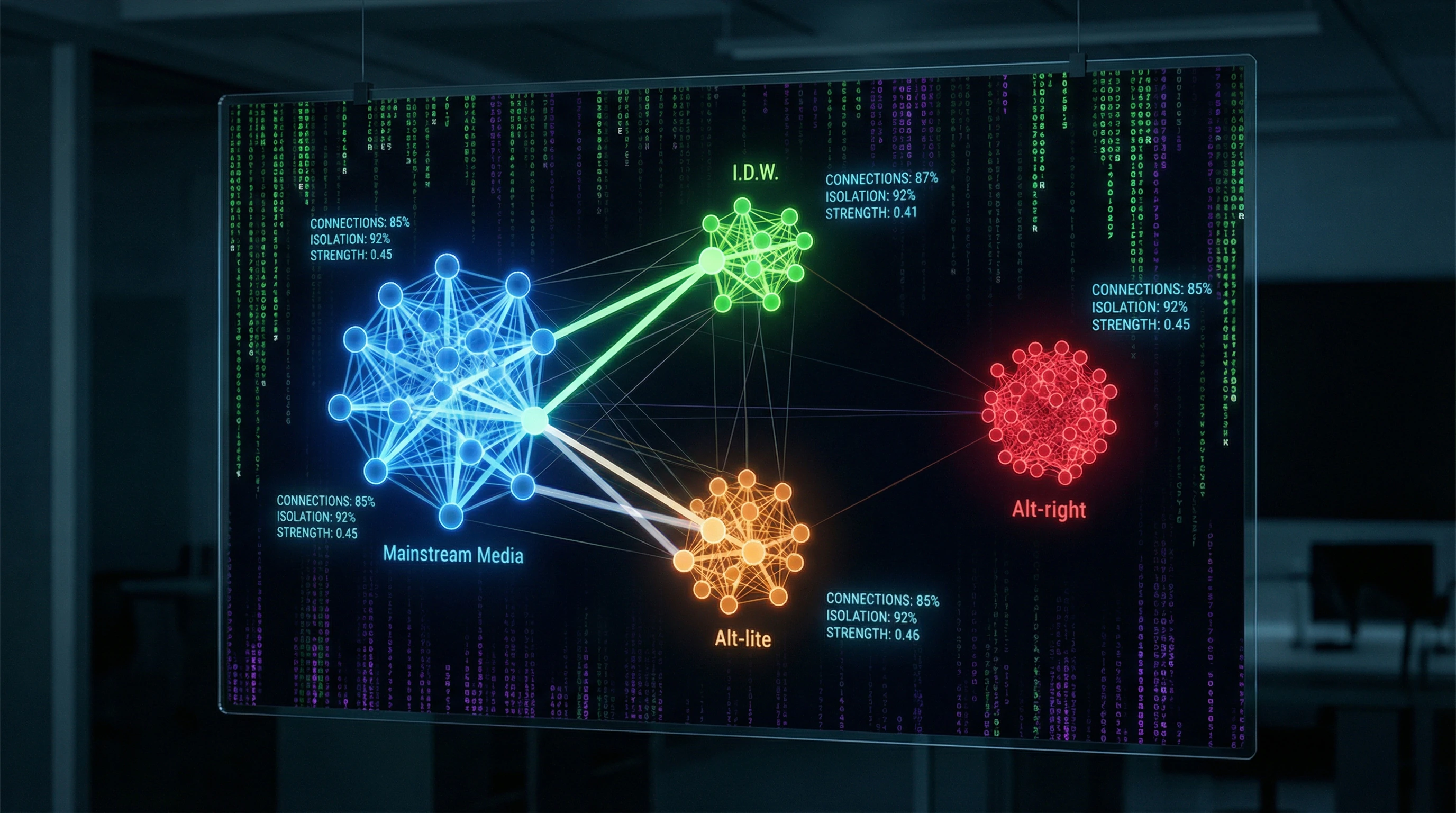

The term "algorithmic radicalization" describes a hypothetical process whereby video platform recommendation systems systematically guide users from moderate content to extremist material through a chain of progressively radicalizing recommendations. The "radicalization pipeline" concept assumes the existence of a predictable path: a user begins by watching mainstream political content, the algorithm recommends more provocative material, then overtly extremist content, and ultimately the person ends up in an information bubble of radical ideas (S010).

This narrative has become central to debates about the attention economy and platform accountability. But behind the concept's popularity lies a methodological crisis: most research fails to distinguish correlation from causation, relies on anecdotal evidence instead of quantitative data, and ignores alternative explanations.

Three Components of the "Pipeline" Narrative

- Content Hierarchy. A clear ladder exists from moderate to extremist: Intellectual Dark Web (I.D.W.; intellectuals critiquing progressive agendas); Alt-lite (right-wing populists with provocative rhetoric); Alt-right (overt advocates of white supremacy and antisemitism) (S010).

- Active Promotion of Transitions. YouTube's algorithm recommends more radical content to users watching moderate material, creating a directed flow from one category to another.

- Systematic Nature of the Process. This mechanism operates not randomly, but as the primary driver of online radicalization on the platform.

Radicalization as a Scientific Concept: Beyond Technological Determinism

Radicalization is defined as "a process by which an individual or group adopts extremist ideological beliefs and/or willingness to use violence to achieve political goals" (S002). A key distinction: radicalization of views does not equal radicalization of actions.

Research shows that radicalization is a multifactorial process involving personal predispositions, social environment, political context, and trigger events (S005). Attempting to reduce this complex phenomenon to the mechanical action of recommendation algorithms oversimplifies the problem to the level of technological determinism.

Watching provocative content is not the same as adopting an extremist ideology or becoming willing to commit violence.

The Methodological Trap: Anecdotes Instead of Data

Most publications about YouTube's "radicalization pipeline" rely on qualitative methods: analysis of individual cases, interviews with former extremists, journalistic investigations. A typical example is the story of someone who claims "YouTube pulled me into the Alt-right through recommendations." For more details, see the section Epistemology Basics.

These narratives possess powerful emotional impact but cannot serve as evidence of a systematic effect. The problem of representativeness remains unresolved: how many users received the same recommendations but did not radicalize? What is the baseline frequency of radicalization among users who did not receive such recommendations?

| Question | Required Answer | Status in Literature |
|---|---|---|
| What proportion of users received recommendations but did not radicalize? | Quantitative data with control group | Absent |
| Does the radicalization trajectory differ for users with recommendations versus those without? | Comparative analysis with controlled variables | Rare |
| What role do users' pre-existing beliefs play in content selection? | Analysis of selection bias | Ignored |

Without a control group and quantitative data, it is impossible to separate correlation from causation. This is not methodological pedantry — it is the foundation upon which all logic of proof is built (S010).
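To make the role of the control group concrete, here is a minimal sketch of the comparison the table asks for. The `Group` class, the `compare` function, and all counts are hypothetical and invented for illustration; they are not taken from any of the cited studies.

```python
from dataclasses import dataclass


@dataclass
class Group:
    """Counts for one arm of a hypothetical comparison."""
    users: int        # total users in the group
    radicalized: int  # users later classified as radicalized

    @property
    def rate(self) -> float:
        return self.radicalized / self.users


def compare(exposed: Group, control: Group) -> dict:
    """Return absolute and relative differences between the two groups."""
    return {
        "exposed_rate": exposed.rate,
        "control_rate": control.rate,
        "risk_difference": exposed.rate - control.rate,
        "relative_risk": exposed.rate / control.rate,
    }


# Illustrative numbers only (assumption): users who did and did not
# receive the recommendations in question.
exposed = Group(users=100_000, radicalized=50)
control = Group(users=100_000, radicalized=40)

result = compare(exposed, control)
for name, value in result.items():
    print(f"{name}: {value:.5f}")
```

Without the `control` row, only `exposed_rate` can be computed, and that single number says nothing about whether the recommendations changed anything. Even with both rows, an observational comparison like this one still needs the confounder controls discussed later.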

Steel Version of the Argument: Seven Most Compelling Cases for the Algorithmic Pipeline

Before examining evidence against the "radicalization pipeline" hypothesis, we must honestly present the strongest arguments in its favor. Intellectual honesty requires constructing a "steel version" (steelman) of the opposing position—not a caricature, but the most convincing formulation possible. More details in the Epistemology section.

First Argument: Economic Logic of Engagement Maximization Inevitably Leads to Radicalization

YouTube's business model is built on maximizing watch time, which directly impacts advertising revenue. The algorithm is optimized to predict which video will keep users on the platform longest.

Emotionally charged, provocative content generates higher engagement than nuanced and balanced content. Radical content is a "superstimulus" for a system tuned for engagement. This isn't malicious intent by developers, but an inevitable consequence of the optimization function.

The structural incentive for the algorithm to recommend increasingly provocative material is built into the very architecture of a platform oriented toward the attention economy.

Second Argument: Documented Growth of Extremist Channels Correlates with Changes to the Recommendation Algorithm

Researchers document explosive growth in the audience of right-wing populist and extremist channels on YouTube during 2015–2018 (S002, S003). This growth coincides with changes to the recommendation algorithm that YouTube implemented to increase watch time.

Alt-lite and Alt-right channels reported sharp increases in subscribers and views specifically through recommendations, not through search or external links. The temporal correlation between algorithm changes and extremist content growth suggests a causal relationship.

Third Argument: Testimonies from Former Extremists About YouTube's Role in Their Radicalization

Many people who have gone through deradicalization describe YouTube as a key factor in their ideological shift (S004). They detail how they started by watching videos about feminism or immigration, received recommendations for more radical critics of these phenomena, then moved to overtly racist content.

These testimonies are consistent with each other and describe a repeating pattern. Dismissing them as "anecdotal" means ignoring the lived experience of people who directly encountered the phenomenon.

- Beginning: videos about social phenomena (neutral content)

- Recommendations: criticism with a sharper angle

- Deepening: overtly ideological content

- Result: transition into extremist communities

Fourth Argument: Psychological Mechanisms of Personalization Amplify the Echo Chamber Effect

The personalization algorithm creates a unique recommendation feed for each user based on viewing history. If a user begins watching content of a particular ideological orientation, the algorithm interprets this as a preference and recommends more similar content.

This creates an echo chamber effect: users encounter alternative viewpoints less frequently and sink deeper into an ideological bubble. Personalization transforms casual interest into a self-reinforcing feedback loop.

Fifth Argument: Cross-Platform Data Shows YouTube Is the Primary Traffic Source for Extremist Sites

Analysis of referral traffic to extremist websites and forums shows that YouTube is one of the largest sources of new visitors (S005). Users transition from YouTube to platforms like 4chan, 8chan, Gab, where radicalization continues in more intensive form.

YouTube functions as a "funnel"—attracting a broad audience with moderate content, then directing the most receptive users into the ecosystem of extremist platforms. Without YouTube, many of these users would never have discovered the existence of radical communities.

Sixth Argument: Internal YouTube Documents Confirm the Company Knew About the Problem

Journalistic investigations and leaked internal documents show that YouTube employees repeatedly warned leadership that the recommendation algorithm was promoting extremist content (S008). Engineers proposed changes that would reduce this effect, but leadership rejected them due to concerns that it would decrease engagement and revenue.

This demonstrates that the problem is real and was acknowledged within the company, but economic interests outweighed ethical considerations.

Seventh Argument: Mass Shooters and Terrorists Cited YouTube as a Source of Radicalization

In the manifestos and statements of several mass murderers in recent years, YouTube is mentioned as the platform where they encountered white supremacist ideology or antisemitism (S006). The Christchurch shooter, the Pittsburgh terrorist, and other perpetrators described their radicalization journey beginning with watching videos on YouTube.

When extremist violence is directly linked to a platform, this demands serious consideration of the algorithmic radicalization hypothesis, regardless of statistical data. The connection between technology and real-world harm becomes undeniable.

Quantitative Audit: What the Largest Study of 2 Million YouTube Recommendations Revealed

In 2019, researchers from the Swiss Federal Institute of Technology in Lausanne conducted the first large-scale quantitative study of the "radicalization pipeline" hypothesis on YouTube. The work, published at the ACM FAT* (Fairness, Accountability, and Transparency) conference, analyzed over 2 million video and channel recommendations collected between May and July 2019 (S003).

This study became a turning point in the debate, as it provided large-scale empirical data for the first time instead of anecdotal evidence. More details in the Logical Fallacies section.

Methodology: How Researchers Mapped the Political Content Ecosystem

The researchers created a dataset of 792 channels divided into four categories: Media (mainstream news channels); Intellectual Dark Web (intellectuals critiquing progressive ideology); Alt-lite (right-wing populists with provocative rhetoric); and Alt-right (explicit white supremacists) (S003).

For each channel, two types of recommendations were collected: video recommendations (which videos YouTube suggests after viewing) and channel recommendations (which channels YouTube suggests on the channel page). The probability of transitions between categories through recommendations was analyzed.
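The transition analysis described above can be sketched as follows. This is a simplified illustration, not the study's actual code: the `recommendations` list is made-up data, and `transition_probabilities` is a hypothetical helper that estimates how often a recommendation shown on content of one category points to each other category.

```python
from collections import Counter, defaultdict

# Hypothetical (source_category, recommended_category) pairs.
# The values are invented for illustration and are NOT study data.
recommendations = [
    ("Media", "Media"),
    ("Media", "Media"),
    ("Media", "I.D.W."),
    ("I.D.W.", "I.D.W."),
    ("I.D.W.", "Alt-lite"),
    ("I.D.W.", "Media"),
    ("Alt-lite", "Alt-lite"),
    ("Alt-lite", "I.D.W."),
    ("Alt-lite", "Alt-right"),
    ("Alt-lite", "Alt-lite"),
]


def transition_probabilities(pairs):
    """Estimate P(recommended category | source category) from observed pairs."""
    counts = defaultdict(Counter)
    for source, target in pairs:
        counts[source][target] += 1
    return {
        source: {t: n / sum(targets.values()) for t, n in targets.items()}
        for source, targets in counts.items()
    }


probs = transition_probabilities(recommendations)
for source, row in probs.items():
    print(source, {target: round(p, 2) for target, p in row.items()})
```

Each row of `probs` sums to 1, so a "pipeline" would show up as consistently large probabilities along the chain Media, I.D.W., Alt-lite, Alt-right. A category that is never recommended from a given source simply does not appear in that source's row.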

Key Finding: Absence of Substantial Evidence for a Systematic Pipeline

The study's main conclusion: as of 2019, there was no substantial quantitative evidence for the alleged pipeline (S003). The analysis showed that YouTube's video recommendations extremely rarely direct users from moderate content to extremist content.

Alt-right content virtually never appears in video recommendations even for users watching Alt-lite or Intellectual Dark Web content. This means the algorithm does not create an automatic pathway from moderate to extremist content.

Researchers found that Alt-lite content is reachable from Intellectual Dark Web channels through recommendations, whereas Alt-right content is reachable only through channel recommendations, not through video recommendations (S003).

Nuance: The Distinction Between Video Recommendations and Channel Recommendations

The study revealed a critical distinction between two types of recommendations:

| Recommendation Type | User Visibility | Cross-Category Connection | Required Action |
|---|---|---|---|
| Video recommendations | Sidebar, end of video (high) | Weak | Passive viewing |
| Channel recommendations | Channel page (low) | Strong between I.D.W., Alt-lite, Alt-right | Active navigation to channel page |

Channel recommendations require deliberate user action—navigating to the channel page and viewing the recommendations section. This is not a passive "pipeline," but rather the result of actively seeking similar content.

Temporal Dynamics: Algorithm Changes Following Public Pressure

The study was conducted in 2019, after YouTube made changes to its recommendation algorithm in response to criticism. In January 2019, YouTube announced measures to reduce recommendations of "borderline content"—material that doesn't violate platform rules but comes close.

This means the study captures the algorithm's state after adjustments, not during the 2015–2018 period when the "pipeline" allegedly operated most actively. Critics of the study point to this as a limitation: perhaps the pipeline existed earlier but was eliminated by the time of the study.

The link between the attention economy and platform design suggests that algorithm changes often follow public pressure rather than precede it. This creates a methodological problem: studies conducted after adjustments cannot fully confirm or refute hypotheses about the algorithm's past behavior.

Mechanism or Myth: Why Correlation Between Viewing and Radicalization Doesn't Prove Causation

Even if research showed that users watching extremist content on YouTube actually become radicalized, this wouldn't prove that the recommendation algorithm causes radicalization. For more details, see the Cognitive Biases section.

The fundamental problem: correlation does not equal causation. Observing a relationship between two phenomena tells us nothing about the direction of influence or the presence of third factors.

The Reverse Causality Problem: Radicalization Precedes Viewing, Not the Other Way Around

People who already hold radical views or have a predisposition toward them actively seek out corresponding content on YouTube. The algorithm doesn't push them toward extremism—it merely efficiently satisfies existing demand.

Radicalization research shows that the process typically begins with personal experience, social environment, or political events, not with media consumption (S002, S005). Online content can reinforce and legitimize already existing views, but rarely creates them from scratch.

- User experiences a personal crisis (economic, social, identity-related)

- A predisposition forms toward certain explanations and communities

- Active search for content matching this predisposition

- Algorithm recommends relevant material (because the user is seeking it)

- Observer sees correlation and mistakenly attributes it to the algorithm

Confounders: Third Variables Explaining Both Viewing and Radicalization

Multiple factors simultaneously influence content choice and radicalization: authoritarianism, need for cognitive closure, social isolation, economic instability, political polarization in society.

| Confounder | Impact on Content Viewing | Impact on Radicalization |
|---|---|---|
| Economic crisis | Seeking explanations in conspiracy theories | Status threat, search for an enemy |
| Social isolation | Online communities as substitute | Vulnerability to group influence |
| Authoritarian personality traits | Preference for clear hierarchy and enemies | Susceptibility to authoritarian narratives |

Without controlling for these confounders, it's impossible to isolate the algorithm's effect. A person experiencing an economic crisis may simultaneously seek explanations in conspiracy theories and watch corresponding content. The algorithm didn't create their vulnerability—it merely provided content that resonates with already existing anxieties.
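A toy simulation can show how a confounder alone produces the correlation. In this sketch every probability is an assumption chosen for illustration: a hidden `predisposed` trait raises both the chance of watching provocative content and the chance of radicalization, while viewing itself has no effect on radicalization at all.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible

N = 200_000  # hypothetical number of simulated users
rows = []
for _ in range(N):
    predisposed = random.random() < 0.10
    # Predisposed users are far more likely to watch provocative content.
    watches = random.random() < (0.60 if predisposed else 0.05)
    # Radicalization depends ONLY on predisposition, never on viewing.
    radicalized = random.random() < (0.20 if predisposed else 0.001)
    rows.append((predisposed, watches, radicalized))


def rate(selected):
    """Share of radicalized users among the selected rows."""
    selected = list(selected)
    return sum(r for _, _, r in selected) / len(selected)


# Naive comparison: viewers versus non-viewers, ignoring the confounder.
naive_viewers = rate(row for row in rows if row[1])
naive_others = rate(row for row in rows if not row[1])

# Stratified comparison: the same contrast among predisposed users only.
strat_viewers = rate(row for row in rows if row[0] and row[1])
strat_others = rate(row for row in rows if row[0] and not row[1])

print(f"naive:      viewers {naive_viewers:.4f} vs others {naive_others:.4f}")
print(f"stratified: viewers {strat_viewers:.4f} vs others {strat_others:.4f}")
```

In the naive comparison viewers look far more likely to radicalize, even though the simulation gives viewing zero causal effect. Once the comparison is restricted to users with the same predisposition, the gap largely disappears.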

The Selection Problem: Who Becomes the Subject of Radicalization Research

Most qualitative research on YouTube's role in radicalization is based on interviews with people who have already gone through radicalization and deradicalization. This creates systematic survivorship bias.

- Survivorship Bias in the Context of Radicalization. We hear stories from those who became radicalized, but don't hear stories from millions of users who watched similar content and didn't radicalize. Perhaps YouTube recommended extremist content to millions of people, but only those who already had a predisposition became radicalized.

- Base Rate. Without knowing how many people received recommendations but didn't radicalize, it's impossible to assess the algorithm's real effect. If 0.01% of those who received a recommendation became radicalized, that's completely different from 50% becoming radicalized.
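The base-rate contrast can be worked through with a few lines of arithmetic. The audience size of one million is a hypothetical figure; the two rates are the ones contrasted above.

```python
def expected_cases(recipients: int, rate: float) -> int:
    """Expected number of radicalized users for a given conversion rate."""
    return round(recipients * rate)


# Hypothetical audience size (assumption); rates are the two from the text.
recipients = 1_000_000
low = expected_cases(recipients, 0.0001)  # 0.01%
high = expected_cases(recipients, 0.50)   # 50%

print(f"At 0.01%: {low:,} radicalized, {recipients - low:,} not")
print(f"At 50%:   {high:,} radicalized, {recipients - high:,} not")
```

Under these assumptions, even the 0.01% scenario yields 100 radicalized users, which is more than enough to supply a steady stream of consistent testimonies. The number of available anecdotes therefore cannot tell the two scenarios apart; only the denominator can.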

Algorithmic Power and Moral Responsibility: The Philosophical Aspect of Causation

Philosophical research on algorithmic power raises the question of whether algorithms can even be attributed a causal role in social processes (S007). An algorithm is a tool implementing the goals of its creators and reflecting user preferences.

Attributing to the algorithm an autonomous ability to "radicalize" people anthropomorphizes technology and redistributes responsibility. The real actors: developers who chose the optimization function; executives who prioritized profit over safety; users making the choice to watch certain content. The discourse on "algorithmic radicalization" may serve as a convenient way to avoid more complex questions about the social causes of extremism and about the attention economy that incentivizes polarization regardless of the algorithm.

Conflicts in the Evidence Base: Where Research Diverges and What It Means

Scientific literature on YouTube's role in radicalization demonstrates significant divergence in conclusions. These divergences are not accidental—they reflect fundamental differences in methodology, timeframes, and geographical scope. For more details, see the Sources and Evidence section.

Qualitative vs. Quantitative: Why Methodology Determines Conclusions

Qualitative studies based on interviews and case analysis support the "radicalization pipeline" hypothesis (S002). Quantitative studies analyzing large datasets of recommendations find no substantial evidence of a systematic effect (S001, S003).

This divergence reveals a trap in data interpretation: qualitative methods identify the existence of a phenomenon (yes, some people become radicalized through YouTube), but don't assess its prevalence. Quantitative methods assess frequency but may miss the nuances of individual trajectories.

Key question: Is algorithmic radicalization a systematic effect impacting a significant proportion of users, or a rare exception that receives disproportionate attention due to the dramatic nature of individual cases?

The connection to attention economics is direct: rare but extreme events receive more media coverage, creating an illusion of their frequency.

Temporal Factor: Algorithm Changes and Problem Evolution

Studies from different periods reach different conclusions because the research subject itself has changed. Work from 2015–2018 documents a stronger "pipeline" effect than studies from 2019–2020, after YouTube implemented measures to reduce recommendations of borderline content (S007).

This creates a methodological problem: we cannot simply average results from studies of different periods, since they describe different versions of the algorithm. It's critically important to distinguish between claims about how the algorithm worked in the past and claims about how it works now.

| Period | Nature of Findings | Reason for Differences |
|---|---|---|
| 2015–2018 | Strong "pipeline" effect | Algorithm optimized for engagement, without extremism filters |
| 2019–2020 | Weak or absent effect | Measures implemented to reduce borderline content recommendations |
| 2021–2024 | Requires new research | Further changes to recommendation system |

Geographic and Linguistic Limitations: The Generalization Problem

The overwhelming majority of studies focus on English-language content and users from the US and Western Europe. Recommendation patterns may differ substantially in other linguistic and cultural contexts.

Studies of radicalization in Central Asia show that online propaganda plays a role, but its effectiveness depends heavily on local political and social context (S005). Generalizing conclusions obtained from English-language material to YouTube's global audience is methodologically incorrect.

- Anglophone Context. High content competition, developed moderation system, diversity of alternative platforms. The algorithm may be less radicalizing simply because competition for attention is higher.

- Non-Anglophone Contexts. Less moderation, fewer alternative platforms, more homogeneous audiences. The "radicalization pipeline" may work differently or more effectively.

- Conclusion. Global claims about YouTube require research covering at least 5–10 languages and cultural contexts. The current evidence base does not provide this.

The connection to epistemology is obvious: we cannot make universal conclusions from local data without explicitly stating the boundaries of applicability.

Cognitive Anatomy of the Myth: Which Mental Traps Make the "Pipeline" Narrative So Convincing

Why has the "radicalization pipeline" hypothesis gained such widespread acceptance despite the lack of compelling quantitative evidence? The answer lies in cognitive biases that make this narrative psychologically irresistible.

The first bias is the illusion of causality. When someone observes that a person watched political videos and then expressed radical views, the brain automatically connects the events with a causal link. But this ignores third variables: prior beliefs, social environment, life circumstances.

YouTube's algorithm becomes a scapegoat for phenomena that existed long before its emergence. This is cognitively economical: instead of analyzing complex social reality, we point to a single button.

Second is confirmation bias. Researchers and journalists search for examples of radicalization through YouTube and find them. But they don't count the millions of people who watch the same videos and remain moderate. (S003) demonstrated exactly this: recommendations don't create a radical path, they amplify it for those already predisposed.

- A person with radical views seeks content matching their beliefs

- The algorithm recommends similar content (that's its job)

- An observer sees a chain of videos and concludes: the algorithm radicalized the person

- In reality: the algorithm reflected an already existing trajectory

Third is narrative appeal. The "pipeline" story has a clear structure: villain (algorithm), victim (user), salvation (regulation). This is an archetype that works in media, politics, and science. Conspiracy theories often triumph over boring statistical findings precisely because they tell a better story.

Fourth is moral panic. Every new communication medium triggers fear: print allegedly corrupted youth, television allegedly made people passive, the internet allegedly destroyed society. YouTube fell into this cycle. (S002) documents how the media narrative about the "rabbit hole" formed before data emerged.

- Halo Effect. If a source (researcher, journalist, organization) is authoritative in one area, their claims in another seem more convincing. When computer scientists talk about algorithms, it sounds like fact, though it may be speculation.

- Availability Heuristic. Dramatic stories about radicalization are easier to recall than statistics about 99% of users who weren't radicalized. The brain uses availability as a proxy for frequency.

Fifth is institutional interest. Researchers find it easier to secure funding for a project about "algorithmic dangers" than for one about "algorithms work as expected." Journalists find it easier to write an article about a threat than about the absence of a threat. Politicians find it easier to regulate a visible enemy than to solve social problems.

This isn't a conspiracy—these are structural incentives. The attention economy rewards sensationalism. The scientific system rewards novelty. Politics rewards simple solutions.

The result: the "pipeline" narrative becomes self-sustaining. Each new study, even if it refutes the hypothesis, gets interpreted as confirmation. (S007) used counterfactual bots and showed that YouTube recommendations don't cause radicalization. But the conclusion often gets reframed as "YouTube can radicalize if conditions are favorable"—which is no longer falsifiable.

Cognitive immunology here requires one thing: distinguishing between "the algorithm can amplify existing trends" and "the algorithm creates radicalization." The former is probable. The latter is unproven. And this distinction matters for policy, research, and your own thinking.