From Reaction to Prevention: Debunking and Prebunking Against Misinformation

A comprehensive approach to combating misinformation: reactive fact-checking after false information spreads, combined with proactive "inoculation" against manipulative techniques before audiences encounter them

Overview

Debunking refutes falsehoods after the fact, once they have already spread. Prebunking works proactively: it trains people to recognize manipulative techniques before they encounter specific disinformation, building cognitive immunity. The professional community of journalists and media literacy specialists increasingly recognizes the need to shift from reactive fact-checking to systematic preventive "inoculation" against manipulation.


Laplace Protocol: Fighting disinformation effectively requires combining immediate refutation of specific falsehoods (debunking) with long-term development of critical thinking through training in recognizing manipulative patterns (prebunking). A reactive approach alone is insufficient; proactive "inoculation" against information threats is also necessary.


Debunking and Prebunking: Two Approaches to Combating Misinformation

In today's information environment, professionals face the need not only to refute false claims but also to build audience resilience against manipulation. Debunking and prebunking are two complementary methods for addressing misinformation, each with its own logic and application domain.

Debunking: Reactive Fact-Checking

Debunking is a reactive approach: a falsehood is detected, checked, and then refuted with verifiable data. The method works with specific claims, providing precise facts and evidence.

Debunking has limited effectiveness: refutations reach fewer people than the original misinformation, and cognitive biases such as confirmation bias lead people to retain their initial false beliefs even after receiving correct information.

In some cases, a "backfire effect" occurs—refutation attempts paradoxically strengthen false beliefs instead of weakening them.

Prebunking: Proactive Inoculation

Prebunking is a preventive approach: it prepares people to recognize manipulative techniques before they encounter them. It works like a vaccine, introducing audiences to weakened versions of manipulative tactics in advance and building cognitive immunity.

| Parameter | Debunking | Prebunking |
|---|---|---|
| Timing | After misinformation spreads | Before encountering manipulation |
| Focus | Correcting specific facts | Recognizing manipulation patterns |
| Outcome | Short-term refutation | Long-term resilience |

Prebunking teaches recognition of logical fallacies, propaganda narratives, and manipulative techniques through developing critical thinking and media literacy.

From Reaction to Prevention

The professional community increasingly recognizes the need to shift from debunking to prebunking, understanding the limitations of purely reactive strategies. This doesn't mean abandoning fact-checking—debunking remains an important tool for rapid response.

- When to use debunking: urgent refutation of actively spreading false information requiring immediate intervention.

- When to use prebunking: long-term strengthening of audience resilience to manipulation through education and critical thinking development.

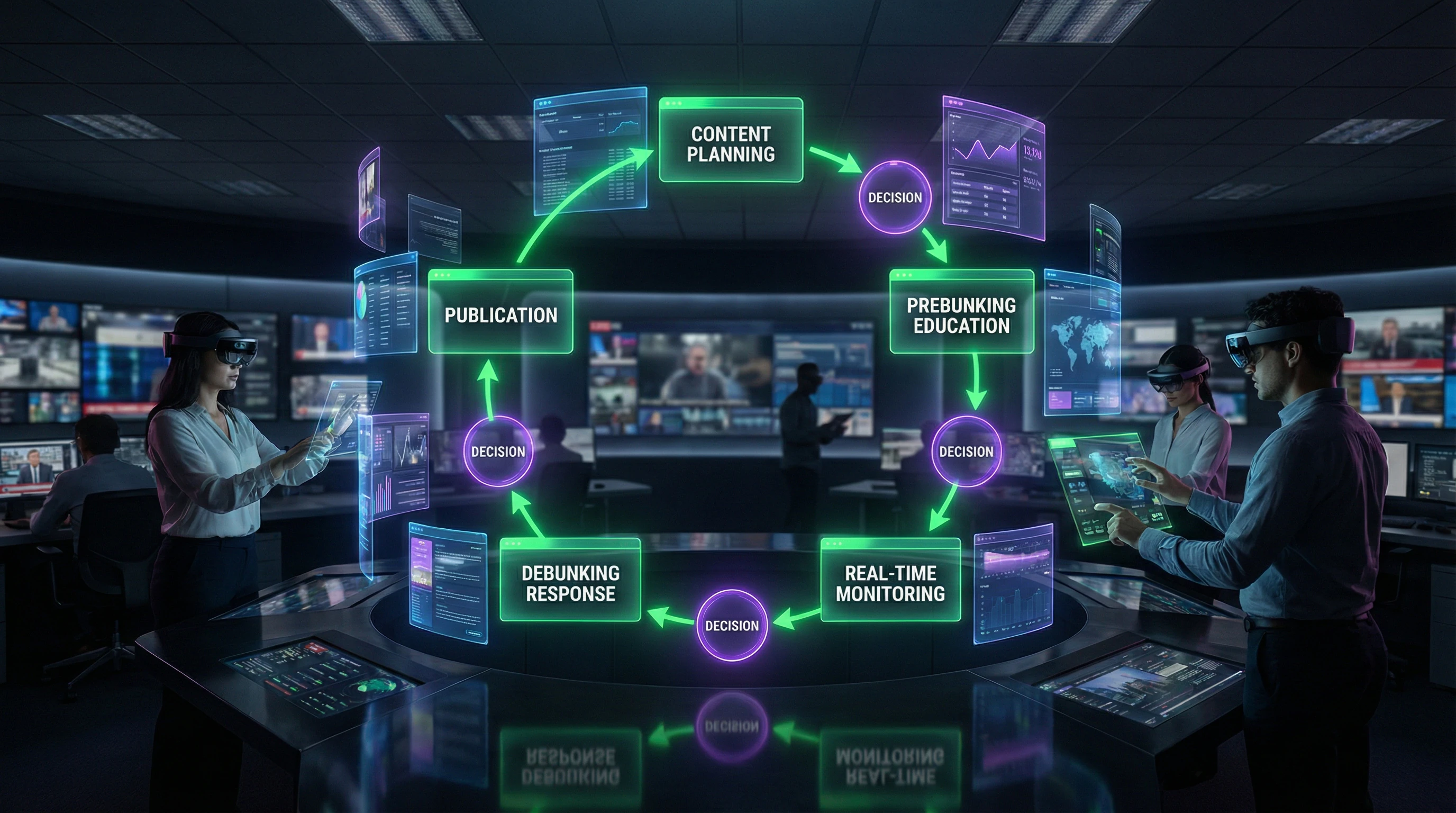

The optimal strategy combines both approaches: debunking addresses current problems, prebunking prevents future ones.

Scientific Consensus and Professional Practice Shift

The professional community has formed a solid consensus: disinformation must be met on two fronts simultaneously. Practice is shifting from pure reaction to combined models, in which fact-checking works hand in hand with prevention.

Transition from Debunking to Prebunking in Practice

Journalists and media literacy specialists are actively implementing systematic prebunking approaches through webinars, courses, and methodological materials. Organizations like StopFake emphasize that today's information environment requires going beyond simple fact-checking.

Reactive debunking is insufficient. Corrections reach limited audiences, while disinformation spreads virally, and people resist corrective information due to cognitive barriers.

Development of "universal algorithms" for deconstructing propaganda narratives demonstrates methodological standardization in prebunking. These systematic approaches are taught to journalists, librarians, and general audiences.

Complementarity of Approaches

Debunking and prebunking are partners, not rivals. The first is tactical (refuting specific false claims); the second is strategic (building critical thinking and long-term resilience).

- Debunking: rapid response to specific false claims with factual corrections

- Prebunking: explaining manipulative techniques and building information literacy

- Integration: professionals learn to fact-check AND explain manipulation mechanisms to audiences

Practical training programs demonstrate this two-level defense. The international reach of these initiatives, which span Russian-speaking, English-speaking, and Belarusian contexts, suggests that both the problem and the solutions are widely shared.

Common Myths and Misconceptions About Disinformation Countermeasures

Despite growing understanding of the importance of comprehensive approaches, persistent misconceptions about debunking and prebunking effectiveness remain among professionals and the public. Dispelling these myths is critical for proper application of both methods.

The Myth of Debunking Sufficiency

A common misconception: simply correcting false information is enough to combat disinformation. Reality differs.

Debunking effectiveness is limited by cognitive biases—people retain initial false beliefs even after receiving correct information. Corrections have significantly smaller reach than the viral spread of original disinformation.

In some cases, a "backfire effect" occurs: correction attempts paradoxically strengthen false beliefs. The professional community's broad shift toward prebunking reflects collective recognition of these limitations.

Prebunking Is Not Censorship

Critics sometimes equate prebunking with censorship or with restricting access to information. The method is fundamentally educational, not prohibitive. Prebunking:

- Teaches critical thinking and explains manipulative techniques

- Expands people's ability to independently evaluate information

- Does not restrict access to any sources

- Works through building cognitive immunity, not blocking content

The approach is based on informed choice: instead of deciding for audiences, prebunking provides tools for independent analysis. This fundamentally differs from censorship, which restricts access without developing analytical skills.

Application Beyond Political Propaganda

While many examples involve political disinformation, the methods' application is significantly broader: business disinformation, scientific misinformation, social media manipulation.

Universal algorithms for analyzing manipulative narratives apply to any context of systematic information distortion. Media literacy becomes a tool for protection against various forms of disinformation.

- Libraries: implement prebunking to protect against disinformation in the information environment

- Educational institutions: teach critical analysis techniques as part of basic competency

- Business organizations: apply methods to protect against manipulation and reputational risks

- Specialists across fields: use approaches to combat disinformation in their professional spheres

Practical Techniques for Media Professionals and Newsrooms

Modern journalists and editors need systematic protocols that go beyond simple fact-checking. Professional training programs, such as those offered by StopFake and international media literacy initiatives, have developed concrete strategies that not only respond to disinformation but also prevent its spread.

Prebunking Strategies for Journalists

A proactive approach requires journalists to explain manipulative techniques to their audience before they encounter specific falsehoods. Newsrooms are implementing regular features that dissect propaganda mechanisms: emotional triggers, out-of-context quotes, visual material manipulation, and the use of pseudo-experts.

An effective strategy includes preemptive coverage of topics that may become targets of manipulation. Before elections, journalists explain typical electoral disinformation schemes; before crises, they explain how panic and rumors spread.

- Show a weakened version of the manipulative narrative

- Immediately deconstruct its structure and logical fallacies

- Build audience resilience against full-scale attacks

- Regularly update examples in accordance with the current information environment

Debunking Best Practices

Reactive fact-checking requires adherence to strict protocols to avoid the backfire effect, where debunking reinforces false beliefs. Professional fact-checkers begin with accurate information rather than repeating the falsehood, to avoid cementing the false claim in readers' memory.

Debunking must be specific, contain an alternative explanation, and be supported by visual evidence—which is remembered better than text.

Editorial strategies include rapid response to viral falsehoods, use of authoritative sources and evidence, and transparency in verification methodology. Documenting the verification process shows audiences the tools and steps, simultaneously teaching critical thinking and increasing trust in the debunking.

Tools for Librarians and Educational Institutions

Libraries and educational organizations are becoming key centers for developing information literacy. The American Library Association and international programs have developed specialized protocols that enable librarians to serve as guides for critical thinking in their communities.

These institutions possess a unique advantage: audience trust and systematic access to diverse age and social groups.

Information Literacy Programs

Library media literacy programs focus on universal source evaluation skills rather than debunking specific fakes. This includes workshops on verifying digital sources, seminars on cognitive biases, and hands-on sessions using verification tools.

Librarians adapt materials for different audiences: from schoolchildren to seniors, accounting for each group's specific vulnerabilities to certain types of manipulation.

- Game-based simulations of misinformation spread

- Group exercises in news analysis

- Creating original fact-checks under expert guidance

This approach builds not only knowledge but practical skills that participants apply in everyday life.

Systematic Countermeasure Protocols

Educational institutions are implementing structured protocols that integrate media literacy into curricula across various disciplines. This isn't a separate subject, but a cross-cutting competency developed through history, literature, natural sciences, and social studies.

Educators use current examples of disinformation as teaching cases, demonstrating the application of critical thinking in real situations.

The systematic approach includes regular assessment of program effectiveness, updating materials in line with evolving manipulation techniques, and sharing best practices among institutions.

Libraries and schools create mutual support networks, sharing successful methodologies and alerting each other to new waves of disinformation.

Building Personal Cognitive Immunity

Individual resistance to manipulation requires developing metacognitive skills, that is, the ability to recognize and control one's own thought processes. Cognitive immunity does not mean distrusting all information; it means calibrated skepticism that makes it possible to distinguish reliable sources from questionable ones.

This is a skill requiring constant practice and reflection on one's own information habits.

Recognizing Manipulative Techniques

Key manipulative tactics include appeals to emotion instead of facts, false dichotomies, appeals to authority without verified credentials, straw man arguments, and selective quoting.

Understanding these techniques makes it possible to recognize them in real time, activating critical thinking before the manipulation takes effect.

- Create a personal "catalog" of manipulations, updated with each encounter of a new technique

- Develop reverse image search skills and metadata verification

- Pay attention to visual manipulations: photos taken out of context, edited videos, charts with distorted axes

Critical Source Evaluation Habits

Systematic information verification requires forming stable habits: check publication date, find the original source, compare coverage across multiple independent sources, evaluate author credentials and transparency of publication funding.

The ability to say "I don't know, need to verify" is a sign of mature critical thinking, not weakness. Tolerance for uncertainty is the foundation of resilient cognitive immunity.

An effective approach is using a checklist of questions before reposting or accepting information as credible. These habits should become automatic, requiring minimal cognitive effort.

Regular verification practice builds intuition that allows quickly identifying suspicious content.
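The verification habits listed above can be written down as a short pre-share checklist. The sketch below is only an illustration: the `SourceCheck` fields, the `open_items` and `verdict` helpers, and the default threshold of two independent sources are all assumptions made for the example, not part of any established protocol.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SourceCheck:
    """Answers to the pre-share questions (all field names are hypothetical)."""
    publication_date_checked: bool
    original_source_found: bool
    independent_sources_compared: int  # number of independent outlets compared
    author_credentials_evaluated: bool
    funding_transparency_evaluated: bool


def open_items(check: SourceCheck, min_independent_sources: int = 2) -> List[str]:
    """Return the habits not yet completed; an empty list means nothing is open."""
    items = []
    if not check.publication_date_checked:
        items.append("check the publication date")
    if not check.original_source_found:
        items.append("find the original source")
    # The threshold is an assumption; the text only says "multiple" sources.
    if check.independent_sources_compared < min_independent_sources:
        items.append("compare coverage across independent sources")
    if not check.author_credentials_evaluated:
        items.append("evaluate the author's credentials")
    if not check.funding_transparency_evaluated:
        items.append("evaluate transparency of the publication's funding")
    return items


def verdict(check: SourceCheck) -> str:
    """Fall back to 'need to verify' while any checklist item is still open."""
    if open_items(check):
        return "I don't know, need to verify"
    return "checklist complete"


if __name__ == "__main__":
    viral_post = SourceCheck(True, False, 1, True, True)
    for item in open_items(viral_post):
        print("still open:", item)
    print(verdict(viral_post))
```

A completed checklist does not prove a claim is true; it only confirms that the basic verification steps were carried out before sharing.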

Effectiveness Metrics and Self-Assessment

Evaluating one's own progress requires concrete indicators: frequency of information verification before reposting, number of manipulations identified, ability to explain disinformation mechanisms to others.

| Indicator | What to Track | Why It Matters |
|---|---|---|
| Verification Time | How many minutes spent checking information | With practice the process accelerates, becoming part of natural content consumption |
| Fake News Log | Encountered manipulations and methods of recognizing them | Allows tracking skill development and identifying weak spots |
| External Feedback | Participation in fact-checker communities and experience sharing | Provides motivation and protection against false confidence |

Regular self-assessment helps avoid overconfidence — a cognitive bias where people overestimate their ability to recognize manipulations.
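As one possible way to track these indicators, the sketch below keeps a personal log and summarizes it. The `LogEntry` structure and the `summarize` helper are hypothetical names chosen for this example, and the three summary figures (verification rate, average minutes per check, techniques recognized) are an assumed reading of the indicators above rather than a standard metric set.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class LogEntry:
    """One item considered for sharing (structure is an assumption)."""
    verified_before_sharing: bool
    minutes_spent: float = 0.0
    technique: Optional[str] = None  # manipulation technique recognized, if any


def summarize(entries: List[LogEntry]) -> Dict[str, object]:
    """Summarize verification frequency, time per check, and techniques seen."""
    if not entries:
        raise ValueError("the log is empty")
    verified = [e for e in entries if e.verified_before_sharing]
    minutes = [e.minutes_spent for e in verified]
    return {
        # Share of items that were checked before reposting.
        "verification_rate": len(verified) / len(entries),
        # Average time per check; expected to fall with practice.
        "mean_minutes": mean(minutes) if minutes else 0.0,
        # Which manipulation techniques were recognized, and how often.
        "techniques": Counter(e.technique for e in entries if e.technique),
    }


if __name__ == "__main__":
    log = [
        LogEntry(True, 4.0, "false dichotomy"),
        LogEntry(False),
        LogEntry(True, 2.0, "selective quoting"),
        LogEntry(True, 3.0, "false dichotomy"),
    ]
    print(summarize(log))
```

Comparing these summaries across weeks or months, rather than reading a single snapshot, is what reveals whether verification is becoming faster and more habitual.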


Frequently Asked Questions

Debunking is reactive fact-checking after misinformation spreads, while prebunking is proactive preparation to recognize manipulation before encountering it. Debunking refutes specific false claims; prebunking builds cognitive immunity. The two approaches complement each other in combating disinformation.

Corrections rarely reach the same audience as viral misinformation, and people tend to maintain initial beliefs due to cognitive biases. Sometimes a backfire effect occurs—the correction reinforces the false belief. That's why professionals are shifting toward preventive prebunking strategies.

Prebunking explains manipulative techniques in advance, creating an 'inoculation' against disinformation. People learn to recognize emotional triggers, concept substitution, false dilemmas, and other tactics. This builds critical thinking skills before encountering specific misinformation.

Key techniques include emotional triggers (fear, anger), concept substitution, false dilemmas, appeals to authority, and selective quoting. It's also important to recognize deepfakes, out-of-context images, and fabricated sources. Systematic training in these tactics increases media literacy.

Journalists must verify facts through independent sources, use reverse image search, and verify original sources. It's important to provide context and avoid repeating misinformation without refutation. Professional training from StopFake and similar organizations teaches these skills.

Primary tools include reverse image search (Google Images, TinEye), video verification, metadata analysis, and verification through fact-checking organizations. Tools for detecting deepfakes and analyzing information sources are also used. Librarians and journalists employ systematic verification protocols.

Prebunking doesn't block information but teaches critical thinking and manipulation recognition. It's an educational approach that gives people tools for independent information assessment. Censorship restricts access; prebunking expands analytical capabilities.

Yes, prebunking is effective against medical misinformation, financial scams, scientific hoaxes, and commercial manipulation. Manipulation recognition techniques are universal and applicable in any field. Libraries and educational institutions implement media literacy programs for broad audiences.

Librarians can conduct fact-checking workshops, create educational materials, and teach users critical source evaluation. It's important to use systematic protocols and adapt content for different age groups. International resources offer ready-made methodologies and training programs.

Cognitive immunity is mental resilience to manipulation and false information, developed through education and practice. Just as biological vaccination protects against disease, prebunking creates protection against disinformation. It's a long-term critical thinking skill.

Effectiveness is assessed through the ability to recognize manipulation, changes in behavior when evaluating information, and reduced susceptibility to misinformation. Research shows improvement in critical thinking after training. However, systematic metrics are still being developed by the professional community.

Yes, professional organizations are developing systematic approaches to deconstructing propaganda narratives. These algorithms include source analysis, identification of emotional triggers, and verification of logical connections. Methodologies are being standardized and taught at international training programs.

The modern information environment requires preventive strategies, as reactive debunking cannot keep pace with the flow of disinformation. Prebunking builds long-term resilience and reduces the burden on fact-checkers. The professional community recognizes the necessity of this shift.

Key habits: verifying author and publication date, seeking confirmation from independent sources, analyzing emotional tone of text. It's important to ask questions like "who," "why," and "what evidence." Regular practice turns these actions into automatic skills.

Schools and universities integrate media literacy into curricula, teaching students to recognize manipulation and verify facts. Interactive formats are used: case analysis, game simulations, and practical exercises. This develops skills necessary for the digital age.

Available resources include media literacy webinars, crash courses, white papers from library associations, and international training programs. Materials exist in English and other languages. Many resources are free and adapted for different professional groups.