What is Conspiracy Thinking: Definition Through Cognitive Mechanisms, Not Belief Content

Conspiracy thinking is not a set of specific beliefs (flat Earth, reptilians, world government), but a specific way of processing information in which a person systematically applies certain cognitive strategies to interpret reality. More details in the Reality Validation section.

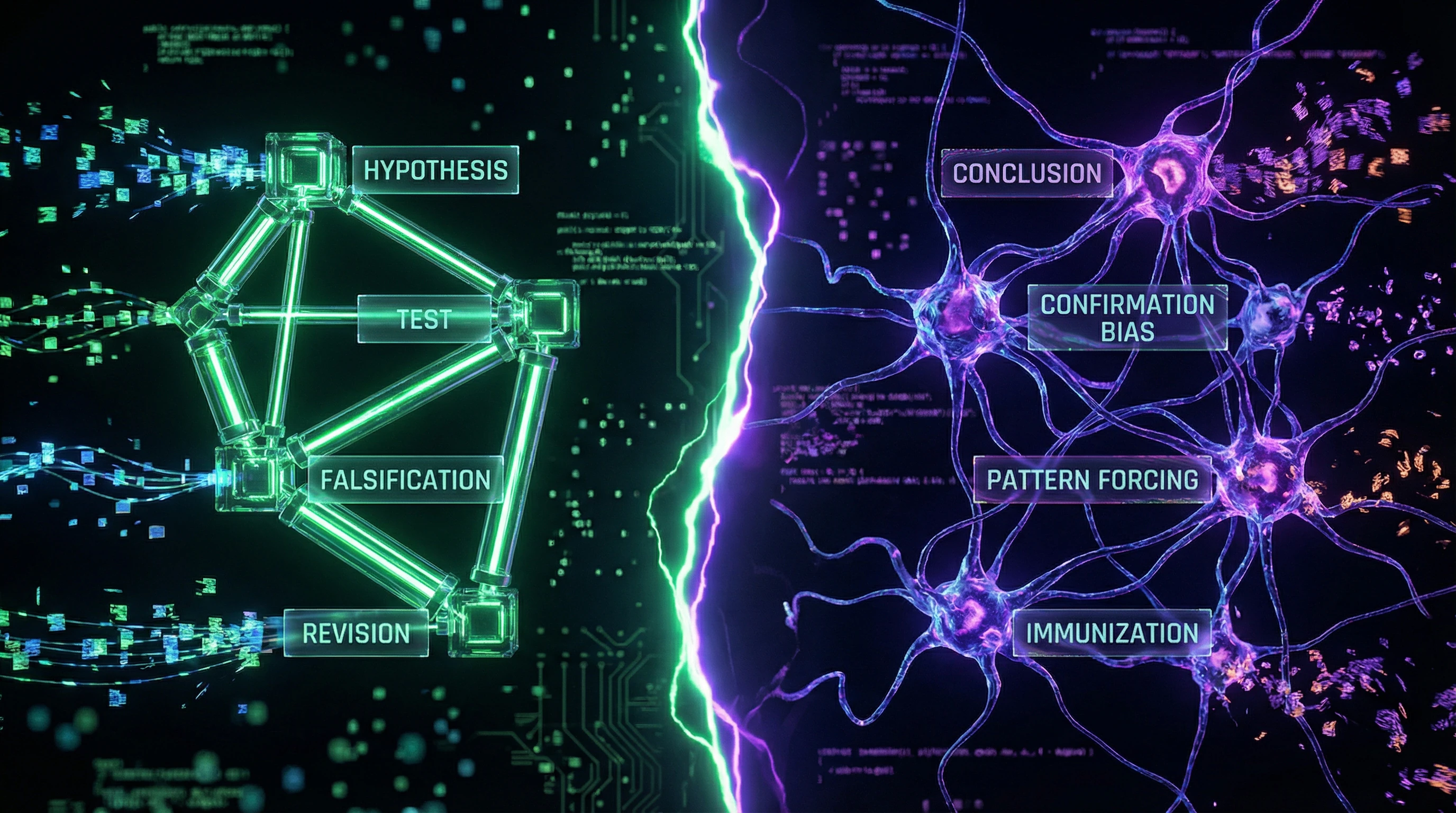

Key difference from scientific skepticism: conspiracy thinking starts with a conclusion and seeks confirmation, whereas critical thinking starts with data and forms conclusions (S006).

🧩 Structural Features of the Conspiracy Pattern

- Hyperagency: the tendency to see intentional actions and hidden plans where randomness, systemic effects, or natural processes are at work. Coincidences are interpreted as evidence of coordination, absence of evidence as evidence of concealment (S006).

- Hypothesis Immunization: any counterarguments are automatically incorporated into the conspiracy theory as part of the conspiracy itself. If experts refute the theory, they're bought or deceived. If there's no direct evidence, the conspiracy is so powerful it hides all traces. The belief becomes unfalsifiable.

- Pattern Forcing: forced detection of patterns in noise. The brain is evolutionarily tuned to seek regularities, but conspiracy thinking lowers the sensitivity threshold of the pattern detector so much that it triggers on random coincidences.
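The threshold idea behind Pattern Forcing can be illustrated with a toy signal-detection sketch. Everything in it is an assumption made for illustration: the function name `false_alarm_rate`, the Gaussian noise model, and the threshold values are hypothetical and do not come from the cited sources. It only shows that a detector fed pure noise fires more often as its threshold drops.

```python
import random

def false_alarm_rate(threshold, trials=100_000, seed=0):
    """Share of pure-noise samples that a detector flags as a 'pattern'.

    Assumption: every observation is standard Gaussian noise with no real
    signal in it, so each detection is by construction a false alarm.
    """
    rng = random.Random(seed)
    hits = sum(1 for _ in range(trials) if rng.gauss(0.0, 1.0) > threshold)
    return hits / trials

if __name__ == "__main__":
    # Lowering the threshold models a more trigger-happy pattern detector.
    for threshold in (3.0, 2.0, 1.0, 0.0):
        rate = false_alarm_rate(threshold)
        print(f"threshold={threshold:.1f}  false-alarm rate={rate:.4f}")
```

In this sketch the input never changes; only the detector's sensitivity does, which is the point the Pattern Forcing item makes about coincidences.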

⚠️ Cognitive Process vs. Belief Content

It's critically important to distinguish between thinking style and specific beliefs. A person may not believe in any popular conspiracy theory, yet still use a conspiratorial thinking style in other domains: in interpreting colleagues' actions, a partner's motives, or political events.

Conspiracy thinking is an interpretive tool that can be applied to any domain of reality, regardless of belief content.

People with high levels of education and intelligence may be more vulnerable to conspiracy thinking in areas where they lack expertise, because their cognitive abilities allow them to construct more complex and internally consistent narratives (S006). Intelligence without critical thinking is a powerful processor running on faulty algorithms.

🔎 Boundaries: Healthy Skepticism vs. Conspiracy Thinking

Healthy skepticism and conspiracy thinking use superficially similar tools—doubt in official versions, search for alternative explanations, criticism of authorities. The key difference lies in the methodology of hypothesis testing.

| Critical Thinking | Conspiracy Thinking |
|---|---|
| Formulates criteria that could disprove the hypothesis | Formulates the hypothesis so that it cannot be refuted |
| Actively seeks data contradicting the belief | Interprets any data as confirmation |
| Ready to change position when the evidence warrants it | Incorporates any counterargument into the theory as part of the conspiracy |

Practical distinction criterion: ask yourself—"What data or arguments could make me change this belief?" If the answer is "none, because any counterarguments are part of the deception," you're in the zone of conspiracy thinking (S006). If you can clearly formulate falsification conditions—you're applying scientific skepticism.

Steelman Argumentation: Five Strongest Foundations for Conspiratorial Thinking That Cannot Be Ignored

Intellectual honesty requires examining the strongest versions of arguments favoring conspiratorial thinking, not caricatured oversimplifications. The steelman approach involves strengthening the opponent's position to its most convincing form before critical analysis. Learn more in the Media Literacy section.

🧩 First Argument: Historical Precedents of Real Conspiracies Validate the Basic Model

History provides documented evidence of real conspiracies, from Operation Northwoods (a declassified 1962 proposal by the U.S. Joint Chiefs of Staff to stage false-flag attacks, which was never carried out) to the Watergate scandal and the MKULTRA program. These cases show that influential groups are indeed capable of coordinating covert actions, manipulating information, and maintaining secrecy for years or even decades.

Many conspiracy theories that were initially ridiculed later received partial or complete confirmation. Intelligence agency surveillance of citizens was considered conspiratorial thinking until Edward Snowden's revelations. Tobacco companies' manipulation of research on smoking harms was a "conspiracy theory" until internal documents were published.

This pattern creates a legitimate basis for distrusting official narratives—not as paranoia, but as an empirically grounded hypothesis.

🧩 Second Argument: Information Asymmetry Makes Conspiratorial Thinking a Rational Heuristic

Under conditions of radical information asymmetry, when institutions possess incomparably greater resources for controlling the narrative, conspiratorial thinking functions as a compensatory heuristic—a simplified decision-making rule under uncertainty.

If you lack access to primary data, expertise, and insider information, assuming hidden motives may be statistically more accurate than naively trusting official statements. Research shows that heuristics are not thinking errors, but adaptive strategies for rapid decision-making with limited resources (S006).

- Conspiratorial thinking as hyperactivation of the "don't trust those with motives to deceive" heuristic

- In certain contexts may be more protective than the alternative

- Rational adaptation to information asymmetry, not cognitive failure

🧩 Third Argument: Cognitive Biases Work Both Ways

Critics of conspiratorial thinking point to cognitive biases (confirmation bias, pattern recognition errors), but these same biases operate in people who reject conspiracy theories. Normalcy bias causes people to underestimate the probability of extraordinary events and hidden threats.

Authority bias causes uncritical acceptance of expert and institutional statements. If conspiracy theorists overestimate the probability of conspiracies due to a hyperactive pattern detector, skeptics may underestimate this probability due to a hypoactive detector.

| Position | Dominant Bias | Result |
|---|---|---|
| Conspiratorial Thinking | Hyperactive pattern detector | Overestimation of conspiracy probability |
| Skeptical Thinking | Hypoactive pattern detector | Underestimation of conspiracy probability |
| Optimal Assessment | Bayesian calibration | Adequate probability |
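The "Bayesian calibration" row can be made concrete with a minimal sketch of Bayes' rule for a single binary hypothesis. The function name `posterior` and all probabilities below are illustrative assumptions, not estimates from the cited sources; the aim is only to show how the same prior moves under informative, uninformative, and overinterpreted evidence.

```python
def posterior(prior, p_evidence_if_true, p_evidence_if_false):
    """Bayes' rule for a binary hypothesis (e.g. 'a conspiracy exists').

    prior:                belief in the hypothesis before seeing the evidence
    p_evidence_if_true:   probability of the evidence if the hypothesis holds
    p_evidence_if_false:  probability of the same evidence if it does not
    """
    numerator = prior * p_evidence_if_true
    denominator = numerator + (1.0 - prior) * p_evidence_if_false
    return numerator / denominator

if __name__ == "__main__":
    prior = 0.01  # illustrative prior, not an empirical estimate

    # A coincidence that is equally likely either way carries no information.
    print(posterior(prior, 0.5, 0.5))

    # Evidence four times more likely under the hypothesis shifts belief modestly.
    print(posterior(prior, 0.8, 0.2))

    # Treating an observation as near-certain proof produces a large jump.
    print(posterior(prior, 0.99, 0.01))
```

With these made-up numbers the belief stays at 1% in the first case, rises to roughly 3.9% in the second, and reaches 50% in the third. The first case also shows why an immunized hypothesis cannot be calibrated: if every observation is treated as equally expected whether or not the hypothesis holds, no evidence can move the estimate in either direction.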

🧩 Fourth Argument: Conspiratorial Thinking as a Defensive Mechanism

In an era of industrial consciousness manipulation (PR, propaganda, targeted advertising, information operations), conspiratorial thinking functions as cognitive immunity—an excessive but protective response to a real threat.

Just as the physical immune system sometimes produces false positives (allergies, autoimmune reactions), cognitive defense can generate false positives while still protecting against real manipulation (S002). A person who "sees conspiracies everywhere" may be more resistant to actual manipulation attempts than someone with a "normal" level of trust.

This is not an optimal strategy, but in a toxic information environment it may be less harmful than naivety.

🧩 Fifth Argument: Epistemological Crisis Makes Conspiratorial Thinking Inevitable

Contemporary society is experiencing a crisis of epistemological institutions—the breakdown of shared mechanisms for establishing truth. When scientific journals publish irreproducible research (S003), when media systematically distort information to serve owners' interests, when expert communities are politicized—a rational agent cannot rely on traditional truth verification mechanisms.

Under conditions of epistemological crisis, conspiratorial thinking is not a deviation but a rational response to the collapse of trust in knowledge institutions. If you cannot trust experts, media, and scientific publications, you must construct your own models of reality based on available information fragments.

- Replication Crisis: irreproducibility of results in scientific research undermines the authority of the scientific method as a truth verification mechanism.

- Politicization of Expertise: when expert communities become instruments of political or corporate interests, their recommendations lose neutrality.

- Media Information Asymmetry: concentration of media in the hands of a small number of owners creates systematic narrative distortion.

These models will inevitably contain conspiratorial elements because you lack tools for reliable verification. Conspiratorial thinking becomes not a choice, but a consequence of the breakdown of epistemological institutions.

Evidence Base: What Neurobiology and Cognitive Psychology Tell Us About Conspiracy Thinking Mechanisms

Moving from philosophical arguments to empirical data requires analyzing research in cognitive psychology, neurobiology, and educational sciences. More details in the section Statistics and Probability Theory.

🧪 Critical Thinking as a Protective Factor: Evidence from Educational Research

Research demonstrates a consistent correlation between the level of critical analysis skills and resistance to conspiracy beliefs (S006). Critical thinking is the capacity for reflective and independent analysis: evaluating evidence, identifying logical fallacies, and testing arguments.

Students with high critical thinking scores demonstrate significantly lower tendency to accept conspiracy narratives without verification (S006). The protective mechanism: critical thinking forms a metacognitive habit — automatic activation of questions like "How do I know this?", "What alternative explanations exist?", "What evidence could disprove this?"

Critical thinking is not an innate ability but a skill developed through systematic practice. Educational interventions show measurable reduction in susceptibility to conspiracy beliefs.

This habit creates a cognitive barrier between information perception and belief formation, reducing the likelihood of impulsively accepting conspiracy hypotheses. The fact that educational interventions reduce susceptibility suggests that the link between critical thinking and resistance to conspiracy thinking is causal rather than merely correlational, although much of the underlying evidence is still correlational.

🧠 Sanogenic Thinking as an Alternative to Pathogenic Cognitive Patterns

The concept of sanogenic thinking offers a model of health-preserving cognitive strategies contrasted with pathogenic patterns (S002). Sanogenic thinking is characterized by the ability to reflect on emotional states, recognize cognitive distortions, and constructively process negative experiences without forming dysfunctional beliefs.

Applied to conspiracy thinking, the sanogenic approach involves replacing anxious conspiracy narratives with constructive strategies for coping with uncertainty (S002). Instead of constructing all-encompassing conspiracy theories to explain threatening events, sanogenic thinking focuses on distinguishing controllable from uncontrollable factors, forming realistic risk assessments, and developing adaptive coping strategies.

| Conspiracy Pattern | Sanogenic Approach |
|---|---|
| Seeking a single all-encompassing explanation | Distinguishing controllable from uncontrollable factors |
| Anxiety as the driving force of belief | Adaptive coping strategies and realistic risk assessment |
| Fixation on threat | Constructive processing of negative experience |

Sanogenic thinking training leads to reduced anxiety and increased psychological resilience (S002). While direct research on sanogenic thinking's impact on conspiracy beliefs is absent, the theoretical model suggests that developing sanogenic patterns should reduce the psychological need for conspiracy explanations as an anxiety-coping mechanism.

📊 Project-Based and Associative-Imagery Thinking: Alternative Cognitive Strategies

Project-based thinking, oriented toward creating concrete solutions and achieving measurable results, forms a habit of verifying hypotheses through practical action (S003). Students developing project-based thinking demonstrate higher tolerance for uncertainty and lower tendency to seek all-encompassing explanatory schemes.

Associative-imagery thinking, used in natural science teaching, develops the ability to construct multiple mental models of a single phenomenon (S004). This cognitive flexibility — the ability to hold several alternative interpretations simultaneously — is the antithesis of conspiracy thinking, which seeks a single all-encompassing interpretation.

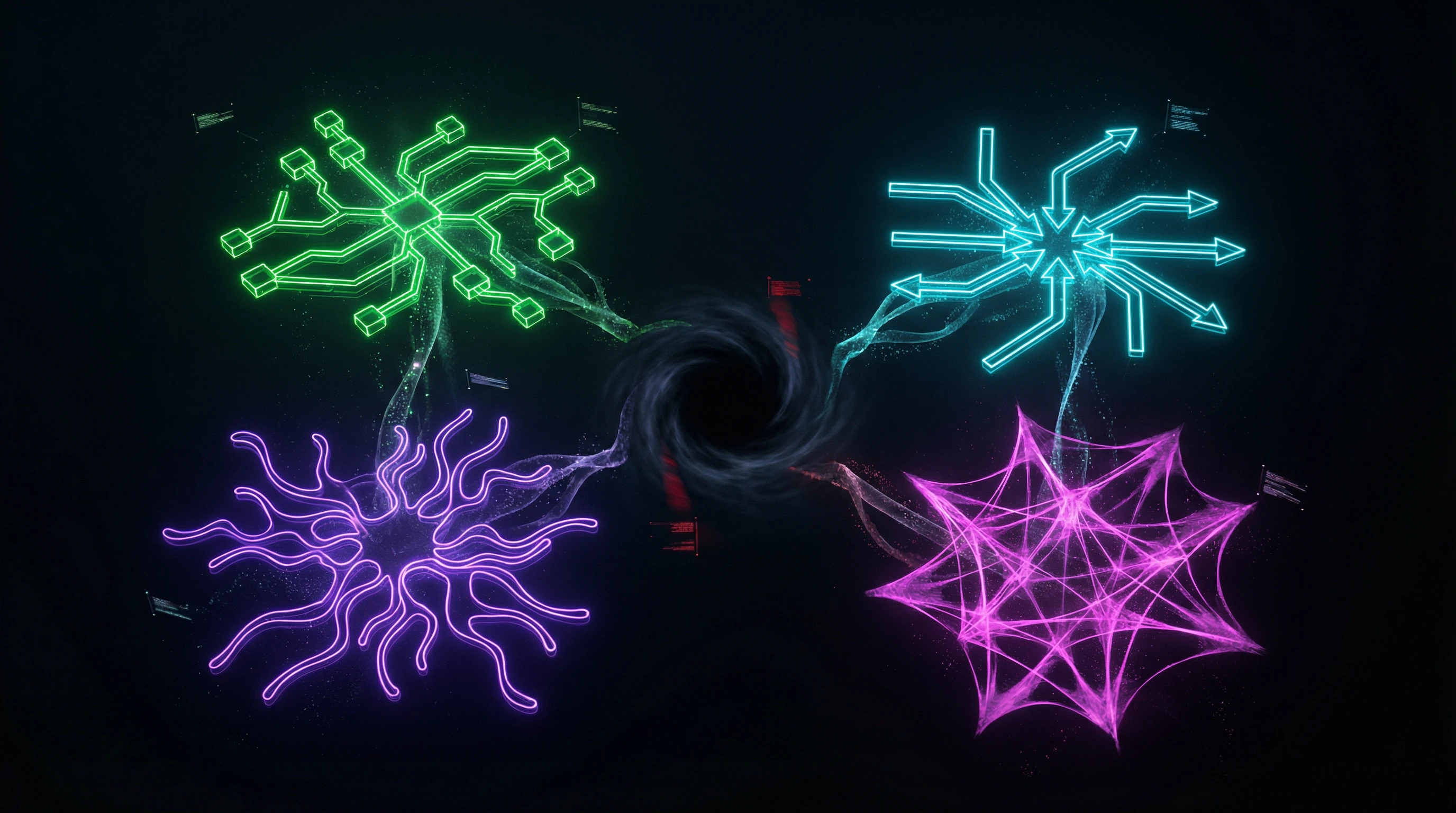

- Cognitive Flexibility: the ability to hold multiple alternative interpretations simultaneously. Opposite to conspiracy thinking's search for a single explanation. Developed through associative-imagery modeling and practice working with ambiguous data.

- Tolerance for Uncertainty: the ability to act and make decisions with insufficient information, without filling gaps with speculative theories. Formed through project-based thinking and hypothesis verification practice.

- Metacognitive Habit: automatic activation of questions about knowledge sources, alternative explanations, and disconfirming evidence. A key protective mechanism against conspiracy thinking.

The diversity of cognitive strategies available to an individual correlates with resistance to conspiracy thinking. The more tools a person has for interpreting reality (critical analysis, project-based thinking, associative-imagery modeling, sanogenic reflection), the lower the probability of fixating on a single conspiracy narrative.

🧾 Systematic Reviews as Verification Methodology: Lessons from Other Fields

The systematic review methodology applied in requirements engineering (S003) and medical research offers important lessons for evaluating the evidence base of any claims. Systematic review demonstrates the importance of mapping the entire research landscape, identifying knowledge gaps, and assessing evidence quality.

Applied to conspiracy thinking, this means distinguishing: (1) well-studied mechanisms (e.g., the role of confirmation bias), (2) areas with contradictory data (e.g., the link between intelligence and conspiracy beliefs), (3) complete research gaps (e.g., long-term intervention effectiveness).

When evaluating claims about conspiracy thinking, it's necessary to distinguish established facts, preliminary hypotheses, and speculation.

The methodology includes strict study inclusion criteria, assessment of the risk of systematic error (bias), and honest acknowledgment of the limits of the evidence base. These principles matter most when working with rare and complex phenomena where data are fragmentary and contradictory.

- Map the entire research landscape on conspiracy thinking

- Identify well-studied mechanisms and established correlates

- Designate areas with contradictory or preliminary data

- Honestly acknowledge complete research gaps

- Assess evidence quality and systematic error risk

- Distinguish facts, hypotheses, and speculation in public discourse

Mechanisms of Conspiracy Belief Formation: Causality, Correlation, and Hidden Variables

The distinction between correlation and causality is the foundation of honest analysis. Most research on conspiratorial thinking relies on correlational data, which creates a risk of erroneous conclusions about causes. More details in the Sources and Evidence section.

🔁 Feedback Loops: How Conspiratorial Thinking Reinforces Itself

Conspiratorial thinking creates self-reinforcing cognitive loops through three mechanisms.

Selective attention and memory. An adopted hypothesis redirects attention toward confirming information, while contradictory evidence is ignored or reinterpreted (S006). This creates a subjective sense of accumulating evidence while the objective base remains unchanged.

Social reinforcement. Conspiracy communities reward the expression of beliefs with social approval, "insider" status, and a sense of belonging. This reinforcement strengthens motivation regardless of the truth of the beliefs.

Cognitive dissonance and escalation. Public expression of beliefs, time investment, or decisions based on them create a psychological barrier to abandonment. It becomes easier to continue believing and seeking new "confirmations" than to admit error.

| Mechanism | Process | Result |
|---|---|---|
| Selective attention | Information filtering to match hypothesis | Illusion of growing evidence |
| Social reinforcement | Reward for expressing beliefs | Strengthened commitment independent of facts |
| Dissonance | Psychological pain from contradiction | Defending beliefs instead of revising them |
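The selective attention mechanism can be sketched as a small simulation. This is a toy model under stated assumptions, not a result from the cited research: the function name `perceived_support`, the 50/50 evidence stream, and the parameter `p_notice_contrary` (the chance that a contradicting item is registered at all) are all hypothetical.

```python
import random

def perceived_support(n_items, p_notice_contrary, seed=0):
    """Fraction of *remembered* evidence that appears to support a belief.

    Assumption: the world is objectively balanced, so each item supports or
    contradicts the belief with probability 0.5. Supporting items are always
    remembered; contradicting items only with probability p_notice_contrary.
    """
    rng = random.Random(seed)
    remembered_for = 0
    remembered_against = 0
    for _ in range(n_items):
        supports = rng.random() < 0.5
        if supports:
            remembered_for += 1
        elif rng.random() < p_notice_contrary:
            remembered_against += 1
    total = remembered_for + remembered_against
    return remembered_for / total if total else 0.0

if __name__ == "__main__":
    for p in (1.0, 0.5, 0.2):
        share = perceived_support(20_000, p)
        print(f"notices {p:.0%} of contrary items -> perceived support {share:.2f}")
```

The underlying evidence is identical in every run; only the filter changes. That is the sense in which the subjective pile of "confirmations" can grow while the objective base stays the same.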

🧷 Confounders: Hidden Variables Creating False Correlations

Analysis of the relationship between cognitive characteristics and conspiratorial thinking requires accounting for confounders—hidden variables that influence both measured quantities and create a false appearance of direct connection.

Traumatic experience as a confounder. The correlation between distrust of institutions and conspiratorial thinking may be explained by a shared cause: personal experience of deception or betrayal. A person who has been deceived by authority figures may develop both distrust and conspiratorial interpretations as parallel results of that experience, not as cause and effect.

Conspiratorial thinking is often not the cause of distrust, but a symptom of the same source: real experience of systemic deception or betrayal.

Cognitive load and stress. People under high cognitive load or in states of stress more frequently use simplified heuristics and are more prone to conspiratorial thinking (S006). The connection between low socioeconomic status and conspiracy beliefs may therefore be explained by chronic stress rather than by a direct causal link.

- Identify the proposed correlation (e.g., "low status → conspiracy beliefs")

- List possible confounders (stress, trauma, information environment)

- Check whether the confounder influences both variables independently

- Control for the confounder statistically or logically

- Reassess the strength of the original relationship
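The checklist above can be walked through on synthetic data. The sketch below is a hedged illustration, assuming that stress acts as a common cause of both measured variables. The variable names (`hardship`, `belief`, `stress`), the linear Gaussian data model, and the helper functions are hypothetical and carry no empirical weight. Controlling for the confounder is done with the standard first-order partial correlation formula.

```python
import math
import random

def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def partial_corr(x, y, z):
    """Correlation of x and y after statistically controlling for z."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz**2) * (1 - r_yz**2))

def simulate(n=5000, seed=0):
    """Synthetic data in which stress drives both variables independently.

    Assumption: there is NO direct link between hardship and belief.
    """
    rng = random.Random(seed)
    stress = [rng.gauss(0, 1) for _ in range(n)]
    hardship = [s + rng.gauss(0, 1) for s in stress]
    belief = [s + rng.gauss(0, 1) for s in stress]
    return hardship, belief, stress

if __name__ == "__main__":
    hardship, belief, stress = simulate()
    print(f"raw correlation:          {pearson(hardship, belief):.2f}")
    print(f"controlling for stress:   {partial_corr(hardship, belief, stress):.2f}")
```

In this constructed example the raw correlation is substantial while the partial correlation is close to zero, which is what the last two checklist steps look for. Real data rarely separate this cleanly, and a partial correlation only controls for confounders that were actually measured.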

🧠 Neurobiological Correlates: What We Know About Brain Mechanisms

Direct neurobiological research on conspiratorial thinking is limited, but studies of related phenomena (paranoia, hyperactive pattern detection, predictive processing) provide mechanistic clues.

The predictive brain (S001) constantly generates hypotheses about the causes of observed events. Under high uncertainty or threat, this system can shift into a mode of hypersensitivity to patterns, generating false causal connections. This is not a brain error—it's an adaptive mechanism under conditions of real threat, but it can also be activated under conditions of informational uncertainty.

Research on paranoia (S005) shows that people with paranoid beliefs demonstrate hyperactivity in systems related to threat detection and social evaluation. This suggests that conspiratorial thinking may be linked to the calibration of threat systems—not a defect, but a shift in sensitivity threshold.

- Predictive processing: the brain generates hypotheses about causes of events; under uncertainty may create false patterns.

- Threat detection system: hyperactive in paranoia; a shift in sensitivity threshold, not a defect.

- Social evaluation: integration of information about others' intentions; when data is lacking, filled with conspiratorial hypotheses.

Key conclusion: conspiratorial thinking is not a sign of cognitive inadequacy, but the result of normal brain mechanisms operating under conditions of uncertainty, stress, or information vacuum. This makes it widespread and persistent, but also amenable to correction through changing conditions and retraining predictive models.