What exactly the Dunning-Kruger effect claims — and where the boundary lies between science and mythology

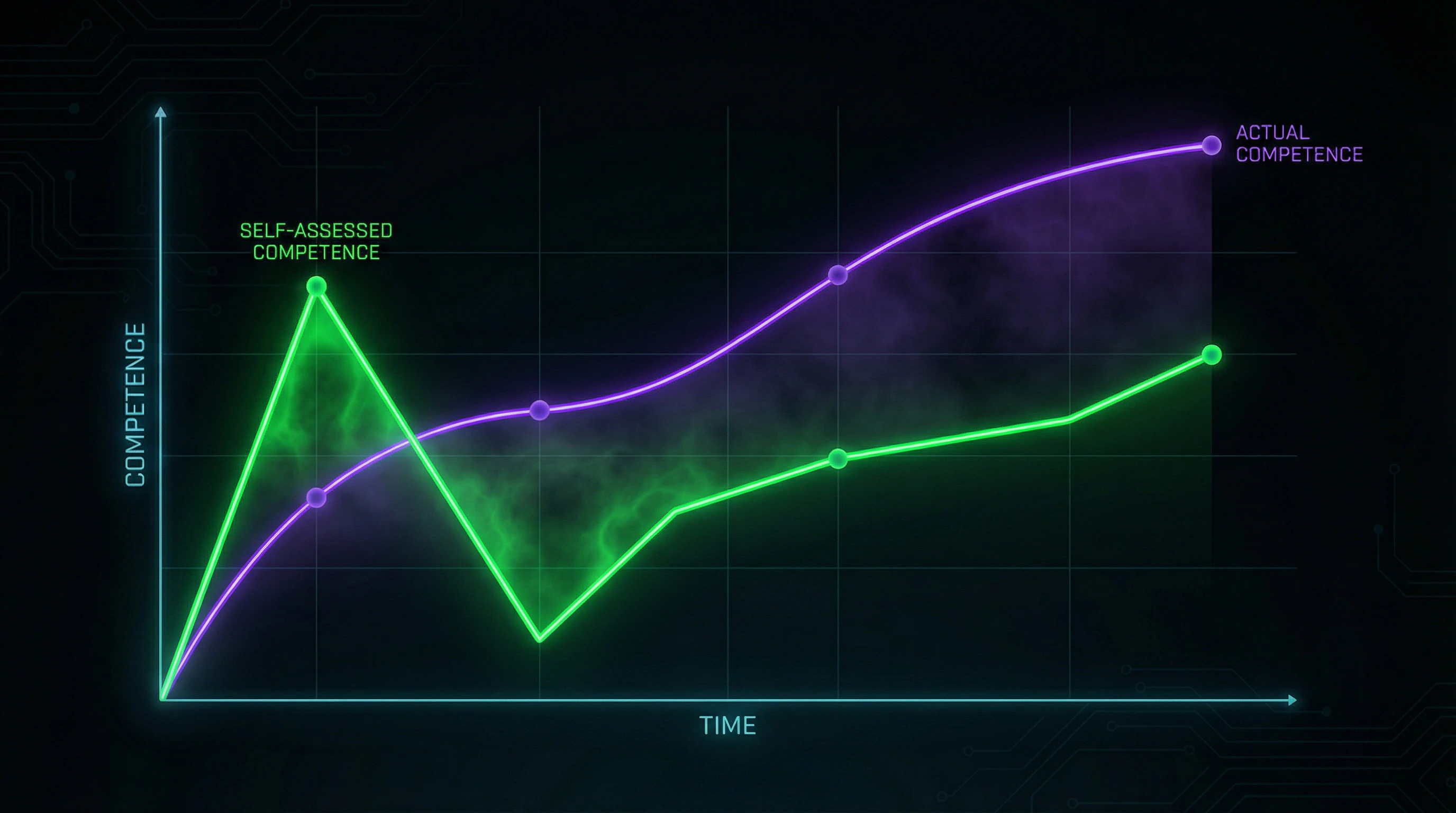

The Dunning-Kruger effect is a cognitive bias in which people with low competence systematically overestimate their abilities, while experts tend toward moderate underestimation (S009). The mechanism is dual: the incompetent don't recognize their incompetence (metacognitive deficit), and the competent underestimate the uniqueness of their skills (false consensus effect) (S011).

🔎 The original 1999 study

Kruger and Dunning conducted four experiments with Cornell University students (S009). Participants solved problems in logic, grammar, and humor, then rated their own results in percentiles.

| Group | Actual result | Self-assessment | Overestimation |
|---|---|---|---|
| Bottom quartile | 12th percentile | 62nd percentile | +50 points |
| Top quartile | 86th percentile | 68th percentile | −18 points |
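The quartile comparison in the table can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' analysis code: the function names `percentile_ranks` and `quartile_summary`, the midpoint convention for percentile ranks, and the eight-participant dataset are all hypothetical.

```python
from statistics import mean


def percentile_ranks(values):
    """Percentile rank (0-100) of each value within the sample.

    Midpoint convention (an assumption): share of the sample strictly
    below the value, plus half of the share tied with it.
    """
    n = len(values)
    return [
        100.0 * (sum(v < x for v in values) + 0.5 * sum(v == x for v in values)) / n
        for x in values
    ]


def quartile_summary(scores, self_estimates):
    """Compare mean actual percentile with mean self-estimated percentile
    in each quartile of actual performance."""
    actual = percentile_ranks(scores)
    rows = []
    for q in range(4):
        low, high = 25 * q, 25 * (q + 1)
        members = [i for i, p in enumerate(actual) if low <= p < high]
        if not members:  # heavy ties can leave a quartile empty
            continue
        mean_actual = mean(actual[i] for i in members)
        mean_self = mean(self_estimates[i] for i in members)
        rows.append({
            "quartile": q + 1,
            "actual": mean_actual,
            "self": mean_self,
            "gap": mean_self - mean_actual,  # positive = overestimation
        })
    return rows


# Hypothetical data for eight participants (not taken from the study).
scores = [3, 5, 8, 9, 12, 14, 17, 19]              # test scores
self_estimates = [60, 64, 62, 66, 68, 70, 72, 76]  # self-rated percentiles

rows = quartile_summary(scores, self_estimates)
for row in rows:
    print(row)
```

With these made-up numbers the gap is positive in the bottom quartile and negative in the top one, which is the shape of the pattern the table reports.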

⚙️ The metacognitive hypothesis

The authors explained the results through a metacognitive deficit: the skills needed to perform a task coincide with the skills needed to evaluate performance on it (S009). Someone who cannot recognize sound logic cannot assess their own reasoning or anyone else's.

Incompetence simultaneously reduces performance and blocks awareness of the problem — this is a "double burden" (S011).

Experts possess metacognitive tools but suffer from projection: they assume others have similar skills (S009).

🧱 Boundaries of applicability

- Sample and context
  - The original study is limited to U.S. students and abstract cognitive tasks (S009). It did not test practical skills (driving, surgery, programming) and did not account for motivational factors (S010).
- Cultural differences
  - In collectivist cultures (East Asia), the pattern may invert: the competent tend toward greater modesty, while the incompetent don't display inflated self-assessment (S010). This calls into question the universality of the effect as a psychological law.

The boundary between science and mythology runs here: the original research is valid in its context, but popularization has transformed a local pattern into a universal law about human nature.

Seven Arguments for the Reality of the Effect — A Steelman Version of the Dunning-Kruger Hypothesis

Before examining the criticism, we must present the strongest possible version of the argument in favor of the effect. The steelman approach requires strengthening the opponent's position to its most convincing form. Below are seven arguments that defenders of the effect can advance based on data and theory.

🔬 Argument 1: Replication in Independent Studies

The effect has been reproduced in dozens of studies beyond the original sample (S001). Research has covered medical diagnosis, driving skill assessment, financial literacy, and academic performance.

Meta-analyses confirm: the pattern of "low competence → inflated self-assessment" is robust in Western samples when using similar methodology (S005). This indicates reproducibility of the phenomenon, not a random artifact of a single experiment.

🧠 Argument 2: Neurocognitive Basis of Metacognitive Deficit

Metacognitive processes (monitoring and controlling one's own thinking) are associated in neuroscience with the prefrontal cortex and depend on developed executive functions. If a person has not mastered basic skills in a domain, their metacognitive system lacks reference points for calibrating self-assessment.

This explains why training improves not only performance but also accuracy of self-assessment: in the original study, after logic training, participants from the bottom quartile corrected their estimates downward (S002).

📊 Argument 3: Asymmetry of Self-Assessment Errors

If overestimation and underestimation were symmetrical random noise, the average error would approach zero. However, the data show a systematic asymmetry: the incompetent overestimate themselves more strongly than experts underestimate themselves (50 vs 18 percentile points in the original study).

This indicates a structural rather than random nature of the distortion — the error has direction and magnitude that cannot be explained by noise.
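As a rough check on the asymmetry claim, the signed errors of the two extreme quartiles can be averaged. The figures are the ones reported for the original study (self-assessment minus actual percentile); averaging only two of the four quartiles with equal weight is a simplifying assumption made here for illustration, not the authors' analysis.

$$
\bar{e} = \frac{(62 - 12) + (68 - 86)}{2} = \frac{50 - 18}{2} = +16
$$

Under purely symmetrical noise this average would be expected to sit near zero; a value of +16 percentile points is what "the error has direction" means in practice.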

🧬 Argument 4: Evolutionary Adaptiveness of the Illusion of Competence

From an evolutionary perspective, moderate overestimation of one's abilities could have been adaptive: it reduces anxiety, increases motivation to act, and improves social status through confident behavior. Natural selection may have favored not accurate self-assessment, but optimistic bias — as long as the cost of error did not exceed the benefits of confidence.

The Dunning-Kruger effect may be a byproduct of this mechanism, not an evolutionary error.

⚙️ Argument 5: Phenomenological Validity — The Effect Is "Recognizable" to Practitioners

Managers, educators, and experts in various fields intuitively recognize the pattern: novices often display excessive confidence, while experienced professionals show caution and self-criticism (S004). This phenomenological validity (correspondence with subjective experience) does not prove the effect, but indicates its ecological relevance: the description resonates with real observations.

- A novice programmer overestimates the speed at which they will write an application

- An experienced developer is cautious in estimates and accounts for unknown unknowns

- An instructor sees this asymmetry in every cohort of students

- A medical intern is confident in a diagnosis, while an experienced physician asks a colleague for a second opinion

🧪 Argument 6: The Effect Persists When Controlling for Regression to the Mean

Critics claim the effect is an artifact of regression to the mean: if self-assessment and performance correlate imperfectly, extreme performance values will be accompanied by less extreme self-assessment values. However, defenders point out: even with statistical control for regression, residual overestimation in the bottom quartile significantly exceeds what would be expected from a random noise model.

This means regression explains part of the effect, but not the entire phenomenon (S002).
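The logic of "controlling for regression" can be written out as a short worked equation. This is a sketch under assumptions that are not in the source: performance and self-assessment are treated as standardized scores $z_P$ and $z_S$ that are jointly normal with correlation $\rho$.

$$
\mathbb{E}[z_S \mid z_P] = \rho \, z_P, \qquad |\rho| < 1 \;\Rightarrow\; \bigl|\mathbb{E}[z_S \mid z_P]\bigr| < |z_P| \quad (z_P \neq 0)
$$

For an illustrative $\rho = 0.5$, a participant one standard deviation below the mean in performance would be expected to rate themselves only half a standard deviation below the mean, with no bias involved. The residual

$$
e = z_S - \rho \, z_P
$$

is what remains after that expected shrinkage is removed. The defenders' claim amounts to saying that the mean of $e$ in the bottom quartile is reliably above zero.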

🔁 Argument 7: The Reverse Effect in Experts Confirms the Mechanism

Defenders argue that a statistical artifact alone gives no reason for systematic underestimation in the top quartile. Experts do underestimate the uniqueness of their skills, which is consistent with the false consensus hypothesis: they project their competence onto others.

| Competence Level | Self-Assessment Pattern | Mechanism |
|---|---|---|
| Low (bottom quartile) | Overestimation | Lack of reference points for calibration |
| Average | Relatively accurate assessment | Development of metacognitive skills |
| High (top quartile) | Underestimation (impostor syndrome) | Projection of competence onto others, awareness of complexity |

The presence of a reverse effect (impostor syndrome) in highly competent individuals strengthens the theoretical coherence of the model (S006). Defenders hold that a purely statistical mechanism would not produce this reverse effect, although the regression-to-the-mean critique predicts top-quartile underestimation as well.

Evidence Base Under the Microscope: What 25 Years of Research Shows

Moving from arguments to facts. Below is a detailed breakdown of the empirical base with sources, methodological limitations, and contradictions between studies.

📊 Original 1999 Data: Four Experiments by Kruger and Dunning

The study comprised four experiments with a total sample of approximately 300 students (S009). Experiment 1 covered humor assessment, a subjective domain, and the effect appeared there too. Experiment 2 used a 20-item logical reasoning test, after which participants estimated their results in percentiles relative to other students: the bottom quartile (actual percentile 12) rated itself at 62, and the top quartile (actual 86) rated itself at 68 (S009).

Experiment 3 used a grammar test and produced similar results. In Experiment 4, after logic training, bottom-quartile participants corrected their self-assessment downward, which the authors read as support for the metacognitive hypothesis (S009).

| Experiment | Domain | Bottom Quartile (actual vs. self-assessment) | Top Quartile (actual vs. self-assessment) |
|---|---|---|---|
| 1 | Humor | Overestimation | Overestimation (weaker) |
| 2 | Logic | 12 vs. 62 | 86 vs. 68 |
| 3 | Grammar | Overestimation | Underestimation |
| 4 | Logic + training | Downward correction | Stable |

🧾 Replications and Extensions: Where the Effect is Confirmed

The effect has been reproduced in medical diagnosis studies: medical students with low exam scores overestimated their readiness for clinical practice (S011). In driving skills research, drivers with high violation rates rated themselves as "above average" (S011).

In financial literacy studies, participants with low test scores overestimated their understanding of investments and credit (S011). The general pattern is that in Western samples (USA, Western Europe) using objective tests and percentile self-assessment, the effect is robust (S009).

The Dunning-Kruger effect replicates reliably only under specific conditions: an objective test, percentile self-assessment, and a Western sample. Outside these parameters, it may be an artifact or a cultural pattern.

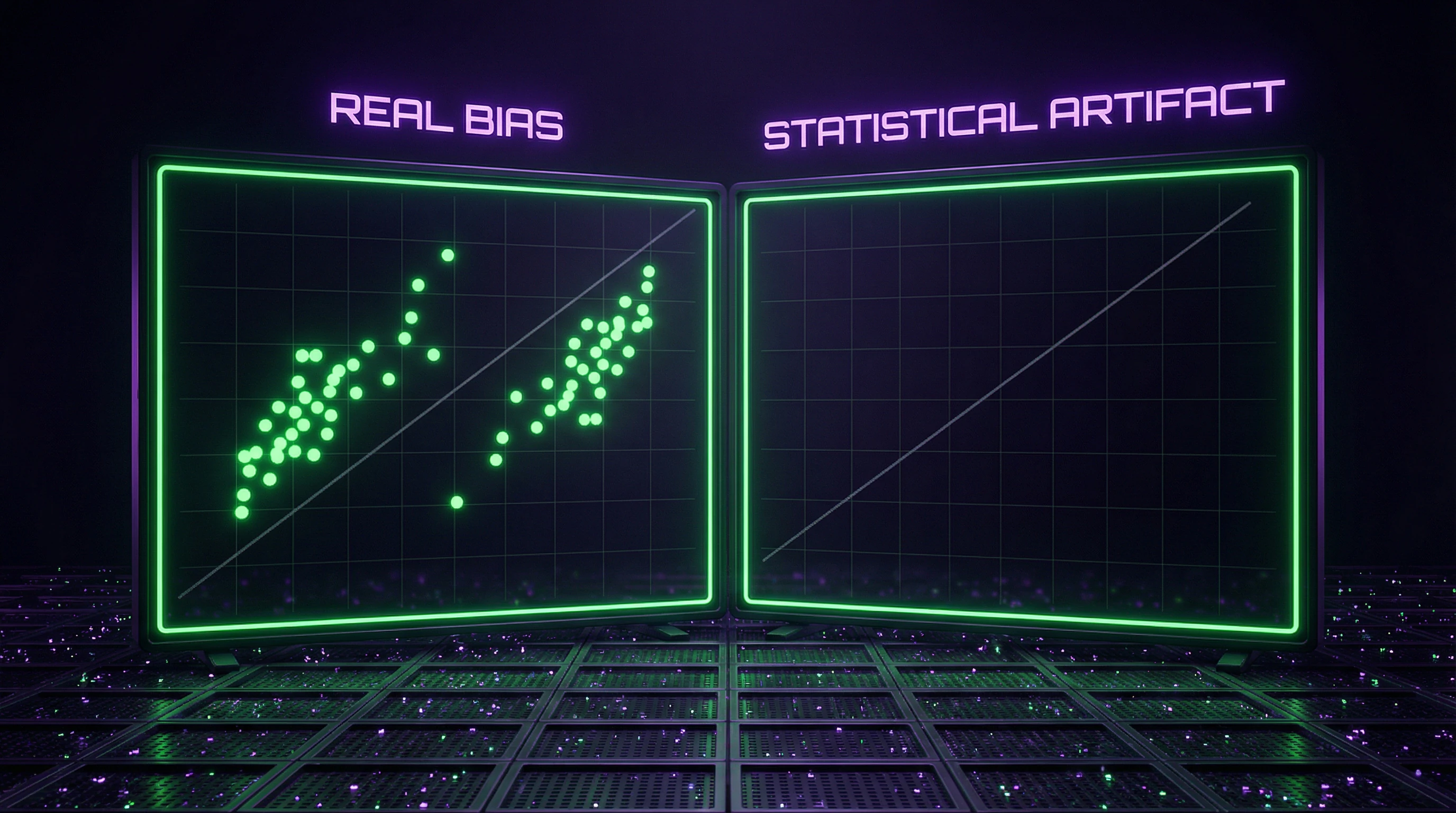

⚠️ Methodological Critique: Regression to the Mean and Correlation Artifacts

Primary critique: the effect may be a statistical artifact (S010). If self-assessment and results correlate imperfectly (inevitable due to measurement noise), regression to the mean creates the illusion of the Dunning-Kruger effect (S010).

Mathematical model: if the true correlation between competence and self-assessment equals 0.5, then dividing the sample into quartiles by results automatically shows the bottom quartile with inflated self-assessment and the top quartile with deflated assessment, even if no real cognitive bias exists (S010). Critics demand alternative analytical methods (e.g., latent variable models) that control for regression (S010).

- Divide sample by test results into quartiles

- Calculate average self-assessment in each quartile

- Check whether the pattern is a regression artifact (mathematical consequence of imperfect correlation)

- Apply latent variable models to control for measurement noise

- Compare results: if the effect disappears, it is an artifact; if it remains, it reflects a real bias
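The first three steps of this check can be tried on simulated data. The sketch below is an illustration under explicit assumptions, not a method taken from the cited sources: skill and self-view are drawn as standard normal variables that correlate at 0.5, no bias of any kind is built in, and the names `to_percentiles` and `simulate_quartiles`, the sample size, and the seed are hypothetical choices. It does not cover the latent variable step.

```python
import random
from statistics import mean


def to_percentiles(values):
    """Convert raw values to percentile ranks (0-100) within the sample."""
    n = len(values)
    order = sorted(range(n), key=values.__getitem__)
    ranks = [0.0] * n
    for position, index in enumerate(order):
        ranks[index] = 100.0 * (position + 0.5) / n
    return ranks


def simulate_quartiles(n=20000, rho=0.5, seed=0):
    """Simulate skill and self-view that correlate at rho with NO built-in
    bias, then return (mean actual percentile, mean self-rated percentile)
    for each quartile of actual skill."""
    rng = random.Random(seed)
    skill = [rng.gauss(0, 1) for _ in range(n)]
    # Self-view = rho * skill + independent noise, scaled to unit variance.
    noise_scale = (1 - rho ** 2) ** 0.5
    self_view = [rho * s + noise_scale * rng.gauss(0, 1) for s in skill]

    actual = to_percentiles(skill)
    perceived = to_percentiles(self_view)

    summary = []
    for q in range(4):
        members = [i for i in range(n) if 25 * q <= actual[i] < 25 * (q + 1)]
        summary.append((mean(actual[i] for i in members),
                        mean(perceived[i] for i in members)))
    return summary


summary = simulate_quartiles()
for quartile, (actual_pct, perceived_pct) in enumerate(summary, start=1):
    print(f"Q{quartile}: actual {actual_pct:.1f}, self-rated {perceived_pct:.1f}")
```

In this setup the bottom quartile's mean self-rating lands well above its actual percentile and the top quartile's lands well below, purely from the imperfect correlation. The simulated pattern is roughly symmetrical around the 50th percentile, so it does not by itself reproduce an overall upward shift in self-ratings.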

🌍 Cross-Cultural Research: Effect is Not Universal

Studies in East Asia (Japan, China, South Korea) show weakened or inverted effects (S010). In collectivist cultures, highly competent participants demonstrate greater modesty (cultural norm), while low-competent participants show no systematic overestimation (S010).

This indicates cultural moderation of the effect: metacognitive deficit may be universal, but its manifestation in self-assessment depends on cultural norms of self-presentation (S010). The Dunning-Kruger effect may be less a cognitive law than a culturally-specific pattern characteristic of individualistic societies (S010).

- Individualistic Cultures (USA, Western Europe)
  - Norm: self-presentation of competence, minimizing weaknesses. Result: low-competent overestimate, high-competent underestimate (contrast maximized).
- Collectivist Cultures (East Asia)
  - Norm: modesty, avoiding standing out. Result: high-competent underestimate, low-competent don't overestimate (contrast minimized or inverted).
- Conclusion
  - Effect is not a universal cognitive law, but a culturally-moderated pattern of self-presentation.

🧪 Alternative Explanations: Motivation, Social Desirability, Measurement Noise

Beyond regression to the mean, critics propose alternative explanations (S010). Motivational bias: participants may inflate self-assessment due to social desirability (desire to appear competent), not metacognitive deficit (S010).

Measurement noise: if tests lack sufficient reliability, random errors create the appearance of systematic bias (S010). Reality check requires separating three sources: (1) real metacognitive deficit, (2) social desirability, (3) statistical artifact.
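How test unreliability feeds the artifact can be made concrete with the attenuation relation from classical test theory (Spearman's formula). It is brought in here as an illustration and is not taken from the cited sources; $r_{xx}$ and $r_{yy}$ denote the reliabilities of the performance test and the self-assessment measure, and the numbers are hypothetical.

$$
r_{\text{observed}} = r_{\text{true}} \sqrt{r_{xx} \, r_{yy}}
$$

For example, with a true correlation of 0.8 and reliabilities of 0.7 for both measures:

$$
r_{\text{observed}} = 0.8 \times \sqrt{0.7 \times 0.7} = 0.8 \times 0.7 = 0.56
$$

The lower the observed correlation, the more strongly quartile means of self-assessment are pulled toward the overall mean, so noisier tests would be expected to produce a larger apparent gap in the extreme quartiles.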

If the effect disappears when controlling for regression and social desirability, it is not a law of psychology but a methodological artifact. If it remains, an explanation is needed for why it is culturally specific.

The Distortion Mechanism: How Metacognitive Deficits Create the Illusion of Competence

If we accept that the effect is real (at least partially), we need to understand its mechanism.

🧬 Metacognitive Monitoring: How the Brain Evaluates Its Own Performance

Metacognitive monitoring is the ability to track and evaluate one's own cognitive processes (S011). It operates on two levels: the object level (task execution) and the meta-level (quality assessment) (S011).

For accurate self-assessment, the meta-level must have access to quality criteria. If someone doesn't know what good logic looks like, their meta-level cannot detect errors (S009). This creates a blind spot: incompetence simultaneously reduces performance and blocks awareness of the problem.

Incompetence hides itself not through active denial, but through the absence of tools to detect it.

🔁 The Double Burden of Incompetence: Why Ignorance Conceals Ignorance

Kruger and Dunning formulated a key principle: the skills required to perform a task overlap with the skills required to evaluate it (S009). If someone can't code, they cannot assess code quality—neither others' nor their own.

If someone doesn't understand statistics, they won't detect errors in their reasoning about data (S009). Training improves self-assessment precisely because it provides metacognitive tools for calibration.

| Competence Level | Ability to Perform | Ability to Evaluate | Result |
|---|---|---|---|
| Incompetent | Low | Low | Overestimation (doesn't see errors) |
| Beginner | Growing | Growing faster | Underestimation (sees more errors) |
| Competent | High | High | Accurate assessment |
| Expert | Very high | May be lower | Underestimation (task seems easy) |

🧷 False Consensus Effect in Experts: Projecting Competence onto Others

The reverse effect in experts (underestimation) is explained through the false consensus effect: people overestimate how widespread their knowledge is in the population (S009). An expert for whom a complex task has become routine assumes others will handle it just as easily.

This leads to underestimating the uniqueness of their skills (S011). The effect intensifies in domains where competence makes the task "transparent": the expert forgets how difficult it was during the learning phase.

- False Consensus
  - A cognitive bias where someone projects their experience and knowledge onto others, assuming it's universal. In experts, this leads to underestimating their own competence.
- Why This Matters
  - Explains why experienced people are often poor teachers: they don't see where students get stuck because those steps are obvious to them.

⚙️ Calibrating Self-Assessment: Why Feedback Doesn't Always Help

The original study showed that training improves self-assessment calibration in incompetent participants (S009). However, in real life, feedback is often ineffective (S011).

- Feedback may be nonspecific ("bad," without pointing to concrete errors)

- People interpret it through defensive mechanisms (external attribution: "the task was unfair")

- Without metacognitive tools, feedback doesn't convert into improved self-assessment (S011)

This explains the effect's persistence in real-world conditions where formal training is absent (S004, S005). Calibration requires not just information about an error, but understanding its cause and how to fix it.

The connection to reality testing is direct: metacognitive monitoring is an internal validation mechanism, and its deficit means the absence of a built-in quality control system.

Data Conflicts and Uncertainty Zones: Where Sources Diverge

Scientific integrity requires explicitly indicating where data contradict each other and where they are simply absent.

🕳️ Contradiction 1: Universality of the Effect — Western Artifact or Global Pattern?

The original study and most replications were conducted on Western samples (S009), (S011). Cross-cultural studies show weakening or inversion of the effect in East Asia (S010).

But the methodology of cross-cultural studies varies: different tests, different self-assessment scales, different cultural contexts (S010). It's unclear whether the difference results from actual cultural moderation or methodological artifacts.

Standardized cross-cultural studies with identical methodology are needed. Without this, the conclusion about the effect's universality remains speculation.

🧩 Contradiction 2: Regression to the Mean — Complete Explanation or Partial Contribution?

Critics argue that regression to the mean fully explains the effect and that there is no real cognitive bias (S010). Defenders counter that even when controlling for regression, residual overestimation is significant (S011).

The problem is that different studies use different methods of controlling for regression: simple correlational models vs complex latent variable models (S010). This makes comparing results difficult.

| Position | Argument | Weakness |
|---|---|---|
| Regression explains everything | Statistical artifact, not psychology | Ignores residual effects in controlled studies |
| Regression is partial contributor | Effect persists after control | Control methodology varies, no consensus |

🔎 Contradiction 3: Practical Skills vs Abstract Tests

Most studies use abstract cognitive tests: logic, grammar (S009), (S011). It's unclear whether the effect persists in practical domains with immediate feedback: driving, surgery, programming (S010).

Some studies of driving skills confirm the effect (S011), but samples are small and methodology is disputed (S010). In high-stakes domains (medicine), institutional mechanisms — exams, certification — may suppress the effect's manifestation (S011).

- Uncertainty Zone
  - Insufficient data to generalize across all skill types. The effect may be specific to abstract cognitive tasks and weaken in contexts with rapid feedback.
- What's Needed
  - Direct comparisons of the same individuals on abstract tests and practical tasks with controlled feedback and motivation.

These three conflict zones demonstrate: the Dunning-Kruger effect is not a monolith, but a set of conditional phenomena. Reality testing requires distinguishing where the effect is proven, where it's disputed, and where it's simply unstudied.

Cognitive Anatomy of the Myth: Which Biases Does the Effect's Popularization Exploit

The Dunning-Kruger effect has become a viral meme, often used to discredit opponents ("you just don't understand that you're incompetent"). We examine which cognitive biases are exploited by the effect's popularization itself.

⚠️ Bias 1: Fundamental Attribution Error — "they're foolish, I'm right"

The fundamental attribution error is the tendency to explain others' behavior through internal factors (personality, abilities) while explaining our own through external factors (circumstances) (S001). The Dunning-Kruger effect is often used for internal attribution of others' mistakes: "he disagrees with me because he's incompetent and doesn't realize it" (S010). This allows avoiding consideration of alternative explanations (different values, different data, different priorities) and preserving the illusion of one's own correctness (S010).

🧩 Bias 2: Barnum Effect — "this applies to everyone, therefore it's science"

The Barnum effect (Forer effect) is the tendency to consider vague general descriptions as accurate and specific (S010). The statement "incompetent people overestimate themselves" is general enough that everyone can recall examples from their own experience (S010). This creates an illusion of validity: "I've seen this with my own eyes, therefore the effect is real" (S010). However, anecdotal observations don't replace controlled studies: subjective convincingness doesn't equal scientific proof (S010).

🕳️ Bias 3: Confirmation Bias — ignoring counterexamples

Confirmation bias is the tendency to seek and interpret