What is the black box fallacy, and why it isn't simply ignorance of how something works

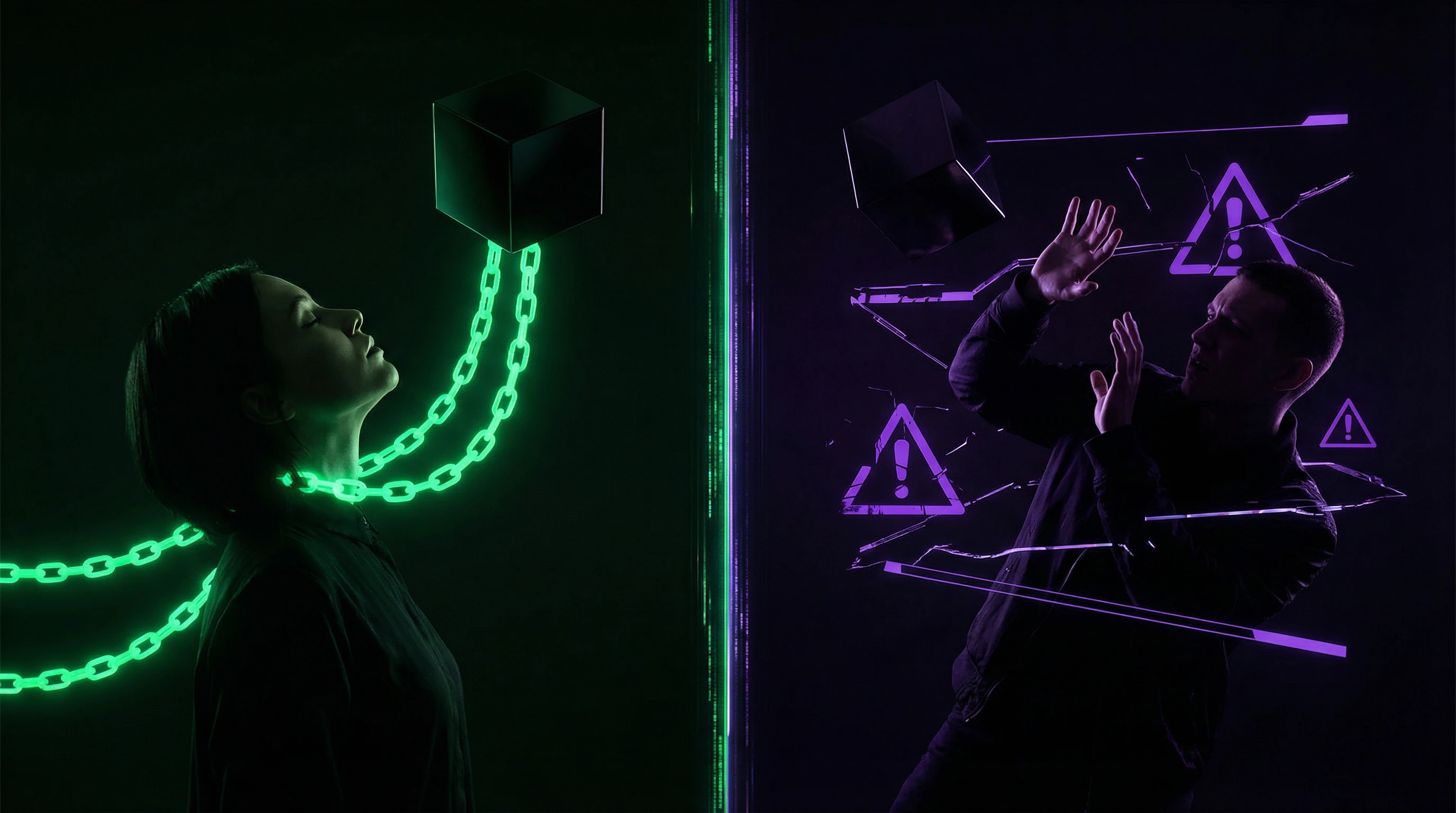

The black box fallacy is a cognitive bias in which a person forms an extreme evaluation of a system (complete trust or complete rejection) based solely on the opacity of its internal workings, ignoring empirical data about its effectiveness, reliability, and context of application. More details in the Critical Thinking section.

This isn't just ignorance: it replaces analysis of a system's results with an assessment of how accessible information about its internal process is.

🧩 Two poles of one fallacy: blind trust and paranoid rejection

The fallacy manifests in two opposite forms. The first is blind trust in the authority of opacity: "This is a complex system, I don't understand how it works, therefore it must be correct." The second is paranoid rejection: "I can't see what's inside, therefore it's deception/danger/manipulation."

Both forms share a substitution: instead of evaluating the validity of output data, reproducibility of results, and system-task fit, a person evaluates only their degree of understanding of the mechanism.

⚠️ Why this isn't the same as the availability heuristic or the halo effect

The black box fallacy differs from related cognitive biases. The availability heuristic works with ease of retrieving examples from memory. The halo effect transfers evaluation of one quality to others.

The black box fallacy, by contrast, is triggered specifically by process opacity and leads a person to ignore empirical data about results. A person may know that a system errs in 30% of cases but continue to trust it because "the algorithm is complex." Or conversely: a system demonstrates 95% accuracy but is rejected because "it's unclear how it does this."
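The contrast can be sketched as two toy evaluation rules. This is an illustration under stated assumptions, not an established procedure: the `SystemReport` fields, the function names, and the 0.9 accuracy threshold are all hypothetical. The fallacious rule never reads the measured accuracy; the evidence-based rule reads nothing else.

```python
from dataclasses import dataclass


@dataclass
class SystemReport:
    """What an evaluator knows about a system (hypothetical fields)."""
    name: str
    observed_accuracy: float    # share of correct outputs in independent checks
    mechanism_understood: bool  # can the evaluator explain how it works?


def fallacious_verdict(report: SystemReport, credulous: bool) -> str:
    """Black box fallacy: the verdict depends only on opacity.

    A credulous evaluator reads opacity as competence, a suspicious one as
    danger. report.observed_accuracy is never consulted.
    """
    if report.mechanism_understood:
        return "undecided"  # the fallacy has nothing to say about transparent systems
    return "trust" if credulous else "reject"


def evidence_based_verdict(report: SystemReport, required_accuracy: float = 0.9) -> str:
    """The verdict depends on measured performance, opaque or not.

    required_accuracy is an illustrative assumption; a real threshold
    depends on the task and on the cost of errors.
    """
    return "trust" if report.observed_accuracy >= required_accuracy else "reject"


erratic = SystemReport("errs in 30% of cases", 0.70, mechanism_understood=False)
accurate = SystemReport("95% accurate", 0.95, mechanism_understood=False)

print(fallacious_verdict(erratic, credulous=True))    # trust
print(fallacious_verdict(accurate, credulous=False))  # reject
print(evidence_based_verdict(erratic))                # reject
print(evidence_based_verdict(accurate))               # trust
```

Both systems are equally opaque, so only the second rule responds when the accuracy data change.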

🔎 Boundaries of term applicability: where the fallacy exists and where it doesn't

Not every use of an opaque system is a fallacy. If you take aspirin without knowing that it works by inhibiting cyclooxygenase, but rely on meta-analyses of its effectiveness and safety profile, that is rational delegation to expertise.

| Scenario | Decision basis | Is this a fallacy? |
|---|---|---|
| Doctor prescribes drug only because "it's a new development, mechanism is complex" | Process opacity | Yes: ignores absence of RCTs |
| Patient refuses vaccine with proven effectiveness | "Don't understand what's inside" | Yes: displaces evidence analysis |
| Patient takes drug based on meta-analyses without knowing mechanism | Empirical data about results | No: rational delegation |

The fallacy occurs when opacity becomes the only or primary evaluation criterion, displacing evidence analysis.

The Five Strongest Arguments That System Opacity Actually Creates a Trust Problem

Concerns about opaque systems are not unfounded. There are real risks associated with delegating decisions to systems whose internal workings are unavailable for inspection. More details in the Statistics and Probability Theory section.

🧪 Argument 1: Impossibility of Audit and Reproducibility in Critical Domains

When a system makes decisions affecting life and health (medical diagnosis, judicial risk assessment algorithms, critical infrastructure management systems), the inability to audit its logic creates a real accountability problem.

If an algorithm recommends denying parole or prescribing chemotherapy, but no one can explain what features informed the decision, it becomes impossible to identify systematic errors or bias before they cause harm.

🔬 Argument 2: Medical History Is Full of "Working" Methods That Proved Harmful

Bloodletting "worked" for centuries: patients sometimes recovered, and physicians observed a correlation. The inventor of lobotomy received a Nobel Prize. Thalidomide reached the market after the limited safety testing required in the 1950s.

Opacity of mechanism combined with observed effect does not guarantee safety. The requirement to understand mechanism is a lesson from history: correlation without understanding causality can mask harm that manifests later or under different conditions.

📊 Argument 3: Algorithmic Systems Can Reproduce and Amplify Hidden Biases

Opaque machine learning models trained on historical data can encode racial, gender, and socioeconomic biases without making them explicit.

- A credit risk assessment system may discriminate by zip code

- A recruitment system — by name

- A medical diagnostic system — by access to previous examinations

Without transparency, these biases cannot be detected and corrected before the system scales.
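Detecting a disparity like the zip-code case does not always require opening the model: an audit of its decisions broken down by group can reveal it, provided the auditor has access to the outputs and the group attribute (transparency about data and outputs rather than internals). The sketch below is hypothetical: the decision log is invented, and the 0.8 cut-off mirrors the "four-fifths" rule of thumb from US employment-selection guidelines, used here only as an example screening threshold.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def flag_disparities(rates, threshold=0.8):
    """Groups whose rate falls below `threshold` times the highest group's rate.

    threshold=0.8 mirrors the "four-fifths" rule of thumb; it is a screening
    heuristic, not proof of discrimination.
    """
    best = max(rates.values())
    return [group for group, rate in rates.items() if rate < threshold * best]


# Hypothetical decision log from an opaque credit model, keyed by zip-code area.
log = ([("area_A", True)] * 60 + [("area_A", False)] * 40
       + [("area_B", True)] * 30 + [("area_B", False)] * 70)

rates = selection_rates(log)
print(rates)                    # {'area_A': 0.6, 'area_B': 0.3}
print(flag_disparities(rates))  # ['area_B']
```

The check never inspects the model itself. Zip code serves as the grouping here because it can act as a proxy for protected attributes.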

⚠️ Argument 4: Information Asymmetry Creates Space for Manipulation

When a system developer knows its limitations but the user doesn't, a classic information asymmetry problem emerges. A diagnostic AI manufacturer may know the system only works on certain demographic groups or image types, but market it as a universal solution.

Without transparency in methodology, training data, and validation, users cannot assess the system's real applicability to their context. This is especially dangerous in techno-esotericism, where opacity often serves as a marker of supposed "advancement."
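One concrete form of this asymmetry is an aggregate accuracy figure that hides a failing subgroup. In the hypothetical validation set below (scanner labels and counts are invented for illustration), one scanner type dominates the data, so the overall number looks excellent while the minority subgroup performs far worse.

```python
from collections import defaultdict


def accuracy_by_subgroup(results):
    """Overall and per-subgroup accuracy from (subgroup, correct) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for subgroup, correct in results:
        totals[subgroup] += 1
        hits[subgroup] += int(correct)
    overall = sum(hits.values()) / sum(totals.values())
    per_subgroup = {s: hits[s] / totals[s] for s in totals}
    return overall, per_subgroup


# Hypothetical validation set in which one scanner type dominates.
results = ([("scanner_1", True)] * 880 + [("scanner_1", False)] * 20
           + [("scanner_2", True)] * 60 + [("scanner_2", False)] * 40)

overall, per_subgroup = accuracy_by_subgroup(results)
print(f"overall: {overall:.2f}")  # overall: 0.94
for subgroup, acc in per_subgroup.items():
    print(f"{subgroup}: {acc:.2f}")  # scanner_1: 0.98, then scanner_2: 0.60
```

A vendor reporting only the 0.94 figure would be telling the truth while concealing that the system is wrong on four in ten scanner_2 images.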

🧠 Argument 5: Delegating Decisions to Opaque Systems Atrophies Expertise

If physicians begin relying on decision support system recommendations without understanding their logic, they gradually lose the ability to make decisions independently. This creates fragility: when the system fails, becomes unavailable, or is applied in a non-standard situation, the expert is helpless.

- Opacity accelerates atrophy because it leaves no opportunity for learning through understanding the system's logic

- The expert loses the ability to critically evaluate recommendations

- The organization becomes dependent on a black box instead of developing competence

What Systematic Reviews and Meta-Analyses Say About the Real-World Effectiveness of Opaque Systems in Medicine and Education

Moving from arguments to evidence, we need to separate two questions: do opaque systems work (empirical effectiveness) and can they be made transparent without losing effectiveness (technical feasibility). Meta-analyses from recent years provide surprising answers to both.

📊 AI Chatbots Score Higher Than Physicians on Perceived Empathy in Controlled Studies

A systematic review comparing AI chatbots and medical professionals in the context of empathy in patient care revealed a paradox: in controlled settings, AI systems demonstrated higher perceived empathy scores than human physicians (S004). This doesn't mean AI "feels"—the algorithm's structured, consistent, unhurried responses were perceived by patients as more attentive than the rushed consultations of overworked specialists.

The opacity of the mechanism didn't prevent the system from surpassing humans on a measurable criterion of interaction quality.

🧪 Clinical Decision Support Systems (CDSS) Improve Diagnostic Accuracy When Properly Integrated

Analysis of CDSS effectiveness shows significant improvement in diagnostic accuracy and reduction in medical errors, but only when properly integrated into clinical workflow and with adequate staff training (S010). The key factor is not algorithm transparency, but process transparency: physicians must understand what data the system considers, what limitations it has, and how to integrate recommendations into clinical reasoning.

The opacity of the internal algorithm was not a critical factor in effectiveness.

🔎 AI Diagnostic Accuracy in Screening Matches or Surpasses Specialists in Highly Specialized Tasks

A meta-analysis of AI diagnostic accuracy in screening for specific conditions demonstrates that in highly specialized tasks (detecting diabetic retinopathy, identifying early signs of lung cancer on CT scans), AI systems achieve sensitivity and specificity comparable to or exceeding those of experienced specialists (S011). Crucially, these results were obtained under conditions where physicians did not understand the algorithm's internal logic but could evaluate its outputs and integrate them into the diagnostic process.

- The system operates on a narrow, well-defined task

- Outputs are validated on independent cohorts

- Physicians can evaluate results in the context of clinical presentation

- The mechanism remains opaque, but this doesn't reduce utility
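Sensitivity and specificity are computed from a system's outputs and the ground truth alone, which is why they can be validated while the mechanism stays opaque. A minimal sketch, with hypothetical counts standing in for an independent validation cohort (they are not taken from S011):

```python
def sensitivity(tp: int, fn: int) -> float:
    """True positive rate: share of diseased cases the system flags."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """True negative rate: share of healthy cases the system clears."""
    return tn / (tn + fp)


# Hypothetical counts from an independent validation cohort of 1,000 patients.
tp, fn = 90, 10   # 100 patients with the condition
tn, fp = 850, 50  # 900 patients without it

print(f"sensitivity = {sensitivity(tp, fn):.2f}")  # sensitivity = 0.90
print(f"specificity = {specificity(tn, fp):.2f}")  # specificity = 0.94
```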

🧬 Cognitive Task Analysis (CTA) in Surgery: Unpacking the Black Box of Expert Thinking Improves Training

A systematic review of the impact of cognitive task analysis on surgical education shows the other side of the problem: when the "black box" of a surgeon's expert thinking is unpacked through structured analysis of cognitive decision-making processes, training effectiveness increases significantly (S005). A meta-analysis of 12 studies found a large effect size favoring CTA-based training over traditional methods.

This demonstrates that transparency of the expert's thinking process is critically important for knowledge transfer, even if the expert cannot verbalize all their decisions without structured analysis. More details in the Debunking and Prebunking section.
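An "effect size" in meta-analyses of this kind is usually a standardized mean difference. The sketch below computes Hedges' g (Cohen's d with a small-sample correction) for two hypothetical training groups. The scores are invented, not data from S005; which exact effect-size measure that review used is not stated here, and the 0.2/0.5/0.8 labels are Cohen's rough conventional benchmarks.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardized mean difference (treatment minus control) with small-sample correction."""
    pooled_sd = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / (n_t + n_c - 2))
    cohens_d = (mean_t - mean_c) / pooled_sd
    correction = 1 - 3 / (4 * (n_t + n_c) - 9)  # Hedges' approximate correction factor
    return cohens_d * correction


def cohen_label(effect):
    """Cohen's rough conventional benchmarks for |d|."""
    size = abs(effect)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "medium"
    if size >= 0.2:
        return "small"
    return "negligible"


# Hypothetical assessment scores: CTA-trained group vs traditionally trained group.
g = hedges_g(mean_t=79, sd_t=10, n_t=20, mean_c=70, sd_c=10, n_c=20)
print(f"g = {g:.2f} ({cohen_label(g)})")  # g = 0.88 (large)
```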

⚙️ Prognostic Role of MRI: When Opacity of Biological Process Doesn't Hinder Clinical Utility

Research on the prognostic role of magnetic resonance imaging shows that MRI biomarkers can predict disease outcomes with high accuracy, even when the precise biological mechanism linking the visualized feature to the outcome remains unclear (S012). Physicians use these prognostic models without fully understanding the pathophysiology, relying instead on validated correlations.

This is rational use of opacity: the mechanism is unclear, but reproducibility, validation on independent cohorts, and clinical utility are proven.

The Mechanism of Error: How Opacity Exploits Evolutionary Heuristics and Creates the Illusion of Understanding

The black box fallacy doesn't arise in a vacuum—it exploits several fundamental features of human cognition shaped by evolution to solve problems under conditions of limited information and time. More details in the Reality Check section.

🧩 The "Complexity = Competence" Heuristic and Its Evolutionary Roots

In the social environment of our ancestors, complexity of explanation often correlated with expertise: a shaman capable of giving a complex explanation of illness likely possessed more experience than one who gave a simple answer. This heuristic worked because complexity was a costly signal—difficult to fake without real knowledge.

But in the modern world, complexity is easily imitated: pseudoscientific jargon, complex diagrams, multi-step algorithms can create the appearance of expertise without its substance. System opacity activates this heuristic: "I don't understand it, therefore it's complex, therefore the creators are competent."

Complexity has ceased to be a signal of competence—it has become a tool of manipulation. Opacity allows imitation to pass for reality.

🔁 The Illusion of Explanatory Depth and Overestimation of One's Own Understanding

People systematically overestimate the depth of their understanding of causal mechanisms. When a person can name a system ("it's a neural network," "it's a quantum computer," "it's blockchain"), they often mistakenly believe they understand how it works.

System opacity paradoxically strengthens this illusion: the absence of details allows gaps to be filled with simplified mental models that seem sufficient. A person doesn't recognize the boundaries of their ignorance because the system provides no information that could expose those boundaries.

- Named the system—felt understanding

- Opacity conceals gaps in that understanding

- Absence of contradictory data reinforces the illusion

- Critical thinking shuts down

⚠️ Trust Asymmetry: Why It's Easier to Believe in Magic Than to Check Statistics

Verifying empirical data about a system's effectiveness requires cognitive effort: you need to find studies, evaluate their methodology, understand statistics, consider context. Accepting on faith or rejecting based on opacity requires zero effort.

Evolutionarily, we're wired to minimize cognitive expenditure. Opacity creates asymmetry: the path of least resistance is an extreme assessment (complete trust or complete rejection), not laborious analysis of evidence.

| Scenario | Cognitive Cost | Outcome |
|---|---|---|
| Check research on effectiveness | High | Informed judgment |
| Believe opacity is a signal of quality | Zero | Illusion of understanding |
| Reject system as a "black box" | Zero | Unfounded skepticism |

🧬 The Context Confounder: When Opacity Correlates with Novelty and Task Complexity

Opaque systems are often applied in new, complex domains where established evaluation standards don't yet exist. This creates a confounder: poor results may be a consequence not of opacity, but of technology immaturity or incorrect application.

Good results may be a consequence not of system quality, but of careful selection of tasks where it works. Without controlling for this confounder, it's impossible to separate the effect of opacity from the effect of novelty. This is especially critical in domains where technological mysticism has already created fertile ground for overestimating capabilities.
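Controlling for the confounder means comparing systems within strata of task novelty rather than in aggregate. The hypothetical counts below (invented for illustration) show how badly the aggregate can mislead: the opaque systems do better within every stratum yet worse overall, because they were mostly deployed on novel tasks. This pattern is known as Simpson's paradox.

```python
# Hypothetical (successes, trials) by system type and task novelty.
OUTCOMES = {
    ("opaque", "novel"): (60, 100),
    ("opaque", "established"): (18, 20),
    ("transparent", "novel"): (10, 20),
    ("transparent", "established"): (85, 100),
}


def stratum_rate(system: str, task: str) -> float:
    """Success rate of one system type on one task type."""
    successes, trials = OUTCOMES[(system, task)]
    return successes / trials


def pooled_rate(system: str) -> float:
    """Success rate ignoring task novelty (the confounded comparison)."""
    pairs = [counts for (name, _task), counts in OUTCOMES.items() if name == system]
    successes = sum(s for s, _ in pairs)
    trials = sum(n for _, n in pairs)
    return successes / trials


for system in ("opaque", "transparent"):
    print(f"{system:<11} pooled: {pooled_rate(system):.2f}")
for task in ("novel", "established"):
    print(f"{task:<11} opaque {stratum_rate('opaque', task):.2f} "
          f"vs transparent {stratum_rate('transparent', task):.2f}")
```

Pooled, the opaque systems look worse (0.65 vs 0.79); within each stratum they look better (0.60 vs 0.50 on novel tasks, 0.90 vs 0.85 on established ones).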

Where the Evidence is Strong and Where Gaps Remain: An Honest Analysis of Current Data Limitations

Critical analysis requires acknowledging not only what we know, but also what we don't know. Systematic reviews and meta-analyses have their own limitations that must be considered when interpreting results. Learn more in the Logic and Probability section.

📊 Publication Bias: Only Successes Are Visible

Studies demonstrating high effectiveness of AI systems are published more frequently than studies with null or negative results. This creates systematic bias in meta-analyses: effectiveness estimates may be inflated by 20–40% depending on the field.

The problem is compounded by the fact that many AI systems are developed by commercial companies that control data access and may selectively publish results. Without access to protocols from unpublished studies, it's impossible to assess the true scale of this bias.

When only successes are visible, the map of effectiveness becomes a map of marketing, not reality.
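The inflation mechanism can be simulated. The sketch below assumes many studies of the same system, each estimating a true effect of 0.2 with a standard error of 0.15 (both numbers hypothetical), and a publication filter that admits only results significantly above zero. It illustrates the direction of the bias; it does not reproduce the 20–40% figure, which depends on the field.

```python
import random
import statistics

random.seed(0)  # fixed seed so the simulation is reproducible

TRUE_EFFECT = 0.2      # hypothetical true effect of the system
STANDARD_ERROR = 0.15  # hypothetical sampling error of each study
N_STUDIES = 1000

# Every study that was run, published or not.
estimates = [random.gauss(TRUE_EFFECT, STANDARD_ERROR) for _ in range(N_STUDIES)]

# Publication filter: only results significantly above zero (z > 1.96) appear in print.
published = [e for e in estimates if e / STANDARD_ERROR > 1.96]

print(f"true effect:                {TRUE_EFFECT:.3f}")
print(f"mean of all studies:        {statistics.mean(estimates):.3f}")
print(f"mean of published studies:  {statistics.mean(published):.3f}")
print(f"share of studies published: {len(published) / N_STUDIES:.0%}")
```

Because every published estimate must exceed 1.96 × 0.15 ≈ 0.29, a naive average of the published record cannot fall below that value, even though the true effect is 0.2.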

🔬 Heterogeneity: Different Studies Speak Different Languages

Different studies use different effectiveness metrics, different populations, different application conditions (S001, S003, S007). This makes direct comparison and generalization of results difficult.

For AI systems, the problem is particularly acute: there's no consensus on how to evaluate "explainability" or "transparency," and no standard benchmarks for comparing systems in real clinical settings. One researcher might consider a system "explainable" if it outputs coefficients; another may demand complete tracing of its decision logic.

- Metric A: accuracy on test set

- Metric B: sensitivity and specificity

- Metric C: decision-making time

- Metric D: concordance with expert opinion

- Metric E: user satisfaction

Each metric answers a different question. Without standardization, it's impossible to say which system is better.
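To see how metrics can disagree, take two hypothetical systems evaluated on the same 1,000 cases (counts invented for illustration). System A has the higher accuracy; system B catches far more of the actual cases. Which one is "better" depends on whether a missed case or a false alarm is costlier.

```python
from typing import NamedTuple


class Confusion(NamedTuple):
    """Confusion-matrix counts for one system on one test set."""
    tp: int  # diseased, flagged
    fn: int  # diseased, missed
    tn: int  # healthy, cleared
    fp: int  # healthy, flagged (false alarm)


def metrics(c: Confusion) -> dict:
    total = c.tp + c.fn + c.tn + c.fp
    return {
        "accuracy": (c.tp + c.tn) / total,
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
    }


# Hypothetical results of two systems on the same 1,000 cases (100 diseased).
SYSTEMS = {
    "A": Confusion(tp=60, fn=40, tn=880, fp=20),
    "B": Confusion(tp=90, fn=10, tn=810, fp=90),
}


def best_system(metric: str) -> str:
    """Name of the system that scores highest on a single metric."""
    return max(SYSTEMS, key=lambda name: metrics(SYSTEMS[name])[metric])


for metric in ("accuracy", "sensitivity", "specificity"):
    print(f"{metric:<12} best: {best_system(metric)}")
```

A ranks first on accuracy (0.94 vs 0.90) and specificity; B ranks first on sensitivity (0.90 vs 0.60).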

⚠️ The Gap Between Laboratory and Clinic

Most studies are conducted under controlled conditions: carefully curated datasets, standardized protocols, absence of time pressure. Real clinical practice differs radically: incomplete data, non-standard cases, need for rapid decisions, integration with existing workflows.

Effectiveness demonstrated in research may not reproduce in real-world conditions. Systematic data on long-term outcomes of AI system implementation in routine practice remains insufficient. This doesn't mean systems are ineffective—it means we don't know how well they work where they're actually used.

| Parameter | Controlled Study | Real Practice |
|---|---|---|
| Data Quality | High, standardized | Incomplete, variable |
| Time Pressure | Absent | High |
| Workflow Integration | Ideal | Often conflicting |
| Case Selection | Representative | Biased (severe cases) |

🧪 Expertise Atrophy: Theory Without Data

The argument that delegating decisions to opaque systems atrophies expertise is theoretically plausible but has little empirical support. There are no long-term studies tracking changes in the cognitive skills of physicians who use decision support systems compared with a control group.

There's no data on how the ability to make decisions in non-standard situations changes after years of using AI assistants. This is a critical gap: if atrophy is real, its effects may manifest only after years, when rolling back becomes impossible. For now we can only speculate, drawing on research on cognitive biases and evolutionary psychology.

We know a plausible mechanism, but not the scale. This is more dangerous than knowing nothing.

Strengths of the evidence base: effectiveness of AI systems in narrow, well-defined tasks (image-based diagnosis, screening) is confirmed by numerous studies. Weaknesses: long-term effects, impact on cognitive skills, real clinical effectiveness, error mechanisms in decision delegation—all remain in the shadows.

Cognitive Anatomy of the Error: Which Biases Are Exploited and How They Interact

The black box fallacy is not an isolated distortion, but a node in a network of interconnected cognitive vulnerabilities. Understanding this network is necessary for developing effective countermeasures. More details in the section AI Errors and Biases.

🧩 Technology Halo Effect: Transfer of Prestige to a Specific System

If AI systems in general are associated with progress and innovation, this positive evaluation transfers to a specific system without analyzing its individual characteristics. "It's AI" becomes sufficient grounds for trust.

Opacity amplifies the effect: the absence of details prevents discovering that a specific system may be poorly designed, insufficiently validated, or misapplied. This is especially dangerous in the context of techno-esotericism, where technological language itself becomes a source of authority.

⚠️ Agency Attribution Error: Ascribing Intentions and Understanding to the System

People tend to attribute agency (intentions, goals, understanding) to systems demonstrating complex behavior. When an AI chatbot gives an empathetic response, it's easy to forget that this is the result of statistical optimization, not emotional understanding.

Opacity reinforces this illusion: if we don't see the mechanism, it's easier to imagine there's "understanding" inside. This leads to overestimating the system's reliability—we trust it as we would trust an understanding expert, even though the system lacks the flexibility and contextual understanding of a human.

🔁 Confirmation Loop: How Using the System Reinforces Trust in It

Each successful application of the system (even a random coincidence) is interpreted as confirmation of its competence. Failures are reattributed: user error, wrong context, insufficiently precise query—anything except the system itself.

- User applies the system and gets a result

- Result is interpreted as success (even if it's coincidence)

- Trust in the system grows

- User applies the system in more complex situations

- Failures are explained by external factors, not system limitations

- Cycle repeats, trust strengthens

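Opacity makes this cycle hard to escape: with no view of the mechanism, the only check left is a systematic record of outcomes, and without one there is no way to tell whether the system actually works better than chance or whether its apparent success is the availability heuristic at work, with successes remembered and failures forgotten.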
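A written log of every use, failures included, can be tested against chance. A minimal sketch using an exact one-sided binomial test; the logs are hypothetical, and the chance rate of 0.5 assumes a yes/no task with balanced answers.

```python
from math import comb


def p_value_vs_chance(successes: int, trials: int, chance_rate: float) -> float:
    """Exact one-sided binomial p-value: P(X >= successes) for a system that only guesses."""
    return sum(
        comb(trials, k) * chance_rate**k * (1 - chance_rate) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Hypothetical logs of every use, failures included.
short_log = (14, 20)  # a 70% success rate over 20 uses
long_log = (56, 80)   # the same 70% rate over 80 uses

for successes, trials in (short_log, long_log):
    p = p_value_vs_chance(successes, trials, chance_rate=0.5)
    print(f"{successes}/{trials} correct: p = {p:.4f}")
```

With 20 logged uses, a 70% hit rate is not clearly distinguishable from guessing (p is about 0.058); with 80 uses at the same rate, it is.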

🎯 Interaction of Biases: Synergy of Vulnerabilities

These three mechanisms don't operate independently. The halo effect creates initial trust, agency attribution error makes the system psychologically "alive" and responsible, and the confirmation loop reinforces both effects.

Add base rate neglect—the user doesn't know how often the system fails overall—and you get a perfect storm: the system is perceived as competent, understanding, and reliable, when in reality it's just a black box that sometimes produces useful results.

Opacity is not simply an absence of information. It's an active catalyst that transforms normal cognitive processes into systematic errors of judgment.
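The base rate neglect in this perfect storm can be made concrete with Bayes' theorem. In this hypothetical sketch, the same system with 95% sensitivity and 95% specificity is used in two settings that differ only in how common the condition is; the numbers are illustrative and not drawn from the cited studies.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Bayes' theorem: probability that a positive output is a true positive."""
    true_positives = sensitivity * prevalence
    false_positives = (1 - specificity) * (1 - prevalence)
    return true_positives / (true_positives + false_positives)


# Hypothetical system with 95% sensitivity and 95% specificity in two settings.
for prevalence in (0.30, 0.01):
    ppv = positive_predictive_value(0.95, 0.95, prevalence)
    print(f"prevalence {prevalence:.0%}: PPV = {ppv:.2f}")
# prevalence 30%: PPV = 0.89
# prevalence 1%: PPV = 0.16
```

In the low-prevalence setting, more than four in five positive outputs are false alarms, although nothing about the system itself has changed.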