What survivorship bias is and why it's invisible to those caught in it

Survivorship bias is a form of selection bias in which conclusions are drawn only from the objects that passed through a selection process, while those that didn't make it through are ignored entirely (S011). The key word is "bias": this isn't a random oversight but a structural defect in the method of collecting and interpreting data.

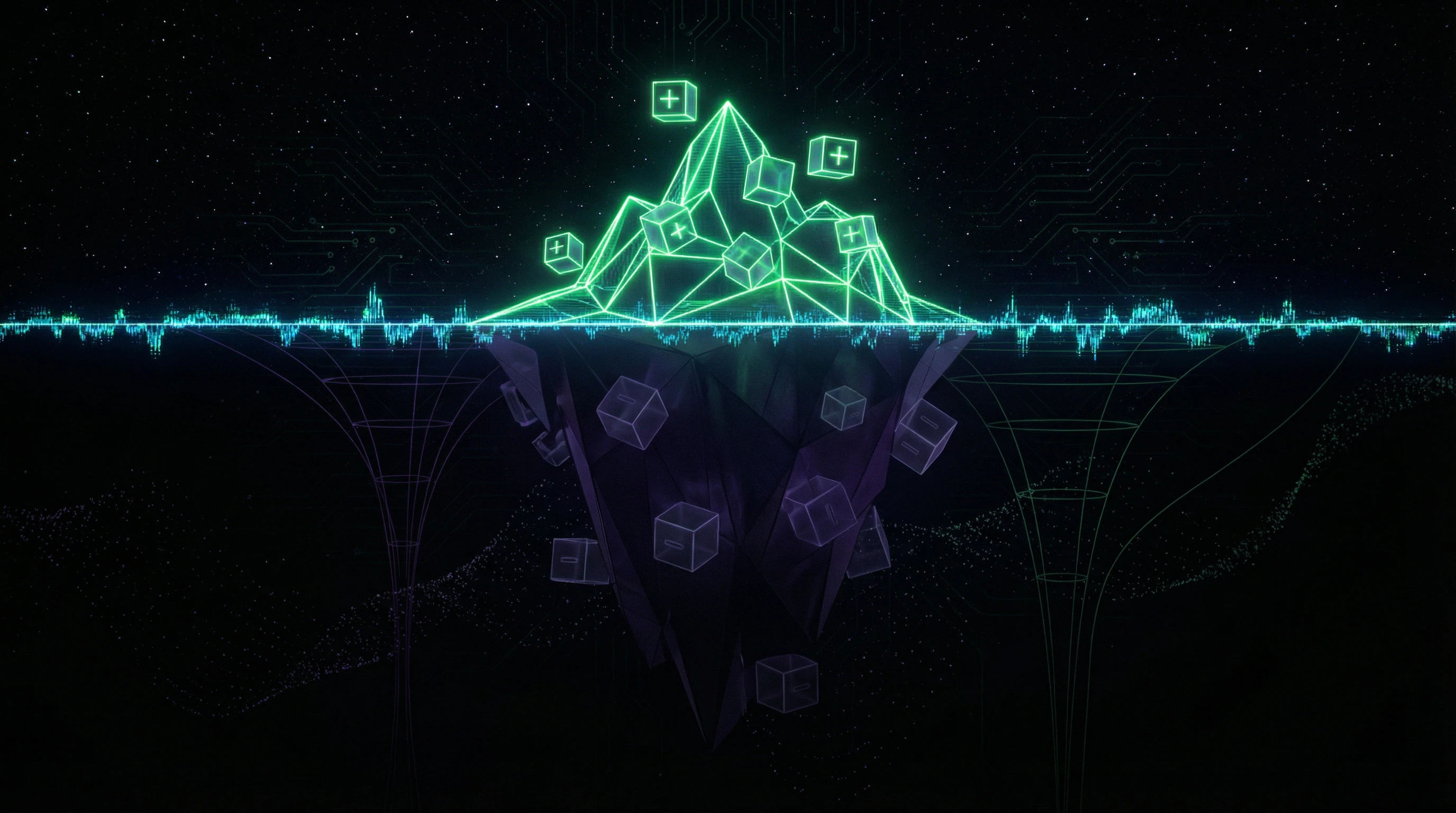

Successful objects remain visible. Failed ones disappear. The researcher sees only the "survivors" and builds a model of reality on an incomplete sample.

The invisibility mechanism: availability asymmetry

Successful companies, people, and planes continue to exist — they're written about, studied. Failed ones disappear: companies shut down and delete their websites, unsuccessful people don't give interviews, shot-down planes don't return to base (S011).

This creates a fundamental asymmetry. What's visible seems typical, though in reality it's a sample from a sample: only those who survived the selection filter.

Classic example: Abraham Wald's planes

During World War II, American military analysts examined damage on returned bombers. Bullet holes clustered on wings, tails, and fuselage — engineers proposed reinforcing exactly these zones (S011).

Statistician Abraham Wald noticed a critical error: they were analyzing only planes that returned. The aircraft that were shot down had presumably been hit elsewhere, in the places where the returned planes had no bullet holes. Those zones, the engines and cockpit, were the ones that required reinforcement, because hits there were lethal.

- Visible damage (wings, tail): planes survived despite it, meaning these zones are less critical.

- Invisible damage (engines, cockpit): planes with such damage didn't return, so they're absent from the sample.
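The filtering effect in the Wald example can be reproduced with a small simulation. This is a minimal sketch: the zone list, the uniform hit distribution, and the `LETHALITY` values are invented for illustration and are not historical data.

```python
import random

# Hypothetical numbers, chosen only for illustration (not historical data).
ZONES = ["wings", "tail", "fuselage", "engines", "cockpit"]
LETHALITY = {"wings": 0.05, "tail": 0.05, "fuselage": 0.10,
             "engines": 0.80, "cockpit": 0.70}  # P(plane is lost | hit in zone)

def simulate(n_planes=20_000, seed=0):
    """Give each plane one hit in a random zone; count hits on all planes and on returned planes."""
    rng = random.Random(seed)
    all_hits = {z: 0 for z in ZONES}
    returned_hits = {z: 0 for z in ZONES}
    for _ in range(n_planes):
        zone = rng.choice(ZONES)
        all_hits[zone] += 1
        if rng.random() >= LETHALITY[zone]:  # plane survives and returns to base
            returned_hits[zone] += 1
    return all_hits, returned_hits

all_hits, returned_hits = simulate()
n_all = sum(all_hits.values())
n_returned = sum(returned_hits.values())
for z in ZONES:
    print(f"{z:9s} all planes: {all_hits[z] / n_all:.2f}   "
          f"returned only: {returned_hits[z] / n_returned:.2f}")
```

Under these assumptions every zone is hit equally often, yet engine and cockpit hits are rare among the returned planes, which is the pattern the analysts initially misread.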

Three structural components of the bias

- Selection process — systematically removes a certain category of objects from the observed sample (death, bankruptcy, refusal to participate).

- Incomplete visibility — the researcher lacks access to data about filtered-out objects or doesn't recognize their existence.

- False generalization — conclusions drawn from "survivors" are extended to the entire population, including those who didn't survive (S010), (S011).

Five Most Compelling Arguments That Survivorship Bias Is a Real Threat to Decision-Making

🔥 Argument One: Empirical Evidence from Military Aviation History

The Abraham Wald case is not merely a historical anecdote, but a documented example of how survivorship bias could have led to mass pilot casualties. Military engineers had precise data on damage locations on returned aircraft, but this data was systematically biased (S011).

Had the engineers' recommendations been implemented without Wald's correction, armor would have been installed in the wrong places, failing to protect against lethal hits. This case demonstrates that even with large volumes of quantitative data, survivorship bias can remain undetected without specialized statistical analysis.

Data on returned aircraft is data on survivors. Information about downed aircraft is absent by definition.

📊 Argument Two: Systematic Reviews and Meta-Analyses Are Subject to Publication Bias

In scientific literature, survivorship bias manifests as publication bias—a systematic tendency to publish studies with positive or statistically significant results while ignoring studies with negative or null findings (S009, S012).

Meta-analyses that synthesize data from published studies automatically inherit this systematic error: they analyze only "surviving" studies that passed through the filter of peer review and editorial policy (S001, S005). Research shows that effects estimated in meta-analyses may be inflated by 30–50% due to the absence of unpublished negative results (S009).

| Study Type | Publication Probability | Impact on Meta-Analysis |
|---|---|---|
| Positive result (p < 0.05) | ~85–95% | Effect overestimation |
| Null result | ~10–20% | Systematic bias |
| Negative result | ~5–15% | Harm underestimation |
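To see how a publication filter of this kind inflates a pooled estimate, consider the following sketch. It uses the midpoints of the table's probability ranges; everything else is an assumption made for illustration: the true effect of 0.2, the study sizes, the rule that "negative" means a significantly negative estimate, and the use of an unweighted mean in place of real meta-analytic pooling.

```python
import random
import statistics

# Midpoints of the publication-probability ranges in the table above.
PUBLISH_PROB = {"positive": 0.90, "null": 0.15, "negative": 0.10}

def classify(estimate, se):
    """Label a study by its two-sided result at the 5% level (assumed rule)."""
    z = estimate / se
    if z > 1.96:
        return "positive"
    if z < -1.96:
        return "negative"
    return "null"

def simulate(true_effect=0.2, n_studies=5_000, seed=1):
    """Return (mean of all study estimates, mean of published estimates)."""
    rng = random.Random(seed)
    all_estimates, published = [], []
    for _ in range(n_studies):
        n_per_arm = rng.randint(20, 200)
        se = (2 / n_per_arm) ** 0.5            # approximate SE of a standardized mean difference
        estimate = rng.gauss(true_effect, se)  # what this study happens to observe
        all_estimates.append(estimate)
        if rng.random() < PUBLISH_PROB[classify(estimate, se)]:
            published.append(estimate)
    return statistics.mean(all_estimates), statistics.mean(published)

mean_all, mean_published = simulate()
print(f"all studies: {mean_all:.3f}   published only: {mean_published:.3f}")
```

The mean over all simulated studies stays close to the true effect, while the mean over published studies is noticeably higher, even though no individual study did anything wrong.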

🧪 Argument Three: Medical Studies with High Participant Attrition Yield Distorted Results

In clinical trials, survivorship bias occurs when participants drop out non-randomly—for example, due to drug side effects or lack of improvement (S012). If analysis is conducted only on those who completed the study ("per-protocol analysis"), results will be systematically biased in favor of intervention effectiveness: the sample retains only those who tolerated treatment well and for whom it worked (S010, S012).

Medical research design requires "intention-to-treat" analysis, which includes all randomized participants regardless of whether they completed the study, precisely to minimize survivorship bias (S012).

- Per-protocol analysis: analysis of completers only. Result: bias favoring the drug. Trap: invisible side effects.

- Intention-to-treat analysis: analysis of all randomized participants. Result: realistic assessment. Protection: includes dropouts and withdrawals.
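The difference between the two analyses can be illustrated with a simulated trial in which the drug has no effect at all. This is a sketch under stated assumptions: the dropout probabilities and the -0.5 cutoff are hypothetical, and the outcomes of dropouts are assumed to be known, which in a real trial would require follow-up or imputation.

```python
import random
import statistics

def simulate_trial(n_per_arm=5_000, true_effect=0.0, seed=2):
    """Return (intention-to-treat difference, per-protocol difference) in mean outcome."""
    rng = random.Random(seed)
    arms = {"drug": [], "placebo": []}  # each entry: (outcome, completed)
    for arm in arms:
        for _ in range(n_per_arm):
            outcome = rng.gauss(true_effect if arm == "drug" else 0.0, 1.0)
            if arm == "drug" and outcome < -0.5:
                completed = rng.random() > 0.6  # poor responders on the drug often quit
            else:
                completed = rng.random() > 0.1  # background dropout unrelated to outcome
            arms[arm].append((outcome, completed))

    def mean_diff(only_completers):
        means = {}
        for arm, rows in arms.items():
            kept = [o for o, done in rows if done or not only_completers]
            means[arm] = statistics.mean(kept)
        return means["drug"] - means["placebo"]

    return mean_diff(only_completers=False), mean_diff(only_completers=True)

itt, per_protocol = simulate_trial()
print(f"intention-to-treat: {itt:+.3f}   per-protocol: {per_protocol:+.3f}")
```

With these settings the intention-to-treat difference stays near zero, while the per-protocol difference is clearly positive, purely because poor responders left the drug arm.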

🧬 Argument Four: Business Literature Systematically Overestimates Successful Companies' Strategies

Books and articles about business strategies almost always analyze successful companies—Apple, Google, Amazon—and identify their common traits: innovation, customer focus, bold decisions (S011). The problem is that thousands of bankrupt companies possessed the same characteristics, but their stories don't make it into bestsellers.

A study analyzing both successful and failed startups with identical strategies might show that "innovation" or "boldness" are not predictors of success, but merely necessary yet insufficient conditions. Without a control group of "casualties," any conclusions about causes of success remain speculative.

A successful company with an innovative strategy is visible. A failed company with the same strategy is forgotten. The conclusion about causes of success is an illusion.

🔁 Argument Five: Cognitive Psychology Confirms the Naturalness of This Error

Survivorship bias is not the result of carelessness, but a consequence of fundamental features of human cognition. We are evolutionarily tuned to notice the presence of objects, not their absence; we better remember striking successes than quiet failures; we tend to construct narratives based on available information without considering what information might have been lost (S011).

These cognitive features make survivorship bias universal and difficult to detect without specialized methodological tools. Even professional researchers regularly fall into this trap if they don't apply explicit protocols for checking systematic selection errors (S010, S012).

- The brain notices presence (visible successes), ignores absence (invisible failures)

- Memory encodes vivid events, erases routine failures

- Narrative is constructed from available data, without questioning what's missing

- Specialized methods (hypothesis registration, dropout analysis) require conscious effort

Evidence Base: What Systematic Reviews and Meta-Analyses Tell Us About Survivorship Bias in Scientific Research

📊 Meta-Analyses as Both Tool and Victim of Survivorship Bias

Systematic reviews and meta-analyses represent the highest level of evidence in the hierarchy of scientific data, as they synthesize results from multiple primary studies to obtain more precise effect estimates (S001, S005, S007). However, the meta-analysis process itself is vulnerable to survivorship bias at multiple levels.

First, meta-analyses include only published studies that have passed through the peer review filter—a classic case of analyzing "survivors" (S009). Second, even among published studies, some may be inaccessible due to language barriers, paywalls, or simply because they're published in obscure journals (S001).

| Filtering Level | Selection Mechanism | Resulting Bias |
|---|---|---|
| Peer Review | Rejection of studies with negative results | Overestimation of intervention effects |
| Accessibility | Paywalled journals, language barriers | Underrepresentation of studies from developing countries |
| Visibility | Publication in obscure outlets | Insufficient inclusion in meta-analyses |

🧾 Quantifying Publication Bias in Medical Meta-Analyses

Studies analyzing publication bias in medical meta-analyses show that intervention effects are systematically overestimated. Methods for assessing publication bias include funnel plots, Egger's test, and trim-and-fill analysis (S009).

When these methods are applied to existing meta-analyses, they often reveal asymmetry in the distribution of study results, indicating the absence of small studies with negative findings. After statistical correction for publication bias, effect estimates may decrease by 20–40%, and in some cases, statistically significant effects disappear entirely (S009).
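Of the methods named above, Egger's test is the simplest to sketch: each study's effect divided by its standard error is regressed on its precision (1 / standard error), and an intercept far from zero signals funnel-plot asymmetry. The implementation below is a minimal ordinary-least-squares version, and the six studies are made-up numbers in which smaller studies report larger effects.

```python
import math

def egger_intercept(effects, std_errors):
    """Egger's regression: regress effect/SE on 1/SE; return (intercept, t-statistic of intercept)."""
    y = [e / s for e, s in zip(effects, std_errors)]  # standard normal deviates
    x = [1 / s for s in std_errors]                   # precisions
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    sigma2 = residual_ss / (n - 2)                    # residual variance
    se_intercept = math.sqrt(sigma2 * (1 / n + mean_x ** 2 / sxx))
    return intercept, intercept / se_intercept

# Hypothetical meta-analysis: the less precise the study, the larger the reported effect.
std_errors = [0.05, 0.10, 0.15, 0.20, 0.25, 0.30]
effects = [0.255, 0.29, 0.365, 0.38, 0.475, 0.47]

intercept, t_stat = egger_intercept(effects, std_errors)
print(f"Egger intercept: {intercept:.2f}   t-statistic: {t_stat:.1f}")
```

In practice the t-statistic would be compared against a t distribution with n - 2 degrees of freedom, and with only a handful of studies the test has limited power.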

A meta-analysis without correction for publication bias isn't a synthesis of evidence; it's a synthesis of published successes. By the estimates above, the gap between the two can reach tens of percent.

🔎 The Problem of Participant Attrition in Clinical Trials

Survivorship bias in clinical trials occurs when participants drop out non-randomly, and analysis is conducted only on those who completed the protocol (S012). Research on study design in medicine emphasizes that high attrition rates (over 20%) are a critical risk factor for result validity (S012).

If dropout reasons are related to the intervention being studied (for example, patients discontinue medication due to side effects or lack of improvement), the remaining sample will be systematically biased toward those who tolerate treatment well and for whom it's effective (S010, S012).

- Determine the study's attrition rate (acceptable: less than 20%)

- Check whether dropout reasons are described for each group

- Assess whether dropout reasons are related to the intervention

- Verify whether intention-to-treat analysis was conducted

- Compare characteristics of dropouts versus completers
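The quantitative items of this checklist are easy to automate. The sketch below covers only the attrition rate per arm and the gap between arms; the `Arm` class, the function name, and the example counts are hypothetical, while the 20% threshold is the one cited in the text.

```python
from dataclasses import dataclass

ATTRITION_THRESHOLD = 0.20  # the 20% cutoff cited in the text

@dataclass
class Arm:
    name: str
    randomized: int
    completed: int

    @property
    def attrition(self) -> float:
        """Share of randomized participants who did not complete the study."""
        return 1 - self.completed / self.randomized

def attrition_report(arms):
    """Return per-arm attrition, the arms above the threshold, and the largest gap between arms."""
    rates = {arm.name: arm.attrition for arm in arms}
    flagged = [name for name, rate in rates.items() if rate > ATTRITION_THRESHOLD]
    gap = max(rates.values()) - min(rates.values())
    return rates, flagged, gap

# Hypothetical trial: 200 participants randomized to each arm.
rates, flagged, gap = attrition_report([Arm("drug", 200, 150), Arm("placebo", 200, 184)])
print(rates, flagged, round(gap, 2))
```

A large gap between arms is a hint that dropout is related to the intervention, which is the situation the remaining checklist items ask the reader to investigate by hand.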

🧪 ALL-IN Meta-Analysis as an Attempt to Overcome Survivorship Bias

The ALL-IN meta-analysis concept (Anytime Live and Leading INterim meta-analysis) represents a methodological innovation aimed at minimizing survivorship bias in evidence synthesis (S002). Traditional meta-analyses are conducted retrospectively, after all included studies are completed, creating a time lag and risk of publication bias.

ALL-IN meta-analysis proposes continuous updating of data synthesis as new results emerge, including interim data from ongoing studies (S002). The key advantage is the ability to update analysis at any time without losing statistical validity, allowing data inclusion before it passes through the publication filter (S002).
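One way to keep an analysis valid under continuous updating is to accumulate evidence as a running product of likelihood ratios (often called e-values) and to reject the null hypothesis once the product reaches 1/α. The sketch below illustrates that general idea on study-level z-scores; it is an assumption that this simplified version resembles the construction in S002, and `delta`, `alpha`, and the z-scores are hypothetical.

```python
import math

def e_value(z, delta):
    """Likelihood ratio of N(delta, 1) against N(0, 1) for one study's z-score."""
    return math.exp(delta * z - delta ** 2 / 2)

def running_evidence(z_scores, delta=0.5, alpha=0.05):
    """Multiply e-values study by study; report when the product first reaches 1/alpha."""
    product, crossed_at = 1.0, None
    history = []
    for i, z in enumerate(z_scores, start=1):
        product *= e_value(z, delta)
        history.append(product)
        if crossed_at is None and product >= 1 / alpha:
            crossed_at = i
    return history, crossed_at

# Hypothetical z-scores arriving one study (or interim look) at a time.
z_scores = [1.2, 0.8, 2.1, 1.5, 1.9, 2.4]
history, crossed_at = running_evidence(z_scores)
print([round(h, 2) for h in history], "threshold crossed at study", crossed_at)
```

Because each e-value has expectation 1 under the null hypothesis, the running product is a nonnegative martingale, and Ville's inequality bounds the chance that it ever reaches 1/α by α. That is why the analysis can be inspected after every new result without inflating the false-positive rate.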

🧬 Survivorship Bias Specifics in Bilingualism Research

Research on sources of invalidity in bilingual development studies reveals specific manifestations of survivorship bias in this field (S010). Many bilingualism studies include only children who successfully acquired two languages, ignoring those who experienced difficulties or abandoned second language learning (S010).

This creates systematic bias in assessing cognitive advantages of bilingualism: if only successful bilinguals enter the sample, any detected cognitive advantages may be selection artifacts rather than consequences of bilingualism itself (S010). The research emphasizes the need to include control groups with unsuccessful second language acquisition attempts for accurate effect assessment.

- Selection Artifact: when differences between groups are explained not by the intervention itself, but by who remained in the sample. In bilingualism: successful bilinguals differ from unsuccessful ones not by language, but by motivation, abilities, and social environment.

- Control Group with Attrition: must include children who began learning a second language but discontinued. Their cognitive profiles will reveal what bilingualism actually provides versus what comes from pre-selection.

🔬 AI Chatbot Empathy: A Meta-Analysis with High Survivorship Bias Risk

A recent systematic review and meta-analysis comparing empathy of AI chatbots and human healthcare providers demonstrates both the potential and limitations of the meta-analytic approach in the context of survivorship bias (S004). Analysis of 15 studies showed that AI chatbots (primarily ChatGPT-3.5/4) are perceived as more empathetic than humans, with a standardized mean difference of 0.87 (95% CI, 0.54–1.20), equivalent to approximately two points on a 10-point scale (S004).

However, the authors note critical limitations: all studies are based on text scenarios that ignore nonverbal cues, and empathy assessment was conducted through proxy evaluators rather than actual patients (S004). Moreover, studies where AI showed worse results or technical failures may not have reached publication—a classic case of publication bias.
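The reported effect size can be unpacked with a little arithmetic. The three input numbers come from the text (S004); the implied standard deviation, standard error, and z-score are derived here for illustration and are not values reported by the source.

```python
SMD = 0.87
CI_LOW, CI_HIGH = 0.54, 1.20
RAW_DIFFERENCE = 2.0  # "approximately two points" on a 10-point scale

# SMD = raw difference / pooled SD, so the pooled SD implied by the two figures is:
implied_sd = RAW_DIFFERENCE / SMD

# A 95% confidence interval spans roughly +/- 1.96 standard errors around the estimate.
standard_error = (CI_HIGH - CI_LOW) / (2 * 1.96)
z_score = SMD / standard_error

print(f"implied pooled SD: {implied_sd:.2f} points")
print(f"standard error of the SMD: {standard_error:.3f}")
print(f"z-score: {z_score:.2f}")
```

The pooled estimate is statistically well separated from zero, but that says nothing about which studies never reached publication, which is exactly the limitation noted above.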

The Mechanism of Error: Why Correlation Among "Survivors" Doesn't Mean Causation

🔁 False Causality: When Common Traits of Successful Entities Aren't Causes of Success

The central problem of survivorship bias is false causal attribution. When we see that all successful startups have charismatic founders, we conclude: charisma causes success.

But if thousands of failed startups also had charismatic founders, then charisma alone cannot explain success (S011). At most it is a necessary but insufficient condition, and it may be nothing more than a coincidental correlation. Without analyzing "failed" entities, it's impossible to distinguish true causes from simple coincidences.

Correlation among survivors is not proof of causation. It's proof that you're only looking at half the data.
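The gap between "most survivors have the trait" and "the trait improves survival" shows up as soon as failures are counted. The counts below are hypothetical and chosen so that charisma has no effect on survival.

```python
# Hypothetical counts for 1,000 startups; none of these numbers come from a real dataset.
counts = {
    ("charismatic", "survived"): 40,
    ("charismatic", "failed"): 760,
    ("not charismatic", "survived"): 10,
    ("not charismatic", "failed"): 190,
}

def conditional_rates(counts):
    """Return P(trait | survived), P(survived | trait), P(survived | no trait)."""
    traits = ("charismatic", "not charismatic")
    survived = {t: counts[(t, "survived")] for t in traits}
    totals = {t: counts[(t, "survived")] + counts[(t, "failed")] for t in traits}
    p_trait_given_survival = survived["charismatic"] / sum(survived.values())
    p_survival_given_trait = survived["charismatic"] / totals["charismatic"]
    p_survival_without_trait = survived["not charismatic"] / totals["not charismatic"]
    return p_trait_given_survival, p_survival_given_trait, p_survival_without_trait

p_trait, p_with, p_without = conditional_rates(counts)
print(f"P(charismatic | survived) = {p_trait:.2f}")
print(f"P(survived | charismatic) = {p_with:.2f}")
print(f"P(survived | not charismatic) = {p_without:.2f}")
```

Looking only at survivors, 80% have charismatic founders. Looking at everyone, the survival rate is 5% with or without charisma, so in this constructed example the trait predicts nothing.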

🧬 Confounders and Reverse Causality in "Survivor" Analysis

Survivorship bias is compounded by confounders—third variables that affect both the probability of survival and the observed characteristics.

Successful companies have more resources to hire PR specialists, making them more visible. Conclusion: "good PR causes success." Reality: both success and PR may be consequences of having resources (S011). Reverse causality adds confusion: successful people are confident, but success may breed confidence rather than the other way around.

| What we see | What we think | What's actually happening |
|---|---|---|
| Successful founders are charismatic | Charisma → success | Resources → visibility → charisma appears greater |
| Successful people are confident | Confidence → success | Success → confidence |
| Surviving companies had a plan | Plan → survival | Luck + resources → plan appears brilliant |
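The PR example can be turned into a small simulation in which resources drive both PR quality and success, while PR itself does nothing. All probabilities below are assumptions chosen for illustration.

```python
import random

def simulate(n=50_000, seed=3):
    """Generate (rich, good_pr, success) rows where only resources influence success."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        rich = rng.random() < 0.3
        good_pr = rng.random() < (0.8 if rich else 0.2)    # resources buy PR
        success = rng.random() < (0.4 if rich else 0.05)   # PR plays no role here
        rows.append((rich, good_pr, success))
    return rows

def success_rate(rows, good_pr, rich=None):
    """Success rate among companies with the given PR status, optionally within one resource stratum."""
    subset = [s for r, p, s in rows if p == good_pr and (rich is None or r == rich)]
    return sum(subset) / len(subset)

rows = simulate()
print(f"overall:     good PR {success_rate(rows, True):.2f}   weak PR {success_rate(rows, False):.2f}")
for rich in (True, False):
    label = "well funded" if rich else "underfunded"
    print(f"{label:12s} good PR {success_rate(rows, True, rich):.2f}   "
          f"weak PR {success_rate(rows, False, rich):.2f}")
```

Overall, companies with good PR succeed far more often, but within each resource stratum the difference largely vanishes. Comparing like with like is what separates a confounder from a cause.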

📊 Statistical Power and Type I Errors in Incomplete Sample Analysis

Analyzing only "survivors" does not add real statistical power; it inflates apparent significance and raises the probability of false-positive (Type I) results (S009, S012).

When participants drop out non-randomly, mostly those who respond well to the intervention remain. The sample mean shifts toward responders and variability shrinks, which can lower the standard error and push the result toward statistical significance even if the true effect in the full population is absent or much smaller.

- Full sample: high variability, real effect is honestly visible

- Failures filtered out: variability dropped, noise disappeared

- Remaining data appears statistically significant

- Conclusion is erroneous: the effect was an artifact of attrition, not reality

This is especially dangerous in medicine and psychology, where ignoring base rates already distorts perception. Add survivorship bias to this, and you get systematic overestimation of treatment or intervention effectiveness.
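The attrition mechanism described in this section can be sketched with a one-sample example in which the true effect is exactly zero. The sample size, the seed, and the hard dropout cutoff at -0.5 are simplifying assumptions; real dropout is rarely this clean.

```python
import math
import random
import statistics

def t_statistic(values):
    """One-sample t statistic for the hypothesis that the true mean is zero."""
    return statistics.mean(values) / (statistics.stdev(values) / math.sqrt(len(values)))

rng = random.Random(4)
full_sample = [rng.gauss(0.0, 1.0) for _ in range(200)]  # true effect is zero
completers = [x for x in full_sample if x > -0.5]         # worst responders drop out

for label, sample in (("full sample", full_sample), ("completers", completers)):
    print(f"{label:12s} n={len(sample):3d}  mean={statistics.mean(sample):+.2f}  "
          f"sd={statistics.stdev(sample):.2f}  t={t_statistic(sample):+.2f}")
```

After filtering, the mean moves away from zero and the standard deviation shrinks, so the t statistic becomes large even though the sample is smaller and nothing real was measured.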

Conflicts and Uncertainties: Where Sources Diverge and Why It Matters

🧩 Disagreements on the Scale of Publication Bias

Sources diverge in assessing the severity of publication bias across different scientific fields. Some studies claim that in medicine it inflates effects by 30–50% (S009), while others point to more moderate estimates or note that in large clinical trials with mandatory protocol registration the problem is less pronounced (S012).

These disagreements reflect real heterogeneity: the scale of survivorship bias depends on the research field, funding sources, and publication culture. This isn't a flaw in science; it's a sign that the problem is more complex than a universal formula.

| Field / Context | Bias Estimate | Factor Influencing Scale |
|---|---|---|
| Medicine (general) | 30–50% inflation | Commercial funding, publication pressure |
| Large clinical trials (government funding) | More moderate | Mandatory protocol registration |
| Psychology, sociology | High (unspecified) | Low barriers to entry, multiple hypotheses |

🔬 Debates on Methods for Correcting Publication Bias in Meta-Analyses

The methodological debate on correcting publication bias has divided researchers into two camps. Traditional methods (trim-and-fill, Egger's test) are criticized for low statistical power and high false-positive rates (S009).

Modern approaches (selection models, p-curve analysis) offer alternatives but require additional assumptions about the publication selection mechanism (S009). The ALL-IN meta-analysis concept goes further—avoiding the problem by including pre-publication data, but requiring radical changes in research culture (S002).

- Trim-and-fill, Egger's test: fast, but unreliable with small samples

- Selection models: more accurate, but require assumptions about selection mechanism

- P-curve analysis: focuses on p-value distribution, sensitive to p-hacking

- ALL-IN meta-analysis: includes interim and not-yet-published data from ongoing studies, requires changes to scientific infrastructure

🧪 Uncertainty in Assessing AI Empathy: Methodological Traps

The meta-analysis of AI chatbot empathy exposes a fundamental problem: all studies are based on text scenarios that ignore nonverbal aspects of empathy—tone of voice, facial expressions, body language (S004).

Empathy was assessed not by actual patients but by proxy evaluators (healthcare workers or researchers), which may not align with the perceptions of those who truly need help (S004). Conclusions about AI superiority may be an artifact of the specific evaluation context rather than a real advantage in clinical practice.

When research methodology doesn't match reality, conclusions remain valid only for laboratory conditions. This isn't survivorship bias in the classic sense, but its close relative: context bias.

This uncertainty points to a broader problem: ignoring base rates when interpreting meta-analyses. Even if AI shows statistically significant advantages in empathy in text scenarios, this doesn't mean patients will feel more understood in an actual clinic.

Cognitive Anatomy of the Error: Which Psychological Mechanisms Make Us Vulnerable

Survivorship bias isn't accidental; it's built into the architecture of our perception. Three psychological mechanisms work in sync, transforming statistical blindness into conviction.

⚠️ Availability Heuristic: We Judge by What's Easy to Recall

Survivorship bias is closely linked to the availability heuristic—a cognitive bias where we estimate the probability of events based on how easily examples come to mind (S011). Successful companies, celebrities, disaster survivors—they're all more visible and memorable than failures and victims.

When we try to assess which strategies lead to success, we involuntarily recall vivid examples of success, ignoring thousands of invisible failures. This heuristic is evolutionarily adaptive for quick decision-making, but systematically distorts our judgments in situations requiring statistical thinking.

The brain doesn't distinguish between "occurs frequently" and "easily recalled." To it, they're the same thing.

🧠 Confirmation Bias: We Seek Confirmation, Not Refutation

Confirmation bias amplifies the effect of survivorship bias: we tend to seek and interpret information in ways that confirm our existing beliefs (S011). If we believe that "persistence leads to success," we notice stories of persistent people who succeeded, and ignore stories of persistent people who failed.

We don't ask the critical question: "How many persistent people failed?"—because that question threatens our belief. The combination of survivorship bias and confirmation bias creates a powerful illusion of causality where none exists.

🔁 Narrative Fallacy: We Love Stories, Not Statistics

The human brain is evolutionarily tuned to perceive and remember narratives—stories with a beginning, middle, and end, with heroes and obstacles (S011). The story of a successful entrepreneur who overcame hardships and built an empire is a compelling narrative.

Statistics, however, are boring and abstract: "out of 1,000 startups, 50 survive." The brain remembers the story, forgets the number. Narrative creates an illusion of understanding the causes of success, when in reality we're just hearing a well-told story.

| Mechanism | What Happens | Result |
|---|---|---|
| Availability Heuristic | Vivid examples seem typical | Overestimation of success probability |
| Confirmation Bias | We seek facts confirming our beliefs | Illusion of causality |
| Narrative Fallacy | Stories are remembered better than numbers | Causes of success seem clear |

🎯 Why These Mechanisms Work Together

Survivorship bias isn't a single error—it's a system. The availability heuristic supplies vivid examples, confirmation bias selects those that confirm our beliefs, narrative fallacy packages them into a convincing story.

The result: we see causal relationships where there's only base rate neglect and selection on survivors. This isn't a logic error—it's a perception error, built into the very architecture of attention.

Survivorship bias works because it's invisible. We only see the survivors—and that seems like the complete picture.