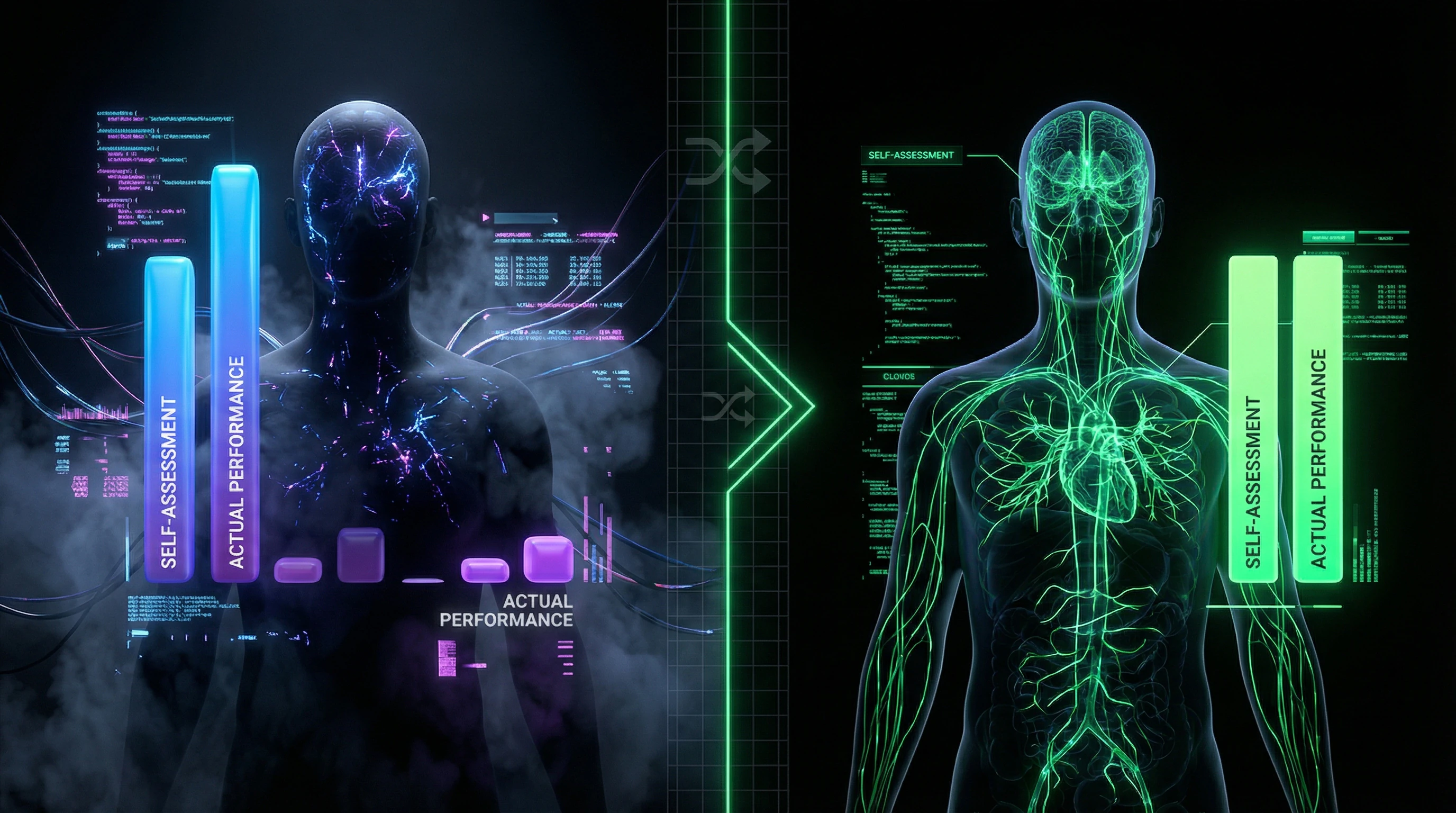

The Dunning-Kruger effect is a cognitive bias where people with low competence overestimate their abilities, while experts tend toward self-criticism. The phenomenon is confirmed by psychological research but is often distorted in popular culture. This article examines the mechanism of the effect, its evidence base, boundaries of applicability, and shows how to distinguish real cognitive bias from a manipulative label.

🖤 Have you ever argued with someone who was absolutely certain they were right, though they clearly didn't understand the topic? Or watched a real expert doubt their every word? This isn't a coincidence or a personality quirk. It's the work of one of the most insidious cognitive biases, one that has turned into a cultural meme and a weapon of information warfare. The Dunning-Kruger effect explains why ignorance breeds confidence while knowledge breeds doubt, but the effect itself is surrounded by so many myths that it has become a victim of its own popularity. 👁️ Let's examine the mechanism, evidence, and boundaries of a phenomenon that is both real and monstrously distorted by mass culture.

What the Dunning-Kruger effect actually is, and why it is so widely misunderstood

The Dunning-Kruger effect is a metacognitive bias: people with low competence systematically overestimate their abilities, while highly competent specialists underestimate theirs. The problem isn't intelligence but the absence of metaknowledge, the ability to assess one's own knowledge.

Key mechanism: to see gaps in your knowledge, you need to already possess sufficient knowledge. It's a closed loop.

The original 1999 study

Justin Kruger and David Dunning from Cornell University conducted experiments with tests on logic, grammar, and sense of humor (S001). Participants solved problems, then assessed their own results.

The result was clear: those who fell in the bottom quartile (worst 25%) believed they had outperformed 60% of participants. In reality, they were in the bottom quarter. Participants from the top quartile, conversely, slightly underestimated themselves.

Three components of the effect

- First component: incompetent people don't recognize their own incompetence; they lack the skills needed to assess skills.

- Second component: they don't adequately assess others' competence, which prevents them from learning from others' examples.

- Third component: after training, people begin to assess their previous level more accurately; metacognitive abilities develop in parallel with subject-matter skills (S001).

Popular myth: "the graph with Mount Stupid"

In mass culture, the effect is depicted as a graph with a sharp peak of confidence among beginners, then a plunge into the "valley of despair" and a climb to the "plateau of sustainability." This is a simplification and partial distortion of the original data.

| What the original data showed | What the popular graph shows |
|---|---|
| Inflated self-assessment among low-competence individuals, slightly deflated among high-competence individuals | Dramatic peak and trough with sharp fluctuations |
| Smooth curve without extremes | Three distinct phases with emotional coloring |
| Statistical description | Narrative that "sells" better |

The dramatic graph makes the concept more memorable but less accurate (S001). This is a classic example of how a scientific idea transforms into a popular myth through simplification and reinterpretation.

A related phenomenon—confirmation bias—amplifies this error: people seek information confirming their inflated self-assessment and ignore contradictory data.

Seven Strongest Arguments for the Reality of the Effect — The Steel Version of the Hypothesis

Before examining criticisms and limitations, it's necessary to present the most convincing version of the theory: the so-called "steelman" instead of a "straw man." The Dunning-Kruger effect has a serious evidence base, and ignoring it would be intellectually dishonest.

🔬 First Argument: Reproducibility Across Different Cultures and Domains of Knowledge

The effect has been reproduced in dozens of studies across various cultural contexts and subject areas. A study of American theater actors found it in a professional setting: actors without theatrical training rated their abilities higher than graduates of specialized programs, who judged their own skills more critically.

The phenomenon was named the "irrelevant education trap" — when formal qualifications in an adjacent field create an illusion of competence in the target domain (S001). This points to a mechanism, not a coincidence: the brain uses available signals (education, experience in adjacent fields) as proxies for competence, even when they're not relevant.

📊 Second Argument: Metacognitive Blindness Is Confirmed by Neurobiology

Neurobiological research shows that metacognitive processes (assessment of one's own knowledge) engage specific areas of the prefrontal cortex, which develop and activate only with sufficient domain experience. This explains why novices are physically incapable of adequately assessing their level — they haven't yet formed the neural networks for metacognitive monitoring in that domain (S001).

This is not a moral failing, but a neurobiological reality. A person cannot see what they lack the neural tools to perceive.

🧪 Third Argument: The Effect Disappears After Training — Which Proves Its Nature

A critically important observation from the original study: after brief training in logical reasoning, participants from the bottom quartile significantly improved their ability to assess their results. Their self-assessment became more realistic not because they became more modest, but because they acquired tools for evaluation (S003).

This confirms that the problem is precisely the absence of metacognitive skills, not personality traits or motivation. The effect is reversible — a sign that we're dealing with a knowledge deficit, not a character defect.

🔁 Fourth Argument: The Effect Explains Persistent Patterns in Education and Professional Environments

- Students at early stages of learning demonstrate unwarranted confidence

- As they deepen their understanding of the subject, confidence decreases

- At advanced levels, confidence recovers, but to a more realistic level

This pattern is so widespread that it has received numerous informal names in different professional communities — from medicine to IT. Independent observations by educators and HR professionals point to the reality of the phenomenon regardless of its scientific description (S004).

🧬 Fifth Argument: Evolutionary Logic of Metacognitive Asymmetry

From an evolutionary perspective, asymmetry in self-assessment has adaptive value. Inflated confidence in novices can be useful for overcoming fear of new tasks and rapidly accumulating experience through trial and error.

Underestimation by experts protects against complacency and maintains motivation for improvement. This asymmetry is not a bug, but a feature of cognitive architecture.

An evolutionary explanation makes the effect an expected property of the system rather than an anomaly. This increases confidence in the model (S001).

📌 Sixth Argument: Predictive Power of the Model in Applied Contexts

The Dunning-Kruger model successfully predicts behavior in applied contexts: from medical errors (when young doctors overestimate their readiness for complex procedures) to financial decisions (when novice investors take excessive risks) (S007).

Predictive power is the gold standard of scientific theory, and the Dunning-Kruger effect passes this test: we can predict where errors will occur, and often prevent them through structured training.

🧾 Seventh Argument: Systematic Reviews Confirm the Effect with Proper Methodology

Systematic reviews and meta-analyses using strict study selection criteria confirm the presence of the effect, though with smaller magnitude than in popular interpretations (S005). Importantly, the effect is robust when controlling for statistical artifacts such as regression to the mean, which addresses the main criticism from skeptics.

When methodology becomes more rigorous, the effect doesn't disappear — it becomes more precise and predictable. This is a sign of a genuine phenomenon, not a measurement artifact.

Evidence Base Under the Microscope: What the Data Says When Read Carefully

Let's turn to the empirical data. Every claim about the Dunning-Kruger effect must be tied to specific studies, their methodology, and their limitations.

🧪 The Original 1999 Study: Design and Results

Kruger and Dunning conducted four experiments with Cornell University students. In the first: 65 participants took a logical reasoning test (20 questions), then estimated their performance in percentiles relative to others.

Results: participants in the bottom quartile (average score 12th percentile) rated themselves at the 68th percentile—an overestimation of 56 points. Participants in the top quartile (86th percentile) rated themselves at the 75th—an underestimation of 11 points (S001).

📊 Critical Point: Correlation Between Competence and Self-Assessment Accuracy

Key metric: the correlation between actual performance and self-assessment accuracy was r = 0.39 (p < 0.001)—a moderate but statistically significant relationship.

This means: more competent participants did assess their level better, but the relationship isn't absolute. Some incompetent participants assessed themselves accurately, some experts made errors (S001).
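Squaring a correlation gives the share of variance two measures have in common, which is one way to see why r = 0.39 counts as moderate. A quick worked check using only the figure reported above:

$$
r^2 = 0.39^2 \approx 0.15
$$

In other words, roughly 15% of the variation in one measure is linearly accounted for by the other; the remaining 85% reflects other factors and noise.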

| Participant Group | Actual Performance | Self-Assessment | Error (percentile points) |
|---|---|---|---|
| Bottom Quartile | 12th percentile | 68th percentile | +56 |
| Top Quartile | 86th percentile | 75th percentile | −11 |
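The quartile comparison behind figures like these can be sketched in a few lines: sort participants by actual percentile, split them into four equal groups, and compare each group's mean actual percentile with its mean self-estimate. This is a minimal sketch, not the original authors' analysis code; the function name `quartile_calibration` and the eight-participant dataset are hypothetical, chosen only so that the bottom and top quartiles reproduce the +56 and −11 errors in the table.

```python
from statistics import mean

def quartile_calibration(actual, estimated):
    """Split participants into quartiles by actual percentile and return,
    per quartile, (mean actual, mean self-estimate, mean error)."""
    if len(actual) != len(estimated) or len(actual) < 4:
        raise ValueError("need two equal-length lists with at least 4 participants")
    # Indices of participants ordered from lowest to highest actual percentile.
    order = sorted(range(len(actual)), key=lambda i: actual[i])
    n = len(order)
    result = []
    for q in range(4):
        group = order[q * n // 4:(q + 1) * n // 4]
        mean_actual = mean([actual[i] for i in group])
        mean_estimate = mean([estimated[i] for i in group])
        # Positive error = overestimation, negative = underestimation.
        result.append((mean_actual, mean_estimate, mean_estimate - mean_actual))
    return result

# Hypothetical data: actual and self-estimated percentile for each participant.
actual = [10, 14, 30, 40, 60, 70, 84, 88]
estimated = [66, 70, 60, 62, 70, 72, 74, 76]

for q, (a, e, err) in enumerate(quartile_calibration(actual, estimated), start=1):
    print(f"Q{q}: actual {a:.0f}, estimated {e:.0f}, error {err:+.0f}")
```

Grouping by actual performance, rather than by self-estimate, matters: it is exactly the choice that makes regression to the mean a concern for this kind of analysis.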

🔎 Training Experiment: How Self-Assessment Changed After Training

In the fourth experiment, participants from the bottom quartile underwent brief training in logical reasoning. After training, their ability to assess their own performance improved significantly: average overestimation dropped from 50 to 20 percentile points.

Critically, the improvement did not come from higher actual performance but from developing metacognitive skills: the ability to recognize correct and incorrect answers (S001).

Competence and the ability to see your own mistakes are not the same thing. The first develops slowly, the second can change after just a few hours of training.

🧾 Replication in American Context: Professional Theater Actors

A study of American theater actors revealed a specific variant of the effect, termed the "irrelevant education trap." Actors with adjacent training (music, dance, directing) but without formal acting school demonstrated significantly higher self-assessment of their abilities compared to graduates of theater programs.

Yet colleagues rated their work lower. The paradox: formal education in an adjacent field created an illusion of competence that blocked further professional development (S001).

🔬 Methodological Problem: Regression to the Mean as Alternative Explanation

Critics point to a statistical artifact: regression to the mean. If two variables are measured with error (actual competence and self-assessment), people with extreme values on one tend to land closer to the mean on the other, simply because of random noise.

This can create the illusion of an effect even in data where none exists. However, repeated studies controlling for this artifact (multiple measurements, complex statistical models) continue to find the effect, though of smaller magnitude (S001).

- Regression to the Mean: a statistical phenomenon in which extreme values of one variable are associated with less extreme values of another. It can mimic cognitive effects if not methodologically controlled.

- Metacognitive Skills: the ability to evaluate one's own thinking and to recognize errors and gaps in knowledge. These skills develop independently of basic competence and can improve through targeted training.
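The regression-to-the-mean critique can be checked with a small simulation in which, by construction, nobody is biased: a hypothetical "true skill" is drawn at random, and both the test score and the self-assessment are that skill plus independent noise. All parameters (sample size, noise levels, seed) are assumptions for illustration, not values from any study.

```python
import random

def percentile_ranks(values):
    """Percentile rank (0-100) of each value within its own list."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    for position, i in enumerate(order):
        ranks[i] = 100 * (position + 0.5) / len(values)
    return ranks

def quartile_gaps(n=10_000, test_noise=0.5, self_noise=1.0, seed=1):
    """Mean (self-assessed percentile - test percentile) per test-score quartile,
    in a simulated world where self-assessment is noisy but not biased."""
    rng = random.Random(seed)
    skill = [rng.gauss(0, 1) for _ in range(n)]
    # Both measures are unbiased: true skill plus independent random error.
    test = [s + rng.gauss(0, test_noise) for s in skill]
    self_view = [s + rng.gauss(0, self_noise) for s in skill]
    test_pct, self_pct = percentile_ranks(test), percentile_ranks(self_view)
    # Group people by their test score, as quartile analyses do.
    order = sorted(range(n), key=lambda i: test_pct[i])
    gaps = []
    for q in range(4):
        group = order[q * n // 4:(q + 1) * n // 4]
        mean_self = sum(self_pct[i] for i in group) / len(group)
        mean_test = sum(test_pct[i] for i in group) / len(group)
        gaps.append(mean_self - mean_test)
    return gaps

for q, gap in enumerate(quartile_gaps(), start=1):
    print(f"Q{q}: self-assessment minus test score = {gap:+.1f} percentile points")
```

In this setup the bottom quartile appears to overestimate and the top quartile to underestimate, even though the model contains no bias at all, and the gaps across the four quartiles sum to zero. That is the pattern critics say can masquerade as the effect, and why later studies add explicit controls for it.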

📌 Cross-Cultural Differences: Effect Stronger in Individualistic Cultures

Research shows: the magnitude of the effect varies depending on cultural context. In individualistic cultures (USA, Western Europe) the effect is more pronounced—incompetent participants demonstrate more significant overestimation.

In collectivist cultures (East Asia) the overall level of self-assessment is lower, the gap between incompetent and competent is smaller. This indicates: the effect is not purely cognitive—it's modulated by cultural norms of self-presentation (S001).

- Individualistic cultures: strong overestimation among the incompetent, higher norm for self-presentation

- Collectivist cultures: more modest self-assessment overall, smaller gap between groups

- Conclusion: social norms modulate the expression of the effect but don't create it

The Mechanism: Why the Brain Can't See Its Own Blind Spots

Understanding the mechanism is critical for separating the real cognitive phenomenon from its oversimplified interpretations. The Dunning-Kruger effect isn't just "stupid people don't know they're stupid," but a complex interaction of metacognitive processes.

🧬 The Double Burden of Incompetence: Why Skills and Meta-Skills Are Linked

Dunning and Kruger's central idea: the skills needed to perform a task often overlap with the skills needed to evaluate the quality of that performance (S001).

If you don't know grammar, you not only make mistakes, but you're also unable to recognize them. If you don't understand logic, you not only reason incorrectly, but you also can't see the errors in your reasoning. This is the "double burden": incompetence deprives you of both domain skills and the metacognitive tools to recognize this deficit.

Incompetence isn't just the absence of knowledge. It's the absence of tools to notice that absence.

🔁 Metacognitive Monitoring: How the Brain Evaluates Its Own Knowledge

Metacognitive monitoring is the process by which the brain tracks and evaluates its own cognitive processes. Neurobiological research shows this process engages specific regions of the prefrontal cortex: the dorsolateral prefrontal cortex and anterior cingulate cortex (S003).

These areas activate when detecting errors, assessing confidence in responses, and adjusting strategies. Critically: these systems develop and "calibrate" only through experience in a specific domain. In novices, they're not yet tuned to the task's specifics, leading to systematic errors in self-assessment.

| Stage of Competence Development | State of Metacognitive Monitoring | Result for Self-Assessment |
|---|---|---|
| Novice | Systems not calibrated, operating on general rules | Inflated confidence, own errors invisible |
| Early Experience | Beginning to recognize complexity, but incompletely | Sharp drop in self-assessment (valley of humility) |
| Experienced Specialist | Systems precisely calibrated to the domain | Realistic self-assessment, boundaries of knowledge visible |
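Calibration research often summarizes the gap between confidence and accuracy with a simple over/underconfidence score: mean stated confidence minus the proportion of answers that were actually correct. The sketch below implements that one measure; it is not necessarily the measure used in the studies cited here, and the function name and both answer sheets are hypothetical.

```python
def overconfidence(confidences, correct):
    """Mean confidence (0-1) minus proportion of correct answers.
    > 0 means overconfident, < 0 underconfident, 0 calibrated on average."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need non-empty lists of equal length")
    mean_confidence = sum(confidences) / len(confidences)
    accuracy = sum(1 for ok in correct if ok) / len(correct)
    return mean_confidence - accuracy

# Hypothetical answer sheets: confidence in each answer, and whether it was right.
novice = overconfidence([0.9, 0.9, 0.8, 0.9, 0.8], [True, False, False, True, False])
expert = overconfidence([0.7, 0.8, 0.7, 0.8, 0.7], [True, True, True, True, False])
print(f"novice: {novice:+.2f}")  # +0.46
print(f"expert: {expert:+.2f}")  # -0.06
```

The hypothetical novice is far more confident than accurate, while the hypothetical expert is slightly underconfident, mirroring the first and last rows of the table.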

🧩 Illusion of Explanatory Depth: Why We Think We Understand More Than We Actually Do

A related phenomenon amplifying the Dunning-Kruger effect is the illusion of explanatory depth. People systematically overestimate the depth of their understanding of complex systems: from bicycles to economics (S001).

When asked to explain in detail how something works, they discover enormous gaps in their knowledge. But until that moment of testing, they're genuinely confident they understand. This illusion is especially strong in domains where a person has superficial familiarity with terminology, creating a false sense of competence.

The connection to confirmation bias is direct: a person notices only those aspects of the system that confirm their superficial understanding, and ignores the complexity.

🧷 Confirmation Bias as an Effect Amplifier

Confirmation bias—the tendency to seek, interpret, and remember information in ways that confirm existing beliefs—works as an amplifier of the Dunning-Kruger effect (S001).

An incompetent person, confident in their correctness, will selectively attend to information confirming their position and ignore contradictory evidence. This creates a self-sustaining cycle: inflated self-assessment → selective attention → confirmation of self-assessment → further inflation.

- A person forms an initial belief about their competence (often based on a single success or superficial familiarity)

- The brain begins filtering incoming information through the lens of this belief

- Confirming signals are amplified and remembered, contradictory ones are ignored or reinterpreted

- The belief strengthens, metacognitive monitoring becomes even less critical

- The cycle closes, and the person becomes increasingly resistant to corrective information
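The loop can be made concrete with a deliberately simple toy model. Everything in it is an assumption for illustration, not a model from the cited research: a person's "belief" is their estimate of how often they are right, confirming feedback is always noticed, and disconfirming feedback is filtered out in proportion to how confident they already are (the hypothetical `filter_strength` parameter).

```python
def belief_trajectory(competence, start, filter_strength, steps=200, rate=0.1):
    """Toy model of the confirmation-bias loop (hypothetical, for illustration).

    competence      -- true share of feedback that confirms the person is right (0-1]
    start           -- initial belief about that share (0-1)
    filter_strength -- how strongly current belief suppresses disconfirming feedback (0-1)
    """
    belief = start
    history = [belief]
    for _ in range(steps):
        # Confirming feedback is always noticed; disconfirming feedback is
        # noticed less often the more confident the person already is.
        noticed_disconfirming = (1 - competence) * (1 - filter_strength * belief)
        perceived = competence / (competence + noticed_disconfirming)
        # Belief drifts toward what the person perceives, not what is true.
        belief += rate * (perceived - belief)
        history.append(belief)
    return history

biased = belief_trajectory(competence=0.4, start=0.4, filter_strength=0.8)
unbiased = belief_trajectory(competence=0.4, start=0.4, filter_strength=0.0)
print(f"with selective attention: {biased[-1]:.2f}")  # 0.54
print(f"without it:               {unbiased[-1]:.2f}")  # 0.40
```

In this toy version the belief does not inflate forever; it settles at a stable level above true competence (about 0.54 against a real 0.40), and only the attention filter holds it there. Setting `filter_strength` to zero removes the distortion entirely.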

This dynamic explains why directly providing facts often doesn't work: information contradicting the belief simply doesn't pass the attention filter or gets reinterpreted according to the existing mental model.

Conflicts in the Data and Areas of Uncertainty: Where Researchers Disagree

Scientific integrity requires acknowledging that there are serious debates surrounding the Dunning-Kruger effect, and not all researchers agree with its interpretation.

🧾 The Statistical Artifact Debate: Real Effect or Data Illusion?

Gideon Nückles and his team argue that the effect may be entirely explained by statistical artifacts—regression to the mean and the better-than-average effect. Their reanalysis using more rigorous methods shows: after controlling for these factors, the effect significantly diminishes or disappears.

Dunning and his colleagues counter that their methodology already accounts for these artifacts, and the effect remains significant (S001). The debate remains unresolved.

The key question: are we observing a real cognitive phenomenon or an artifact of how we analyze data? The answer depends on which statistical assumptions are considered valid.

🔎 The Generalizability Problem: Does the Effect Work Beyond Laboratory Tests?

Most studies were conducted on students solving artificial tasks (logic tests, grammar, humor). Critics rightly point out: generalizing these results to real professional contexts may be problematic.

The theater-actor study (S001), one of the few studies in a real professional environment, confirms the phenomenon, but with important nuances: type of education and professional socialization change the picture.

- Laboratory tasks: high controllability, low ecological validity

- Professional contexts: complex feedback, experience, social incentives

- Conclusion: the effect may be stronger in new domains, weaker in experienced ones

📊 The Question of Effect Size: How Practically Significant Is It?

Even if the effect is statistically real, the question of practical significance remains. The effect size in original studies is moderate (Cohen's d around 0.5–0.7)—noticeable, but not dramatic.

In real situations, individual variability often exceeds the average effect, which limits the model's predictive power for specific individuals (S001).
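The text does not say exactly how these d values were computed. A common definition is the difference between two group means divided by their pooled standard deviation. Under the extra assumption of two normal distributions with equal variance, d can also be converted into the chance that a randomly chosen member of one group outscores a randomly chosen member of the other (the "probability of superiority"). This sketch uses Python's standard `statistics` module; the function names are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, variance

def cohens_d(group_a, group_b):
    """Standardized mean difference (a - b) using the pooled sample standard deviation."""
    na, nb = len(group_a), len(group_b)
    pooled_var = ((na - 1) * variance(group_a) + (nb - 1) * variance(group_b)) / (na + nb - 2)
    return (mean(group_a) - mean(group_b)) / sqrt(pooled_var)

def probability_of_superiority(d):
    """P(random member of the higher group > random member of the lower group),
    assuming both groups are normal with equal variance."""
    return NormalDist().cdf(d / sqrt(2))

for d in (0.5, 0.7):
    print(f"d = {d}: {probability_of_superiority(d):.0%}")  # prints 64%, then 69%
```

Even at d = 0.7, roughly three random pairings in ten go the "wrong" way, which is what weak predictive power for individuals looks like in practice.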

| Parameter | Laboratory Conditions | Real-World Context |
|---|---|---|
| Effect Size | d ≈ 0.5–0.7 (moderate) | Often smaller due to data noise |
| Predictability | Good at group level | Weak for individuals |
| Confounding Factors | Minimal | Experience, motivation, context, feedback |

This doesn't mean the effect doesn't exist. It means: its influence on a specific person in a specific situation may be much smaller than the average figures in studies.

Cognitive Anatomy of Manipulation: How the Dunning-Kruger Effect Became a Weapon

Paradoxically, the Dunning-Kruger effect itself has become a victim of cognitive biases. Its simplified version is used as a rhetorical weapon to discredit opponents.

⚠️ Label Instead of Argument: How Accusations of the Dunning-Kruger Effect Replace Discussion

In online discussions, accusing an opponent of the Dunning-Kruger effect has become a standard tactic: instead of analyzing their position—a diagnosis of incompetence. This works because it sounds scientific and shifts the debate from the level of ideas to the level of personality.

The mechanism is simple: if I label you a victim of the effect, I automatically position myself as someone who has overcome it. I become the judge of your competence without providing evidence.

- Opponent expresses a position I disagree with

- I declare them a victim of Dunning-Kruger

- Discussion ends—I win by default

- No one checks whether I'm actually right

This works like an echo chamber: listeners who already agree with me nod along, while those who disagree see an attempt at manipulation. The truth stays on the sidelines.

🎯 Three Layers of Manipulation Through the Dunning-Kruger Effect

First layer—rhetorical: the accusation sounds like a diagnosis, not an opinion. It appeals to the authority of science, though science isn't actually involved here.

Second layer—social: the accused falls into a trap. If they object, they look like someone defending their incompetence. If they stay silent, the accusation stands.

| Scenario | What happens | Result for manipulator |
|---|---|---|
| Opponent objects | Looks like defending their position | "See, they don't see their mistake" |
| Opponent stays silent | Silence interpreted as agreement | "They admitted their incompetence" |
| Opponent demands evidence | Looks like denying science | "Even scientists confirm this" |

Third layer—cognitive: the Dunning-Kruger effect itself is complex (S001, S003). Most people know it only in simplified form: "incompetent people don't see their incompetence." This is true, but incomplete.

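When I accuse you of the Dunning-Kruger effect, I turn a cognitive blind spot against you, though the blind spot is often mine rather than yours. I rely on you not knowing that the effect is smaller than popular accounts suggest (S005), that part of it may be a statistical artifact, and that it describes a group-level tendency, not a universal law of human psychology.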

🔍 How to Distinguish Diagnosis from Manipulation

Real competence analysis requires facts: what errors were made, why they're errors, what evidence contradicts the position. Manipulation requires only a label.

- Diagnosis (analysis): "You didn't account for the base rate in this statistic. That's why your conclusion is wrong. Here's how to fix it." Requires work, but is honest.

- Manipulation (label): "You're a victim of the Dunning-Kruger effect." Requires no work, sounds scientific, closes the discussion.

- Trap for the manipulator: if I accuse you of the Dunning-Kruger effect without evidence, I myself fall victim to the availability heuristic: I think I'm right because the accusation comes easily to mind and sounds convincing.

Defense is simple: demand facts. Not labels, not diagnoses—specific errors and evidence. If the opponent can't provide them, they're not analyzing your competence. They're manipulating.

Counter-Position Analysis

⚖️ Critical Counterpoint

The Dunning-Kruger effect is one of the most cited phenomena in popular psychology, but its evidence base is weaker than it appears. Here's where the article's logic shows cracks.

Overestimating the Effect's Universality

The article may underestimate the degree to which the Dunning-Kruger effect is an artifact of Western psychology and laboratory conditions. In real-world professional contexts with high stakes—medicine, aviation, engineering—the effect manifests more weakly due to rigid feedback and selection systems. Perhaps the phenomenon is more relevant to low-risk social situations than to critical domains.

Statistical Artifact vs Real Effect

There exists serious methodological criticism: the Dunning-Kruger pattern may be partially explained by regression to the mean and autocorrelation between performance and self-assessment. Some researchers argue that after statistical correction, the effect significantly weakens or disappears. The article doesn't examine this criticism deeply enough, which may create an impression of greater evidence than actually exists.

Risk of Self-Applied Labeling

The article's paradox: while warning about manipulative use of the term, it may itself become a tool for readers' self-diagnosis, leading to excessive self-criticism and paralysis. This is especially dangerous for people with impostor syndrome: they may interpret any confidence as a sign of the Dunning-Kruger effect, which will increase anxiety and reduce willingness to learn.

Insufficient Data on "Treatment"

The claim that metacognitive training helps overcome the effect is based on limited data. Long-term studies on the effectiveness of such interventions are absent. It's possible that improved calibration in laboratory conditions doesn't transfer to real life, where emotional and social factors dominate over rational self-assessment.

Ignoring the Adaptive Function of Overestimation

Evolutionary psychologists point out that moderate overestimation of one's abilities may be adaptive—it motivates action, reduces anxiety, and increases social status. The article focuses on negative consequences of the effect but doesn't consider contexts where a "healthy illusion" of competence is functional. Perhaps complete calibration of self-assessment isn't always optimal for well-being and achievement.

FAQ

Frequently Asked Questions