Dead Internet Theory: from anonymous post to mass panic — what exactly is claimed and why it matters

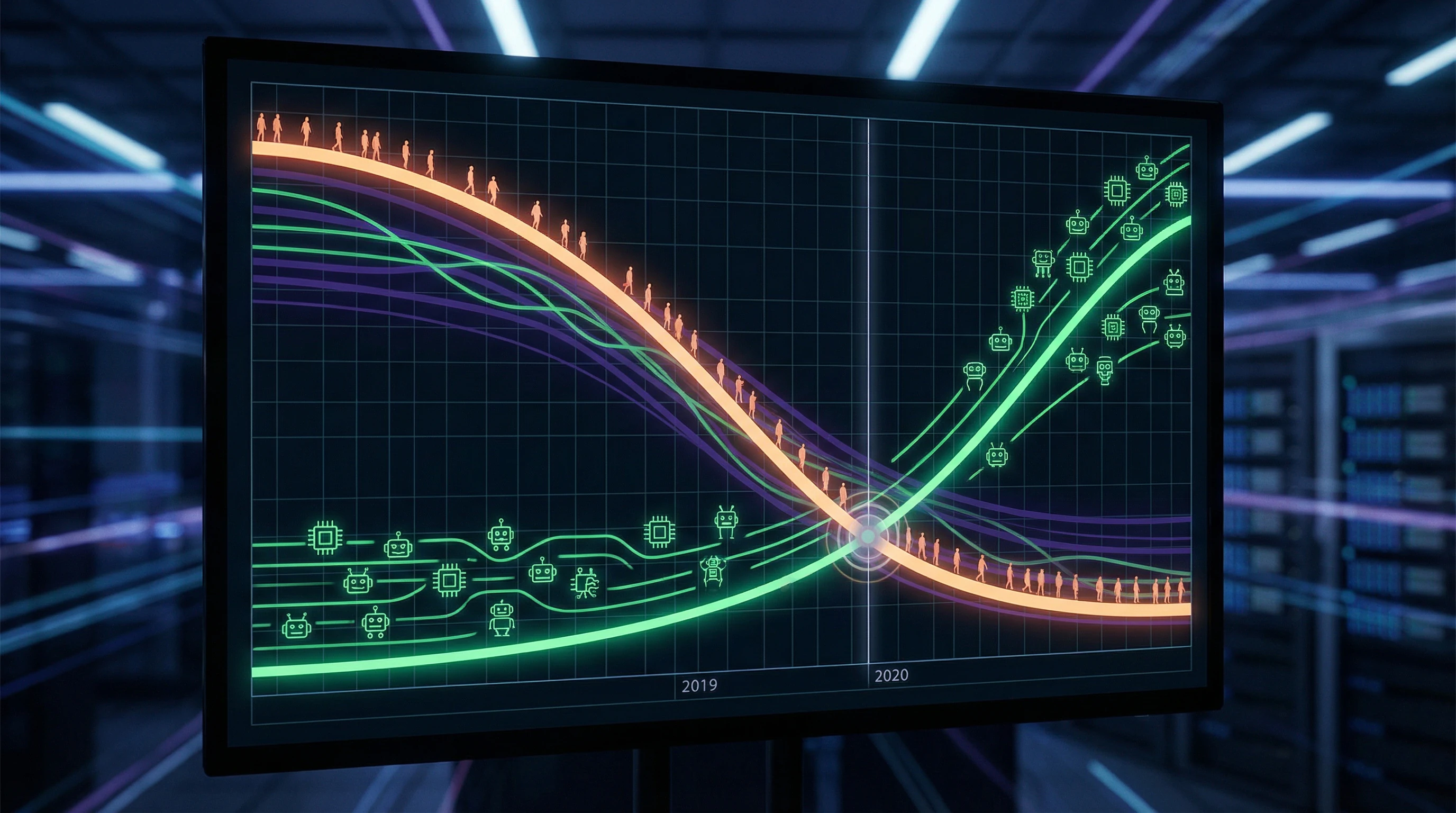

Dead Internet Theory is a conspiratorial hypothesis claiming that a significant portion of online activity, content, and interactions is generated not by living people, but by bots, algorithms, and artificial intelligence. According to this theory, the internet "died" around 2016–2017, when automated systems reached critical mass and began dominating human presence.

Proponents claim that corporations and governments deliberately maintain the illusion of a living internet to manipulate public opinion, sell advertising, and control the information landscape.

The internet didn't just change — it allegedly ceased being a space for human communication and became a shadow theater where people interact with simulations.

⚠️ Key claims of the theory: what exactly is considered "dead"

The theory rests on four interconnected assertions:

- Comments: most comments on social media are allegedly written by bots, not real users.

- Content: a significant share of content (articles, videos, posts) is generated by algorithms to create the appearance of activity.

- Trends and viral phenomena: allegedly artificially created and maintained by automated systems, rather than emerging organically from human interests.

- Activity ratio: real people constitute a minority of online activity, their interactions drowning in a sea of synthetic noise.

🧩 Historical context: how an anonymous post became a cultural phenomenon

The first systematic exposition of the theory appeared on the Agora Road's Macintosh Cafe forum in 2021, though individual elements had circulated on imageboards since 2016. The post described the internet as a "dead space" where algorithms imitate human activity so convincingly that most users don't notice the substitution.

The theory quickly spread to Reddit, Twitter, and YouTube, where it resonated with people experiencing "digital fatigue" and a sense of unreality in online interactions. By 2023, after the launch of ChatGPT and the explosive growth of generative AI, the theory gained new life, framed no longer as a conspiracy theory but as a description of observable reality.

| Period | Theory status | Spread trigger |
|---|---|---|
| 2016–2020 | Marginal hypothesis on imageboards | Growth of bots on social media |
| 2021 | Systematized conspiracy theory | Macintosh Cafe post |
| 2023–2024 | Quasi-scientific observation | Explosive growth of generative AI |

🔎 Analysis boundaries: what we're testing and what we're not

In this article we focus on verifiable claims of the theory: the proportion of automated content, bot statistics, data on synthetic media. We don't examine conspiratorial elements about "global conspiracy" or "deliberate murder of the internet" — these claims aren't empirically testable.

Our task is to separate observable trends from cognitive biases and show where statistics end and paranoid interpretation begins. This doesn't mean the theory is completely false — rather, it mixes real phenomena with incorrect conclusions about their causes and scale.

Steelman Version of the Theory: Seven Most Compelling Arguments for the "Dead Internet" and Why They Cannot Be Ignored

Before examining the theory, it's necessary to present it in its strongest form, the so-called "steelman argument." This is an intellectually honest approach: we take the best arguments from the theory's proponents, strip away emotions and conspiracy thinking, and test how well they align with the data.

Below are seven of the most convincing observations that fuel the dead internet theory.

⚠️ First Argument: Exponential Growth of Bots on Social Media

Bot statistics on social media are genuinely alarming. By various estimates, between 15% and 60% of accounts on Twitter (before rebranding to X) were automated or semi-automated.

Facebook removes more than a billion fake accounts in a typical quarter: in a single quarter of 2022, the company blocked 1.6 billion accounts. Instagram battles bots that generate comments, likes, and follows.

If platforms are removing billions of bots, how many more remain undetected? These figures aren't secret — they're published in the platforms' own reports.

🧩 Second Argument: Synthetic Content Has Become Indistinguishable from Human Content

With the emergence of GPT-3, GPT-4, and other large language models, the boundary between human and machine text has blurred. Several studies have found that people cannot reliably distinguish AI-written text from human writing: in two-way judgments, accuracy hovers around 50%, equivalent to random guessing.

Generative models create articles, social media posts, and comments that pass moderation and are perceived as authentic. If we cannot distinguish synthetic content, how can we be certain we're interacting with people?

🔁 Third Argument: Growth of "Empty" Content and Content Farms

The internet is flooded with low-quality content created for SEO and ad monetization. Content farms generate thousands of articles per day using templates and automation.

YouTube is filled with automatically generated videos — compilations, re-uploads, robotic voiceovers. TikTok and Instagram Reels display endless variations of the same content created from algorithmic templates.

- Content is created for content's sake, not for meaning

- Human creativity becomes a rare exception

- Algorithms optimize for virality, not quality

📊 Fourth Argument: Trend Manipulation and Artificial Virality

There are documented cases where social media trends were artificially created through botnets and coordinated campaigns. Political actors use automated systems to promote hashtags, discredit opponents, and create the illusion of mass support.

Marketing agencies sell "virality" as a service, using bot networks to inflate views, likes, and shares. If trends can be purchased, how can we trust what we see in our feeds?

🧠 Fifth Argument: The Phenomenon of "Digital Derealization" Among Users

Many users report a subjective sense of "unreality" in online interactions. Comments seem templated, dialogues repetitive, reactions predictable.

Psychologists document rising "digital fatigue" and the feeling that "everyone around is a bot." This subjective experience isn't proof, but it's widespread and persistent.

The collective unconscious may recognize the changed nature of the internet before data captures it. But subjective feeling and objective reality are different things.

🕳️ Sixth Argument: Declining Search Quality and Rising SEO Spam

Google and other search engines increasingly return low-quality, automatically generated results. AI-created SEO-optimized articles are displacing original content.

Users complain that search has become "useless" — first pages are filled with ads, spam, and content farms. The internet is being optimized for algorithms, not people, making it less suitable for human needs.

⚙️ Seventh Argument: Economic Incentives for Content Automation

Creating content with humans is expensive and slow. Automation is cheap and scalable. Advertising platforms pay for views and clicks regardless of who generates them.

- Economic logic: pushes toward replacing people with algorithms. In a capitalist system where content is a commodity, its industrialization is inevitable.

- "Dead Internet": not a conspiracy, but a natural result of market forces where scalability defeats quality.

Evidence Base: What the Data Says About the Real Share of Synthetic Content — Numbers, Research, Facts Without Emotion

We now move from arguments to verifiable data.

🧪 Bot Statistics: What Traffic Research Shows

Imperva, a cybersecurity company, publishes annual reports on internet traffic composition. In 2022, approximately 47.4% of all web traffic was generated by bots, both "good" (search crawlers, monitoring systems) and "bad" (scrapers, spam bots, DDoS attacks). "Bad" bots alone accounted for 30.2% of total traffic.

Traffic does not equal content. Bots generate numerous requests, but this doesn't mean they create content visible to humans.
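The figures quoted above can be decomposed with simple arithmetic. The sketch below is only an illustration of that subtraction; the function name `traffic_breakdown` is hypothetical, and the result describes requests, not the content people actually see.

```python
# Shares of total web traffic for 2022, as quoted above from the Imperva report.
ALL_BOTS = 47.4  # percent of traffic from any kind of bot
BAD_BOTS = 30.2  # percent of traffic from "bad" bots


def traffic_breakdown(all_bots: float, bad_bots: float) -> dict[str, float]:
    """Split total traffic into human, good-bot and bad-bot shares (percent)."""
    return {
        "human": round(100.0 - all_bots, 1),
        "good_bots": round(all_bots - bad_bots, 1),
        "bad_bots": round(bad_bots, 1),
    }


breakdown = traffic_breakdown(ALL_BOTS, BAD_BOTS)
for source, share in breakdown.items():
    print(f"{source}: {share}%")
```

On these numbers, humans still generate slightly more than half of all requests, and a search crawler that fetches thousands of pages publishes nothing a reader would mistake for a human post.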

📊 Synthetic Content on Social Media: Platform Data

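Twitter estimated bots at under 5% of active accounts, while independent researchers cited 9–15%. Elon Musk, while contesting the platform purchase, claimed the real share could reach 20% or higher. Facebook reported removing more than a billion fake accounts per quarter in 2022 and separately estimates that fake accounts make up about 5% of its monthly active users. The two figures measure different things, since many removed accounts are blocked within minutes of registration.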

Instagram fights automated systems generating comments and likes, but doesn't publish exact figures. The bot problem is real, but the scale depends on counting methodology.

🧾 Generative AI and Text Content: Prevalence Research

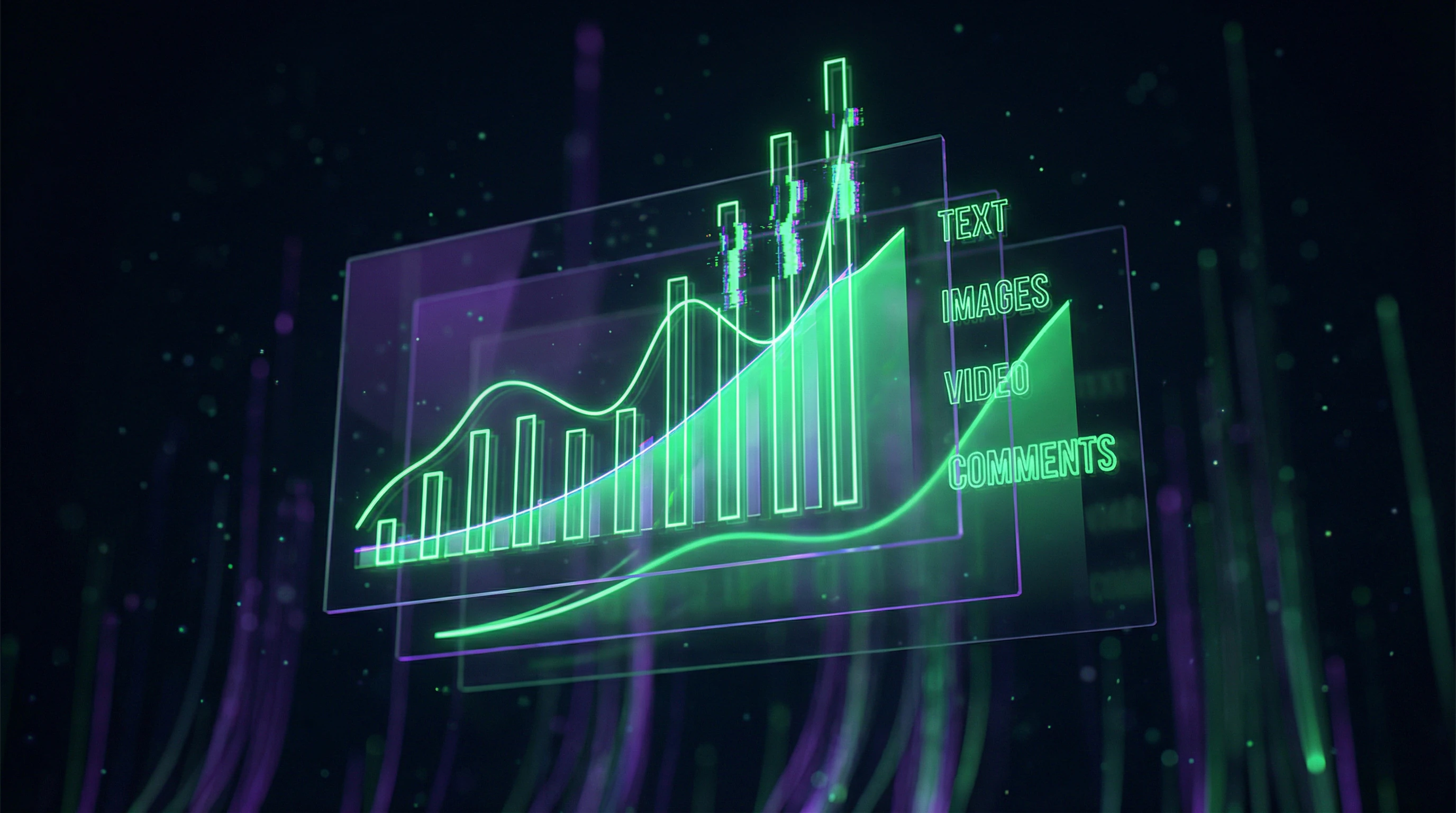

After ChatGPT's launch in November 2022, the share of AI-generated text began growing rapidly. An Originality.AI study (2023) estimated that approximately 10–15% of new articles in the English-language segment show signs of AI generation.

| Content Niche | AI Content Share | Detection Reliability |
|---|---|---|
| General Internet | 10–15% | 70–80% |
| SEO Content, Reviews, News Aggregators | 40–60% | 60–70% |

AI text detection methodology is imperfect — false positives and false negatives account for 20–30%. Exact figures are unknown, but the trend is clear: synthetic text is becoming the norm.
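To see why detector error matters at these prevalence levels, here is a minimal sketch using Bayes' rule. It assumes, purely for illustration, that "detection reliability" means the detector is right at the same rate on AI and human text (sensitivity equals specificity); the source does not specify this, and the function names are hypothetical.

```python
def flag_precision(prevalence: float, sensitivity: float, specificity: float) -> float:
    """P(text is AI-generated | detector flags it), via Bayes' rule."""
    true_pos = prevalence * sensitivity
    false_pos = (1.0 - prevalence) * (1.0 - specificity)
    return true_pos / (true_pos + false_pos)


def flagged_share(prevalence: float, sensitivity: float, specificity: float) -> float:
    """Fraction of all texts the detector flags, correctly or not."""
    return prevalence * sensitivity + (1.0 - prevalence) * (1.0 - specificity)


# Assumed scenarios, loosely based on the ranges in the table above.
scenarios = {
    "general internet": (0.10, 0.75),  # 10% AI share, 75% reliability
    "SEO niche": (0.50, 0.65),         # 50% AI share, 65% reliability
}
for name, (prevalence, reliability) in scenarios.items():
    precision = flag_precision(prevalence, reliability, reliability)
    flagged = flagged_share(prevalence, reliability, reliability)
    print(f"{name}: flags {flagged:.0%} of texts, {precision:.0%} of flags are correct")
```

Under these assumptions, a detector applied to the general internet flags about 30% of articles even though only 10% are AI-generated, and only about one flag in four is correct. Raw detector counts therefore overstate the synthetic share when prevalence is low.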

🔬 Synthetic Images and Video: Data on Deepfakes and Generative Models

Generative models (Midjourney, DALL-E, Stable Diffusion for images; Runway, Synthesia for video) have made synthetic media creation accessible to the masses. A Sensity AI study showed: the number of deepfake videos doubles every six months.

- In 2023, over 95,000 deepfake videos were detected (most pornographic in nature).

- These are only detected cases.

- Synthetic images for illustrations, advertising, and social media cannot be accurately counted — they're indistinguishable from photographs and aren't labeled.
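A doubling time of six months compounds quickly. The sketch below shows the arithmetic only; combining the 2023 count with the doubling rate is an assumption made for illustration, not a forecast, and `project_doubling` is a hypothetical helper.

```python
def project_doubling(initial: float, months: float, doubling_months: float = 6.0) -> float:
    """Project a quantity forward assuming it doubles every `doubling_months`."""
    return initial * 2 ** (months / doubling_months)


DETECTED_2023 = 95_000  # detected deepfake videos, figure quoted above

for months in (6, 12, 24):
    projected = project_doubling(DETECTED_2023, months)
    print(f"after {months:>2} months: about {projected:,.0f} videos")
```

Doubling every six months means a fourfold increase per year, which is why any fixed count of detected videos ages quickly if the growth rate holds.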

🧬 Methodological Limitations: Why Exact Numbers Are Impossible

All cited data has serious limitations. Bot and AI content detection is imperfect: generators constantly improve and learn to bypass detection systems.

- Problem 1: Platform Incentives. Platforms have little interest in publishing exact figures, since a high bot share reduces advertiser and investor confidence.

- Problem 2: Blurred Definition. What counts as synthetic content? AI-edited text? Images with filters? Videos with automatic subtitles? These questions have no definitive answers, making any estimates approximate.

- Problem 3: Hidden Volumes. Most synthetic content remains undetected, especially in closed systems, corporate networks, and private channels.

Conclusion: data confirms the growth of synthetic content, but exact scales remain unknown. This doesn't mean the dead internet theory is correct — it means the internet is indeed changing, and ignoring base rates in interpreting this data leads to erroneous conclusions.

The Mechanism of the Phenomenon: Causation vs. Correlation — Why the Internet Is Changing and Who's Responsible

The growth of automated content is a fact. But is it the result of a conspiracy or a natural consequence of technology and economics? Let's examine the causal relationships.

⚙️ The Attention Economy: Why Automation Is Inevitable

The internet monetizes attention. Advertising platforms pay for views, clicks, time on page — the more content, the more opportunities to display ads.

A human creates one article per day. AI creates a thousand per hour. The platform's choice is obvious not because it's malicious, but because it's efficient in market terms. Platforms optimize for their business models; they are not intentionally "killing" the internet.

🔁 Technological Determinism: AI Development as an Inevitable Process

Generative AI is the result of decades of research, not a sudden conspiracy. GPT-3 (2020), GPT-4 (2023), Stable Diffusion and Midjourney (2022) developed largely in public view: scientific papers, in some cases open source code, and predictable quality improvements and cost reductions.

The Dead Internet Theory interprets technological progress as a malicious plan, ignoring that this is the natural trajectory of AI development.

🧩 Confounders: Other Factors Explaining the Observed Changes

The feeling of "deadness" may be explained not by the growth of bots, but by something entirely different. Algorithmic curation creates filter bubbles — users see repeating patterns. Commercialization has led to standardization: everyone follows the same SEO rules, creating an illusion of uniformity.

The growth in the number of users has diluted quality — more people create content, but the average level declines. These factors aren't related to bots, but create similar subjective experiences.

| Observation | Bot Explanation | Alternative Causes |
|---|---|---|
| Content seems repetitive | Bots generate uniform text | Algorithms show similar content; SEO standardization |
| Fewer original voices | Bots drown out humans | Commercialization displaced amateurs; platform concentration |
| Average content quality has dropped | Bots fill the internet with garbage | More users = more average content; democratization of creation |
| Harder to find niche content | Bots displaced niche creators | Algorithms prioritize popular content; economies of scale |

🎯 Where Correlation Ends and Causation Begins

Correlation: the internet is changing, AI is developing — both processes run in parallel. Causation: does AI cause internet changes? Or does the internet cause demand for AI? Or are both phenomena consequences of a third factor (capitalism, platform scaling)?
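The third-factor case can be made concrete with a small simulation. This is a sketch on synthetic data: the variable names (`incentive`, `ai_adoption`, `content_change`) and the noise levels are assumptions chosen for illustration, and neither observed variable influences the other by construction.

```python
import random
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


rng = random.Random(42)  # fixed seed so the run is repeatable
n = 5000

# Hidden third factor (for example, economic incentives to scale).
incentive = [rng.gauss(0, 1) for _ in range(n)]
# Both observed variables depend on the third factor plus independent noise.
ai_adoption = [z + rng.gauss(0, 0.5) for z in incentive]
content_change = [z + rng.gauss(0, 0.5) for z in incentive]

raw = pearson(ai_adoption, content_change)

# Remove the third factor's contribution. Subtracting it directly works here
# only because the simulation uses a known coefficient of 1; real data would
# need a regression step instead.
residual = pearson(
    [a - z for a, z in zip(ai_adoption, incentive)],
    [c - z for c, z in zip(content_change, incentive)],
)

print(f"raw correlation: {raw:.2f}")
print(f"after controlling for the third factor: {residual:.2f}")
```

The raw correlation is strong even though the two series never interact, and it nearly vanishes once the shared driver is removed. A correlation between AI growth and a changing internet is therefore compatible with both being downstream of the same cause.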

The Dead Internet Theory takes correlation and declares it causation, adding intent (conspiracy). This is a classic cognitive error: we see a pattern → assume an agent → attribute a goal to it.

- Causal chain (real): economic incentives → AI investment → technological progress → platform implementation → content changes

- Causal chain (Dead Internet Theory): conspiracy → deliberate bot deployment → internet death → information control

- Why the second version is more appealing: it's simpler (one agent instead of a system of factors), explains the feeling of lost control, and offers an enemy instead of uncertainty

Reality is more complex: the internet is changing because its economics require scaling, and AI is a scaling tool. This isn't a conspiracy, it's market logic. But this doesn't mean the changes are harmless or that they can't be criticized — it's just that criticism should be directed at economic incentives, not imaginary enemies.

Conflicts and Uncertainties: Where Sources Diverge and Why No Single Answer Exists

Research on the proportion of bots and synthetic content yields contradictory results. Different methodologies, different definitions, and different timeframes all lead to estimates ranging from 5% to 60%.

🔎 Defining "Bot": Technical Agent or Social Actor?

The main problem is the absence of a unified definition of "bot." Technically, it's any automated program interacting with web services: Google search crawlers, monitoring systems, automatic notifications.

In the context of dead internet theory, "bot" means a system imitating human behavior for manipulation or deception. The two definitions don't coincide: the second covers only a narrow subset of the first.

Studies using broad definitions yield high figures; narrow definitions — low figures. This is methodological uncertainty that cannot be eliminated without consensus.
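The effect of the definition on the headline number can be shown on a toy dataset. Everything below is hypothetical: the account list, the two boolean attributes, and the resulting shares are invented to illustrate the counting problem, not measured anywhere.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Account:
    name: str
    automated: bool       # acts without a human performing each action
    imitates_human: bool  # presents itself as a person


# Hypothetical sample of ten accounts.
ACCOUNTS = [
    Account("search_crawler", True, False),
    Account("uptime_monitor", True, False),
    Account("news_feed_poster", True, False),
    Account("spam_persona", True, True),
    Account("alice", False, False),
    Account("bob", False, False),
    Account("carol", False, False),
    Account("dave", False, False),
    Account("erin", False, False),
    Account("frank", False, False),
]


def share(accounts: list[Account], is_bot: Callable[[Account], bool]) -> float:
    """Fraction of accounts counted as bots under the given definition."""
    return sum(1 for account in accounts if is_bot(account)) / len(accounts)


broad = share(ACCOUNTS, lambda a: a.automated)
narrow = share(ACCOUNTS, lambda a: a.automated and a.imitates_human)

print(f"broad definition (any automation): {broad:.0%}")
print(f"narrow definition (imitates a human): {narrow:.0%}")
```

The same ten accounts yield 40% bots under the broad definition and 10% under the narrow one, so two studies can disagree fourfold without either miscounting.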

📊 The Measurement Problem: How to Count What Hides?

Bots and AI systems constantly improve to evade detection. This creates a fundamental problem: we can only count detected cases, but don't know how many remain undetected.

Different studies use different detection methods: behavioral analysis, linguistic patterns, technical signatures. No method is 100% reliable.

- Behavioral analysis — tracking activity patterns, but bots learn to mimic human rhythms

- Linguistic patterns — text analysis, but generative AI becomes indistinguishable from human speech

- Technical signatures — checking metadata and IP, but easily masked through proxies and VPNs
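One way to reason about the undetected remainder is to divide the detected count by the detector's recall. The sketch below assumes that every detected case is a true bot (no false positives) and that recall is known; in practice recall is exactly the unknown quantity, which is the point of the example. The figure of 1,000 detected bots and the helper `estimate_total` are hypothetical.

```python
def estimate_total(detected: int, recall: float) -> float:
    """Estimate the true number of bots from the detected count.

    `recall` is the fraction of real bots the method catches (0 < recall <= 1).
    Assumes no false positives among the detected cases.
    """
    if not 0.0 < recall <= 1.0:
        raise ValueError("recall must be in the interval (0, 1]")
    return detected / recall


DETECTED = 1_000  # hypothetical number of bots a study managed to detect

for recall in (0.9, 0.5, 0.2):
    total = estimate_total(DETECTED, recall)
    hidden = total - DETECTED
    print(f"recall {recall:.0%}: about {total:,.0f} bots in total, {hidden:,.0f} undetected")
```

The same detected count is consistent with roughly 1,100 bots or with 5,000, depending on a recall value that cannot be observed directly. This is why estimates built on detection alone come with such wide ranges.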

🧾 Temporal Dynamics: The Problem of Rapidly Changing Reality

The situation changes so quickly that research becomes outdated by publication time. Data from 2022 doesn't reflect the reality of 2024, when generative AI became a mass tool.

Any estimates of synthetic content proportion are snapshots that lose relevance within months. We're always analyzing the past, not the present.

- Research lag: from data collection to publication takes 6–18 months. During this time, AI technology can advance by a generation or more.

- Adaptation lag: as soon as research on a detection method is published, bot creators are already working on bypassing that method.

- Awareness lag: the public learns about the problem when it has already moved to a new phase of development.

These three lags create a "chasing the tail" effect: we describe yesterday's reality while today's is already different. No single answer to the question "what is the proportion of synthetic content?" exists not because researchers are incompetent, but because the question itself assumes a static reality that doesn't exist.
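How stale a snapshot becomes depends on the publication lag and on how fast the measured quantity grows. The sketch below tabulates that relationship; the doubling times are assumptions for illustration (the source gives a six-month doubling only for deepfake videos), and `staleness_factor` is a hypothetical helper.

```python
def staleness_factor(lag_months: float, doubling_months: float) -> float:
    """How many times the measured quantity has grown during the lag."""
    return 2 ** (lag_months / doubling_months)


LAGS = (6, 12, 18)           # publication lag in months, range quoted above
DOUBLING_TIMES = (6, 12, 24)  # assumed growth rates, fast to slow

for doubling in DOUBLING_TIMES:
    row = ", ".join(
        f"{lag} mo lag: x{staleness_factor(lag, doubling):.1f}" for lag in LAGS
    )
    print(f"doubling every {doubling} mo -> {row}")
```

With a six-month doubling time and an 18-month lag, the published figure understates the current one by a factor of eight. With slower growth the same lag matters far less, so the size of the "chasing the tail" effect is itself an empirical question.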

Cognitive Anatomy of the Myth: Which Psychological Mechanisms Transform Observation into Conspiracy

The Dead Internet Theory is a classic example of how real observations transform into conspiratorial thinking. We examine the cognitive biases that fuel this theory.

⚠️ Pattern-Matching and Apophenia: Seeing Connections Where None Exist

The human brain is evolutionarily tuned to seek patterns — this helped survival by recognizing threats. But this ability misfires, creating the illusion of patterns in random data.

Apophenia is the perception of meaningful connections between unrelated phenomena. Dead Internet Theory proponents see "evidence" everywhere: repetitive comments, similar posts, templated responses. But these phenomena have simple explanations: people copy each other, use the same memes, follow trends.

Apophenia transforms banality into "conspiracy evidence" — and the more data available, the more "coincidences" the brain finds.

🕳️ Confirmation Bias: Seeking Only What Confirms the Hypothesis

When someone accepts the Dead Internet Theory, they begin seeking confirmation and ignoring refutation. Encountered a templated comment? "It's a bot!" Saw an original post? "Rare exception."

This cognitive filter makes the theory unfalsifiable — any data gets interpreted in its favor. Confirmation bias is a systematic error that reinforces beliefs regardless of facts.

🧠 Agency and Intentionality: Attributing Intent to Impersonal Processes

People tend to see intentions and agents where impersonal processes operate. The growth of internet automation is the result of economic incentives and technological development, not someone's malicious plan.

But the brain demands an enemy, a coordinator, a conspirator. Impersonal market forces and algorithms are experienced as collusion. This isn't paranoia — it's the normal operation of an agent-detection system that fires too often.

- Observation: content has become more homogeneous and automated

- Interpretation: this isn't market logic, but someone's plan

- Agent search: who could it be? AI companies? The government? Corporations?

- Reinforcement: any new fact becomes "proof" of the plan

📊 Availability Heuristic: Remembering the Vivid, Forgetting the Typical

Vivid examples of bots, copied posts, and strange comments are easy to recall and seem frequent. Millions of ordinary posts go unnoticed — they don't trigger emotions.

The availability heuristic causes us to overestimate the frequency of what's easily recalled. A few vivid bot examples create the impression that bots are everywhere.
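The size of this distortion can be sketched with a weighted average. The numbers are assumptions for illustration only: a 5% actual share of bot posts and a "salience" factor saying how many times more likely a bot post is to be remembered than an ordinary one. The function `recalled_share` is hypothetical.

```python
def recalled_share(actual_share: float, salience: float) -> float:
    """Share of remembered posts that are bot posts.

    `salience` is how many times more likely a bot post is to be remembered
    than an ordinary post (1.0 means equally memorable).
    """
    weighted_bots = actual_share * salience
    weighted_rest = (1.0 - actual_share) * 1.0
    return weighted_bots / (weighted_bots + weighted_rest)


ACTUAL = 0.05  # assumed true share of bot posts

for salience in (1, 5, 10):
    perceived = recalled_share(ACTUAL, salience)
    print(f"salience x{salience}: bots are {perceived:.0%} of what is remembered")
```

If bot posts are ten times as memorable, a true share of 5% turns into roughly a third of everything a person recalls, with no change in the underlying data.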

🎯 Base Rate Neglect: Forgetting the Context

Even if 5% of the internet is synthetic content, this doesn't mean the internet is "dead." But when someone focuses on that 5%, they forget about the 95% of living content.

Base rate neglect is the tendency to ignore how common something is overall when judging a specific case. The Dead Internet Theory works exactly this way: it takes a real phenomenon (growth of AI content) and ignores its true scale.

⚫⚪ Black-and-White Thinking: No Shades of Gray

The Dead Internet Theory assumes a dichotomy: either the internet is alive or dead. Either content is human or bot-generated. But reality is more complex: content can be partially automated, partially human, hybrid.

False dichotomy simplifies the world, making it more comprehensible but less accurate. Complex phenomena require complex models — not a choice between two extremes.

The Dead Internet myth isn't a lie. It's a real observation filtered through cognitive biases that transform partial truth into absolute conspiracy.