What the Dunning-Kruger effect actually is—and why the popular version distorts the research

The Dunning-Kruger effect is often described as a phenomenon in which incompetent people overestimate their abilities while experts tend toward modesty. The original 1999 research by David Dunning and Justin Kruger, however, reached far more nuanced conclusions and included important caveats that were lost in popularization (S006).

Dunning notes that people show remarkable creativity in reaching the conclusions they want and rejecting threatening ones (S001). The effect describes a cognitive bias that affects everyone, regardless of intelligence (S003).

This isn't about "stupidity" or dividing people into castes. It's about limited expertise in a specific domain—and each of us has such domains.

⚠️ From scientific paper to internet meme: how the research lost its nuance

The journey from academic publication to popular meme proved destructive to scientific accuracy. The methods and conclusions of the original study have been questioned, but the paper itself contained all the necessary caveats and limitations, which were removed when it became part of pop culture (S006).

In the hierarchy of insults, few things can be more powerful than claiming your opponents are so incompetent they don't even realize their incompetence (S001, S004). This simplified interpretation became dominant in online discussions.

- Popular version: "Stupid people don't know they're stupid."

- Scientific version: people with limited expertise in domain X cannot adequately assess their level in domain X, because they lack the knowledge needed for such an assessment.

🧩 Boundaries of applicability: situational bias, not personality trait

The key misconception is that the effect divides people into "smart" and "stupid." In reality, people with limited expertise in a particular domain tend to overestimate their knowledge, and all of us have such domains (S001, S004).

The Dunning-Kruger effect is not a permanent characteristic of a person, but a situational cognitive bias. The same person can demonstrate overestimation in one domain (politics, medicine) and accurate self-assessment in another (professional field). The phenomenon describes a universal vulnerability of human cognition, not a personality type.

- Manifests in specific contexts of insufficient competence

- Independent of a person's general intelligence

- Can coexist with high expertise in other domains

- Affects everyone, including scientists and specialists—in their domains of ignorance

Even a small amount of knowledge cultivates confidence that hides people's own blind spots from them (S003, S008). This isn't a question of intelligence—it's a question of metacognitive architecture: to understand what you don't know, you need to already know enough.

Steelman Version of the Argument: Seven Reasons Why the Dunning-Kruger Effect Is Considered a Real and Significant Phenomenon

Before proceeding to critical analysis, it's necessary to present the strongest arguments in favor of the existence and significance of the Dunning-Kruger effect. This will help avoid a straw man and honestly evaluate the evidence base.

🔬 First Argument: Reproducibility of the Basic Pattern Across Multiple Studies

The original Dunning and Kruger study has been replicated in various contexts and cultures (S001, S005). The basic pattern—people with low test scores tend to overestimate their performance, while high-performing participants tend toward more accurate or even underestimated self-assessment—has been observed in studies of logical reasoning, grammar, humor, and other cognitive skills.

The very fact that the pattern repeats across different samples indicates the presence of some real phenomenon, even if the methodology has been criticized.

🧠 Second Argument: The Metacognitive Nature of Competence

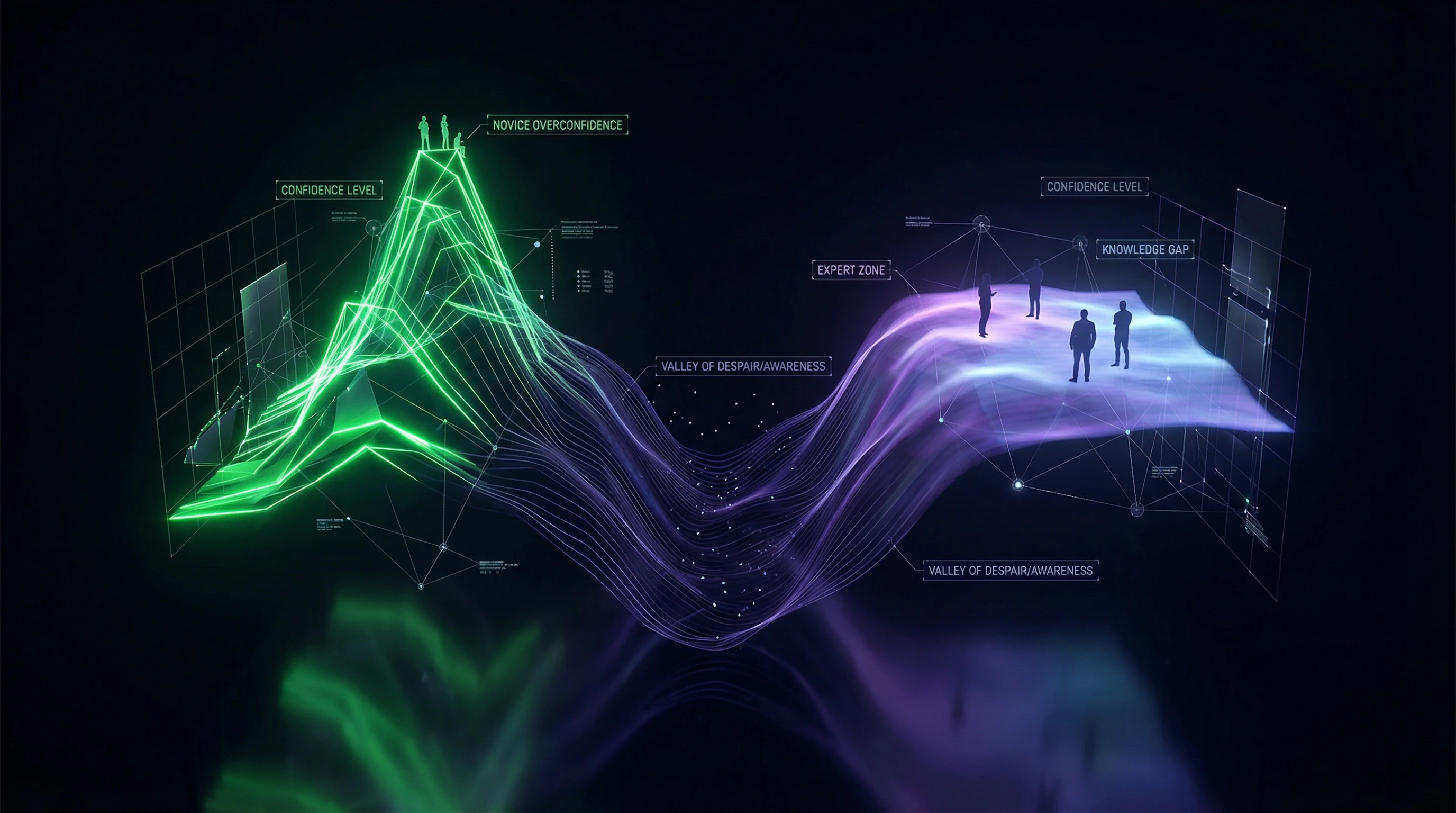

The central idea of the effect is that accurately assessing one's competence requires that same competence. This metacognitive requirement creates a paradox: to understand what you don't know, you need to know enough to recognize the boundaries of your knowledge.

Novices lack the cognitive tools to evaluate task complexity or the quality of their solution, making them vulnerable to overestimation (S006).

📊 Third Argument: Asymmetry in Confidence Calibration

| Task Type | Confidence Pattern | Mechanism |
|---|---|---|
| Easy tasks | Underconfidence | People are less confident than their actual accuracy |
| Difficult tasks | Overconfidence | People are more confident than accurate |
| Novice + difficult task | Maximum overestimation | Perceives complex as simple |

The Dunning-Kruger effect can be viewed as a special case of this more general pattern, often called the hard-easy effect (S002).
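Calibration in this sense compares how confident people are with how often they are actually right. A minimal sketch, assuming the simplest summary score (mean stated confidence minus the proportion of correct answers); the function name `calibration_bias` and the two response sets are hypothetical, chosen only so that one set comes out overconfident and the other underconfident:

```python
from statistics import mean


def calibration_bias(confidences: list[float], correct: list[bool]) -> float:
    """Mean stated confidence minus the proportion of correct answers.

    Positive values indicate overconfidence, negative values underconfidence.
    """
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need equal-length, non-empty inputs")
    accuracy = mean(1.0 if c else 0.0 for c in correct)
    return mean(confidences) - accuracy


# Hypothetical data: each entry is one answer's stated confidence (0 to 1)
# and whether that answer turned out to be right.
task_a_conf = [0.7, 0.8, 0.6, 0.7, 0.7]
task_a_correct = [True, True, True, True, False]    # 80% correct
task_b_conf = [0.7, 0.6, 0.8, 0.7, 0.7]
task_b_correct = [True, False, False, True, False]  # 40% correct

task_a_bias = calibration_bias(task_a_conf, task_a_correct)
task_b_bias = calibration_bias(task_b_conf, task_b_correct)
print(f"task A: {task_a_bias:+.2f}, task B: {task_b_bias:+.2f}")
```

Both hypothetical sets have the same average confidence (0.70); only accuracy differs, so set A scores about -0.10 (underconfident) and set B about +0.30 (overconfident). Real calibration studies usually also bin answers by confidence level, which this sketch omits for brevity.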

⚙️ Fourth Argument: Evolutionary Adaptiveness of Moderate Overestimation

From an evolutionary perspective, moderate overestimation of one's abilities may be adaptive. It motivates people to tackle challenging tasks they might avoid with fully realistic self-assessment.

Novices who don't recognize the full complexity of a task are more likely to start learning it, which may explain why a bias toward overestimation became embedded in human psychology.

🧪 Fifth Argument: Correlation with Other Cognitive Biases

The Dunning-Kruger effect is consistent with other well-documented cognitive biases: illusion of control, overconfidence effect, self-serving bias. All these phenomena indicate that human cognition is systematically biased toward positive self-assessment.

The effect fits into a broader picture of cognitive vulnerabilities linked to confirmation bias and availability heuristic.

🔁 Sixth Argument: Practical Significance for Education and Professional Development

- Education: novice students often overestimate their understanding of material; specialized feedback is required to calibrate self-assessment (S004, S006)

- Professional practice: inexperienced professionals may be overly confident in their decisions, while experts tend toward caution and doubt

- Risk management: the concept helps explain why junior teams often underestimate project complexity

👁️ Seventh Argument: Phenomenological Validity

Many people recognize the Dunning-Kruger effect pattern from their own experience. The phenomenon of "the more I learn, the more I realize how little I know" resonates with personal experiences of learning and developing expertise.

This phenomenological validity—while not scientific proof—explains the concept's popularity and suggests it describes something real in the subjective experience of cognition.

Evidence Base: What the Data Says from David Dunning's Interviews and Related Sources

This section builds its analysis on direct quotes from Dunning's interviews and related sources, and ties each claim to a cited source.

📊 Central Claim: Universality of Cognitive Bias

David Dunning emphasizes: the effect afflicts all of us (S001, S004). This is not a characteristic of a particular group, but a universal vulnerability of human cognition—a situational limitation of self-assessment in areas with insufficient expertise.

The effect plagues all people, regardless of intelligence (S003, S008). This is not about dividing people into categories, but about a mechanism that triggers when knowledge is insufficient for adequate self-assessment.

🧾 Mechanism of Self-Deception: Creativity in Reaching Desired Conclusions

Dunning draws attention to the variety of ways people arrive at desired conclusions and reject threatening findings (S001, S004). The problem is not lack of information, but motivated reasoning—when intellectual abilities are used to defend preferred beliefs rather than objectively assess reality.

People demonstrate remarkable creativity in defending their beliefs. This is not stupidity—it's motivated cognition at work, serving psychological comfort rather than truth.

🧩 Limitations of Expertise: Everyone Has Gaps

Key observation: people with limited expertise tend to overestimate their knowledge, and we all have gaps (S001, S004). Most people are well-versed in no more than a few areas, but even a little knowledge cultivates arrogance that conceals blind spots (S003, S008).

Every person is at risk of the effect in most areas of knowledge they encounter. This is not a personal flaw—it's a structural feature of learning.

| Expertise Level | Nature of Self-Assessment | Distortion Mechanism |
|---|---|---|
| Novice (0–10% knowledge) | Often inflated | Unknown unknowns: doesn't see the gaps |
| Intermediate (30–50%) | May be adequate or inflated | Sees part of the complexity, but not all |
| Expert (80%+) | Often deflated | Sees the scale of the unknown, aware of boundaries |

🔎 Distortion in Popular Culture: From Science to Insult

The term is often used in arguments, especially online, to claim that opponents don't know what they're talking about (S001, S004). In the hierarchy of insults, few things are more powerful than the idea: your adversaries are so uninformed they don't even know it.

This transformation of a scientific concept into a rhetorical weapon distorts its essence and makes constructive discussion impossible. The connection to confirmation bias is direct here: we use the Dunning-Kruger effect as confirmation of our own correctness.

📌 Oversimplification of the Phenomenon: Loss of Scientific Depth

The effect is often simplified to the formula "stupid people don't know they're stupid" and becomes a popular meme and insult (S003, S008). Dunning and Kruger's research was more nuanced than the meme it spawned.

It is often paraphrased as "stupid people don't know how stupid they are," or something even cruder (S006). This strips out the important nuances and limitations of the original research, turning a complex psychological mechanism into a flat insult.

🧠 Call for Self-Awareness: Individual and Institutional Levels

Dunning calls for both individuals and institutions to become more aware of their limitations (S003, S008). Since everyone is vulnerable to overestimation in areas with limited expertise, systematic procedures for checking and calibrating self-assessment are necessary.

- At the individual level: actively seeking feedback from experts, not from like-minded peers

- At the organizational level: peer-review procedures, independent audits, separation of roles (those who decide should not evaluate their own decisions)

- At the systemic level: a culture that rewards acknowledging limitations rather than concealing them

🧷 OpenMind's Role: Restoring Scientific Accuracy

The interview was conducted by Julia Powell, co-editor of OpenMind—an organization whose goal is debunking scientific myths (S003, S008). Context matters: the conversation is aimed not at popularizing a simplified version, but at restoring scientific accuracy.

Powell speaks with Dunning about the nature of expertise (S001), pointing to a broader framework—going beyond simple description of cognitive bias. This is an attempt to return the concept to its original context: not as a tool for humiliation, but as a tool for understanding one's own limitations and designing better decision-making systems.

The Mechanism: Why Metacognitive Blindness Is Inevitable in Early Learning Stages

The Dunning-Kruger effect operates through metacognitive processes: the ability to evaluate one's own thinking and knowledge. Without this ability, a person cannot calibrate their self-assessment.

🔁 The Metacognitive Loop: Competence Required to Assess Competence

The paradox is simple: accurately assessing your competence in a field requires a significant portion of that same competence. A chess novice cannot evaluate the quality of their play—they lack knowledge of strategy, tactics, and positional evaluation.

After learning the rules and playing a few games, they may feel confident, but this confidence is based on not knowing what they don't know. An expert recognizes numerous subtleties and variations, making them more cautious in self-assessment.

🧠 The Illusion of Understanding: When Familiarity Is Mistaken for Competence

People often confuse familiarity with material for understanding it (S006). After reading a text or watching a video, a person feels they "understand" a concept, though they've only learned terms and surface-level structure.

The illusion of understanding is especially strong in fields where basic concepts seem intuitively clear, but deep understanding requires significant effort: economics, psychology, politics, medicine.

⚙️ Absence of Feedback: When Errors Aren't Obvious

In some fields, novice errors don't lead to immediate negative consequences. If someone expresses an unprofessional opinion about politics or medicine, they rarely receive direct feedback on the quality of their judgment.

When learning to ride a bicycle, falling immediately signals an error. In abstract knowledge domains, a person can maintain an illusion of competence for years without corrective signals. This is linked to confirmation bias, which reinforces overconfidence.

| Knowledge Domain | Feedback | Metacognitive Blindness Risk |
|---|---|---|
| Bicycle riding | Immediate (falling) | Low |
| Programming | Rapid (code doesn't work) | Medium |
| Politics, medicine | Absent or delayed | High |
| Social skills | Implicit, ambiguous | High |

🧩 Dunning-Kruger Effect vs. Regression to the Mean: A Statistical Alternative

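Critics of the original research point to a possible statistical artifact: regression to the mean (S007). If performance is measured with error, the lowest scores are partly the product of bad luck, so the true ability of low scorers is on average higher than their measured results. Even a self-assessment that tracked true ability perfectly would then appear to overestimate their performance, while the self-assessments of high scorers would appear to underestimate it.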

This doesn't negate the existence of the metacognitive effect, but indicates the need to separate real cognitive bias from statistical artifact.
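The regression artifact can be reproduced in a few lines. The sketch below is a hypothetical simulation, not a model taken from the cited sources: it assumes true ability is normally distributed and that both the test score and the self-estimate are unbiased but noisy readings of it. People are then grouped by quartile of measured score. The function name `simulate` and its parameters are illustrative.

```python
import random
from statistics import mean


def simulate(n: int = 10_000, noise: float = 1.0, seed: int = 42):
    """Return (mean measured score, mean self-estimate) for each score quartile.

    Assumed model (hypothetical): true ability ~ N(0, 1); the test score and
    the self-estimate each equal true ability plus independent N(0, noise)
    error. Nobody is biased: self-estimates are centred on true ability.
    """
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        ability = rng.gauss(0.0, 1.0)
        score = ability + rng.gauss(0.0, noise)     # noisy test result
        estimate = ability + rng.gauss(0.0, noise)  # noisy but unbiased self-view
        people.append((score, estimate))
    # Group people by *measured* score, lowest quartile first.
    people.sort(key=lambda p: p[0])
    q = n // 4
    quartiles = [people[i * q:(i + 1) * q] for i in range(4)]
    return [(mean(s for s, _ in grp), mean(e for _, e in grp)) for grp in quartiles]


for i, (score, estimate) in enumerate(simulate(), start=1):
    print(f"Q{i}: measured {score:+.2f}, self-estimate {estimate:+.2f}")
```

In this model the bottom quartile's self-estimates land above its measured scores and the top quartile's land below, even though no simulated person is biased. Setting `noise` to 0 removes the gap entirely, which is why separating metacognitive bias from artifact depends on reliable performance measures.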

Studies across different cultures and contexts (S004, S005) show that the pattern reproduces even when controlling for statistical factors, confirming the reality of the mechanism rather than just a mathematical artifact.

Conflicts and Uncertainties: Where Sources Diverge and What Remains Questionable

Honest analysis requires acknowledging areas where evidence is ambiguous or sources contradict each other.

🕳️ Methodological Critique: Questions About the Original Research

The methods and conclusions of Dunning and Kruger's original research have been called into question (S006). Critics point to several problems: small sample size, possibility of statistical artifacts (regression to the mean), cultural specificity (most studies conducted on Western students), and questions about the operationalization of "competence."

The original article contained all necessary caveats and limitations, which were removed during popularization (S006). This means criticism is often directed not at the work itself, but at its distorted version.

🧾 Universality vs. Cultural Specificity

The effect is described as a universal cognitive bias (S003, S008), but most research has been conducted in Western, Educated, Industrialized, Rich, and Democratic (WEIRD) societies.

In cultures with a collectivist orientation, self-assessment patterns differ. For example, some Asian cultures show a general tendency toward modesty in self-evaluation, which modifies how the effect manifests (S005). This doesn't negate its existence, but points to the need for cross-cultural research.

| Parameter | WEIRD Societies | Collectivist Cultures |
|---|---|---|
| Baseline self-assessment tendency | Slight overestimation (norm) | Modesty (norm) |
| D-K effect manifestation | Strongly expressed | May be masked by social norms |
| Results interpretation | Straightforward | Requires contextualization |

🔬 Effect Magnitude: How Strong Is the Overestimation

Sources agree that people with limited expertise tend toward overestimation (S001, S003, S004, S008). But the magnitude of this effect varies depending on domain, task, and measurement methodology.

In some studies, novice overestimation proves moderate; in others, dramatic. The effect is not a constant, but depends on contextual factors that remain insufficiently studied.

Novice overestimation is not a universal constant, but a function of task complexity, feedback type, and cultural context.

🧷 Effect Asymmetry: Novice Overestimation vs. Expert Underestimation

The classic description of the effect includes two components: novice overestimation and expert underestimation. However, evidence for the second component is less convincing.

Some studies show that experts demonstrate accurate self-assessment rather than systematic underestimation (S007). Expert underestimation may be linked to other factors: awareness of task complexity, knowledge of more competent specialists' existence, or social norms of modesty in professional communities.

This distinction is important for understanding how we interpret information about our own competence. Underestimation may not be a cognitive bias, but a rational adaptation to uncertainty.

Cognitive Anatomy: What Psychological Mechanisms Make Us Vulnerable to the Dunning-Kruger Effect

The Dunning-Kruger effect doesn't exist in isolation: it's connected to a whole complex of cognitive biases and psychological mechanisms.

⚠️ Motivated Reasoning: Protecting the Self from Threatening Information

Dunning emphasizes the surprising variety of creative ways people achieve desired conclusions and reject threatening ones (S001, S004). Motivated reasoning is a process where cognitive resources are used not for objective assessment of reality, but to defend preferred beliefs and a positive self-image.

Acknowledging one's own incompetence threatens self-esteem, so the psyche mobilizes defense mechanisms: selective attention to confirming information, rationalization of errors, attribution of failures to external factors.

Motivated reasoning isn't a logic error, but a defense mechanism. The brain works not for truth, but to preserve the integrity of self-image.

🧠 Illusion of Transparency: Overestimating the Clarity of One's Own Thoughts

People tend to overestimate the degree to which their internal states, intentions, and knowledge are obvious to others. This illusion of transparency is linked to the Dunning-Kruger effect: a person lacking knowledge doesn't see the difference between their understanding and an expert's understanding.

Once you know something, that knowledge seems simple and obvious, and you forget how much time it took to acquire (the curse of knowledge). A novice makes the mirror-image mistake: because a concept looks simple to them, they take that apparent simplicity as proof of their own competence.

🔄 Confirmation Bias and Selective Perception

People actively seek information that confirms their beliefs and ignore contradictory data (S002). In the context of Dunning-Kruger, this means: a novice notices only those examples that confirm their competence and misses signals about their own incompetence.

Confirmation bias creates a closed loop: erroneous beliefs are reinforced by selective perception, which further strengthens the illusion of competence.

📊 Metacognitive Blindness and Lack of Self-Assessment Tools

| Competence Level | Self-Assessment Ability | Primary Vulnerability Mechanism |
|---|---|---|
| Novice | Minimal: doesn't know what they don't know | Absence of quality assessment criteria |
| Intermediate | Growing, but often overestimated | Enough knowledge for confidence, insufficient for critique |
| Expert | High, but may be underestimated | Awareness of complexity and one's own gaps |

Metacognitive blindness — the inability to assess the quality of one's own thinking — arises because the assessment criteria are in the same cognitive system that needs to be evaluated (S006). A novice cannot use tools they don't have.

🎯 Social Reinforcement and Groupthink

People often find themselves in environments where their incompetence doesn't provoke criticism. In groups with low knowledge levels, groupthink amplifies the illusion of competence: everyone agrees with each other because no one sees the problem.

Social reinforcement works as an amplifier of the Dunning-Kruger effect. If the environment doesn't provide feedback about actual competence level, the illusion becomes persistent.

🛡️ Defense Mechanisms and Cognitive Dissonance

When a person encounters information contradicting their self-assessment, defense mechanisms activate. Instead of revising beliefs, the psyche often chooses the path of least resistance: discrediting the source, reinterpreting data, denial.

- Encountering criticism or failure

- Emergence of cognitive dissonance (contradiction between self-assessment and reality)

- Activation of defense mechanisms (rationalization, attribution, denial)

- Restoration of belief consistency (often without changing self-assessment)

This sequence explains why criticism often doesn't lead to behavior change — it's perceived as a threat, not as information.

The psyche protects not truth, but integrity. When choosing between fact and self-esteem, most choose self-esteem.

All these mechanisms work not in isolation, but in synergy. The availability heuristic makes recent successes more noticeable than past errors. Base rate neglect leads people to overestimate the significance of individual victories. A false dichotomy turns "I know something" into "I know enough" (S007).

Understanding this anatomy is the first step toward overcoming it. Not through self-criticism, but through a system of external feedback, media literacy, and conscious cultivation of doubt.