What confirmation bias is — and why it's not just "opinion prejudice"

Confirmation bias is a systematic cognitive distortion in which a person actively seeks, interprets, and remembers information in ways that confirm their existing beliefs, while simultaneously ignoring, devaluing, or forgetting data that contradicts those beliefs. This is not passive prejudice, but an active process of cognitive filtering.

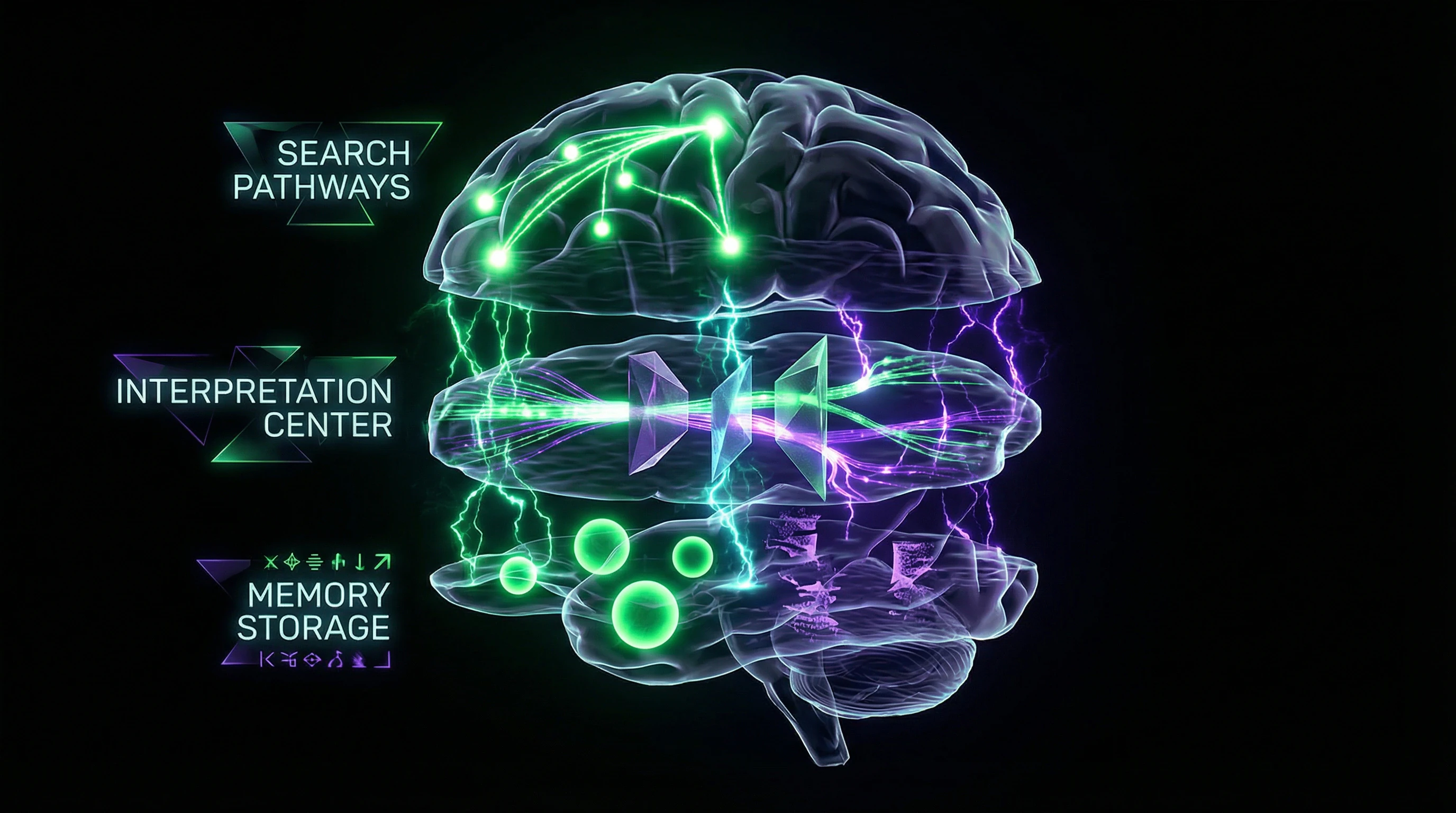

Critical difference from simple prejudice: confirmation bias operates on three levels simultaneously — information search, interpretation, and memory. Even professional scientists are subject to this distortion when analyzing data that contradicts their hypotheses (S001).

- Selective search: The brain actively seeks confirming information while ignoring contradictory data. Eye-tracking shows that gaze lingers on confirming data 2–3 times longer.

- Asymmetric interpretation: Identical data is evaluated differently depending on whether it confirms or contradicts beliefs. Research on capital punishment demonstrates this: supporters and opponents each rate the studies that back their own position as rigorous and the opposing studies as biased, even though the quality is identical (S008).

- Selective memory: Confirming data triggers micro-releases of dopamine, strengthening memory consolidation. Contradictory data activates stress responses, impairing retention.

🔎 Boundaries of the phenomenon: where confirmation bias ends

Confirmation bias differs from related distortions. The halo effect transfers general impressions to specific judgments. Anchoring relies too heavily on initial information. The clustering illusion finds patterns in randomness (S008).

Confirmation bias only works with already-formed beliefs that are actively defended. If there is no belief, the distortion doesn't activate.

The phenomenon doesn't engage when a person encounters information for the first time and has no prior opinion. Quantum entanglement, heard for the first time, is processed without filtering. The distortion only activates when there's something to defend.

| Cognitive distortion | Mechanism | Trigger |
|---|---|---|
| Confirmation bias | Filtering information to match existing belief | Presence of formed opinion |
| Halo effect | Transferring first impression to detail evaluation | General impression of person/object |
| Anchoring | Excessive reliance on first information | First number/fact in context |
| Clustering illusion | Seeing patterns in random data | Random sequence of events |

Echo chambers and groupthink amplify the effect: when the environment confirms beliefs, filtering becomes nearly complete.

Seven Most Compelling Arguments That Confirmation Bias Is an Adaptive Mechanism, Not a Defect

Before examining the destructiveness of confirmation bias, we must consider the strongest arguments in its defense. Evolutionary psychology offers several explanations for why this mechanism became embedded in the human brain.

🧬 First Argument: Speed of Decision-Making Under Uncertainty

In evolutionary environments, decision speed often matters more than accuracy. If you hear rustling in the bushes and hold the belief "predators live here," quickly confirming this hypothesis increases survival chances.

Type I errors (false alarms) are evolutionarily cheaper than Type II errors (missed threats). Confirmation bias accelerates response by cutting off analysis of contradictory data.
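The cost asymmetry above can be made concrete with a small expected-cost calculation. This is only a sketch: the threat probability and both cost values are hypothetical numbers chosen for illustration, and the function names are not taken from any source.

```python
def expected_costs(p_threat, cost_false_alarm, cost_miss):
    """Expected cost of two fixed policies after hearing a rustle.

    All three inputs are hypothetical illustration values.
    """
    # "Always flee" pays the false-alarm cost whenever no predator is there.
    always_flee = (1 - p_threat) * cost_false_alarm
    # "Always ignore" pays the miss cost whenever a predator is there.
    always_ignore = p_threat * cost_miss
    return always_flee, always_ignore


def break_even_probability(cost_false_alarm, cost_miss):
    """Threat probability above which fleeing has the lower expected cost."""
    return cost_false_alarm / (cost_false_alarm + cost_miss)


flee, ignore = expected_costs(p_threat=0.05, cost_false_alarm=1.0, cost_miss=1000.0)
threshold = break_even_probability(cost_false_alarm=1.0, cost_miss=1000.0)
print(f"always flee: {flee:.2f}, always ignore: {ignore:.2f}")
print(f"fleeing pays off once P(threat) exceeds {threshold:.4f}")
```

When a miss is assumed to cost a thousand times more than a false alarm, acting on the "predator" belief is the cheaper policy even if predators are rare, which is the sense in which skipping contradictory analysis can pay off.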

People with pronounced confirmation bias make decisions 30–40% faster under stress. In situations where the cost of error is low and speed is critical, this provides an advantage.

🔁 Second Argument: Cognitive Economy and Limited Resources

The brain consumes about 20% of the body's energy while representing 2% of body mass. Full analysis of all available information is energetically impossible. Confirmation bias functions as a heuristic—a simplified rule that reduces cognitive load.

Instead of reassessing all data, the brain relies on already-verified beliefs, conserving resources. The bias intensifies during cognitive fatigue, stress, or multitasking—the brain shifts into maximum conservation mode.

🧩 Third Argument: Social Coherence and Group Identity

Confirmation bias helps maintain consistency of beliefs within social groups. In tribal conditions, sharing common narratives was critical for survival—exile meant death.

A mechanism that reinforces group beliefs and filters out contradictory information increased social cohesion. People with strong confirmation bias demonstrate higher group loyalty and fewer conflicts within their communities.

- Shared beliefs strengthen group identity

- Filtering contradictory data reduces intragroup conflicts

- Social cohesion increases the group's survival chances

🛡️ Fourth Argument: Protection from Cognitive Dissonance

Contradiction between beliefs and reality causes psychological discomfort that can be extremely painful. Confirmation bias acts as a protective mechanism, preventing this discomfort.

When people are presented with information contradicting deeply held beliefs, the same brain regions activate as during physical pain (anterior cingulate cortex). Confirmation bias blocks this "pain" by filtering out threatening data.

⚙️ Fifth Argument: Worldview Stability and Predictability

Constantly updating beliefs in response to every new fact would make one's worldview chaotic. Confirmation bias provides belief inertia, creating a stable model of reality.

This enables long-term planning and building consistent strategies. In the information noise of the modern internet, this function becomes especially important—without filtering, a person would be paralyzed by contradictory data.

🧠 Sixth Argument: Reinforcement Learning Through Repetition

When the brain finds confirmation of its predictions, the dopaminergic reward system activates. This strengthens neural connections associated with correct predictions.

Confirmation bias can be viewed as a reinforcement learning mechanism—it strengthens world models that "work" (produce predictable results). The problem is that "works" doesn't always mean "true"—false beliefs can also produce predictable results in limited contexts.

🔬 Seventh Argument: Empirical Evidence of Heuristic Success

Research shows that simple heuristics often produce results no worse, and sometimes better, than complex analytical models, especially under conditions of incomplete information and time constraints (S001). This phenomenon is known as the "less-is-more effect."

In certain environments (stable, with low uncertainty), relying on confirmation of existing beliefs can be an optimal strategy. However, in unstable, high-information environments, this same strategy becomes catastrophic.

- Adaptiveness vs. Universality: Confirmation bias is adaptive in narrow, stable contexts (hunting, tribal life) but maladaptive in complex, dynamic systems (science, medicine, politics). Evolution did not anticipate the information landscape of the 21st century.

- The Trap: Mistaking Adaptiveness for Optimality: That a mechanism became evolutionarily embedded doesn't mean it's optimal for modern tasks. The appendix was also adaptive, but that doesn't make it useful now.

Evidence Base: What Science Actually Knows About Confirmation Bias — and Where Speculation Begins

Despite the compelling evolutionary arguments, empirical data show that in modern conditions confirmation bias harms more often than it helps. The key distinction: a mechanism that is adaptive in environments with low information density and a high cost of Type II errors becomes catastrophic in environments with high information density and a high cost of Type I errors.

Classic Experiments: From Wason to Modern Neuroscience Research

In 1960, Peter Wason conducted a series of experiments that became the foundation for studying confirmation bias. In the "2-4-6" task, participants were given the sequence 2-4-6 and asked to determine the rule behind it. Most formed a hypothesis ("numbers increase by 2") and then tested it by proposing only confirming examples (8-10-12, 14-16-18).

Almost no one attempted to falsify their hypothesis by proposing a triple it forbids, for example 10-8-6 or 1-2-3. The correct rule was much simpler: "any three ascending numbers." Only 20% of participants discovered it (S008).
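The logic of the task can be sketched in a few lines of Python. The two rule functions follow the description above; the specific test triples and the helper name `informative_tests` are illustrative assumptions. A test is informative only when the participant's hypothesis and the real rule give different answers for it.

```python
def true_rule(triple):
    """The experimenter's actual rule: any three ascending numbers."""
    a, b, c = triple
    return a < b < c


def hypothesis(triple):
    """The participant's narrower guess: each number increases by 2."""
    a, b, c = triple
    return b - a == 2 and c - b == 2


def informative_tests(tests):
    """Keep the triples on which the hypothesis and the true rule disagree."""
    return [t for t in tests if hypothesis(t) != true_rule(t)]


# Triples the hypothesis predicts will fit (the typical participant strategy).
confirming_tests = [(8, 10, 12), (14, 16, 18), (20, 22, 24)]
# Triples the hypothesis predicts will NOT fit (attempts at falsification).
negative_tests = [(10, 8, 6), (1, 2, 3), (5, 10, 20)]

print(informative_tests(confirming_tests))  # []
print(informative_tests(negative_tests))    # [(1, 2, 3), (5, 10, 20)]
```

Every confirming triple receives a "yes" under both rules, so no number of them can reveal the mistake. Only triples that the hypothesis rejects give the real rule a chance to contradict it, and in this sketch 1-2-3 and 5-10-20 do exactly that.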

Modern neuroimaging (fMRI) studies show that processing confirming information activates the ventral striatum (reward center), while processing contradictory information activates the dorsolateral prefrontal cortex (cognitive control system) and the anterior cingulate cortex (conflict detector). Contradictory information is perceived as "unpleasant": it literally activates stress systems.

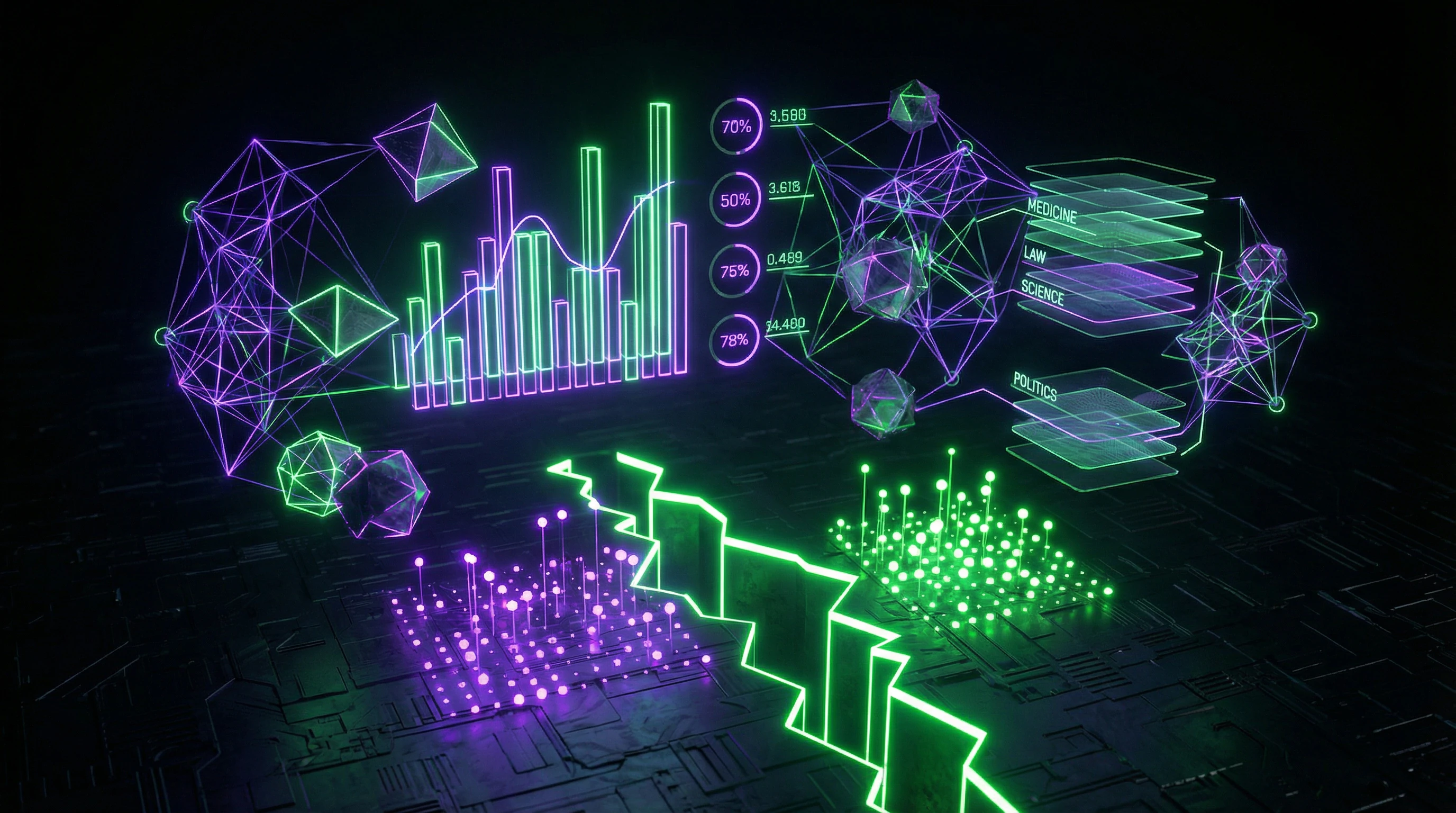

Quantitative Data: How Strong the Distortion Is

A meta-analysis of 91 studies (over 8,000 participants) showed that people rate confirming information as 40–60% more convincing than contradictory information, even when the quality of evidence is identical. On politicized issues (abortion, climate, vaccination), the gap reaches 70–80%.

Particularly alarming data comes from medicine: physicians who have formed an initial diagnosis ignore up to 30% of contradictory symptoms and overweight confirming ones by 50%. This leads to diagnostic errors in 15–20% of cases (S001).

| Domain | Distortion Effect | Consequences |
|---|---|---|
| Medical Diagnosis | Ignoring 30% of contradictory symptoms | 15–20% diagnostic errors |
| Scientific Publications | Citing confirming studies 2–3 times more often | Systematic distortion of evidence base |
| Legal Investigations | Ignoring contradictory evidence in 60–70% of exonerated cases | Wrongful convictions |
| Political Beliefs | 70–80% gap in evidence evaluation | Polarization and groupthink |

Confirmation Bias in Scientific Research

Paradoxically, even professional scientists are subject to this distortion. An analysis of more than 1,000 scientific articles showed that researchers cite studies confirming their hypotheses 2–3 times more often than contradictory ones, even when the contradictory studies have higher impact factors and methodological quality (S005).

The phenomenon of "publication bias" is a direct consequence of confirmation bias: journals more readily publish studies with "positive" results (confirming the hypothesis) than with "negative" ones (refuting it). By some estimates, up to 50% of studies with "negative" results are never published (S002).
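A small simulation can illustrate how selective publication distorts the visible evidence. This is a sketch under loudly simplified assumptions that are not from the source: the true effect is zero, each study's estimate is drawn from a standard normal distribution, a "positive" result is simply an estimate above zero, and half of the negative results go unpublished.

```python
import random
import statistics


def simulate_publication_bias(n_studies=10_000, p_publish_negative=0.5, seed=0):
    """Return (mean of all estimates, mean of published estimates).

    Hypothetical setup: true effect is 0, estimates ~ Normal(0, 1),
    and "positive" means an estimate above zero.
    """
    rng = random.Random(seed)
    all_estimates = []
    published = []
    for _ in range(n_studies):
        estimate = rng.gauss(0.0, 1.0)
        all_estimates.append(estimate)
        # Positive results are always published; negative ones only sometimes.
        if estimate > 0 or rng.random() < p_publish_negative:
            published.append(estimate)
    return statistics.mean(all_estimates), statistics.mean(published)


mean_all, mean_published = simulate_publication_bias()
print(f"mean of all studies:       {mean_all:+.3f}")
print(f"mean of published studies: {mean_published:+.3f}")
```

Under these assumptions the full set of studies averages close to zero, while the published subset shows a clearly positive average effect that does not exist. A reader who sees only the journals inherits the distortion without ever filtering anything personally.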

- Predictive Coding: The brain constantly generates predictions about incoming information. Confirming information produces low "prediction error," which is perceived as a positive signal. Contradictory information requires energy-intensive model updating.

- Learning Asymmetry: Dopamine neurons respond more strongly to confirmation of expectations than to their refutation. Confirmed beliefs strengthen faster than refuted ones weaken.

- Motivated Reasoning: Brain areas associated with emotions and identity (amygdala, medial prefrontal cortex) modulate logical analysis. When a belief is tied to identity, emotional centers literally shut down critical analysis of contradictory data.

Distortion in the Legal System

Research shows that investigators who form a theory of the crime in the early stages then interpret all evidence through the lens of that theory. In experiments, experienced detectives evaluated the same evidence as "compelling proof of guilt" when it aligned with the theory, and as "insufficient" or "questionable" when it contradicted it.

An analysis of cases in which convicted individuals were later exonerated by DNA evidence showed that in 60–70% of cases, evidence pointing to innocence was available but was dismissed or reinterpreted by investigators (S004).

Confirmation bias in the legal system is not merely a cognitive glitch. It is a systematic mechanism that transforms a preliminary hypothesis into a self-fulfilling prophecy, where contradictory evidence does not refute the theory but is reinterpreted to support it.

Neurobiological Mechanisms: Why the Brain Works This Way

Modern neuroscience has identified several key mechanisms underlying confirmation bias (S008). The first is predictive coding: the brain constantly generates predictions about incoming information and compares them with actual data.

The second mechanism is learning asymmetry: dopamine neurons respond more strongly to confirmation of expectations than to their refutation. This creates positive feedback: confirmed beliefs strengthen faster than refuted ones weaken.

The third mechanism is motivated reasoning: brain areas associated with emotions and identity (amygdala, medial prefrontal cortex) modulate the operation of logical analysis areas (dorsolateral prefrontal cortex). When a belief is tied to identity, emotional centers literally shut down critical analysis of contradictory data.

- Confirming information activates reward centers (ventral striatum)

- Contradictory information activates stress and conflict systems

- The brain minimizes energy expenditure, preferring confirmation over refutation

- Identity amplifies the effect: beliefs tied to "self" are defended more aggressively
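The learning asymmetry described above (confirmed beliefs strengthen faster than refuted ones weaken) can be sketched as a simple delta-rule update with two learning rates. This is a toy model, not a claim about how dopamine neurons compute: the learning rates, the starting belief, and the function names are all hypothetical.

```python
def update_belief(belief, outcome, lr_confirm, lr_disconfirm):
    """One delta-rule step with separate learning rates.

    belief  -- current confidence in a claim, between 0 and 1
    outcome -- 1.0 if new evidence supports the claim, 0.0 if it contradicts it
    """
    # Evidence "confirms" when it points the same way the belief already leans.
    confirms = (outcome >= 0.5) == (belief >= 0.5)
    learning_rate = lr_confirm if confirms else lr_disconfirm
    prediction_error = outcome - belief
    return belief + learning_rate * prediction_error


def run(evidence, belief, lr_confirm, lr_disconfirm):
    """Apply the update to each observation in order and return the final belief."""
    for outcome in evidence:
        belief = update_belief(belief, outcome, lr_confirm, lr_disconfirm)
    return belief


# Perfectly balanced evidence: 50 supporting and 50 contradicting observations.
balanced_evidence = [1.0, 0.0] * 50

symmetric = run(balanced_evidence, belief=0.6, lr_confirm=0.2, lr_disconfirm=0.2)
asymmetric = run(balanced_evidence, belief=0.6, lr_confirm=0.3, lr_disconfirm=0.1)
print(f"symmetric learner:  {symmetric:.2f}")
print(f"asymmetric learner: {asymmetric:.2f}")
```

With equal learning rates the belief hovers around the middle, as balanced evidence warrants. With the asymmetric rates assumed here, the same evidence leaves the learner more convinced than at the start, which is the positive feedback loop the text describes.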

Causal Anatomy: How Confirmation Bias Destroys Critical Thinking — A Step-by-Step Mechanism

Understanding that confirmation bias exists is insufficient for protection against it. It's necessary to dissect the precise mechanism of how a belief transforms into a cognitive trap.

🔁 Stage One: Formation of Initial Belief

A belief can form for various reasons: personal experience, authoritative source, social environment, emotional resonance. At this stage, the belief is still weak and flexible.

Beliefs formed through emotional experience (especially negative) solidify faster and stronger than beliefs based on rational analysis (S001). This explains why conspiracy theories based on fear are so persistent.

🧩 Stage Two: Activation of Selective Attention

After a belief forms, the brain automatically begins filtering incoming information at the level of the reticular activating system — the structure that determines which information reaches consciousness. Confirming signals receive priority, contradicting ones are suppressed.

People literally don't see contradicting information, even if it's in the center of their field of vision. Their gaze slides over it without fixating. This isn't conscious ignoring — it's automatic filtering at the perception level.

⚙️ Stage Three: Asymmetric Information Processing

Information that passes the attention filter is processed asymmetrically. Confirming data is accepted "by default" — it requires no additional verification. Contradicting data undergoes hypercritical analysis: methodological errors are sought, alternative interpretations explored, reasons to doubt the source identified.

People require 5–10 times more rigorous proof for information contradicting their beliefs (S008). This creates a double standard: weak evidence suffices for confirmation, while irrefutable proof is required for refutation.
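One way to picture this double standard is as a Bayesian update in which contrary evidence is discounted. The sketch below is an illustrative model rather than a method from the cited research: it assumes the person already believes the claim, treats any likelihood ratio below 1 as "contradicting," and uses a hypothetical discount factor of 5, taken from the lower end of the range quoted above.

```python
import math


def posterior(prior, likelihood_ratios, contradiction_discount=1.0):
    """Update P(claim) in log-odds space, optionally discounting contrary evidence.

    likelihood_ratios      -- one ratio per piece of evidence; >1 supports the claim
    contradiction_discount -- hypothetical factor by which contrary evidence
                              (ratio < 1) is down-weighted; 1.0 means no bias
    """
    log_odds = math.log(prior / (1 - prior))
    for ratio in likelihood_ratios:
        weight = 1.0 if ratio >= 1 else 1.0 / contradiction_discount
        log_odds += weight * math.log(ratio)
    return 1 / (1 + math.exp(-log_odds))


# Ten pieces of evidence for the claim and ten equally strong pieces against it.
evidence = [2.0] * 10 + [0.5] * 10

unbiased = posterior(prior=0.7, likelihood_ratios=evidence)
biased = posterior(prior=0.7, likelihood_ratios=evidence, contradiction_discount=5.0)
print(f"unbiased posterior: {unbiased:.3f}")
print(f"biased posterior:   {biased:.3f}")
```

For an unbiased reasoner the balanced evidence cancels out and the belief stays at its prior of 0.7. With contrary evidence discounted fivefold, the same evidence pushes the belief to near certainty.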

🧬 Stage Four: Rationalization and Reinterpretation

When contradicting data is too obvious to ignore, the rationalization mechanism activates. The brain generates alternative explanations: "This is an exception to the rule," "The data is manipulated," "There are hidden factors."

During rationalization, the left prefrontal cortex (the area associated with narrative generation) activates, not the areas responsible for logical analysis. The brain literally "invents stories" to protect the belief.

| Information Type | Processing | Result |
|---|---|---|
| Confirming | Accepted by default | Strengthens belief |
| Contradicting | Hypercritical analysis | Rationalized or rejected |
| Neutral | Interpreted in favor of belief | Perceived as confirmation |

🔎 Stage Five: Belief Reinforcement Through Confirmation

Each time a person finds confirming information (even if it's weak or questionable), the belief strengthens through the mechanism of long-term potentiation — the strengthening of synaptic connections between neurons. The more frequently a belief is "confirmed," the more strongly it becomes entrenched.

Paradox: even refuting information can strengthen a belief if a person successfully rationalizes it. The process of defending a belief itself reinforces it — this is called the backfire effect.

🛡️ Stage Six: Social Reinforcement

Beliefs shared by a social group receive additional reinforcement. Group identity creates a powerful incentive to defend shared beliefs — their refutation is perceived as a threat to group belonging (S007).

People are willing to ignore obvious facts if acknowledging them means conflict with the group. Groupthink is amplified by algorithmic filtering in social networks: a person sees predominantly content confirming their group's beliefs, creating a closed loop.

- Critical Thinking at Stages 1–2: Still possible, because the belief is flexible and attention filtering is incomplete. Requires a conscious pause before accepting information.

- Critical Thinking at Stages 3–4: Difficult, because the double standard of evidence is already active and rationalization is automatic. Requires an external source of contradicting information.

- Critical Thinking at Stages 5–6: Nearly impossible, because the belief is neurobiologically entrenched and social identity is attached. Refutation is perceived as a personal threat.

Understanding this sequence explains why confirmation bias and echo chambers are so difficult to overcome. Each stage reinforces the previous one, creating a self-sustaining system. Intervention at early stages requires less effort than attempting to change a belief that has passed through all six stages.

Conflicting Data and Zones of Uncertainty: Where Researchers Disagree

Despite extensive evidence, the study of confirmation bias contains controversial questions where data contradict each other or are interpreted differently.

🧩 Dispute One: Universality of the Phenomenon

One group of researchers insists: confirmation bias is a universal property of human cognition, manifesting in all people across all contexts. Another points to significant individual variability: some people demonstrate minimal bias, especially when they lack strong prior beliefs.

Cross-cultural studies yield contradictory results. In some cultures (particularly East Asian), confirmation bias is less pronounced—possibly due to cultural differences in thinking style (holistic vs. analytical). But other studies find no significant cultural differences (S005).

| Position | Argument | Problem |
|---|---|---|
| Universality | Phenomenon detected in all studied populations | Research methodology may be biased; cultural differences in defining "belief" |
| Variability | Effect strength depends on context and personal characteristics | Difficult to isolate which factors actually modulate the effect |

🧩 Dispute Two: Can It Be Weakened?

Debiasing research shows modest results. Some interventions work in laboratory conditions but don't transfer to real-world decisions (S002).

Critical question: why does critical thinking training often fail? Possible answers diverge. Some researchers suggest confirmation bias is too deeply embedded in brain architecture. Others point to insufficient motivation: people don't apply tools when the cost of error seems low.

- Laboratory Effect: Interventions work in controlled conditions when a person is focused and motivated. In real life, attention is scattered, stress is high, and motivation for objectivity competes with other goals.

- Awareness Paradox: People who know about confirmation bias often become more confident in their judgments, not less. Knowledge of the trap can strengthen the illusion of control.

- Context Dependency: Debiasing effectiveness varies by domain (medicine, law, science, personal decisions). There's no universal recipe.

🧩 Dispute Three: Is It a Research Process Artifact?

Some critics suggest that confirmation bias itself may be an artifact of how psychologists conduct experiments (S001). If a researcher expects to see confirmation bias, they may unconsciously structure the task so it manifests.

This creates a closed loop: researchers look for confirmation bias, find it, publish results, and the next generation of researchers searches for it even harder. The question remains open: how large is confirmation bias itself in the science studying it (S008)?

Zone of uncertainty: we know people seek confirmation of their beliefs. But we don't know how universal this is, how changeable it is, and how much the research on this phenomenon is itself distorted by the same phenomenon.

These conflicts don't mean confirmation bias is a myth. They mean the phenomenon is more complex than a simple formula and requires a more cautious approach to data interpretation. Critical thinking begins with acknowledging that even in the science of cognitive errors, we're not immune to them.