What Are Cognitive Biases and Heuristics — and Why They're Confused with Thinking Errors

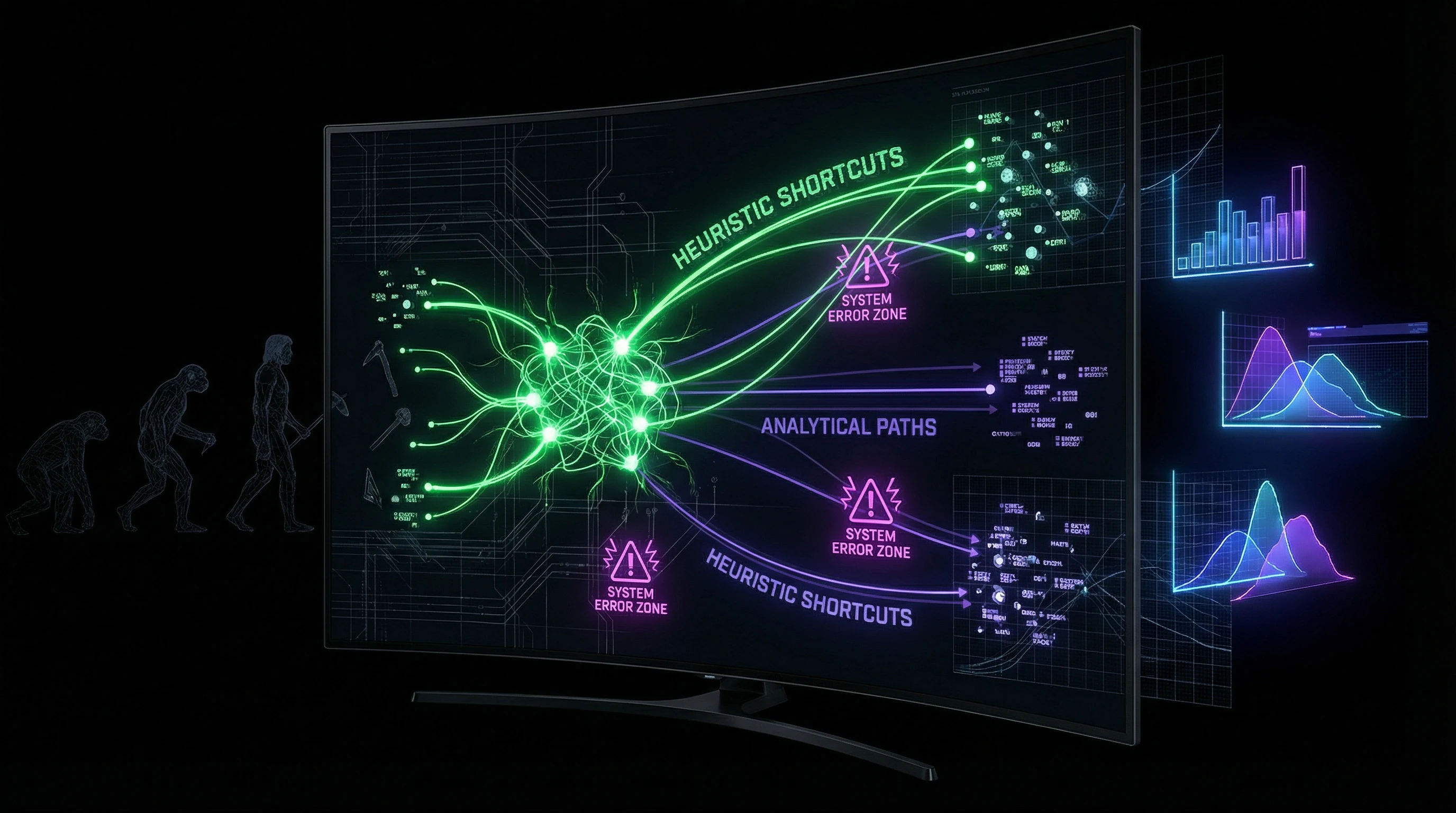

Heuristics are mental shortcuts, simplified decision-making rules that allow rapid information processing under conditions of limited time and resources (S001). Cognitive biases are systematic deviations from rational judgment that arise as side effects of applying heuristics or from the architecture of human thinking itself (S003).

The two are often confused because heuristics do generate biases. That does not make heuristics errors; it means they work in some contexts and fail in others. More details in the section Debunking and Prebunking.

Heuristics as Adaptive Tools

Heuristics arose as evolutionary adaptations for solving recurring tasks under uncertainty. When our ancestors heard rustling in the bushes, the "better safe than sorry" heuristic led them to assume danger, even when the probability of a predator being there was low.

The cost of a false alarm (energy spent fleeing) was far lower than the cost of a missed threat (death). This asymmetry of risks shaped the basic architecture of human thinking, which is oriented toward fast, "good enough" decisions rather than slow, energy-intensive analysis of all available data (S001).

Cognitive Biases as Systemic Deviations

Cognitive biases manifest as predictable patterns of deviation from logical or statistical norms. Confirmation bias causes people to seek, interpret, and remember information in ways that confirm their existing beliefs (S003).

- Availability effect: people overestimate the probability of events that are easy to recall. After news of a plane crash, people overestimate the risks of flying, even though statistically aviation remains the safest form of transportation (S001). The bias operates regardless of what the actual data show.

When Heuristics Become Traps

Heuristics generate cognitive biases when applied in contexts for which they weren't optimized. The representativeness heuristic works well when quick categorization of objects by external features is needed, but leads to errors when base rates are ignored.

| Context | Heuristic Works | Heuristic Fails |
|---|---|---|
| Rapid categorization (friend or foe) | Yes (adaptive) | No |
| Statistical tasks (probabilities, base rates) | No | Yes (ignores data) |
| Delayed consequences (investments, health) | No | Yes (overweights near events) |

A classic example: when someone is described as "shy, methodical, orderly," most people assume the person is a librarian rather than a farmer, even though farmers vastly outnumber librarians in the population (S003). The description fits the stereotype, so the base rate is ignored. More broadly, the modern world, with its statistical tasks, abstract risks, and delayed consequences, differs radically from the environment to which human heuristics were adapted.
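The base-rate logic behind the librarian example can be made concrete with Bayes' rule in odds form: posterior odds equal prior odds times the likelihood ratio. This is a minimal sketch under illustrative assumptions: the 20-to-1 ratio of farmers to librarians and the assumption that the description is four times more likely for a librarian are hypothetical numbers, not data from S003.

```python
from fractions import Fraction


def posterior_probability(prior_odds: Fraction, likelihood_ratio: Fraction) -> Fraction:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio.

    Returns the posterior probability of the hypothesis.
    """
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1 + posterior_odds)


# Hypothetical assumptions for illustration:
# - 20 farmers for every librarian, so the prior odds of "librarian" are 1:20
# - "shy, methodical, orderly" is 4x more likely to describe a librarian than a farmer
prior_odds_librarian = Fraction(1, 20)
likelihood_ratio = Fraction(4)

p = posterior_probability(prior_odds_librarian, likelihood_ratio)
print(f"P(librarian | description) = {p} ~ {float(p):.2f}")  # prints: ... = 1/6 ~ 0.17
```

Even with a description that favors the stereotype four to one, the person is still five times more likely to be a farmer under these assumptions. Dropping the 1:20 prior from the calculation is exactly the base-rate neglect described above.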

The Strongest Arguments for Heuristics: Why "Fast and Dirty" Often Beats "Slow and Precise"

The traditional view of cognitive biases as thinking defects that must be eliminated has dominated cognitive psychology since the 1970s. However, accumulated evidence shows this model is oversimplified and in some cases incorrect. Learn more in the Logic and Probability section.

Heuristics don't just "work well enough"—under certain conditions they outperform complex analytical methods in accuracy, speed, and resistance to data noise.

⚙️ Efficiency Under Resource and Time Constraints

The human brain consumes about 20% of the body's energy while accounting for only about 2% of its weight. Fully rational analysis of every decision would be prohibitively expensive in both energy and time.

Heuristics solve this problem through radical simplification: instead of processing all available information, they focus on a few key signals (S001). In experiments, the "take-the-best" heuristic predicts about as accurately as multiple regression models while using only a fraction of the information (S003).

- In real-world conditions, decisions often have to be made within seconds or minutes

- In such cases, heuristics provide the only viable path to action

- Complete analysis is often physiologically impossible
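The take-the-best heuristic mentioned above can be sketched as a lexicographic search over binary cues. This is a simplified illustration, assuming cues are already ranked by validity; the city example, the cue names, and their ordering are hypothetical.

```python
from typing import Optional, Sequence


def take_the_best(a: dict, b: dict, cues_by_validity: Sequence[str]) -> Optional[str]:
    """Decide which of two options scores higher on some criterion.

    Cues are checked one at a time, most valid first. The first cue on which
    the options differ decides, and all remaining cues are ignored.
    Returns "a", "b", or None if no cue discriminates (the heuristic would guess).
    """
    for cue in cues_by_validity:
        va, vb = a.get(cue, 0), b.get(cue, 0)
        if va != vb:  # first discriminating cue stops the search
            return "a" if va > vb else "b"
    return None


# Hypothetical example: which of two cities is larger?
# Binary cues (1 = present, 0 = absent), listed in an assumed order of validity.
cues = ["is_capital", "has_major_airport", "has_university", "has_pro_sports_team"]
city_x = {"is_capital": 0, "has_major_airport": 1, "has_university": 0, "has_pro_sports_team": 0}
city_y = {"is_capital": 0, "has_major_airport": 0, "has_university": 1, "has_pro_sports_team": 1}

print(take_the_best(city_x, city_y, cues))  # prints: a (decided by the airport cue alone)
```

A rule that simply counted positive cues would favor city_y (two cues versus one). Take-the-best never looks that far, which is where both its frugality and its speed come from.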

📊 Resistance to Overfitting and Data Noise

Paradoxically, the simplicity of heuristics makes them more resistant to overfitting compared to complex models. When data contains noise or samples are unrepresentative, complex algorithms begin "fitting" the model to random fluctuations, losing their ability to generalize.

Heuristics that ignore most information automatically ignore most noise as well. Research shows that in forecasting tasks with high uncertainty (such as predicting startup success or sports outcomes), simple heuristics often outperform complex statistical models (S003).
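A toy illustration of the overfitting argument, under assumed data: the true relationship is taken to be y = x, and four noisy training points are fitted two ways. The "complex" model is a cubic polynomial that passes exactly through every point; the "simple" rule draws a straight line through the first and last points only, ignoring the middle two. The data and both models are hypothetical and are not the methods used in the cited research.

```python
from typing import Callable, Sequence


def interpolating_polynomial(xs: Sequence[float], ys: Sequence[float]) -> Callable[[float], float]:
    """Lagrange polynomial through every training point: a 'complex' model
    with zero training error that also fits the noise."""
    def p(x: float) -> float:
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return p


def endpoint_line(xs: Sequence[float], ys: Sequence[float]) -> Callable[[float], float]:
    """A 'simple' rule: a straight line through the first and last points only."""
    slope = (ys[-1] - ys[0]) / (xs[-1] - xs[0])
    return lambda x: ys[0] + slope * (x - xs[0])


# Hypothetical training data: true relationship y = x, with +/-0.5 noise on the middle points.
train_x = [0.0, 1.0, 2.0, 3.0]
train_y = [0.0, 1.5, 1.5, 3.0]

complex_model = interpolating_polynomial(train_x, train_y)
simple_model = endpoint_line(train_x, train_y)

for x_new in (4.0, 5.0):  # new cases; the true value is y = x_new
    print(f"x={x_new}: complex={complex_model(x_new):.1f}, "
          f"simple={simple_model(x_new):.1f}, true={x_new:.1f}")
```

The cubic matches the training data perfectly yet predicts 9.0 and 22.5 where the true values are 4 and 5, while the line that ignores half the data predicts both correctly (by construction of this toy example). The noise the simple rule discards is precisely what the complex model learned.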

🧠 Cognitive Offloading and Preventing Decision Paralysis

An overload of information and options can lead to "choice paralysis": people either postpone decisions indefinitely or experience severe stress and dissatisfaction with the outcome.

Heuristics act as filters, reducing the choice space to a manageable size. The "satisficing" heuristic—choosing the first option that meets minimum criteria instead of searching for the optimal one—reduces cognitive load and increases subjective well-being without significant loss in decision quality (S001).

In today's information-overloaded world, this function of heuristics becomes critically important for mental health.
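The satisficing rule can be sketched as a search with a stopping rule: inspect options in the order they arrive and take the first one that meets the aspiration level. The apartment listings and the thresholds below are hypothetical.

```python
from typing import Callable, Iterable, Optional, TypeVar

T = TypeVar("T")


def satisfice(options: Iterable[T], good_enough: Callable[[T], bool]) -> Optional[T]:
    """Return the first option that meets the aspiration level, stopping the search there.

    Unlike maximizing, the remaining options are never examined.
    Returns None if nothing meets the criteria.
    """
    for option in options:
        if good_enough(option):
            return option
    return None


# Hypothetical apartment search: listings arrive in the order they are viewed.
listings = [
    {"name": "A", "rent": 1400, "commute_min": 50},
    {"name": "B", "rent": 1150, "commute_min": 30},
    {"name": "C", "rent": 1000, "commute_min": 20},  # better, but never inspected
]


def meets_minimum(listing: dict) -> bool:
    # Assumed aspiration levels: rent at most 1200, commute at most 35 minutes
    return listing["rent"] <= 1200 and listing["commute_min"] <= 35


choice = satisfice(listings, meets_minimum)
print(choice["name"])  # prints: B
```

Listing C is objectively better, but finding it would require examining every option. The satisficer trades that marginal gain for a shorter search and a smaller choice set.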

🔁 Social Coordination and Communication Efficiency

Heuristics serve as shared cognitive protocols, allowing people to coordinate actions without lengthy negotiations and explanations. When a group uses the same heuristics (such as "follow the majority" or "trust the expert"), collective decisions are made faster and social cohesion is strengthened.

This function is especially important in crises that demand immediate coordination with no time for detailed discussion (S003). Replacing heuristics entirely with rational analysis would dismantle this coordination infrastructure and make rapid collective action practically impossible.

💎 Ecological Rationality: Matching Tool to Environment

The concept of ecological rationality asserts that the effectiveness of a cognitive strategy is determined not by its conformity to abstract logical norms, but by its fit with the structure of the environment in which it's applied (S003).

The "imitate-the-successful" heuristic may seem irrational from a Bayesian belief-updating perspective, but in environments with high costs of individual trial-and-error learning, it enables rapid adaptation and knowledge transfer.

Criticism of heuristics as "irrational" often ignores the fact that they're optimized for real, not idealized, decision-making conditions.
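The imitate-the-successful heuristic can be sketched in a few lines: observe peers, find the one with the highest payoff, and copy that peer's strategy. The peers, strategies, and payoffs below are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Peer:
    name: str
    strategy: str
    payoff: float  # observed success, e.g. last season's harvest


def imitate_the_successful(peers: Sequence[Peer]) -> str:
    """Adopt the strategy of the most successful observable peer,
    skipping individual trial-and-error learning entirely."""
    best = max(peers, key=lambda p: p.payoff)
    return best.strategy


# Hypothetical village: farmers using different planting strategies
neighbors = [
    Peer("Ana", "plant_early", 8.0),
    Peer("Ben", "plant_late", 5.5),
    Peer("Chen", "plant_early_with_irrigation", 11.0),
]
print(imitate_the_successful(neighbors))  # prints: plant_early_with_irrigation
```

The rule never asks why Chen succeeded (skill, luck, better soil). That is the cost of skipping analysis, and it is why the heuristic's value depends on the environment: it pays off when observed success is a reasonably reliable signal of a good strategy and individual experimentation is expensive.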

The 2024–2025 Revolution: Data on Balancing Biases Instead of Eliminating Them

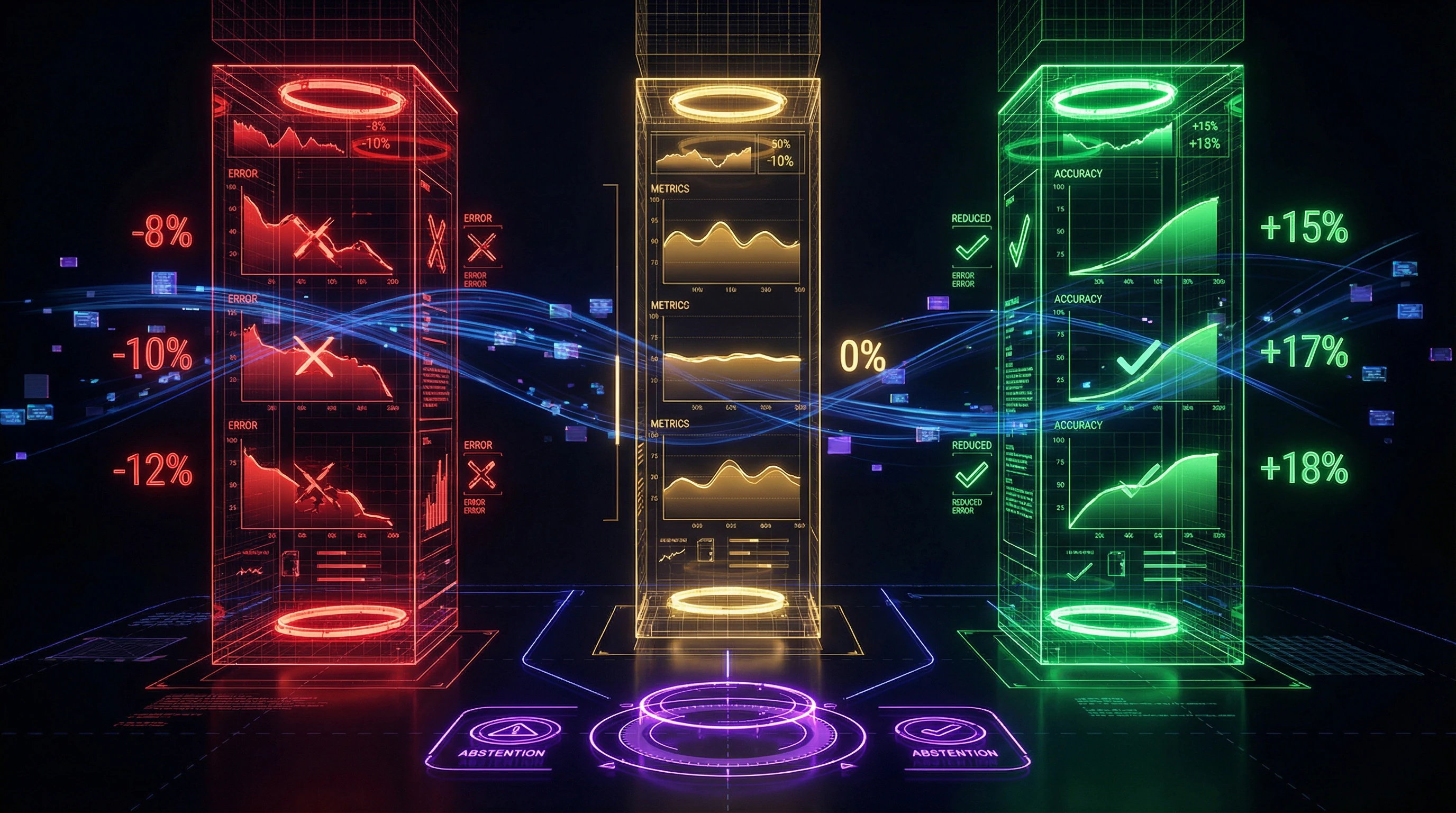

The study "Balancing Rigor and Utility: Mitigating Cognitive Biases in Large Language Models for Multiple-Choice Questions" (April 2025) challenges the standard approach: it finds that completely eliminating cognitive biases reduces effectiveness rather than improving it (S006). The authors built the BRU dataset and tested this hypothesis on large language models solving multiple-choice questions.

🔬 Methodology: Expert Annotation and Controlled Experiments

The BRU dataset was created in collaboration with cognitive psychology experts and includes tasks that activate specific biases: anchoring effect, framing, availability, and others (S006). Each task contains a correct answer, distractors, and metadata about which biases may be activated.

The researchers tested three strategies: complete suppression of heuristics through instructions, moderation (selective use), and an abstention option under high uncertainty. More details in the Sources and Evidence section.

📊 Results: Moderation Outperforms Elimination

| Strategy | Accuracy Change | Error Reduction | Resource Cost |
|---|---|---|---|
| Complete heuristic suppression | −8 to −12% | Low | High |
| Moderation (selective use) | +15 to +18% | −23% | Optimal |
| Abstention option under uncertainty | Stable | −19% | Minimal |

Models prohibited from using mental shortcuts consumed more resources and more often overfit to irrelevant details (S006). The moderation strategy, in which active biases are inspected and a deliberate decision is made about whether to follow them, increased accuracy by 15–18%.

🧾 Abstention: When "I Don't Know" Beats Guessing

The abstention option under high uncertainty reduced errors by 19% with only a small drop in the number of tasks solved (S006). This mirrors human expertise: experts are distinguished not only by accuracy but also by their ability to recognize the limits of their competence.

Forcing an answer when data are insufficient systematically produces errors that could be avoided by acknowledging uncertainty.
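The abstention strategy can be sketched as a confidence threshold: answer only when confidence clears it, otherwise say "I don't know." The threshold of 0.6 and the (confidence, correct) records below are hypothetical; they illustrate the trade-off and do not reproduce the BRU results.

```python
from typing import List, Tuple

# Each record: (confidence in an answer, whether that answer was correct).
# Hypothetical data for illustration only.
Record = Tuple[float, bool]


def evaluate(records: List[Record], threshold: float) -> dict:
    """Answer only when confidence >= threshold; abstain otherwise."""
    answered = [(c, ok) for c, ok in records if c >= threshold]
    errors = sum(1 for _, ok in answered if not ok)
    return {
        "answered": len(answered),
        "abstained": len(records) - len(answered),
        "errors": errors,
    }


records = [
    (0.95, True), (0.90, True), (0.85, True), (0.80, False),
    (0.75, True), (0.55, False), (0.50, True), (0.45, False),
    (0.40, False), (0.35, False),
]

always_answer = evaluate(records, threshold=0.0)
with_abstention = evaluate(records, threshold=0.6)
print(always_answer)    # {'answered': 10, 'abstained': 0, 'errors': 5}
print(with_abstention)  # {'answered': 5, 'abstained': 5, 'errors': 1}
```

In this toy data, errors fall from 5 to 1 while correct answers fall only from 5 to 4; the lost correct answer (confidence 0.50) is the price of refusing to guess. Where the threshold should sit depends on the relative cost of a wrong answer versus no answer.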

🧬 Mechanism: Metacognitive Monitoring

The authors attribute the effectiveness of moderation to metacognitive monitoring, that is, tracking one's own reasoning processes (S006). Instead of automatically following or completely suppressing heuristics, models (and humans) learn to ask:

- Which heuristic is currently active?

- Is it appropriate for this specific task?

- What signs indicate the heuristic might lead to error?

- Is there sufficient data for a reliable judgment?

This process requires additional resources, but significantly fewer than abandoning heuristics entirely, and it provides an optimal balance between speed and accuracy.
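The four questions above can be read as a small decision procedure. The sketch below is one hypothetical encoding: the `Situation` fields, the order of the checks, and the three possible outcomes are assumptions for illustration, not the protocol used in S006.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    # All fields are hypothetical inputs a decision-maker (or model) would have to estimate.
    active_heuristic: str      # Which heuristic is currently active?
    heuristic_fits_task: bool  # Is it appropriate for this specific task?
    warning_signs: int         # How many signs suggest it might lead to error here?
    enough_data: bool          # Is there sufficient data for a reliable judgment?


def metacognitive_check(s: Situation) -> str:
    """Apply the four questions and return a course of action."""
    if not s.enough_data:
        return "abstain"                       # acknowledge uncertainty instead of guessing
    if s.heuristic_fits_task and s.warning_signs == 0:
        return f"follow {s.active_heuristic}"  # fast path: the shortcut suits the task
    return "analyze deliberately"              # slow path: inspect before deciding


print(metacognitive_check(Situation("availability", True, 0, True)))         # follow availability
print(metacognitive_check(Situation("availability", False, 2, True)))        # analyze deliberately
print(metacognitive_check(Situation("representativeness", True, 0, False)))  # abstain
```

The design keeps the fast path cheap: when the heuristic fits and nothing looks off, the check costs little, and deliberate analysis is reserved for the cases that raise a flag.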

🔁 From LLMs to Human Thinking

LLMs exhibit biases structurally analogous to human ones because they are trained on human texts and inherit patterns of human thinking (S006). The balancing protocol (inspecting active heuristics, assessing whether they apply, and being willing to abstain under uncertainty) can therefore be adapted into a practical strategy for improving human decisions, without the unrealistic goal of eliminating bias completely.

This is particularly relevant in contexts where the availability heuristic distorts risk perception or where ignoring base rates leads to incorrect conclusions about probabilities.

Neurobiological Mechanisms: Why the Brain Chooses Speed Over Accuracy

Cognitive biases persist not because we're foolish, but because they're embedded in brain architecture at the level of neural networks and neurotransmitter systems. Heuristics aren't software bugs that can be fixed with an update. More details in the Scientific Method section.

🧬 Dual-Process Model of Thinking: System 1 and System 2

System 1 operates quickly, in parallel, automatically, and requires minimal energy. System 2 is slow, sequential, requires conscious attention, and is energetically expensive (S001).

Most decisions are made by System 1, because engaging System 2 for every task would quickly deplete cognitive resources. Cognitive biases are side effects of System 1 operation: the price of speed.

The brain doesn't make mistakes; it economizes. Heuristics aren't bugs but features shaped by evolution.

⚙️ The Role of Emotions in Forming Heuristic Judgments

Emotions serve as rapid evaluation signals, integrating complex information into simple tags of "good/bad," "safe/dangerous" (S002). Somatic markers—bodily sensations associated with choice options—direct attention and accelerate decisions, eliminating unacceptable options before conscious analysis begins.

The affect heuristic uses emotional response as a proxy for risk assessment: if something evokes positive emotions, we underestimate risks and overestimate benefits (S001). Evolutionarily, this is justified—emotions reflect accumulated experience and often contain information unavailable to conscious analysis.

| Mechanism | Function | Cost |
|---|---|---|
| Somatic Markers | Rapid option filtering | May eliminate useful alternatives |
| Affect Heuristic | Integration of complex information | Risk assessment bias |
| Parallel Processing | Simultaneous analysis of multiple signals | Superficial detail processing |

🔁 Neuroplasticity and Persistence of Cognitive Patterns

Repeated thinking patterns form stable neural pathways through long-term potentiation. The more often a heuristic fires, the stronger the synaptic connections that support it (S002).

This explains why biases are difficult to "unlearn": they're not conscious beliefs but deeply rooted neural habits. Correction requires not merely knowledge of the bias's existence, but repeated practice of alternative strategies in real decision-making contexts.

🧷 Dopaminergic System and Heuristic Reinforcement

The dopaminergic system reinforces heuristics through reward. When a heuristic leads to quick success, dopamine release strengthens the neural pathways associated with that strategy (S002).

The problem: the dopaminergic system responds to immediate results, not long-term consequences. A heuristic that provides a quick solution receives neurochemical reinforcement, while slow analysis leading to better results later may not receive sufficient reinforcement.
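One common way to formalize "responds to immediate results" is exponential discounting, borrowed here from reinforcement learning: a reward arriving after t steps is weighted by γ^t. The reward sizes, the 20-step delay, and γ = 0.9 are assumptions chosen for illustration; the sketch only shows how a larger but delayed payoff can yield a weaker learning signal than a small immediate one.

```python
def discounted_value(reward: float, delay_steps: int, gamma: float) -> float:
    """Present value of a reward received after `delay_steps`, discounted by gamma per step."""
    return reward * gamma ** delay_steps


GAMMA = 0.9  # assumed per-step discount factor

# Hypothetical payoffs: the heuristic pays 1 now; careful analysis pays 3, but 20 steps later.
heuristic = discounted_value(reward=1.0, delay_steps=0, gamma=GAMMA)
analysis = discounted_value(reward=3.0, delay_steps=20, gamma=GAMMA)

print(f"heuristic: {heuristic:.3f}, analysis: {analysis:.3f}")
# prints: heuristic: 1.000, analysis: 0.365
```

The delayed payoff is three times larger, yet its discounted value is roughly a third of the heuristic's. Under these assumptions, a learner driven by discounted reward keeps reinforcing the shortcut.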

A structural bias favoring heuristics is thus built into the brain's neurochemistry itself. This isn't a design flaw; it's a tradeoff between speed and accuracy, optimized for survival rather than for statistics.

Understanding these mechanisms explains why mental errors are so persistent and why simple awareness of them rarely leads to behavioral change. Neurobiology reveals: fighting heuristics means fighting brain architecture, not logic.

Conflicts in the Data and Zones of Uncertainty: Where Sources Diverge

The consensus that cognitive biases are not purely negative coexists with deep disagreements in the literature. The question is not whether heuristics are useful, but under what conditions they work—and when they systematically break down. More details in the section Statistics and Probability Theory.

🧩 Debates on Normative Standards of Rationality

The conflict begins with the definition of the word "rationality" itself. The traditional approach uses logic and probability theory as the benchmark: any deviation from Bayesian belief updating is considered an error (S003).

The alternative approach—ecological rationality—flips the logic: rationality is assessed not by abstract formal systems, but by how well a decision fits the structure of the environment and the agent's goals (S003). The same heuristic is an error in one paradigm, an adaptation in another.

The choice of normative standard determines the conclusion. This is not a scientific dispute—it's a choice of axioms.

🔬 Contradictions in Transferability of Results

Most heuristics research is conducted in laboratories with abstract tasks. A student solving a logic puzzle in silence is not the same as a physician making a decision under time pressure, emotional load, and social pressure (S003).

The BRU study used large language models for controlled analysis, but this raises a new question: how well do LLMs model human thinking once bodily sensations, emotions, and social context come into play (S006)?

- Laboratory effect: heuristics that work in clean conditions may fail in reality, where there are more variables than in any experiment.

- Model gap: data on LLMs don't guarantee that the human brain works the same way, especially when emotions and social cues are involved.

📊 Uncertainty in Long-Term Effects

Nearly all studies measure immediate outcomes: the correct answer to a task, diagnostic accuracy at that moment. But a heuristic can be optimal now and destructive a year later.

Example: the "follow the majority" heuristic provides quick social integration and conserves cognitive resources. But it can also trigger information cascades and collective errors such as groupthink (S003). The absence of longitudinal studies tracking consequences over months and years leaves a large zone of uncertainty.

- Short-term outcome: the heuristic provides a quick solution.

- Medium-term effect: the pattern repeats and becomes reinforced.

- Long-term outcome: accumulated errors or adaptive advantage—unknown.
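The information cascade behind the "follow the majority" example can be sketched with a simplified counting version of the classic cascade model. Treating every earlier public choice as one vote, and breaking ties with the agent's own signal, are simplifying assumptions; the signal sequence is hypothetical.

```python
from typing import List


def cascade(private_signals: List[int]) -> List[int]:
    """Simplified 'follow the majority' cascade.

    Each agent sees all earlier public choices (+1 adopt, -1 reject) plus its own
    private signal (+1 or -1), and picks the side with more support.
    Ties are broken by the agent's own signal.
    """
    choices: List[int] = []
    for signal in private_signals:
        support = sum(choices) + signal
        if support > 0:
            choices.append(1)
        elif support < 0:
            choices.append(-1)
        else:
            choices.append(signal)
    return choices


# Hypothetical run: the correct choice is "reject" (-1), and most private signals say so,
# but the first two agents happen to receive misleading signals.
signals = [1, 1, -1, -1, -1, -1, -1]
print(cascade(signals))  # prints: [1, 1, 1, 1, 1, 1, 1]
```

Five of seven agents privately received the correct signal, yet everyone adopts. After two early misleading choices, no single private signal can outweigh the visible majority, so later agents' own information never reaches the group. This is the short-term efficiency and the long-term risk of the heuristic in one run.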

Competing paradigms of rationality, different types of context, different time horizons: in each combination the answer differs. This is not a flaw in the science. It's a sign that the question is more complex than it seemed.

Cognitive Anatomy of Biases: Which Mental Traps Are Exploited Most Often

Understanding the specific mechanisms through which cognitive biases influence decisions allows for the development of targeted compensation strategies. Not all traps are equally dangerous — some trigger in narrow contexts, others permeate the entire spectrum of judgment. More details in the section Myths About Conscious AI.

Medicine, law, and engineering show where biases do the most damage (S001, S003, S004). This is no accident: in these fields, decisions are made under time pressure, with incomplete information, and at high stakes.

- Anchoring: the first number or fact blocks reassessment. A doctor hears a preliminary diagnosis and fits the symptoms to match it (S001).

- Availability heuristic: vivid examples seem more typical than they are. A plane crash is memorable; statistics are not.

- Base rate neglect: people forget background probabilities. A test with 99% accuracy can still yield about 90% false positives among its positive results if the disease is rare (S004).

- Confirmation bias: the brain seeks facts that confirm already-formed opinions and ignores contradictions.

- Dunning-Kruger effect: people with low competence in a domain overestimate their knowledge of it. This is dangerous in surgery and diagnostics (S003).
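The base-rate item can be checked with natural frequencies. This sketch reads "99% accuracy" as 99% sensitivity and 99% specificity and assumes a prevalence of 1 in 1,000; both are assumptions for illustration, not figures from S004.

```python
def false_positive_share(prevalence: float, sensitivity: float, specificity: float,
                         population: int = 100_000) -> float:
    """Fraction of positive test results that are false, computed with natural frequencies."""
    sick = population * prevalence
    healthy = population - sick
    true_positives = sick * sensitivity          # sick people correctly flagged
    false_positives = healthy * (1 - specificity)  # healthy people wrongly flagged
    return false_positives / (true_positives + false_positives)


share = false_positive_share(prevalence=0.001, sensitivity=0.99, specificity=0.99)
print(f"{share:.1%} of positive results are false")  # prints: 91.0% of positive results are false
```

Out of 100,000 people, 100 are sick and 99 of them test positive, but 999 of the 99,900 healthy people also test positive. Raise the prevalence to 10% and the same test's false-positive share drops to about 8%: the test did not change, only the base rate.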

Each trap has a trigger: time, emotion, social pressure, data incompleteness. Recognizing the trigger means intercepting the bias before it influences choice.

Groupthink and false dichotomy are especially dangerous in organizations and politics, where decisions affect thousands of people (S005).

Cults and pseudomedicine exploit precisely these mechanisms: anchoring on leader charisma, availability of emotional healing stories, confirmation bias in interpreting results. Control begins with a cognitive trap, not with violence.

The strategy is not to "avoid" biases — that's impossible. The strategy is to know where they trigger and build in checks: second opinions in medicine, statistical literacy in data analysis, thought-debugging protocols in team decisions.