Base Rate Neglect: The cognitive blindness that turns precise instruments into error generators

Base rate neglect is a systematic cognitive bias in which people ignore the statistical prevalence of an event in the general population, focusing instead on specific information about a particular case (S001).

The Encyclopedia of Social Psychology defines this as an error in probabilistic judgment: individuals disregard information about event frequency in the population and rely on vivid but statistically less significant details.

The structure of error: Three components that create the illusion of precision

The classic structure includes three elements:

- Base rate (prior probability): the prevalence of an event in the population. For example, 0.1% of the population has disease X.

- Test sensitivity (true positive rate): the test correctly identifies 99% of sick individuals.

- Test specificity (true negative rate): the test correctly identifies 99% of healthy individuals.

The human mind intuitively focuses on sensitivity and specificity, perceiving "99% accuracy" as a guarantee, while completely ignoring the rarity of the disease itself.

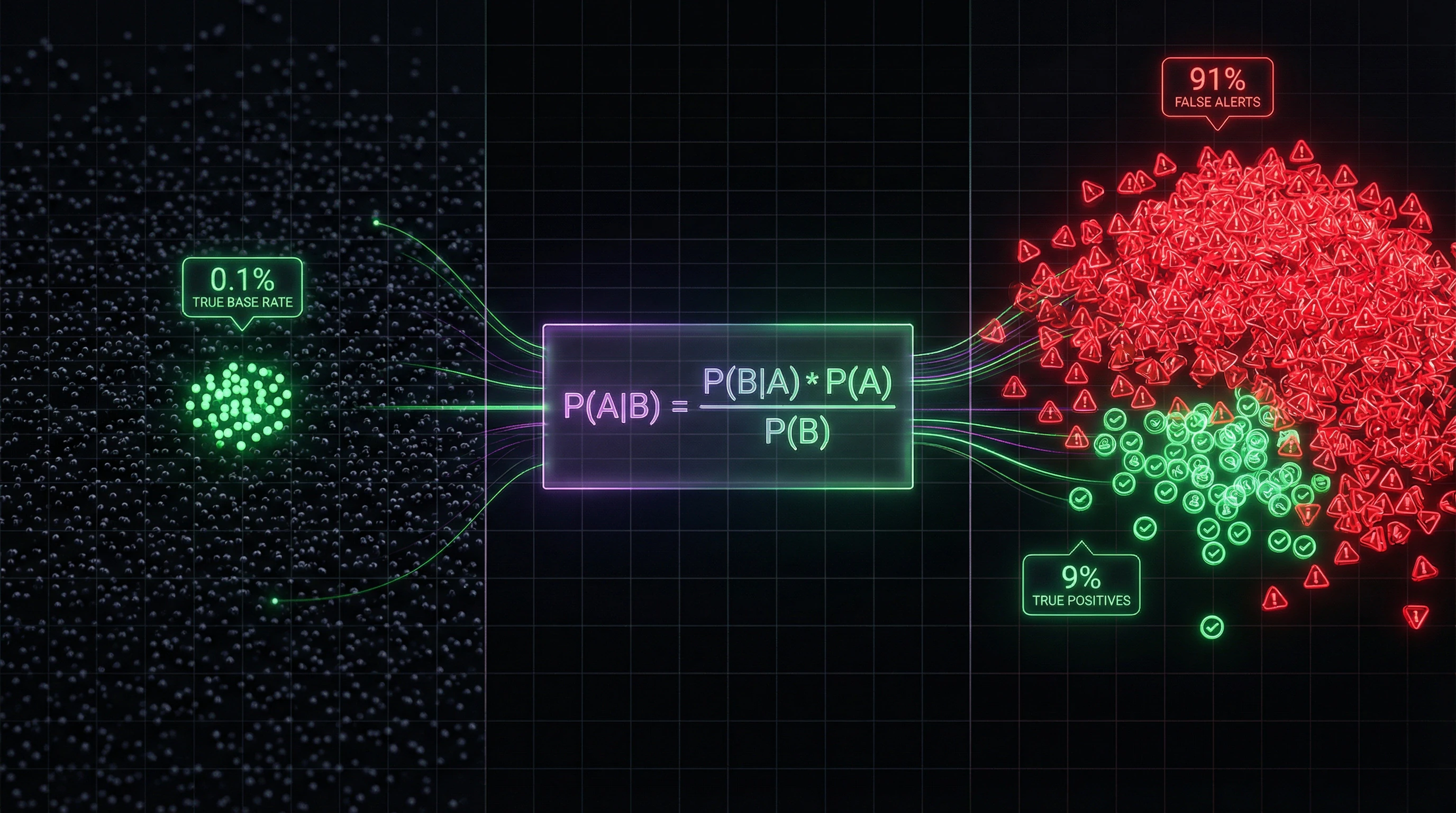

Why 99% accuracy can mean 90% false alarms

Concrete example: a disease occurs in 1 person out of 1,000 (base rate 0.1%). The test has 99% sensitivity and 99% specificity. We test 100,000 people.

| Group | People in group | Positive test results | Number of positives |
|---|---|---|---|
| Sick (0.1%) | 100 | True positives (99%) | 99 |
| Healthy (99.9%) | 99,900 | False positives (1%) | 999 |
| Total | 100,000 | All positive results | 1,098 |

Probability that a person with a positive result is actually sick: 99 / 1,098 ≈ 9%. Probability of false alarm: 999 / 1,098 ≈ 91%.
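The same arithmetic can be written as a direct application of Bayes' theorem. The sketch below is a minimal illustration in Python; the function name `positive_predictive_value` is chosen here for illustration and does not come from the sources.

```python
def positive_predictive_value(base_rate, sensitivity, specificity):
    """P(sick | positive test) from the three quantities defined above."""
    true_positive = base_rate * sensitivity
    false_positive = (1.0 - base_rate) * (1.0 - specificity)
    return true_positive / (true_positive + false_positive)

# Numbers from the example: 1 in 1,000 sick, 99% sensitivity, 99% specificity.
ppv = positive_predictive_value(base_rate=0.001, sensitivity=0.99, specificity=0.99)

print(f"P(sick | positive)        = {ppv:.3f}")      # 0.090
print(f"P(false alarm | positive) = {1 - ppv:.3f}")  # 0.910
```

Note that the population size drops out of the formula: the 9% figure holds whether 1,000 or 100,000 people are tested.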

Boundaries of the phenomenon: From individual error to systemic problem

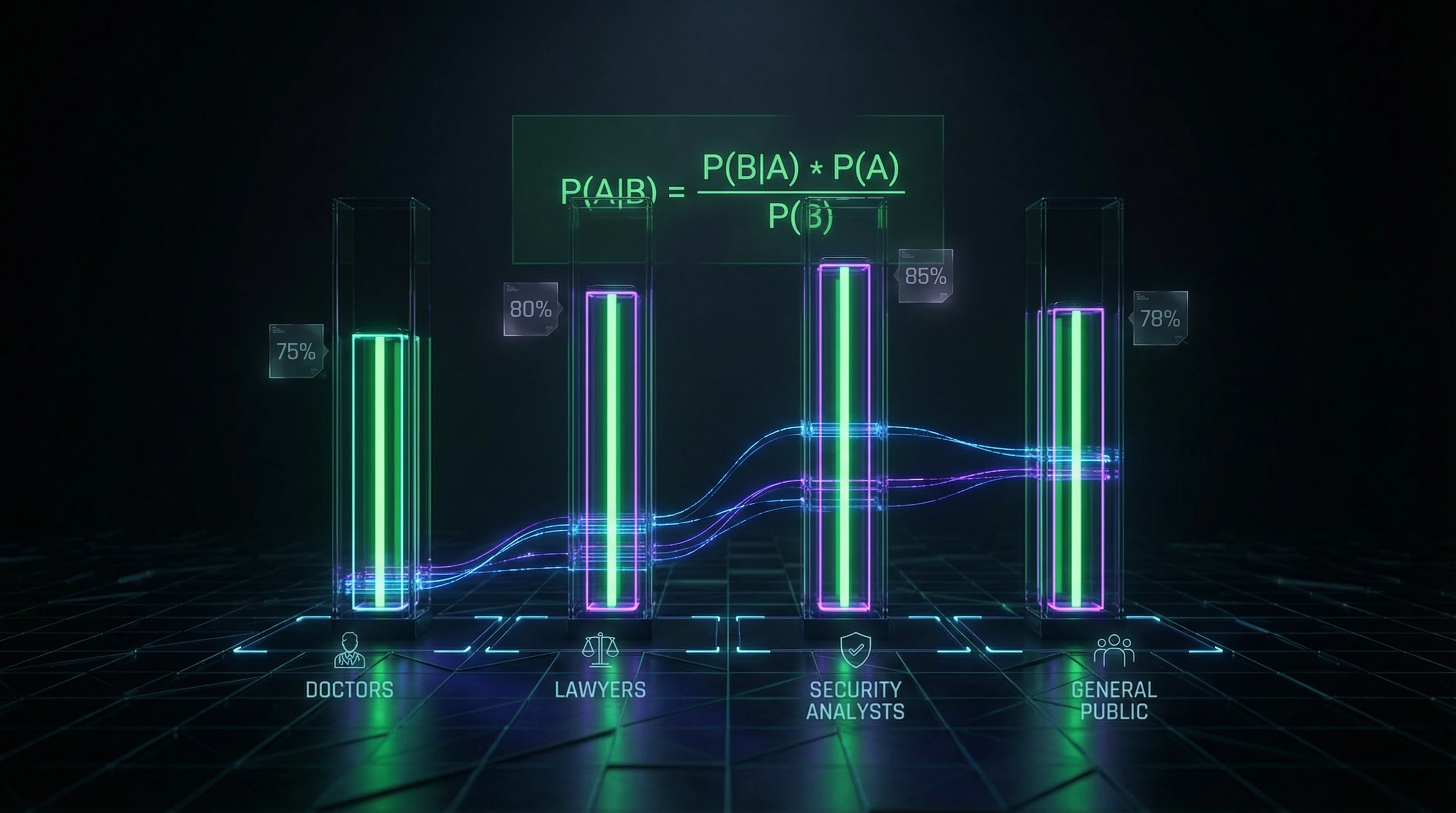

Base rate neglect isn't just an individual judgment error. Professionals fall victim to it as well: doctors misinterpret screening test results, judges overestimate the probability of guilt based on expert testimony, and cybersecurity specialists generate avalanches of false alarms (S001).

The phenomenon manifests in any situation requiring integration of base statistical information with specific case data — from risk assessment to medical diagnosis and security threat evaluation.

Seven Arguments That Make Base Rate Neglect So Convincing and Dangerous

Base rate neglect is not a result of stupidity. It's a consequence of deep features of human cognition that work efficiently in most situations, but create systematic distortions in the context of probabilistic judgments.

⚠️ Argument 1: Concrete Information Is Psychologically More Vivid Than Abstract Statistics

The brain evolved to process concrete, tangible events, not abstract distributions. Information that "the test showed a positive result specifically for you" is perceived as more relevant than abstract information that "in the population this disease is rare" (S001).

The psychological vividness of the specific case suppresses the statistical context—this is not a logical error, but a feature of attention architecture.

⚠️ Argument 2: Representativeness Dominates Over Probability in Intuitive Judgments

People assess the probability of an event not by its statistical frequency, but by how "representative" it is—how well it matches a prototype or stereotype (S002). If symptoms or test results "look like" a disease, the brain automatically increases the probability estimate, ignoring the base rate.

The representativeness heuristic is a fast but systematically biased way of judging that works against you in rare events.

⚠️ Argument 3: Professional Expertise Creates the Illusion That Base Rates Are "Already Accounted For"

Physicians, lawyers, and security analysts often believe that their experience automatically compensates for the need to explicitly account for base rates. The expert thinks: "I know this is a rare disease, but the symptoms are so specific that the base rate doesn't apply."

This is an illusion—the mathematics of Bayes' theorem doesn't depend on expert opinion about the "specificity" of the case (S004).

⚠️ Argument 4: Training Systems Focus on Test Accuracy, Not on Interpreting Results

Medical education teaches how to evaluate the sensitivity and specificity of diagnostic tests, but rarely trains the skill of integrating these metrics with the base rate of disease in a specific population. Cybersecurity specialists are trained to configure intrusion detection systems for maximum sensitivity, but not to keep false positives manageable given the actual frequency of attacks.

Educational systems reproduce the error at an institutional level.

⚠️ Argument 5: Asymmetry of Consequences Creates Motivation to Ignore Base Rates

In medicine, missing a rare but dangerous disease is perceived as a more serious error than causing panic with a false positive diagnosis. In cybersecurity, missing a real attack is treated as far worse than generating thousands of false alarms.

| Domain | False Negative | False Positive | System Pressure |
|---|---|---|---|
| Medicine | Patient won't receive treatment for rare disease | Patient will undergo unnecessary examination | Increase sensitivity |
| Cybersecurity | Real attack will go undetected | False alarm will distract analysts | Increase sensitivity |

This asymmetry creates institutional pressure toward "playing it safe"—increasing system sensitivity without accounting for the fact that with low base rates, this leads to an avalanche of false positives.
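To see why "playing it safe" backfires at low base rates, consider a hypothetical retuning of the 1-in-1,000 screening example: sensitivity rises from 99% to 99.9%, but specificity falls from 99% to 95%. The retuned figures are invented for illustration and are not taken from the sources.

```python
def screening_counts(population, base_rate, sensitivity, specificity):
    """Expected (true positives, false positives) in a screened population."""
    sick = population * base_rate
    healthy = population - sick
    return sick * sensitivity, healthy * (1.0 - specificity)

# Baseline from the 1-in-1,000 example versus a hypothetical "safer" tuning.
baseline = screening_counts(100_000, 0.001, 0.99, 0.99)
retuned = screening_counts(100_000, 0.001, 0.999, 0.95)  # assumed values

extra_cases_caught = retuned[0] - baseline[0]
extra_false_alarms = retuned[1] - baseline[1]

print(f"extra true positives:  {extra_cases_caught:.1f}")  # 0.9
print(f"extra false positives: {extra_false_alarms:.0f}")  # 3996
```

Under these assumed numbers, catching roughly one additional case per 100,000 people screened costs about 4,000 additional false positives.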

⚠️ Argument 6: Cascading Effects in Decision Chains Amplify the Original Error

A base rate error at one stage becomes input data for the next stage. A physician who receives a false positive screening test result orders a more invasive examination, which itself carries risks and may produce new false positives.

A security analyst responding to a false alarm from an intrusion detection system may interpret normal activity as suspicious, creating a cascade of erroneous conclusions.
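The cascade can be made concrete with a small sketch. It assumes, purely for illustration, that a follow-up test with the same 99% sensitivity and specificity is statistically independent of the first and comes back negative. The correct prior for the second stage is the roughly 9% posterior left by the first positive result, not the test's 99% "accuracy".

```python
def update(prior, p_result_if_sick, p_result_if_healthy):
    """One Bayesian update: P(sick | result) from P(sick) and the two likelihoods."""
    numerator = prior * p_result_if_sick
    return numerator / (numerator + (1.0 - prior) * p_result_if_healthy)

SENSITIVITY = 0.99
SPECIFICITY = 0.99

# Stage 1: a positive screening result at a base rate of 1 in 1,000.
after_positive = update(0.001, SENSITIVITY, 1.0 - SPECIFICITY)

# Stage 2: a negative follow-up test (assumed independent of the first).
p_neg_if_sick = 1.0 - SENSITIVITY
p_neg_if_healthy = SPECIFICITY
correct_chain = update(after_positive, p_neg_if_sick, p_neg_if_healthy)

# The same negative result, but starting from the mistaken belief that
# a positive screen already means a 99% chance of disease.
mistaken_chain = update(0.99, p_neg_if_sick, p_neg_if_healthy)

print(f"after positive screen:        {after_positive:.3f}")  # 0.090
print(f"correct, after negative test: {correct_chain:.3f}")   # 0.001
print(f"mistaken prior, same test:    {mistaken_chain:.3f}")  # 0.500
```

With the correct prior, the negative follow-up returns the estimate to the base rate. With the mistaken prior, the practitioner is left at even odds and is likely to order yet another examination.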

⚠️ Argument 7: Lack of Feedback Makes the Error Invisible to Practitioners

A physician who referred a patient with a false positive result for additional examination rarely learns the final diagnosis—the patient goes to another specialist. A security analyst doesn't receive systematic feedback about how many of their alarms were false.

- Without explicit feedback, professionals cannot calibrate their intuitive probability assessments.

- The error reproduces endlessly, becoming embedded in routine.

- The practitioner remains convinced of the correctness of their approach because they don't see the full picture of consequences.

This creates a closed loop: the error remains invisible, so it's not corrected, so it reproduces again.

Evidence Base: What Empirical Research Shows About Base Rate Neglect

The phenomenon of base rate neglect was first systematically described in a series of experiments by Kahneman and Tversky in the 1970s and has since been replicated in hundreds of studies across various contexts—from laboratory experiments to analyses of real professional decisions (S001).

📊 Classic Experiments: How People Ignore Statistics Even When Explicitly Presented

In Kahneman and Tversky's original study, participants were presented with a problem: "In a city, 85% of cabs are green and 15% are blue. A witness to an accident claims to have seen a blue cab. The witness's reliability has been tested: they correctly identify the color 80% of the time. What is the probability that the cab was actually blue?" The correct answer by Bayes' theorem: approximately 41%. Typical participant response: 80%—they completely ignored the base rate (85% green cabs) and focused only on the witness's reliability (S001).

People don't integrate information. They substitute a complex calculation with a simple rule: "The witness is 80% reliable—therefore, the answer is 80%." This isn't a calculation error. It's a refusal to calculate.
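For reference, the 41% figure follows from the odds form of Bayes' theorem: posterior odds equal prior odds times the likelihood ratio. The short Python sketch below uses exact fractions; the variable names are illustrative.

```python
from fractions import Fraction

# Prior odds that the cab was blue rather than green: 15% versus 85%.
prior_odds = Fraction(15, 85)

# Likelihood ratio of the testimony "blue":
# P(witness says blue | cab is blue) / P(witness says blue | cab is green).
likelihood_ratio = Fraction(80, 20)

posterior_odds = prior_odds * likelihood_ratio
p_blue = posterior_odds / (1 + posterior_odds)

print(p_blue)                  # 12/29
print(f"{float(p_blue):.3f}")  # 0.414
```

The testimony multiplies the odds by four, but the starting odds were so far against blue that the cab is still more likely to have been green.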

📊 Medical Diagnosis: Doctors Make the Same Error as Non-Specialists

A study in which doctors were presented with a task of interpreting mammography results showed widespread base rate neglect. Participants were told: the base rate of breast cancer in the screening population is 1%, mammography sensitivity is 90%, and the false positive rate is 9%. Question: what is the probability of cancer given a positive result? Correct answer: approximately 9%. Median doctor response: 75%. Most doctors overestimated the probability of cancer by a factor of 8, ignoring the low base rate (S002).

This isn't a competence problem. Doctors know statistics. The problem is that the availability heuristic and the concreteness of the clinical case outweigh abstract numbers.
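The 9% answer in the mammography task is easiest to verify by counting people rather than multiplying probabilities. The sketch below converts the three stated rates into expected counts for a hypothetical cohort of 10,000 screened women; the cohort size and function name are assumptions made for illustration.

```python
def expected_counts(cohort, base_rate, sensitivity, false_positive_rate):
    """Expected (true positives, false positives) in a screened cohort."""
    with_cancer = cohort * base_rate
    without_cancer = cohort - with_cancer
    return with_cancer * sensitivity, without_cancer * false_positive_rate

# Rates as stated in the task description; the cohort size is hypothetical.
true_pos, false_pos = expected_counts(10_000, 0.01, 0.90, 0.09)
p_cancer = true_pos / (true_pos + false_pos)

print(f"positive with cancer:    {true_pos:.0f}")   # 90
print(f"positive without cancer: {false_pos:.0f}")  # 891
print(f"P(cancer | positive) = {p_cancer:.3f}")     # 0.092
```

Of the 981 positive mammograms expected in this cohort, only 90 belong to women with cancer.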

📊 Cybersecurity: Avalanche of False Alarms as a Consequence of Ignoring Attack Base Rates

A systematic review of intrusion detection system (IDS) applications in cybersecurity showed that ignoring the base rate of actual attacks leads to a catastrophic ratio of false to true alarms (S004). With a typical attack base rate of 0.01% (1 attack per 10,000 events) and IDS sensitivity of 99%, a system with a 1% false positive rate will generate roughly 100 false alarms for every real attack.

| Parameter | Value | Consequence |
|---|---|---|
| Attack base rate | 0.01% | Attacks are rare |
| IDS sensitivity | 99% | Catches 99% of real attacks |
| False positive rate | 1% | About 100 false alarms per real attack |

Security analysts systematically underestimate the scale of this problem, focusing on the system's "high accuracy" (99%) and ignoring the rarity of actual attacks (S004).
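A rough sketch of the same calculation from the analyst's side: what fraction of alerts are real, and how low the false positive rate would have to be for half of all alerts to be real. The 50% target is an arbitrary illustration, not a figure from the review, and both function names are hypothetical.

```python
def alert_precision(base_rate, sensitivity, false_positive_rate):
    """Fraction of alerts that correspond to real attacks."""
    true_alerts = base_rate * sensitivity
    false_alerts = (1.0 - base_rate) * false_positive_rate
    return true_alerts / (true_alerts + false_alerts)

def max_false_positive_rate(base_rate, sensitivity, target_precision):
    """Largest false positive rate that still reaches the target precision."""
    true_alerts = base_rate * sensitivity
    return true_alerts * (1.0 - target_precision) / (target_precision * (1.0 - base_rate))

BASE_RATE = 0.0001   # 1 attack per 10,000 events
SENSITIVITY = 0.99

precision = alert_precision(BASE_RATE, SENSITIVITY, false_positive_rate=0.01)
required_fpr = max_false_positive_rate(BASE_RATE, SENSITIVITY, target_precision=0.5)

print(f"share of alerts that are real: {precision:.2%}")       # 0.98%
print(f"FPR needed for 50% precision:  {required_fpr:.4%}")     # 0.0099%
```

At this base rate, the false positive rate would have to fall by about two orders of magnitude before even half of the alerts pointed at real attacks.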

📊 Legal System: Expert Testimony and Overestimation of Guilt Probability

Analysis of the use of probabilistic expert testimony in legal proceedings (e.g., DNA matches, ballistic analysis) showed that jurors and judges systematically overestimate the probability of guilt, ignoring the base rate of crimes in the population. If an expert reports that "the probability of a random DNA match is 1 in a million," jurors interpret this as "the probability of innocence is 1 in a million," completely ignoring the prior probability that a random person from the population committed the crime (S002).

- Prosecutor's Fallacy: confusion between P(match | innocent) and P(innocent | match). The former is the one-in-a-million random match probability; the latter depends on the prior probability of guilt and on how many other people could have left the sample.

- Why This Is Dangerous: an innocent person can be convicted if their DNA happens to match DNA at the crime scene and the court ignores that, in a population of millions, a one-in-a-million match probability still leaves several people expected to match by chance.
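A minimal sketch of the corrected reasoning, under deliberately simple assumptions that are not from the sources: exactly one person in the population left the sample, everyone is equally likely to be that person a priori, the true source always matches, and there is no other evidence. The population sizes are hypothetical.

```python
def p_source_given_match(population, random_match_probability):
    """P(person is the source | DNA match), with a uniform prior over the population."""
    # The one true source always matches; everyone else matches only by chance.
    expected_innocent_matches = (population - 1) * random_match_probability
    return 1.0 / (1.0 + expected_innocent_matches)

RANDOM_MATCH_PROBABILITY = 1e-6  # "1 in a million", as quoted by the expert

results = {
    population: p_source_given_match(population, RANDOM_MATCH_PROBABILITY)
    for population in (1_000_000, 5_000_000, 10_000_000)
}

for population, probability in results.items():
    print(f"population {population:>10,}: P(source | match) = {probability:.3f}")
# population  1,000,000: P(source | match) = 0.500
# population  5,000,000: P(source | match) = 0.167
# population 10,000,000: P(source | match) = 0.091
```

In practice other evidence narrows the pool of plausible sources, which is exactly the prior information the fallacy leaves out.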

🧾 Meta-Analysis: Robustness of the Effect Across Different Populations and Contexts

Meta-analysis of base rate neglect studies showed that the effect is robust across different cultures, age groups, and education levels (S001). Effect size varies depending on how information is presented: when the base rate is presented as natural frequencies (e.g., "10 out of 1,000") instead of percentages (e.g., "1%"), the error decreases but doesn't disappear completely.

- Natural frequencies reduce the error by 20–40%, but don't eliminate it

- Visualization (diagrams, graphs) helps more than text

- Even with optimal format, a significant portion of participants continue to ignore the base rate

- Education and experience weaken but don't cancel the effect

🔬 Neurocognitive Correlates: Which Brain Systems Are Involved in the Error

Neuroimaging studies showed that tasks requiring integration of base rate with specific information activate the dorsolateral prefrontal cortex—an area associated with working memory and cognitive control (S005). Participants who successfully account for the base rate demonstrate higher activation in this area, indicating that the correct solution requires suppression of the intuitive response and explicit analytical effort.

The correct answer requires cognitive resources that in real conditions are often unavailable due to load, stress, or time constraints. The error isn't stupidity. It's brain energy conservation that becomes dangerous in high-stakes situations.

This explains why groupthink amplifies base rate neglect: in groups, social pressure suppresses analytical effort even more strongly.

The Mechanism of Error: Why the Brain Systematically Ignores Base Rates

Base rate neglect is not a random error, but a systematic consequence of human cognitive architecture. Understanding the mechanism is critical for developing effective prevention strategies.

🧬 Representativeness Heuristic: Fast Judgment Instead of Slow Calculation

Kahneman and Tversky showed that people assess the probability of an event not through formal application of Bayes' theorem, but through the representativeness heuristic: "How much does A resemble B?" (S001). If a patient's symptoms "resemble" the typical presentation of a disease, the brain automatically increases the estimated probability of that disease, ignoring its rarity.

This heuristic works quickly and produces acceptable results in most situations, but systematically fails in situations with low base rates and highly specific information. Similarity to a prototype becomes stronger than statistical reality.

The brain asks: "What does this look like?" — not: "How often does this occur?"

🧬 System Competition: Intuitive System 1 vs. Analytical System 2

In terms of Kahneman's dual-system model, base rate neglect is the dominance of fast intuitive System 1 over slow analytical System 2. System 1 automatically generates an answer based on representativeness and information availability.

System 2 is capable of applying Bayes' theorem and accounting for base rates, but this requires explicit effort, time, and motivation. Under conditions of cognitive load, time pressure, or absence of an explicit signal for analytical thinking, System 2 does not activate, and the erroneous System 1 response dominates (S004).

| System 1 (intuitive) | System 2 (analytical) |
|---|---|
| Automatic, fast | Requires effort, slow |
| Relies on similarity and availability | Applies formal logic |
| Active by default | Activates when explicitly needed |
| Ignores base rates | Accounts for base rates |

🔁 Framing Effect: How Information Format Modulates the Error

Research has shown that the format of probabilistic information presentation critically affects the frequency of base rate neglect (S002). When information is presented as percentages or probabilities ("1% of the population has the disease, the test is 99% accurate"), the error is maximal.

When the same information is presented as natural frequencies ("out of 1,000 people, 10 have the disease; the test correctly flags essentially all 10 of them and falsely flags about 10 of the 990 healthy people"), the error significantly decreases. This is consistent with the hypothesis that the human brain is evolutionarily adapted to process frequencies, not abstract probabilities.

- Natural Frequencies: presenting information as concrete numbers from a population (e.g., "out of 1,000"). Activates System 2 and reduces base rate neglect by 50–70%.

- Abstract Probabilities: presenting information as percentages or decimal fractions. Judgment remains in System 1 mode and the error is maximal.

🧬 Motivational Biases: When Desired Outcomes Influence Probability Assessment

Base rate neglect is amplified by motivational factors. If a person fears a particular disease, they tend to overestimate its probability even with low base rates and nonspecific symptoms.

If a security analyst is under pressure to "not miss an attack," they tend to interpret any anomaly as a threat, ignoring the low base rate of actual attacks. Motivation distorts not only information interpretation, but also the very willingness to engage analytical thinking (S001).

Fear and pressure don't just distort judgment — they shut down analytical thinking at its root.

The connection to the availability heuristic is direct here: motivationally significant events seem more frequent than they actually are, which further amplifies base rate neglect.

Conflicting Data and Confidence Boundaries: Where Evidence Diverges

The base rate effect is robust, but the conditions under which it manifests and methods to overcome it remain subjects of scientific disagreement. Three key debates reveal where evidence diverges and why no universal solution exists.

Expertise: Shield or Illusion?

Experienced diagnostic physicians commit the base rate fallacy less often than novices (S009). But reframe the task in abstract terms—and the difference vanishes (S011).

Expertise works only if the professional has an explicit mental model for integrating base rates, and that model is activated by context. In atypical situations, experience offers no protection.

A physician accustomed to diagnostic protocols in their specialty may automatically account for disease prevalence. But if the task is framed as an abstract logical puzzle, their brain switches to "novice" mode—and the error returns.

Training: An Effect That Doesn't Hold

Brief training sessions on Bayes' theorem improve performance on subsequent tasks, but the effect doesn't transfer to new contexts and fades over time (S011). Intensive programs with repeated practice and feedback show more durable results, but require significant resources (S010).

| Intervention Type | Immediate Effect | Transfer to New Contexts | Durability Over Time |
|---|---|---|---|
| Brief training (explanation + examples) | Present | Weak | Fades |
| Intensive program (practice + feedback) | Present | Stronger | More durable |

The problem: the brain learns context, not principle. Teaching someone to calculate using Bayes in a laboratory doesn't mean they'll do it in a doctor's office or when assessing risk at work.

Data Format: Natural Frequencies—Not a Panacea

Presenting information as natural frequencies (e.g., "10 out of 1,000" instead of "1%") consistently reduces the base rate fallacy (S011). But even with optimal formatting, 30–40% of participants continue to ignore base rates.

- Natural Frequencies: a format that facilitates intuitive understanding of probabilities (e.g., "50 out of 10,000 patients"). Works better than percentages, but isn't universal.

- Real-World Context: in medical protocols, safety reports, and financial documents, information is often presented as percentages or probabilities. Changing format requires systemic changes in documentation and training.

Even if you reformat data perfectly, the system in which that data circulates may work against you. A physician receives test results as natural frequencies, but the electronic health record requires input as percentages—and the cycle closes.

These three debates point to one thing: there's no universal cure. Each solution works under specific conditions and requires ongoing support. Ignoring base rates isn't simply a cognitive error that can be fixed with a single intervention. It's a systemic problem embedded in how we learn, how information is organized, and how we make decisions under pressure.

Cognitive Anatomy of Manipulation: Which Biases Does Base Rate Neglect Exploit

Base rate neglect not only leads to unintentional errors, but can also be deliberately exploited to manipulate risk perception and decision-making.

⚠️ Exploitation Through Selective Presentation of Test Accuracy

Manufacturers of diagnostic tests, security systems, or machine learning algorithms often advertise "99% accuracy" or "high sensitivity" while remaining silent about the base rate of the event (S001). This is not an error—it's a strategy.

When the probability of an event is low (rare disease, rare breach), high test accuracy becomes an illusion of reliability. The consumer hears "99%" and ignores the context in which most positive results are false positives.

Manipulation works not because the information is false, but because it is incomplete. The fact remains a fact, but without the base rate it becomes a weapon.
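How much the omitted base rate matters can be seen by holding the advertised "99%" fixed and varying prevalence. The sketch assumes the claim means 99% sensitivity and 99% specificity, which a real advertisement may not specify; the list of base rates is illustrative.

```python
def positive_predictive_value(base_rate, sensitivity=0.99, specificity=0.99):
    """P(event | positive result) for a test advertised as "99% accurate"."""
    true_positive = base_rate * sensitivity
    false_positive = (1.0 - base_rate) * (1.0 - specificity)
    return true_positive / (true_positive + false_positive)

# Illustrative base rates, from common (1 in 10) to rare (1 in 10,000).
base_rates = [0.1, 0.01, 0.001, 0.0001]
ppv_by_base_rate = {rate: positive_predictive_value(rate) for rate in base_rates}

for rate, ppv in ppv_by_base_rate.items():
    print(f"base rate {rate:>7.2%} -> positive result is correct {ppv:.1%} of the time")
# base rate  10.00% -> positive result is correct 91.7% of the time
# base rate   1.00% -> positive result is correct 50.0% of the time
# base rate   0.10% -> positive result is correct 9.0% of the time
# base rate   0.01% -> positive result is correct 1.0% of the time
```

The same instrument goes from trustworthy to almost always wrong as the event becomes rarer, which is why an accuracy figure quoted without the base rate says little about what a positive result means.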

🎯 Three Mechanisms of Exploitation

- Selective disclosure. Vendors report sensitivity (proportion of true positives), but not specificity or positive predictive value.

- Emotional anchoring. "99% accuracy" sounds like a guarantee, activating the availability heuristic—a vivid number displaces statistical context.

- Social proof. When most people believe in test reliability (due to base rate neglect), groupthink reinforces the illusion (S004).

🔗 Connection to Other Cognitive Biases

Base rate neglect rarely operates in a vacuum. It intertwines with false dichotomy (the test either works or it doesn't), with confirmation bias (we seek facts supporting the initial positive result), and with disinformation, which deliberately conceals base rates.

The result: a system in which accurate instruments become error generators, and people become victims of their own inability to integrate statistical context.