Availability Heuristic: When Memory Vividness Replaces Event Frequency

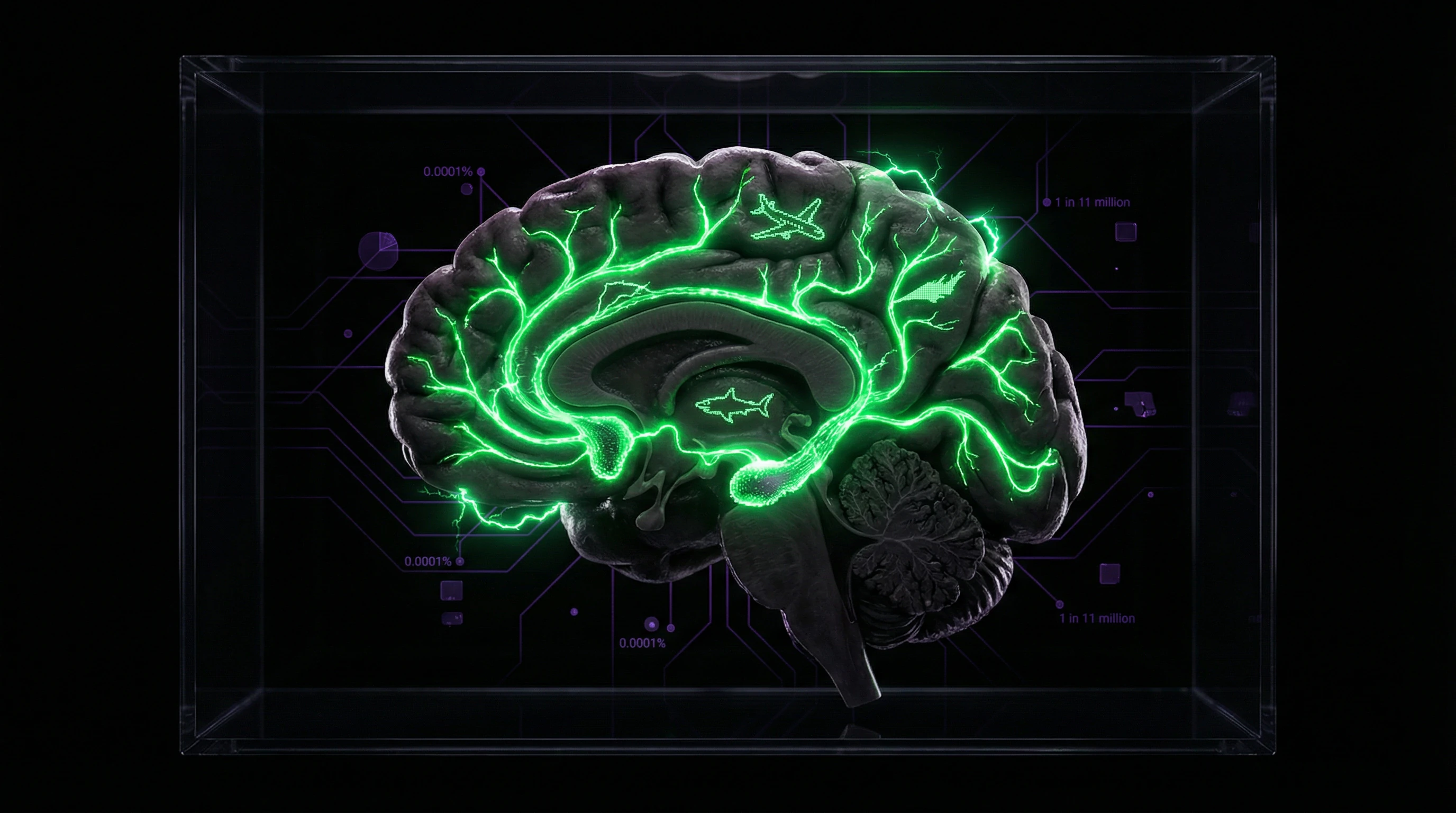

The availability heuristic is a mental shortcut, and a common source of cognitive bias, in which we estimate the probability of an event based on how easily examples come to mind (S009). If something is easy to recall, we judge it to be more frequent or more likely than alternatives that are harder to remember.

The mental availability of consequences positively correlates with the perceived magnitude of those consequences: the easier to recall, the more significant it seems (S009).

- Plane crash vs. car accident: A single plane crash with hundreds of victims is more memorable than thousands of individual car accidents. Result: people overestimate flight risk, though statistically cars are more dangerous (S011).

- Information recency: The heuristic is biased toward recent news. Yesterday's incident influences risk assessment more strongly than long-term trends.

- Emotional intensity: Events that trigger fear or shock are encoded in memory with high priority and retrieved faster (S010).

Discovery History: Kahneman and Tversky in the 1970s

In the 1970s, Amos Tversky and Daniel Kahneman began a series of studies on heuristics and cognitive biases under uncertainty (S009). They demonstrated that judgments often rely on simplifying heuristics rather than complete information processing.

Classic experiment: people were asked whether English has more words that begin with "K" or more words with "K" in the third position. Most chose the first option because such words are easier to recall, although typical text actually contains twice as many words with "K" in the third position.

Distinction from Other Heuristics

It's important to distinguish the availability heuristic from representativeness and affect. Representativeness assesses probability by similarity to a typical category member, not by ease of recall.

| Heuristic | Mechanism | Source of Error |
|---|---|---|
| Availability | Ease of retrieving examples from memory | Vividness, recency, emotions distort recall |
| Representativeness | Similarity to typical category member | Ignoring base rate frequency |
| Affect | Emotional reaction as information | Current mood determines judgment |

Key distinction: availability operates through the metacognitive experience of ease of recall, not through memory content or emotional coloring (S009).

Steel Version of the Argument: Seven Reasons Why the Availability Heuristic May Not Be a Bug, But an Adaptive Survival Mechanism

Before examining the availability heuristic as a source of systematic errors, we must consider the strongest arguments in its defense. Perhaps what we call a cognitive bias is actually an evolutionarily advantageous adaptation that, under certain conditions, works better than statistical analysis.

🧬 First Argument: The Evolutionary Environment Contained No Statistics — Only Personal Experience and Tribal Stories

In the environment of human evolutionary adaptation, there were no databases, statistical reports, or epidemiological studies. The only source of information about risks was personal experience and oral histories transmitted within the group. Under such conditions, vivid, memorable events genuinely correlated with important threats: if someone in the tribe died from a predator attack, this event needed to be remembered and influence the behavior of the entire group. The availability heuristic may have been an optimal strategy in a world where the sample of available memories matched the actual distribution of risks in the local environment.

🛡️ Second Argument: Speed of Decision-Making Matters More Than Accuracy in Situations of Immediate Threat

Cognitive shortcuts exist for a reason — they enable rapid decision-making under conditions of limited time and attentional resources. If you hear rustling in the bushes and easily recall a story about a snake attack, an immediate avoidance response may save your life, even if statistically the probability of encountering a snake is low. In situations where the cost of a Type I error (false alarm) is lower than the cost of a Type II error (missing a real threat), the availability heuristic may be a rational strategy that maximizes survival rather than forecast accuracy.
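The asymmetry between the two error types can be made concrete with a small expected-cost comparison. The sketch below is illustrative only: the function names `expected_costs` and `should_flee` and every probability and cost figure are assumptions chosen for the example, not values from the cited research.

```python
def expected_costs(p_threat: float, cost_false_alarm: float, cost_miss: float) -> tuple[float, float]:
    """Return (expected cost of fleeing, expected cost of ignoring the cue)."""
    # Fleeing is wasted effort only when no threat is present (Type I error).
    cost_flee = (1.0 - p_threat) * cost_false_alarm
    # Ignoring is costly only when the threat is real (Type II error).
    cost_ignore = p_threat * cost_miss
    return cost_flee, cost_ignore


def should_flee(p_threat: float, cost_false_alarm: float, cost_miss: float) -> bool:
    """Flee whenever the expected cost of fleeing is lower than that of ignoring."""
    cost_flee, cost_ignore = expected_costs(p_threat, cost_false_alarm, cost_miss)
    return cost_flee < cost_ignore


# Hypothetical numbers: a 2% chance that the rustling is a snake.
# A false alarm costs 1 unit of effort; a missed snake costs 1000 units.
print(expected_costs(0.02, 1.0, 1000.0))  # fleeing costs about 0.98, ignoring about 20
print(should_flee(0.02, 1.0, 1000.0))     # True: reacting is the cheaper policy
print(should_flee(0.02, 1.0, 10.0))       # False: a cheap miss no longer justifies fleeing
```

Under these assumed costs, reacting to an improbable threat is the cheaper policy. This is the sense in which the heuristic can maximize survival rather than forecast accuracy, and the conclusion flips as soon as a miss stops being much more costly than a false alarm.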

📊 Third Argument: Vivid Events Often Genuinely Signal Systemic Risks Invisible in Averaged Statistics

A plane crash is not just a single event with N casualties. It's a signal of a possible systemic failure in aviation safety that could lead to a series of accidents. A terrorist attack is not merely a local crime, but an indicator of an organized threat capable of scaling. Vivid, high-profile events may be "canaries in the coal mine," pointing to hidden risks not reflected in historical statistics. In this sense, heightened attention to dramatic events may be a form of early threat detection that statistical models based on past data have not yet captured.

🧠 Fourth Argument: Social Function — Coordinating Group Behavior Through Shared Vivid Narratives

The availability heuristic may serve an important social function: synchronizing risk perception within a group. When all community members respond identically to a vivid event (for example, a series of attacks), this creates a coordinated response — heightened vigilance, route changes, collective protective measures. Such synchronization may be more effective than a situation where each individual independently assesses risks based on statistics and reaches different conclusions. Shared vivid memories create a common threat landscape, facilitating collective action.

⚙️ Fifth Argument: Media Coverage as a Proxy for Social Significance, Not Just Frequency

One could argue that the intensity of media coverage reflects not only the frequency of an event, but also its social significance, political consequences, and potential for systemic change. A terrorist attack receives more attention not because journalists are irrational, but because it has consequences extending beyond immediate victims: changes in legislation, geopolitical shifts, erosion of social trust. If the availability heuristic causes us to give more weight to events with high media coverage, perhaps we're implicitly accounting for these secondary effects that are difficult to quantify statistically.

🔁 Sixth Argument: Metacognitive Information About Ease of Recall May Be a Valid Signal

Research shows that people rely not only on the content of memories, but also on the metacognitive experience of ease of retrieval (S009). If information comes to mind easily, this may signal that it was encoded as important, repeatedly activated, or associated with strong emotional context. In certain situations, this metacognitive information may be more relevant than abstract statistics: if you easily recall three cases of fraud with a specific type of investment, perhaps your brain has detected a pattern worth considering, even if the overall statistics look favorable.

🧭 Seventh Argument: Under Conditions of Incomplete Information, Any Heuristic Is Better Than Analysis Paralysis

Criticism of the availability heuristic often assumes the existence of an alternative in the form of complete statistical analysis. But in real life, such an alternative is rarely available: data are incomplete, contradictory, outdated, or unavailable at the moment of decision-making. Under such conditions, using available information — even if it's biased toward vivid examples — may be better than refusing to act or making a random choice. The availability heuristic provides at least some basis for decision-making when ideal information is unattainable.

Evidence Base: What Hundreds of Studies Show About the Availability Heuristic, from Classic Experiments to Modern Neuroimaging Data

Despite the strength of these defensive arguments, empirical data from the past fifty years demonstrate that the availability heuristic systematically leads to predictable errors in probability and risk assessment in today's information environment.

📊 Classic Tversky and Kahneman Experiments: The Letter K, Causes of Death, and Word Frequency Estimation

In Tversky and Kahneman's foundational work, participants were asked to estimate whether there are more words in English that begin with the letter "K" or words where "K" appears in the third position (S009). Most subjects chose the first option because words starting with "K" are easier to recall.

In reality, text contains twice as many words with "K" in the third position. This experiment demonstrates the basic mechanism: ease of retrieving examples from memory substitutes for objective frequency.
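The objective frequency that participants were implicitly asked about can be counted directly rather than recalled. The following is a minimal sketch, assuming a plain-text corpus is available; the function name `k_position_counts` and the sample sentence are hypothetical, and the sentence is contrived purely to show the counting logic, not to represent English as a whole.

```python
import re


def k_position_counts(text: str) -> tuple[int, int]:
    """Count words that start with 'k' and words with 'k' as the third letter."""
    # Lowercase the text and keep alphabetic runs as words.
    words = re.findall(r"[a-z]+", text.lower())
    first = sum(1 for w in words if w[0] == "k")
    third = sum(1 for w in words if len(w) >= 3 and w[2] == "k")
    return first, third


# Contrived sample sentence (an assumption for illustration only).
sample = "I like to make cakes and ask the king to take a bike"
first, third = k_position_counts(sample)
print(first, third)  # 1 6
```

Words such as "like", "make", and "ask" are hard to retrieve when searching memory by the third letter, which is why counting and recalling can give opposite answers.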

In another classic study, participants estimated the frequency of various causes of death: events receiving more media attention (homicides, plane crashes, tornadoes) were systematically overestimated, while more common but less dramatic causes (diabetes, asthma, drowning) were underestimated (S009).

🧪 Schwarz's Research: When Difficulty of Recall Matters More Than Number of Examples

A critical study conducted by Schwarz and colleagues showed that judgments are influenced not so much by the content of memories as by the ease of retrieving them (S009). Participants were asked to recall either 6 or 12 examples of their own assertive behavior.

Logic suggests: those who recalled 12 examples should rate themselves as more assertive. The result was opposite: participants who recalled 6 examples (which was easy) rated themselves as more assertive than those who struggled to recall 12 examples (S009).

The metacognitive experience of ease of recall can outweigh the volume of retrieved information.

🧾 Vaughn's Research: Effect of Uncertainty on Availability Heuristic Use

Vaughn's study (1999) examined how uncertainty affects the application of the availability heuristic (S009). Results showed that under conditions of high uncertainty, people rely even more heavily on readily available examples, even when they recognize that those examples are not representative.

- In crisis situations, information is contradictory and incomplete

- The availability heuristic becomes the dominant risk assessment strategy

- Panic reactions intensify toward vivid but statistically improbable threats

🔎 Medical Diagnostic Errors: How Availability of Recent Cases Distorts Clinical Judgment

Research shows that the availability heuristic contributes to medical diagnostic errors (S011). Physicians who have recently encountered a rare disease tend to overestimate its probability in subsequent patients with similar symptoms, even when the base rate of that disease is extremely low.

This phenomenon, known as the "recency effect in diagnosis," leads to excessive testing and to missing more probable but less "available" diagnoses. A systematic review of diagnostic errors in emergency medicine showed that up to 15% of misdiagnoses are related to excessive reliance on recent experience and vivid cases (S011).

The recency effect in diagnosis is a direct mechanism through which base rate neglect turns into clinical error.
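Base rate neglect can be illustrated with Bayes' rule. The sketch below compares the probability of a rare disease computed from its statistical base rate with the probability obtained when a recent vivid case inflates the physician's subjective prior. The function name `posterior_probability` and all numbers are hypothetical assumptions for illustration; they are not taken from the cited review.

```python
def posterior_probability(prior: float, sensitivity: float, false_positive_rate: float) -> float:
    """P(disease | symptoms) by Bayes' rule."""
    true_positives = prior * sensitivity
    false_positives = (1.0 - prior) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# Hypothetical figures: the symptom pattern appears in 90% of patients with
# the disease and in 5% of patients without it.
SENSITIVITY = 0.90
FALSE_POSITIVE_RATE = 0.05

# Statistical base rate: 1 patient in 1000 has the disease.
with_base_rate = posterior_probability(0.001, SENSITIVITY, FALSE_POSITIVE_RATE)

# Availability-inflated prior: after a recent memorable case, the physician
# implicitly treats the disease as if 1 patient in 20 had it.
with_inflated_prior = posterior_probability(0.05, SENSITIVITY, FALSE_POSITIVE_RATE)

print(round(with_base_rate, 3))       # 0.018
print(round(with_inflated_prior, 3))  # 0.486
```

With identical symptoms and identical test characteristics, the only thing that changes is the prior, yet under these assumed numbers the estimated probability rises from under 2% to nearly 50%.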

📌 Crime Perception Studies: How Media Coverage Creates the Illusion of a Violence Epidemic

Pew Research Center data shows a persistent gap between crime statistics and public perception (S010). In the U.S., violent crime rates declined over two decades, but surveys show that most Americans believe crime is increasing.

This gap directly correlates with the intensity of media coverage: vivid crime reports create an illusion of high frequency, though objective data show the opposite trend. The availability heuristic turns media narrative into subjective reality, independent of statistical facts.

- Statistics: crime is declining

- Perception: majority believes it's increasing

- Cause: media coverage creates availability of vivid examples

- Result: subjective reality diverges from objective data

🧬 Neuroimaging Studies: How the Brain Processes Vivid Versus Statistical Data

Modern fMRI studies show different brain activation patterns when processing emotionally charged information versus abstract statistics (S010). Vivid, dramatic events activate the amygdala and other limbic system structures associated with emotional memory and rapid decision-making.

Statistical information activates the prefrontal cortex, requiring more cognitive resources and time. Under conditions of cognitive load or stress, prefrontal cortex activity decreases and fast emotional processing dominates, which explains why the availability heuristic intensifies under pressure and uncertainty (S010).

Brain architecture favors speed over accuracy. Under pressure, fast emotional processing tends to override slower deliberate analysis.

The Distortion Mechanism: How the Availability Heuristic Exploits Memory and Attention Architecture — From Information Encoding to Retrieval

To understand why the availability heuristic is so resistant to correction, we need to examine its neurocognitive foundations, from the moment information is encoded to its use in decision-making.

🧷 Priority Encoding: Why Emotionally Charged Events Are Recorded in Memory with High Priority

The amygdala modulates memory consolidation in the hippocampus. Events that trigger strong emotional reactions — fear, shock, outrage — are encoded with the involvement of noradrenaline and cortisol, which strengthens their consolidation in long-term memory.

This mechanism is evolutionarily adaptive: threatening events should be remembered better to avoid them in the future. But in the modern media environment, this mechanism is exploited — dramatic news activates the same neural pathways as real threats, creating a false sense of high frequency of dangerous events.

🔁 Repetition Effect and Media Amplification: How Multiple Coverage of a Single Event Creates the Illusion of Multiplicity

A single plane crash can generate hundreds of news stories, reports, and discussions over weeks. Each repetition strengthens the availability of this event in memory, creating the illusion that such disasters occur frequently (S009).

The brain doesn't distinguish between "one event mentioned 100 times" and "100 different events mentioned once each". Repetition increases the strength of the memory trace and the ease of its retrieval, which directly affects frequency estimation. This effect explains why the intensity of media coverage has a greater influence on risk perception than objective statistics.
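The difference between the number of mentions and the number of underlying events is easy to state in code, even though memory does not track it. The sketch below is a toy illustration; the function name `coverage_summary` and the event labels are hypothetical.

```python
from collections import Counter


def coverage_summary(mentions: list[str]) -> dict[str, int]:
    """Summarize a stream of news mentions, each labeled with its event ID."""
    counts = Counter(mentions)
    return {
        "total_mentions": len(mentions),   # what ease of recall tracks
        "distinct_events": len(counts),    # what actual frequency depends on
    }


# One event mentioned 100 times vs. 100 different events mentioned once each.
one_event_repeated = ["crash_A"] * 100
many_events = [f"crash_{i}" for i in range(100)]

print(coverage_summary(one_event_repeated))
# {'total_mentions': 100, 'distinct_events': 1}
print(coverage_summary(many_events))
# {'total_mentions': 100, 'distinct_events': 100}
```

A frequency estimate driven by `total_mentions` treats the two streams as identical, although the underlying event counts differ by a factor of 100.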

⚙️ Metacognitive Substitution: When Ease of Recall Is Interpreted as Event Frequency

The key mechanism of the availability heuristic is metacognitive substitution: instead of answering the question "how often does this happen?" the brain answers the simpler question "how easily can I recall examples?" (S009).

This substitution occurs automatically and unconsciously. Even when people are warned about this bias, they continue to rely on ease of recall as an indicator of frequency. The metacognitive experience of "this is easy to remember" feels like valid information about the world, though it merely reflects the peculiarities of memory organization and media exposure.

🧩 Interaction with Other Biases: The Cascade Effect

The availability heuristic rarely operates in isolation. It interacts with other cognitive biases, creating cascade effects (S010).

| Bias | Amplification Mechanism | Result |
|---|---|---|
| Confirmation | Seeking information that confirms already formed beliefs about risk | Belief becomes entrenched and resistant to correction |
| Affect Heuristic | If an event is easily recalled and evokes fear, it seems even more likely | Emotion substitutes for statistics in risk assessment |
| Halo Effect | Risk assessment spreads from one aspect to others | An airline featured in news about a crash is perceived as unreliable in all aspects |

This interaction explains why cognitive biases are so difficult to overcome individually — they form a self-reinforcing system where each bias feeds the others.

Conflicts and Uncertainties: Where Sources Diverge and What Questions Remain Open in Availability Heuristic Research

Despite an extensive evidence base, the literature on the availability heuristic contains areas of uncertainty and methodological disputes that are important to consider for a complete picture.

🔎 The Operationalization Problem: What Exactly Do Studies Measure—Memory Content or Ease of Retrieval?

One of the central criticisms concerns the fact that different studies understand "availability" differently. Some focus on the frequency of recalling an event, others on the speed with which it comes to mind, and still others on the emotional intensity of the memory trace.

This creates methodological variability: when two researchers talk about the availability heuristic, they may be testing completely different cognitive processes (S003).

If you don't specify exactly what you're measuring—frequency, speed, or affect—results become incomparable across laboratories.

📊 Cross-Cultural Discrepancies: Is the Availability Heuristic Universal?

Studies in different countries show unequal effect sizes. In some populations, the availability heuristic explains 60–70% of risk assessment, while in others only 20–30% (S002, S006).

The question remains open: is this a methodological artifact or a real difference in how different cultures encode and retrieve information about risks?

| Source of Dispute | Position A | Position B |
|---|---|---|
| Affect vs. availability | Emotion is a byproduct of availability | Affect is an independent predictor of risk (S007) |
| Adaptiveness of mechanism | Heuristic is an evolutionary error | Heuristic is a rational strategy under uncertainty |
| Media effect | Media distorts availability, creating illusion of frequency | Media simply reflects actual risk distribution |

🚨 The Causality Problem: Does Availability Cause Judgment Error or Simply Correlate with It?

Most studies show a correlation between ease of recall and risk assessment. But causality remains disputed: perhaps both processes are fed by a single source—for example, the actual frequency of the event in a person's environment (S004).

If so, then the availability "error" is not an error at all, but an adequate response to real statistics.
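The common-cause possibility can be illustrated with a toy simulation. In the sketch below, ease of recall and risk estimates are each generated from the true event frequency plus independent noise, with no causal link between them. The model, the function names `pearson` and `simulate_common_cause`, the noise level, and the sample size are all assumptions made for illustration; they are not a reconstruction of any cited study.

```python
import random


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_common_cause(n: int = 2000, noise: float = 0.1, seed: int = 0) -> float:
    """Correlation between recall ease and risk estimate under a shared cause."""
    rng = random.Random(seed)
    ease_of_recall, risk_estimate = [], []
    for _ in range(n):
        # Hypothetical true frequency of the event in a person's environment.
        true_frequency = rng.random()
        # Both measures depend on the true frequency, not on each other.
        ease_of_recall.append(true_frequency + rng.gauss(0.0, noise))
        risk_estimate.append(true_frequency + rng.gauss(0.0, noise))
    return pearson(ease_of_recall, risk_estimate)


r = simulate_common_cause()
print(r > 0.8)  # True: strongly correlated despite no direct causal link
```

A strong correlation in observational data is therefore compatible with both readings. Experimental designs that manipulate ease of recall directly, such as the 6-versus-12-examples paradigm described earlier, are what separate the two.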

❓ Open Questions

- How can we separate the influence of availability from the influence of affect and social consensus in field conditions?

- Why do people rely on availability in some contexts but not in others?

- Is there a threshold beyond which availability ceases to be an adaptive strategy?

- How has the media ecosystem (algorithms, filter bubbles) changed the very nature of information availability?

These uncertainties do not negate the reality of the availability heuristic, but require caution when interpreting results and applying conclusions to policy and risk communication.