Your mind is not a fortress. It's a battlefield where an invisible war for your decisions, beliefs, and actions unfolds every day. The work of Daniel Kahneman and Amos Tversky shattered the myth of the rational human, revealing that our thinking is riddled with systematic errors that turn us into predictable puppets (S003). "Doctor Spin" is the archetypal manipulator who knows these 58 documented cognitive traps and wields them with surgical precision. Today we'll dissect the mechanisms of these biases and give you a self-defense protocol that works in 30 seconds.

What are cognitive biases and logical fallacies, and why 17th-century rationalists were catastrophically wrong

Seventeenth-century rationalists such as Descartes and Spinoza believed that human thinking could be flawless if given the right tools of logic (S003). Several centuries later, Kahneman and Tversky demolished this illusion: thinking is filled with systematic errors, prejudices, and cognitive traps (S003).

Cognitive biases are systematic deviations from rationality, hardwired into the brain's architecture. Logical fallacies are flaws in the structure of an argument, where the conclusion does not actually follow from the premises. Both categories make us predictably vulnerable to manipulation.

The rationalists weren't wrong about logic; they were wrong to assume that logic is the only mechanism of thought. The brain runs on heuristics: fast, economical, quick-and-dirty rules of thumb that often misfire.

The boundary between bias and fallacy

A cognitive bias is a bug in perception and judgment. The availability heuristic makes us overestimate the probability of events that are easy to recall: plane crashes seem like a bigger threat than car accidents, even though the statistics say otherwise (S003).

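A logical fallacy is a defect in the chain of reasoning. "After this, therefore because of this" (post hoc ergo propter hoc) assumes that because one event followed another, the first must have caused the second, mistaking sequence or correlation for causation (S008).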

- Key distinction

- Cognitive biases often give rise to logical fallacies. Base-rate neglect is a bias that leads to errors in probabilistic judgments: people ignore statistics and focus on vivid details (S003). This connection makes us especially vulnerable, because we err not randomly but in predictable patterns.

Scale of the problem: documented traps

Research has identified dozens of cognitive biases that reproduce in experiments with high reliability (S001). Among the most studied:

- Base-rate neglect

- Availability bias

- Conjunction fallacy

- Decoy effect

- Framing effect

- Allais paradox

Each of these biases has been confirmed repeatedly under controlled conditions. They're not random — they're part of our cognitive architecture, built into how the brain processes information under time pressure and uncertainty.

Five Most Powerful Arguments for the Existence of Systematic Cognitive Traps

Cognitive biases are not a theoretical abstraction but a real phenomenon with a solid evidence base. Here are the strongest arguments that make the concept hard to dismiss.

Argument 1: Reproducibility in Controlled Experiments

Cognitive biases are highly reproducible in laboratory settings. Kahneman and Tversky's experiments on the framing effect showed that the same information, presented in terms of losses or in terms of gains, leads people to opposite decisions (S003). This is not random fluctuation but a systematic pattern that repeats across different cultures and time periods.

| Characteristic | Random Error | Systematic Bias |
|---|---|---|
| Reproducibility | Unpredictable | Repeats in 80–95% of cases |
| Direction | Random | Always in one direction |
| Magnitude | Varies | Stable across groups |
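The "Direction" row is the crux: random errors cancel out when you average many judgments, while a systematic bias does not. The toy simulation below is a minimal sketch of that difference. The 0.5 offset, the unit noise, and the function name `mean_error` are arbitrary assumptions for illustration, not figures from the research cited here.

```python
import random


def mean_error(n, bias, noise_sd=1.0, seed=0):
    """Average signed error of n simulated judgments.

    Each error is a constant bias plus zero-mean Gaussian noise.
    bias=0 models pure random error; bias != 0 models a systematic bias.
    """
    rng = random.Random(seed)
    errors = [bias + rng.gauss(0.0, noise_sd) for _ in range(n)]
    return sum(errors) / n


random_only = mean_error(10_000, bias=0.0)   # noise only: averages out
systematic = mean_error(10_000, bias=0.5)    # same noise plus a fixed offset

print(f"random error, averaged:    {random_only:+.3f}")
print(f"systematic bias, averaged: {systematic:+.3f}")
```

Averaging more judgments drives the first number toward zero, while the second stays near the offset no matter how much data you collect, which is why a bias cannot be fixed simply by asking more people.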

Argument 2: Predictive Power of Models

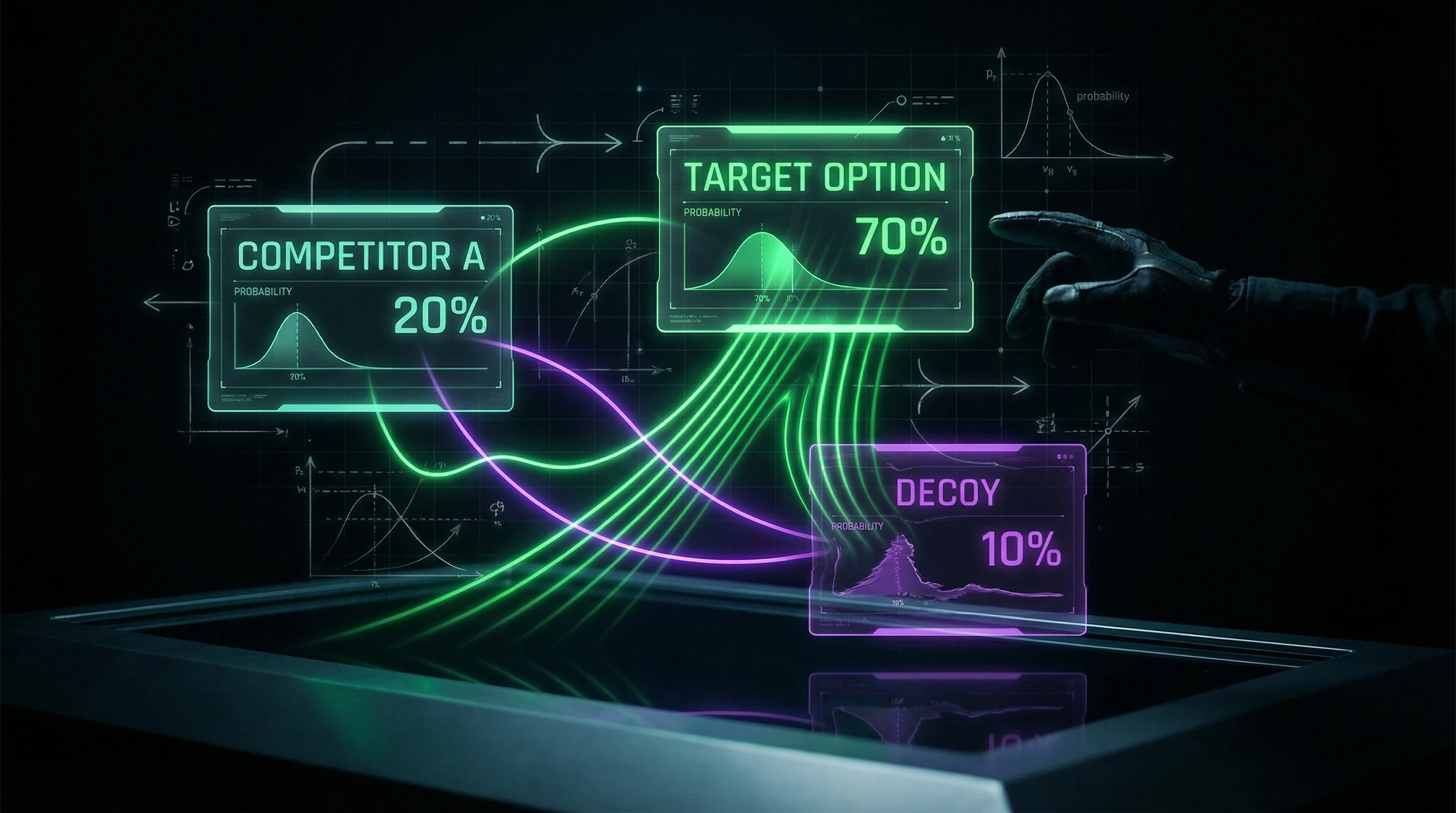

Models based on cognitive biases successfully predict human behavior in real situations. The decoy effect is used in pricing: adding a deliberately unfavorable third option makes the target option more attractive (S003). Companies apply this knowledge to increase sales of premium products, and it works reliably enough to have become a standard pricing tactic.

If biases were random noise, prediction would be impossible. Instead, we see reproducible effects ranging from 15–40% of the baseline metric.

Argument 3: Neurobiological Correlates

Modern neuroimaging methods show that cognitive biases are linked to activity in specific brain regions. Research in computational cognition demonstrates how brain architecture predisposes us to certain types of errors (S007). This is not just a psychological phenomenon—it's physiology.

When the brain processes information under time pressure or uncertainty, it activates fast decision-making systems (limbic system, basal ganglia) rather than slow analytical processes (prefrontal cortex). This is an architectural constraint, not a failure of willpower.

Argument 4: Evolutionary Justification

Many cognitive biases have evolutionary explanations. The availability heuristic was useful in environments where easily recalled threats were indeed more frequent and dangerous (S003). Our brains are optimized for survival on the savanna, not for statistical analysis in the information age.

These "bugs" are side effects of adaptations that once saved lives. They haven't disappeared because evolution hasn't had time to edit them out over the last 10,000 years.

Argument 5: Cross-Cultural Universality

Core cognitive biases are found across different cultures, indicating their universal nature. Base rate neglect manifests in subjects regardless of education, cultural context, or language (S003). This is not an artifact of Western psychology—it's a property of human cognition itself.

- Framing effect: reproduced in the USA, Israel, Japan, India

- Anchoring: works identically in cultures with different languages and number systems

- Confirmation bias: universal regardless of literacy level

- Decoy effect: operates across different economic systems and markets

Evidence Base: Six Experimentally Confirmed Cognitive Traps and Their Exploitation Mechanisms

Let's move to specific biases that have been repeatedly confirmed in research and actively exploited by manipulators. Each one is an open door into your consciousness.

Base-Rate Neglect

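This bias causes people to ignore the statistical prevalence of a phenomenon and focus on specific details (S003). Suppose a disease affects 1% of the population and a test for it is 95% accurate, in the sense that it detects 95% of sick people and wrongly flags 5% of healthy ones. A positive result is then still more likely to be a false positive than a true one: only about 16% of people who test positive actually have the disease. People systematically overestimate the probability of disease because they forget the base rate.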
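The test example is a direct application of Bayes' theorem. The sketch below assumes, as the example does, that "95% accurate" means both sensitivity (share of sick people who test positive) and specificity (share of healthy people who test negative) are 95%; the function name is ours, not from any library.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """P(disease | positive test) via Bayes' theorem."""
    true_pos = sensitivity * prevalence               # sick and flagged
    false_pos = (1 - specificity) * (1 - prevalence)  # healthy but flagged
    return true_pos / (true_pos + false_pos)


ppv = positive_predictive_value(prevalence=0.01, sensitivity=0.95, specificity=0.95)
print(f"P(disease | positive) = {ppv:.1%}")
```

In concrete counts: out of 10,000 people, 100 are sick and 95 of them test positive, while 495 of the 9,900 healthy people also test positive, so only 95 of 590 positives (about 16%) are real.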

"Doctor Spin" exploits this by presenting a vivid, emotionally charged case (a rare vaccine side effect) and making you forget the base rate (millions of safe vaccinations). Your brain latches onto the dramatic story and ignores the statistics.

One tragic story outweighs a million favorable outcomes—not because it's logical, but because that's how our memory works.

Availability Bias

We overestimate the probability of events that are easy to recall—usually because they're recent, vivid, or emotionally charged (S003). After a series of news reports about plane crashes, people start considering flights more dangerous, even though the statistics haven't changed.

This bias is exploited through control of the information agenda: if media constantly talk about rare but frightening events, you begin to consider them typical.

Conjunction Fallacy

People systematically rate the probability of a conjunction of two events (A and B) as higher than the probability of one of them alone (A), which is mathematically impossible: P(A and B) can never exceed P(A) (S003). In the famous Linda problem, most people rate "bank teller and feminist activist" as more probable than simply "bank teller," even though the conjunction is logically less likely.

Manipulators exploit this by adding plausible details to a statement, making it more "vivid" and convincing. Each additional detail can only lower (never raise) the probability of the entire conjunction, yet it increases the story's psychological persuasiveness.
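Why details can only lower the probability follows from the chain rule, P(A and B) = P(A) × P(B | A): every new detail multiplies the running probability by a number no greater than 1. The sketch below tracks that running product; the probabilities are hypothetical, chosen only to illustrate the arithmetic, and the function name is ours.

```python
def conjunction_probability(p_first, conditionals):
    """Probability that every detail in a story holds, step by step.

    p_first: probability of the first claim.
    conditionals: P(each further detail | all previous details).
    Returns the running probability after each detail is added.
    """
    if not 0.0 <= p_first <= 1.0:
        raise ValueError("probabilities must lie in [0, 1]")
    running = [p_first]
    for p in conditionals:
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
        running.append(running[-1] * p)  # chain rule: multiply by P(detail | story so far)
    return running


# Hypothetical numbers for a Linda-style story (not from any study):
# P(bank teller) = 0.05, P(feminist | teller) = 0.6, P(third detail | both) = 0.5
steps = conjunction_probability(0.05, [0.6, 0.5])
print(steps)
```

However plausible each added detail feels, the printed sequence never goes up: the richer story is always the less probable one.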

Decoy Effect

Adding a third, deliberately disadvantageous option (decoy) changes the relative attractiveness of the other two options (S003). Example: magazine subscription for $59 (online only) and $125 (print + online). Most will choose the cheaper option.

But add a third option, $125 for print only, and the "print + online" combo at the same $125 suddenly looks like an incredible deal. Sales of the combo skyrocket, even though the two original options have not changed at all.

| Scenario | Majority Choice | Mechanism |
|---|---|---|
| Two options: $59 (online) vs $125 (print + online) | $59 (online) | Price is the main criterion |
| Three options: $59 (online) vs $125 (print + online) vs $125 (print only) | $125 (print + online) | Decoy makes combo the "best deal" |
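A decoy can be spotted mechanically: it is an option that one alternative beats on every attribute (asymmetric dominance) while the other alternative does not. The sketch below applies that test to the subscription example; the `Offer` type and helper names are hypothetical, and treating "dominated by exactly one other option" as the decoy signature is a simplification that fits small menus like this one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    name: str
    price: float
    features: frozenset


def dominates(a, b):
    """True if a is no worse than b on price and features, and strictly better on one."""
    no_worse = a.price <= b.price and a.features >= b.features
    strictly_better = a.price < b.price or a.features > b.features
    return no_worse and strictly_better


def find_decoys(offers):
    """Return (decoy, target) pairs: options dominated by exactly one other option."""
    pairs = []
    for b in offers:
        dominators = [a for a in offers if a is not b and dominates(a, b)]
        if len(dominators) == 1:
            pairs.append((b.name, dominators[0].name))
    return pairs


offers = [
    Offer("online", 59, frozenset({"online"})),
    Offer("print", 125, frozenset({"print"})),
    Offer("print+online", 125, frozenset({"print", "online"})),
]
print(find_decoys(offers))  # [('print', 'print+online')]
```

The print-only option is dominated by the combo (same price, fewer features) but not by the cheaper online option, which is exactly what makes it a decoy pointing at the combo.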

Framing Effect

The same information presented in different wording triggers different decisions (S003). Classic experiment: "The program will save 200 of 600 lives" is perceived positively, while "The program will lead to the deaths of 400 of 600 people" is perceived negatively, even though both describe exactly the same outcome.
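The two frames are arithmetically identical, and the sketch below makes that explicit. In Tversky and Kahneman's original "Asian disease" version, the sure program was paired with a risky one (a 1/3 chance of saving all 600, a 2/3 chance of saving no one), and that risky program has the same expected number of lives saved. The helper names are ours, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction

TOTAL = 600


def reframe(saved, total=TOTAL):
    """Describe the same outcome in a gain frame and in a loss frame."""
    return (f"{saved} of {total} people will be saved",
            f"{total - saved} of {total} people will die")


def expected_saved(lottery):
    """Expected number saved for a list of (probability, saved) outcomes."""
    return sum(p * saved for p, saved in lottery)


sure_program = [(Fraction(1), 200)]
risky_program = [(Fraction(1, 3), 600), (Fraction(2, 3), 0)]

print(reframe(200))
print(expected_saved(sure_program), expected_saved(risky_program))
```

Nothing in the numbers favors either program; only the wording changes, which is why a shift in choices between frames counts as a bias.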

Politicians, marketers, and propagandists masterfully use framing to direct your perception in the desired direction. The same fact can be presented as a triumph or catastrophe depending on the chosen angle.

Allais Paradox

People systematically violate the axioms of expected utility theory, making choices in one situation that contradict their choices in a structurally equivalent one (S003). This demonstrates that our decisions don't follow a rational model of utility maximization.

We're sensitive to context and problem formulation in ways that make us predictably irrational. This predictability is the manipulator's main tool.
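The section does not spell out the gambles, so the sketch below assumes Allais's classic formulation (prizes in millions of dollars): 1A is $1M for certain; 1B is an 89% chance of $1M, 10% of $5M, 1% of nothing; 2A is an 11% chance of $1M; 2B is a 10% chance of $5M. It checks, for random increasing utility values, that EU(1A) − EU(1B) always equals EU(2A) − EU(2B), so an expected-utility maximizer who prefers 1A must also prefer 2A. The common pattern of choosing 1A and then 2B is therefore inconsistent with any utility function.

```python
import random

# Classic Allais gambles as (probability, prize in $M) pairs.
GAMBLE_1A = [(1.00, 1)]
GAMBLE_1B = [(0.89, 1), (0.10, 5), (0.01, 0)]
GAMBLE_2A = [(0.11, 1), (0.89, 0)]
GAMBLE_2B = [(0.10, 5), (0.90, 0)]


def expected_utility(gamble, u):
    """Sum of probability * utility(prize) over the gamble's outcomes."""
    return sum(p * u[prize] for p, prize in gamble)


def allais_gaps(u):
    """Return EU(1A) - EU(1B) and EU(2A) - EU(2B) for a utility table u."""
    gap1 = expected_utility(GAMBLE_1A, u) - expected_utility(GAMBLE_1B, u)
    gap2 = expected_utility(GAMBLE_2A, u) - expected_utility(GAMBLE_2B, u)
    return gap1, gap2


rng = random.Random(1)
for _ in range(5):
    # Random increasing utilities: u(0) < u(1) < u(5).
    a, b, c = sorted(rng.random() for _ in range(3))
    u = {0: a, 1: b, 5: c}
    gap1, gap2 = allais_gaps(u)
    print(f"{gap1:+.4f}  {gap2:+.4f}")  # the two columns match
```

Algebraically, both gaps reduce to 0.11·u(1) − 0.10·u(5) − 0.01·u(0); the random trials just confirm that no choice of utilities can separate them.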

- Why these traps are universal

- They're built into the architecture of human thinking, not a lack of education or intelligence. Even experts and scientists fall into them when working outside their specialty.

- How to recognize them

- The first sign: you're asked to make a decision based on one vivid story, with no statistics. The second: information is presented in a form that triggers emotion before you've had time to analyze the facts.

- Defense

- Not complete immunity (it doesn't exist), but awareness of the mechanism. When you see framing, you can reframe the information yourself. When you notice a decoy, you can ignore it. When you hear a single story, you can demand the base rate.

Mechanisms of Cognitive Biases: Why Our Brain Systematically Errs and How This Is Used Against Us

Understanding the mechanisms is key to protection. Cognitive biases aren't random; they arise from fundamental features of how our brain works.

System 1 vs System 2: The Architecture of Vulnerability

Kahneman described two modes of thinking: System 1 (fast, automatic, intuitive) and System 2 (slow, analytical, effortful). Most cognitive biases occur when System 1 provides a quick but inaccurate answer, and System 2 fails to activate for verification (S003).

Manipulators exploit this by creating conditions where System 2 doesn't have time to engage: time pressure, cognitive load, emotional arousal.

Heuristics: Useful Tools Turned Into Weapons

Heuristics are mental "shortcuts" that allow us to make quick decisions under uncertainty. The availability heuristic, representativeness heuristic, affect heuristic—all were adaptive in our evolutionary past (S003).

In today's media environment, where information is carefully filtered and packaged, these heuristics become vulnerabilities. "Dr. Spin" knows which triggers activate which heuristics and uses this knowledge with surgical precision.

- Availability Heuristic

- Events that are easier to recall seem more probable. The manipulator repeats rare but vivid examples until they become "available" in memory.

- Representativeness Heuristic

- We judge probability by similarity to a typical example. One vivid case outweighs statistics.

- Affect Heuristic

- The emotional coloring of an event determines our assessment of its risk and benefit. Fear distorts calculations.

Emotions as Bias Amplifiers

Research demonstrates a tight connection between cognitive processes and emotions (S005). Emotional arousal amplifies cognitive biases: fear strengthens the availability effect (you overestimate threats), anger reduces critical thinking, euphoria blinds you to risks.

Manipulators deliberately use emotional triggers to shut down your System 2 and make you maximally vulnerable. This isn't accidental—it's engineering.

| Emotion | Effect on Thinking | How It's Used |
|---|---|---|
| Fear | Overestimation of threats, seeking protection | Catastrophic scenarios, appeals to safety |
| Anger | Reduced criticism, seeking an enemy | Blame, polarization, black-and-white thinking |
| Euphoria | Ignoring risks, overestimating benefits | Promises of miracle solutions, hiding side effects |
| Guilt | Willingness to compensate, submission | Moral reproaches, demands for "atonement" |

Causality vs Correlation: The Fundamental Trap

Causal errors are a class of logical fallacies related to incorrectly establishing cause-and-effect relationships (S008). Our brain is evolutionarily wired to seek causes—this was critical for survival.

But this tendency leads to systematic errors: we see causality where there's only correlation, ignore confounders (third variables), confuse the direction of causality. "Dr. Spin" exploits this by presenting correlations as proof of causation and hiding alternative explanations.

- Two events occur together → brain seeks connection

- Connection found (often false) → confidence grows

- Alternative explanations ignored → causality "proven"

- Decision made based on false cause → outcome unpredictable
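The confounder trap in the steps above can be reproduced in a few lines. In the sketch below, a hidden variable Z drives both X and Y, and X has no effect on Y at all; the data are simulated, not real, and the `pearson` helper is written out by hand. X and Y still come out clearly correlated (about 0.5 in theory for this setup), and the correlation vanishes once Z's contribution is removed, which is only possible here because we built the model ourselves.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


rng = random.Random(42)
n = 10_000
z = [rng.gauss(0, 1) for _ in range(n)]      # hidden confounder
x = [zi + rng.gauss(0, 1) for zi in z]       # Z -> X
y = [zi + rng.gauss(0, 1) for zi in z]       # Z -> Y (X never influences Y)

raw = pearson(x, y)
# Subtract Z's known contribution and correlate what is left.
adjusted = pearson([xi - zi for xi, zi in zip(x, z)],
                   [yi - zi for yi, zi in zip(y, z)])
print(f"corr(X, Y)             = {raw:.2f}")       # close to 0.5
print(f"corr(X, Y), Z removed  = {adjusted:.2f}")  # close to 0
```

A manipulator showing only the first number could "prove" that X causes Y; the second number shows the link was entirely borrowed from Z.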

Conflicts and Uncertainties: Where Sources Diverge and What This Means for Understanding Cognitive Biases

Scientific integrity requires acknowledging that not all aspects of cognitive biases are unambiguous. There are areas where researchers disagree.

Debates About Rationality

Some researchers argue that many "cognitive biases" are actually rational adaptations to real-world decision-making conditions.

Ignoring base rates may be justified if you have specific information that makes the base rate irrelevant. In controlled experiments, however, people still systematically deviate from normative standards of rationality (S003).

These debates matter, but they don't negate the fundamental fact: when we face uncertainty without additional context, our brains make predictable errors.

The Problem of Ecological Validity

Critics point out that many experiments are conducted in artificial laboratory conditions and may not reflect real-world behavior. Yet the same mechanisms are exploited successfully outside the lab:

- Marketing uses this knowledge with predictable results

- Political campaigns build strategies based on cognitive traps

- Native advertising exploits the same mechanisms (S001)

If cognitive biases were merely laboratory artifacts, they wouldn't work in the real world with such predictability. Practical effectiveness is the best test of validity.

The gap between theory and practice disappears here: what works in experiments works on the street. This isn't coincidence—it's a sign that we've identified a real mechanism.

Cognitive Anatomy of Manipulation: Which Biases "Doctor Spin" Exploits and How to Recognize an Attack

Manipulators rarely use a single bias. They combine several, amplifying the effect and making defense more difficult. Here's how it works in practice.

Technique 1: Emotional Framing + Availability Heuristic

The manipulator presents information in an emotionally charged frame while simultaneously making certain examples easily accessible for recall. Result: you overestimate the probability of an event and make decisions based on emotions rather than facts.

A series of news stories about rare but frightening crimes by immigrants creates an impression of a massive threat, even though statistics show the opposite. The availability heuristic makes you remember vivid examples rather than numbers.

Technique 2: Base Rate Neglect + Conjunction Fallacy

A vivid, detailed story is presented that makes you forget about the statistical prevalence of the phenomenon. You assess the probability of a complex scenario as high because it "sounds plausible."

This is the foundation of conspiracy theories: the more details, the more "convincing" the story, even though each detail can only lower its probability. Ignoring base rates lets the manipulator replace statistics with narrative.

⚙️ Technique 3: Decoy Effect in Political Choice

In politics, the decoy effect is used to manipulate voter preferences (S003). Introducing a third candidate who resembles, but is clearly weaker than, one of the main competitors makes that competitor look stronger by comparison and can change the election outcome.

| Scenario | Mechanism | Result |
|---|---|---|
| Two candidates are equal | Voter chooses based on principles | Predictable outcome |
| Third added (similar to one) | Decoy effect distorts comparison | Votes redistribute illogically |

Technique 4: Causal Errors in Native Advertising

Native advertising actively exploits cognitive biases by presenting promotional content as editorial material. Causal errors create the illusion of a cause-and-effect relationship between the product and the desired outcome.

- "Successful people use this product"

- The manipulator shows correlation but hides the fact that successful people use many products. Your brain automatically searches for causal connections and fills in the gaps.

- "If I use this product, I'll become successful"

- This is a classic error, but it works because we overestimate the role of a single factor and underestimate the complexity of reality. Mental errors of this type are built into our cognitive architecture.

You can recognize an attack by stopping and asking three questions: what emotions am I experiencing right now, which examples do I remember best, and what statistical data am I ignoring (S001).

30-Second Verification Protocol: Seven Questions That Will Destroy Any Cognitive Bias-Based Manipulation

Theory is useless without a practical tool. Here's a protocol you can apply to any claim, decision, or call to action.

- What's the base rate? Before reacting to a vivid example, ask: how statistically common is this phenomenon? If you're shown a dramatic case but given no frequency data, that's a red flag. Ignoring base rates is one of the most exploited biases (S003).

- Why is this information easily accessible? If something is "common knowledge" or "constantly discussed," ask: who is deliberately making it visible? The availability heuristic only works if information is easily recalled—manipulators know this.

- Do details add probability or just plausibility? Each additional detail can only lower the mathematical probability of the entire conjunction (S003). Plausibility ≠ probability. This protects against the conjunction fallacy.

- Is there a "decoy" here? If you're offered a choice, check: has a third option been added specifically to make one of the main options more attractive? The decoy effect works invisibly, but it can be detected if you know what to look for.

- How would my decision change if reframed in terms of losses/gains? The framing effect means your decision depends on wording. Reframe the statement in opposite terms: if your decision changes, you've fallen victim to framing.

- Correlation or causation? If a causal relationship is claimed, ask: is this proven causality or just correlation? Are there alternative explanations? Causal errors (S008) are the foundation of most pseudoscientific claims.

- What emotion does this trigger? If a message evokes anger, fear, or urgency, stop. Strong emotional arousal suppresses slow, analytical thinking. Ask: why this specific emotion? Who benefits from my reaction?

Manipulation doesn't work because you're stupid. It works because your brain uses heuristics to conserve energy. The verification protocol is simply switching to slow thinking mode.

These seven questions don't require expertise. They require a pause. Manipulators count on the speed of your reaction—on you responding emotionally rather than analytically.

Each question targets a specific mechanism: base rate blocks base rate neglect, availability exposes the availability heuristic, details protect against the conjunction fallacy, decoy reveals choice manipulation, framing shows dependence on wording, causality destroys pseudoscientific claims, emotion reveals the manipulator's intent.

| Question | Protects Against | Danger Signal |
|---|---|---|
| Base rate | Ignoring statistics | Vivid example without numbers |
| Information availability | Availability effect | "Everyone's talking about it" |
| Details vs probability | Conjunction fallacy | Excess details in story |
| Decoy | Choice manipulation | Third option that's "worse" |
| Framing | Wording dependence | Decision changes when reframed |
| Causality | Causal errors | Correlation presented as cause |
| Emotion | Emotional hijacking | Urgency, anger, fear |

Apply this protocol not as dogma but as a calibration tool. If you can't answer three or more of the seven questions, the information is insufficient for a decision. If the answers point to manipulation, that doesn't mean the claim is false, but it does mean the claim requires independent verification.
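The decision rule above can be written down as a tiny checklist function. This is a hypothetical sketch: the question labels, the True/False/None encoding (answered and clean, answered with a red flag, could not answer), and the reading of "three of the seven" as "three or more" are our assumptions, not part of the protocol as stated.

```python
# Hypothetical labels for the seven protocol questions.
QUESTIONS = (
    "base rate", "availability", "details vs probability",
    "decoy", "framing", "causality", "emotion",
)


def assess(answers):
    """Apply the protocol's decision rule to a dict of answers.

    Each question maps to True (answered, no red flag), False (answered,
    red flag found) or None / missing (cannot answer).
    """
    unknown = [q for q in QUESTIONS if answers.get(q) is None]
    flags = [q for q in QUESTIONS if answers.get(q) is False]
    if len(unknown) >= 3:
        return "insufficient information: do not decide yet"
    if flags:
        # A red flag means "verify independently", not "the claim is false".
        return "possible manipulation, verify independently: " + ", ".join(flags)
    return "no red flags found"


answers = {q: True for q in QUESTIONS}
answers["decoy"] = False
print(assess(answers))
```

Keeping "insufficient information" and "possible manipulation" as separate outcomes mirrors the protocol's point that a red flag calls for verification rather than rejection.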

Cognitive immunology isn't paranoia. It's thinking hygiene. Just as you brush your teeth to avoid cavities, you verify information to avoid cognitive contamination.