Terminological chaos: why "systematic review" and "meta-analysis" are not synonyms, but everyone pretends they are

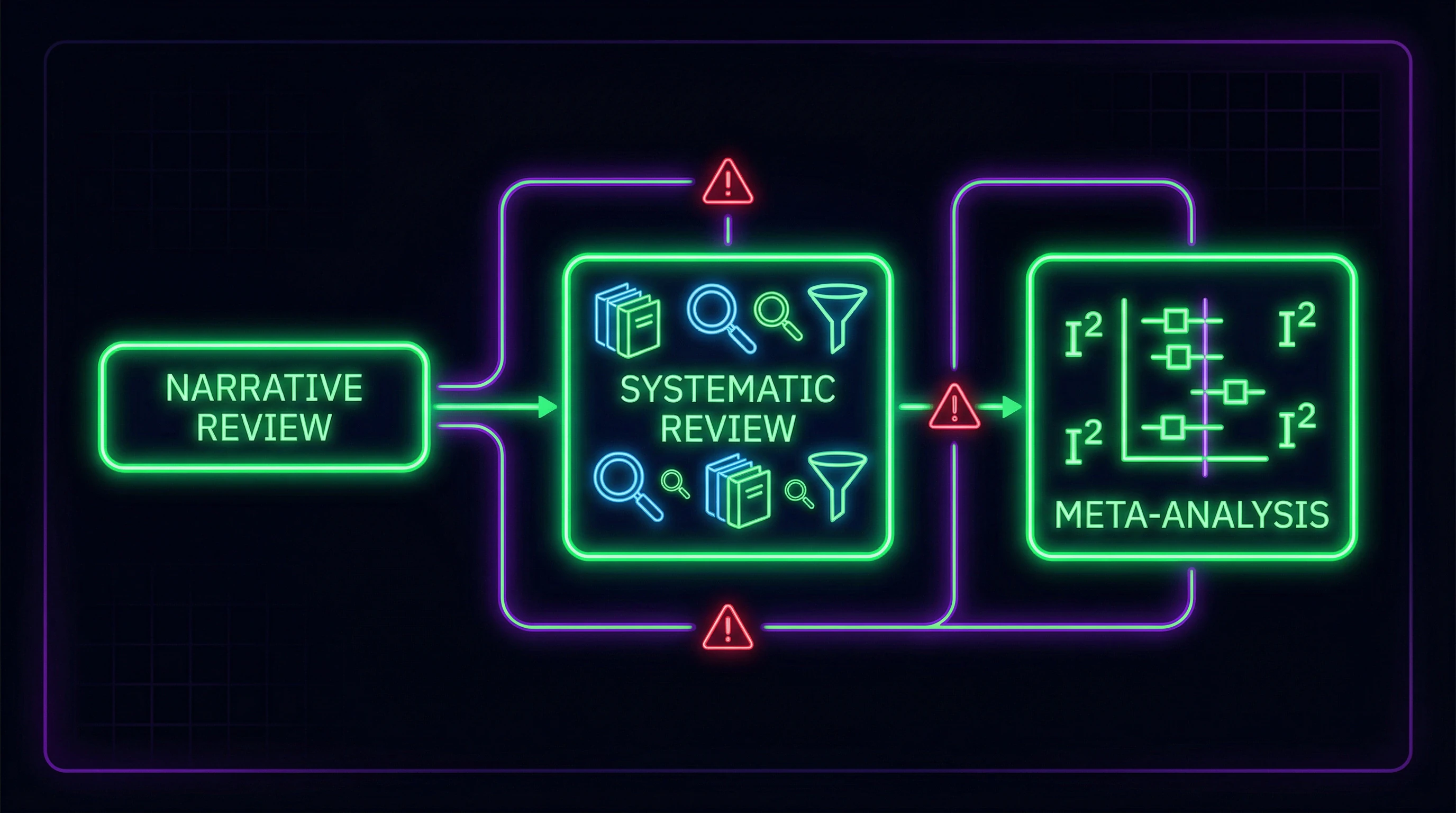

The first and most common error in scientific literature is using the terms "systematic review" and "meta-analysis" as interchangeable concepts. A systematic review represents a comprehensive process of searching and selecting all relevant studies on a specific topic using strictly defined inclusion and exclusion criteria (S010).

Meta-analysis is a statistical method for combining quantitative data from a systematic review (S010). Crucially, a meta-analysis is only meaningful when it rests on a prior systematic review, whereas a systematic review can stand on its own without meta-analysis when the data are too heterogeneous or the studies provide only qualitative information.

- Systematic review: a methodological framework for searching for and selecting studies against predetermined criteria. It ensures the reproducibility and transparency of evidence synthesis.

- Meta-analysis: statistical pooling of quantitative data. It requires studies that are comparable enough to combine and a correct assessment of heterogeneity.

- Scoping review: a systematic approach that maps the evidence on a broader research question (S010). It is well suited to emerging fields and to identifying directions for further research.

Why terminological confusion destroys scientific communication

Mixing concepts creates an illusion of rigor where none exists. Researchers often label their work a "systematic review with meta-analysis" without conducting systematic searching or correct statistical analysis.

The result: publications that look like high-quality evidence but actually represent selective literature reviews with arbitrary pooling of incomparable data.

Differentiation criteria: identification protocol

| Element | Systematic review | Meta-analysis |
|---|---|---|
| Protocol | Pre-registered | Includes a statistical analysis plan |
| Search | Systematic, across multiple databases | Inherited from the systematic review |
| Criteria | Defined before the search begins | Defined before the analysis |
| Quality assessment | Risk of bias, assessed by two reviewers | Heterogeneity and publication bias analysis |
| Data | Qualitative or quantitative | Quantitative and poolable only |

A systematic review without meta-analysis remains valid research. Meta-analysis without a systematic review is statistical manipulation, not science. More details in the Critical Thinking section.

Seven Rock-Solid Arguments for Strict Methodological Requirements in Systematic Reviews

Before examining why most reviews fail quality checks, it's essential to understand why the requirements are so stringent. This isn't academic pedantry — each requirement protects against a specific type of systematic error. More details in the Cognitive Biases section.

🧪 Argument One: Reproducibility as the Foundation of Scientific Method

A systematic review aims to synthesize evidence on a specific topic through structured, comprehensive, and reproducible literature analysis (S010). Reproducibility means that an independent team of researchers, following the same protocol, should obtain an identical set of included studies.

This matters because a reproducible synthesis supports an informed understanding of the subject and evidence-based conclusions that can guide further research, policy decisions, and clinical practice (S010).

📊 Argument Two: Preventing Selective Data Picking

Without systematic search and clear inclusion criteria, researchers inevitably select studies that confirm their hypothesis. This isn't necessarily malicious manipulation — confirmation bias operates automatically.

A systematic approach with a pre-registered protocol makes this kind of selective inclusion far harder. A protocol published before the analysis begins acts as an anchor that keeps conclusions from drifting toward the desired result.

🧾 Argument Three: Bias Risk Assessment as Protection Against Garbage Data

For randomized controlled trials, the revised Cochrane Risk of Bias tool (RoB-2) is widely recognized as the standard (S010). The Cochrane Collaboration tool for assessing risk of bias in randomized trials provides structured evaluation of methodological quality (S009).

Without such assessment, a systematic review may combine high-quality RCTs with studies where randomization was compromised, blinding was absent, and data were selectively reported.

🔁 Argument Four: Quantifying Heterogeneity Prevents Meaningless Averaging

Quantitative assessment of heterogeneity in meta-analysis (S009) determines how much the results of included studies differ from each other. Pooling data from studies with high heterogeneity without analyzing it is a statistical error, equivalent to averaging patient temperatures in a hospital: you'll get a number, but it will be meaningless.

- Calculate I²: the percentage of the total variation across studies that is due to heterogeneity rather than chance

- If I² > 75%, heterogeneity is high — analysis of sources of differences is required

- If heterogeneity is unexplainable, data pooling is inadmissible

- Use random effects model instead of fixed effects if heterogeneity is present
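The checklist above comes down to a few lines of arithmetic. The sketch below illustrates it and is not a reference implementation. It assumes each study reports an effect on an additive scale (for example a log risk ratio) together with its variance. It uses the DerSimonian-Laird estimator for τ², which is one common choice among several. The function names and example numbers are hypothetical.

```python
import math


def heterogeneity(effects, variances):
    """Return Cochran's Q, I² (as a percentage) and the DerSimonian-Laird tau².

    Requires at least two studies.
    """
    weights = [1.0 / v for v in variances]  # inverse-variance (fixed-effect) weights
    sum_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / sum_w
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    c = sum_w - sum(w * w for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c)  # between-study variance, truncated at zero
    return q, i2, tau2


def random_effects(effects, variances):
    """Random-effects pooled estimate with a normal-approximation 95% CI."""
    _, _, tau2 = heterogeneity(effects, variances)
    weights = [1.0 / (v + tau2) for v in variances]  # tau² is added to every study's variance
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    se = math.sqrt(1.0 / sum(weights))
    return pooled, pooled - 1.96 * se, pooled + 1.96 * se


if __name__ == "__main__":
    # Hypothetical log risk ratios and their variances from five studies.
    effects = [0.10, 0.35, -0.05, 0.60, 0.20]
    variances = [0.02, 0.03, 0.015, 0.05, 0.025]
    q, i2, tau2 = heterogeneity(effects, variances)
    print(f"Q={q:.2f}  I2={i2:.1f}%  tau2={tau2:.4f}")
    if i2 > 75:
        print("High heterogeneity: investigate its sources before pooling")
    print("random-effects estimate and 95% CI:", random_effects(effects, variances))
```

For a real review, prefer a validated meta-analysis package. The point here is only to show what I² and τ² are computed from.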

🧬 Argument Five: Critical Evaluation of Non-Randomized Study Quality

Critical appraisal of the Newcastle-Ottawa Scale for assessing the quality of non-randomized studies in meta-analyses (S009) shows that even widely used instruments have limitations. However, the absence of any quality assessment for observational studies renders a systematic review useless.

It's impossible to distinguish a well-conducted cohort study from a retrospective analysis with multiple sources of bias without structured assessment.

🧰 Argument Six: Systematic Review Strength Directly Relates to Included Study Quality

While some topics may have numerous high-quality randomized controlled trials, others may be limited to case series or other study designs with lower levels of evidence (S010). The strength of a systematic review is directly related to the quality of included studies (S010).

A systematic review of low-quality studies remains low-quality evidence. Methodology cannot turn garbage into gold — it can only honestly show that we're dealing with garbage.

🛡️ Argument Seven: Protection Against Publication Bias

Studies with positive results are published more frequently than studies with negative or null results. Without systematic search of unpublished data, clinical trial registries, and grey literature, meta-analysis will systematically overestimate intervention effects.

This isn't a theoretical problem — in some areas of medicine, publication bias completely changes conclusions about treatment effectiveness. Search must include clinical trial databases, dissertations, conference proceedings, and direct contact with authors.

Step-by-Step Anatomy of a Quality Systematic Review: What Should Be There and What Almost Never Is

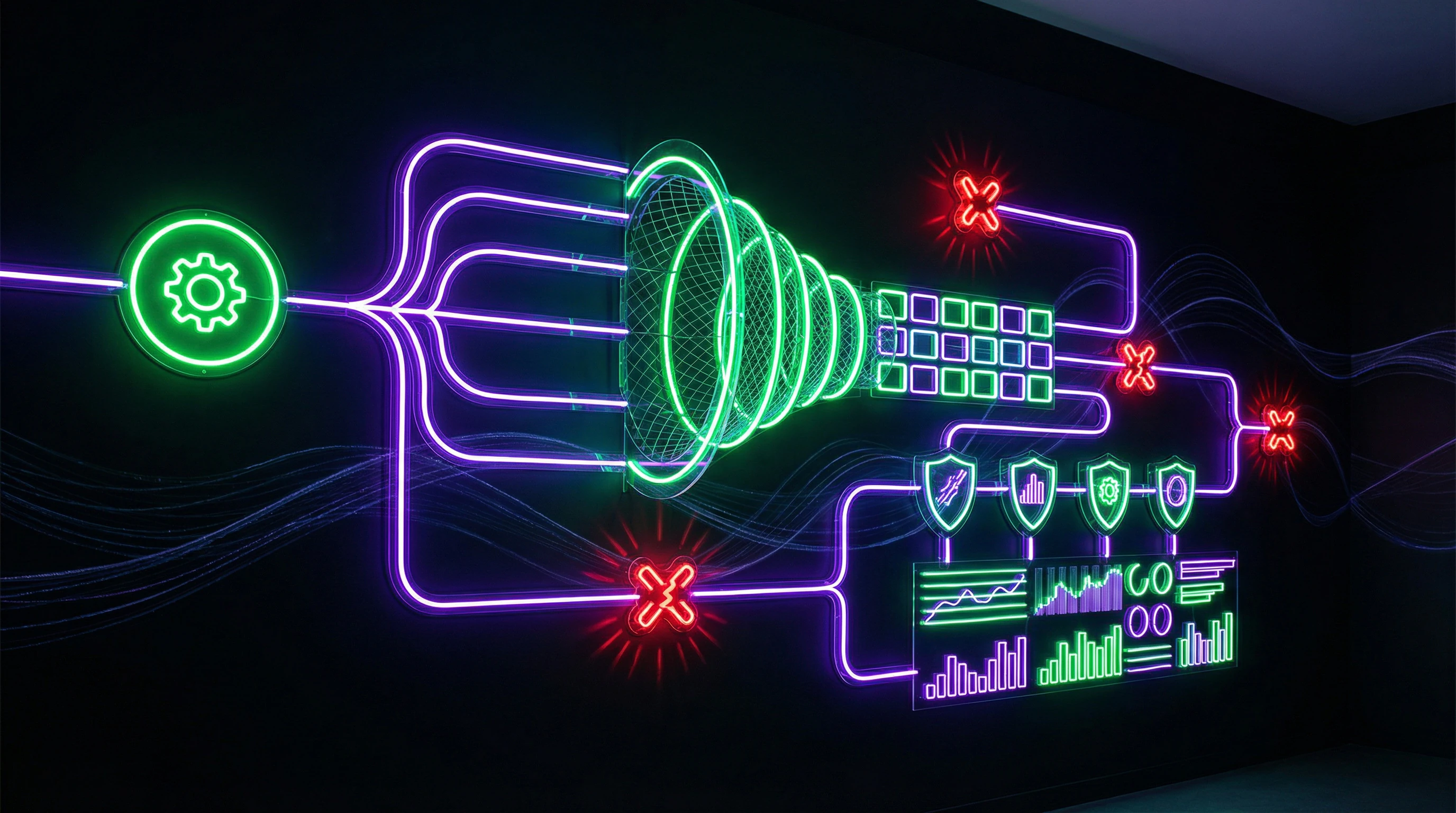

A systematic review is not just a compilation of articles. It's a protocol with seven critical stages, each with clear requirements and failure points (S010).

Most published "systematic reviews" skip or simplify at least three of them. The result: conclusions that look like evidence but aren't. More details in the Media Literacy section.

📌 Stage One: Formulating the Research Question and Pre-registering the Protocol

The research question must be specific and clearly defined (S010). The PICO format (Population, Intervention, Comparison, Outcome) structures the clinical question so that inclusion criteria are objective, not tailored to the desired result.

The protocol should be registered in a registry such as PROSPERO before the literature search begins. This makes it impossible to quietly change inclusion criteria after researchers have seen the results, which is the main route to p-hacking at the systematic review level.
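One way to picture what pre-registration buys is to treat the protocol as an immutable record. Its PICO elements and eligibility rules are fixed before any study is screened. The sketch below is a hypothetical illustration and does not reproduce the PROSPERO record format. All class and field names are assumptions. Real eligibility decisions also involve reviewer judgment that simple string matching cannot capture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after "registration"
class Protocol:
    population: str
    intervention: str
    comparison: str
    outcome: str
    designs: frozenset       # study designs allowed by the inclusion criteria
    min_followup_weeks: int


@dataclass(frozen=True)
class Candidate:
    design: str
    population: str
    intervention: str
    comparison: str
    outcomes: frozenset      # outcomes the candidate study reports
    followup_weeks: int


def is_eligible(study: Candidate, protocol: Protocol) -> bool:
    """Apply the pre-specified PICO and design criteria; nothing is decided case by case."""
    return (
        study.design in protocol.designs
        and study.population == protocol.population
        and study.intervention == protocol.intervention
        and study.comparison == protocol.comparison
        and protocol.outcome in study.outcomes
        and study.followup_weeks >= protocol.min_followup_weeks
    )
```

Any attempt to edit a field of a registered `Protocol` instance raises an error. This mirrors the idea that changing criteria later should be a visible, documented amendment rather than a silent edit.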

🔬 Stage Two: Systematic Search Strategy Across Multiple Databases

The search should cover at least three major databases (for example PubMed, Embase, and the Cochrane Library), plus grey literature, clinical trial registries, and manual searches of the reference lists of key articles. The strategy must be reproducible: another researcher running the same search terms and filters should retrieve the same records.

If the search is limited to one database or publication language, that's already selection bias.
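A reproducible strategy is usually written as concept blocks. Synonyms are joined with OR inside each PICO concept, and the blocks are joined with AND. The sketch below assumes generic Boolean syntax. Each real database has its own field tags, controlled vocabulary (MeSH, Emtree), and truncation rules, so treat the output as a starting point rather than a query to paste in. Records are assumed, hypothetically, to be dicts with `doi` and `title` keys. Deduplication across databases keys on the DOI and falls back to a normalized title.

```python
def build_query(concepts):
    """Join synonyms with OR inside each concept block and the blocks with AND."""
    blocks = []
    for synonyms in concepts:
        # Quote multi-word phrases so they are searched as phrases.
        terms = [f'"{t}"' if " " in t else t for t in synonyms]
        blocks.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(blocks)


def deduplicate(records):
    """Keep the first copy of each record: key on DOI, else on a normalized title."""
    seen, unique = set(), []
    for record in records:
        doi = (record.get("doi") or "").strip().lower()
        key = doi or " ".join(record["title"].lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


if __name__ == "__main__":
    query = build_query([
        ["warfarin"],
        ["CYP2C9", "cytochrome P450 2C9"],
        ["dose", "dosing", "dose requirement"],
    ])
    print(query)
```

This naive deduplication misses a record that has a DOI in one database but none in another. Reference managers use fuzzier matching for that reason.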

🧾 Stage Three: Independent Screening by Two Reviewers

Two reviewers independently assess each study against the inclusion criteria. Any record whose eligibility is uncertain at the title and abstract stage is carried forward to full-text screening to avoid premature exclusion (S010).

Conflicts are resolved through discussion, consensus, or a third reviewer. This requirement protects against subjectivity — one reviewer might miss a relevant study or misinterpret the criteria.
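Agreement between the two screeners is often summarized with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The cited sources do not prescribe kappa. It is added here as an illustrative assumption. The sketch also lists the records the reviewers disagree on, which are the ones that go to discussion or to a third reviewer.

```python
from collections import Counter


def cohens_kappa(reviewer_a, reviewer_b):
    """Chance-corrected agreement between two reviewers' decisions on the same records."""
    n = len(reviewer_a)
    observed = sum(a == b for a, b in zip(reviewer_a, reviewer_b)) / n
    count_a, count_b = Counter(reviewer_a), Counter(reviewer_b)
    categories = set(count_a) | set(count_b)
    expected = sum(count_a[c] * count_b[c] for c in categories) / (n * n)
    if expected == 1.0:  # both reviewers used one identical category throughout
        return 1.0
    return (observed - expected) / (1.0 - expected)


def conflicts(record_ids, reviewer_a, reviewer_b):
    """Records the reviewers disagree on; these go to discussion or a third reviewer."""
    return [rid for rid, a, b in zip(record_ids, reviewer_a, reviewer_b) if a != b]
```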

🧪 Stage Four: Structured Data Extraction Using Predefined Forms

The extraction form is developed and tested before work begins. It includes all variables for analysis plus information for assessing risk of bias. Extraction is conducted independently by two reviewers with subsequent comparison and resolution of discrepancies.

- Why this is critical: if the form is developed after reviewing several articles, the researcher already knows which data "confirm" their hypothesis. A predefined form blocks this trap.

- Where it breaks down in practice: one reviewer extracts the data and the second checks only selectively, or the form contains open fields that allow the same data to be interpreted in different ways.
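A predefined form with closed, typed fields also makes it mechanical to compare two independent extractions. In the hypothetical sketch below, the form's fields are illustrative assumptions. The function returns the fields on which the two extractors differ, and those then have to be resolved.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ExtractionForm:
    """Hypothetical predefined form: typed, closed fields agreed before extraction starts."""
    study_id: str
    n_randomized: int
    events_treatment: int
    events_control: int
    followup_weeks: int
    rob_randomization: str   # e.g. "low", "some concerns", "high"


def discrepancies(first, second):
    """Names of fields where two independent extractors recorded different values."""
    return [f.name for f in fields(ExtractionForm)
            if getattr(first, f.name) != getattr(second, f.name)]
```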

🔁 Stage Five: Risk of Bias Assessment Using Validated Tools

For RCTs, RoB-2 is used; for observational studies — Newcastle-Ottawa Scale or ROBINS-I (S010). Assessment is conducted independently by two reviewers and documented.

Results are presented as tables and graphs showing the distribution of risks across domains. This allows readers to see which studies have high risk of bias and why.
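The distribution across domains can be tabulated directly from the reviewers' agreed judgments. The sketch below uses its own abbreviated labels for the five RoB 2 domains. It applies a simplified overall rule: high if any domain is high, low only if all domains are low, otherwise some concerns. The RoB 2 guidance also allows an overall high judgment when several domains raise some concerns. This sketch deliberately leaves that call to the reviewers.

```python
from collections import Counter

# The five RoB 2 domains, abbreviated; the short keys are this sketch's own labels.
ROB2_DOMAINS = ("randomization", "deviations", "missing_data", "measurement", "reported_result")


def overall_rob2(judgments):
    """Simplified overall judgment: 'high' if any domain is high, 'low' only if all are low."""
    values = [judgments[d] for d in ROB2_DOMAINS]
    if "high" in values:
        return "high"
    if all(v == "low" for v in values):
        return "low"
    return "some concerns"


def domain_distribution(assessments):
    """Count judgments per domain across studies: the data behind a risk-of-bias summary plot."""
    return {d: Counter(study[d] for study in assessments.values()) for d in ROB2_DOMAINS}
```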

📊 Stage Six: Statistical Synthesis with Heterogeneity Assessment

If data permit meta-analysis, a model (fixed or random effects) must be chosen based on expected heterogeneity. Then calculate the pooled effect estimate with confidence intervals.

- Assess heterogeneity (I², τ², Q-statistic)

- Conduct sensitivity analysis — exclude studies with high risk of bias and recalculate results

- Assess publication bias (funnel plots, Egger/Begg tests)

- Conduct subgroup analysis if specified in the protocol
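Two of the steps above, the publication-bias check and the sensitivity analysis, are simple enough to sketch. Egger's test regresses each study's standardized effect (effect divided by its standard error) on its precision (one over the standard error). An intercept far from zero suggests funnel-plot asymmetry. The code below is a minimal sketch under stated assumptions. It returns the t statistic without a p-value, which you would compare against a t distribution with k - 2 degrees of freedom. The tuple input format for the sensitivity analysis is hypothetical. Funnel-plot asymmetry tests are generally recommended only when at least ten studies are available.

```python
import math


def egger_test(effects, std_errors):
    """Egger's regression of standardized effect (y/se) on precision (1/se).

    Returns (intercept, its standard error, t statistic, degrees of freedom).
    """
    k = len(effects)
    x = [1.0 / s for s in std_errors]                  # precision
    z = [y / s for y, s in zip(effects, std_errors)]   # standardized effect
    x_bar, z_bar = sum(x) / k, sum(z) / k
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z)) / sxx
    intercept = z_bar - slope * x_bar
    rss = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    se = math.sqrt(rss / (k - 2) * (1.0 / k + x_bar ** 2 / sxx))  # OLS SE of the intercept
    t = intercept / se if se > 0 else float("nan")
    return intercept, se, t, k - 2


def fixed_effect(effects, variances):
    """Inverse-variance weighted mean of the study effects."""
    weights = [1.0 / v for v in variances]
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)


def sensitivity_without_high_risk(studies):
    """studies: (effect, variance, overall risk of bias) tuples.

    Returns the pooled estimate with all studies and after dropping 'high' risk ones.
    """
    everything = fixed_effect([s[0] for s in studies], [s[1] for s in studies])
    kept = [s for s in studies if s[2] != "high"]
    restricted = fixed_effect([s[0] for s in kept], [s[1] for s in kept])
    return everything, restricted
```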

🧬 Stage Seven: Assessing Certainty of Evidence (GRADE)

The GRADE system assesses quality of evidence at four levels: high, moderate, low, very low (S010). Assessment considers risk of bias, inconsistency of results, indirectness of evidence, imprecision of estimates, and publication bias.

High-quality evidence does not mean the effect is large or clinically significant. It means further research is unlikely to change the estimate of effect. Low-quality evidence means the next study could substantially change the conclusions.
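The downgrading logic can be sketched as a small function. It assumes the usual GRADE starting points: evidence from randomized trials starts at high certainty, and evidence from observational studies starts at low. Each of the five domains can lower the rating by one level (serious concern) or two (very serious concern). This is a simplification for illustration only. GRADE ratings are judgments, not sums, and this sketch does not model the criteria for rating observational evidence up.

```python
GRADE_LEVELS = ("very low", "low", "moderate", "high")
GRADE_DOMAINS = ("risk_of_bias", "inconsistency", "indirectness", "imprecision", "publication_bias")


def grade_certainty(randomized, downgrades):
    """Start high for RCT evidence and low for observational evidence, then rate down.

    downgrades maps a domain to 0, 1 (serious) or 2 (very serious) levels.
    Rating up observational evidence (large effect, dose-response) is not modelled.
    """
    level = 3 if randomized else 1
    for domain, steps in downgrades.items():
        if domain not in GRADE_DOMAINS or steps not in (0, 1, 2):
            raise ValueError(f"unexpected downgrade {domain}={steps}")
        level -= steps
    return GRADE_LEVELS[max(level, 0)]  # certainty cannot fall below "very low"
```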

The link between methodological rigor and reliability of conclusions is direct. Each skipped stage is an open door for systematic error. For more on the cognitive mechanisms that cause researchers to ignore these requirements, see the critical thinking toolkit.

Cognitive Anatomy of Pseudo-Systematic Reviews: Which Mental Traps Make Researchers Ignore Methodology

The psychological mechanisms that lead to the creation of low-quality systematic reviews operate automatically and invisibly. Identifying them is the first step toward prevention.

🧩 Confirmation Bias: Why Researchers Only See What They Want to See

Confirmation bias causes researchers to disproportionately focus on studies that confirm their hypothesis and ignore contradictory data. Without systematic search and predefined inclusion criteria, this bias operates automatically.

A researcher seeking evidence of a method's effectiveness finds three confirming studies and stops. A systematic search would have identified twenty more—half of which show no effect.

🕳️ Illusion of Validity: When the Number of Studies Creates a False Sense of Reliability

Combining a large number of studies creates a psychological sense of conclusion reliability, even if all these studies are of low quality. A meta-analysis of 50 poorly conducted studies remains systematized garbage.

- The quantity trap: the number of studies in a review does not correlate with the quality of its conclusions. What matters is the methodological rigor of each included study and the transparency of the selection process.

- Where this manifests: reviews that boast an "analysis of 200+ studies" often hide the absence of exclusion criteria and biased selection.
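A short calculation shows why quantity cannot substitute for quality. For illustration only, the sketch assumes k identical studies that each carry the same systematic bias. Pooling them shrinks the confidence interval by a factor of √k but leaves the bias untouched.

```python
import math


def pooled_interval(k, estimate, se):
    """95% CI from fixed-effect pooling of k studies reporting the same estimate and SE."""
    pooled_se = se / math.sqrt(k)   # precision grows with every added study...
    return estimate - 1.96 * pooled_se, estimate + 1.96 * pooled_se


TRUE_EFFECT = 0.0   # hypothetical: the intervention does nothing
SHARED_BIAS = 0.2   # hypothetical: the same design flaw inflates every study
for k in (5, 50, 200):
    low, high = pooled_interval(k, TRUE_EFFECT + SHARED_BIAS, se=0.3)
    # ...but the interval stays centred on the biased value, so it soon excludes the truth
    print(f"k={k:3d}  CI=({low:.3f}, {high:.3f})  covers true effect: {low <= TRUE_EFFECT <= high}")
```

With these hypothetical numbers (true effect 0, shared bias 0.2, per-study standard error 0.3), the pooled interval stops covering the true effect once k reaches 9. Every further study only makes the wrong answer look more certain.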

🧠 Anchoring Effect: How the First Studies Found Determine the Direction of the Entire Review

Researchers who begin with non-systematic search become "anchored" to the first studies found and then seek confirming data. Systematic search with a predetermined strategy neutralizes this effect.

The connection to thinking tools is direct here: anchoring is a cognitive bias that must be recognized and controlled through the protocol rather than left to the researcher's intuition.

⚙️ Planning Fallacy: Why Researchers Underestimate Time and Resources

A quality systematic review requires hundreds of hours of work by a team of at least three people. Researchers systematically underestimate these requirements and choose "simplified" approaches that destroy methodological rigor.

- Literature search in 5+ databases (not Google Scholar)

- Independent assessment of each study by two reviewers

- Documentation of exclusion reasons for each study

- Risk of bias assessment using standardized tools

- Heterogeneity analysis before combining data

The result of skipping these steps is publications that are called systematic reviews but are actually selective literature reviews. The distinction between them is not a matter of terminology, but a matter of conclusion reliability.

Evidence Base Analysis: What the Data Says About the Quality of Modern Systematic Reviews

Analysis of published systematic reviews reveals systemic problems with methodological quality across most areas of medicine and science.

📊 Empirical Data on the Frequency of Methodological Violations

Studies evaluating the quality of published systematic reviews consistently find that a significant proportion of publications fail to meet basic methodological requirements.

Absence of protocol pre-registration, incomplete literature searches, lack of independent assessment by two reviewers, absence of bias risk assessment—these violations occur in 40–70% of published "systematic reviews" depending on the field.

In many cases, methodological defects are not the result of ignorance but of saving time and resources: the researcher knows what needs to be done but chooses the shortcut.

🔬 Specific Examples from Pharmacogenetics: Warfarin Dosing Variability

A systematic review and meta-analysis of the influence of CYP2C9 genotype on warfarin dose requirements (S003) demonstrates correct methodology: systematic search across multiple databases, use of validated software for meta-analysis, inclusion of a randomized trial of genotype-guided warfarin dosing, analysis of heterogeneity between studies.

This example shows that quality reviews exist. The question is not one of impossibility, but of prevalence.

🧾 Data from Gastroenterology: Loss of Response to Anti-TNFα Therapy

A systematic review with meta-analysis of loss of response and need for dose intensification of anti-TNFα in Crohn's disease (S009) follows strict methodological standards: use of the PRISMA statement (Preferred Reporting Items for Systematic Reviews and Meta-Analyses), application of the Cochrane Collaboration tool for bias risk assessment, quantitative assessment of heterogeneity.

The study analyzes data from large RCTs, including ACCENT I (infliximab maintenance therapy) and CHARM (adalimumab for maintenance of clinical response and remission).

Methodological markers worth checking in any review of this kind:

- Protocol pre-registration in PROSPERO

- Search in at least 3 databases (MEDLINE, Embase, Cochrane)

- Independent quality assessment by two reviewers

- Formal bias risk assessment using Cochrane methods

- Heterogeneity analysis (I² statistic)
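PRISMA also asks for a flow diagram that accounts for every record from identification to inclusion, and its boxes must add up. The sketch below checks that arithmetic under a simplifying assumption of one report per study, whereas PRISMA 2020 distinguishes records, reports, and studies. The class and field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PrismaFlow:
    """Simplified PRISMA-style flow counts; assumes one report per study."""
    identified: int                 # records from databases and registers
    duplicates_removed: int
    excluded_on_title_abstract: int
    not_retrieved: int              # full texts sought but not obtained
    excluded_full_text: dict = field(default_factory=dict)  # reason -> count
    included: int = 0

    def problems(self):
        """Return inconsistencies between the reported boxes of the flow diagram."""
        screened = self.identified - self.duplicates_removed
        sought = screened - self.excluded_on_title_abstract
        assessed = sought - self.not_retrieved
        implied = assessed - sum(self.excluded_full_text.values())
        issues = []
        if min(screened, sought, assessed, implied) < 0:
            issues.append("a stage has a negative count")
        if implied != self.included:
            issues.append(f"included={self.included}, but the counts imply {implied}")
        return issues
```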

🧬 Mechanistic Data: Link Between Drug Levels and Clinical Response

A retrospective analysis of the ACCENT I study reports that post-induction serum trough levels of infliximab and a decrease in C-reactive protein are associated with durable sustained response to infliximab (S009).

A related finding is that C-reactive protein can serve as an indicator of serum infliximab levels when predicting loss of response in patients with Crohn's disease. These data show that quality systematic reviews don't simply aggregate data but also analyze mechanistic connections between biomarkers and clinical outcomes.

The difference between a review and a meta-analysis manifests precisely here: a review can identify a pattern, a meta-analysis can quantify it, but only a quality review will understand why it exists.

🔁 Analysis of Speed and Magnitude of Induction Response

An analysis of the PRECISE 2 and 3 trials reports that response and remission at 18 months of certolizumab pegol therapy in patients with active Crohn's disease are independent of the speed and magnitude of the induction response (S009).

Bringing this type of analysis into a systematic review requires detailed extraction of data on the temporal parameters of treatment response. That means not just collecting numbers but understanding the clinical logic of each study.

Causation vs. Correlation: Why Most Meta-Analyses Fail to Distinguish Between Them

One of the fundamental problems with modern systematic reviews is the inability to distinguish between causal relationships and simple correlations, especially when pooling observational studies.

🔬 The Confounder Problem in Observational Studies

Even a high-quality meta-analysis of observational studies cannot eliminate systematic errors inherent in the included studies. If all cohort studies in a meta-analysis failed to control for an important confounder, the pooled estimate will be systematically biased.

Quality assessment tools (e.g., Newcastle-Ottawa) measure methodological rigor but cannot compensate for the absence of control over critical variables in the source data.
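A small worked example makes the point concrete. The counts below are hypothetical. Within each level of a confounder, exposed and unexposed people have the same risk, yet a study that never measured the confounder would report a crude risk ratio of 2.6. The Mantel-Haenszel estimator is a standard stratified method, used here only for illustration. It recovers the null effect only because the confounder was recorded. Pooling many studies that report the crude figure would converge on 2.6.

```python
def risk_ratio(a, n1, c, n0):
    """Risk among exposed (a / n1) divided by risk among unexposed (c / n0)."""
    return (a / n1) / (c / n0)


def mantel_haenszel_rr(strata):
    """Mantel-Haenszel risk ratio pooled across strata of a confounder.

    Each stratum is (a, n1, c, n0): events and totals in exposed and unexposed.
    """
    numerator = sum(a * n0 / (n1 + n0) for a, n1, c, n0 in strata)
    denominator = sum(c * n1 / (n1 + n0) for a, n1, c, n0 in strata)
    return numerator / denominator


# Hypothetical cohort in which the confounder drives both exposure and outcome.
strata = [
    (50, 100, 10, 20),   # confounder present: risk 0.5 in both groups
    (2, 20, 10, 100),    # confounder absent: risk 0.1 in both groups
]
crude = risk_ratio(50 + 2, 100 + 20, 10 + 10, 20 + 100)   # what an unadjusted study reports
adjusted = mantel_haenszel_rr(strata)
print(f"crude RR = {crude:.2f}, adjusted RR = {adjusted:.2f}")
# prints: crude RR = 2.60, adjusted RR = 1.00
```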

🧬 Biological Plausibility as a Necessary but Insufficient Condition

The presence of a biologically plausible mechanism does not prove causation. Systematic reviews must explicitly discuss which causality criteria are met for observed associations.

Bradford Hill criteria for causation:

- Strength of association: the magnitude of the effect

- Consistency: reproducibility across different populations and settings

- Specificity: the cause produces a specific effect rather than multiple outcomes

- Temporality: the cause precedes the effect

- Biological gradient: a dose-response relationship

- Plausibility: a credible biological mechanism

- Coherence: consistency with known facts

- Experimental evidence: controlled studies support the relationship

- Analogy: similar causes are known to produce similar effects

📊 Heterogeneity as an Indicator of Hidden Moderators

High statistical heterogeneity (I² > 75%) often signals unaccounted-for effect moderators. Rather than simply noting high heterogeneity, a quality systematic review should conduct subgroup analysis and meta-regression to identify sources of variability.

- Calculate I² and Q-statistic to assess heterogeneity

- Conduct subgroup analysis by key characteristics (age, sex, intervention duration)

- Perform meta-regression to identify continuous moderators

- Discuss which unmeasured variables might explain remaining variability

- Indicate whether identified heterogeneity reduces confidence in conclusions
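For the meta-regression step, a minimal sketch with one continuous moderator is an inverse-variance weighted least-squares fit of study effects on that moderator. This assumes a fixed-effect model. A mixed-effects meta-regression, which most reviews would use, first adds a residual between-study variance τ² to each study's variance before weighting. The moderator (for example, intervention duration in weeks) and the data are hypothetical.

```python
import math


def meta_regression(effects, variances, moderator):
    """Fixed-effect meta-regression of effect size on one study-level moderator.

    Returns (intercept, slope, standard error of the slope).
    """
    w = [1.0 / v for v in variances]                 # inverse-variance weights
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, moderator)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, moderator))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, moderator, effects))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    se_slope = math.sqrt(1.0 / sxx)  # valid when study variances are treated as known
    return intercept, slope, se_slope
```

Dividing the slope by its standard error gives a z statistic for whether the moderator explains part of the heterogeneity.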

🧾 Temporal Sequence in Longitudinal Data

Establishing causation requires demonstrating that the presumed cause precedes the effect in time. Meta-analyses of cross-sectional studies cannot establish temporal sequence, which limits causal inferences.

Systematic reviews must explicitly state these limitations rather than making causal claims based on correlational data. Separating studies by design (randomized controlled trials, cohort, cross-sectional) and analyzing each group separately is the minimum standard for honest interpretation.

Conflicts and Uncertainties: Where Sources Diverge and Why This Is Critical for Interpretation

A high-quality systematic review does not hide discrepancies between studies but makes them a central element of analysis.

🧩 Disagreements in Risk of Bias Assessment Between Reviewers

Any conflicts during the quality assessment phase are resolved through discussion and consensus between two reviewers or a third arbiter (S010). However, a systematic review must report the frequency and types of disagreements—high frequency indicates unclear assessment criteria or subjectivity of the instrument.

Staying silent about reviewer disagreements conceals a methodological weakness. Transparency about conflicts increases confidence in the conclusions.

When reviewers disagree in their assessment of the same study, it's a signal: either the criteria are unclear, or the instrument requires revision. Documenting such cases is part of honest methodology.
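Reporting how often and in what way reviewers disagreed requires little more than a tally of their independent judgments, taken before consensus. The sketch below assumes a hypothetical input layout of `{study_id: {domain: judgment}}` for each reviewer.

```python
from collections import Counter


def disagreement_report(reviewer_a, reviewer_b):
    """Count pre-consensus disagreements per domain and per pair of conflicting judgments."""
    by_domain, by_type = Counter(), Counter()
    for study, judgments in reviewer_a.items():
        for domain, a in judgments.items():
            b = reviewer_b[study][domain]
            if a != b:
                by_domain[domain] += 1
                by_type[tuple(sorted((a, b)))] += 1  # order-independent pair, e.g. ("high", "low")
    return by_domain, by_type
```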

🔬 Contradictory Results Between RCTs and Observational Studies

Randomized controlled trials and observational studies sometimes yield conflicting conclusions. This can reflect systematic errors in the observational data (confounding, selection bias) or real differences in populations and interventions.

A high-quality review conducts separate analysis by study design and discusses reasons for discrepancies, rather than averaging them into a single figure. This requires critical examination of mechanisms, not mechanical pooling of data.
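Separate analysis by design can be sketched in two steps: pool each design on its own, then test whether the subgroup estimates differ. For brevity, the code below assumes fixed-effect pooling within subgroups. It returns the between-subgroup Q statistic, which you would compare against a chi-squared distribution with (number of subgroups - 1) degrees of freedom. The tuple input format is a hypothetical choice.

```python
def pool(effects, variances):
    """Fixed-effect inverse-variance pool: returns (estimate, variance)."""
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    return sum(w * y for w, y in zip(weights, effects)) / total, 1.0 / total


def by_design(studies):
    """studies: (design, effect, variance) tuples.

    Pools each design separately and returns the per-design results plus
    Q_between and its degrees of freedom.
    """
    groups = {}
    for design, effect, variance in studies:
        groups.setdefault(design, []).append((effect, variance))
    results = {d: pool([e for e, _ in rows], [v for _, v in rows]) for d, rows in groups.items()}
    overall, _ = pool([est for est, _ in results.values()], [var for _, var in results.values()])
    q_between = sum((est - overall) ** 2 / var for est, var in results.values())
    return results, q_between, len(results) - 1
```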

📊 Inconsistency Between Direct and Indirect Comparisons

In network meta-analyses, direct comparison (A vs B in one study) may differ from indirect comparison (A vs C and C vs B, from which we derive A vs B). Large discrepancies indicate violation of the transitivity assumption or hidden differences in populations.

- Check whether patient characteristics match in direct and indirect comparisons

- Assess whether doses, duration, or types of interventions differ

- Conduct sensitivity analysis, excluding studies with the greatest discrepancy

- Discuss whether the discrepancy can be explained by clinically significant differences

If discrepancies remain unexplained, this is a limitation, not a reason to ignore the problem.
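The direct-versus-indirect check described in this subsection is often done with the Bucher adjusted indirect comparison, sketched below under stated assumptions. Effects are on an additive scale (for example, log odds ratios). A and B are each compared with the common comparator C, so the indirect A-vs-B estimate is d_AC - d_BC. The direct and indirect estimates come from independent sets of trials. The function names are illustrative.

```python
import math


def bucher_indirect(d_ac, se_ac, d_bc, se_bc):
    """Adjusted indirect comparison of A vs B through common comparator C.

    d_ac is the effect of A relative to C and d_bc the effect of B relative to C.
    """
    return d_ac - d_bc, math.sqrt(se_ac ** 2 + se_bc ** 2)


def inconsistency(d_direct, se_direct, d_indirect, se_indirect):
    """Difference between direct and indirect A-vs-B estimates, with a two-sided z-test."""
    diff = d_direct - d_indirect
    z = diff / math.sqrt(se_direct ** 2 + se_indirect ** 2)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value under the normal distribution
    return diff, z, p
```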