📈 Statistics and Probability Theory

Statistics and Probability: Mathematical Foundations for Understanding the World

Fundamental mathematical disciplines for data analysis, decision-making, and understanding random phenomena in science, business, and everyday life

Overview

Statistics and probability theory form the mathematical foundation for data analysis, decision-making, and understanding random phenomena. From scientific experiments to financial planning, these disciplines shape objective knowledge of reality and protect against data manipulation. Key concepts such as random sampling, representativeness, and the empirical distribution function constitute the methodological basis for correct analysis.

Laplace Protocol: Statistics and probability theory are not merely tools for working with numbers, but fundamental methods of knowledge acquisition that allow us to extract reliable insights from uncertainty and make informed decisions under conditions of randomness.

How Random Sampling Transforms Data Chaos into Scientific Knowledge

Statistical analysis begins with a fundamental question: how do you select a few hundred objects from millions so that conclusions remain valid for the entire population? Random sampling and representativeness form the methodological foundation of modern research — from marketing surveys to clinical trials.

These concepts define the boundary between scientific analysis and simple guessing, transforming partial observations into reliable statements about the population.

Random Sampling and Representativeness as the Foundation of Validity

Random sampling is a selection method where each object in the population has a known, non-zero probability of being included in the study. Sample representativeness means its ability to reflect key characteristics of the entire population: distribution of features, group proportions, parameter variability.

| Sampling Type | Mechanism | When to Use |
|---|---|---|
| Simple Random | Each element has equal probability of selection | Homogeneous population, complete registry available |
| Stratified | Population divided into strata, proportional selection from each | Key subgroups known (age, region, income) |
| Cluster | Select entire groups (clusters), then elements within them | Population geographically dispersed, high access costs |

Critical misconception: large sample size automatically guarantees quality. A non-representative sample of one million people can produce less accurate results than a properly constructed sample of one thousand.

Systematic errors in sample formation cannot be compensated by increasing sample size: if the selection mechanism is biased, adding more elements does not remove the bias and only makes the distorted estimate look more precise.

Landline telephone surveys automatically exclude people without landlines, creating demographic bias regardless of respondent count. Ensuring randomness requires strict protocols: random number tables, pseudorandom number generators, and stratification by key variables.
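
As a rough illustration of the protocols just mentioned, the sketch below draws a simple random sample and a proportionally stratified sample using Python's standard `random` module. The registry, the region labels, and the function names are hypothetical and exist only for this example; real studies would also record the seed and the procedure as part of their documentation.

```python
import random
from collections import defaultdict

def simple_random_sample(population, k, seed=0):
    """Draw k items without replacement; each item is equally likely to be chosen."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced
    return rng.sample(population, k)

def stratified_sample(population, stratum_of, k, seed=0):
    """Proportional allocation: each stratum contributes about k * (its share) items.

    Rounding the quotas can make the total differ slightly from k.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for item in population:
        strata[stratum_of(item)].append(item)
    sample = []
    for members in strata.values():
        quota = round(k * len(members) / len(population))
        sample.extend(rng.sample(members, min(quota, len(members))))
    return sample

# Hypothetical registry: 700 customers in "north", 300 in "south".
registry = [("north", i) for i in range(700)] + [("south", i) for i in range(300)]
srs = simple_random_sample(registry, 100)
strat = stratified_sample(registry, lambda rec: rec[0], 100)
```

The stratified draw reproduces the 70/30 regional split exactly, while the simple random draw only matches it on average.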

- Documenting selection procedures: a mandatory methodological element that allows readers to evaluate potential sources of systematic error and to replicate the study.

- Sample size: must balance statistical power against available resources, but the formation method remains the priority factor.

Empirical Distribution Function as Bridge Between Data and Theory

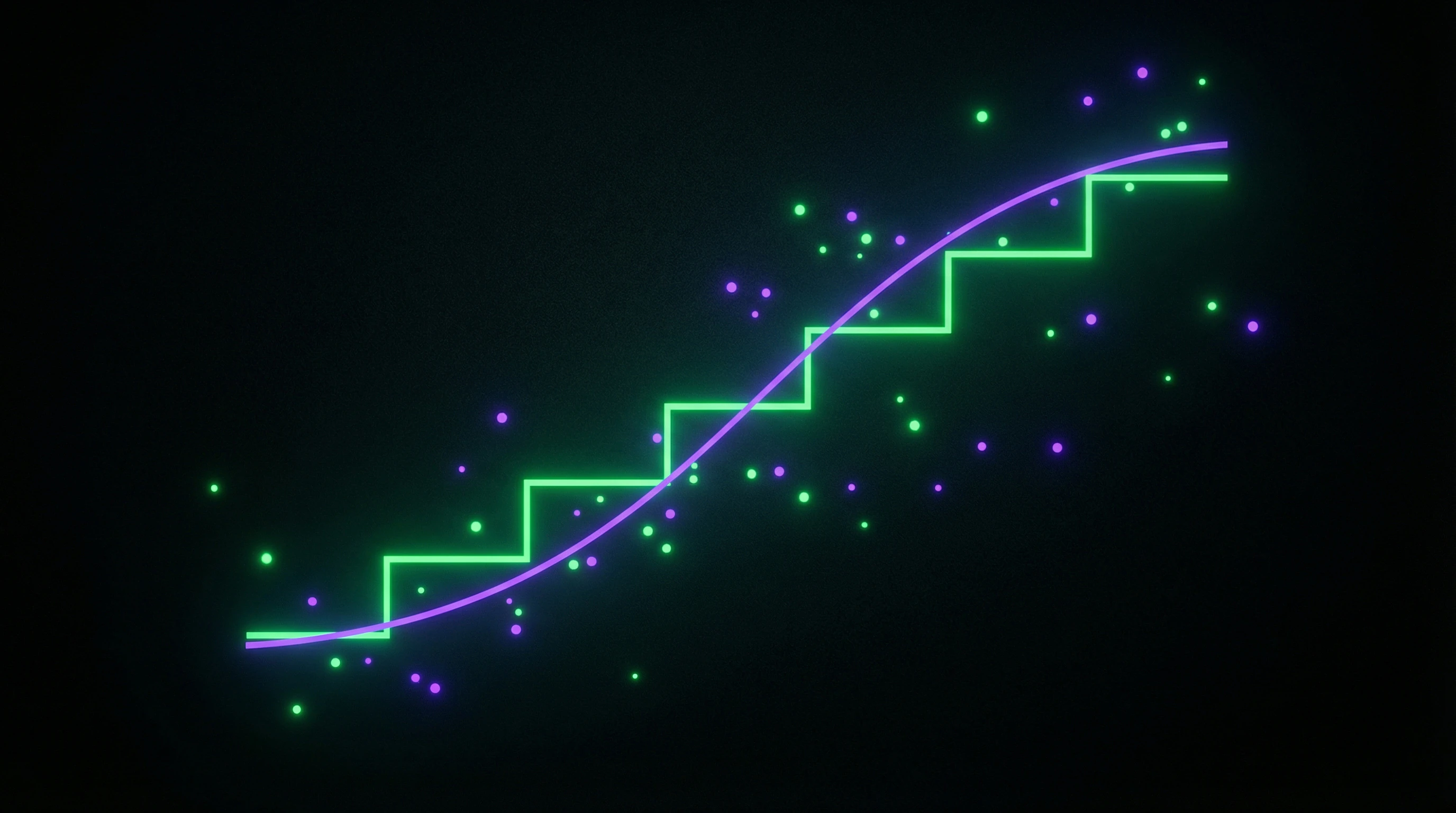

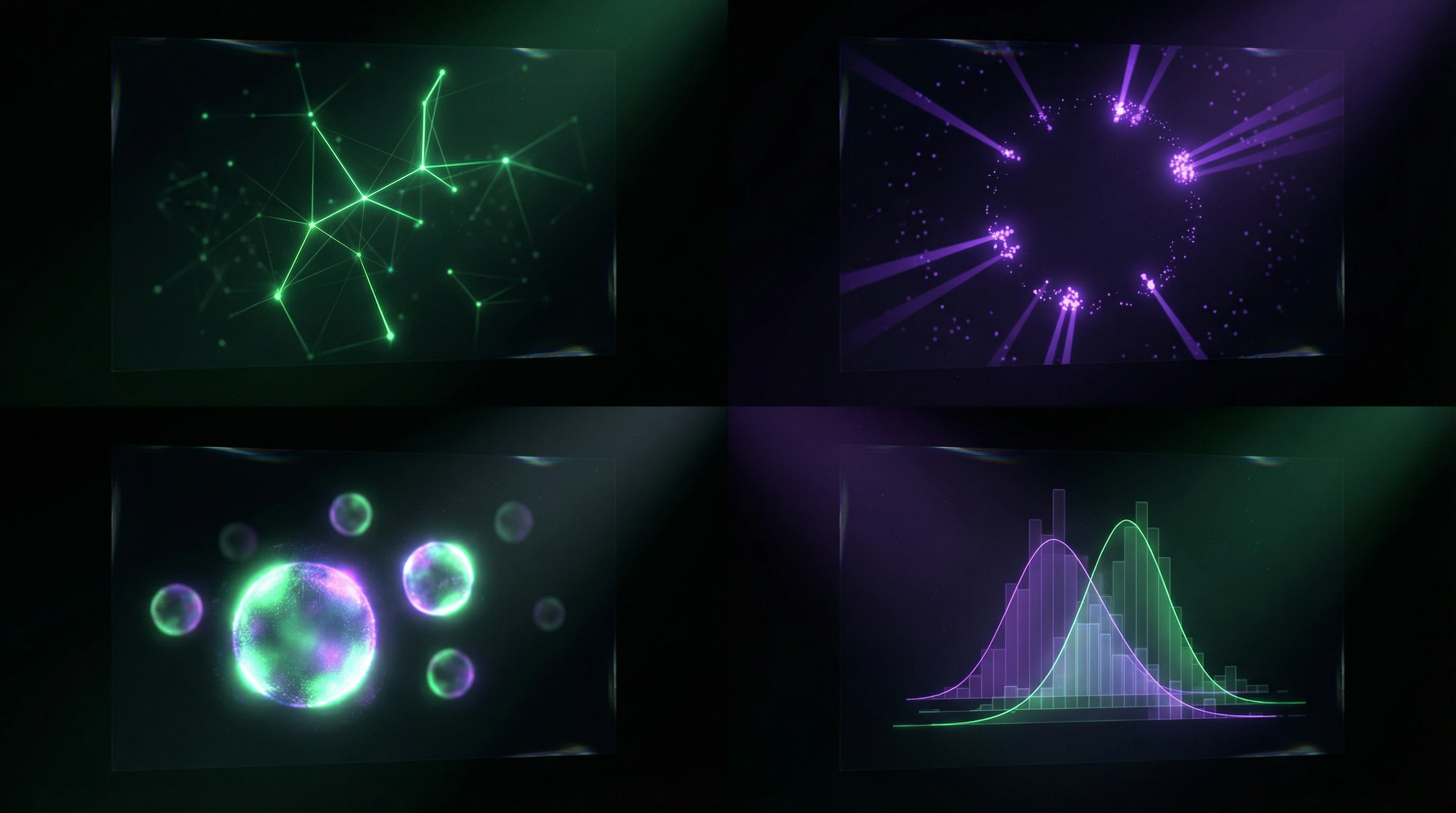

The empirical distribution function (EDF) is a statistical estimate of the true probability distribution function, constructed directly from observed data. For a sample of n elements, the EDF at point x equals the proportion of observations not exceeding x — a step function with jumps occurring at observed values.

The EDF serves as a visualization tool for data distribution without prior assumptions about its form, revealing asymmetry, multimodality, and outliers before parametric methods are applied. Comparing the EDF with theoretical distributions (normal, exponential, binomial) forms the basis for selecting an adequate statistical model.

- Order data in ascending sequence

- Calculate cumulative frequencies for each unique value

- Construct step function reflecting proportion of observations ≤ x

- Add confidence bands to assess uncertainty

As sample size increases, the EDF converges to the true distribution function — this statement is formalized in the Glivenko-Cantelli theorem. Graphical representation of the EDF is often accompanied by confidence bands showing the uncertainty range of the estimate for a given sample size.
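
The construction steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the observations are made up, and the confidence band uses the Dvoretzky-Kiefer-Wolfowitz inequality as one common choice, since the text does not say which band construction it has in mind.

```python
import math
from bisect import bisect_right

def edf(sample):
    """Return F_n, where F_n(x) is the share of observations <= x."""
    data = sorted(sample)  # step 1: order the data
    n = len(data)

    def F_n(x):
        # bisect_right counts how many sorted values are <= x
        return bisect_right(data, x) / n

    return F_n

def dkw_halfwidth(n, alpha=0.05):
    """Half-width of a simultaneous (1 - alpha) band from the DKW inequality."""
    return math.sqrt(math.log(2 / alpha) / (2 * n))

observations = [2.1, 3.4, 3.4, 5.0, 7.2]  # made-up values for illustration
F = edf(observations)
eps = dkw_halfwidth(len(observations))
lower = max(F(3.4) - eps, 0.0)  # band is clipped to [0, 1]
upper = min(F(3.4) + eps, 1.0)
```

With only five observations the band is very wide (half-width about 0.61), which is a concrete reminder of how little a tiny sample says about the shape of a distribution.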

Probability Distributions: From Abstract Mathematics to Real-World Decisions

Probability theory provides a mathematical framework for describing random phenomena through families of distributions — each with its own parameters, application domain, and interpretation. The binomial distribution and the Glivenko-Cantelli theorem represent two poles of probability analysis: the former models specific discrete processes, the latter establishes the fundamental connection between empirical observations and theoretical models.

Binomial Distribution in Marketing Research and Decision-Making

The binomial distribution describes the number of successes in a series of independent Bernoulli trials — experiments with two possible outcomes (success/failure), where the probability of success is constant. Classic examples: number of conversions from n ad impressions, number of positive responses in a survey of n respondents, number of defective items in a batch of n units.

The distribution is defined by two parameters: n (number of trials) and p (probability of success in a single trial). In marketing research, this allows calculating the probability of achieving a target number of conversions, evaluating A/B test effectiveness, and planning sample sizes for surveys with specified precision.

- Verify independence of trials — the result of one does not affect others

- Ensure constant probability of success p across the entire sample

- Confirm dichotomous outcome (exactly two options)

- For large n, verify approximation condition: np > 5 and n(1-p) > 5

Violating these conditions leads to systematic errors. If survey respondents influence each other, the binomial model will overstate precision, because positively correlated responses carry less information than independent ones. When the approximation condition is met, the binomial distribution is well approximated by a normal distribution with mean np and variance np(1-p), which simplifies calculations and enables z-tests for hypothesis testing.
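
A short sketch of the binomial model and the rule-of-thumb check from the list above, using only the standard library. The campaign figures (200 impressions, 3% conversion probability) are hypothetical, and the function names are chosen for this example.

```python
import math

def binom_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p)."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)

def binom_cdf(k, n, p):
    """P(X <= k), summed directly from the PMF."""
    return sum(binom_pmf(i, n, p) for i in range(k + 1))

def normal_approx_ok(n, p, threshold=5):
    """Rule of thumb from the text: np > 5 and n(1 - p) > 5."""
    return n * p > threshold and n * (1 - p) > threshold

# Hypothetical campaign: 200 impressions, 3% conversion probability.
n, p = 200, 0.03
prob_at_least_10 = 1 - binom_cdf(9, n, p)  # chance of 10 or more conversions
```

Here `normal_approx_ok(200, 0.03)` holds because np = 6, whereas with 100 impressions np = 3 and the exact binomial calculation should be preferred.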

Glivenko-Cantelli Theorem as Theoretical Foundation for Statistical Inference

The Glivenko-Cantelli theorem states that the empirical distribution function converges to the true distribution function uniformly across the entire domain as sample size increases to infinity. Mathematically: the supremum (the least upper bound over all x) of the absolute difference between the EDF and the true distribution function approaches zero with probability one as n → ∞. The result assumes independent, identically distributed observations.

A sufficiently large random sample allows reconstruction of the population distribution with any specified precision without any assumptions about its form.

The practical significance of the theorem extends beyond pure mathematics: it guarantees consistency of nonparametric estimation methods, justifies bootstrap application for constructing confidence intervals, and explains why histograms and kernel density estimates work.

The theorem does not specify convergence rate — for this, refinements like the Dvoretzky-Kiefer-Wolfowitz inequality are used, providing probabilistic bounds on EDF deviation from the true distribution for finite samples. Understanding this theorem builds intuition about why statistical methods work and what guarantees they provide when correctly applied.
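
For reference, the two results discussed in this section can be written compactly. The notation is an assumption of this note rather than the article's own: X_1, …, X_n are independent observations with true distribution function F, and F_n is the empirical distribution function.

```latex
% Empirical distribution function
F_n(x) = \frac{1}{n} \sum_{i=1}^{n} \mathbf{1}\{X_i \le x\}

% Glivenko-Cantelli theorem: uniform convergence with probability one
\sup_{x \in \mathbb{R}} \left| F_n(x) - F(x) \right| \xrightarrow{\text{a.s.}} 0
\quad \text{as } n \to \infty

% Dvoretzky-Kiefer-Wolfowitz inequality: a finite-sample bound, for every \varepsilon > 0
P\left( \sup_{x \in \mathbb{R}} \left| F_n(x) - F(x) \right| > \varepsilon \right)
\le 2 e^{-2 n \varepsilon^{2}}
```

Setting the right-hand side of the inequality equal to α and solving for ε gives a band half-width of sqrt(ln(2/α) / (2n)), which shrinks at the rate 1/√n.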

Statistical Research Methodology: From Hypothesis to Conclusions

Statistical research is a structured process: planning, data collection, analysis, interpretation. Each stage is critical for the validity of conclusions.

Methodology defines the logic of scientific inference: how to move from specific observations to general statements while maintaining control over errors and uncertainty.

Research Planning and Design as the Foundation of Validity

Planning begins with a clear definition of the population — the set of all objects about which conclusions are intended to be drawn.

- Selection of sampling method: simple random, stratified, cluster, systematic — depends on the structure of the population and objectives.

- Sample size calculation requires specification of estimation precision, acceptable Type I error rate (typically 0.05), expected effect size, and test power (typically 0.80).

- Operationalization of concepts — translating abstract notions into observable indicators with defined measurement scales (nominal, ordinal, interval, ratio).
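
The sample size calculation mentioned in the list can be sketched with two textbook normal-approximation formulas. This is an illustration under stated assumptions, not a substitute for a full power analysis: the function names are invented for this example, and the second formula assumes two equal groups and a standardized effect size.

```python
import math
from statistics import NormalDist

def n_for_proportion(margin, p=0.5, alpha=0.05):
    """Sample size to estimate a proportion within +/- margin.

    p = 0.5 is the most conservative guess because it maximizes p(1 - p).
    """
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return math.ceil(z**2 * p * (1 - p) / margin**2)

def n_per_group_for_means(effect_size, alpha=0.05, power=0.80):
    """Per-group size for a two-sided comparison of two means.

    effect_size is the difference in means divided by the common
    standard deviation (normal approximation, equal group sizes).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_beta) ** 2 / effect_size**2)

survey_n = n_for_proportion(margin=0.05)            # 385 respondents
trial_n = n_per_group_for_means(effect_size=0.5)    # 63 per group
```

With the typical values named above (Type I error rate 0.05, power 0.80), a survey needs 385 respondents for a five-point margin of error, and a medium standardized effect of 0.5 needs 63 subjects per group.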

The choice of statistical analysis methods should precede data collection, not follow it.

This prevents p-hacking (selecting methods that yield desired results) and ensures proper error control.

A pilot study on a small sample tests instruments, identifies problems in formulations, and assesses the realism of assumptions about distributions and effect sizes.

Documenting an analysis plan before data collection begins is becoming standard in clinical trials and gradually spreading to other fields — this increases research transparency and reproducibility.

Data Collection and Processing: From Raw Observations to Analytical Dataset

Instrument development requires balancing measurement completeness with respondent burden — lengthy questionnaires reduce response rates and increase missing values.

Ensuring random selection in practice encounters unit non-response and participation refusals, creating potential selection bias. Documentation of data collection conditions includes recording time, location, procedures, and protocol deviations — this information is critical for assessing external validity.

Missing data patterns:

- MCAR (Missing Completely at Random): missingness is unrelated to any observed or unobserved values; complete-case analysis is usually unbiased, at the cost of some lost precision.

- MAR (Missing at Random): missingness depends only on observed variables; typically handled with multiple imputation.

- MNAR (Missing Not at Random): missingness depends on the missing values themselves; requires sensitivity analysis and substantive expertise.

Outlier detection uses statistical criteria (three-sigma rule, interquartile range) and substantive expertise — not every extreme value is an error; some represent genuine rare events.
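
The two statistical criteria named above can be compared on a small example. The order values are made up, the 1.5 multiplier is the conventional choice for the interquartile rule, and `statistics.quantiles` is used with its default method.

```python
from statistics import mean, stdev, quantiles

def iqr_outliers(values, k=1.5):
    """Flag values more than k * IQR below Q1 or above Q3."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

def three_sigma_outliers(values):
    """Flag values more than three sample standard deviations from the mean."""
    m, s = mean(values), stdev(values)
    return [v for v in values if abs(v - m) > 3 * s]

# Made-up order values with one extreme entry.
order_values = [20, 22, 19, 21, 23, 20, 22, 21, 250]
flagged_iqr = iqr_outliers(order_values)            # [250]
flagged_sigma = three_sigma_outliers(order_values)  # []
```

The interquartile rule flags 250, while the three-sigma rule flags nothing: the extreme value inflates the standard deviation it is measured against, so in very small samples this rule can miss even obvious extremes. Whether 250 is an error or a genuine large order still has to be decided on substantive grounds.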

Constructing empirical distribution functions for key variables allows visual assessment of distribution shape, skewness, and presence of modes before applying parametric methods that assume normality.

Selection of theoretical distribution is based on graphical analysis (Q-Q plots, P-P plots) and formal goodness-of-fit tests (Kolmogorov-Smirnov, Shapiro-Wilk), but substantive considerations about the nature of the data remain paramount.

Application in Marketing Research — From Theory to Business Practice

Consumer Behavior Analysis Through Probabilistic Models

Binomial distribution becomes the primary tool when analyzing dichotomous consumer decisions — buy or not buy, click or ignore, return or switch to competitors.

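Marketers use this model to forecast conversion: if the probability of purchase after viewing an ad is 0.03, then out of 1000 impressions the expected number of purchases is 30, with a standard deviation of about 5.4, so roughly 19 to 41 purchases (30±11) are expected with 95% probability.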
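
The forecast in the preceding paragraph can be checked with a few lines of arithmetic. The sketch uses the normal approximation to the binomial distribution and the usual 1.96 multiplier for a 95% range; the function name is chosen for this example.

```python
import math

def conversion_forecast(n, p, z=1.96):
    """Expected conversions and an approximate 95% range (normal approximation)."""
    expected = n * p
    sd = math.sqrt(n * p * (1 - p))  # binomial standard deviation
    return expected, expected - z * sd, expected + z * sd

expected, low, high = conversion_forecast(1000, 0.03)
```

For 1000 impressions at p = 0.03 the standard deviation is about 5.4, so the range runs from roughly 19 to 41 purchases around the expected 30.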

Random sampling of customers for A/B testing requires strict adherence to representativeness — stratification by age, geography, and purchase history prevents systematic biases that can lead to erroneous conclusions about target audience preferences.

The empirical distribution function of time between purchases allows identification of segments with varying loyalty and optimization of communication frequency, avoiding both insufficient brand presence and irritating intrusiveness.

Segmentation and Targeting Based on Statistical Clusters

Cluster analysis of transactional data reveals natural groups of consumers with similar behavioral patterns, but critical validation of cluster stability through bootstrap procedures separates real segments from algorithmic artifacts.

- Sample representativeness for building segment profiles determines the accuracy of predicting new customer behavior — insufficient representation of younger audiences will lead to systematic underestimation of their purchasing power.

- Statistical significance of differences between segments is verified through chi-square tests for categorical variables and t-tests for continuous ones.

- Practical significance (effect size) is often more important than formal p-value — a difference in average transaction of $0.50 may be statistically significant on a million-record sample, but economically meaningless.

The Glivenko-Cantelli theorem guarantees that with sufficient sample size, the empirical distribution of segment characteristics converges to the true distribution. This justifies scaling insights from pilot groups to the entire customer base, provided the pilot group is a random sample of that base.

Statistics in Decision-Making — When Numbers Drive Strategy

Hypothesis Testing and Statistical Significance in Business Context

The null hypothesis in business analytics is formulated as the absence of effect: a new website design didn't change conversion, an advertising campaign didn't affect sales, a price change didn't shift demand.

The significance level α=0.05 has become an industry standard, but its blind application is dangerous. Settings where false signals are costly, such as high-frequency trading, may call for a much stricter threshold (for example α=0.001), while exploratory marketing research may accept α=0.10 to detect weak but potentially important effects.

- Test power (1-β) is the probability of detecting a real effect. With an insufficient sample size, a promising innovation can be rejected even when it substantially improves the product.

- P-hacking (data manipulation through multiple testing, selective exclusion of observations, or changing groupings) has become an epidemic undermining trust in corporate analytics.

- Hypothesis testing requires fixing the sample size and significance level in advance. Changing these parameters after looking at the data invalidates the test's error-rate guarantees.
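
As an illustration of testing the "no effect" null hypothesis for a website redesign, the sketch below runs a two-sided two-proportion z-test. The visitor and conversion counts are hypothetical, the pooled-variance z-test is one standard choice among several, and the significance level is fixed before the data are examined.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test of H0: both variants have the same conversion rate."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # rate under the null hypothesis
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

ALPHA = 0.05  # chosen before looking at the data

# Hypothetical results: old design 120/4000, new design 160/4000.
z, p_value = two_proportion_z_test(conv_a=120, n_a=4000, conv_b=160, n_b=4000)
reject_null = p_value < ALPHA
```

With these counts the p-value is about 0.015, so the null hypothesis is rejected at the pre-set level. Whether a one-point lift in conversion justifies the redesign is a separate question of practical significance.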

Confidence Intervals and Risk Assessment in Strategic Planning

A 95% confidence interval for average customer revenue of [$4.50; $5.50] means that the procedure used to build it captures the true mean in 95% of repeated samples. It does not mean that a specific customer will generate revenue within these bounds.

Confidence interval width is inversely proportional to the square root of sample size: narrowing the interval by half requires quadrupling the sample. This explains the diminishing returns from increasing research budgets.
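
The square-root relationship is easy to verify numerically. The sketch assumes a normal-approximation interval for a mean and a hypothetical standard deviation of 8.0.

```python
import math
from statistics import NormalDist

def ci_halfwidth(sd, n, confidence=0.95):
    """Half-width of a normal-approximation confidence interval for a mean."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return z * sd / math.sqrt(n)

sd = 8.0  # hypothetical standard deviation of revenue per customer
w_400 = ci_halfwidth(sd, 400)
w_1600 = ci_halfwidth(sd, 1600)  # four times the sample
ratio = w_400 / w_1600           # the interval is twice as narrow
```

Going from 400 to 1600 customers halves the half-width from about 0.78 to about 0.39; halving it again would take 6400 customers.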

The Bayesian approach integrates expert prior knowledge with empirical data, allowing probability updates as new information arrives — critically important for dynamic markets where historical data quickly becomes obsolete.
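
A minimal sketch of Bayesian updating for a conversion rate, using the Beta-Binomial model as one simple and widely used choice. The prior (equivalent to 3 conversions in 100 earlier impressions) and the new data are hypothetical.

```python
def update_beta(prior_a, prior_b, successes, failures):
    """Posterior Beta parameters after observing binomial data."""
    return prior_a + successes, prior_b + failures

def beta_mean(a, b):
    """Mean of a Beta(a, b) distribution."""
    return a / (a + b)

# Hypothetical expert prior: about 3% conversion, worth 100 pseudo-observations.
a, b = 3, 97
prior_mean = beta_mean(a, b)

# New data arrives: 12 conversions in 200 impressions.
a, b = update_beta(a, b, successes=12, failures=188)
posterior_mean = beta_mean(a, b)
```

The estimate moves from the prior 3% to a posterior 5%, between the prior and the observed 6%. The weight given to the prior is a modelling decision: a smaller pseudo-sample lets new data dominate sooner, which suits fast-changing markets.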

Quantile regression estimates not just the mean but also the tails of the distribution, revealing extreme scenario risks. The 95th percentile of losses is the level that losses exceed in only the worst 5% of cases, which makes it essential for capital and reserve management.

Critical Thinking and Protection from Manipulation — Statistical Literacy as a Survival Skill

Common Data Interpretation Errors and Cognitive Traps

Correlation doesn't mean causation. Ice cream sales rise in summer along with drownings, but ice cream isn't the cause — the common factor is heat.

Survivorship bias hides failures. We analyze only successful companies and see a universal recipe, forgetting thousands of projects with the same strategy that collapsed and disappeared from the sample.

- Regression to the mean: after an extremely successful quarter comes a decline not due to errors, but because extremes are statistically rare and the system returns to normal.

- Simpson's Paradox: treatment can be more effective in each age group separately, but appear worse in the overall sample due to uneven patient distribution across groups.
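
Simpson's paradox is easiest to see with numbers. The counts below are illustrative, chosen to produce the reversal, and are stored as (recovered, treated) pairs for two treatments in two age groups.

```python
# Illustrative counts: (recovered, treated) per age group and treatment.
counts = {
    "younger": {"A": (81, 87), "B": (234, 270)},
    "older": {"A": (192, 263), "B": (55, 80)},
}

def rate(recovered, treated):
    """Recovery rate within one group."""
    return recovered / treated

def overall_rate(treatment):
    """Recovery rate after pooling both age groups."""
    recovered = sum(counts[g][treatment][0] for g in counts)
    treated = sum(counts[g][treatment][1] for g in counts)
    return recovered / treated

a_wins_each_group = all(
    rate(*counts[g]["A"]) > rate(*counts[g]["B"]) for g in counts
)
a_loses_overall = overall_rate("A") < overall_rate("B")
```

Treatment A is better among younger patients (93% vs 87%) and among older patients (73% vs 69%), yet worse overall (78% vs 83%), because A was given mostly to older patients, who recover less often regardless of treatment.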

Ethical Aspects of Statistical Analysis and Researcher Responsibility

Pre-registering hypotheses before data collection blocks HARKing — fitting theory to results disguised as prediction. This is the difference between finding a pattern and testing it.

When only significant results are published, science becomes a collection of lucky coincidences. The file drawer effect distorts the literature toward positive effects, creating a false impression of intervention reliability.

Personal data protection in analysis requires balance. Differential privacy adds calibrated noise to query results, approximately preserving aggregate statistical properties while limiting what can be learned about any individual.

Researchers must communicate uncertainty. Point estimates without confidence intervals create an illusion of precision — statistical noise is presented as signal, and catastrophic decisions are made based on it.

Frequently Asked Questions

Statistics is the science of collecting, analyzing, and interpreting data, while probability theory studies patterns in random phenomena. These disciplines form the foundation for making informed decisions in science, business, and everyday life. They are included in school curricula as a distinct content area of mathematics to develop analytical thinking.

Studying statistics enriches understanding of the modern world and methods for investigating it. It develops data analysis skills, critical thinking, and helps make informed decisions based on facts. The ability to work with data is a critically important skill in today's information society.

A representative sample is a subset of the population that accurately reflects its characteristics. Sample quality matters more than size: even a large sample can produce distorted results if it's not representative. Proper selection ensures the reliability of statistical conclusions and forecasts.

No, this is a common misconception. Statistics provides objective tools for data analysis, but manipulation is possible through incorrect application of methods or deliberate distortion. Critical thinking and understanding of methodology help recognize improper use of statistics and protect against manipulation.

Not always—representativeness matters more than sample size. A small but properly constructed sample will yield more accurate results than a large but biased one. A balance is needed between sample size, available resources, and data quality to obtain reliable conclusions.

No, probability models are approximations of reality, not absolute truths. Calculation accuracy depends on data quality, applicability of the chosen distribution, and consideration of all factors. It's important to understand model limitations and conditions for their correct application to adequately interpret results.

Start with a clear formulation of the study's objective and hypotheses. Define the population, select a method for forming a representative sample, and calculate the necessary sample size. Plan data collection methods, quality criteria, and analysis approaches, considering available resources and time constraints.

Statistics helps analyze consumer behavior, segment audiences, and evaluate campaign effectiveness. Binomial distribution is used to forecast response rates, while sampling methods test hypotheses about customer preferences. This enables informed decision-making and optimization of marketing strategies.

A confidence interval gives a range of plausible values for the true population parameter, computed from the sample. A 95% confidence level means that if the study were repeated many times, about 95 out of 100 intervals built this way would contain the true value. A narrow interval indicates high estimation precision.

It's a statistical estimate of the probability distribution function constructed from observed sample data. The Glivenko-Cantelli theorem proves that as sample size increases, the empirical function converges to the true distribution function. This is a fundamental tool for data analysis and testing hypotheses about distributions.

Correlation shows a statistical relationship between variables, but doesn't prove causation. Two variables may correlate due to the influence of a third factor or random coincidence. Establishing causation requires controlled experiments, theoretical justification, and elimination of alternative explanations.

Statistical significance is judged by the p-value: the probability of seeing an effect at least as large as the one observed if there were in fact no effect. The typical threshold is p<0.05. A p-value is not the probability that the result is due to chance, and significance doesn't equal practical importance: a statistically significant effect may be too small for real-world application.

Verify the data source, sample size and representativeness, and research methodology. Pay attention to visualization—manipulative charts often distort scale or truncate axes. Look for absolute values rather than just percentages, and compare with independent sources to verify credibility.

Applying classical statistics to single unique events is limited, as repeatability is required to estimate probabilities. However, the Bayesian approach allows working with subjective probabilities and updating estimates as new information becomes available. This is useful for analyzing rare risks and making decisions under uncertainty.

Key issues include data privacy, bias in sampling and algorithms, and manipulative presentation of results. Researchers must ensure participant anonymity, avoid cherry-picking data, and honestly report study limitations. Methodological transparency and responsible use of results are critical for ethical practice.

Statistical methods enable analysis of income and expenses, forecasting future needs, and assessing investment risks. Understanding probability distributions helps make informed decisions about insurance, retirement savings, and portfolio diversification. This transforms financial planning from an intuitive process into a systematic data-driven approach.