Logical Fallacies: How to Recognize and Avoid Thinking Traps

Systematic errors in reasoning occur everywhere, from scientific research to everyday decisions, but you can learn to recognize and prevent them.

Overview

Logical fallacies are systematic failures in reasoning that leave a conclusion unsupported even when the premises are true. Formal fallacies violate the structure of logic, while informal fallacies exploit cognitive weaknesses, as in appeals to authority, false dilemmas, and straw man arguments. Recognizing fallacy patterns is a fundamental skill for evaluating arguments in science, media, and everyday decisions.

Laplace Protocol: Logical fallacies are not rare even in professional and scientific work. Systematic study of flawed reasoning patterns and application of verification checklists significantly improves the quality of thinking and argumentation in any field of activity.

Formal and Informal Logical Fallacies: Two Natures of Reasoning Violations

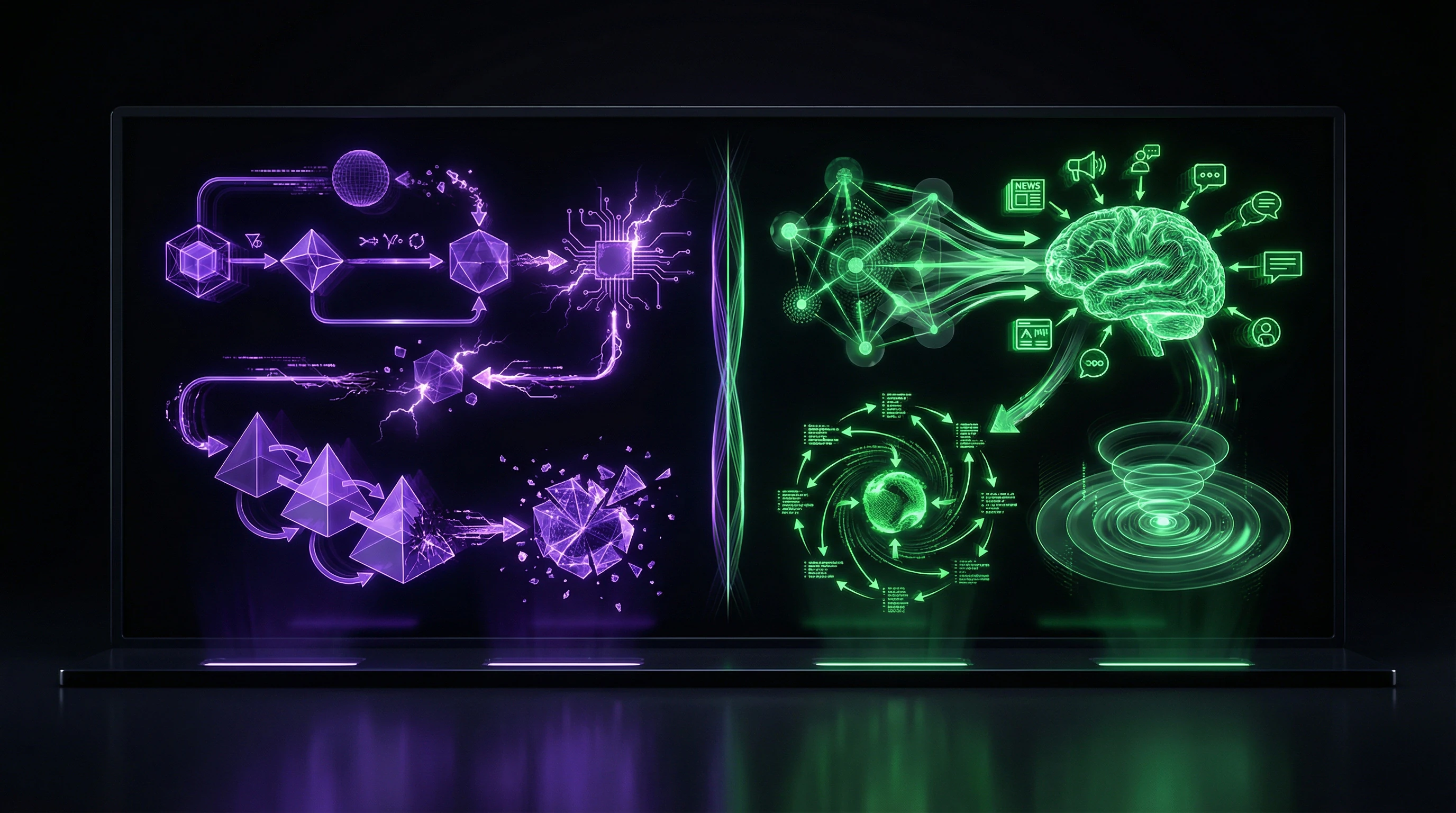

Logic distinguishes two fundamentally different types of errors. Formal fallacies violate structural rules of inference — the conclusion does not follow from the premises according to the laws of logic. Informal fallacies are defective in content: the argument appears convincing but is logically unsound upon analysis.

The key distinction: formal fallacies violate the syntax of logic, while informal fallacies exploit the semantics and pragmatics of communication.

Structural Violations in Formal Logic

Formal fallacies arise from violations of inference rules, independent of content. A classic example is affirming the consequent: "If it rains, the ground is wet; the ground is wet, therefore it is raining." The form is invalid even when both premises are true, because the ground may be wet for some other reason.

Denying the antecedent is another formal fallacy: "If A, then B; not-A, therefore not-B." The inference is invalid, yet it often seems obvious.

These errors are identified through formal analysis of structure, without reference to factual content. In academic work, formal violations manifest in incorrect formulation of research aims, objectives, subject matter, and object — rendering the entire work logically unsound.

- Formal Correctness: Each step of reasoning must follow from the previous one according to established rules. This is critical in mathematical proofs and formalized theories.
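The structural test for formal fallacies can be sketched in a few lines of code. The example below is a minimal illustration, not a full logic engine: it enumerates every truth assignment for two propositions and treats an argument form as valid only if no assignment makes all premises true and the conclusion false. The helper names `implies` and `is_valid` are assumptions introduced here for illustration.

```python
from itertools import product


def implies(a: bool, b: bool) -> bool:
    """Material conditional: 'if a then b' is false only when a is true and b is false."""
    return (not a) or b


def is_valid(premises, conclusion) -> bool:
    """An argument form over A and B is valid when no truth assignment
    makes every premise true while the conclusion is false."""
    for a, b in product([True, False], repeat=2):
        if all(p(a, b) for p in premises) and not conclusion(a, b):
            return False  # counterexample found
    return True


# A = "it rains", B = "the ground is wet"
conditional = lambda a, b: implies(a, b)

modus_ponens = is_valid([conditional, lambda a, b: a], lambda a, b: b)
affirming_consequent = is_valid([conditional, lambda a, b: b], lambda a, b: a)
denying_antecedent = is_valid([conditional, lambda a, b: not a], lambda a, b: not b)

print(modus_ponens)          # True
print(affirming_consequent)  # False
print(denying_antecedent)    # False
```

Both fallacious forms fail on the same counterexample: A false and B true, that is, wet ground without rain. The check never looks at what A and B mean, which is what makes the error formal.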

Content and Context Errors in Informal Logic

Informal fallacies do not violate formal rules but lead to false conclusions through manipulation of content, context, or psychology. Typical examples are an appeal to emotion in place of logical argument and an appeal to an authority speaking outside their area of competence.

These fallacies are effective because they mimic valid reasoning, exploiting cognitive predispositions.

| Fallacy Type | Mechanism | High-Risk Context |
|---|---|---|
| Appeal to Emotion | Emotional manipulation instead of logic | Politics, advertising, social media |
| Argument from Authority | Authority cited outside their expertise | Medicine, science, consulting |
| Context Substitution | Rule from one context applied to another | Clinical reasoning, diagnosis |

Context dependence makes informal fallacies insidious: what is fallacious in one context may be acceptable in another. In medical practice, logical errors in clinical reasoning lead to diagnostic failures. Cognitive therapy views them as unsubstantiated judgments presented as proven facts — linked to emotional distress and maladaptive thinking.

Recognizing informal fallacies requires not only knowledge of logic but also understanding of the psychology of persuasion and contextual factors. This is a skill developed through practice analyzing real arguments, not through memorizing classifications.

Shifting the Thesis and Argument Manipulation: How the Subject of Debate Gets Quietly Changed

Shifting the thesis, of which moving the goalposts is a familiar variant, is one of the most common informal fallacies: the original claim requiring proof is imperceptibly altered during argumentation. The fallacy is insidious because it creates the illusion of a logical victory, although what has actually been proven is a different claim.

In academic settings, the thesis shifts when the stated research goal is replaced in the course of the work, making the conclusions irrelevant to the original problem. Systematic use of this technique destroys the possibility of constructive dialogue.

Straw Man: Distorting the Opponent's Position

The straw man fallacy is a specific form of shifting the thesis, in which an opponent's position is deliberately distorted, simplified, or caricatured to make it easier to refute. Instead of attacking the real argument, the arguer constructs a weakened version, a "straw man" that is easy to knock down.

This technique is widely used in political debates and public discussions, where making an impression on the audience matters more than reaching truth.

In academic disputes, the straw man manifests through selective quotation, taking phrases out of context, and attributing to the author claims they never made. Even in peer-reviewed publications, cases occur of distorting criticized theories to simplify their refutation.

Safeguards against the straw man include:

- Precise quotation with source citation and context

- Verification of the full text of the original statement

- Application of the principle of charity — interpreting the opponent's position in its strongest form

Shifting Discussion Focus and Topic Avoidance

Shifting the discussion focus is a tactic in which, instead of responding to the original argument, the speaker redirects attention to secondary details, the opponent's personal qualities, or irrelevant circumstances. A classic example is the ad hominem attack, where the person making the argument is attacked instead of the argument being refuted.

Another form is the red herring, where a distracting topic is introduced to lead the discussion away from an uncomfortable question. These techniques are effective in manipulative communication but destructive to rational discourse. The same pattern appears in several settings:

- In clinical practice: a physician concentrates on vivid but secondary symptoms, ignoring less noticeable but critically important disease indicators

- In research work: the research object is substituted during the process, studying a phenomenon different from what was initially stated

- In public discussion: arguing about details is used to avoid the main question

Countering this fallacy requires constant return to the original question and verification of each argument's relevance to the topic under discussion.

Correlation versus Causation: The Most Costly Error in Data Interpretation

Confusing correlation with causation is one of the most common and dangerous logical errors in scientific research, medical practice, and data-driven decision-making. Correlation means a statistical relationship between two variables, while causation implies that one variable directly causes a change in the other.

Mistakenly equating these concepts leads to false conclusions, ineffective interventions, and wasted resources. A classic example: the correlation between ice cream sales and drowning incidents does not mean ice cream causes drownings—both phenomena are linked to a third factor (summer heat).

Establishing causal relationships requires meeting strict criteria: temporal sequence (cause precedes effect), covariation (change in cause is associated with change in effect), and elimination of alternative explanations.
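The ice cream example can be reproduced with synthetic data. The sketch below is illustrative only: the coefficients, noise levels, and sample size are invented assumptions, and `pearson` and `residuals` are helper names introduced here. Temperature drives both series, and neither series influences the other. The raw correlation is strong, but it largely disappears once the linear effect of temperature is removed from both variables.

```python
import math
import random

random.seed(0)  # fixed seed so the sketch is repeatable


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def residuals(y, x):
    """What is left of y after removing its least-squares linear dependence on x."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return [b - (my + slope * (a - mx)) for a, b in zip(x, y)]


# Hypothetical data: temperature is the confounder behind both series.
n_days = 5000
temperature = [random.uniform(0, 35) for _ in range(n_days)]
ice_cream_sales = [2.0 * t + random.gauss(0, 10) for t in temperature]
drownings = [0.5 * t + random.gauss(0, 3) for t in temperature]

raw_r = pearson(ice_cream_sales, drownings)
adjusted_r = pearson(
    residuals(ice_cream_sales, temperature),
    residuals(drownings, temperature),
)
print(f"raw correlation: {raw_r:.2f}")
print(f"after controlling for temperature: {adjusted_r:.2f}")
```

Controlling for a measured confounder in this way addresses only the third criterion, and only for confounders that were actually recorded. It does not establish temporal sequence or rule out unmeasured variables.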

False Causal Relationships in Research

Observational studies often reveal correlations but cannot prove causation without controlling for confounders—hidden variables that influence both observed variables. Educational research systematically encounters errors where correlational data is interpreted as proof of causality.

The gold standard for establishing causation is the randomized controlled trial, in which random assignment of participants minimizes the influence of confounders. False causality is particularly problematic in medical research: erroneous conclusions about disease causes can lead to ineffective or harmful treatment.

- Verify temporal sequence: the presumed cause actually precedes the effect

- Identify confounders: what third variables might explain both observed variables

- Consider reverse causation: could B be causing A instead of A causing B

- Assess the mechanism: articulate exactly how one variable should cause the other
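Why random assignment neutralizes confounders can be seen in a small simulation. This is a sketch under invented assumptions: a hypothetical treatment with no true effect, a hidden "baseline health" variable, and made-up noise levels. The names `recovery_score` and `mean_difference` are introduced here for illustration. When healthier people opt into treatment, the treated group looks better even though the treatment does nothing. When a coin flip decides, the gap shrinks toward zero.

```python
import random
from statistics import fmean

random.seed(1)  # fixed seed so the sketch is repeatable
n_people = 20000


def recovery_score(health: float) -> float:
    """Outcome depends only on baseline health; the treatment has no true effect."""
    return health + random.gauss(0, 1)


def mean_difference(assign) -> float:
    """Treated-minus-control gap in mean outcome under a given assignment rule."""
    treated, control = [], []
    for _ in range(n_people):
        health = random.gauss(0, 1)  # hidden confounder
        group = treated if assign(health) else control
        group.append(recovery_score(health))
    return fmean(treated) - fmean(control)


# Observational setting: healthier people are more likely to opt in.
observational_gap = mean_difference(lambda health: health + random.gauss(0, 1) > 0)

# Randomized setting: a coin flip decides, so health cannot influence assignment.
randomized_gap = mean_difference(lambda health: random.random() < 0.5)

print(f"observational gap: {observational_gap:.2f}")
print(f"randomized gap:    {randomized_gap:.2f}")
```

The observational gap reflects who chose the treatment, not what the treatment did. Randomization works without anyone having to identify or measure the confounder.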

Systematic Errors in Data Interpretation

Cognitive biases systematically distort the interpretation of correlational data toward causal conclusions. Confirmation bias causes researchers to interpret correlations as confirmation of pre-existing hypotheses, ignoring alternative explanations.

Illusion of control leads to overestimating one's ability to influence correlated events, even when causal connection is absent. In the era of big data, the problem intensifies: when analyzing thousands of variables, random correlations are inevitably discovered and mistakenly accepted as meaningful patterns.

- Confirmation Bias: Interpreting correlations as confirmation of pre-existing causal hypotheses, ignoring alternative explanations. Trap: the researcher sees what they expect to see.

- Illusion of Control: Overestimating one's ability to influence correlated events in the absence of causal connection. Trap: false sense of influence over independent processes.

- Multiple Testing: When analyzing thousands of variables, random correlations are inevitable and mistakenly accepted as patterns. Trap: statistical significance without practical meaning.
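The multiple testing trap is easy to demonstrate with pure noise. In the sketch below, every variable is random and unrelated to the outcome by construction. The sample size, the number of variables, and the helper `pearson` are assumptions chosen for illustration, and 0.361 is the approximate two-tailed 5% threshold for |r| with 30 observations. Roughly one variable in twenty still clears the threshold.

```python
import math
import random

random.seed(42)  # fixed seed so the sketch is repeatable

n_samples = 30       # observations per variable
n_variables = 1000   # unrelated candidate predictors
critical_r = 0.361   # approx. two-tailed 5% threshold for |r| when n = 30


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# The outcome and every predictor are independent Gaussian noise.
outcome = [random.gauss(0, 1) for _ in range(n_samples)]
correlations = [
    pearson([random.gauss(0, 1) for _ in range(n_samples)], outcome)
    for _ in range(n_variables)
]

false_positives = sum(abs(r) > critical_r for r in correlations)
strongest = max(abs(r) for r in correlations)

print(f"{false_positives} of {n_variables} pure-noise variables pass the 5% threshold")
print(f"strongest spurious correlation: |r| = {strongest:.2f}")
```

A common safeguard is to tighten the threshold as the number of comparisons grows, for example with a Bonferroni correction that divides the significance level by the number of tests, or to confirm any finding on fresh data.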

Protection against interpretation errors requires systematic application of causality criteria and skeptical attitude toward correlational findings. It is necessary to explicitly articulate the mechanism of the presumed causal connection, verify temporal sequence of events, and actively seek alternative explanations.

In research practice, it is critically important to distinguish research questions requiring establishment of correlations from questions requiring proof of causation, choosing the appropriate research design. Cognitive therapy uses identification of false causal attributions as a tool for correcting maladaptive thinking, demonstrating the practical significance of distinguishing correlation from causation in everyday life.

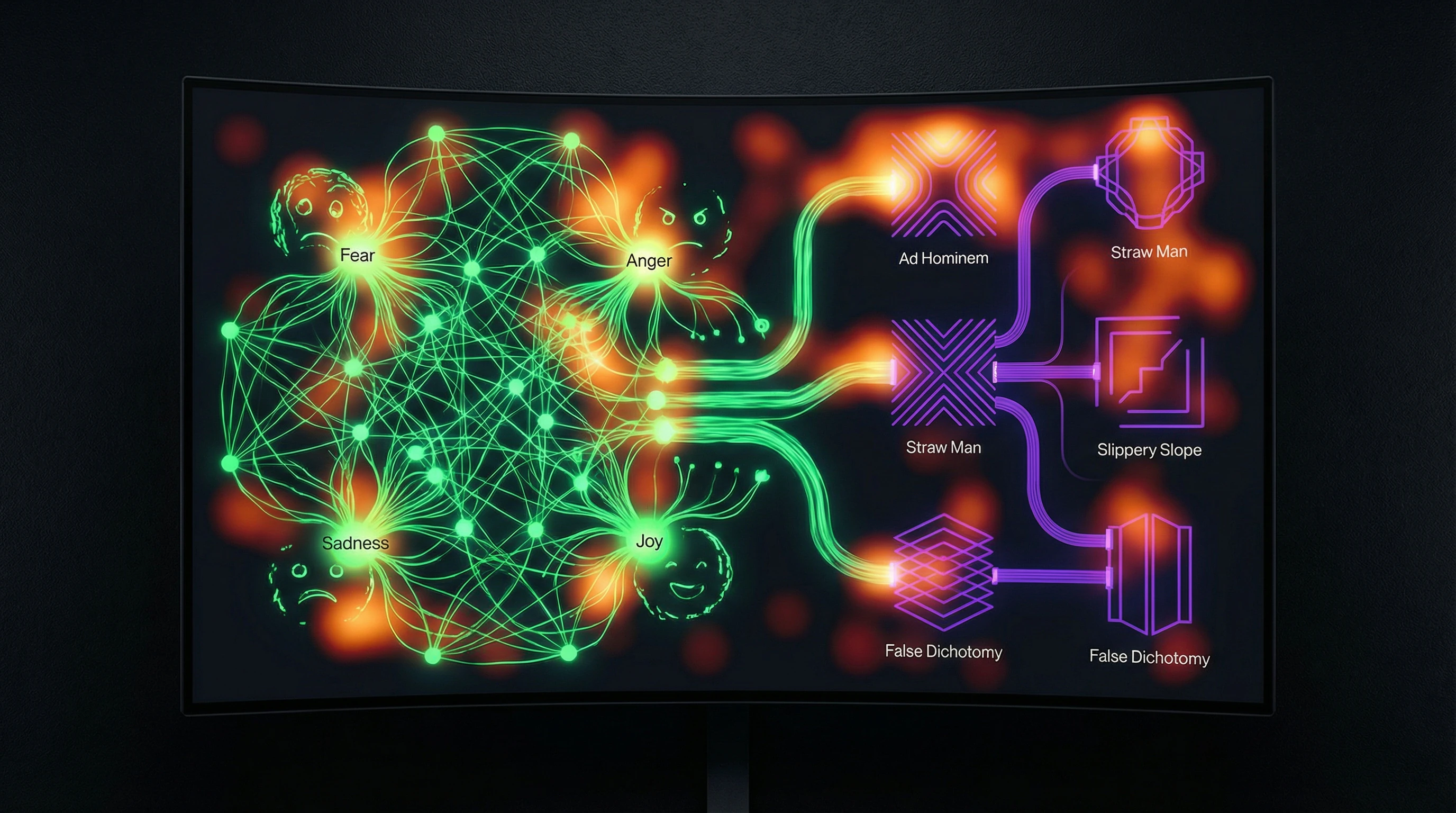

Emotional Appeals and Cognitive Biases in the Structure of Logical Fallacies

Manipulation Through Emotions as a Substitute for Logical Argumentation

Appeal to emotion is a systematic replacement of logical reasoning with emotional impact, exploiting psychological vulnerabilities instead of rational argument evaluation. Emotionally charged statements are perceived as more convincing regardless of logical validity, creating an illusion of soundness through intensity of experience.

In cognitive therapy, emotional appeals are viewed as unsubstantiated judgments presented as proven facts. This leads to the formation of maladaptive thinking patterns and emotional distress. Critical thinking requires systematic separation of a message's emotional content from its logical structure.

The validity of an argument does not depend on the intensity of emotions with which it is presented. Emotion is a signal, not evidence.

The Connection Between Logical Fallacies, Cognitive Biases, and Systematic Distortions

Many logical fallacies are not random intellectual mistakes, but systematic patterns of deviation from rational judgment, rooted in cognitive biases and heuristic thinking. Cognitive distortions function as automatic mental shortcuts, increasing decision efficiency in some contexts while systematically leading to errors in others.

Emotional and cognitive states amplify susceptibility to particular logical fallacies: anxiety increases the likelihood of catastrophizing and false causal attributions, while confirmation bias drives the search for only the information that matches existing beliefs.

- Anxiety → catastrophizing, false causal connections

- Confirmation bias → selective information seeking

- Emotional arousal → reduced critical evaluation

- Cognitive overload → increased heuristic thinking

Simply knowing the formal rules of logic is insufficient to overcome deeply rooted cognitive patterns. Awareness of one's own emotional triggers and systematic practice of alternative analysis are also necessary.

Understanding the cognitive architecture of logical fallacies is critically important for developing effective prevention strategies. Thinking tools that account for emotional context work more effectively than pure logic.

Logical Errors in the Structure of Scientific Research and Academic Practice

Systematic Errors in Formulating Research Goals and Objectives

Educational research systematically contains logical breaks in its basic components: the goal does not align with the objectives, the objectives exceed the scope of the goal, or research tasks are replaced with practical activities.

Logical structure requires: goal → anticipated result; objectives → decomposition of the path; methods → correspondence to the specifics of each objective. Violation of this sequence compromises validity regardless of data quality.

- The goal defines the anticipated result of the research

- Objectives break down the path to achieving the goal into stages

- Methods are selected according to the specifics of each objective

- All components are checked for logical consistency

Problems in Defining the Object and Subject of Research as a Source of Conceptual Errors

Incorrect definition of the object and subject is an insidious error that propagates throughout the entire structure of the work. Typical faults include conflation of object and subject, a subject broader than the object, a subject formulated as a process instead of an aspect, and a mismatch between subject and goal.

The object is the domain of reality under study. The subject is the specific aspect or relationship within the object that requires research attention. The subject must be strictly narrower than the object and directly correspond to the goal.

Logical correctness requires that the subject define the boundaries of empirical analysis and flow directly from the research goal. The systematic presence of these errors indicates a deficit in methodological training and absence of formalized procedures for verifying component consistency.

Practical Strategies for Detecting and Preventing Logical Errors in Thinking

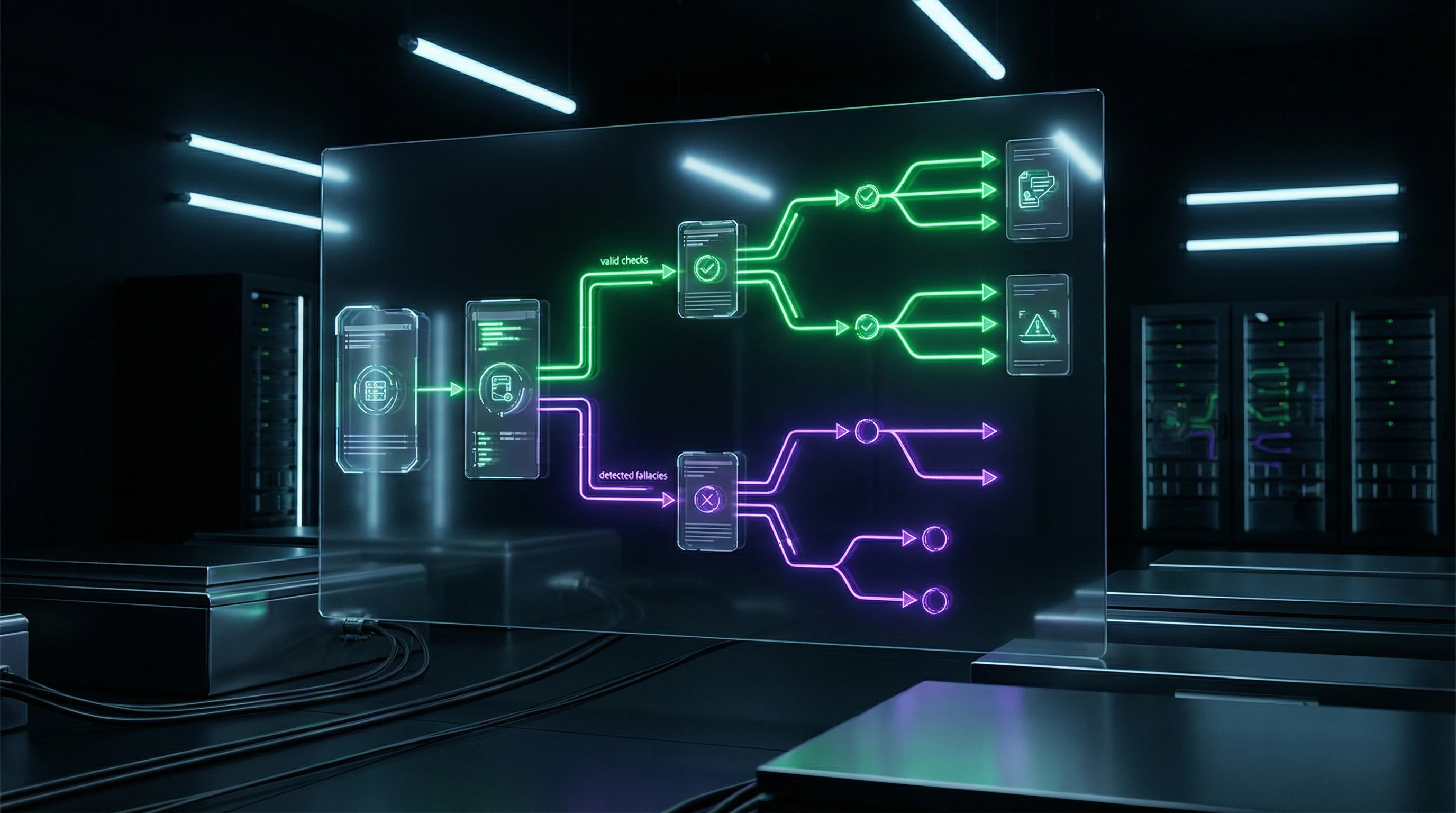

Structured Checklists for Systematic Verification of Argumentation

Prevention of logical errors requires systematic application of structured verification procedures. A basic checklist covers verification of thesis consistency with conclusions, validity of the connection between premises and conclusion, quality of evidence, identification of emotional appeals, circularity of reasoning, alternative explanations, and distinguishing correlation from causation.

For research practice, a specialized checklist includes checking clarity of goal, correspondence of objectives to goal, correctness of object and subject definition, adequacy of methods, and logical coherence of research components.

- Consistency of thesis with conclusions

- Validity of connection between premises and conclusion

- Quality and relevance of evidence

- Presence of emotional appeals

- Circularity of reasoning

- Alternative explanations of the phenomenon

- Distinguishing correlation from causation

Systematic application of these tools transforms abstract knowledge about logical errors into practical critical thinking skills, creating cognitive habits that reduce the probability of errors.

Methods of Reflective Self-Analysis and Critical Evaluation of One's Own Thinking

The most effective strategy for preventing logical errors is developing metacognitive skills—the ability to observe and critically evaluate one's own thinking processes. Reflective practice requires systematic distancing from one's own arguments and examining them from the position of a skeptical observer.

Actively searching for potential weaknesses in reasoning before its public presentation is not a sign of insecurity, but a sign of intellectual honesty.

Cognitive therapy demonstrates the effectiveness of techniques for identifying automatic thoughts and unfounded judgments, which can be adapted for detecting logical errors in professional and academic contexts.

Critical thinking as a skill develops through conscious practice of applying logical standards to one's own reasoning, creating the habit of demanding evidence for one's own claims, and cultivating intellectual humility—acknowledging the limitations of one's own knowledge and the possibility of error.

Frequently Asked Questions

A logical fallacy is a violation of the rules of correct reasoning that leads to invalid conclusions. There are formal fallacies (violations of logical structure) and informal fallacies (errors in argument content). Such errors are ubiquitous—from everyday disputes to scientific research.

Formal fallacies violate structural rules of logic regardless of content, while informal fallacies relate to context and argument meaning. Informal fallacies can appear convincing despite being logically unsound. Most everyday reasoning errors fall into the informal category.

Shifting the thesis, sometimes loosely called moving the goalposts, means replacing the original claim during a discussion with another that is more convenient to defend or refute. A classic example is the "straw man," where an opponent's position is distorted. This fallacy is often used manipulatively in arguments.

The coincidence of two phenomena doesn't prove that one causes the other—there may be a third factor or mere chance. This fallacy is particularly dangerous in research and data analysis. Establishing causation requires additional evidence and controlled experiments.

It is a myth that professional expertise protects against logical fallacies. Research shows their systematic presence even in academic publications, especially in the formulation of aims and objectives, and Vetoshkin (2021) documents their prevalence in educational research.

A false dichotomy presents a situation as a choice between only two options when other alternatives exist. It's a simplification of complex issues into an "either-or" format. Often used in manipulative rhetoric and political discussions.

An appeal to emotion replaces logical argumentation with emotional manipulation—fear, pity, anger. The telltale sign: emphasis on feelings instead of facts and evidence. Test whether the argument remains convincing after removing the emotional component.

Circular reasoning uses the conclusion as its own premise, creating a logical loop. Example: "This law is just because it's right." Such reasoning provides no independent justification and proves nothing.

Many logical fallacies arise from systematic cognitive biases—prejudices in how the brain processes information. These aren't just intellectual mistakes but results of evolutionary heuristics and emotional states. Understanding this connection aids cognitive therapy and critical thinking.

In scientific research, the most prevalent errors occur in formulating aims, objectives, subjects, and objects. Confusing correlation with causation and making unwarranted generalizations are also frequent. Vetoshkin (2021) notes that these errors are preventable yet systematically repeated in educational research.

It is a misconception that logical fallacies are easy to recognize. Many are convincing precisely because they mimic valid reasoning. Reliable recognition requires systematic training and practice in critical thinking.

To check your own reasoning, use checklists: verify that you haven't shifted the thesis, confused correlation with causation, or employed circular logic. Ask an independent person to evaluate your arguments. Systematic self-analysis and knowledge of common fallacies significantly improve reasoning quality.

In medicine, logical fallacies in diagnosis and decision-making directly impact patient safety. Skryabin (2022) emphasizes the critical nature of cognitive biases in clinical thinking. Incorrect causal connections can lead to improper treatment.

Context is decisive for informal logical fallacies. What works as a convincing argument in one domain may be unsound in another. This underscores the importance of understanding not only formal structure but also the substantive context of reasoning.

Manipulators deliberately employ logical fallacies: they shift the thesis, create false dichotomies, appeal to emotions instead of facts. Knowledge of typical patterns helps recognize manipulation. Critical thinking is the primary defense against such influence techniques.

The digital environment amplifies classic fallacies: algorithms create echo chambers that confirm biases, viral content often relies on emotional appeals. The speed of information dissemination reduces critical evaluation. Basic fallacy types remain the same, but the scale and speed of their spread are unprecedented.