❌ Logical Fallacies

Logic-Probability Analysis: From Boole to Modern Systems

Exploring the fundamental unity of logic and probability theory for analyzing reliability, safety, and decision-making under uncertainty

Overview

Logic and probability represent two fundamental tools of cognition, united as early as 1854 by George Boole in his work "An Investigation of the Laws of Thought". Logic-probabilistic analysis combines the rigor of deductive reasoning with quantitative assessment of uncertainty, creating a powerful methodological apparatus for solving practical problems. This field encompasses the theoretical foundations of Boolean algebra, probabilistic logic, reliability analysis of complex systems, and modern computational implementations.

🛡️ Laplace Protocol: Logic and probability do not compete but complement each other — classical logic deals with certainty, probabilistic logic extends it to the domain of uncertainty, maintaining mathematical rigor since 1854.


Deep Dive

Historical Foundations: From Boole to Poretsky

Boole 1854: Logic Meets Probability

George Boole in "An Investigation of the Laws of Thought" (1854) first established a rigorous mathematical connection between logical structures and probability theory. Boolean algebra became the common foundation for both disciplines—the same operations worked with logical propositions and probabilistic events.

The unification laid the groundwork for later developments in probabilistic logic over the following 170 years.

Logical operations of conjunction, disjunction, and negation have direct analogs in probability theory as operations on events. Boolean algebra provided a unified mathematical language where truth values and probability measures are handled within a single formal system.

This duality enabled the development of methods for quantitative analysis of logical systems under uncertainty.

Poretsky: Classical Probability Calculus

P.S. Poretsky developed the classical approach to probability calculus for random events, which remains a fundamental method in modern theory. His work focused on rigorous algorithms for computing probabilities of complex events through logical combinations of elementary ones.

- Mutually Exclusive Event Groups: Poretsky's fundamental concept of events that cannot occur simultaneously. Because the probabilities of such events simply add, working with these groups allows precise quantitative inference in logical systems where absolute certainty is unattainable.
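Mutually exclusive groups matter in practice for one reason. Once a compound event is rewritten as a union of mutually exclusive terms, its probability is just the sum of the terms' probabilities. The sketch below assumes two independent events A and B with made-up probabilities. It is a generic orthogonalization example, not a reconstruction of Poretsky's own procedure.

```python
from itertools import product

# Illustrative probabilities for two events A and B, assumed independent.
p_a = 0.9
p_b = 0.8

# Rewrite "A or B" as a union of mutually exclusive terms:
#   A  OR  (NOT A AND B)
# The terms cannot occur together, so their probabilities simply add.
p_or_disjoint = p_a + (1 - p_a) * p_b

# Cross-check by enumerating all four joint outcomes of (A, B).
p_or_enum = sum(
    (p_a if a else 1 - p_a) * (p_b if b else 1 - p_b)
    for a, b in product([True, False], repeat=2)
    if a or b
)

# Adding P(A) + P(B) directly double-counts the overlap "A and B"
# and here even exceeds 1.
p_naive = p_a + p_b

print(round(p_or_disjoint, 4), round(p_or_enum, 4), round(p_naive, 4))
```

It prints `0.98 0.98 1.7`. The disjoint decomposition agrees with brute-force enumeration, while naive addition yields an impossible probability.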

Poretsky's classical approach wasn't replaced by modern methods but became the foundation on which new approaches are built—including tuple algebra and semantic models.

Theoretical Foundations of Logic-Probability Analysis

Boolean Algebra as Common Structure

Boolean algebra is a universal mathematical structure serving both classical logic and probability theory simultaneously. In logic it operates on truth values (true/false), in probability—on events with measures from 0 to 1.

This duality reflects a deep connection between deductive reasoning under certainty and inductive reasoning under uncertainty.

| Operation | Logical Context | Probabilistic Context |
|---|---|---|
| Conjunction (AND) | Logical product | Event intersection |
| Disjunction (OR) | Logical sum | Event union |
| Negation (NOT) | Value inversion | Event complement |

The isomorphic structure of operations allows applying logical methods to probabilistic problems and vice versa, creating a unified methodological foundation.

Logic and probability aren't incompatible—they're complementary tools operating on the same algebraic foundation.
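The correspondence in the table can be checked directly. The sketch below assumes a single roll of a fair die as the sample space, an illustrative choice. Each proposition is identified with the set of outcomes that make it true. AND, OR, and NOT then become intersection, union, and complement, and the probability measure applies to the resulting sets.

```python
from fractions import Fraction

# Sample space: outcomes of one fair six-sided die (illustrative choice).
omega = frozenset(range(1, 7))


def prob(event: frozenset) -> Fraction:
    """Uniform probability measure: |event| / |omega|."""
    return Fraction(len(event), len(omega))


# Each proposition is represented by the set of outcomes that make it true.
even = frozenset(x for x in omega if x % 2 == 0)  # "the roll is even"
high = frozenset(x for x in omega if x >= 4)      # "the roll is at least 4"

conj = even & high   # AND -> intersection
disj = even | high   # OR  -> union
neg = omega - even   # NOT -> complement

# Evaluating the propositions pointwise as truth values agrees with
# membership in the corresponding sets.
for x in omega:
    is_even, is_high = x % 2 == 0, x >= 4
    assert (x in conj) == (is_even and is_high)
    assert (x in disj) == (is_even or is_high)
    assert (x in neg) == (not is_even)

# Inclusion-exclusion links the logical and probabilistic views.
assert prob(disj) == prob(even) + prob(high) - prob(conj)

print(prob(conj), prob(disj), prob(neg))
```

It prints `1/3 2/3 1/2`. The inclusion-exclusion check shows that the probability of a disjunction is the sum of its parts only after the overlap is removed.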

Probabilistic Logic and Quantitative Inference

Probabilistic logic extends classical logic by generalizing truth values to probabilistic ones. Each proposition is assigned a numerical value reflecting the degree of confidence in its truth.

Inference rules incorporate quantitative assessment of uncertainty, combining deductive reasoning with statistical evidence.

- Preserving logical structure while incorporating uncertainty

- Combining deductive rules with probabilistic measures

- Application to reasoning under incomplete information
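A classic question in probabilistic logic asks what can be inferred about a compound proposition when only the probabilities of its parts are known. Without further assumptions, the answer is an interval rather than a single number, given by what are often called the Fréchet bounds. The confidence values below are illustrative.

```python
def and_bounds(p_a: float, p_b: float) -> tuple[float, float]:
    """Tightest bounds on P(A and B) when only P(A) and P(B) are known."""
    return max(0.0, p_a + p_b - 1.0), min(p_a, p_b)


def or_bounds(p_a: float, p_b: float) -> tuple[float, float]:
    """Tightest bounds on P(A or B) when only P(A) and P(B) are known."""
    return max(p_a, p_b), min(1.0, p_a + p_b)


p_a, p_b = 0.75, 0.5  # illustrative degrees of confidence in A and B
print("P(A and B) in", and_bounds(p_a, p_b))  # (0.25, 0.5)
print("P(A or B)  in", or_bounds(p_a, p_b))   # (0.75, 1.0)

# Adding an independence assumption pins the conjunction to a single
# point inside the interval: P(A) * P(B) = 0.375.
low, high = and_bounds(p_a, p_b)
assert low <= p_a * p_b <= high
```

The logical structure (AND, OR) is preserved. Each additional assumption, such as independence, narrows the interval toward a point estimate.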

Unlike pure statistical analysis, quantitative inference keeps the logical structure of an argument intact while attaching measures of uncertainty to it. The approach is applied in artificial intelligence and machine learning.

Probabilistic logic unites the normative power of logic with the empirical flexibility of probability, making it a foundation for decision-making and analytics under uncertainty.

Logic-Probabilistic Modeling Methodology

Probability Calculus in Logical Systems

Logic-probabilistic calculus is a mathematical framework for computing the probabilities of complex events expressed as logical combinations of elementary events. It integrates structural analysis of logical dependencies with quantitative probability assessment.

It is a standard tool in reliability engineering, risk analysis, and safety assessment of critical infrastructure. Contrary to a popular misconception, it is not a purely theoretical apparatus. Practical applications span reliability analysis of complex systems, quantitative risk modeling, pattern recognition, and classification.

- Structural analysis: identifying logical dependencies between system components

- Quantitative assessment: assigning probabilities to elementary events

- Computation: deriving probabilities of complex events through logical operations

- Validation: verifying consistency and reproducibility of conclusions
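The four steps above can be sketched end to end on a deliberately tiny system. The component names, the series-parallel structure, and the probabilities are all hypothetical. Exhaustive enumeration is used only because the example has three components. Its cost grows exponentially, which is exactly what more efficient methods try to avoid.

```python
from itertools import product
from math import isclose, prod


# Step 1 (structural analysis): hypothetical system in which component A
# is in series with a redundant pair B, C. Returns True if the system works.
def system_works(state: dict[str, bool]) -> bool:
    return state["A"] and (state["B"] or state["C"])


# Step 2 (quantitative assessment): assumed probabilities that each
# component works; components are assumed to fail independently.
p_work = {"A": 0.95, "B": 0.90, "C": 0.80}

# Step 3 (computation): enumerate every joint state and add up the
# probabilities of the states in which the structure function is True.
names = list(p_work)
total = 0.0
p_system = 0.0
for values in product([True, False], repeat=len(names)):
    state = dict(zip(names, values))
    p_state = prod(p_work[n] if state[n] else 1 - p_work[n] for n in names)
    total += p_state
    if system_works(state):
        p_system += p_state

# Step 4 (validation): the joint states are mutually exclusive and
# exhaustive, so they must sum to 1, and the result must match the
# series-parallel closed form P(A) * (1 - (1 - P(B)) * (1 - P(C))).
assert isclose(total, 1.0)
closed_form = p_work["A"] * (1 - (1 - p_work["B"]) * (1 - p_work["C"]))
assert isclose(p_system, closed_form)

print(f"P(system works) = {p_system:.4f}")
```

With these numbers, it prints `P(system works) = 0.9310`.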

The methodology provides precise quantitative measures of uncertainty, making reasoning rigorous in scenarios where absolute certainty is unattainable. This is critical for engineering thinking when working with complex systems.

Requirement of Maximum Specificity and Statistical Ambiguity

The Requirement of Maximum Specificity (RMS) is a formalized rule for eliminating the statistical ambiguity problem (SAP). When a logical structure admits several possible probabilistic interpretations, RMS ensures that the most specific one, which minimizes uncertainty, is selected.

Problem: logical structure permits multiple probability distributions compatible with available data. Solution: RMS resolves these ambiguities systematically, ensuring consistency of probabilistic inferences.

Particularly critical in semantic probabilistic inference, where integration of meaning and probability requires strict rules for eliminating interpretational uncertainties. Without RMS, the same logical scenario can generate different probabilistic conclusions depending on interpretation choice — making analysis unreliable.

| Scenario | Without RMS | With RMS Applied |
|---|---|---|
| Multiple distributions compatible with data | Choice arbitrary or implicit | Most specific selected |
| Reproducibility of conclusions | Not guaranteed | Guaranteed |
| Interpretational uncertainties | Remain unresolved | Systematically eliminated |

RMS turns probabilistic analysis from an art, where experience and intuition decide, into an engineering discipline with reproducible results. It is the basis for checking logic-probabilistic models against reality.
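Here is a toy illustration of the statistical ambiguity problem and its resolution by RMS, in the spirit of classic textbook examples. Two statistical rules with nested reference classes both apply to a case but point in opposite directions, and the rule with the more specific conditions wins. The rule names and probabilities are hypothetical. Modelling a rule as a set of conditions is a simplifying assumption, not a formalization taken from this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """A statistical rule: P(outcome | all conditions hold) = probability."""
    conditions: frozenset[str]
    outcome: str
    probability: float  # illustrative value, not real data


def most_specific(case: set[str], rules: list[Rule]) -> Rule:
    """Pick the applicable rule with the narrowest reference class."""
    applicable = [r for r in rules if r.conditions <= case]
    if not applicable:
        raise ValueError("no rule applies to this case")
    # A rule is maximally specific if no other applicable rule strictly
    # refines its conditions.
    maximal = [
        r for r in applicable
        if not any(r.conditions < other.conditions for other in applicable)
    ]
    if len(maximal) > 1:
        raise ValueError("ambiguity remains: incomparable most-specific rules")
    return maximal[0]


rules = [
    Rule(frozenset({"infection", "treated"}), "recovers", 0.9),
    Rule(frozenset({"infection", "treated", "resistant_strain"}), "recovers", 0.1),
]
patient = {"infection", "treated", "resistant_strain"}

# Both rules apply, and taken separately they point in opposite directions.
print([r.probability for r in rules if r.conditions <= patient])  # [0.9, 0.1]
# RMS resolves the conflict in favor of the narrower reference class.
print(most_specific(patient, rules).probability)  # 0.1
```

When two maximal rules cannot be ordered by specificity, the sketch raises an error instead of picking one arbitrarily. This mirrors the table's point that the choice should never be implicit.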

Application in Reliability and Safety Analysis of Complex Technical Systems

Assessing System Survivability Through Logic-Probabilistic Models

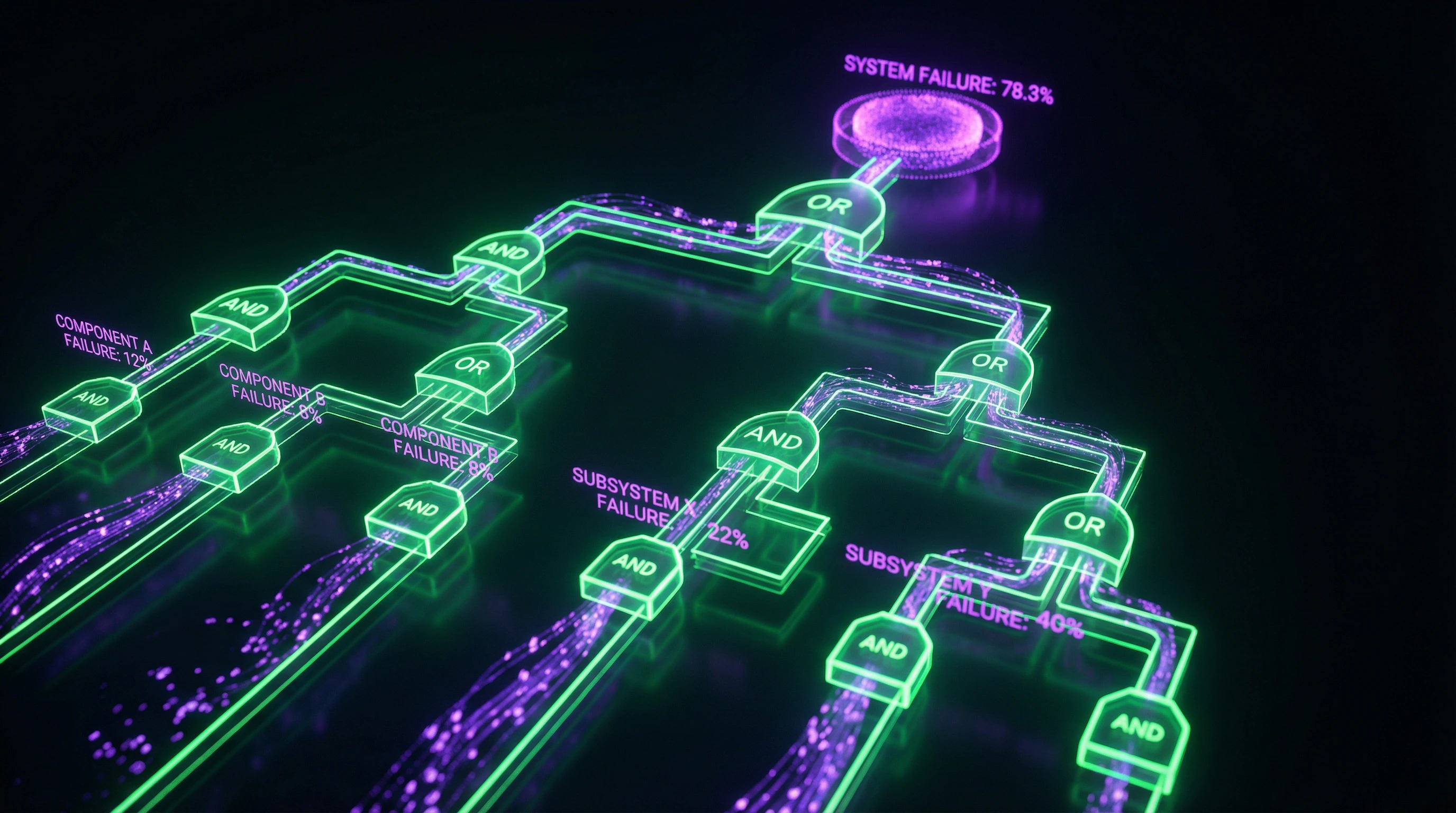

Logic-probabilistic analysis is a standard method for evaluating reliability, survivability, and safety of complex technical systems. It combines structural logical models with probabilistic characteristics of component failures, enabling quantitative assessment of critical event probabilities.

System survivability is the ability to maintain functionality under partial failures. This requires analyzing all possible combinations of component failures through Boolean algebra.

- Construct a fault tree: logical operators (AND, OR, NOT) link basic events with probabilistic characteristics.

- Apply tuple algebra for efficient probability calculation—especially critical for systems with thousands of components.

- Identify critical failure paths and optimize redundancy to improve overall reliability.
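A minimal fault-tree evaluator shows how AND, OR, and NOT gates turn basic-event probabilities into a top-event probability. It assumes independent basic events that each appear only once in the tree. With repeated events, the gate formulas are no longer exact, and one has to fall back on enumeration, minimal cut sets, or orthogonalization. The tree and its numbers are hypothetical.

```python
from dataclasses import dataclass, field
from math import isclose, prod


@dataclass
class Event:
    """Basic event: a component failure with an assumed probability."""
    name: str
    p: float


@dataclass
class Gate:
    """Logical gate: 'AND', 'OR', or 'NOT' (NOT takes a single child)."""
    op: str
    children: list = field(default_factory=list)


def probability(node) -> float:
    """Bottom-up evaluation, valid only for independent basic events that
    each appear once in the tree (no shared or repeated events)."""
    if isinstance(node, Event):
        return node.p
    child_p = [probability(c) for c in node.children]
    if node.op == "AND":
        return prod(child_p)
    if node.op == "OR":
        return 1 - prod(1 - q for q in child_p)
    if node.op == "NOT":
        (q,) = child_p
        return 1 - q
    raise ValueError(f"unknown gate {node.op!r}")


# Hypothetical tree: the system fails if both redundant pumps fail,
# or if the single power supply fails.
top = Gate("OR", [
    Gate("AND", [Event("pump_1", 0.05), Event("pump_2", 0.05)]),
    Event("power", 0.01),
])

p_top = probability(top)
print(f"P(top event) = {p_top:.6f}")
```

Here the power supply contributes 0.01 on its own, four times the 0.0025 contributed by the redundant pump pair. That singles it out as the critical path to address first.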

Quantitative Risk Analysis in Critical Infrastructure

Quantitative risk assessment requires integrating logical threat models with probabilistic distributions of their realization. The logic-probabilistic approach formalizes the connection between initiating events, intermediate states, and final consequences through structured logical expressions.

Probabilities are assigned to basic events based on statistical data, expert assessments, or physical models. Probabilistic calculus is then applied to calculate final risks.

The method is particularly effective for safety analysis of critical infrastructure, where multiple failure scenarios and their interactions must be considered. The requirement for maximum specificity eliminates statistical ambiguities in the presence of incomplete data, ensuring consistent risk assessments.

Analysis results are used to prioritize risk mitigation measures and justify safety investments based on quantitative criteria.
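The following sketch turns logical scenarios into a ranked list of risks. Each scenario is a conjunction of basic events, and its probability is a product under an independence assumption. Risk is taken as probability times consequence. The event names, probabilities, and consequences are invented for illustration. Probability times consequence is one common risk measure, not necessarily the one required by any particular standard.

```python
from math import isclose, prod

# Hypothetical per-year probabilities of basic events (assumed independent).
p = {"leak": 0.1, "detector_fails": 0.05, "valve_fails": 0.02}

# Event-tree scenarios: each is a conjunction of basic events occurring
# (True) or not occurring (False), with an assumed consequence in cost units.
scenarios = {
    "leak detected early": ({"leak": True, "detector_fails": False}, 1.0),
    "late isolation": (
        {"leak": True, "detector_fails": True, "valve_fails": False}, 50.0),
    "uncontrolled release": (
        {"leak": True, "detector_fails": True, "valve_fails": True}, 5000.0),
}


def scenario_probability(literals: dict[str, bool]) -> float:
    # Probability of a conjunction of independent literals.
    return prod(p[e] if occurs else 1 - p[e] for e, occurs in literals.items())


risks = {
    name: scenario_probability(lits) * cost
    for name, (lits, cost) in scenarios.items()
}

# The scenarios are mutually exclusive branches of "leak", so their
# probabilities must add up to P(leak).
assert isclose(
    sum(scenario_probability(lits) for lits, _ in scenarios.values()), p["leak"]
)

# Rank scenarios by expected annual loss to prioritize mitigation.
for name, risk in sorted(risks.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:22s} {risk:.3f}")
print(f"{'total':22s} {sum(risks.values()):.3f}")
```

The rarest scenario, an uncontrolled release, tops the ranking because its consequence dominates. This is the kind of result used to justify investing in the valve rather than in early detection.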

Modern Computational Approaches to Probabilistic Logic

Tuple Algebra for Efficient Probabilistic Inference

Tuple algebra is a computational framework for probabilistic reasoning that provides efficient algorithms for complex logical structures. Probability distributions are represented as tuples: ordered sets of values corresponding to different logical states of the system.

Algebraic operations on tuples directly correspond to logical operations (conjunction, disjunction, negation), enabling efficient computation of resulting probabilities. The method's advantage is computational efficiency for systems with large numbers of variables, where classical methods are impractical due to combinatorial explosion.

- Represent probability distributions as tuples of logical states

- Apply algebraic operations corresponding to logical connectives

- Process groups of mutually exclusive events as the fundamental unit

- Calculate resulting probabilities without enumerating all combinations
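As a minimal sketch in the spirit of tuple algebra (not its full formalism), assume the following. An event is a tuple of allowed-state sets, one per variable, and variables are independent. Conjunction is then a componentwise intersection, and probability is a product of per-variable masses, with no enumeration of joint states. Negation yields a group of pairwise disjoint tuples whose probabilities add. The variables, states, and numbers are hypothetical, and the brute-force function exists only to check the shortcut.

```python
from itertools import product
from math import isclose, prod

# Hypothetical marginal distributions over mutually exclusive states of
# three variables, assumed independent of one another.
domains = [
    {"ok": 0.90, "degraded": 0.08, "failed": 0.02},  # X1
    {"ok": 0.95, "failed": 0.05},                    # X2
    {"low": 0.3, "normal": 0.6, "high": 0.1},        # X3
]
FULL = tuple(frozenset(d) for d in domains)

# An event is a tuple of allowed-state sets, one per variable; it stands for
# every joint state whose i-th value lies in the i-th set.
Event = tuple[frozenset[str], ...]


def probability(t: Event) -> float:
    # Independence: multiply the allowed-state masses of each variable.
    return prod(sum(domains[i][s] for s in comp) for i, comp in enumerate(t))


def conjunction(t1: Event, t2: Event) -> Event:
    # AND is a componentwise intersection.
    return tuple(a & b for a, b in zip(t1, t2))


def negation(t: Event) -> list[Event]:
    # NOT yields pairwise disjoint tuples: the i-th keeps the first i
    # components of t, negates component i, and frees the rest.
    parts = []
    for i, comp in enumerate(t):
        rest = FULL[i] - comp
        if rest:
            parts.append(t[:i] + (rest,) + FULL[i + 1:])
    return parts


def brute_force(events: list[Event]) -> float:
    # Reference check: sum over all joint states covered by any tuple.
    total = 0.0
    for state in product(*(list(d) for d in domains)):
        if any(all(s in comp for s, comp in zip(state, t)) for t in events):
            total += prod(domains[i][s] for i, s in enumerate(state))
    return total


a = (frozenset({"ok", "degraded"}), frozenset({"ok"}), FULL[2])  # X1 not failed, X2 ok
b = (FULL[0], FULL[1], frozenset({"normal", "high"}))            # X3 not low

a_and_b = conjunction(a, b)
not_a = negation(a)

assert isclose(probability(a_and_b), brute_force([a_and_b]))
# The parts of NOT a are mutually exclusive, so their probabilities add.
assert isclose(sum(probability(t) for t in not_a), 1 - probability(a))
assert isclose(sum(probability(t) for t in not_a), brute_force(not_a))
print(round(probability(a_and_b), 4), round(1 - probability(a), 4))
```

The direct computation touches each variable once, while the brute-force check visits every joint state. That gap is what grows into combinatorial explosion as variables are added.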

Tuple algebra finds applications in pattern recognition, classification, and other domains requiring probabilistic inference in complex logical structures.

Semantic Probabilistic Inference and Ambiguity Resolution

Semantic probabilistic inference integrates semantic content with probabilistic measures, providing richer reasoning models. This approach extends classical probabilistic logic by incorporating semantic relationships between concepts, accounting for contextual information in probabilistic inferences.

The statistical ambiguity problem arises when a logical structure admits multiple probability distributions compatible with the same observed data, so that different or even conflicting conclusions can be drawn. The maximum specificity requirement resolves this ambiguity systematically: it bases the inference on the most specific applicable information that satisfies all constraints.

Consistency of probabilistic inferences is critical for artificial intelligence and machine learning—the reliability of decision-making systems depends on it. Formalizing the maximum specificity requirement in terms of logic and probability eliminates problems arising from multiple data interpretations.

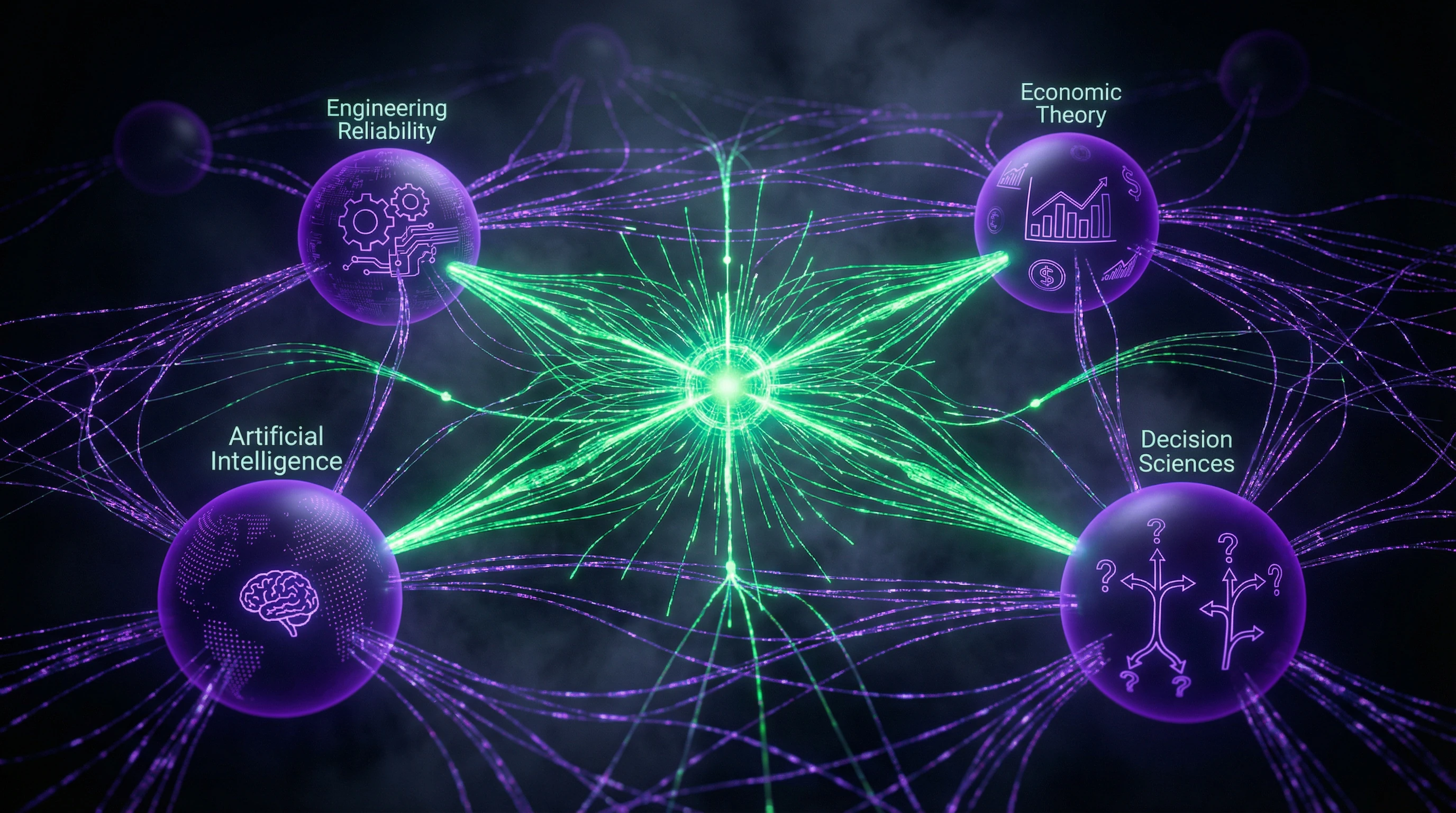

Interdisciplinary Applications and Future Directions for Logic-Probability Integration

Artificial Intelligence and Machine Learning Under Uncertainty

Probabilistic logic is a foundation for reasoning under uncertainty in AI systems. It makes it possible to combine deductive reasoning with inductive learning from data.

Bayesian networks and other probabilistic graphical models build on the principles of probabilistic logic. They are used in pattern recognition, natural language processing, planning, and decision-making with incomplete information.

- Semantic probabilistic inference interprets meaning alongside quantifying uncertainty

- Transparent logical structures with probabilistic assessments make AI decisions explainable

- System reliability depends on integrating logic and probability in the architecture
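To make the link concrete, here is inference by enumeration in a three-node Bayesian network, a standard textbook construction. The network's structure factorizes the joint distribution, and a query is answered by summing that distribution over the unobserved variable. The variables and conditional probabilities are illustrative.

```python
from itertools import product

# Hypothetical network: Rain -> Sprinkler, and both -> WetGrass.
# All conditional probabilities are illustrative.
P_RAIN = 0.2
P_SPRINKLER_GIVEN_RAIN = {True: 0.01, False: 0.4}
P_WET_GIVEN = {  # keyed by (sprinkler, rain)
    (True, True): 0.99,
    (True, False): 0.90,
    (False, True): 0.80,
    (False, False): 0.0,
}


def joint(rain: bool, sprinkler: bool, wet: bool) -> float:
    """Factorized joint distribution defined by the network structure."""
    p_r = P_RAIN if rain else 1 - P_RAIN
    p_s_true = P_SPRINKLER_GIVEN_RAIN[rain]
    p_s = p_s_true if sprinkler else 1 - p_s_true
    p_w_true = P_WET_GIVEN[(sprinkler, rain)]
    p_w = p_w_true if wet else 1 - p_w_true
    return p_r * p_s * p_w


def p_rain_given_wet() -> float:
    """P(Rain | WetGrass) by summing out the hidden variable Sprinkler."""
    numerator = sum(joint(True, s, True) for s in (True, False))
    evidence = sum(joint(r, s, True) for r, s in product((True, False), repeat=2))
    return numerator / evidence


print(f"P(rain | grass is wet) = {p_rain_given_wet():.4f}")
```

The logical structure lives in the graph, which determines which factors appear in `joint`. The probabilities live in the tables. Keeping the two separate is what makes such models inspectable.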

Decision Theory and Economic Applications

Logic and probability are foundational elements of analysis and decision-making under uncertainty. John Maynard Keynes argued for the fundamental role of probabilistic reasoning in economic analysis and choice under uncertainty.

Integrating logical preference structures with probabilistic outcome assessments creates a mathematically rigorous foundation for rational choice.
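A standard expected-utility calculation shows one way to combine logical structure and probabilistic assessments into a choice rule. Here the logical structure is the set of mutually exclusive outcomes of each action. This is a generic sketch, not a model attributed to Keynes. The actions, probabilities, and utilities are hypothetical.

```python
# Hypothetical decision problem: each action leads to mutually exclusive
# outcomes with assumed probabilities and utilities (arbitrary units).
actions = {
    "add_redundancy": [(0.99, -10.0), (0.01, -200.0)],
    "do_nothing": [(0.90, 0.0), (0.10, -500.0)],
}


def expected_utility(outcomes: list[tuple[float, float]]) -> float:
    # The outcomes of one action must form a complete group of mutually
    # exclusive events, so their probabilities sum to 1.
    assert abs(sum(p for p, _ in outcomes) - 1.0) < 1e-9
    return sum(p * u for p, u in outcomes)


scores = {name: expected_utility(o) for name, o in actions.items()}
best = max(scores, key=scores.get)
print(scores, "->", best)
```

Adding redundancy scores about -11.9 against -50.0 for doing nothing, so it is chosen despite its certain up-front cost.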

| Application Domain | Task | Tool |
|---|---|---|
| Financial Engineering | Derivative valuation, portfolio management | Logic-probabilistic risk analysis |
| Systemic Risk | Quantitative assessment of interdependencies | Probabilistic agent models |
| Behavioral Economics | Accounting for cognitive limitations | Integration of deviations from rationality |

Future directions include deeper integration of behavioral aspects of decision-making with formal probabilistic models that account for systematic deviations from rationality.


Frequently Asked Questions

What is logic-probabilistic analysis?

It is a methodology that combines logical structures (Boolean algebra) with probability theory to quantify uncertainty. It is applied to the analysis of reliability, survivability, and safety of complex systems: logic describes the system's structure, and probabilities characterize its events quantitatively.

Who laid the foundations of logic-probabilistic analysis?

George Boole, in his 1854 work 'An Investigation of the Laws of Thought.' He created the mathematical foundation linking logical operations with probabilistic calculation, which became the basis for modern methods. P. S. Poretsky later developed the classical calculus of probabilities for random events.

How does probabilistic logic extend classical logic?

Probabilistic logic preserves the structure of logical inference while extending truth values to probabilities. Unlike pure statistical data analysis, it combines deductive with inductive reasoning, which makes it possible to formalize reasoning under uncertainty.

Are logic and probability incompatible?

No, that's a myth. They complement each other: classical logic works with certainty, and probability works with uncertainty. A mathematically rigorous integration of the two has existed since 1854 and has been developed and applied ever since.

Where is logic-probabilistic analysis applied?

In reliability engineering, risk assessment, artificial intelligence, and decision theory. The method is standard for safety analysis of complex technical systems and is also used in probabilistic programming and machine learning. In economics, it is used to model decisions under uncertainty.

What is the requirement of maximum specificity?

It is a formalized requirement in logic-probabilistic systems for eliminating statistical ambiguity problems (SAP). It ensures that the most specific probabilistic inference is selected from the possible alternatives, and it is critical for the correctness of semantic probabilistic inference.

How are the probabilities of complex events calculated?

With logic-probabilistic calculus. First, a logical model of the events is built from Boolean functions; then probability rules are applied to it. Probabilities of mutually exclusive events are summed, and conditional probabilities are used for dependent events. Tuple algebra automates these calculations.

What is a group of mutually exclusive events?

A set of events, no two of which can occur simultaneously. It is a fundamental concept in probabilistic modeling: the probabilities of such events add, and their sum cannot exceed 1. Such groups are used to construct correct logic-probabilistic models of systems.

How is the reliability of a complex system assessed?

A logical model of component failures is constructed as a fault tree or Boolean function, and the probability of system failure is then calculated from the component failure probabilities. The method accounts for structural connections and dependencies between components and allows quantitative assessment of survivability and safety.

What role does Boolean algebra play?

It serves as the common mathematical structure for logic and probability. Boolean operations (AND, OR, NOT) describe the logical relationships between events, and probability measures are assigned to the results of these operations. This has ensured methodological unity since 1854.

Is logic-probabilistic analysis used in artificial intelligence?

Yes, it's one of the core approaches to reasoning under uncertainty. Bayesian networks and probabilistic programming build on these principles. They are applied in machine learning, expert systems, and robotics for decision-making.

What is semantic probabilistic inference?

It integrates semantic relationships with probabilistic estimates in logical systems. This enables inference that accounts for both the logical structure of knowledge and the degree of confidence in it. It requires semantics to be formalized through probability measures.

Is probabilistic logic just statistics?

No, that's a misconception. Probabilistic logic preserves the rules of logical inference while extending them to uncertainty, whereas statistics focuses on data analysis. Probabilistic logic unifies deduction and induction within a single formal system.

What is tuple algebra used for?

It provides a modern computational framework for automating probabilistic reasoning. Tuples represent combinations of events and their probabilities, and algebraic operations on them carry out the logic-probabilistic computations. This simplifies implementing complex models in software.

Can the method be used for risk assessment?

Yes, it's a standard approach in quantitative risk analysis. The logical model describes threat scenarios, probabilities assess their likelihood, and the result is a quantitative risk measure. It is applied in industrial safety, finance, and project management.

What are the future directions of the field?

The field is developing toward AI, autonomous systems, and big data. This includes integration with machine learning for explainable AI and applications in quantum computing and distributed systems. Its interdisciplinary character is expanding its use in medicine, economics, and the social sciences.