Epistemic Trespassing as a Structural Defect in Contemporary Expert Discourse: Defining the Boundaries of Competence

Epistemic trespassing is a phenomenon in which a specialist with recognized expertise in one domain makes categorical claims in another domain without possessing the necessary methodological tools, contextual knowledge, or epistemic virtues (S003). This isn't simply an error in judgment—it's a systemic violation of epistemic norms.

When a Nobel laureate in chemistry holds forth on social policy, their words receive unearned credibility. The halo effect carries their authority into a domain where their methodological training does not apply.

🧩 Why Epistemic Trespassing Differs from Ordinary Incompetence

The key distinction is the presence of legitimate expertise in the original domain. A dilettante opining about quantum physics is easily dismissed. But when a specialist recognized in another field does the same, their words carry undeserved weight (S003).

The problem is compounded by the fact that the expert often fails to recognize the boundaries of their competence, believing that general critical thinking skills are universally applicable. This creates an illusion of competence where none exists.

🔎 Three Dimensions of Epistemic Trespassing

- Methodological: each discipline develops specific tools for managing uncertainty, validating data, and constructing inferences. Transferring these methods without adaptation leads to systematic errors.

- Contextual: an understanding of the problem's historical development, its key debates, and its terminological nuances. Without this context, judgment remains superficial even when the methodology is formally followed.

- Virtuous: epistemic virtues such as intellectual humility, sensitivity to counterarguments, and awareness of the limits of one's knowledge (S003).

Trespassing occurs when an expert ignores at least one of these dimensions.

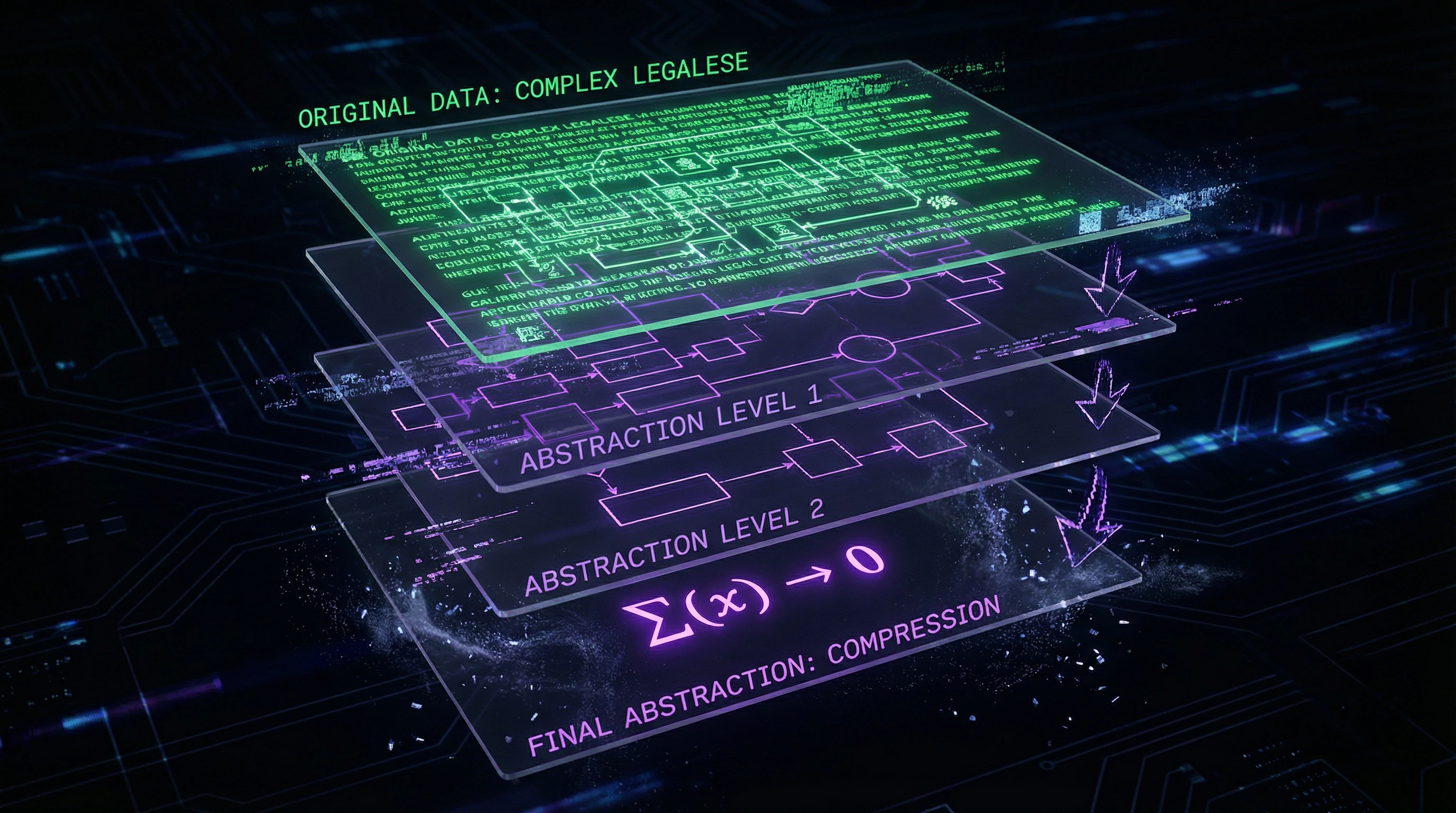

⚙️ Abstraction as a Mechanism of Epistemic Trespassing

Computer scientists are trained to create abstractions that simplify and generalize (S004). This professional virtue becomes an epistemic vice when abstraction is created prematurely, without understanding critical contextual details.

Premature abstraction that omits important details invites epistemic trespassing: the simplified model is then presented as relevant in contexts where it was never tested. This is how oversimplified algorithmic "fairness" metrics are born, erasing legal nuances and creating the illusion that discrimination problems have been solved.

The boundary between useful abstraction and dangerous oversimplification runs through the question: does the author recognize what they're omitting, and are they willing to acknowledge the limitations of their approach?

Steel Man: Five Strongest Arguments for Experts Making Interdisciplinary Statements

Before examining the problem of epistemic trespassing, we must honestly consider the strongest arguments in favor of experts having the right, and even the obligation, to speak beyond their narrow specialization. This is not a straw man but a steel man: the most convincing version of the opposing position.

💎 The Skills Transfer Argument: Critical Thinking as a Universal Tool

Experts in any field develop skills in critical thinking, evidence evaluation, and logical reasoning that are, on this view, universally applicable. A physicist skilled at analyzing complex causal relationships in quantum mechanics can, in principle, apply the same methodological rigor to economic or social questions.

A fresh outside perspective sometimes reveals patterns that specialists immersed in intradisciplinary debates overlook. This doesn't mean the perspective will be correct—but it may be useful.

🔬 The Interdisciplinary Necessity Argument: Complex Problems Require Integration

Many contemporary problems—from climate change to AI ethics—are fundamentally interdisciplinary. Requiring that a climatologist not comment on the economic implications of their models makes any meaningful dialogue impossible.

| Scenario | Epistemic Humility | Outcome |
|---|---|---|
| Climatologist stays silent on economics | Maintained | Economists make decisions without accounting for physical constraints |
| Economist stays silent on climate physics | Maintained | Climatologists miss economic realities of implementing models |
| Both speak, but without mutual respect | Violated | Conflict instead of integration |

📊 The Information Asymmetry Argument: Experts See What's Hidden from the Public

Experts often possess access to information, methodological tools, or understanding of fundamental principles unavailable to the general public. Even when speaking beyond their narrow specialization, they can provide more grounded analysis than non-specialists.

Expert silence in public discourse creates a vacuum filled by outright misinformation. This is especially critical in areas where alternative practices or financial schemes actively compete for audience attention.

🧠 The Social Responsibility Argument: Experts Are Obligated to Warn of Dangers

When a nuclear physicist sees policymakers making decisions about nuclear weapons based on fundamental misunderstanding of physical processes, isn't there an obligation to speak up? When an epidemiologist observes catastrophic errors in public health policy, is epistemic humility more important than potentially saved lives?

This argument appeals to the expert's moral duty to use their knowledge for the public good—even if it means crossing the boundaries of narrow competence.

⚙️ The Knowledge Evolution Argument: Disciplinary Boundaries Are Artificial and Fluid

Boundaries between disciplines are historically contingent and constantly revised. Biochemistry emerged from chemists "trespassing" into biology, and neuroeconomics from neuroscientists "trespassing" into economics (S005).

- Rigid adherence to disciplinary boundaries preserves outdated knowledge structures

- Prevents innovation and the emergence of new fields

- Epistemic trespassing is the mechanism through which science evolves

- What's considered a violation today may become the norm tomorrow

All five arguments have serious merit. But their persuasiveness creates a dangerous illusion: that the absence of competence boundaries is simply the price of progress.

Empirical Anatomy of Epistemic Trespassing: Where Abstraction Kills Meaning

The strongest arguments defending interdisciplinarity don't negate empirical evidence of systematic knowledge distortion. The problem isn't boundary-crossing itself, but the mechanisms through which it occurs.

📊 The Four-Fifths Rule Case: How Computer Scientists Reinvented a Legal Concept

Computer scientists working on algorithmic fairness have widely equated the legal concept of "disparate impact" with the statistical "four-fifths rule," a simplified test that is merely one of many preliminary assessment tools (S004). This erasure of legal nuance signals a critical problem: an abstraction convenient for machine learning destroys meaning that lawyers developed over decades.

When a specialist translates a concept from one discipline to another, they often lose not just details but the very logic that makes the concept work in its original context.
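The arithmetic of the four-fifths rule is simple enough to show in full. In the U.S. Uniform Guidelines on Employee Selection Procedures, it compares each group's selection rate with the rate of the most-selected group and treats a ratio below 0.8 as preliminary evidence of adverse impact. The minimal Python sketch below is illustrative only; the function names and the applicant numbers are hypothetical. Note what the code cannot represent: job-relatedness, business necessity, less discriminatory alternatives, sample size, and the other questions a disparate-impact analysis turns on.

```python
def impact_ratios(selected: dict[str, int], applicants: dict[str, int]) -> dict[str, float]:
    """Selection rate of each group divided by the highest group's selection rate."""
    rates = {group: selected[group] / applicants[group] for group in applicants}
    highest = max(rates.values())
    return {group: rate / highest for group, rate in rates.items()}


def four_fifths_flags(ratios: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold (0.8 = four-fifths)."""
    return [group for group, ratio in ratios.items() if ratio < threshold]


# Hypothetical hiring data: 48 of 80 group-A applicants and 12 of 40 group-B applicants selected.
ratios = impact_ratios({"A": 48, "B": 12}, {"A": 80, "B": 40})
print(ratios)                     # {'A': 1.0, 'B': 0.5}
print(four_fifths_flags(ratios))  # ['B']
```

Group B's selection rate (0.3) is half of group A's (0.6), so B is flagged. A fairness metric that stops at this ratio keeps the number and discards the legal analysis that surrounds it.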

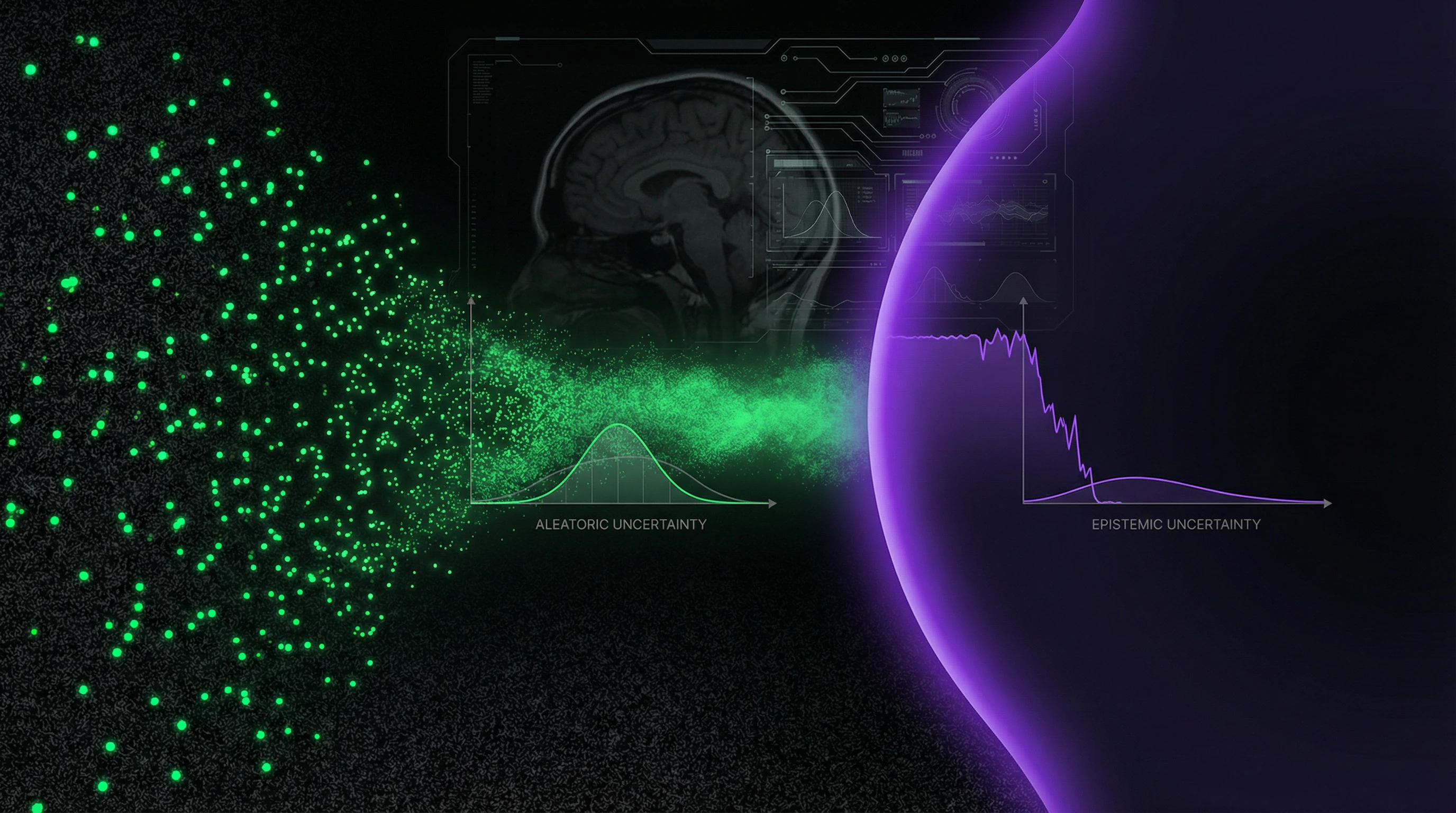

🧪 Radiotherapy and Epistemic Uncertainty: When Algorithms Don't Know What They Don't Know

Precision in organ contouring for radiotherapy planning is critical for patient safety (S002). However, automatic segmentation algorithms developed by machine learning specialists without deep understanding of clinical uncertainty create a new class of risks.

Research indicates that epistemic uncertainty estimation is effective at identifying cases where model predictions are unreliable (S002). What algorithm developers often miss is that clinical uncertainty is qualitatively different from statistical uncertainty.

- Statistical uncertainty is variability in data that can be measured and reduced.

- Clinical uncertainty is irreducible ambiguity of the object itself (tumor boundary, optimal dose).

- Algorithms can reduce the former but cannot resolve the latter.
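Epistemic uncertainty is often estimated from disagreement among several models, for example an ensemble or repeated Monte Carlo dropout passes. The Python sketch below uses one common entropy-based decomposition for a single voxel: total predictive entropy splits into the average entropy of the individual models (uncertainty they all share) and a mutual-information term (their disagreement, the epistemic part). This is an assumed, generic method, not necessarily the one used in the studies reviewed in (S002), and the probabilities in both cases are hypothetical.

```python
import math


def binary_entropy(p: float) -> float:
    """Entropy, in nats, of a two-class prediction with P(tumor) = p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log(p) + (1.0 - p) * math.log(1.0 - p))


def decompose(member_probs: list[float]) -> tuple[float, float, float]:
    """Return (total, shared, epistemic) uncertainty for one voxel.

    total     = entropy of the ensemble's mean prediction
    shared    = mean entropy of the individual members (often called aleatoric)
    epistemic = total - shared, the mutual information between prediction and model
    """
    mean_p = sum(member_probs) / len(member_probs)
    total = binary_entropy(mean_p)
    shared = sum(binary_entropy(p) for p in member_probs) / len(member_probs)
    # Mutual information is never negative; clamp floating-point rounding noise.
    return total, shared, max(total - shared, 0.0)


# Hypothetical P(voxel is tumor) from five ensemble members.
cases = {
    "members agree it is ambiguous": [0.5, 0.5, 0.5, 0.5, 0.5],
    "members contradict each other": [0.05, 0.95, 0.1, 0.9, 0.5],
}
for label, probs in cases.items():
    total, shared, epistemic = decompose(probs)
    print(f"{label}: total={total:.3f} shared={shared:.3f} epistemic={epistemic:.3f}")
```

In the first case the five models agree that the voxel is a coin flip, so the epistemic term is zero and an epistemic-uncertainty filter would pass it without comment. Whether that shared 0.5 reflects a genuinely ambiguous tumor boundary or a labeling habit in the training data is a clinical question the numbers cannot answer.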

🧾 Methodological Gap: Why Statistical Significance Doesn't Equal Clinical Relevance

The current research landscape has significant gaps: the absence of ground truth for uncertainty assessment and a shortage of empirical evaluations (S002). The key problem is that machine learning specialists rely on the concept of "ground truth" (a single correct answer), which often does not exist in clinical practice.

| Object | ML Specialist Position | Clinician Position |
|---|---|---|
| Tumor boundary | An objective boundary exists that needs to be found | Boundary is blurred; choice depends on clinical judgment |
| Optimal radiation dose | Maximize prediction accuracy | Balance efficacy and risk for specific patient |
| Algorithm error | Random variation that can be minimized | Systematic error that needs to be understood and controlled |
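The "ground truth" problem can be made concrete with the Dice similarity coefficient, 2|A∩B| / (|A| + |B|), a standard overlap measure for comparing contours. The Python sketch below is a toy: the square "contours" and the `square` helper are hypothetical, and real inter-observer studies work on clinical images. Two clinicians whose boundaries differ by two voxels agree with a Dice of 0.8, while a model drawn between them scores 0.9 against each, apparently "beating" the experts only because each expert is treated in turn as the truth.

```python
def dice(a: set[tuple[int, int]], b: set[tuple[int, int]]) -> float:
    """Dice similarity coefficient of two voxel sets: 2|A & B| / (|A| + |B|)."""
    if not a and not b:
        return 1.0  # two empty contours agree trivially
    return 2 * len(a & b) / (len(a) + len(b))


def square(x0: int, y0: int, size: int) -> set[tuple[int, int]]:
    """Hypothetical toy contour: the voxels of a size-by-size square at (x0, y0)."""
    return {(x, y) for x in range(x0, x0 + size) for y in range(y0, y0 + size)}


clinician_1 = square(0, 0, 10)  # 100 voxels
clinician_2 = square(2, 0, 10)  # same size, boundary placed 2 voxels further right
model = square(1, 0, 10)        # halfway between the two clinicians

print(dice(clinician_1, clinician_2))                      # 0.8
print(dice(model, clinician_1), dice(model, clinician_2))  # 0.9 0.9
```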

🧬 Expert Testimony in Court: Institutionalized Epistemic Trespassing

The legal context provides vivid examples of institutionalized epistemic trespassing. Experts are regularly invited to testify on matters beyond their narrow specialization, and the legal system often lacks tools to distinguish between legitimate interdisciplinarity and trespassing (S005).

Attorneys strategically exploit the halo effect: they present experts with impressive credentials in one field to make statements in another. A judge sees "PhD" and assumes competence, failing to distinguish between a credential earned in one field and actual depth of knowledge in the field at issue.

⚠️ Cognitive Models and Epistemic Policies: Hidden Assumptions in AI Systems

Belief formation behavior is governed by implicit, untested epistemic policies (S006). This applies not only to humans but to AI systems that inherit the epistemic assumptions of their creators.

Frontier models enforce coherence of identity and position, penalizing arguments attributed to sources whose expected ideological stance conflicts with the content (S006). This is second-order epistemic trespassing: the system imposes a simplified model of how identity and beliefs should relate, ignoring the complexity of the actual landscape.

- First-Order Epistemic Policy: rules that guide a person in forming beliefs (which sources to trust, what evidence to require).

- Second-Order Epistemic Policy: rules embedded in the system that determine which first-order policies are considered legitimate. An AI system may block an argument not because it is incorrect, but because it violates the built-in model of coherence.

Mechanisms and Causality: Why Epistemic Trespassing Is Systematic, Not Random

Epistemic trespassing is not a series of individual judgment errors. It is a systematic phenomenon generated by structural features of modern knowledge production, academic institutions, and public discourse.

🔁 Halo Effect and Trust Transfer: The Cognitive Architecture of Epistemic Trespassing

The basic mechanism is the halo effect: the tendency to transfer positive evaluation of one attribute to other unrelated attributes. A Nobel laureate in physics speaks about economics, and the audience automatically transfers the trust earned in physics to economic judgments.

This transfer occurs at a prereflective level and is extremely resistant to correction, even when people recognize its irrationality (S001).

🧩 Institutional Incentives: Why the Academic System Encourages Trespassing

Modern academia creates powerful incentives for epistemic trespassing. Interdisciplinarity is officially encouraged by grant agencies. Public visibility is often valued over narrow specialization.

Experts who speak on a wide range of issues receive more conference invitations, more media citations, more consulting opportunities—regardless of actual competence in those areas.

- Grant agencies require "translational potential" and "social impact"

- Media influence raises university rankings and attracts students

- Consulting and expert testimony generate revenue distributed between faculty and administration

- Narrow specialists are perceived as less "innovative" in competitive environments

🧷 Abstraction as Professional Deformation: Why Programmers Are Particularly Vulnerable

The habit of simplifying and generalizing through abstraction, which computer science training instills (S004), is precisely what turns into a source of systematic epistemic trespassing.

A programmer who has successfully built an abstraction for a complex technical system transfers the approach to social, legal, or ethical problems without realizing that premature abstraction there is not merely ineffective but actively harmful. It masks a fundamental misunderstanding of context behind the illusion of a solution.

Abstraction works in engineering because physical laws are universal. In social systems, universality is rare, and context is the rule.

⚙️ Thermodynamics of Learning and Epistemic Costs: Physical Limits of Knowledge

Learning is a fundamentally irreversible process when performed in finite time, and implementing epistemic structure necessarily entails entropy production (S008). For a given learning time, this entropy production has a lower bound that depends only on the Wasserstein distance between the initial and final ensemble distributions and is independent of the specific learning algorithm.

There are fundamental physical limits to the speed of knowledge acquisition—and these limits are independent of intelligence or methodology. Epistemic trespassing often occurs when experts ignore these limits, believing their general cognitive abilities allow them to quickly master a new field.
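To show the shape of such a bound, here is a Python sketch built on one well-known form of the thermodynamic speed limit for overdamped stochastic dynamics (with k_B = 1): Σ ≥ W₂(p₀, p_τ)² / (D τ), where W₂ is the 2-Wasserstein distance between the initial and final distributions, τ the duration of the process, and D a diffusion constant. Whether (S008) uses exactly this form and these constants is an assumption here, and the sample ensembles, D, and the durations are hypothetical. In one dimension, W₂ between two equal-size samples reduces to pairing their sorted values. The 1/τ scaling is the relevant feature: halving the time allowed to reach the same final distribution doubles the minimum entropy cost.

```python
import math
import random


def wasserstein2_1d(xs: list[float], ys: list[float]) -> float:
    """2-Wasserstein distance between two equal-size 1-D samples.

    In one dimension the optimal transport plan pairs sorted values.
    """
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes equal sample sizes")
    pairs = zip(sorted(xs), sorted(ys))
    return math.sqrt(sum((x - y) ** 2 for x, y in pairs) / len(xs))


def min_entropy_production(w2: float, tau: float, diffusion: float = 1.0) -> float:
    """Assumed speed-limit form: Sigma >= W2**2 / (D * tau), in units of k_B."""
    return w2 ** 2 / (diffusion * tau)


rng = random.Random(0)
initial = [rng.gauss(0.0, 1.0) for _ in range(1000)]  # hypothetical initial ensemble
final = [x + 2.0 for x in initial]                    # same shape, shifted by 2

w2 = wasserstein2_1d(initial, final)  # 2.0 up to rounding
for tau in (0.5, 1.0, 2.0, 4.0):
    bound = min_entropy_production(w2, tau)
    print(f"tau={tau}: entropy production >= {bound:.2f}")
```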

| Knowledge Domain | Type of Abstraction | Risk of Premature Abstraction |
|---|---|---|
| Physics, mathematics | Universal laws | Low: laws are genuinely universal |
| Biology, medicine | Mechanisms with exceptions | Medium: context matters, but patterns exist |
| Sociology, law, economics | Contextual patterns | High: abstraction often destroys meaning |

Conflicts, Contradictions, and Boundaries of Confidence: Where Sources Diverge and Why It Matters

An honest analysis of epistemic trespassing requires acknowledging areas where sources contradict each other or where data is insufficient for definitive conclusions. These zones of uncertainty don't weaken the argument; on the contrary, they demonstrate the epistemic virtue often lacking in those who commit epistemic trespassing.

🔎 Normative vs Descriptive Question: Should Experts Stay Silent or Learn to Speak Differently?

A key disagreement in the literature concerns the normative question: is epistemic trespassing always epistemically vicious, or is the problem in how exactly experts speak outside their field?

(S003) argues that there are cases where trespassing is fundamentally impermissible. An alternative position suggests the problem is solved through epistemic humility, explicit marking of competence boundaries, and willingness to engage in dialogue with experts in the target field.

- Position 1: trespassing is structurally vicious, regardless of caveats

- Position 2: trespassing is permissible if accompanied by explicit boundary marking and openness to criticism

- Position 3: the problem isn't trespassing itself, but social effects (halo effect, attention asymmetry)

Empirical data doesn't yet allow us to definitively resolve this dispute. This isn't a research shortcoming—it's a sign that the question is partly normative, not purely descriptive.

📊 Measuring Epistemic Trespassing: Methodological Challenges

There's a fundamental methodological problem: how do we objectively determine when epistemic trespassing has occurred? Disciplinary boundaries are blurred and historically contingent.

Interdisciplinary fields by definition require integrating knowledge from different sources. Some researchers propose operationalizing epistemic trespassing through absence of publications in peer-reviewed journals of the target field, but this criterion excludes legitimate cases of systematic reviews and meta-analyses by researchers who haven't undergone formal socialization in the discipline but possess relevant expertise.

| Criterion | Problem | Consequence |
|---|---|---|
| Publications in target discipline | Excludes newcomers and transdisciplinary researchers | Ossifies disciplinary boundaries |
| Formal education | Ignores practical experience and self-education | Privileges institutional status |
| Citation by target field specialists | Depends on social networks and visibility | Reflects influence, not competence |
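The publication-based criterion described above can be written as a one-line rule, which makes its blind spot easy to see. In this hypothetical Python sketch, `Researcher`, its fields, and `flags_trespass` are illustrative names, not an established metric: a claim is flagged whenever its author has no peer-reviewed publications in the claim's field. The rule never consults the `methods` field, so a statistician conducting a sound meta-analysis in epidemiology is flagged exactly like a physicist opining on economics.

```python
from dataclasses import dataclass, field


@dataclass
class Researcher:
    """Hypothetical record of a researcher's track record."""
    name: str
    published_fields: set[str] = field(default_factory=set)  # peer-reviewed venues
    methods: set[str] = field(default_factory=set)           # methodological expertise


def flags_trespass(author: Researcher, claim_field: str) -> bool:
    """Publication criterion: flag any claim outside the author's publishing fields.

    Note that author.methods is never consulted.
    """
    return claim_field not in author.published_fields


physicist = Researcher("physicist", {"physics"}, {"differential equations"})
statistician = Researcher("statistician", {"statistics"}, {"meta-analysis"})

print(flags_trespass(physicist, "economics"))        # True
print(flags_trespass(statistician, "epidemiology"))  # True, even for a sound meta-analysis
print(flags_trespass(physicist, "physics"))          # False
```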

🧾 The Role of Epistemic Virtues: Is Humility Enough?

One of the central questions: can epistemic humility compensate for lack of specialized knowledge? If an expert explicitly marks the boundaries of their competence, acknowledges uncertainty, and invites criticism from specialists in the target field, does this still constitute epistemic trespassing?

Sources diverge in their answer. (S003) argues that epistemic virtues can make interdisciplinary statements legitimate. Others point out that in practice, epistemic humility rarely manifests sufficiently, and the very act of public statement creates a halo effect regardless of caveats.

- Halo Effect in the Context of Epistemic Trespassing: audiences perceive an expert's statement in one field as authoritative in another, even when the expert explicitly qualifies the boundaries. Mechanism: trust in the source transfers to the content, bypassing critical evaluation.

- Attention Asymmetry: a prominent scientist's statement receives more attention than criticism from a specialist in the target field. Result: trespassing shapes public opinion faster than it can be refuted.

- Social Legitimation Through Humility: explicit acknowledgment of competence boundaries can be perceived as honesty, which paradoxically strengthens trust. Epistemic virtue becomes a tool of persuasion.

The problem isn't that experts speak falsehoods. The problem is that these social effects operate independently of intentions and caveats.

Cognitive Anatomy of Persuasiveness: Which Mental Traps Does Epistemic Trespassing Exploit

Epistemic trespassing is effective not because the arguments are strong, but because it exploits systematic cognitive vulnerabilities. Understanding these mechanisms is critical for developing resilience.

🕳️ The Halo Effect as a Fundamental Vulnerability

The halo effect is a fundamental feature of social information processing. A common evolutionary explanation is that using success in one domain as a proxy for general competence once made sense: in small hunter-gatherer groups, a successful hunter probably possessed other useful skills as well.

In the modern world of narrow specialization, this heuristic systematically fails. On this account, we trust Nobel laureates in physics on matters of economics because the brain did not evolve to tell highly specialized kinds of expertise apart.

⚠️ Identity-Position Coherence

Research shows that frontier AI models enforce identity-position coherence, penalizing arguments attributed to sources whose expected ideological position conflicts with the content (S006). This tendency likely mirrors a deep human cognitive predisposition: we expect people's beliefs to form coherent clusters.

A physicist "should" be a rationalist. A humanities scholar "should" be skeptical of technology. These expectations create cognitive dissonance when an expert speaks "out of character," and we resolve it either by rejecting the statement or by revising our assessment of the expert as a whole.

🧠 The Illusion of Understanding Through Abstraction

When an expert from another field offers a simple abstraction for a complex problem, it is perceived as insight rather than oversimplification. A physicist reducing an economic problem to a system of differential equations appears to have penetrated to an essence unavailable to economists mired in details.

This is a cognitive illusion: an abstraction that ignores contextual variables does not reveal essence—it conceals it. But the brain interprets mathematical elegance as a sign of truth.

The trap also feeds confirmation bias: once the abstraction has been proposed, we search for facts that confirm it and ignore those that refute it.

📊 Three Mechanisms of Persuasiveness Exploitation

| Mechanism | How It Works | Why It's Dangerous |
|---|---|---|
| Authority + novelty | An expert from a prestigious field says something unexpected | Novelty appears as insight, authority blocks criticism |
| Semantic distance | Terminology from another discipline is used | The listener cannot verify whether the terms are correctly applied |
| Social proof | If an authority says it, other experts must agree | In reality, colleagues remain silent because it's not their field |

🔍 Verification Protocol: How to Distinguish Insight from Oversimplification

- Ask: can the expert explain why their abstraction works in this particular domain but not in an adjacent one?

- Check: does their argument mention contextual variables they are ignoring?

- Compare: does their conclusion align with the consensus of specialists in this field?

- Evaluate: do they propose a mechanism, or only correlation disguised as causation?

Epistemic trespassing is persuasive not because it is correct, but because it exploits the architecture of our trust. Recognizing this architecture is the first step toward making it harder to exploit.