What Systematic Review and Meta-Analysis Actually Mean: Definitions That Hide a Critical Difference

A systematic review is a structured process of searching, selecting, and critically evaluating all available research on a specific question, conducted according to a predetermined protocol (S003). Meta-analysis is a statistical method for combining quantitative results from multiple studies to obtain a pooled effect estimate (S008).

The key distinction: a systematic review can exist without meta-analysis, but meta-analysis without systematic review loses methodological rigor.

- Systematic Review: protocol-driven search and evaluation of all available studies. Guarantees completeness, but not quality of source data.

- Meta-Analysis: statistical pooling of results. Increases precision of effect estimates, but does not correct systematic errors in source studies.
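To make "statistical pooling" concrete, here is a minimal sketch of inverse-variance (fixed-effect) pooling, one common way to combine study results. The effect sizes, standard errors, and the size of the bias are hypothetical values chosen for illustration, not data from any cited study.

```python
import math

def pool_fixed_effect(effects, std_errors):
    """Inverse-variance (fixed-effect) pooled estimate and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se

# Hypothetical study results: effect estimates and their standard errors.
effects = [0.30, 0.10, 0.25]
std_errors = [0.20, 0.10, 0.20]

pooled, pooled_se = pool_fixed_effect(effects, std_errors)
print(f"pooled = {pooled:.3f}, SE = {pooled_se:.3f}")

# Add the same hypothetical systematic error to every study.
bias = 0.15
biased, biased_se = pool_fixed_effect([y + bias for y in effects], std_errors)
print(f"biased pooled = {biased:.3f}, SE = {biased_se:.3f}")
```

The pooled standard error is smaller than that of any single study, yet a bias shared by all studies passes straight through to the pooled estimate while the standard error stays the same.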

🔎 Why Confusion Between Terms Creates False Confidence

The study on H. pylori antibiotic resistance in Russia was registered in PROSPERO and followed PRISMA 2020 guidelines, formally meeting systematic review criteria (S001). However, prospective protocol registration does not guarantee quality of included studies—it merely documents authors' intentions.

When we see the phrase "systematic review and meta-analysis," the brain automatically assigns the highest level of evidence to the results, ignoring the question of source data heterogeneity.

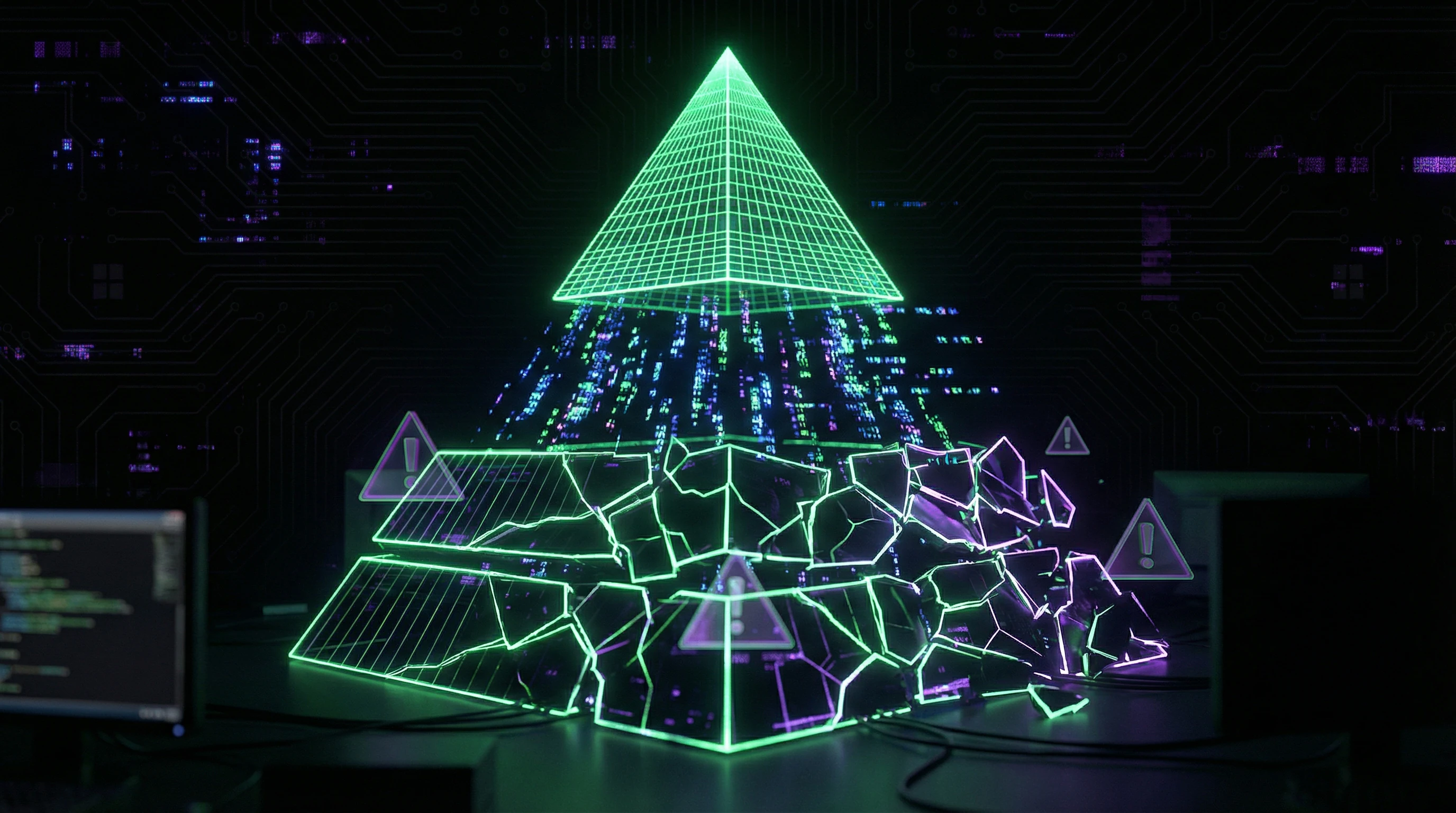

🧱 Evidence Hierarchy: Where Meta-Analysis Sits and What Ranks Higher

The traditional evidence-based medicine pyramid places systematic reviews and meta-analyses at the apex, above randomized controlled trials (RCTs) and cohort studies. But this hierarchy only works when critical conditions are met: population homogeneity, standardized measurement methods, absence of systematic errors in source studies.

| Condition | Met | Result |
|---|---|---|
| Homogeneous populations | Yes | Meta-analysis strengthens evidence |
| Homogeneous populations | No | Meta-analysis masks heterogeneity |
| Standardized methods | Yes | Results are comparable |
| Standardized methods | No | Combining incomparables |

Meta-analysis of low-quality studies does not become high-quality evidence—it becomes a precise estimate of systematic error.

⚙️ PRISMA 2020 Protocol: What It Guarantees and What It Doesn't

PRISMA guidelines define a reporting standard, not a source data quality standard (S001). A study can perfectly follow PRISMA while combining incomparable populations, using outdated diagnostic methods, or ignoring critical confounders.

- Search completeness (MEDLINE, EMBASE, regional indexes)—meets requirements

- Transparency of inclusion criteria—documented

- Quality of source studies—not guaranteed

- Population comparability—not verified by PRISMA

- Absence of confounders—left to authors' discretion

Search completeness does not equal evidence completeness. This distinction is critical for understanding the meta-level of the evidence base.

Five Arguments for Meta-Analysis: Why the Methodology Seems Bulletproof

Before examining limitations, it's essential to understand the method's strengths. Meta-analysis solves real problems in medical science, and ignoring these advantages means missing the context in which researchers operate.

🔬 Increasing Statistical Power: When Small Samples Combine into Large Ones

An individual study with a sample of 50 patients may fail to detect a statistically significant treatment effect. A meta-analysis of 20 such studies (n=1000) increases power and allows detection of a real but small effect.
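The power gain can be illustrated with a rough calculation. The sketch below approximates the power of a two-sided z-test for a two-arm comparison. The standardized effect size of 0.2 is an assumed value, and treating 20 pooled studies as one sample of 1000 assumes the studies are homogeneous.

```python
from statistics import NormalDist

def two_arm_power(effect_size, n_total, alpha=0.05):
    """Approximate power of a two-sided z-test comparing two equal arms.

    effect_size is the standardized mean difference (Cohen's d).
    """
    normal = NormalDist()
    z_crit = normal.inv_cdf(1 - alpha / 2)
    # d divided by its standard error, which is 2 / sqrt(n_total)
    # when each arm holds n_total / 2 patients.
    noncentrality = effect_size * (n_total ** 0.5) / 2
    return normal.cdf(noncentrality - z_crit) + normal.cdf(-noncentrality - z_crit)

small_effect = 0.2  # hypothetical "real but small" effect
power_single = two_arm_power(small_effect, 50)
power_pooled = two_arm_power(small_effect, 20 * 50)
print(f"single study (n=50):  power = {power_single:.2f}")
print(f"pooled data (n=1000): power = {power_pooled:.2f}")
```

Under these assumptions a single 50-patient study would usually miss the effect, while the pooled sample would usually detect it.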

In a study of cognitive task analysis (CTA) in surgical education, a meta-analysis of 12 studies showed a large training effect favoring CTA compared to traditional teaching methods (S007). None of these 12 studies individually had sufficient power for such a conclusion.

📊 Resolving Contradictions: When Studies Yield Different Results

When one RCT shows intervention effectiveness while another shows no effect, clinicians face uncertainty. Meta-analysis allows quantitative assessment of result heterogeneity and determines whether differences are random or systematic.

This is especially important in fields with high population variability, such as antibiotic resistance, where regional differences can be critical (S001).

🧪 Detecting Subgroup Effects: When Treatment Doesn't Work for Everyone

Meta-analysis enables subgroup analysis that's impossible within individual studies due to insufficient power. For example, one can assess whether an antibiotic's effect differs by patient age, geographic region, or resistance diagnostic method.

In a meta-analysis of H. pylori, authors were able to evaluate temporal changes in resistance over a 15-year period, which would have been impossible within a single study (S001).

🧾 Standardizing Evidence: When Unified Assessment Is Needed for Clinical Guidelines

Clinical guidelines require systematic evaluation of all available evidence. Meta-analysis provides a quantitative summary estimate that can be used to formulate recommendations.

- The finding that clarithromycin resistance exceeds the 15% threshold established by the Maastricht VI Consensus directly impacts revision of empirical treatment strategies (S001).

- Without meta-analysis, such a conclusion would be based on subjective assessment of individual studies.

⚙️ Living Systematic Reviews: When Evidence Updates in Real Time

The concept of living systematic reviews and prospective meta-analyses addresses the problem of evidence obsolescence (S002). Instead of a static document that becomes outdated a year after publication, a living review continuously updates as new studies emerge.

The ALL-IN meta-analysis methodology proposes infrastructure for such updates, which is especially important in rapidly evolving fields such as evaluating empathy in AI medical chatbots (S004).

Critical Analysis of the Evidence Base: What Real Meta-Analyses Show and What They Hide

Let's examine specific studies to demonstrate the gap between methodological declarations and actual limitations.

🧪 The H. pylori Case in Russia: When Meta-Analysis Reveals a Critical Problem

A systematic review by Andreev et al. assessed antibiotic resistance of Helicobacter pylori based on 15 years of research (S001). The search was conducted in MEDLINE/PubMed, EMBASE, Web of Science, and Google Scholar following PRISMA 2020, pre-registered in PROSPERO.

Main finding: clarithromycin resistance in Russia exceeds the 15% threshold established by the Maastricht VI Consensus (S001). This requires revision of empirical treatment strategies and directly impacts first-line therapy selection.

However, methodological details not disclosed in the abstract are critically important: which resistance determination methods were used? Culture-based phenotypic testing or molecular (genotypic) assays? Differences in methods lead to systematic differences in estimates.

📊 Cognitive Task Analysis in Surgery: When Meta-Analysis Shows Large Effects

Alexander Coombs' systematic review of cognitive task analysis (CTA) in surgical education covered 12 studies (S007). Meta-analysis showed significant improvement in procedural knowledge and technical skills among trainees using CTA compared to traditional methods.

Effect size was large, identifying CTA as a highly effective supplement to traditional training (S007).

Critical question: what was measured as "technical skills"? Objective structured assessments (OSATS), completion time, error rates, or subjective instructor ratings? Outcome heterogeneity is the main problem in educational meta-analyses, where measurement standardization is significantly lower than in clinical trials.

🧬 AI Chatbots vs. Physicians: Meta-Analysis of Empathy in Healthcare

A systematic review comparing empathy of AI chatbots and healthcare providers is particularly interesting due to the complexity of measuring empathy (S004). Empathy is a multidimensional construct: cognitive, emotional, and behavioral components.

How do you combine studies using different empathy scales, different interaction types (text, voice, video), and different patient populations?

Meta-analysis can provide precise quantitative estimates, but this precision is illusory if the original studies measure different constructs under the same name. Statistical homogeneity (low I²) does not guarantee conceptual homogeneity.

🔎 Network Meta-Analysis: When Comparing Interventions Never Directly Compared

Network meta-analysis allows comparison of multiple interventions even if they were never directly compared (S005). If there are RCTs comparing A vs B and B vs C, network meta-analysis estimates the relative effectiveness of A vs C through the common comparator B.

This is based on the critical assumption of transitivity: populations, study designs, and outcome definitions are sufficiently similar for indirect comparison to be valid.
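The arithmetic of an indirect comparison through a common comparator (often called an adjusted indirect comparison, or the Bucher method) is short enough to sketch. The log odds ratios and standard errors below are hypothetical. The calculation is only meaningful if the transitivity assumption holds.

```python
import math

def indirect_comparison(d_ab, se_ab, d_bc, se_bc):
    """Adjusted indirect comparison of A vs C through common comparator B.

    d_ab is the effect of A relative to B, d_bc the effect of B relative to C,
    both on an additive scale (e.g. mean difference or log odds ratio).
    """
    d_ac = d_ab + d_bc
    # The two estimates come from independent trials, so their variances add.
    se_ac = math.sqrt(se_ab ** 2 + se_bc ** 2)
    return d_ac, se_ac

# Hypothetical log odds ratios from two separate sets of trials.
d_ac, se_ac = indirect_comparison(d_ab=-0.30, se_ab=0.10, d_bc=-0.20, se_bc=0.15)
low, high = d_ac - 1.96 * se_ac, d_ac + 1.96 * se_ac
print(f"A vs C: {d_ac:.2f} (95% CI {low:.2f} to {high:.2f})")
```

The indirect estimate is always less precise than either direct comparison, and nothing in the formula can detect a transitivity violation.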

| Scenario | Problem | Consequence |
|---|---|---|
| A vs B in mild disease; B vs C in severe | Transitivity violation | Indirect comparison A vs C is systematically biased |
| Different populations (age, sex, comorbidities) | Heterogeneity of effect modifiers | Averaged effect is not representative of any subgroup |
| Different follow-up intervals | Temporal incomparability | Effect may be an artifact of different follow-up periods |

⚠️ Mediation Analysis in Systematic Reviews: When Causality Becomes Speculation

Systematic reviews of mediation analyses face unique challenges (S008). Mediation analysis explains through which mechanisms an intervention affects outcomes: does physical activity reduce depression directly or through improved sleep quality?

Pooling mediation analyses requires that all studies measure the same mediators with the same methods and use the same statistical models.

In practice, this is rarely fulfilled. Differences in operationalization of mediators, measurement timing, and statistical approaches make meta-analysis of mediation effects extremely vulnerable to systematic errors. The result may be statistically significant, but causal interpretation remains speculative.

This problem is particularly acute in meta-level analyses, where attempts to generalize intervention mechanisms often lead to aggregation artifacts.

Mechanisms and Confounders: Why Correlation in Meta-Analysis Does Not Equal Causation

Meta-analysis combines study results, but cannot correct fundamental design limitations of those studies. If all included studies are observational, the meta-analysis remains observational, with all inherent limitations in establishing causality.

🧬 The Problem of Unmeasured Confounders: What Didn't Make It Into the Model

In meta-analysis of H. pylori antibiotic resistance, critical confounders may include: prior antibiotic use in the population, availability of over-the-counter antibiotics, regional differences in H. pylori strains, culturing methods and resistance determination techniques (S001). If included studies did not control for these factors or controlled them differently, the pooled resistance estimate will conflate true resistance with systematic methodological differences.

An unmeasured confounder is a variable that influences both the exposure and the outcome but was not captured in the study. It cannot be adjusted for statistically and remains a source of systematic error indefinitely.

🔁 Temporal Dynamics: When Pooling 15 Years of Data Masks Trends

The Russian meta-analysis of H. pylori assessed temporal changes in resistance over 15 years (S001). But if resistance increased nonlinearly—for example, a sharp spike in the last 5 years—the pooled estimate across the entire period may underestimate the current situation.

Meta-regression by publication year can partially address this problem, but only if the number of studies is sufficient to detect a temporal trend. Without this analysis, you get an averaged figure that reflects reality neither in the past nor in the present.
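A minimal sketch of meta-regression by publication year, implemented as weighted least squares on raw proportions, shows how a pooled average can lag behind a rising trend. All years, proportions, and sample sizes are hypothetical. Real analyses usually transform proportions first (for example with a logit transform) and would also model between-study variance.

```python
def weighted_linear_fit(x, y, weights):
    """Weighted least squares fit of y = intercept + slope * x.

    Also returns the weighted mean of y, i.e. the pooled estimate
    that ignores x entirely.
    """
    total = sum(weights)
    x_bar = sum(w * xi for w, xi in zip(weights, x)) / total
    y_bar = sum(w * yi for w, yi in zip(weights, y)) / total
    sxx = sum(w * (xi - x_bar) ** 2 for w, xi in zip(weights, x))
    sxy = sum(w * (xi - x_bar) * (yi - y_bar) for w, xi, yi in zip(weights, x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope, y_bar

# Hypothetical studies: publication year, resistance proportion, sample size.
years = [2008, 2012, 2016, 2020, 2022]
resistance = [0.10, 0.11, 0.13, 0.20, 0.24]
sample_sizes = [200, 150, 180, 220, 160]

# Inverse-variance weights using the binomial variance p * (1 - p) / n.
weights = [n / (p * (1 - p)) for p, n in zip(resistance, sample_sizes)]

intercept, slope, pooled = weighted_linear_fit(years, resistance, weights)
latest = intercept + slope * max(years)
print(f"pooled across all years: {pooled:.3f}")
print(f"trend per year: {slope:+.4f}, fitted value for {max(years)}: {latest:.3f}")
```

With only five studies the slope is itself very uncertain, which is the limitation noted above. A straight line would also understate a late spike.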

🧷 Publication Bias: When Negative Results Go Unpublished

Studies that did not find high resistance or did not demonstrate intervention effectiveness are published less frequently than studies with "positive" results (S008). This creates publication bias, which inflates pooled effect estimates in meta-analysis.

- Methods for assessing publication bias (funnel plot, Egger's test) have low power with small numbers of studies (commonly taken to mean fewer than about 10).

- They can produce false negatives—failing to detect bias that is actually present.

- Even when bias is detected, its magnitude cannot be precisely estimated.
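For readers who want to see what Egger's test computes, here is a minimal sketch of the regression behind it: each study's standardized effect is regressed on its precision, and an intercept far from zero suggests funnel plot asymmetry. The eight studies are hypothetical and deliberately constructed so that smaller studies report larger effects.

```python
import math

def egger_intercept(effects, std_errors):
    """Egger's regression: standardized effect regressed on precision.

    Returns the intercept and its standard error.
    """
    z = [y / se for y, se in zip(effects, std_errors)]
    precision = [1.0 / se for se in std_errors]
    n = len(z)
    x_bar = sum(precision) / n
    z_bar = sum(z) / n
    sxx = sum((x - x_bar) ** 2 for x in precision)
    slope = sum((x - x_bar) * (v - z_bar) for x, v in zip(precision, z)) / sxx
    intercept = z_bar - slope * x_bar
    residual_ss = sum((v - intercept - slope * x) ** 2 for x, v in zip(precision, z))
    residual_var = residual_ss / (n - 2)
    intercept_se = math.sqrt(residual_var * (1.0 / n + x_bar ** 2 / sxx))
    return intercept, intercept_se

# Hypothetical data: small studies (large SE) show the largest effects.
effects = [0.80, 0.65, 0.50, 0.40, 0.30, 0.25, 0.22, 0.20]
std_errors = [0.40, 0.35, 0.28, 0.22, 0.15, 0.12, 0.10, 0.08]

intercept, intercept_se = egger_intercept(effects, std_errors)
t_statistic = intercept / intercept_se  # compare with a t distribution, n - 2 df
print(f"Egger intercept = {intercept:.2f}, t = {t_statistic:.2f}")
```

With few studies the intercept's standard error is large, which is why the test often fails to flag bias that is present.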

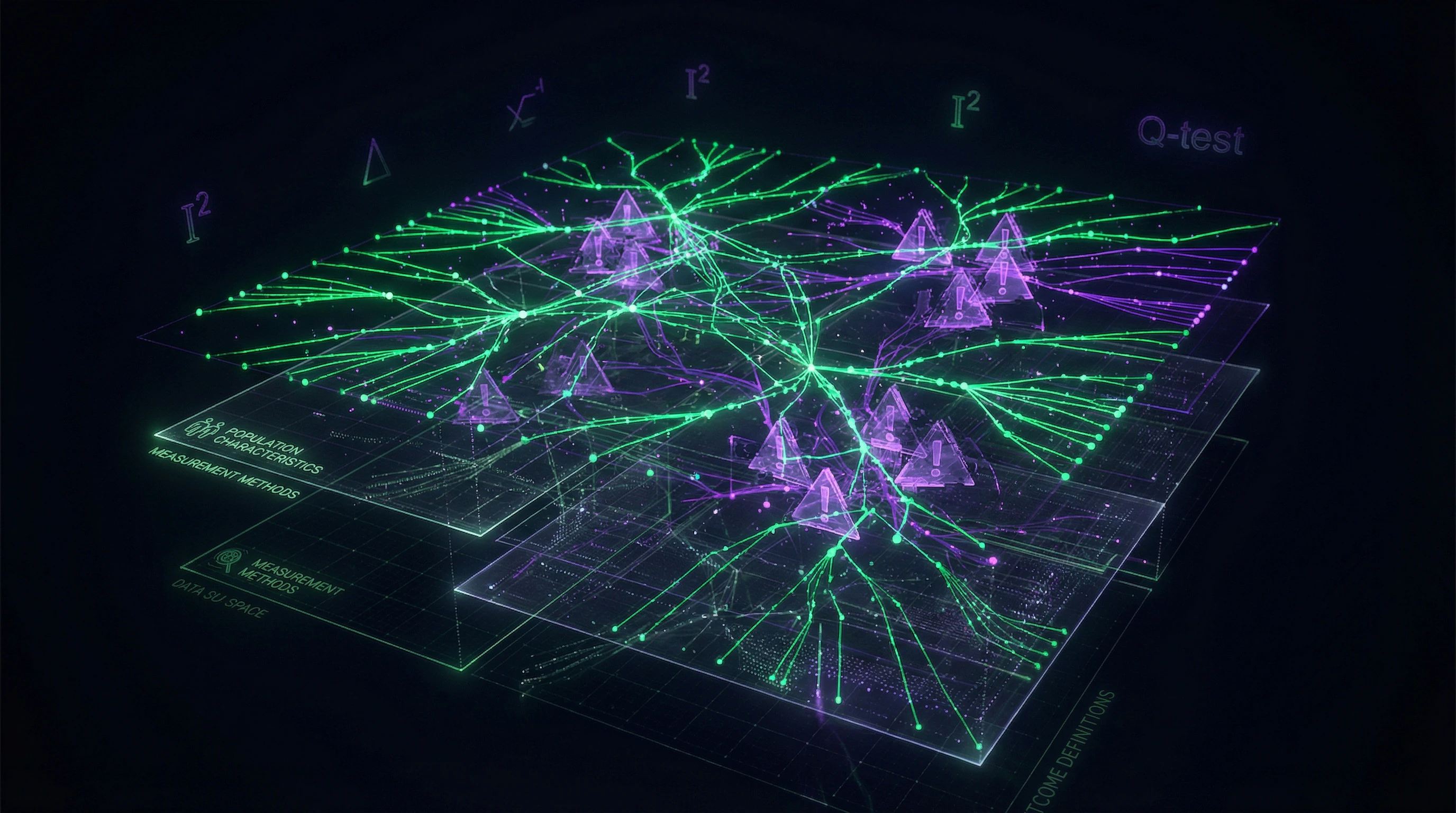

⚙️ Population Heterogeneity: When "H. pylori Patients" Are Different Populations

H. pylori patients in New York, Los Angeles, and rural Montana may differ in genetic factors, dietary habits, access to healthcare, and prior antibiotic use. Pooling data from these regions into one meta-analysis assumes these differences do not affect resistance, which may be an incorrect assumption.

Heterogeneity statistics (I², Cochran's Q test) assess variability in results but do not explain its sources. High I² says: "Something is wrong here," but does not say what exactly.
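Both statistics come from the same few lines of arithmetic. The sketch below computes Cochran's Q and I² for four hypothetical studies with equal precision and clearly different effects.

```python
def heterogeneity(effects, std_errors):
    """Cochran's Q, its degrees of freedom, and I² (as a percentage)."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I² is the share of total variation beyond what chance alone would produce.
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, df, i_squared

# Hypothetical studies with identical precision but divergent effects.
effects = [0.10, 0.50, 0.30, 0.70]
std_errors = [0.10, 0.10, 0.10, 0.10]

q, df, i_squared = heterogeneity(effects, std_errors)
print(f"Q = {q:.1f} on {df} df, I² = {i_squared:.0f}%")
```

The inputs are only effect sizes and standard errors. Nothing about populations, methods, or regions enters the calculation, so the output cannot say where the variation comes from.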

Conflicts and Uncertainties: Where Sources Diverge and Why It Matters

Even high-quality systematic reviews can reach different conclusions on the same question. Understanding the sources of these discrepancies is critical for interpreting results.

🧩 Differences in Inclusion Criteria: How Population Definition Changes the Outcome

Two meta-analyses on the same topic may use different study inclusion criteria. One might include only RCTs, another—RCTs and cohort studies. One might limit itself to adult patients, another—include all age groups.

These differences are not errors—they reflect different research questions. But a reader seeing two meta-analyses with contradictory conclusions may not understand that they're answering different questions. This is especially dangerous in the context of meta-level interpretations, where contradiction between sources is often perceived as evidence of conspiracy or hidden knowledge.

🔬 Differences in Statistical Methods: Fixed vs Random Effects

A meta-analysis can use a fixed-effect model (assumes all studies estimate one true effect) or a random-effects model (assumes the true effect varies between studies).

The fixed-effect model produces narrower confidence intervals and more often shows statistically significant results, but it's only valid in the absence of heterogeneity. The random-effects model is more conservative, but with a small number of studies its estimate of between-study variance is imprecise, so its confidence intervals can be unreliable.

The choice between them is not neutral: the same dataset can yield opposite conclusions depending on the method chosen (S002).
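A small sketch shows how the model choice can change the conclusion. It uses the DerSimonian-Laird estimator for between-study variance, one common random-effects approach chosen here for illustration (the source does not name one). The dataset is hypothetical: one large study and four small ones.

```python
import math

def inverse_variance_pool(effects, variances):
    """Pooled estimate and standard error using weights 1 / variance."""
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total
    return pooled, math.sqrt(1.0 / total)

def dersimonian_laird_tau2(effects, variances):
    """DerSimonian-Laird estimate of the between-study variance tau²."""
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / total
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    c = total - sum(w ** 2 for w in weights) / total
    return max(0.0, (q - (len(effects) - 1)) / c)

def ci95(estimate, se):
    return estimate - 1.96 * se, estimate + 1.96 * se

# Hypothetical data: one large precise study, four small divergent ones.
effects = [0.10, 0.60, -0.30, 0.50, -0.20]
std_errors = [0.03, 0.20, 0.20, 0.20, 0.20]
variances = [se ** 2 for se in std_errors]

fixed_est, fixed_se = inverse_variance_pool(effects, variances)
tau2 = dersimonian_laird_tau2(effects, variances)
# Random effects: add tau² to every study's variance before weighting.
random_est, random_se = inverse_variance_pool(effects, [v + tau2 for v in variances])

fixed_ci = ci95(fixed_est, fixed_se)
random_ci = ci95(random_est, random_se)
print(f"fixed:  {fixed_est:.3f}, 95% CI ({fixed_ci[0]:.3f}, {fixed_ci[1]:.3f})")
print(f"random: {random_est:.3f}, 95% CI ({random_ci[0]:.3f}, {random_ci[1]:.3f})")
```

In this constructed example the fixed-effect interval excludes zero while the random-effects interval includes it, so the same five studies read as "significant" under one model and "inconclusive" under the other.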

📊 Differences in Outcome Definition: When "Effectiveness" Is Measured Differently

In the surgical education meta-analysis, CTA "effectiveness" was measured through procedural knowledge and technical skills (S007). But what if some studies measured knowledge through written tests, while others—through simulation assessments?

What if technical skills were evaluated by procedure completion time in some studies and by error frequency in others? Combining these heterogeneous outcomes into one meta-analysis creates the illusion of a unified "effectiveness" construct, which is actually a composite of incomparable measurements.

- Check whether authors used primary outcomes (predetermined) or secondary ones (selected post-hoc)

- Compare definitions of the same outcome across different studies

- Assess how heterogeneous the measurement methods are in the pooled studies

- Look for signs of cherry-picking: if authors included only those outcomes that showed an effect

When two meta-analyses diverge, the first question is not "who's right," but "what different questions are they answering." This requires reading the methodology, not just the abstract. This is precisely where paranormal interpretations often take over: contradiction between sources is perceived as evidence of hidden knowledge, rather than as a result of methodological choices.

Cognitive Anatomy of the Myth: Which Mental Traps Make Us Trust Meta-Analysis Unconditionally

Meta-analysis exploits several cognitive biases that make its results particularly convincing, even when methodological limitations are obvious.

⚠️ Representativeness Heuristic: When Study Quantity Creates an Illusion of Completeness

A meta-analysis of 20 studies seems more convincing than a single study, regardless of the quality of those 20. The brain uses quantity as a proxy for quality—a classic example of the representativeness heuristic.

If all 20 studies have high risk of bias, pooling them doesn't reduce that risk—it provides a precise estimate of the bias.

🕳️ Illusion of Precision: When Narrow Confidence Intervals Mask Uncertainty

Meta-analysis produces a pooled estimate with a confidence interval that is often narrower than the intervals of individual studies. This creates an illusion of precision.

A narrow confidence interval reflects only statistical uncertainty (random error), ignoring systematic uncertainty (bias, heterogeneity, unmeasured confounders). True uncertainty may be substantially larger.
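The effect can be shown with an idealized calculation. The sketch assumes a true effect of zero, a systematic error of 0.15 shared by every study, and equally precise studies whose estimates sit at their expected value. All three numbers are hypothetical.

```python
import math

TRUE_EFFECT = 0.0   # hypothetical: the intervention does nothing
SHARED_BIAS = 0.15  # hypothetical systematic error present in every study
STUDY_SE = 0.20     # hypothetical standard error of each individual study

def pooled_interval(k):
    """95% CI of a fixed-effect pool of k equally precise, equally biased studies."""
    estimate = TRUE_EFFECT + SHARED_BIAS  # every study observes the biased value
    pooled_se = STUDY_SE / math.sqrt(k)   # only random error shrinks with k
    return estimate - 1.96 * pooled_se, estimate + 1.96 * pooled_se

for k in (1, 5, 20, 100):
    low, high = pooled_interval(k)
    covers = low <= TRUE_EFFECT <= high
    print(f"k = {k:3d}: 95% CI ({low:+.3f}, {high:+.3f}), covers true effect: {covers}")
```

As studies are added, the interval tightens around the biased value and eventually excludes the true effect altogether.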

🧠 Methodological Halo Effect: When PRISMA and PROSPERO Create False Confidence

Mentioning adherence to PRISMA 2020 guidelines and pre-registration in PROSPERO creates a halo effect—an assumption of high quality (S001).

- PRISMA: a reporting standard, not a quality standard. A study can perfectly follow PRISMA while including low-quality studies or making unwarranted causal conclusions.

- PROSPERO: registration reduces risk of selective reporting but doesn't guarantee protocol quality.

🔁 Trust Cascade: How Meta-Analysis Gets Cited Without Critical Appraisal

After a meta-analysis is published, its results are often cited in clinical guidelines, textbooks, and review articles without re-evaluation. Each subsequent citation reinforces perceived credibility.

- Original meta-analysis is published with methodological limitations

- Clinical guidelines cite the result without mentioning limitations

- Textbooks and reviews repeat the citation from guidelines

- The result becomes "accepted fact," though the evidence base remains weak

The mechanism works as a meta-level trap: each layer of citation distances the reader from the original data and methodological details.

Five-Minute Critical Appraisal Protocol for Systematic Reviews: A Practitioner's Checklist

Any systematic review or meta-analysis is not a verdict, but a hypothesis packaged in methodology. Before accepting its conclusions, you need to examine three layers: study design, data quality, and interpretation logic.

Below is a minimal set of questions that filters out 80% of problematic reviews within minutes of reading.

- Inclusion criteria: narrow or vague? If authors included studies with different populations, dosages, durations, or measurements—this isn't synthesis, it's averaging noise. Check the table of study characteristics (usually in appendices). If variation is large, heterogeneity (I²) will be high, and the pooled result loses meaning.

- Funding source and author conflicts of interest. Meta-analyses sponsored by drug or device manufacturers systematically overestimate effects (S008). This doesn't necessarily mean falsification—selective citation and interpretation often do the work.

- Publication bias: was the "file drawer" checked? Authors should have searched for unpublished studies (through registries, author correspondence, conferences). Without this, results are inflated. A funnel plot in the appendix is the first sign of integrity.

- Quality of included studies: randomized or observational? A meta-analysis of 50 observational studies can be weaker evidence than one well-conducted RCT (S003). Check how many studies had low risk of bias (assessed with the Cochrane Risk of Bias tool). If fewer than half did, the result is unreliable.

- Heterogeneity (I²): above 50% is a red flag. This means more than half the variation in results is explained by differences between studies, not chance. At I² > 75%, the pooled result is nearly useless. Authors should have conducted subgroup analysis or meta-regression to identify sources of variation.

- Effect size: clinically meaningful or statistically significant? The confidence interval is the key indicator. If the 95% CI includes the null value (0 for differences, 1 for ratio measures such as odds ratios) or crosses the clinical significance threshold, the conclusion is uncertain. Number needed to treat (NNT) should be explicitly stated.

- Sensitivity analysis: is the result robust? Authors should have excluded one study at a time and recalculated results. If conclusions change dramatically—this signals instability. Also check whether analysis was conducted for RCTs only (excluding observational studies).

- Protocol registration: was it registered beforehand? PROSPERO (for systematic reviews) or Open Science Framework is standard. Without a protocol, authors could have changed inclusion criteria during analysis (p-hacking at the review level).
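The leave-one-out check from the sensitivity analysis item can be sketched in a few lines. The example uses fixed-effect pooling and five hypothetical studies, one of which is an outlier. A real review would rerun whichever model its authors used.

```python
import math

def pool_fixed_effect(effects, std_errors):
    """Inverse-variance pooled estimate and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]
    total = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total
    return pooled, math.sqrt(1.0 / total)

def leave_one_out(effects, std_errors):
    """Re-pool the data once per study, each time omitting that study."""
    results = []
    for i in range(len(effects)):
        rest_effects = effects[:i] + effects[i + 1:]
        rest_errors = std_errors[:i] + std_errors[i + 1:]
        pooled, se = pool_fixed_effect(rest_effects, rest_errors)
        results.append((i, pooled, pooled - 1.96 * se, pooled + 1.96 * se))
    return results

def excludes_zero(low, high):
    return low > 0 or high < 0

# Hypothetical data: the third study is an outlier.
effects = [0.05, 0.02, 0.90, 0.04, 0.00]
std_errors = [0.10, 0.10, 0.10, 0.10, 0.10]

full, full_se = pool_fixed_effect(effects, std_errors)
full_significant = excludes_zero(full - 1.96 * full_se, full + 1.96 * full_se)

# Indices of studies whose removal flips the significance of the pooled result.
flips = [i for i, _, low, high in leave_one_out(effects, std_errors)
         if excludes_zero(low, high) != full_significant]
print(f"all studies: {full:.3f}, significant: {full_significant}")
print(f"studies whose removal changes the conclusion: {flips}")
```

If removing a single study changes the conclusion, as it does here, the pooled result rests on that study rather than on the body of evidence.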

If a review leaves 5–6 of these 8 questions unanswered, its conclusions are preliminary. This doesn't mean they're wrong, but they require verification with independent data or RCTs.

Practical tip: start with the abstract and table of study characteristics. If high heterogeneity or mixed study types are already visible there, diving into methodology is often unnecessary—the result is already compromised.

Remember: meta-analysis is a tool for synthesis, not a tool for truth. Its value depends on the quality of input data and author integrity. Critical reading takes 5–10 minutes and often prevents erroneous decisions.