Tech Fears: Digital Age Anxieties and the Visual Language of Threat

From the 1895 Montparnasse train wreck to artificial intelligence: how fear of technology shapes media culture and influences the perception of progress

Overview

Techno-fears are anxieties and concerns related to new technologies, their impact on society, and potential risks to humanity. These fears have existed since the Industrial Revolution and evolve alongside technological progress: from steam engines to artificial intelligence. French philosopher Paul Virilio argued that every technology contains the potential for catastrophe, and visual media play a key role in activating and spreading techno-fears through shocking images and contrasts.

🛡️ Laplace Protocol: Techno-fears are not irrational—they reflect legitimate concerns about privacy, security, and the social consequences of technology. Understanding the mechanisms of fear activation in media culture helps critically evaluate information and form a balanced attitude toward technological progress.






History of Tech Fears: From Industrial Revolution to Digital Age

Tech fears are a persistent cultural phenomenon that has accompanied humanity since the first major technological breakthroughs. Each era generates its own anxieties: loss of control over created systems, unpredictability of consequences, and the transformation of familiar ways of life.

Understanding the historical dynamics of tech fears allows us to separate rational concerns from irrational panic and develop adequate strategies for navigating the technological future.

The 1895 Montparnasse Train Wreck as a Symbol of Tech Fear

On October 22, 1895, a passenger train at Montparnasse station in Paris failed to brake, crashed through the station wall, and plunged onto the street below. This event became one of the first visual manifestos of tech fear in the industrial era.

The photograph of the hanging locomotive instantly became a cultural icon, demonstrating the fragility of technological progress and its capacity to spiral out of control.

The visual language of this image is based on shocking contrast: a massive machine, symbol of power and reliability, helplessly suspended above the street, violating the boundary between technological space and everyday life. This image cemented in the collective consciousness the idea that technology carries an inherent threat of catastrophe.

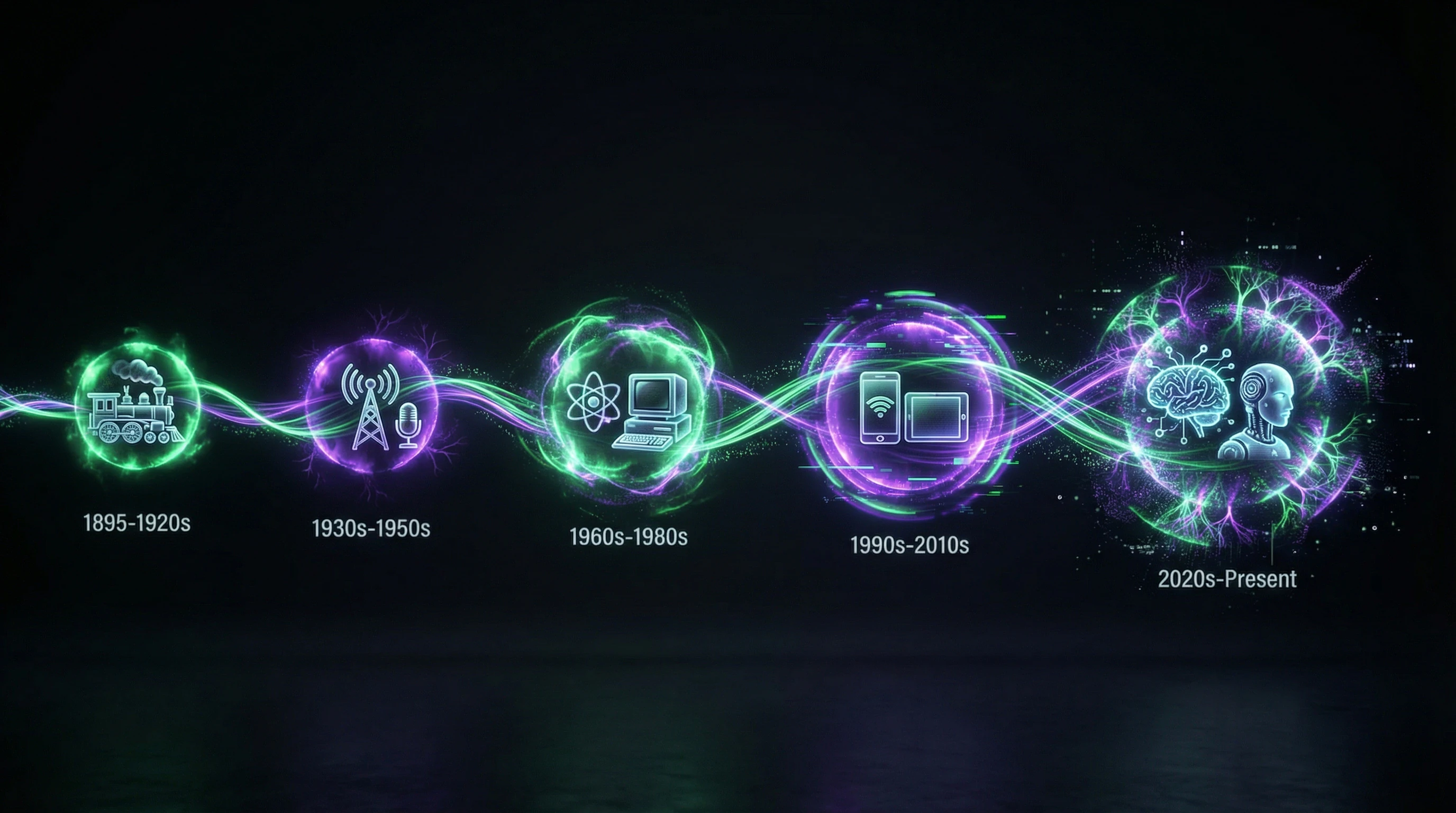

Evolution of Technological Anxieties in the 20th-21st Centuries

Throughout the 20th century, tech fears transformed alongside technological development: from concerns about industrial accidents to anxieties of the nuclear age, then to fears of computerization and automation displacing human labor.

- 1950s–1960s: Fear of nuclear catastrophe dominated public consciousness.

- 1980s–1990s: Concerns about mass unemployment due to robotization and production automation.

- 2000s: Panic around digital surveillance and loss of privacy.

- Contemporary era: Convergence of multiple tech fears. Artificial intelligence, biotechnology, neural interfaces, and climate technologies simultaneously trigger anxiety across different social groups.

Paul Virilio's Philosophy: Technology and Catastrophe

French philosopher Paul Virilio developed a conceptual framework for understanding techno-fears: every technology contains within itself a specific catastrophe as an inherent part of its nature. His approach allows us to view techno-fears not as an irrational reaction, but as an intuitive understanding of the immanent risks of technological development.

Virilio's philosophy is particularly relevant in the context of contemporary discussions about artificial intelligence and autonomous systems, where questions of control and predictability are especially acute.

The Concept of Immanent Accident in Technology

According to Virilio, to invent the ship is to invent the shipwreck, to invent the airplane is to invent the plane crash, and to develop nuclear energy is to create the potential for a Chernobyl.

The immanent accident is not necessarily a realized catastrophe, but a structural possibility embedded in the very nature of technology and requiring constant vigilance.

This logic is not a pessimistic rejection of progress but a sober analysis of technological reality: every system contains points of failure, and every innovation creates new vulnerabilities.

| Technology | Immanent Accident |
|---|---|
| Artificial Intelligence | Loss of control, unpredictable behavior |
| Biotechnology | Biological catastrophes, uncontrolled spread |
| Neural Interfaces | Consciousness manipulation, hacking of cognitive processes |

Visual Language of Threat in Media Culture

Media culture activates and spreads techno-fears through a specific visual language based on contrast, shock, and violation of familiar boundaries. Images of technological catastrophes — from Montparnasse to Fukushima — employ a common semiotic structure: technology that has exceeded its designated space, invading the human world.

Contemporary media amplify this effect by visualizing abstract threats: artificial intelligence algorithms are depicted as sinister digital networks, facial recognition systems as all-seeing eyes, and robots as mechanical invaders of human jobs.

Visual language shapes emotional response faster than rational analysis, which explains the persistence of techno-fears even in the presence of statistical data on technology safety.

Modern Manifestations of Techno-Fears

In the 21st century, techno-fears have acquired new forms related to digitalization, artificial intelligence, and biotechnological intervention in human nature. They concern not only physical safety but also cognitive autonomy, privacy of consciousness, and the very essence of human identity.

Contemporary techno-fears often focus on invisible threats—algorithms, data, neural networks—which makes them more abstract and simultaneously more pervasive in everyday life.

Artificial Intelligence and Fear of Loss of Control

Fear of artificial intelligence centers around the technological singularity scenario—the moment when AI surpasses human intelligence and becomes uncontrollable. This fear is fueled by both science fiction narratives and real examples of algorithmic decision-making opacity in critical domains: medical diagnostics, judicial systems, financial markets.

The "black box" phenomenon in deep learning, where even a system's creators cannot explain the decision-making logic of its neural networks, causes particular concern.

Research shows that this fear is not universal and varies depending on the level of digital literacy, age, and cultural context.

Facial Recognition Technologies and Surveillance Anxieties

Facial recognition systems have become a symbol of techno-fears related to total surveillance and loss of anonymity in public space. These technologies are perceived as instruments of control capable of tracking every movement, identifying protest participants, and creating detailed behavioral profiles without individual consent.

- Opacity in the use of collected data

- Potential for abuse by government and corporate structures

- Technical errors leading to false identifications

Debates surrounding facial recognition reflect a broader conflict between technological efficiency and the right to privacy, between security and freedom.

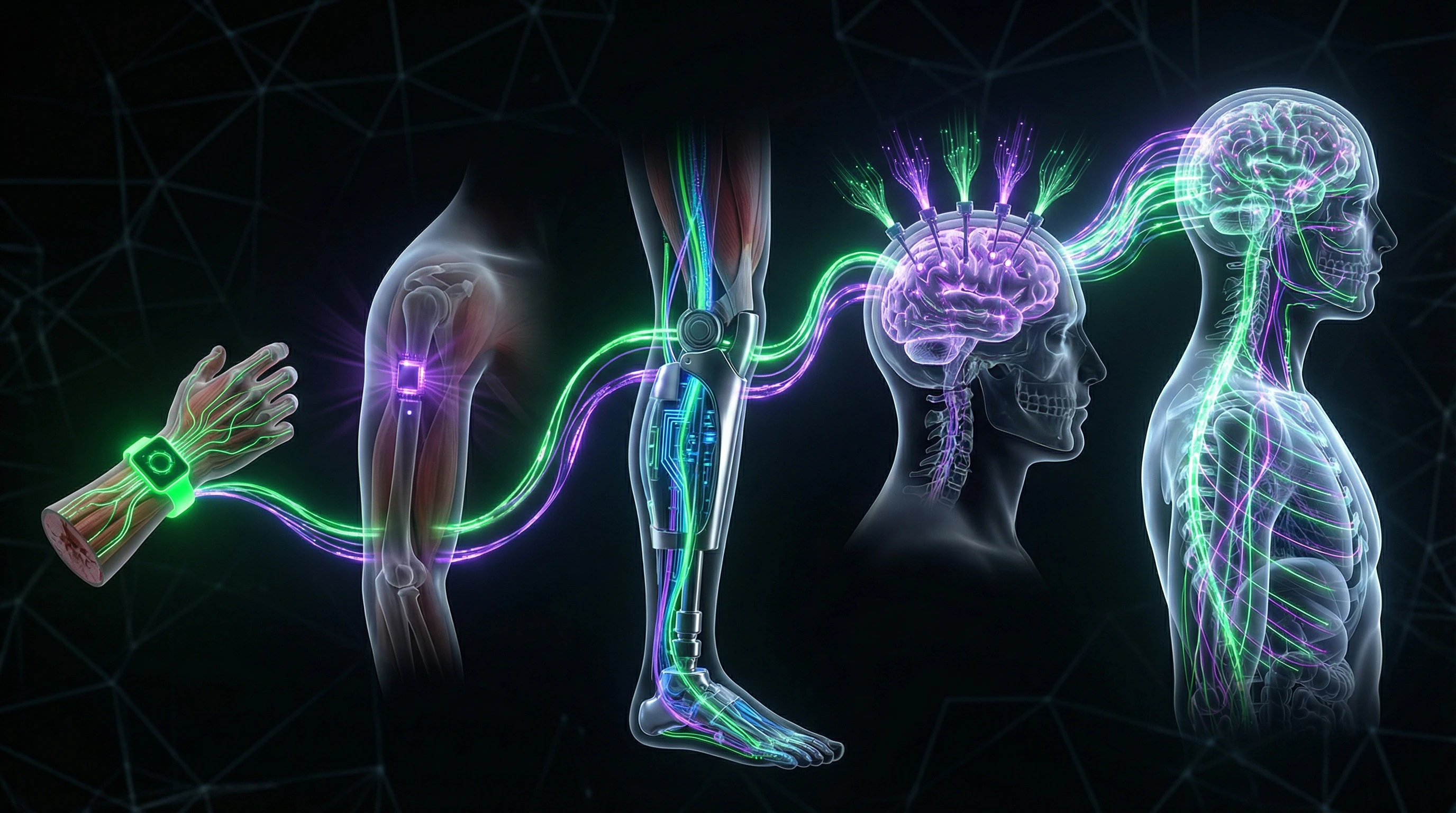

Cyborgization and Transhumanism

Concepts of cyborgization and transhumanism evoke existential techno-fears related to the blurring of boundaries between human and machine, natural and artificial. Prospects of neural interfaces, genetic editing, and technological life extension generate anxieties about the loss of human authenticity and the creation of social inequality between "enhanced" and "natural" humans.

- Unpredictable consequences of intervention in biological nature

- Deep tension between the drive for enhancement and the desire to preserve human identity

- The transhumanist project as simultaneously a promise of liberation and a threat of loss

Media-Body and Techno-Body: Humanity in the Age of Technology

Human-Machine Integration

The techno-body describes the transformation of the organism through technological intervention at the boundary between biological and artificial. Cyborgization—the integration of technological components into the body—is no longer science fiction: cochlear implants, neural interfaces, prosthetics with sensory feedback are already medical practice.

The fundamental question is at what point a modified body ceases to be "human." Techno-fears here reflect anxiety about loss of bodily autonomy, when the boundary between subject and object, living and mechanical, becomes permeable.

Cyborgization doesn't threaten humanity—it redefines what we consider human. Fear arises not from the fact of integration itself, but from the uncontrollability of the process and the ambiguity of "normal" criteria.

Digital Identity and Media Representation

The media-body is an extension of presence in digital space, where identity is constructed through visual representations, data, and algorithmic profiles. Facial recognition, biometrics, and digital surveillance create a parallel reality in which the body exists as a dataset for analysis, control, and manipulation.

Fear of dematerialization is linked to loss of control over one's own image: the digital body is copied, altered, used without consent. Transhumanist projects intensify this anxiety, proposing radical reconceptualization of human nature through technological enhancement—perceived as a threat to equality and dignity.

- Body as data: biometric profiles, search histories, geolocation

- Body as object of control: algorithmic filtering, predictive analytics

- Body as commodity: monetization of personal data, microtargeting

- Body as weapon: deepfakes, synthetic media, perception manipulation

Visual Semiotics of Technological Threat

Shocking Contrast as Fear Activation Tool

The visual language of techno-fear is built on dramatic contrast between order and chaos. The Montparnasse train derailment (1895) is the prototype of this semiotics: a machine, a symbol of progress, crashes through a wall and hangs suspended over the street.

This code—technology out of control, invading human space—is reproduced in contemporary media: autonomous vehicle crashes, AI failures, critical system malfunctions. Shocking contrast functions as a cognitive trigger, instantly activating the archetypal fear of uncontrolled force.

| Visual Code | Historical Origin | Contemporary Manifestation |
|---|---|---|
| Technology out of control | Montparnasse derailment (1895) | Autonomous vehicle crashes, AI failures |
| Invasion of human space | Machine crashes through station wall | Robots in urban environments, drones over homes |
| Loss of human oversight | Engineer loses control | Autonomous systems without visible operator |

Role of News Media in Amplifying Techno-Fears

News media function as amplifiers of techno-fears through sensational headlines and dramatic imagery. Research shows that media representation of technological risks often exaggerates the probability of catastrophes, creating a distorted perception of actual threats.

Facial recognition technologies, artificial intelligence, and geoengineering are presented through the lens of potential abuses rather than balanced risk-benefit analysis. This is media logic, not objective reality.

A self-reinforcing cycle emerges: public fears generate demand for anxiety-inducing content, which amplifies these fears, forming a cultural climate of technological paranoia. A media ecosystem oriented toward engagement becomes an incubator for conspiratorial narratives and unfounded anxieties.

Digital Literacy as a Response to Techno-Fears

Educational Approaches to Overcoming Technological Anxieties

Digital literacy is not merely a set of technical skills but the ability to critically assess the risks and opportunities of technologies, overcoming irrational fears through understanding.

Educational programs help distinguish real threats from media constructs, understand the principles of how technologies work, and make informed decisions about their use. The generational gap in technological competence amplifies techno-fears among older age groups, requiring adapted approaches for different demographic segments.

An effective strategy for overcoming techno-fears does not deny risks; it assesses them rationally and develops skills for safe interaction with technologies.

Critical Thinking and Media Literacy

Media literacy enables recognition of fear activation mechanisms in media culture and resistance to manipulative strategies of sensational narratives.

- Critical analysis of the visual language of threat

- Understanding media's economic incentives to create anxiety-inducing content

- Ability to verify information from multiple sources

These skills build immunity to technological panic and conspiratorial narratives.

Paul Virilio's position on the inevitability of technological accidents is reinterpreted not as a reason for fear, but as a foundation for responsible design and use of technologies.

Healthy skepticism based on knowledge differs from paralyzing fear: the former stimulates the development of ethical frameworks and safety measures, while the latter impedes technological progress.


Frequently Asked Questions

Techno-fears are anxieties and concerns related to new technologies and their impact on society. They include fear of losing control over technological systems, privacy concerns, and apprehensions about AI and cyborgization. Philosopher Paul Virilio argued that every technology contains the potential for catastrophe.

Techno-fears have existed since the beginning of the Industrial Revolution. A landmark example was the train crash at Montparnasse station in 1895—the photograph of the disaster became a symbol of technological threat. Since then, techno-fears have evolved with each new technological era.

Contemporary techno-fears include concerns about artificial intelligence surpassing human capabilities, facial recognition technologies, and total surveillance. Anxieties about cyborgization, transhumanism, and loss of digital privacy are also widespread. Geoengineering and digital inequality amplify these fears.

Techno-fears are not always irrational: many are based on real risks. Concerns about privacy, data security, and the social consequences of technology are well-founded. Research shows that healthy skepticism contributes to the development of ethical standards and safety measures in the technology sector.

It is a myth that techno-fears belong to a single generation: they are present across all age groups. Young people worry about surveillance through social media and data breaches, while older generations are concerned about digital inequality. The nature of the fears differs between groups, but their presence is not confined to any one age or level of technological literacy.

Techno-fears do not simply hold back progress: critical attitudes toward technology stimulate more responsible development. Techno-fears compel developers to consider ethical aspects, security, and social consequences. This leads to the creation of more thoughtful and safer technological solutions.

The key method is increasing digital literacy and critical thinking. Educational programs help understand the real risks and opportunities of technologies, separating facts from myths. It's important to obtain information from verified sources and develop media literacy skills.

Media-body is a concept describing the intersection of the human body and media technologies, while techno-body refers to a body modified by technologies. These concepts describe the growing integration of human and machine in the digital age. They are connected to discussions about cyborgization and transhumanism.

News media use the visual language of threat: shocking contrasts and sensational headlines. This activates and spreads techno-fears throughout society. Paul Virilio argued that media representation of technological catastrophes creates long-term cultural impact.

Paul Virilio was a French philosopher who created the concept of the immanent accident in technology. He argued that every invention contains the potential for catastrophe: the ship invents the shipwreck, the airplane invents the plane crash. His work became the philosophical foundation for research on techno-fears in media culture.

The primary danger is total surveillance and privacy violation. Facial recognition technologies enable tracking people's movements without their consent, creating a society of total control. There are also risks of biometric data breaches and algorithmic discrimination.

Cyborgization is the process of integrating humans and machines through technological implants and enhancements. Fear is associated with loss of human identity, ethical questions, and social inequality. Transhumanist ideas about technological human enhancement spark debates about acceptable boundaries.

In narrow tasks, AI already surpasses humans, but artificial general intelligence has not yet been created. Fear is linked to potential loss of control over superintelligent systems. Experts call for developing ethical standards and safety mechanisms before AI reaches human-level capabilities.

The visual language of threat uses shocking contrasts and dramatic imagery for emotional impact. The 1895 train crash photograph became one of the first examples of such visual semiotics. Contemporary media continue using these techniques to amplify perception of technological risks.

Techno-fears can deepen the digital divide between generations and social groups. People who avoid technology out of fear lose access to opportunities in education, employment, and social services. The solution is inclusive educational programs that account for varying levels of technological readiness.

Critical thinking helps analyze information about technologies, separating real risks from manipulation. Media literacy teaches recognition of sensational headlines and source verification. This enables making informed decisions about technology use without succumbing to panic or euphoria.