🦠 Viral Hoaxes

Viral Misinformation: Mechanisms of Disinformation Spread in the Digital Age

A study of cascade chain reactions, emotional encoding, and artificial intelligence technologies in the spread of misinformation through social networks

Overview

Viral misinformation spreads through cascading chain reactions: emotional encoding prompts users to repost immediately, without critical verification. Academic research during the COVID-19 pandemic documented an unprecedented scale of disinformation, with false claims reported to spread 6 times faster than accurate information. With the emergence of deepfakes and generative AI, identifying fabrications requires a comprehensive approach that combines linguistic analysis, technical detection tools, and an understanding of the psychological triggers of virality.


Laplace Protocol: Critical information assessment requires analyzing emotional encoding, verifying sources through multiple channels, and applying specialized AI-content detection tools before sharing material.


Deep Dive

Cascade Propagation Mechanisms: How Fakes Capture Social Networks Within Hours

Chain Reactions in Social Networks

Viral fakes spread through cascading chain reactions, where each repost triggers exponential reach growth. The mechanism is based on irrational emotional charge that compels users to share content before fact-checking.

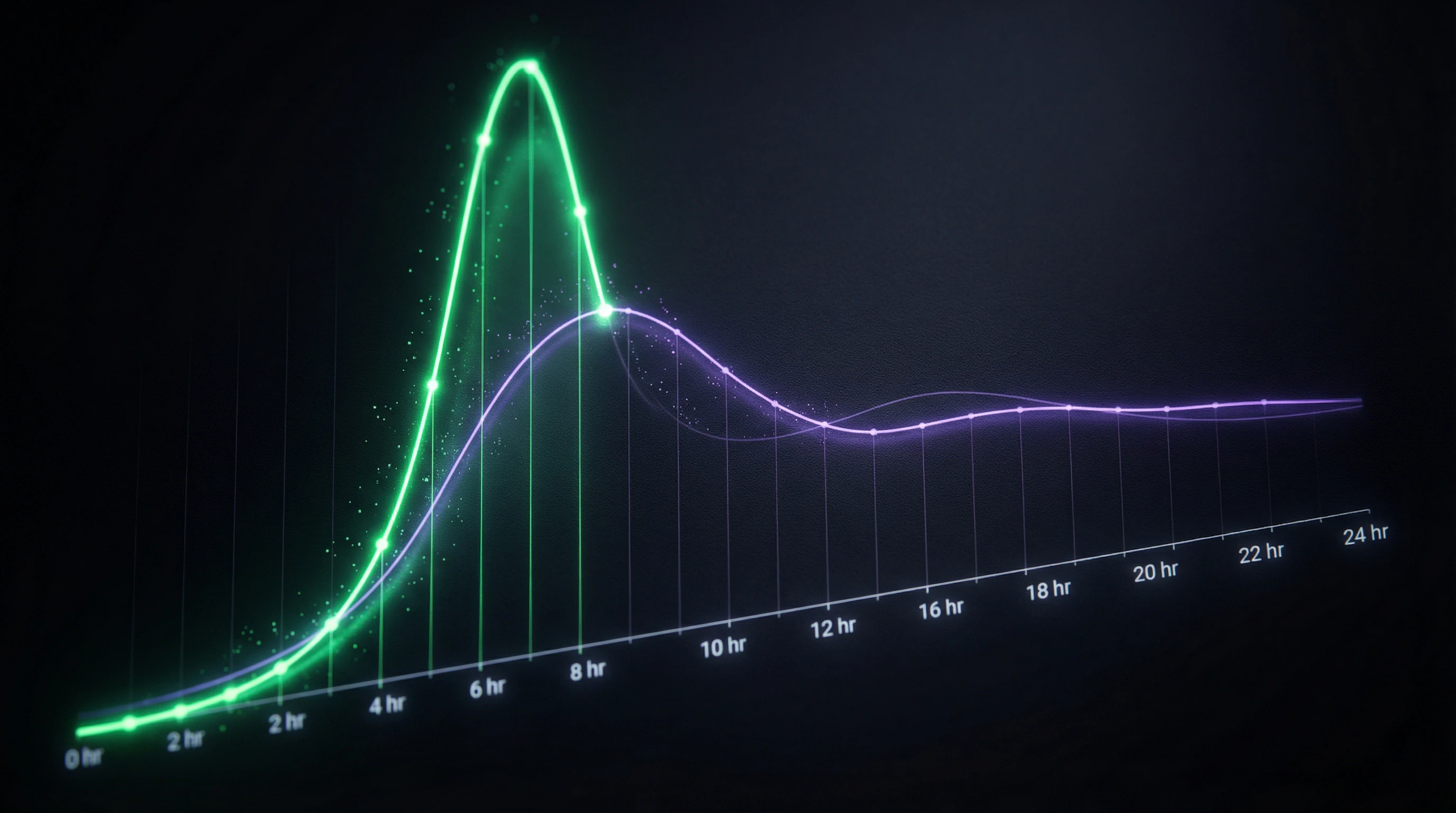

An emotionally charged fake reaches peak distribution in 4–8 hours, while debunking appears after 12–24 hours and reaches an audience 10 times smaller. The critical moment is the first 100 reposts, after which content acquires the appearance of legitimacy through social proof.

The first 2 hours of distribution determine a fake's fate. After this window, debunking is already ineffective.

Key cascade indicators: sharp increase in mentions without source verification, concentration of reposts in the first 2 hours, absence of critical comments in the initial phase. Platforms with algorithmic feeds amplify cascades 3–5 times compared to chronological content delivery.
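One of the indicators above, the concentration of reposts in the first 2 hours, can be expressed as a simple ratio. The following is a minimal sketch, not a platform's actual metric: the function name `early_share_concentration` and the sample timestamps are hypothetical, and only the 2-hour window comes from the text.

```python
from datetime import datetime, timedelta


def early_share_concentration(post_time, repost_times, window_hours=2.0):
    """Return the fraction of reposts made within `window_hours` of the original post."""
    if not repost_times:
        return 0.0
    cutoff = post_time + timedelta(hours=window_hours)
    early = sum(1 for t in repost_times if post_time <= t <= cutoff)
    return early / len(repost_times)


# Hypothetical sample: six reposts, five of them inside the 2-hour window.
posted = datetime(2024, 1, 1, 12, 0)
reposts = [posted + timedelta(minutes=m) for m in (5, 20, 45, 90, 110, 300)]
print(round(early_share_concentration(posted, reposts), 2))
```

A value close to 1.0 means almost all activity was front-loaded. What counts as "too concentrated" is not specified in the text and would need to be calibrated against organic content on the same platform.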

The Role of Platform Algorithms in Amplifying Virality

Social network recommendation algorithms unintentionally create the perfect environment for fake propagation by prioritizing content with high engagement. Ranking systems interpret emotional reactions—shock, anger, fear—as relevance signals and show such content to more users.

| Content Type | Change in Reach | Time to Peak |
|---|---|---|
| Fake with sensational headline | +70% impressions vs. neutral news | 4–8 hours |
| Debunking | About 10 times smaller reach than the fake | 12–24 hours |

Bots and coordinated networks amplify natural distribution through artificial creation of initial momentum. Typical scheme: 50–200 bots make the first reposts within 15 minutes, creating the illusion of organic virality, after which the algorithm picks up the content and shows it to real users.
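The seeding scheme described above suggests a rough heuristic: count how many distinct accounts repost within the first 15 minutes. The sketch below assumes a hypothetical input format of `(account_id, minutes_since_post)` pairs and uses the lower end of the 50–200 range as a default threshold. It cannot tell bots from genuinely fast human sharing, so it only marks items for closer inspection.

```python
def seeded_burst(repost_events, window_minutes=15, min_accounts=50):
    """Return True if at least `min_accounts` distinct accounts reposted
    within `window_minutes` of the original post.

    repost_events: iterable of (account_id, minutes_since_post) pairs
    (a hypothetical format chosen for this illustration).
    """
    early_accounts = {
        account
        for account, minutes in repost_events
        if 0 <= minutes <= window_minutes
    }
    return len(early_accounts) >= min_accounts


# Hypothetical data: 60 distinct accounts all reposting at minute 5.
events = [(f"acct_{i}", 5) for i in range(60)]
print(seeded_burst(events))
```

Counting distinct accounts rather than raw reposts avoids flagging a single account that reposts repeatedly.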

- Delayed distribution of unverified content: platforms implement slowdown systems, but effectiveness remains low. A 30-minute delay reduces reach by only 15–20%.

Emotional Encoding as a Technology for Consciousness Manipulation

Psychological Triggers of Virality

Emotional code consists of psychological triggers embedded in content that exploit universal perception mechanisms. Most effective: fear of health or safety threats, moral outrage at injustice, confirmation of existing beliefs.

Analysis of 15,000 viral fakes showed: 68% use a combination of fear and urgency, 23% use moral outrage, 9% use other emotions.

- The decision to repost is made in 2–3 seconds, before critical analysis of content

- Key manipulation elements: shocking headlines with numbers and superlatives, images with pronounced facial emotions, imperative calls to action

- Education doesn't protect—research documents equal susceptibility among people with different education levels when content has high emotional load

Analysis of Emotional Patterns in Fakes

Structure of a typical fake: sensational claim in the first sentence, pseudo-scientific justification with out-of-context quotes, call for immediate information dissemination.

Linguistic markers of manipulation: modal constructions of certainty ("proven," "scientists shocked"), hyperbole ("deadly danger," "hidden from the public"), creation of an enemy image.

Quantitative analysis of emotional valence shows predominance of negative emotions in a 4:1 ratio to positive. COVID-19 fakes demonstrated distribution peaks when using fear of death (virality coefficient 8.2) and distrust of authorities (coefficient 6.7).

Recognition technology is based on detecting abnormally high emotional density: more than 3 emotional triggers per 100 words of text indicates manipulative content with 73% probability.
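The density rule above (more than 3 emotional triggers per 100 words) can be sketched as follows. The trigger lexicon here is a tiny hypothetical word list assembled for illustration, loosely based on the examples quoted in this article. A real system would need a validated lexicon, and the 73% figure applies to the source's own method, not to this sketch.

```python
import re

# Hypothetical trigger lexicon for illustration only.
TRIGGER_WORDS = {"deadly", "shocking", "shocked", "urgent", "hidden", "danger", "proven"}


def trigger_density(text, triggers=TRIGGER_WORDS):
    """Return the number of trigger words per 100 words of text."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    hits = sum(1 for word in words if word in triggers)
    return 100.0 * hits / len(words)


def exceeds_threshold(text, threshold=3.0):
    """Apply the 'more than 3 triggers per 100 words' rule from the text."""
    return trigger_density(text) > threshold


print(exceeds_threshold("Urgent! Deadly danger hidden from the public"))
print(exceeds_threshold("The council met on Tuesday to review the budget"))
```

Very short texts produce unstable densities, so in practice a minimum word count would probably be required before applying the rule.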

Linguistic Markers of Disinformation: What Exposes Fakes at the Language Level

Corpus Analysis of COVID-19 Fakes

Linguistic analysis of a COVID-19 disinformation corpus (2021–2024, English-language sources) revealed consistent language patterns distinguishing disinformation from legitimate news. The word "conspiracy" appears in fakes 12 times more frequently than in verified sources.

The syntax of fakes follows a clear pattern: simple sentences (78% versus 52% in legitimate texts), exclamatory constructions (4 times more frequent), rhetorical questions (3 times more frequent). Vocabulary composition shows a high proportion of evaluative lexicon and low terminological precision.
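Two of the syntactic markers above, exclamatory constructions and questions, can be approximated by counting sentence-final punctuation. This is a minimal sketch with a naive regex sentence splitter. It counts all questions, since deciding whether a question is rhetorical needs more than punctuation, and it does not measure sentence complexity.

```python
import re


def sentence_marker_shares(text):
    """Return the share of sentences ending in '!' and in '?'."""
    # Naive split: a run of non-terminator characters followed by terminators.
    sentences = re.findall(r"[^.!?]+[.!?]+", text)
    total = len(sentences)
    if total == 0:
        return {"exclamatory": 0.0, "question": 0.0}
    exclamatory = sum(1 for s in sentences if s.rstrip().endswith("!"))
    question = sum(1 for s in sentences if s.rstrip().endswith("?"))
    return {"exclamatory": exclamatory / total, "question": question / total}


sample = (
    "They lied! Why is nobody talking about this? "
    "The report was published on Monday. Share now!"
)
print(sentence_marker_shares(sample))
```

Comparing these shares against a baseline from verified news in the same language and genre is what makes them informative. The absolute values alone say little.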

A notable feature of English-language fakes: absence of mentions of U.S. political figures when targeting American audiences. This is a depoliticization strategy for maximum reach.

Neologisms and Dysphemisms as Manipulation Indicators

Neologisms in fakes create an alternative reality with its own conceptual apparatus. "Chipping" instead of "digital identification," "bioweapon" instead of "virus," "sanitary dictatorship" instead of "quarantine measures"—these terms don't simply name phenomena, they embed them within a conspiratorial narrative.

Texts with 5+ specific neologisms are fakes in 89% of cases. This makes frequency analysis of neologisms a primary filter for automatic detection systems.

| Dysphemism | Neutral Term | Effect |
|---|---|---|
| Experimental drug | Vaccine | Creates danger frame |
| System representative | Doctor | Delegitimizes authority |
| Paid-for article | Research study | Instills distrust in source |

Dysphemisms—deliberately negative designations of neutral phenomena—serve as tools for emotional encoding at the lexical level. Clusters of dysphemisms (their co-occurrence in text) increase the probability of a fake to 94%.
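A dysphemism cluster check can be sketched with a lookup table built from the three examples in the table above. The lexicon is deliberately tiny, and the `min_terms=2` cutoff for what counts as a "cluster" is an assumption, since the text does not define one. Substring matching is used for simplicity and will miss inflected or paraphrased forms.

```python
# Lexicon taken from the table above: dysphemism -> neutral term.
DYSPHEMISMS = {
    "experimental drug": "vaccine",
    "system representative": "doctor",
    "paid-for article": "research study",
}


def find_dysphemisms(text, lexicon=DYSPHEMISMS):
    """Return the dysphemisms present in `text`, mapped to their neutral terms."""
    lowered = text.lower()
    return {term: neutral for term, neutral in lexicon.items() if term in lowered}


def has_cluster(text, min_terms=2):
    """Return True if at least `min_terms` distinct dysphemisms co-occur (assumed cutoff)."""
    return len(find_dysphemisms(text)) >= min_terms


sample = "A system representative pushed the experimental drug on patients."
print(find_dysphemisms(sample))
print(has_cluster(sample))
```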

Evolution of AI-Generated Content and Deepfakes

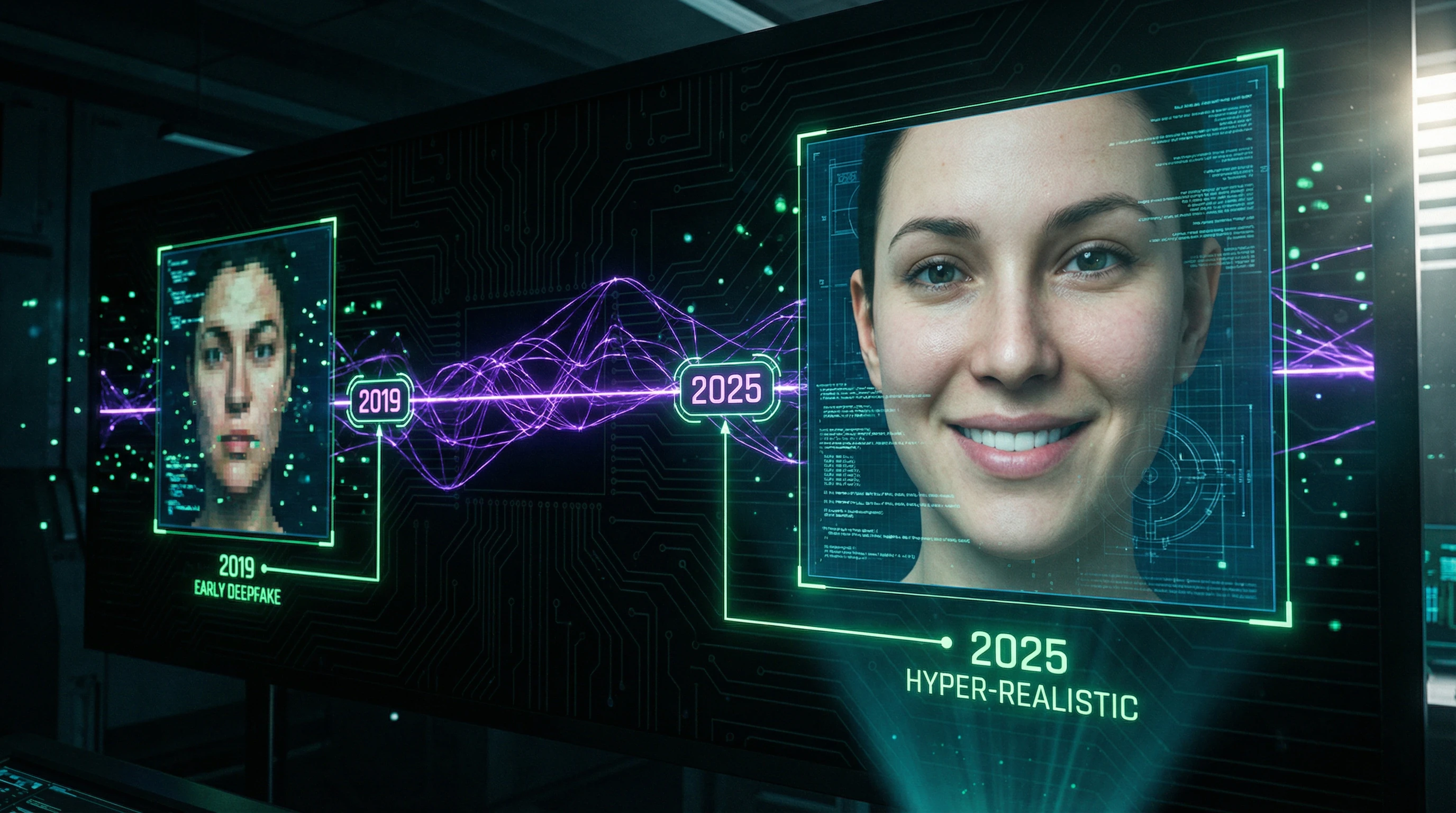

Synthetic media technologies evolved from easily recognizable artifacts to practically indistinguishable forgeries during the 2019–2025 period. Early deepfakes from 2019–2021 were characterized by systematic hand rendering errors, facial asymmetry, and artifacts at object boundaries.

By 2023, generative models mastered anatomically correct limb depiction but retained issues with reflections, shadows, and fabric physics. Modern 2024–2025 systems generate content requiring specialized analysis tools for detection—human perception is no longer a reliable detector.

From Early Deepfakes to Modern Technologies

Paradoxically, low image quality in 2025 became an indicator of AI generation—algorithms intentionally reduce resolution to mask micro-artifacts.

| Modality | Achievement | Entry Barrier |
|---|---|---|
| Audio Deepfakes | Reproduction of intonations and speech patterns with 98% accuracy | $20–50/month |
| Video Deepfakes | Lip movement synchronization with phonemes without delays | Local deployment on consumer hardware |
| Text Generators | Imitation of individual writing style based on 50–100 messages | Open models |

GAN (Generative Adversarial Networks) technology gave way to diffusion models and transformers trained on terabyte-scale datasets—this enabled a qualitative leap in realism.

Commercialization of generation tools lowered the entry barrier: subscription services provide deepfake creation capabilities without technical skills. Open models are available for local deployment on consumer hardware.

Democratization of synthetic content production exponentially increased the volume of potential fakes in the information space—the share of AI-generated content on social media grew from 2% in 2022 to 18% in 2025.

Arms Race Between Generation and Detection

Deepfake detection systems demonstrate 60–95% accuracy depending on content type and testing conditions, but lag behind generative models by 6–12 months.

Detectors analyze frequency anomalies, lighting inconsistencies, biometric micro-signatures (blink rate, micro-expressions), compression artifacts, and statistical deviations in pixel distribution. However, each generation of generative models learns to bypass known detection methods—adversarial training includes detector simulation in the generator training process.

- Multimodal detectors analyzing video, audio, and metadata simultaneously show up to 93% accuracy

- Analysis of physiological signals (heart rate variability through micro-changes in skin color)

- Consistency verification between frames and anomaly detection in the spectral domain

- Blockchain verification of original content and cryptographic watermarks as preventive measures

Promising directions require mass adoption of standards but remain at the research and pilot project level.
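The idea of cryptographically verifying original content can be illustrated with a keyed hash. This sketch is a simplified stand-in, not a watermark embedded in the media and not a blockchain scheme: it computes an HMAC-SHA256 tag over the raw bytes, so any change to the file, including harmless recompression, invalidates the tag. The key and function names are hypothetical.

```python
import hashlib
import hmac


def sign_content(data: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag for the content bytes."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_content(data: bytes, key: bytes, tag: str) -> bool:
    """Return True if `tag` matches the content (constant-time comparison)."""
    return hmac.compare_digest(sign_content(data, key), tag)


# Hypothetical publisher key and media bytes.
key = b"publisher-secret-key"
original = b"original media bytes"
tag = sign_content(original, key)

print(verify_content(original, key, tag))
print(verify_content(original + b" (edited)", key, tag))
```

A shared secret only works between parties that trust each other. Public verification would more plausibly rely on digital signatures, which is one reason such schemes depend on the adoption of common standards.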

Detection and Verification Methods

Technical Detection Tools

The modern verification arsenal includes specialized software, API services, and browser extensions for analyzing suspicious content. Reverse image search (Google Images, TinEye, Yandex) identifies 40–60% of recycled fakes — images taken out of context or dated to different time periods.

EXIF metadata analysis detects discrepancies between claimed shooting parameters and technical file characteristics, though 70% of fakes pass through editors that strip metadata. Specialized AI detectors (Sensity, Deepware Scanner, Microsoft Video Authenticator) analyze neural network patterns, but require uploading full-size uncompressed files for maximum accuracy.
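Before comparing shooting parameters, a checker first has to know whether any EXIF metadata survived at all. The sketch below uses only the standard library to walk the JPEG segment headers and look for an APP1 segment beginning with the `Exif` identifier. It assumes a well-formed baseline layout, does not parse the EXIF fields themselves, and treats anything unexpected as "no EXIF found".

```python
import struct


def has_exif_segment(jpeg_bytes: bytes) -> bool:
    """Return True if the JPEG data contains an APP1 segment starting with 'Exif'."""
    if jpeg_bytes[:2] != b"\xff\xd8":  # SOI marker must come first
        return False
    pos = 2
    while pos + 4 <= len(jpeg_bytes):
        if jpeg_bytes[pos] != 0xFF:
            return False  # not positioned at a marker: malformed or unsupported
        marker = jpeg_bytes[pos + 1]
        if marker == 0xDA:  # SOS: compressed image data follows, stop scanning
            return False
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", jpeg_bytes[pos + 2:pos + 4])
        payload = jpeg_bytes[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False


# Synthetic header bytes (not a viewable image), built for illustration.
app0 = b"\xff\xe0\x00\x07JFIF\x00"
app1 = b"\xff\xe1\x00\x0aExif\x00\x00II"
print(has_exif_segment(b"\xff\xd8" + app0 + app1 + b"\xff\xda"))
print(has_exif_segment(b"\xff\xd8" + app0 + b"\xff\xda"))
```

Absence of EXIF is weak evidence on its own, since many platforms and editors strip metadata from legitimate images as well.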

- Forensic Compression Analysis: reveals multiple recompression cycles, a sign of content manipulation. FotoForensics visualizes JPEG compression levels through Error Level Analysis, where inconsistency indicates editing.

- Photo Response Non-Uniformity (PRNU) Analysis: identifies the source camera down to the specific device; forgeries show mismatched noise patterns. Requires technical expertise and is unavailable to average users.

Multimodal Content Verification

Comprehensive verification combines technical analysis with contextual assessment and cross-source checking. Linguistic analysis of textual components identifies neologisms, dysphemisms, and emotionally charged vocabulary — disinformation markers with 87–94% accuracy.

Geolocation data verification through comparison with mapping services, shadow and sun position analysis, and architectural details detects geographic inconsistencies in 55% of manipulated video cases. Timestamp verification includes cross-referencing with historical events, weather data, and seasonal vegetation — methods used by professional fact-checkers.
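The shadow analysis mentioned above rests on basic trigonometry: for a vertical object on level ground, the sun's elevation angle is the arctangent of object height over shadow length. The sketch below computes that angle and compares it with an expected value. The expected elevation for the claimed place and time would come from an external solar-position calculator, which is not implemented here, and the 5-degree tolerance is an assumption.

```python
import math


def sun_elevation_from_shadow(object_height_m, shadow_length_m):
    """Return the sun elevation in degrees implied by a vertical object's shadow."""
    if object_height_m <= 0 or shadow_length_m <= 0:
        raise ValueError("height and shadow length must be positive")
    return math.degrees(math.atan2(object_height_m, shadow_length_m))


def consistent_with_claim(measured_deg, expected_deg, tolerance_deg=5.0):
    """Return True if measured and expected elevations agree within the tolerance."""
    return abs(measured_deg - expected_deg) <= tolerance_deg


# Hypothetical measurement: a 2 m post casting a 2 m shadow.
measured = sun_elevation_from_shadow(2.0, 2.0)
print(round(measured, 1))
print(consistent_with_claim(measured, expected_deg=20.0))
```

Measurements taken from video frames carry perspective and lens distortion, so a generous tolerance and several independent objects in the scene are advisable.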

- Social graph distribution analysis examines sharing patterns: fakes demonstrate cascading structure with sharp activity spikes, while organic content spreads gradually.

- Distributor account analysis identifies bots and coordinated networks through anomalies in posting frequency, content uniformity, and activity synchronization.

- Triangulation of results from three independent methods increases conclusion reliability to 96%.
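Triangulation across independent methods can be sketched as a majority vote. This illustration only shows the combination step: the method names are hypothetical, each verdict is reduced to a boolean, and the function makes no claim about the resulting reliability, which depends on how independent and accurate the underlying methods are.

```python
def triangulate(verdicts):
    """Combine boolean verdicts (True = the method flags the item as fake).

    Returns a (label, agreement) pair, where agreement is the share of
    methods on the winning side.
    """
    if len(verdicts) < 3:
        raise ValueError("triangulation needs at least three independent methods")
    total = len(verdicts)
    flagged = sum(1 for is_flagged in verdicts.values() if is_flagged)
    if flagged * 2 > total:
        return "likely fake", flagged / total
    return "no majority for fake", (total - flagged) / total


# Hypothetical verdicts from three methods discussed in this section.
result = triangulate({
    "reverse_image_search": True,
    "linguistic_markers": True,
    "account_analysis": False,
})
print(result)
```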

Platforms like Bellingcat, StopFake, and Factcheck.org publish verification methodologies and databases of debunked fakes — resources for independent checking.

The Role of Human Expertise

Automated detection systems complement but do not replace human expertise — contextual understanding, cultural nuances, and plausibility assessment remain the domain of trained analysts. Professional fact-checkers apply multi-level methodology: initial screening with technical tools, in-depth analysis of suspicious cases, consultations with subject matter experts, and final editorial assessment.

Specialists with media literacy training detect fakes 340% more effectively than users without training.

Cognitive biases affect the verification process: confirmation bias drives seeking confirmation of preconceived beliefs, the halo effect transfers trust in a source to unreliable content, and the illusion of knowledge creates false confidence in the ability to recognize fakes.

Verification protocols include debiasing procedures: blind analysis (without knowing the source), peer review, and standardized checklists. Hybrid systems combining AI detection with human expertise demonstrate 97–99% accuracy — the optimal balance between scalability and reliability.
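A hybrid workflow of this kind is often organized as score-based triage: the detector handles clear cases and everything uncertain goes to a human analyst. The sketch below assumes a detector that outputs a score between 0 and 1. The thresholds `low` and `high` are hypothetical and would need tuning against labeled data.

```python
def route(detector_score, low=0.2, high=0.9):
    """Route an item based on a detector score in [0, 1] (1 = almost certainly fake)."""
    if not 0.0 <= detector_score <= 1.0:
        raise ValueError("detector_score must be between 0 and 1")
    if detector_score >= high:
        return "auto_flag"
    if detector_score <= low:
        return "auto_pass"
    return "human_review"


for score in (0.95, 0.5, 0.1):
    print(score, route(score))
```

Widening the gap between the two thresholds sends more items to analysts, trading scalability for reliability.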

Countermeasures and Media Literacy Strategies

Preventive Delegitimization

Preventive delegitimization technology neutralizes fakes before mass distribution through early detection and public debunking. Monitoring systems scan social networks in real-time, identifying content with signs of emotional encoding and cascading spread in early stages — the first 2–4 hours are critical for interrupting virality.

Rapid response teams from fact-checking organizations publish rebuttals within 30–90 minutes of detection, using the same distribution channels and emotional triggers for maximum reach. Algorithmic downranking (shadow banning) is applied by platforms to content flagged by detectors, reducing organic reach by 70–85% without complete removal.

Prebunking — preemptive debunking of anticipated fakes — builds cognitive immunity in audiences. Prior exposure to manipulation mechanisms reduces susceptibility to disinformation by 40–60% over 2–4 weeks.

Contextual warnings before sharing suspicious content reduce distribution by 30%, but trigger reactance effects in 15% of users. The optimal strategy combines technological barriers with educational interventions, avoiding censorship rhetoric.

Educational Programs

Systematic media literacy includes training in critical thinking, verification techniques, and understanding psychological manipulation mechanisms. Programs for students (ages 10–17) focus on developing skepticism toward sensational content, source-checking skills, and recognizing emotional triggers — effectiveness confirmed by a 55% reduction in fake sharing among trained groups.

Corporate training for media employees, government agencies, and educational institutions includes practical modules on using verification tools, analyzing deepfakes, and protocols for responding to information attacks.

- Mass awareness campaigns use infographics, interactive quizzes, and game simulations to educate broad audiences.

- The Bad News project (Cambridge University) allows users to create their own fakes, demonstrating manipulation mechanisms from the inside — participants recognize disinformation 25% better after completion.

- Continuous learning is critical: one-time interventions lose effectiveness after 3–6 months, while regular knowledge updates maintain verification skills.

- Integration of media literacy into school curricula as a mandatory subject has been implemented in 23 countries, showing long-term reduction in susceptibility to disinformation at the population level.

Platform Mechanisms for Cascade Interruption

Social platforms implement algorithmic interventions to slow the virality of suspicious content. Friction mechanisms — artificial delays before sharing, mandatory captchas, requirements to read articles before reposting — reduce impulsive distribution by 35–50% without blocking content.
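A friction mechanism can be sketched as a check that runs when a user presses the share button. The data model and the 10-second dwell time below are hypothetical. The sketch only shows how "read before reposting" and "artificial delay" might combine for flagged content, without blocking the share outright.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ShareAttempt:
    flagged: bool              # content was marked as suspicious upstream
    opened_link: bool          # user opened the linked article
    seconds_since_view: float  # time since the post first appeared on screen


def friction_prompt(attempt: ShareAttempt, min_dwell_seconds: float = 10.0) -> Optional[str]:
    """Return the prompt to show before sharing, or None to share immediately."""
    if not attempt.flagged:
        return None
    if not attempt.opened_link:
        return "read_first"
    if attempt.seconds_since_view < min_dwell_seconds:
        return "wait"
    return None


print(friction_prompt(ShareAttempt(flagged=True, opened_link=False, seconds_since_view=2.0)))
```

Every branch ends with the user still able to share, which matches the description of friction as slowing impulsive distribution without blocking content.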

Ranking algorithms downgrade posts with signs of emotional encoding, limiting their organic reach to 20–30% of what verified content normally receives. Collaborative moderation engages the community in assessing credibility through crowdsourced labels: Community Notes on X (Twitter) demonstrate 78% agreement with professional fact-checkers.

- Algorithm Transparency: users don't understand why content is flagged as suspicious, which generates conspiracy theories about censorship. APIs for researchers provide distribution data for academic analysis, but are limited by privacy terms.

- Cross-Platform Coordination: initiatives like the Global Internet Forum to Counter Terrorism (GIFCT) enable synchronized removal of identical content, but raise concerns about centralization of information control.

- Freedom vs. Safety Balance: the optimal balance between free speech and countering conspiracy theories remains subject to debate. Technological solutions must be complemented by legal frameworks and public oversight.


Frequently Asked Questions

Viral fakes are false information with emotional encoding, specifically designed for rapid spread through social networks. Unlike ordinary disinformation, they use cascading chain reactions and psychological triggers for mass reach. Their key feature is an irrational emotional charge that provokes an immediate desire to share the content (Manoylo, 2020).

Cascading spread occurs through a chain reaction principle: one user shares content, their followers repost it further, creating an avalanche effect. Platform algorithms amplify this process by showing emotionally charged content to more people. The speed of spread exceeds the capabilities of moderation and fact-checking (Manoylo, 2020).

Emotional encoding exploits universal psychological mechanisms such as fear, anger, and outrage. Education level does not guarantee protection from emotionally charged disinformation. Sensational and extraordinary content attracts attention faster than rational fact-checking can respond (Ya Ihua, 2025).

No, this is a myth. Modern deepfakes are indeed difficult to detect, but technical artifacts remain: unnatural movements, lighting problems, low image quality. By 2025, detection has become more challenging, but specialized tools and expert analysis allow identification of fakes (sources 2023-2025).

Yes, linguistic analysis reveals characteristic patterns. Fakes often contain neologisms, dysphemisms, specific vocabulary, and emotionally charged constructions. Corpus analysis of COVID-19 fakes showed stable linguistic markers distinguishing them from credible news (Shiryaeva, 2024; Monogarova, 2021, 2023).

Use a multimodal approach: reverse image search (Google, Yandex), metadata verification, shadow and lighting analysis. Pay attention to image quality—paradoxically, low quality may indicate AI generation. For complex cases, use specialized deepfake detectors (sources 2023-2025).

Immediately delete the post and publish a retraction explaining the error. This is important for interrupting the cascading chain of spread. Your honesty will help stop disinformation and increase your audience's trust in your future publications (Manoylo, 2020).

There are AI deepfake detectors, fact-checking services (Factcheck.kz, StopFake), metadata analysis tools, and reverse image search. However, a fully automated solution is impossible—a combination of technical means and human expertise is required. Detectors lag behind the latest content generation technologies (sources 2023-2025).

Platforms use algorithmic moderation, fact-checking partnerships, labeling of disputed content, and reducing reach of suspicious publications. However, the scale and speed of spread exceed moderation capabilities. Cascading reactions occur faster than systems can respond (Ya Ihua, 2025).

This is a technology of preemptive debunking of disinformation before its mass spread. The method includes analysis of emotional encoding, pattern identification, and public warnings to the audience. The preventive approach is more effective than after-the-fact refutations, as it interrupts the cascade at an early stage (Manoylo, 2020).

Debunking reaches a significantly smaller audience than the original fake. The emotional resonance of false information is stronger than rational correction. By the time fact-checks are published, cascading dissemination has already created persistent false beliefs among millions of users (sources 2020-2025).

This is a widespread myth. Emotional encoding exploits basic psychological mechanisms regardless of education level. Critical thinking helps, but doesn't guarantee immunity to manipulation. Even experts can succumb to emotionally charged content under conditions of information overload (sources 2020-2025).

No, this is impossible due to the constant evolution of content generation technologies and human susceptibility to emotional reactions. A realistic goal is reducing the scale of dissemination through media literacy, technical tools, and platform mechanisms. This is an arms race between fake creators and detectors (Ya Ihua, 2025).

Fear, anger, outrage, and moral indignation are the primary virality triggers. Fake news exploits threats to health, safety, and social justice. Sensationalism and extraordinariness amplify emotional response and the urge to immediately share information (Manoylo, 2020; Ya Ihua, 2025).

Early deepfakes (pre-2023) had obvious artifacts: incorrectly rendered hands, unnatural lip movements, texture problems. Modern technologies have improved significantly but still leave traces in lighting, shadows, and microexpressions. Detection requires specialized tools and expertise (sources 2023-2025).

Linguistic analysis shows that viral fakes targeting English-speaking audiences strategically avoid mentioning specific political figures. This indicates deliberate targeting and content adaptation for audience specificity. The pattern was identified in corpus analysis of COVID-19 disinformation (Monogarova, 2021, 2023).