Pizzagate and QAnon as Heirs to Satanic Panic: Structural Continuity of Conspiratorial Narratives

The Pizzagate conspiracy theory emerged in October 2016 on the 4chan forum in the context of leaked emails from Hillary Clinton's campaign chairman John Podesta. Users interpreted mentions of pizza and other foods as coded messages about pedophilia and child trafficking, allegedly conducted by high-ranking Democrats through the Comet Ping Pong pizzeria (S007).

The QAnon theory, which appeared in 2017, expanded this narrative to a global scale: an anonymous source "Q" claimed that President Trump was waging a secret war against an international cabal of Satanist pedophiles controlling governments and media (S006).

⚠️ Archetypal Structure of Accusations: From McMartin to Comet Ping Pong

Research demonstrates a direct structural connection between these modern theories and the Satanic Panic of the 1980s. In 1983, the McMartin Preschool case in California initiated a wave of accusations of ritualistic Satanic child abuse in daycare centers across America.

Despite the absence of physical evidence and subsequent recognition that child interrogation methods were manipulative, the panic led to hundreds of trials. QAnon reproduces identical elements: accusations of elite pedophilia, appeals to child protection, construction of a hidden network of evil, and demands for vigilance from "awakened" citizens (S004).

The Satanic Panic of the 1980s and QAnon share the same architecture: the enemy is invisible, evidence of their activities is encoded, and belief in the theory becomes a marker of moral vigilance.

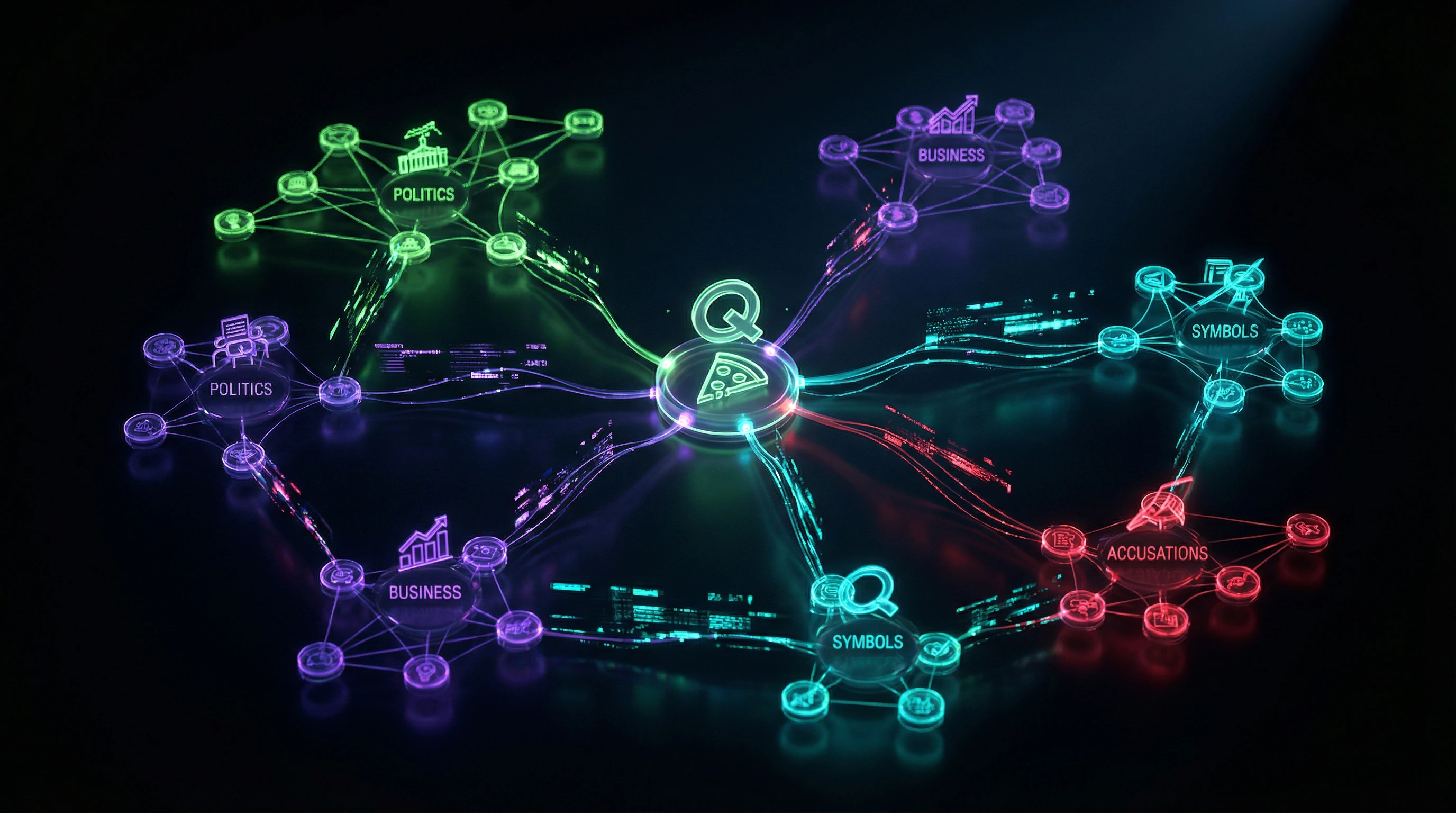

🧩 Multi-Domain Connectivity as a Key Mechanism

Pizzagate relies on interpretation of "hidden knowledge" to connect unrelated domains: politics, business, art, symbolism, and pedophilia (S002). The theory uses arbitrary "codes"—the word "pizza" is interpreted as denoting underage girls, "hotdog" as boys, "cheese" as young girls.

| Domain | Connection in Narrative | Interpretation Mechanism |
|---|---|---|
| Politics | Podesta emails | Searching for "codes" in ordinary correspondence |
| Business | Comet Ping Pong pizzeria | Transforming a real establishment into a network hub |
| Symbolism | Logos, geometry | Searching for "hidden signs" in design |
| Art | Works by artists acquainted with elites | Interpretation as "confessions" of crimes |

🔁 From Forums to Mainstream: Real-Time Meme Evolution

Pizzagate emerged through a collective process on anonymous forums, where users jointly interpreted leaked emails, creating increasingly complex connections (S002). This process demonstrates characteristics of "post-truth protest" (S005), where emotional engagement and group identity matter more than factual accuracy.

QAnon amplified this dynamic through game mechanics: "drops" from Q presented puzzles requiring collective decoding, creating a sense of participation in an investigation and access to exclusive knowledge (S006).

- Collective Investigation: Forum users jointly "decode" materials, creating the illusion of scientific method. Each participant becomes a "researcher," which strengthens commitment to the theory.

- Puzzle Game Mechanics: Incomplete and ambiguous Q "drops" allow everyone to find their own explanation in them. Ambiguity becomes an advantage rather than a flaw.

- Knowledge Exclusivity: Participants believe they possess information unavailable to mainstream consciousness. This creates a psychological barrier against criticism and factual refutations.

Steelman Analysis: Seven Arguments That Make Conspiracy Theories Convincing to Millions

To understand the resilience of Pizzagate and QAnon to fact-checking, we must examine the strongest arguments presented by supporters of these theories. The steelman approach requires presenting the opponent's position in its most convincing form before critical analysis.

🧱 Argument from Real Scandals: Epstein as Proof of Systemic Abuse

Supporters point to actual cases of sexual exploitation by elites, particularly the case of Jeffrey Epstein, a financier who was convicted in 2008 of procuring a minor for prostitution, was charged in 2019 with sex trafficking of minors, and had connections to numerous politicians, businesspeople, and celebrities. His death in a federal jail in 2019 while awaiting trial, officially ruled a suicide, generated widespread suspicions of murder to conceal compromising information.

This real scandal is used as proof that "elite pedophile networks exist," and therefore Pizzagate and QAnon could be true. The logic: if one such case is real, why couldn't other accusations also be real?

🔎 Argument from Symbolism: "Too Many Coincidences"

Conspiracy theorists point to the use of certain symbols in logos, artwork, and social media of people connected to the accusations. For example, a spiral triangle, which the FBI actually included in a 2007 document about pedophile symbols, was said to resemble the logo of a business located near Comet Ping Pong (S007).

Theory supporters claim that the probability of multiple such "markers" coinciding by chance is statistically negligible, therefore the symbols are used intentionally for communication within the network.

🧠 Argument from "Hidden Knowledge": Elites Communicate in Code

The central thesis: influential people won't openly discuss criminal activity, so they use codes. Food mentions in Podesta's emails are interpreted as such codes. Supporters point to supposedly strange phrasings ("playing dominos on cheese or on pasta?") as proof of coded communication (S007).

The inferential chain supporters follow runs: normal people don't write like this, so it must be code; if it's code, something illegal is being hidden; and given the context of other "evidence," it must be pedophilia.

📊 Argument from Movement Scale: Millions Can't Be Wrong

By 2020, QAnon had evolved into a mass movement with millions of followers worldwide. Research on Voat, a platform where QAnon supporters migrated after Reddit bans, showed an active community with thousands of posts and comments demonstrating a complex system of interpreting Q "drops" (S006).

- If the theory were completely absurd, it couldn't attract so many people

- Supporters include educated professionals

- Mass appeal is perceived as validation: "we can't all be crazy"

⚙️ Argument from Censorship: "If There's Nothing to Hide, Why Delete?"

The removal of QAnon and Pizzagate content from major platforms (Twitter, Facebook, YouTube) is interpreted not as combating disinformation, but as proof the theory is correct. The logic: if the theory is false, it's easy to refute with facts; if censorship is applied instead of refutation, the theory must touch on truth they want to hide.

Every account ban or video removal is perceived as confirmation of the conspiracy's existence. This mechanism is particularly effective in the context of conspiratorial thinking, where any opposition is interpreted as part of the conspiracy.

🧬 Argument from Q's Insider Status: Specific Predictions

QAnon supporters point to cases where Q "drops" allegedly predicted events or contained information later confirmed. For example, mentioning certain topics days before they appeared in the news. This is interpreted as proof that Q actually has access to high-level insider information.

Research showed that supporters actively search for such "coincidences" (qoincidences) and create complex connection graphs between drops and events (S006).

🛡️ Argument from Child Protection: Moral Imperative to Act

The most emotionally powerful argument: even if the probability of the theory being true is low, the stakes are so high (saving children from abuse) that it's morally necessary to investigate any suspicions. This argument appeals to the universal value of protecting children and creates a moral dilemma: rejecting the theory means risking ignoring real abuse.

- Mechanism of Action: The moral imperative blocks critical thinking; doubting the theory is perceived as irresponsibility.

- Historical Parallel: The Satanic Panic of the 1980s–90s demonstrated that this moral imperative made accusations particularly resistant to skepticism (S004).

- Result: The theory becomes protected from refutation; any objection is interpreted as an attempt to protect criminals.

Evidence Base: What Systematic Research Shows About Conspiracy Narratives

Academic research on Pizzagate and QAnon provides empirical data on dissemination mechanisms, narrative structures, and community characteristics, allowing us to separate documented facts from speculation.

📊 Automated Narrative Structure Analysis: Data from 4chan and News Sources

A study using an automated pipeline to detect conspiracy narrative frameworks analyzed posts from 4chan and news articles about Pizzagate (S002). The methodology treated posts as samples of subgraphs from a hidden narrative network, formalizing the task as a latent model estimation problem.

Key finding: Pizzagate demonstrates systematic multi-domain connectivity—the theory links politics, business, art, and crime through interpretation of "hidden knowledge" (S002). Researchers hypothesized that this multi-domain focus is a distinguishing feature of conspiracy theories, differentiating them from ordinary political scandals that typically remain within a single domain.

Multi-domain connectivity is not a random artifact but a structural feature that makes conspiracy narratives more "sticky": each new domain adds a layer of plausibility and expands the audience.
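The idea of treating each post as a small sample of a larger hidden network can be illustrated with a minimal Python sketch. This is not the pipeline used in (S002); it is a simplified stand-in that only counts co-mentions. The entity names, the `DOMAIN` mapping, the sample posts, and the `min_support` threshold are all hypothetical and chosen purely for illustration.

```python
from collections import Counter
from itertools import combinations

# Hypothetical mapping from entity to narrative domain (illustrative labels only).
DOMAIN = {
    "emails": "politics",
    "campaign": "politics",
    "pizzeria": "business",
    "logo": "symbolism",
    "painting": "art",
}

def aggregate_posts(posts, min_support=2):
    """Treat each post as a small subgraph (a set of co-mentioned entities)
    and keep only the edges that recur in at least `min_support` posts."""
    edge_counts = Counter()
    for entities in posts:
        known = sorted({e for e in entities if e in DOMAIN})
        for a, b in combinations(known, 2):
            edge_counts[(a, b)] += 1
    return {edge: n for edge, n in edge_counts.items() if n >= min_support}

def cross_domain_share(edges):
    """Fraction of retained edges that link two different domains."""
    if not edges:
        return 0.0
    crossing = sum(1 for a, b in edges if DOMAIN[a] != DOMAIN[b])
    return crossing / len(edges)

# Made-up posts, each reduced to the entities it mentions.
posts = [
    ["emails", "pizzeria"],
    ["emails", "pizzeria", "logo"],
    ["pizzeria", "logo"],
    ["emails", "campaign"],
    ["emails", "campaign", "painting"],
]
edges = aggregate_posts(posts)
share = cross_domain_share(edges)
print(edges, share)
```

In this toy data, two of the three recurring edges cross domain boundaries. A high cross-domain share of this kind is one simple way to express the multi-domain connectivity described above, under the assumption that entities and domains can be labeled reliably.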

🔬 Meme Evolution on 4chan: From Interpretation to Mobilization

Detailed analysis of Pizzagate's development on 4chan showed how the theory evolved in real time through a collective process (S003). The study documented the transition from initial interpretation of leaked emails to creation of complex "connection maps," identification of "suspects," and calls to action.

Critically important: the theory was not created centrally but emerged through a distributed process where each participant added elements that were then accepted or rejected by the community. This process demonstrates characteristics of "post-truth protest," where collective narrative construction matters more than factual verification (S007).

| Evolution Stage | Characteristic | Community Function |
|---|---|---|
| Interpretation | Searching for hidden meanings in leaks | Cognitive activation |
| Mapping | Visualizing connections between actors | Social coordination |
| Identification | Assigning roles to specific individuals | Psychological anchoring |
| Mobilization | Calls to action (investigation, protest) | Transition to offline |

🧾 QAnon Research on Voat: Community Structure and Content Toxicity

An exploratory study of QAnon on the Voat platform analyzed 10 million posts and comments from the /v/GreatAwakening subverse (S006). QAnon emerged in 2017 on 4chan, promoting the idea that influential politicians, aristocrats, and celebrities are involved in a global pedophile network.

Key findings: the community's discussions portray governments as controlled by "puppet masters," with democratically elected officials serving as a false front for democracy (S006). Graph visualization showed that some QAnon-related topics are closely connected to Pizzagate and so-called "Q drops."

Unexpected discovery: discussions on /v/GreatAwakening were less toxic than in the broader Voat community (S006). This suggests that QAnon communities are not necessarily characterized by high communication aggression, which may help explain their appeal to a wider audience.

- Low toxicity in closed communities: When a group shares a unified narrative, internal communication becomes more civil: the enemy is outside, not inside.

- Paradox of appeal: Absence of aggression within the group can serve as a filter: people seeking conflict leave; those seeking belonging and meaning remain.

🧬 Comparative Analysis with Satanic Panic: Structural Parallels

Research specifically examining QAnon's connection to the 1980s Satanic Panic revealed deep structural parallels (S004). Both waves are characterized by accusations of elites or authority figures engaging in systematic child abuse and claims of hidden networks using symbolism and rituals.

Both waves appeal to the moral duty of protecting children as justification for extraordinary measures and demonstrate resistance to refutation through the mechanism of "absence of evidence is evidence of concealment." Real consequences for the accused include destroyed careers and legal prosecution.

Critical difference: modern theories spread through digital platforms at speeds and scales unattainable in the 1980s. Satanic Panic required months to spread between cities; QAnon reaches millions in days.

⚙️ Sociotechnical Perspective on Combating Misinformation: Why Fact-Checking Isn't Enough

Research on sociotechnical approaches to combating misinformation shows the limitations of traditional fact-checking (S005). The problem is not just access to correct information, but cognitive, social, and technological factors that make people susceptible to conspiracy narratives.

Researchers emphasize the need for a multi-level approach: understanding cognitive biases exploited by misinformation; accounting for social dynamics of groups where conspiracy theories become identity markers; analyzing algorithmic mechanisms of platforms that amplify emotionally charged content (S005).

- Simply providing facts is often ineffective when the theory serves psychological functions for its adherents

- Social function (group belonging) often outweighs cognitive function (explaining the world)

- Platform algorithms amplify emotional content regardless of its truthfulness

- Interventions must address all three levels simultaneously, otherwise the effect is minimal

Mechanisms of Impact: Why Conspiracy Narratives Capture Consciousness Despite Facts

Understanding the causal mechanisms that make Pizzagate and QAnon convincing requires analyzing the cognitive, social, and technological factors that create ideal conditions for conspiracy theory propagation.

🧩 Pattern-Matching and Apophenia: The Brain That Sees Connections Everywhere

The human brain is evolutionarily tuned to detect patterns—a critically important survival capability. However, this ability leads to apophenia—perceiving meaningful connections between unrelated phenomena.

Conspiracy theories exploit this mechanism by providing abundant material for pattern-matching: symbols, date coincidences, linguistic "codes." Research on Pizzagate showed how supporters systematically connected unrelated domains through interpretation of "hidden knowledge" (S002).

The more you search for connections, the more you find—not because they exist, but because selective attention and confirmation bias create a self-reinforcing cycle of conviction.
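The self-reinforcing cycle has a simple arithmetic core, sketched below in Python. The sketch assumes, purely for illustration, that each individual comparison (a symbol against a logo, a date against a news event) has a fixed 1% chance of "matching" by luck and that comparisons are independent. Both the 1% figure and the independence assumption are hypothetical simplifications, not values taken from the cited research.

```python
import random

def chance_of_some_hit(p, n):
    """Probability that at least one of n independent checks 'matches' by luck,
    when each single check matches with probability p."""
    return 1.0 - (1.0 - p) ** n

def simulate(p, n, trials=2000, seed=0):
    """Monte Carlo estimate of the same quantity, using a fixed seed."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # One 'search session': n comparisons, success if any of them matches.
        if any(rng.random() < p for _ in range(n)):
            hits += 1
    return hits / trials

for n in (1, 10, 100, 500):
    print(n, round(chance_of_some_hit(0.01, n), 3), simulate(0.01, n))
```

Under these assumptions, a single comparison yields a coincidence 1% of the time, 100 comparisons yield at least one coincidence about 63% of the time, and 500 comparisons do so more than 99% of the time. A motivated community that checks thousands of possible connections is therefore almost guaranteed to find some, whether or not anything real links them.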

🔁 Game Mechanics and Reward: QAnon as Alternate Reality

QAnon uses mechanics similar to alternate reality games (ARGs): cryptic "drops" require collective decoding, creating a sense of participation in an investigation (S006).

This structure provides constant reward: each "decode" delivers the thrill of discovery (popularly described as a dopamine spike), social recognition from the community, and reinforcement of group identity. Analysis of Voat revealed complex connection graphs between Q drops and events created by the community (S006). This is collective creativity that brings satisfaction regardless of actual truth.

- Puzzle → collective decoding → social recognition

- Each "insight" → dopamine spike → identity reinforcement

- No ending → infinite game → constant engagement

🧷 Epistemic Isolation: Self-Sustaining Belief Systems

Conspiracy theories construct epistemically closed systems where any refutation is integrated as confirmation.

- Absence of evidence: interpreted as evidence of cover-up

- Refutations from official sources: viewed as part of the conspiracy

- Demands for proof: rejected as naivety or complicity

Research showed that QAnon supporters perceive governments as controlled by "puppet masters," and democratic institutions as fake facades (S006). In this coordinate system, any information from mainstream sources is automatically discredited, creating complete epistemic isolation.

👁️ Illusion of Insider Knowledge: Epistemic Superiority

Conspiracy theories provide a sense of access to "hidden knowledge" unavailable to the "sleeping" masses. This creates a feeling of epistemic superiority: "I know the truth that others don't see."

Analysis of Pizzagate showed that the theory relies on interpretation of "hidden knowledge" to connect domains (S002). QAnon supporters call themselves "awakened," contrasting themselves with "sheeple" who blindly believe official narratives.

This is not merely cognitive belief, but identity that provides meaning and status. Abandoning the theory means losing special status and admitting you were deceived—a psychologically painful choice.

🔬 Algorithmic Amplification: Technological Acceleration of Spread

Social media platforms use algorithms optimized for engagement that disproportionately amplify emotionally charged and polarizing content. Conspiracy theories that provoke outrage, fear, and moral indignation gain algorithmic advantage.
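The claim that engagement optimization can favor charged content regardless of accuracy can be illustrated with a toy ranking function. The Python sketch below is not any platform's actual algorithm: the `Post` fields, the scoring weights, and the sample numbers are hypothetical. It only shows that when a score is built from interactions alone, accuracy plays no role in the ordering.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Post:
    title: str
    accurate: bool   # whether the content is factually correct
    reactions: int   # likes, angry reactions, and similar signals
    shares: int
    comments: int

def engagement_score(post, w_reactions=1.0, w_shares=3.0, w_comments=2.0):
    """Hypothetical engagement score; the weights are illustrative assumptions.
    Note that `post.accurate` is never consulted."""
    return (w_reactions * post.reactions
            + w_shares * post.shares
            + w_comments * post.comments)

def rank_feed(posts):
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)

# Made-up interaction counts for three made-up posts.
feed = rank_feed([
    Post("Sober fact-check", accurate=True, reactions=120, shares=10, comments=15),
    Post("Outrage-inducing rumor", accurate=False, reactions=300, shares=90, comments=140),
    Post("Local news report", accurate=True, reactions=80, shares=5, comments=8),
])
top = feed[0]
print([p.title for p in feed])
```

With these made-up numbers the inaccurate but provocative post ranks first, simply because it attracts the most interactions. Any correction for accuracy would have to be added as a separate signal, since the score itself contains none.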

Research on Pizzagate's evolution showed how the theory spread in real-time through 4chan, then migrated to Twitter, Facebook, and YouTube (S003). Each platform added its own amplification mechanisms: YouTube's recommendation algorithms created "rabbit holes" leading from moderate content to extreme; Facebook's algorithms formed echo chambers where users saw only confirming content.

| Platform | Amplification Mechanism | Effect |
|---|---|---|
| 4chan | Anonymity + lack of moderation | Rapid emergence and mutation of theory |
| YouTube | Recommendation algorithms | "Rabbit holes" from moderate to extreme |
| Facebook | Echo chambers + social validation | Group reinforcement of beliefs |
| Alternative platforms | Minimal moderation | Migration after bans, even greater radicalization |

Research on QAnon on Voat showed that after bans on mainstream platforms, the community migrated to alternative platforms with even less moderation (S006). Technology is not neutral—it structures which ideas spread, who sees them, and how often.

The connection to cognitive biases shows that these mechanisms work not against our thinking, but through its natural channels. Understanding conspiracy mechanisms requires analyzing not only the content of theories, but also the architecture of platforms that spread them.

Data Conflicts and Zones of Uncertainty: Where Evidence Contradicts Itself

Honest analysis requires acknowledging areas where research yields contradictory results or where data is insufficient for definitive conclusions.

🧾 Toxicity of QAnon Communities: Contradictory Data

Research on QAnon on Voat found that discussions on /v/GreatAwakening were less toxic than in the broader Voat community (S006). This contradicts the widespread perception of QAnon communities as particularly aggressive.

However, this may be an artifact of the specific platform or research period. Other studies have documented instances of violence and threats linked to QAnon, including the participation of QAnon adherents in the January 6, 2021 attack on the U.S. Capitol.

Toxicity may vary between platforms and subgroups within the movement, or may manifest not in textual aggression but in willingness to take real-world action.

🔎 Effectiveness of Debunking: Mixed Results

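Research on the effectiveness of fact-checking and debunking shows mixed results. Simply providing facts is often insufficient, and some studies report a backfire effect, in which refutation strengthens the original belief, although later replication attempts suggest this effect is less common than first thought.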

At the same time, targeted debunking that accounts for psychological motivations shows greater effectiveness. The difference in results depends on method, audience, and context of information dissemination.

- Universal fact-checking is often ineffective or counterproductive

- Personalized approaches that acknowledge emotional roots of belief work better

- Long-term effects remain unclear—longitudinal studies are needed

📊 Role of Platforms and Algorithms: Data is Contradictory

Some studies point to the decisive role of recommendation algorithms in spreading conspiracy narratives (S001). Others find that social connections and trust in sources play a more significant role than algorithmic filtering.

Likely, both mechanisms operate simultaneously, but their weight varies depending on platform, user demographics, and content type.

| Factor | Evidence Level | Zone of Uncertainty |
|---|---|---|
| Algorithmic amplification | Medium | Weight relative to social networks |
| Social trust | High | How it interacts with algorithms |
| Emotional vulnerability | High | Which emotions are most critical |
| Education level | Low | Paradox: educated people also believe |

🔗 Link Between Conspiracy Belief and Real Harm

There is a correlation between adherence to conspiracy narratives and willingness to engage in violence (S004), but the causal relationship remains disputed. Is conspiracy belief a cause or symptom of deeper alienation?

Data shows that not all conspiracy believers transition to action. Manipulative group dynamics and social isolation may be intermediate factors, but their role requires further study.

- Data Conflict: Correlation between belief and violence exists, but most believers do not commit violent acts.

- Possible Explanation: Conspiracy belief is a necessary but not sufficient condition for radicalization. Additional factors are needed: social isolation, personal trauma, influence of leaders.

⏳ Long-Term Effects: Insufficient Data

Most research on conspiracy narratives consists of snapshots at specific moments in time. How do people exit conspiracy communities? What factors contribute to belief reassessment? This remains understudied.

Research (S006) begins to fill this gap, but data on long-term trajectories of believers is still insufficient for reliable conclusions.