Dead Internet Theory: from conspiratorial meme to documented reality of digital erosion

Dead Internet Theory asserts that organic content created by real people is systematically disappearing or becoming inaccessible, replaced by artificially generated material — bots, algorithmic syntheses, neural network content. According to research by Mehmet Kaan Yildiz from Uskudar University, "today the internet is no longer a space that preserves organic discussions from the past" (S001).

This isn't merely a change in user experience; it's a fundamental transformation of the nature of digital memory. The internet has become a stream dominated by superficial and repetitive artificial content (S001).

🧩 Boundaries of the phenomenon: what counts as "dead" in the context of the digital ecosystem

"Dead internet" has several dimensions. First — physical inaccessibility: links to nowhere, deleted pages, vanished domains. Second — algorithmic invisibility: content exists, but search engines and recommendation algorithms don't show it.

In Yildiz's assessment, much of this content is either unavailable or unreachable in algorithmic feeds (S001).

The third dimension — replacement of authentic content with synthetic: original discussions replaced by AI-generated summaries that may contain factual errors or "hallucinations."

🔎 Wikipedia as indicator: why the encyclopedia became litmus test for digital degradation

Wikipedia functions as a massive archive of links to external sources. Each article contains dozens, sometimes hundreds of hyperlinks that should verify stated facts. Research documents the scale of the problem: a significant portion of these links becomes non-functional over time (S003).

This isn't just a technical glitch — it's a symptom of a deeper process of disappearing digital knowledge infrastructure. When sources cited by the encyclopedia vanish, the encyclopedia itself becomes a graveyard of links.

🧱 Architecture of the problem: three layers of digital memory destruction

- Technical layer: servers shut down, domains expire, content is deleted by owners.

- Economic layer: maintaining old content requires resources that companies aren't willing to spend on materials that don't generate traffic.

- Algorithmic layer: search engines and social platforms prioritize fresh content, making old discussions practically invisible.

Yildiz notes: "The digital past is simultaneously disappearing and being replaced by an artificial past" (S001). These three layers interact, creating an accelerated erosion effect that affects not just individual sites, but the very architecture of digital memory.

The Steel Man of the Theory: Seven Most Compelling Arguments for the Reality of the Dead Internet

📊 Argument One: Documented Disappearance of Wikipedia Links as Empirical Fact

The ACM study (S003) provides direct empirical data on the scale of the "permanently dead" link problem in Wikipedia. This is not a theoretical construct, but a measurable phenomenon with concrete numbers.

The methodology included systematic analysis of links in Wikipedia articles, tracking their status over time, and classifying types of unavailability. Results show that a significant proportion of external links become non-functional within several years of publication, with the process accelerating for certain content categories.

| Characteristic | Observation |
|---|---|
| Type of phenomenon | Measurable, documented |
| Scale | Significant proportion of external links |
| Dynamics | Accelerating degradation over time |

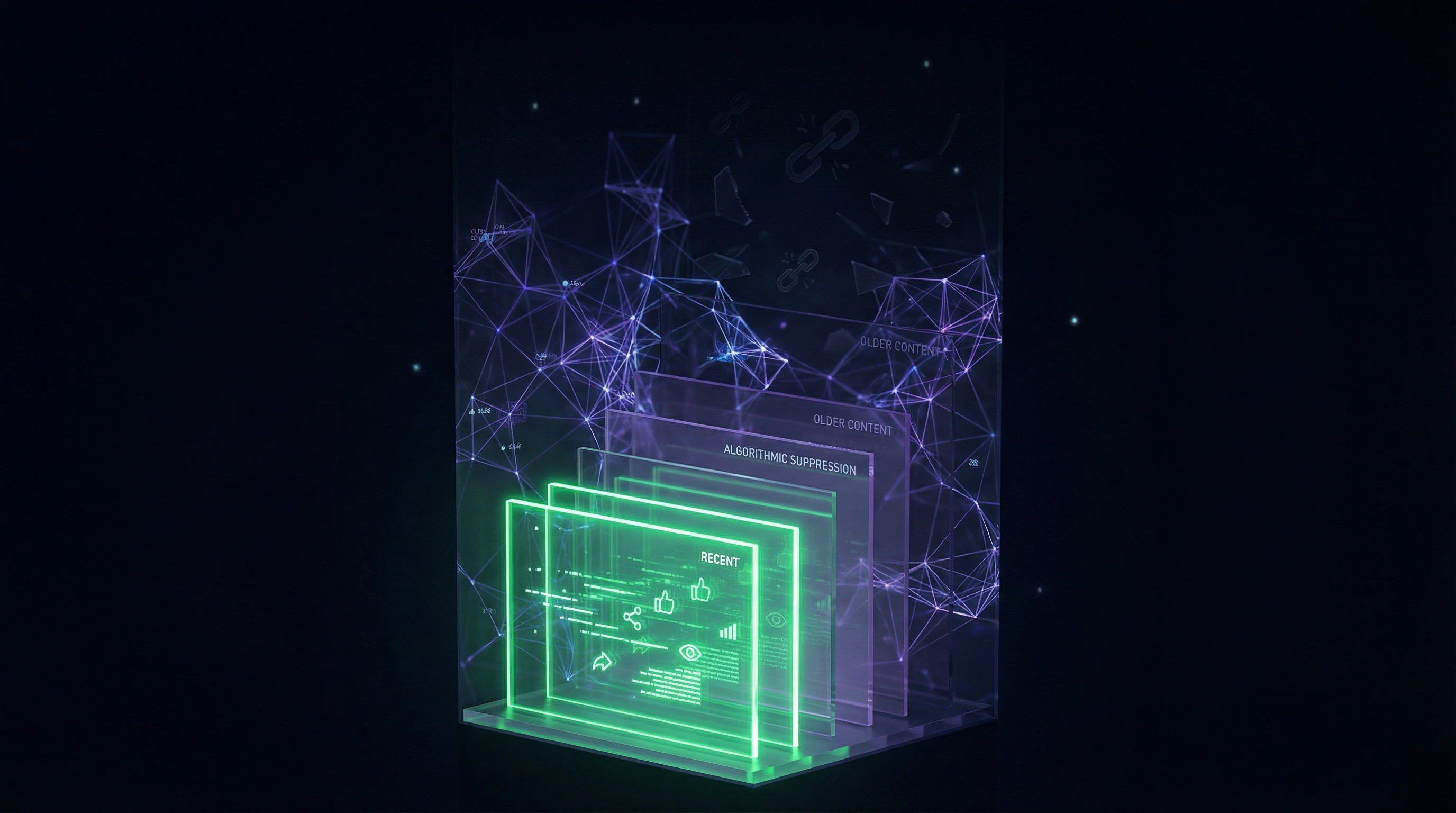

🔬 Argument Two: Algorithmic Suppression of Historical Content in Search Engines and Social Networks

Modern ranking algorithms systematically discriminate against old content in favor of fresh material. Yildiz notes: "Yet today, much of this content is either unavailable or unreachable in algorithmic feeds" (S001).

This is not accidental but the result of deliberate design: the engagement metrics on which algorithms are based naturally favor new content. A user trying to find a five-year-old discussion faces a serious barrier, since search engines often display recent results even when older ones are more relevant to the query.

Algorithmic suppression is not a bug, but a feature: the system works exactly as designed. The question is not why this happens, but who benefits from the disappearance of memory.

🧾 Argument Three: Replacement of Organic Content with AI-Generated Hallucinations

"At the heart of the problem is that as original human content weakens, the worn and faded areas are filled with artificial intelligence hallucinations. As real memory is erased, it is replaced by simulated memory" (S001).

Generative models create plausible but factually inaccurate content that fills the voids left by disappeared originals. The problem is compounded because the fact that "generative models sometimes produce unverified, fabricated, or context-detached information adds an additional layer of uncertainty on top of old digital discussions that are already difficult to find" (S001).

- Organic content disappears from search and archives

- AI fills the voids with plausible but false data

- New users cannot distinguish real from synthetic

- Digital memory transforms into simulation

🧬 Argument Four: Economic Impracticality of Maintaining Archives in the Era of Engagement Metrics

The business models of modern platforms are based on maximizing engagement and time spent on site. Old content that does not generate clicks and does not attract advertisers becomes economic ballast.

Companies have no financial incentives to maintain accessibility of materials that do not generate revenue. This creates systematic pressure toward deletion or deprioritization of historical content. Server space costs money, and old discussions do not earn it—the economic logic is inexorable.

🔁 Argument Five: Self-Reinforcing Cycle of Degradation Through AI Training on Synthetic Data

When generative models are trained on data that already contains AI-generated content, a "model collapse" effect emerges—progressive quality decline and increased homogeneity of outputs.

As organic content disappears and is replaced by synthetic substitutes, new generations of models train on increasingly distorted representations of reality. This creates positive feedback: the more AI content on the internet, the worse the quality of future AI models, which produce even more low-quality content.

- Model collapse: a phenomenon where training on synthetic data leads to progressive quality degradation. Each generation of models trains on increasingly distorted data, amplifying errors.

- Positive feedback: a system in which the result reinforces the cause. Here: more AI content → worse new models → even more low-quality content.
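The collapse dynamic can be illustrated with a deliberately tiny toy rather than a real language model: each "generation" fits a Gaussian to a small sample drawn from the previous generation's fitted Gaussian, and that fit becomes the only training source for the next generation. The function name `simulate_collapse` and its parameters are hypothetical; the sketch only shows that the maximum-likelihood spread estimate tends to shrink across generations, so rare values (the tails of the original distribution) vanish first.

```python
import random
import statistics


def simulate_collapse(generations: int = 200, sample_size: int = 10, seed: int = 0) -> list[float]:
    """Toy 'model collapse': each generation is fit only to the previous generation's output.

    Returns the fitted standard deviation after each generation (index 0 is the original data).
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: the "organic" data distribution
    history = [sigma]
    for _ in range(generations):
        # Train exclusively on synthetic samples from the previous model.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        # Maximum-likelihood Gaussian fit: sample mean and population standard deviation.
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)
        history.append(sigma)
    return history


if __name__ == "__main__":
    stds = simulate_collapse()
    for gen in (0, 10, 50, 100, 200):
        print(f"generation {gen:3d}: fitted std = {stds[gen]:.3e}")
```

With only ten samples per generation, the fitted standard deviation typically falls by many orders of magnitude within a couple of hundred generations. Larger samples slow the decay but, in this toy, do not change its direction.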

📌 Argument Six: Transformation of Digital Memory from Archive to Simulation

"However, as online discussions disappear and are replaced by artificial syntheses, what users perceive as 'digital memory' increasingly becomes a simulative structure" (S001).

This is not merely data loss—it is a qualitative change in the nature of digital memory. Instead of an archive of real human interactions, we get an algorithmically constructed representation of what "should have" happened, based on patterns extracted from training data. The distinction between "what was" and "what the model considers plausible" is erased.

Digital memory ceases to be testimony and becomes interpretation. This is not an archive—it is a gallery of probabilities, where reality and simulation are indistinguishable.

🧩 Argument Seven: Wikipedia's Institutional Inability to Counter Mass Link Degradation

Despite Wikipedia functioning as a "successful example of a self-governing team" (S006), where "an integrated and coherent data structure is created while users successfully distribute roles through self-selection" (S006), the project lacks mechanisms for systematically combating the mass disappearance of external sources.

The volunteer model does not scale to the task of verifying and restoring millions of links. The study (S003) documents this problem, showing that even in a well-maintained encyclopedia, the process of link degradation outpaces restoration efforts.

- The volunteer model works for content editing

- But does not scale for verifying millions of links

- Link degradation occurs faster than restoration

- Institutional resources are insufficient to solve the problem
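The claim that degradation outpaces restoration reduces to simple arithmetic: if links break faster than volunteers repair them, the unrepaired backlog grows every month no matter how active the community is. The sketch below uses a hypothetical helper, `dead_link_backlog`, and made-up constant monthly rates purely for illustration; neither figure comes from S003.

```python
def dead_link_backlog(months: int, new_dead_per_month: int, repaired_per_month: int,
                      start: int = 0) -> list[int]:
    """Unrepaired dead links at the end of each month, under constant hypothetical rates."""
    backlog = start
    history = []
    for _ in range(months):
        backlog += new_dead_per_month                # links that broke this month
        backlog -= min(backlog, repaired_per_month)  # volunteers cannot repair more than exist
        history.append(backlog)
    return history


# Hypothetical: 1,000 links break per month, volunteers repair 800.
print(dead_link_backlog(12, new_dead_per_month=1000, repaired_per_month=800))
```

Any positive gap between the two rates produces linear growth; only a repair rate at or above the breakage rate keeps the backlog at zero.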

Evidence Base: What the Data Says About the Scale and Mechanisms of Digital Erosion

📊 Quantitative Data on "Permanently Dead" Links in Wikipedia

The study "Characterizing 'permanently dead' links on Wikipedia" (S003) presents a systematic analysis of non-functional links in the encyclopedia. The methodology included automated verification of HTTP response status for external links, classification of error types (404, 410, timeouts, DNS errors), and temporal analysis of degradation.

Results show systematic bias: news sites, personal blogs, and small commercial projects disappear significantly faster than institutional sources. This means digital memory preserves the voice of large organizations while losing the voices of independent authors.

| Source Type | Disappearance Rate | Reason |
|---|---|---|
| Institutional sites | Low | Continuous funding, backup copies |
| News portals | Medium | Reindexing, platform changes |
| Personal blogs | High | Lack of maintenance, hosting shutdown |
| Small commercial projects | High | Bankruptcy, domain changes |

🧪 Qualitative Analysis of Digital Memory Transformation

Yildiz provides a conceptual framework: "The digital past is simultaneously disappearing and being replaced by an artificial past" (S001). This is a dual process—not merely loss, but active substitution.

"At the heart of the problem is that as original human content weakens, the worn and faded areas are filled with AI hallucinations. While real memory is erased, it is replaced by simulated memory" (S001).

The internet is transforming from an archive of real events into a generator of plausible narratives. Users cannot distinguish between recovered facts and generated imitations.

🔎 Mechanisms of Algorithmic Suppression of Historical Content

Search engines and social platforms use ranking algorithms that consider content freshness, engagement metrics, and source authority. However, the freshness factor often outweighs other criteria.

"Much of this content is either inaccessible or unreachable in algorithmic feeds" (S001). Even high-quality old material becomes practically invisible. This is not a system error—it's its objective function: algorithms are optimized for short-term engagement, not long-term knowledge preservation.

- Algorithm ranks content by freshness

- Old content drops in search results

- User cannot find historical source

- Turns to AI summary instead of original

- Receives plausible but unverified information
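The first two steps of this chain can be made concrete with a toy ranking function. Real search engines do not publish their formulas; the exponential freshness decay, the `half_life_days` parameter, and the `Doc` records below are hypothetical choices for illustration only. The sketch shows how a strong enough recency discount lets a weakly relevant recent item outrank a highly relevant old one.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    relevance: float  # hypothetical topical match to the query, 0..1
    age_days: float


def freshness_score(doc: Doc, half_life_days: float = 180.0) -> float:
    """Relevance discounted by age: the weight halves every half_life_days."""
    return doc.relevance * 0.5 ** (doc.age_days / half_life_days)


def rank(docs: list[Doc], half_life_days: float = 180.0) -> list[Doc]:
    """Sort documents by freshness-weighted score, best first."""
    return sorted(docs, key=lambda d: freshness_score(d, half_life_days), reverse=True)


old_thread = Doc("five-year-old forum discussion", relevance=0.95, age_days=5 * 365)
recent_summary = Doc("last week's AI summary", relevance=0.40, age_days=7)

for doc in rank([old_thread, recent_summary]):
    print(f"{freshness_score(doc):.4f}  {doc.title}")
```

With a 180-day half-life the five-year-old thread keeps less than 0.1% of its weight and drops below the summary; with a very long half-life the more relevant thread ranks first again.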

🧾 The Problem of AI Hallucinations as Replacement for Factual Information

Generative models create plausible but factually inaccurate content—a phenomenon known as "hallucinations." "The fact that generative models sometimes produce unverified, fabricated, or decontextualized information adds an additional layer of uncertainty on top of old digital discussions that are already hard to find" (S001).

When users cannot access the original source and rely on AI-generated summaries, they lose the ability to verify accuracy. This creates an epistemological crisis: how to distinguish real history from plausible simulation?

- Hallucination: model-generated information that sounds plausible but is not supported by sources. It is dangerous because users cannot distinguish it from fact without access to the original context.

- Epistemological crisis: a situation where a knowledge system loses reliable criteria for distinguishing truth from fiction. In the context of the dead internet, this means the impossibility of verifying information through original sources.

Mechanisms of Causality: Why Correlation Between Content Disappearance and AI Growth Doesn't Mean Direct Causation

🧬 Distinguishing Direct and Indirect Causal Links

The correlation between AI growth and content disappearance masks three different mechanisms. First — direct substitution: algorithms actively promote synthetic content instead of human content. Second — parallel processes: organic content disappears for independent reasons (site closures, economic factors), and AI fills the voids.

Third — feedback loops: the presence of AI content changes economic incentives for creators, accelerating the disappearance of human content. Yildiz captures the result: "The internet has transformed into a stream dominated by superficial and repetitive artificial content" (S001), but this is the product of multiple interacting factors, not a single cause.

- Direct substitution: algorithmic promotion of AI displaces human content from feeds

- Parallel processes: organic content disappears independently; AI fills the resulting voids

- Feedback loops: AI content changes the economics of creation, making human content less profitable

🔁 Confounders: Economic and Technological Factors Independent of AI

Content disappearance began long before generative models. The economic unfeasibility of maintaining old sites, platform consolidation, technological obsolescence of formats — these are all independent processes that operated in parallel with AI implementation.

Internet infrastructure is also changing independently. Changes in IXPs (Internet Exchange Points) affect content accessibility unrelated to AI (S008). Attributing all responsibility to artificial intelligence oversimplifies a complex picture and obscures the real economic and technological causes.

| Disappearance Factor | Independent of AI | Amplified by AI |
|---|---|---|
| Site closures | ✓ (economics, bankruptcies) | — (AI doesn't close sites) |
| Dead links | ✓ (documented before 2020) | ✓ (AI accelerates content replacement) |
| Platform consolidation | ✓ (monopolization) | — (AI doesn't cause consolidation) |
| Replacement of reality with simulation | — (new phenomenon) | ✓ (AI-specific) |

🧷 Temporal Sequence: What Came First — The Problem or Its AI Amplification

The dead link problem was documented years before ChatGPT and diffusion models. Study S003 analyzes a phenomenon that developed in parallel with the internet. But Yildiz points to a qualitative leap: "As online discussions disappear and are replaced by artificial syntheses, what users perceive as 'digital memory' increasingly transforms into a simulative structure" (S001).

This isn't a continuation of the old problem of access loss. This is a transformation into a new quality: from content disappearance to its replacement with simulation that looks like the original.

Temporal sequence is critical here. If dead links appeared before AI, then AI cannot be their sole cause. But if AI qualitatively changed the nature of the problem — transforming loss into substitution — then this requires separate analysis of the mechanism, not just correlation.

Conflicts in the Evidence Base: Where Sources Diverge and What This Means for Reliability of Conclusions

🧩 Contradiction Between Wikipedia's Success as a Self-Organizing System and Its Inability to Solve the Dead Link Problem

(S006) describes Wikipedia as a "successful example of a self-managed team," where decentralized collaboration creates a coherent data structure. Simultaneously, (S003) documents the massive problem of "permanently dead" links that this system fails to resolve.

The contradiction reveals a limitation of the volunteer model: it scales for content creation but not for systematic monitoring of millions of external links. Success in editing doesn't guarantee success in maintenance.

- Decentralized coordination is effective for synchronous content (articles, edits)

- Asynchronous monitoring of external sources requires sustained resources and automation

- Volunteers are motivated by creation, not technical maintenance

- Result: content grows while infrastructure degrades in parallel

🔎 Uncertainty in Estimating the Scale of AI-Generated Content

(S001) claims that "the internet has transformed into a stream dominated by superficial and repetitive artificial content." However, precise quantitative estimates of AI content's share of the total internet are absent.

This creates three problems simultaneously: the actual scale of the phenomenon is unclear, it's impossible to separate legitimate concerns from panic, and verification of the dominance claim itself becomes difficult.

The absence of systematic measurements isn't just a data gap. It makes the theory resistant to falsification: any observation can be interpreted as confirmation, while lack of evidence becomes proof of the problem's hidden nature.
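A falsifiable measurement could look like this: label a random sample of pages as AI-generated or not, then report the share with an uncertainty interval instead of a bare claim of "dominance." The sketch uses the standard Wilson score interval for a binomial proportion. The function name and the sample numbers are hypothetical, and it assumes the labels themselves are accurate, which in practice depends on whatever detector or human review produced them.

```python
import math


def wilson_interval(flagged: int, sample_size: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the share of sampled items flagged as AI-generated."""
    if sample_size <= 0 or not 0 <= flagged <= sample_size:
        raise ValueError("need sample_size > 0 and 0 <= flagged <= sample_size")
    p = flagged / sample_size
    z2 = z * z
    denom = 1 + z2 / sample_size
    center = (p + z2 / (2 * sample_size)) / denom
    half_width = z * math.sqrt(p * (1 - p) / sample_size + z2 / (4 * sample_size**2)) / denom
    return center - half_width, center + half_width


# Hypothetical audit: 50 of 100 randomly sampled pages labeled as AI-generated.
low, high = wilson_interval(50, 100)
print(f"estimated AI share: 50% (95% CI {low:.1%} to {high:.1%})")
```

An interval of roughly 40% to 60% from 100 pages is a statement that further sampling can confirm or refute, unlike an unquantified claim that artificial content "dominates."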

🧱 Gap Between Technical Research and Sociocultural Analysis

Technical research (S003) measures HTTP status codes, error rates, degradation patterns. Sociocultural analysis (S001) discusses "digital memory," "simulative structures," "artificial past."

The two approaches use different methodologies and levels of abstraction. Technical data shows that links break; cultural analysis interprets this as erosion of collective memory. The connection between levels isn't obvious.

- Technical reality: measurable facts such as a URL returning 404, a deleted domain, or an unavailable server. Methodology: auditing, logging, statistics.

- Cultural interpretation: abstract concepts such as loss of memory, fragmentation of history, and replacement of reality with simulation. Methodology: hermeneutics, narrative analysis.

- The gap: technical facts don't automatically translate into cultural conclusions. Intermediate causal logic is needed, which is often skipped or remains implicit. Cognitive biases can amplify interpretation toward catastrophism.

For reliable conclusions, explicit justification is required: why does degradation of technical infrastructure mean erosion of memory specifically, rather than simply a technical problem solvable through backups and archives.

Cognitive Anatomy of the Theory: What Psychological Mechanisms Make the Dead Internet Narrative Convincing

⚠️ Exploitation of Availability Bias

People overestimate the probability of events that are easy to recall or imagine. When a user encounters several dead links in a row or obviously AI-generated content, these vivid examples become cognitively available and shape the impression of the problem's scale.

"The fact that generative models sometimes produce unverified, fabricated, or context-detached information adds an additional layer of uncertainty" (S001). This uncertainty amplifies anxiety, making the theory more convincing than objective data might justify.

Vivid examples capture attention not because they are representative, but because they are easily recalled. This distinction is critical for assessing the scale of any phenomenon.

🧩 Appeal to Nostalgia for the "Authentic" Internet of the Past

The theory resonates with the narrative of a "golden age" of the early internet—a time of more authentic content, meaningful discussions, and less aggressive commercialization. "Today, the internet is no longer a space that preserves the organic discussions of the past" (S001)—this statement activates nostalgic feelings.

Regardless of the accuracy of representations about the past, it forms a powerful psychological foundation for accepting the theory. Nostalgia works as an emotional anchor that facilitates acceptance of the narrative even with weak evidence.

🔁 Use of the "Hidden Threat" Pattern and Conspiratorial Thinking

The theory is structured as a revelation of a hidden process that most people don't notice. This is a classic pattern: "you're told X, but Y is actually happening." Yildiz formulates: "While real memory is being erased, its place is taken by simulated memory" (S001)—a process allegedly hidden from the average user.

This framing appeals to those who value "insider knowledge" and are skeptical of official narratives. The presence of conspiratorial structure doesn't automatically make a claim false, but it requires more rigorous verification of evidence.

| Cognitive Mechanism | How It Works in the Theory | Why It's Convincing |
|---|---|---|
| Availability | Vivid examples of dead links are easy to recall | Emotional intensity creates an illusion of scale |
| Nostalgia | Comparison with the "golden age" of the internet | Emotional resonance with personal experience |
| Hidden Pattern | Threat allegedly concealed by authorities/corporations | Activates distrust of official sources |

Understanding these mechanisms doesn't mean the theory is false. It means that the persuasiveness of the narrative and its correspondence to facts are different things. Cognitive biases work equally well for both true and false claims.

Similarly, conspiratorial thinking structures use the same psychological levers regardless of whether they describe real hidden processes or fictional ones. The distinction is verified only through evidence, not through psychological analysis of an idea's appeal.

Verification Protocol: Seven Questions That Will Dismantle Any Unfounded Dead Internet Claim in Two Minutes

Any dead internet claim passes through seven filters. If at least three fail — you're facing speculation, not analysis.

✅ Question One: Are Specific Quantitative Data Provided or Only Qualitative Impressions?

Claims must rely on measurable indicators: percentage of dead links, volume of AI content in the sample, temporal trends with dates. Impressions are not evidence.

If a source says "the internet is dying" but doesn't specify what percentage of content disappeared over what period — that's narrative, not research.
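A minimal sketch of what a quantitative claim looks like: the same fixed set of URLs is re-checked at dated audits, and the percentage of dead links is reported per audit date. The function name and the four-URL dataset are hypothetical; the `example.org` addresses are placeholders.

```python
from collections import defaultdict


def dead_share_by_audit(records: list[tuple[str, str, bool]]) -> dict[str, float]:
    """records: (audit_date, url, is_dead) tuples. Returns {audit_date: percent dead}."""
    totals: dict[str, int] = defaultdict(int)
    dead: dict[str, int] = defaultdict(int)
    for audit_date, _url, is_dead in records:
        totals[audit_date] += 1
        dead[audit_date] += int(is_dead)
    # ISO-8601 date strings sort chronologically.
    return {d: 100.0 * dead[d] / totals[d] for d in sorted(totals)}


# Hypothetical re-audit of the same four cited URLs on two dates.
audits = [
    ("2020-01-15", "https://a.example.org/post", False),
    ("2020-01-15", "https://b.example.org/news", False),
    ("2020-01-15", "https://c.example.org/blog", True),
    ("2020-01-15", "https://d.example.org/shop", False),
    ("2024-01-15", "https://a.example.org/post", False),
    ("2024-01-15", "https://b.example.org/news", True),
    ("2024-01-15", "https://c.example.org/blog", True),
    ("2024-01-15", "https://d.example.org/shop", True),
]
print(dead_share_by_audit(audits))
```

A statement such as "25% of these links were dead in January 2020 and 75% in January 2024" names the sample, the period, and the measure, so it can be checked.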

✅ Question Two: Is the Data Sample Controlled or Biased?

Check: did the authors analyze the entire internet or only social media? Only English-language content or multilingual? Only major platforms or including niche communities?

Biased sampling creates the illusion of a trend. Cognitive biases often masquerade as statistics.
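The effect of a skewed sample can be shown with post-stratification, a standard survey-weighting technique: compute the dead-link rate within each source type, then weight those rates by each type's share of the whole population. Every number below is hypothetical, including the assumed population shares, and the helper names are illustrative.

```python
from collections import Counter


def naive_rate(sample: list[tuple[str, bool]]) -> float:
    """Share of dead links in the sample, ignoring how the sample was drawn."""
    return sum(is_dead for _, is_dead in sample) / len(sample)


def stratified_rate(sample: list[tuple[str, bool]], population_share: dict[str, float]) -> float:
    """Reweight each stratum's dead-link rate by that stratum's share of the population."""
    counts = Counter(stratum for stratum, _ in sample)
    dead = Counter(stratum for stratum, is_dead in sample if is_dead)
    missing = [s for s in population_share if counts[s] == 0]
    if missing:
        raise ValueError(f"no sampled links for strata: {missing}")
    return sum(share * dead[s] / counts[s] for s, share in population_share.items())


# Hypothetical sample that over-represents personal blogs (80 of 100 links).
sample = ([("blog", True)] * 60 + [("blog", False)] * 20
          + [("institutional", True)] * 2 + [("institutional", False)] * 18)
# Hypothetical population: blogs are only 30% of cited links.
shares = {"blog": 0.3, "institutional": 0.7}

print(f"naive: {naive_rate(sample):.3f}, reweighted: {stratified_rate(sample, shares):.3f}")
```

Here the naive estimate (62% dead) is more than double the reweighted one (29.5%), purely because of who was sampled.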

✅ Question Three: Are Correlation and Causation Separated?

Growth in AI content coincides with disappearing links — but that doesn't mean AI is deleting them. Alternative causes are possible: search algorithm changes, closure of old sites, content migration to new platforms.

- Correlation: two phenomena occur simultaneously.

- Causation: one phenomenon directly causes another; establishing it requires a mechanism and the exclusion of alternatives.

✅ Question Four: Is There Contradictory Data, and How Is It Explained?

Good analysis doesn't hide counter-evidence — it examines it. If a source ignores facts that don't fit the narrative, that's a red flag.

Check: does the source mention studies (S001, S002) that show growth in content archiving and backup?

✅ Question Five: Who Funds or Promotes This Claim?

Interests can be: academic (publication), commercial (selling verification tools), ideological (fear of AI), social (attracting attention). Each interest distorts focus.

Dead internet theory is convenient for those selling solutions against AI content or stoking panic around technology.

✅ Question Six: Is the Claim Independently Verified or Only Cited?

If all sources reference each other and the original research is unavailable — you're in an echo chamber. Find the primary source and check its methodology.

As with conspiracy narratives, dead internet often spreads through chains of retellings without verification.

✅ Question Seven: Are Alternative Explanations Offered or Only One Scenario?

Complex phenomena rarely have a single cause. Content disappearance can result from: hosting closures, platform changes, removal by request, natural obsolescence, technical failures.

- Check whether the source considers all hypotheses

- Assess which has the most evidence

- Ensure alternatives are excluded, not simply ignored

If analysis passes all seven filters — you're facing not speculation, but a substantiated conclusion. If it fails at least three — it's narrative disguised as science.
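The scoring rule can be written down explicitly, which makes it harder to apply selectively. The question keys and the `verdict` function are hypothetical labels for the seven questions above. The text does not say how to classify one or two failures, so the middle category in this sketch is an assumption.

```python
QUESTIONS = (
    "quantitative_data",           # Q1: specific numbers, not impressions
    "controlled_sample",           # Q2: sample is not biased
    "causation_separated",         # Q3: correlation and causation distinguished
    "counter_evidence_addressed",  # Q4: contradictory data examined
    "interests_examined",          # Q5: funding and promotion accounted for
    "independently_verified",      # Q6: primary source checked, not just cited
    "alternatives_considered",     # Q7: competing explanations excluded, not ignored
)


def verdict(passed: dict[str, bool]) -> str:
    """Apply the seven-filter rule to a claim's pass/fail answers."""
    missing = [q for q in QUESTIONS if q not in passed]
    if missing:
        raise ValueError(f"unanswered questions: {missing}")
    failures = sum(1 for q in QUESTIONS if not passed[q])
    if failures == 0:
        return "substantiated conclusion"
    if failures >= 3:
        return "narrative disguised as science"
    return "needs closer scrutiny"  # assumption: 1-2 failures are not covered by the protocol
```

Requiring an answer for every question prevents a claim from passing simply because an inconvenient filter was never applied.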