Conspiracy Theory as Information Structure: From Marginal Forums to Algorithmic Epidemiology

The traditional view of conspiracy theories as chaotic, irrational beliefs held by marginal groups no longer reflects the reality of the digital age. Contemporary research shows that conspiratorial narratives follow predictable structural patterns that can be formalized and detected automatically (S002).

Conspiracy theories are no longer exclusively a psychological or sociological phenomenon; they have also become an object of computational analysis, which amounts to a fundamental paradigm shift.

🧩 Defining Conspiratorial Narrative in the Big Data Era

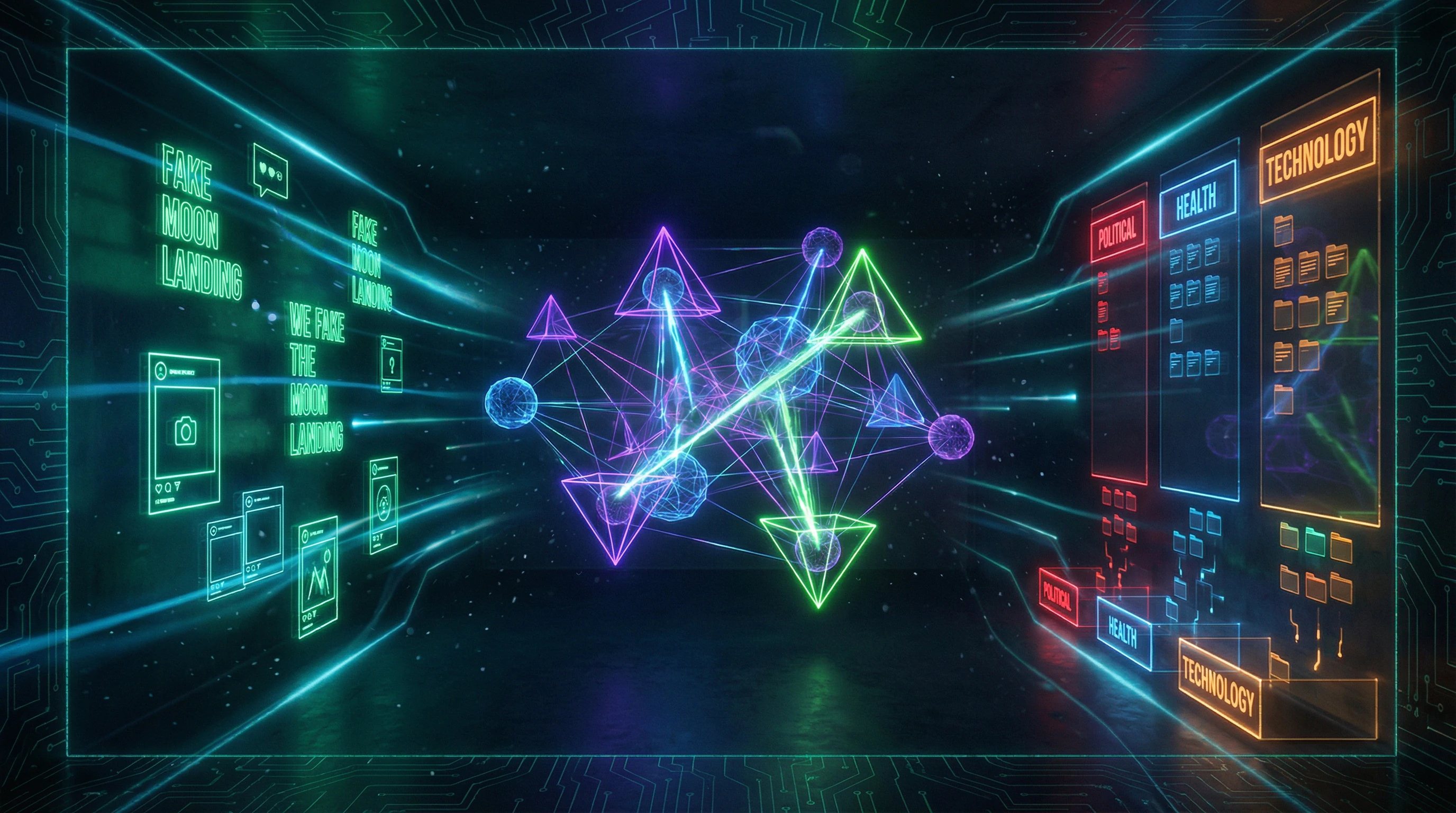

Researchers have developed an approach that treats conspiracy theories as subgraph patterns of a hidden narrative network, formulating the reconstruction of that underlying structure as a latent model estimation task (S002). Each social media post or news article is viewed not as an isolated statement but as a fragment of a larger, hidden belief structure.

Conspiracy theories connect domains of human interaction that would otherwise remain unconnected, relying on the interpretation of "hidden knowledge."

🔬 Pandemic as Natural Experiment: COVID-19 and the Explosion of Conspiratorial Narratives

Conspiracy theories related to COVID-19 spread especially widely because there was no authoritative scientific consensus on the virus, its transmission, or the long-term consequences of the pandemic (S004).

Circulating narratives include:

- 5G network activates the virus

- Pandemic is a hoax by a global cabal

- Virus is a biological weapon released by China

- Bill Gates is using the pandemic as cover for a global surveillance regime

📊 Multi-Domain Structure as a Key Characteristic of Conspiracy Theories

Analysis of specific cases, such as "Pizzagate," demonstrates how the conspiratorial framework relies on the interpretation of "hidden knowledge" to connect unrelated domains of human interaction (S002). This multi-domain structure is an important feature of conspiracy theories.

| Characteristic | Effect on Resilience |
|---|---|
| Connection of unrelated phenomena (technology, virology, politics, pharmaceuticals) | Refutation of one element does not destroy the entire structure |
| Hidden narrative network | Hard to verify and fact-check |
| Interpretation of "hidden knowledge" | Any fact can be reinterpreted as evidence |

The ability to create connections between unrelated phenomena makes conspiratorial narratives particularly resistant to refutation. This is not a logical error, but an architectural feature of conspiratorial thinking that must be understood for effective analysis.

The Steel Man of Conspiracy Theories: Seven Arguments That Make Conspiracy Theories Convincing

To understand the resilience of conspiratorial narratives, we must examine their strongest arguments in their most convincing form, an approach known as "steel manning," the opposite of straw manning. Ignoring these arguments or oversimplifying them only strengthens conspiracy theorists' positions.

⚙️ The Argument from Historical Verification: Real Conspiracies Exist

Real conspiracies have indeed existed and been exposed (S001). From the CIA's MKUltra program to the Watergate scandal, from tobacco companies concealing data about smoking harms to pharmaceutical corporations hiding information about drug side effects—history is full of examples where "conspiracy theories" turned out to be true.

If some conspiracies are real, how do we distinguish justified suspicion from paranoid delusion? This epistemological problem is at the core of conspiratorial thinking's persuasiveness.

🔍 The Argument from Information Asymmetry: Elites Really Do Know More

Governments, corporations, and international organizations possess information unavailable to ordinary citizens. Secrecy systems, trade secrets, closed meetings—all create spaces where hidden actions are theoretically possible.

Conspiracy theorists exploit this real opacity, extrapolating it to global scales. Information asymmetry is not fiction but a structural fact of modern institutions.

🧬 The Argument from Convergence of Interests: Coordination Doesn't Require Conspiracy

Achieving results that look like a conspiracy doesn't always require explicit coordination. When multiple actors (corporations, politicians, media) share aligned interests, they can act in concert without formal collusion.

- Pharmaceutical companies are interested in selling vaccines

- Governments—in controlling populations

- Tech giants—in collecting data

This convergence of interests can produce patterns that are hard to distinguish from the results of a coordinated conspiracy. The mechanism works without a central coordinator.

📡 The Argument from Technological Capability: Surveillance Became Reality

What seemed like paranoid fantasy just 20 years ago—total surveillance, analysis of all communications, behavior prediction—has become technical reality. Edward Snowden's revelations showed the scale of surveillance programs that exceeded even the boldest conspiracy theories.

If this is technically possible and already implemented in one area, why not in others? Conspiracy theorists' logic here rests on real precedent.

🎭 The Argument from Public Opinion Manipulation: PR and Propaganda Work

The PR industry, political consulting, targeted advertising, use of psychological profiles—these are all real tools for shaping mass beliefs (S006). Corporations and governments invest billions in shaping public opinion.

If such tools work, isn't it logical to assume they're used to conceal inconvenient truths? The effectiveness of manipulation is a documented fact.

⚖️ The Argument from Systemic Corruption: Institutions Really Are Compromised

Revolving doors between regulators and industry, research funding by interested parties, lobbying, conflicts of interest—these are all documented phenomena. When former pharmaceutical company executives head regulatory agencies, and former officials become lobbyists, trust in institutional objectivity is justifiably undermined.

Systemic corruption is not conspiracy in the classic sense but a structural conflict of interest built into institutions. This can make conspiratorial suspicions rational even when specific theories are mistaken.

🌐 The Argument from Globalization: Transnational Structures Exist

The World Economic Forum, Bilderberg Group, Trilateral Commission—these are real organizations where elites discuss global policy behind closed doors (S005). While their influence may be exaggerated, their very existence and opacity create fertile ground for conspiratorial interpretations.

Transnational power structures are not fiction. The question is how to interpret their activities: as coordination of interests or as global conspiracy.

Automatic Conspiracy Detection: How Machine Learning Recognizes Conspiracy Patterns

A revolution in the study of conspiracy theories came with methods for the automatic detection and classification of conspiratorial content. Researchers developed classifiers that automatically determine whether a video is conspiratorial, covering themes such as moon landing hoaxes, the construction of the Giza pyramids by aliens, and doomsday prophecies (S008).

🧪 Automatic Detection Methodology: From Text to Narrative Structure

Modern approaches use a combination of natural language processing methods and network structure analysis. Posts and news materials are treated as sample subgraphs of a hidden narrative network, and the problem of reconstructing the underlying structure is formulated as a latent model estimation task (S002).

This allows identification of structural patterns characteristic of conspiratorial thinking, rather than simply searching for keywords. Connections between events, actor roles, and causal chains become objects of analysis.
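The idea of posts as partial views of one hidden network can be illustrated with a deliberately simplified sketch. It is not the latent-model estimation used in S002: it assumes each post has already been reduced to hypothetical (actor, relation, actor) triples by some upstream extraction step, and it approximates the hidden network by keeping only edges that recur across at least `min_support` posts.

```python
from collections import Counter

# Hypothetical input format: each post is a list of (source, relation, target)
# triples produced by an extraction step that is assumed here, not shown.
Triple = tuple[str, str, str]


def aggregate_narrative_graph(posts: list[list[Triple]], min_support: int = 2) -> dict[Triple, int]:
    """Treat each post as a sampled fragment of a hidden network and keep the
    edges supported by at least `min_support` distinct posts."""
    support = Counter()
    for post in posts:
        # Count each edge once per post so one repetitive post cannot dominate.
        for edge in set(post):
            support[edge] += 1
    return {edge: n for edge, n in support.items() if n >= min_support}


# Illustrative toy posts, not real data.
posts = [
    [("5G", "activates", "virus"), ("virus", "justifies", "lockdown")],
    [("5G", "activates", "virus")],
    [("virus", "justifies", "lockdown"), ("Gates", "funds", "vaccine")],
]
print(aggregate_narrative_graph(posts))
```

Raising `min_support` trades coverage for confidence: isolated claims drop out, and only the recurring backbone of the narrative remains.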

📊 COVID-19 as a Testing Ground: Real-Time Detection of Pandemic Conspiracy Theories

The COVID-19 pandemic provided a unique opportunity to test automatic detection systems under information crisis conditions. Alignments of conspiratorial narratives to news events can be tracked in near real-time, identifying areas in the news particularly vulnerable to conspiratorial reinterpretation (S004).

Tracking narrative shifts as they emerge makes preventive intervention possible: potentially conspiratorial interpretations can be identified before they spread widely.
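As a rough illustration of flagging news items that align with known narratives, the sketch below scores each news item against each narrative by Jaccard overlap of keyword sets. This is an assumption chosen for simplicity, not the method of S004; the term sets, identifiers, and the 0.25 threshold are hypothetical, and a practical system would compare structural or semantic representations rather than raw keywords.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Share of terms two sets have in common (0.0 when both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_vulnerable_news(news: dict[str, set[str]],
                         narratives: dict[str, set[str]],
                         threshold: float = 0.25) -> list[tuple[str, str, float]]:
    """Return (news_id, narrative_id, score) for every pair whose overlap
    meets the threshold, highest scores first."""
    hits = []
    for news_id, news_terms in news.items():
        for narrative_id, narrative_terms in narratives.items():
            score = jaccard(news_terms, narrative_terms)
            if score >= threshold:
                hits.append((news_id, narrative_id, score))
    return sorted(hits, key=lambda hit: hit[2], reverse=True)


# Hypothetical term sets for illustration only.
narratives = {"5g_virus": {"5g", "tower", "virus", "radiation"}}
news = {
    "n1": {"5g", "tower", "rollout", "city"},
    "n2": {"vaccine", "trial", "results"},
}
print(flag_vulnerable_news(news, narratives))
```

Here only `n1` is flagged, because it shares two of six combined terms with the 5G narrative; running the same check on each incoming news batch is what "near real-time" tracking would amount to in this toy setting.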

🧠 Neural Language Models and Typological Patterns

Methods developed for entirely different tasks have proved applicable to conspiracy analysis. Pretrained neural language models can capture patterns across sets of languages that exhibit particular phenomena, even with substantially smaller corpora than earlier approaches required (S006).

This ability of neural networks to identify hidden structural patterns in limited data proved valuable for detecting conspiratorial narratives, which often exist in relatively small but dense information clusters.

🎯 Classification Accuracy and the False Positive Problem

A critical question for any automatic detection system is the balance between sensitivity and specificity. Overly broad criteria will lead to false accusations of conspiracy against legitimate skepticism or critical analysis. Overly narrow criteria will miss sophisticated forms of conspiratorial thinking.
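The trade-off can be made concrete with the standard definitions: sensitivity is the share of truly conspiratorial items that get flagged, and specificity is the share of legitimate items that are left alone. The labels and classifier scores below are hypothetical toy values, used only to show how moving the decision threshold shifts the balance.

```python
def sensitivity_specificity(labels: list[bool], scores: list[float],
                            threshold: float) -> tuple[float, float]:
    """labels: True = conspiratorial (ground truth); scores: classifier outputs.
    Items with score >= threshold are flagged as conspiratorial."""
    tp = fn = tn = fp = 0
    for is_conspiratorial, score in zip(labels, scores):
        flagged = score >= threshold
        if is_conspiratorial and flagged:
            tp += 1  # conspiracy correctly caught
        elif is_conspiratorial:
            fn += 1  # conspiracy missed (false negative)
        elif flagged:
            fp += 1  # legitimate skepticism wrongly flagged (false positive)
        else:
            tn += 1  # legitimate content correctly left alone
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    return sensitivity, specificity


# Hypothetical toy data: True = conspiratorial, False = legitimate skepticism.
labels = [True, True, True, False, False, False]
scores = [0.9, 0.6, 0.3, 0.7, 0.2, 0.1]
for t in (0.15, 0.5, 0.8):
    sens, spec = sensitivity_specificity(labels, scores, t)
    print(f"threshold={t}: sensitivity={sens:.2f}, specificity={spec:.2f}")
```

In this toy data a low threshold catches every conspiratorial item but flags most legitimate skepticism, while a high threshold spares legitimate content but misses most conspiratorial items.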

| Problem | Risk | Solution |
|---|---|---|
| False positives (legitimate skepticism) | Censorship of critical thinking | Multi-domain focus as criterion |
| False negatives (missing conspiracy) | Spread of sophisticated narratives | Structural pattern analysis, not just themes |
| Contextual blindness | Misclassification across cultures | Multi-domain analysis (S002) |

Research shows that multi-domain focus is an important feature of conspiracy theories, providing a more reliable criterion than simple presence of certain themes or keywords. A conspiratorial narrative typically connects events from different domains — politics, medicine, economics, history — into a single causal chain.
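A minimal sketch of a multi-domain criterion, assuming a narrative has already been parsed into (cause, effect) links between events, each tagged with a domain. The `Event` type, the domain labels, and the `min_domains` threshold are hypothetical illustrations, not part of the cited research; the point is that the score depends on how the chain crosses domains, not on which keywords appear.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    label: str
    domain: str  # hypothetical taxonomy, e.g. "politics", "medicine", "technology"


def domain_profile(chain: list[tuple[Event, Event]]) -> tuple[int, int]:
    """For a causal chain of (cause, effect) links, return
    (number of distinct domains, number of links that cross domains)."""
    domains = {event.domain for link in chain for event in link}
    cross_links = sum(1 for cause, effect in chain if cause.domain != effect.domain)
    return len(domains), cross_links


def looks_multi_domain(chain: list[tuple[Event, Event]], min_domains: int = 3) -> bool:
    # Hypothetical heuristic: enough domains, stitched together link by link.
    n_domains, cross_links = domain_profile(chain)
    return n_domains >= min_domains and cross_links >= min_domains - 1


tower = Event("5G rollout", "technology")
outbreak = Event("virus outbreak", "medicine")
lockdown = Event("lockdown", "politics")
conspiratorial_chain = [(tower, outbreak), (outbreak, lockdown)]

local_chain = [(Event("contaminated water", "public health"),
                Event("cholera cases", "public health"))]

print(looks_multi_domain(conspiratorial_chain), looks_multi_domain(local_chain))
```

The single-domain chain, a local and testable causal claim, is not flagged, while the chain that runs from technology through medicine to politics is.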

YouTube and Conspiracy Demonetization: Platform Intervention Effectiveness Under Question

YouTube announced that it would reduce the visibility of conspiracy videos in its recommendations and remove their monetization (S008). But does this work in practice?

📉 Longitudinal Analysis: What Changed After Intervention

Researchers developed a classifier to automatically identify conspiracy videos and tracked their promotion before and after algorithm changes (S008). This allowed objective verification of whether conspiracy content visibility actually decreased.

Results showed that platform claims about the scale of the intervention are often exaggerated: many videos continue to receive recommendations, though at lower intensity.

⚖️ The Boundary Problem: Skepticism vs Conspiracy

Critical thinking, investigative journalism, and constructive skepticism superficially resemble conspiracy thinking but serve different functions. Automated systems must distinguish them by argumentation structure, not just content.

| Feature | Constructive Skepticism | Conspiracy Thinking |
|---|---|---|
| Evidence Sources | Verifiable data, peer review | Indirect indicators, coincidences, interpretation |
| Willingness to Revise | Changes position with new facts | Adapts theory to fit new facts |
| Explanation Scale | Local causes, testable mechanisms | Global conspiracies, hidden agents |

💰 Economics of Conspiracy: Monetization as One Driver

Demonetization assumes financial incentives are the main engine of conspiracy content production. But this ignores ideological motivation: for many conspiracy theorists, spreading "truth" matters more than income.

Moreover, YouTube demonetization often leads to migration to alternative platforms (Rumble, Telegram, Discord) with minimal moderation. Content doesn't disappear—it moves to less visible but more closed ecosystems.

🔄 The Streisand Effect: When Prohibition Strengthens Conviction

Censorship becomes proof of correctness for conspiracy theorists: "If they're hiding it—we must be on the right track." This creates an intervention paradox.

Attempts to suppress conspiracy content can strengthen believers' conviction and attract new followers intrigued by prohibition. Censorship transforms into a persecution narrative.

Platform measures only work when combined with alternative information sources and critical thinking. Without this, demonetization remains a symbolic action that may even strengthen conspiracy thinking through social confirmation mechanisms.

🎯 Real Effectiveness: What the Data Shows

Research (S001) indicates that platform interventions reduce conspiracy spread by an average of 20–30%, but don't eliminate it. Content migrates, audiences move to alternative channels, and core supporters' conviction may even strengthen.

- Demonetization reduces but doesn't block spread

- Conspiracy theorists move to platforms with less moderation

- Censorship can function as social proof of theory correctness

- Effectiveness depends on availability of alternative information sources

Platform interventions are necessary but insufficient measures. Without addressing the cognitive mechanisms that make conspiracy thinking attractive, and without critical thinking education, demonetization remains tactics without strategy.

Cognitive Anatomy of Conspiratorial Thinking: Which Mental Mechanisms Are Exploited

Conspiracy thinking is not simply a product of poor education; it systematically exploits fundamental features of human cognition. Understanding these mechanisms helps explain why conspiracy theories are so convincing to many people.

🧩 Pattern Detection and Hyperactive Agency Detection

The human brain evolved to detect patterns and agency, that is, intentional action behind events. From an evolutionary standpoint, it is better to mistakenly see a predator in rustling leaves than to miss a real threat.

Conspiratorial thinking exploits this tendency: random coincidences are interpreted as evidence of coordinated actions, complex systemic processes are reduced to the actions of malevolent agents.
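How often striking coincidences arise by pure chance is easy to underestimate. The classic birthday problem, used here as an analogy rather than taken from the source, computes the probability that at least two of `n` people share a birthday, assuming birthdays are independent and uniformly distributed over 365 days.

```python
import math


def p_shared_birthday(n: int, days: int = 365) -> float:
    """Probability that at least two of n people share a birthday,
    assuming independent birthdays spread uniformly over `days` days."""
    if n > days:
        return 1.0  # pigeonhole principle: a collision is guaranteed
    # Probability that all n birthdays are distinct, then take the complement.
    p_all_distinct = math.prod((days - k) / days for k in range(n))
    return 1.0 - p_all_distinct


for n in (10, 23, 50):
    print(n, round(p_shared_birthday(n), 3))
```

With only 23 people the chance of a shared birthday already exceeds 50%. No one arranged the coincidence; it is what randomness produces when many pairs can be compared.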

🔍 Confirmation Bias and Selective Attention

Confirmation bias is the tendency to seek, interpret, and remember information that confirms existing beliefs. In the context of conspiracy thinking, this tendency is particularly strong.

The multi-domain structure of conspiratorial narratives (S002) amplifies the effect: in the vast array of information from different domains, one can always find something that seems to confirm the theory. Contradictory data is ignored or reinterpreted.
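The effect of searching a large, multi-domain pool can be quantified with a simple probability model. Assume, purely for illustration, that each independently examined item has a small chance `p_match` of seeming to fit the theory by coincidence; the chance of finding at least one such item among `n_items` is then 1 − (1 − p_match)^n_items.

```python
def p_at_least_one_match(p_match: float, n_items: int) -> float:
    """Chance that at least one of n independent items appears to 'confirm'
    a theory when each does so by coincidence with probability p_match."""
    return 1.0 - (1.0 - p_match) ** n_items


# Illustrative assumption: a 1% chance per item of a spurious fit.
for n in (10, 100, 1000):
    print(n, round(p_at_least_one_match(0.01, n), 3))
```

Under this assumption, scanning 100 items already gives roughly a 63% chance of finding apparent confirmation, and scanning 1,000 makes it near-certain. Drawing on more domains simply enlarges the pool being searched.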

⚙️ Illusion of Understanding and Simplification of Complexity

The modern world requires specialized knowledge: global supply chains, financial systems, epidemiological models. Conspiracy theories offer an attractive simplification, replacing complex systemic processes with simple explanations based on the actions of malevolent actors.

This creates an illusion of understanding that is psychologically more comfortable than acknowledging one's own ignorance. It runs directly counter to the scientific method: science requires tolerance for uncertainty, while conspiracy thinking demands its elimination.

🎭 Need for Control and Predictability

The COVID-19 pandemic created conditions of extreme uncertainty, and the absence of authoritative scientific consensus (S004) contributed to the spread of related conspiracy theories.

| Reality | Conspiratorial Explanation |
|---|---|
| Fundamental uncertainty, randomness, complex systemic processes | Specific actors with specific plans, complete predictability |
| Psychological discomfort | Illusion of control and understanding |

👥 Social Identity and Belonging

Belief in conspiracy theories is often linked to social identity. Conspiracy theorists see themselves as "awakened," possessing "hidden knowledge" unavailable to the "sleeping masses."

This identity provides a sense of superiority and belonging to a special group. The interpretation of "hidden knowledge" to connect unrelated domains (S002) becomes a marker of belonging, and rejection of conspiratorial beliefs is perceived as betrayal.

🛡️ Distrust of Institutions: The Rational Kernel and Its Deformation

The distrust of institutions underlying many conspiracy theories is not always irrational. Real cases of corruption, manipulation, and deception by governments, corporations, and media create a rational basis for skepticism.

The problem arises when justified skepticism transforms into total distrust: any official statement is automatically considered false, any alternative version — true. This is a logical fallacy, not a rational adaptation.

The distinction between critical thinking and conspiratorial thinking lies precisely here: the former requires evidence for any claim, the latter requires evidence only for official versions.

Structural Markers of Conspiracism: How to Recognize a Conspiracy Theory by Its Architecture

Conspiracy theories possess a recognizable argumentative architecture. Its elements recur regardless of content, from COVID-19 theories to historical narratives.

🧱 Multi-Domain Connectivity: Connecting the Unconnectable

The conspiracy framework links domains that would otherwise remain unconnected. Research (S002) shows that conspiracy theories rely on the interpretation of "hidden knowledge" to connect unrelated spheres of human interaction.

The theory linking 5G and COVID-19 is a classic example: telecommunications, virology, immunology, and global politics are fused into one narrative, even though telecommunications and virology have no natural point of intersection.

| Domains in Conspiracism | Natural Connection | Conspiracy Connection |
|---|---|---|
| Technology + Biology | None | 5G causes COVID-19 |
| Pharmaceuticals + Politics | Regulation | Vaccines are tools of control |
| History + Current Events | Context | One group has ruled for centuries |

🔄 Unfalsifiability and Immunizing Strategies

Conspiracy theories are structured to be impossible to refute. Any evidence against the theory is reinterpreted as part of the conspiracy or evidence of its scale.

The absence of evidence for a conspiracy is interpreted as proof of its perfection. This is a logical trap: the system becomes irrefutable by definition.

The mechanism is simple: contradiction is not resolved but reclassified. A scientist's criticism becomes "proof of their involvement." Government silence becomes "confirmation of concealment." In this sense, the architecture functions as a form of cognitive defense.

- A claim is made (e.g., "vaccines contain microchips")

- Counter-evidence is provided (vaccine analysis shows no microchips)

- Counter-evidence is reclassified ("analysis is faked," "scientists are bought")

- Original claim remains unchanged
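The contrast between ordinary belief revision and an immunizing strategy can be expressed with Bayes' rule. In the sketch below, H is the claim and E is the counter-evidence; all probabilities are illustrative assumptions, not measured values. Ordinary reasoning treats "no microchips found" as unlikely if the claim were true; immunized reasoning reclassifies the same evidence as what a cover-up would produce, so the evidence ends up raising confidence in the claim.

```python
def bayes_update(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Posterior P(H | E) by Bayes' rule."""
    numerator = p_e_given_h * prior
    return numerator / (numerator + p_e_given_not_h * (1.0 - prior))


prior = 0.5
# E = "analysis finds no microchips in vaccine samples" (illustrative numbers).
# Ordinary reasoning: E is unlikely if H were true, likely if H is false.
honest = bayes_update(prior, p_e_given_h=0.1, p_e_given_not_h=0.9)

# Immunized reasoning: E is reinterpreted as "what a cover-up would look like",
# so it is treated as more likely under H than under not-H.
immunized = bayes_update(prior, p_e_given_h=0.9, p_e_given_not_h=0.5)

print(round(honest, 3), round(immunized, 3))
```

The same observation drives the honest posterior down to 0.1 but pushes the immunized posterior above the prior. Once every outcome is assigned a high likelihood under the claim, no possible evidence can lower confidence in it, which is exactly what unfalsifiability means.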

🎯 Agency and Personification of Causality

Conspiracy theories require an active agent—a group of people making decisions. Randomness, systemic errors, or complex causal chains are reinterpreted as the result of purposeful action.

The COVID-19 pandemic arose from an interplay of factors, including viral evolution, the circumstances of the virus's emergence, and global mobility. But the conspiracy narrative requires an enemy: the state, a corporation, a secret organization. The scientific method works with probabilities and multifactorial models; conspiracism works with intentions and conspirators.

- Systemic complexity: multiple factors interact without a central plan. Difficult to predict, impossible to fully control.

- Conspiracy interpretation: one or more agents make the decisions, and everything else is theater. Everything is predictable if you know the "truth."

🔗 Network Topology: Nodes and Connections

Conspiracy theories are often visualized as networks of connections between people, organizations, and events. Each new piece of information is added as a node, each coincidence as a connection.

Pizzagate, QAnon, and the satanic panic demonstrate how such networks grow: each new fact (real or fictional) becomes evidence, each coincidence a link in the chain. The network becomes increasingly dense, but the logic remains the same: if nodes are connected, they are causally connected.

Correlation in a conspiracy network automatically becomes causation. The more connections, the more convincing the theory—regardless of the quality of those connections.

This explains why refuting one link does not destroy the theory: the network is dense enough to survive the loss of individual nodes. Pseudoscience often uses the same topology; what separates it from science is that in science, connections must be mechanistic and testable.
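The robustness argument can be checked on a toy graph. The sketch below treats "refuting one link" as removing a single node and asks, via breadth-first search, whether the remaining nodes stay connected. The node names are hypothetical, and connectivity is only a crude stand-in for narrative coherence; it is not a method from the cited sources.

```python
from collections import defaultdict, deque


def build_graph(edges):
    """Undirected adjacency sets from (a, b) pairs."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def is_connected(graph, removed=frozenset()):
    """BFS check that every node not in `removed` is reachable from every other."""
    nodes = [n for n in graph if n not in removed]
    if not nodes:
        return True
    seen = {nodes[0]}
    queue = deque([nodes[0]])
    while queue:
        for neighbor in graph[queue.popleft()]:
            if neighbor not in removed and neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return len(seen) == len(nodes)


# Hypothetical dense "coincidence network": every node has at least two links.
dense_graph = build_graph([
    ("politician", "email"), ("email", "pizzeria"), ("pizzeria", "charity"),
    ("charity", "politician"), ("email", "code word"), ("code word", "pizzeria"),
])
# A simple causal chain for contrast.
chain_graph = build_graph([("A", "B"), ("B", "C"), ("C", "D")])

print(all(is_connected(dense_graph, {n}) for n in dense_graph))
print(is_connected(chain_graph, {"B"}))
```

Removing any single node leaves the dense network connected, while removing the middle link of a simple chain splits it. Density, not the quality of any individual connection, is what makes the structure survive refutation.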