🧠 Mind Control

Psychological Control Mechanisms in High-Control Groups

Scientific analysis of manipulation tactics, social control, and recovery after involvement in cults and destructive organizations

Overview

Cults exploit fundamental needs (belonging, purpose, identity) through systematic social control techniques. Destructive groups don't target the "weak" but people in situations of vulnerability: sophisticated manipulative tactics work regardless of intelligence or education. Recovery requires specialized support and identity reconstruction after the experience of psychological control.

Laplace Protocol: This section is based on peer-reviewed academic research from 2022-2023, including qualitative interviews with former cult members, systematic literature reviews, and theoretical analysis of control mechanisms. We use a trauma-informed approach and person-centered language, avoiding stigmatization of manipulation victims.

Subsections

Coaching Cults

Investigation of the coaching program phenomenon with cult-like characteristics: financial pyramids, psychological manipulation, and exploitation disguised as self-development

Mind Control

Systematic application of psychological techniques to influence thoughts, beliefs, and behavior without informed consent — from historical experiments to modern manipulation methods.

Deep Dive

High-Control Groups: Where the Line Between Ideology and Exploitation Lies

The academic definition of a cult or high-control group includes four critical components: authoritarian leadership structure, systematic psychological manipulation, isolation from external influences, and exploitation of members—psychological, financial, or physical.

These organizations are built around an ideological or religious core, but their defining characteristic is not the content of beliefs, but the methods of control over followers. Cult dynamics can manifest in secular contexts—political movements, therapeutic communities, business organizations—with the same intensity as in religious groups.

Religious vs Ideological Cults: Common Mechanics in Different Shells

Cults can be built on political ideologies, self-help programs, business models, and other secular frameworks. A religious shell is not a necessary condition.

The common denominator is not theology, but power structure: a charismatic leader claiming exclusive access to truth uses this position for total control over followers' lives.

Religious cults exploit the need for spiritual meaning and fear of afterlife punishment. Ideological ones use political identity, promises of personal transformation, or financial success.

- Religious groups: manipulate concepts of sin, salvation, and cosmic justice

- Secular cults: rely on social identity, revolutionary narratives, or pseudoscientific promises

Control tactics—information isolation, dependency induction, punishment for dissent—remain structurally identical regardless of ideological packaging. Former members of religious and secular cults describe strikingly similar experiences of psychological pressure and post-traumatic symptoms.

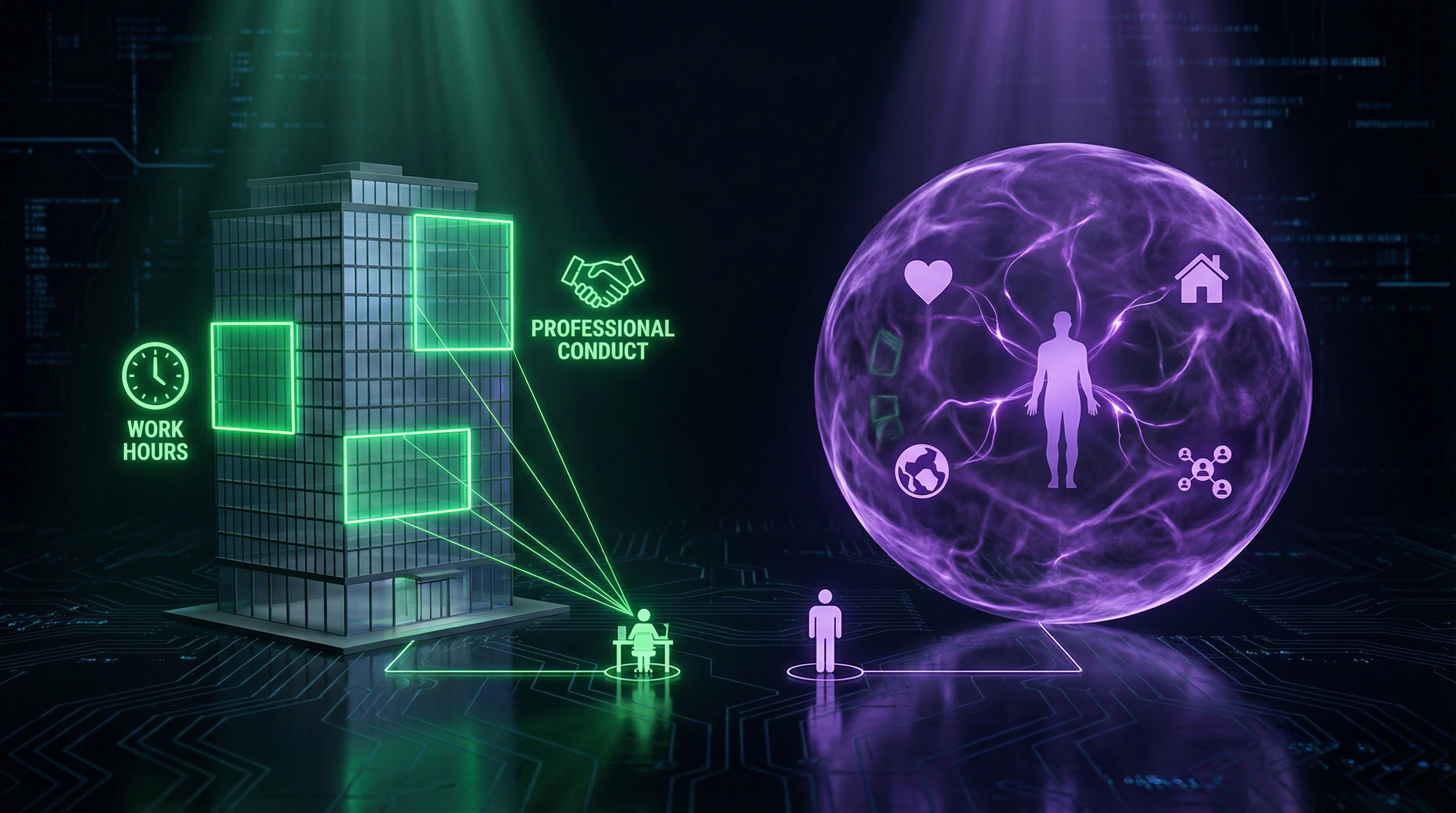

Criteria for Distinguishing from Legitimate Organizations with Strong Culture

Both legitimate organizations and cults use mechanisms of social control, such as normative pressure, rituals, and identity symbols, but they diverge radically on three parameters.

| Parameter | Legitimate Organization | Cult |
|---|---|---|
| Intent | Productivity | Exploitation |
| Scope of Control | Work Processes | Total Life Control |
| Exit Consequences | Career Change | Severe Punishment, Ostracism |

A legitimate organization does not claim control over personal relationships, worldview, or access to information outside the work context. Red flags of cultic control include:

- Inability to criticize the leader without risk of ostracism

- Requirement to sever ties with the outside world as a condition of membership

- Use of doctrine to justify exploitation (financial, labor, sexual)

A corporation may demand brand loyalty and intense work, but does not isolate employees from family, does not control their sexual life, and does not punish departure by expulsion from all spheres of social life.

When an organization begins to define a member's total identity and monopolizes their social connections, it crosses the boundary into cult territory regardless of formal legitimacy.

Psychological Mechanisms of Manipulation: Anatomy of Mind Control

Jenkins in "Cults, Coercion, and Control" critiques the oversimplified narrative of "brainwashing" as a single irresistible process. Cult influence is a complex interaction of psychological, social, and situational factors, not magical intervention.

Manipulation in high-control groups systematically applies well-known principles of social psychology: cognitive dissonance, gradual commitment, social proof. Effectiveness depends not on the "power" of techniques, but on creating conditions where critical thinking is suppressed through fatigue, emotional pressure, and isolation.

Tactics of Leaders with Cluster-B Personality Traits

Henderson (2023) identifies a correlation between cluster-B personality disorder traits in cult leaders and specific manipulative patterns. Narcissistic leaders demand unconditional admiration and interpret criticism as betrayal, creating an atmosphere of constant loyalty testing.

Antisocial traits manifest in exploitation without empathy, while borderline patterns generate unpredictable cycles of idealization and devaluation, keeping members in a state of anxious uncertainty.

The combination of these traits creates toxic dynamics: the leader is simultaneously charismatic, grandiose, ruthless in exploitation, and emotionally unstable. Followers become trapped in traumatic bonding, where periods of "love bombing" alternate with punishment. This is intermittent reinforcement, among the most powerful mechanisms for creating dependency.

This dynamic explains why intelligent and educated people can remain in clearly destructive groups for years.

Information and Behavioral Control: Architecture of Isolation

Systematic information control creates epistemological dependency: members lose the ability to verify the leader's claims through independent sources. Techniques include demonization of external media, internet access restrictions, and mandatory reporting of contacts with the outside world.

Behavioral control complements information control: rigid schedules, mandatory participation in group activities, and control of sleep and diet produce physical exhaustion that reduces critical faculties.

| Control Mechanism | Tool | Result |
|---|---|---|
| Information | Media demonization, access restrictions, contact reporting | Epistemological dependency |
| Behavioral | Schedules, group activities, sleep/diet control | Physical exhaustion, reduced critical thinking |
| Linguistic | Loaded language, concept redefinition | Cognitive control, criticism blocking |

Particularly effective is the "loaded language" tactic—creating specialized jargon that redefines ordinary words according to the group's doctrine. This is a tool of cognitive control: when basic concepts ("freedom," "love," "truth") acquire cult-specific meanings, the group member loses the language to formulate criticism.

Returning to ordinary language becomes an act of betrayal, while maintaining cult language sustains the altered reality even when physically absent from the group.

Phobia Induction and Identity Reformation

Phobia induction—systematic implantation of irrational fears about the consequences of leaving—creates a psychological prison without physical walls. Cults program specific fears: members believe that those who leave will become ill, go insane, lose salvation, or bring curses upon their families.

These phobias are not rational, but emotionally real, creating panic reactions at thoughts of exit. Even intellectual understanding of the absurdity of threats doesn't block the emotional fear response.

- Identity reformation: replacement of the former personality with a cult-constructed identity through "rebirth" rituals, assignment of new names, rewriting of personal history. The former "self" is declared sinful, sick, or ignorant.

- Existential dependency: leaving the group means losing one's very identity, experienced as psychological death. Former members describe prolonged "liminal states" where they don't know who they are outside the cult identity.

This process creates the deepest level of control, explaining why exiting a cult requires not simply changing beliefs, but complete reconstruction of personality.

Recruitment Patterns: Why Normal People Fall Into the Trap

Similarities to Terrorist Organization Methods

Cults and terrorist organizations use closely similar recruitment architecture: they target the need for belonging, purpose, and identity through a three-stage model of identifying vulnerable individuals, emotional bombardment, and gradual escalation of demands with increasing social costs of exit.

Both categories exploit the same cognitive vulnerabilities: the need for certainty, the desire to be part of an elite group, the pursuit of transcendent meaning.

The "us versus them" narrative creates a self-sustaining cycle: the more investment in group identity, the more threatening the outside world appears, which strengthens attachment to the group.

Both use gradual involvement in transgressive actions—from violating personal boundaries to serious crimes. This creates "points of no return," where shame and fear of punishment keep the member in the group.

Situational vs Characterological Vulnerability: The Weakness Myth

Research debunks the myth that only "weak" or "stupid" people join cults. Vulnerability is situational, not innate.

- Life Crisis: relocation, job loss, relationship breakup, death of a loved one—social connections are weakened, the need for meaning is heightened. The cult offers solutions to existential questions and instant community.

- Intellectual Trap: educated people create complex rationalizations to justify contradictions in doctrine, which paradoxically strengthens attachment.

- Information Isolation: critical thinking is suppressed not through intellectual defeat, but through emotional exhaustion, social pressure, and information control.

Doctors, lawyers, and scientists have been documented joining cults. Cognitive abilities do not immunize against social-psychological techniques under conditions of isolation, because emotional dependency neutralizes logic.

Social Control: Cults vs Corporate Culture

The superficial similarities between cults and corporations with strong cultures—use of rituals, group identity, normative pressure—mask fundamental differences in intentions, scope, and consequences.

The key distinction lies not in social control techniques themselves, but in their application: corporations pursue organizational efficiency, while cults seek absolute submission and resource extraction (financial, emotional, sexual) from members.

Differences in Intent and Scope of Control

Corporate culture limits control to work hours and professional identity, leaving employees autonomy in personal life, social connections, and worldview. Cults demand reconfiguration of the member's entire identity, severing external ties and subordinating personal decisions to group doctrine.

| Parameter | Corporation | Cult |
|---|---|---|
| Sphere of control | Work hours and professional role | Entire life: marriage, finances, health, children's education |
| Information control | Restriction of trade secrets | Blocking external sources, group criticism, alternative worldviews |
| Resource control | Salary, benefits | Transfer of bank accounts, medical decisions, partner selection to leaders |

Exit Consequences and Barriers

Leaving a corporation carries professional and financial consequences, but doesn't threaten social identity or physical safety. Exiting a cult involves loss of the entire social network (often including family), economic instability, and psychological trauma from the collapse of a worldview.

Corporations compete for talent and care about employer reputation, which limits punitive measures against departing employees. Cults perceive exit as betrayal requiring punishment to deter remaining members.

- Financial dependence: deliberate construction of an economic trap in which the member cannot provide for themselves independently.

- Information isolation: blocking access to external resources and social networks, creating the illusion that exit is impossible.

- Fear indoctrination: systematic instillation of the belief that the outside world is hostile and persecution is inevitable.

- Direct threats: in extreme cases, physical harassment and violence as a retention mechanism.

Gender Dynamics and Violence in Cult Relationships

Cult structures reproduce and amplify patterns of gender-based violence, using ideological frameworks to legitimize exploitation. Power imbalances, isolation, cycles of idealization and devaluation, and control through fear and guilt: these mechanisms mirror those of intimate partner violence.

In cults with charismatic male leaders, women are often subjected to sexual exploitation rationalized through doctrine ("spiritual marriages," "purification through the leader"). Group ideology suppresses resistance by redefining abuse as privilege or spiritual practice.

Parallels with Gender-Based Violence

Mechanisms of mind control in cults overlap with tools of intimate violence: economic dependence (prohibition on working outside the group or income control), social isolation (severing family ties under the pretext of "toxicity"), gaslighting (denying the reality of abuse, redefining it as care).

Cult ideology adds a critical layer: victims internalize the belief that resisting the leader equals spiritual failure or betrayal. This internal censor operates even without external surveillance.

| Mechanism | Function in System |
|---|---|
| Narcissistic leaders | Use charisma to create "special relationships" with victims, who experience this as being chosen |
| Internalized control | Victim self-censors, fearing spiritual failure, making external surveillance unnecessary |

Cycles of Abuse and Isolation

Cult relationships reproduce the classic cycle of violence: love-bombing (special attention from leader), tension building (impossible demands), incident (punishment, humiliation, sexual violence), reconciliation (apologies, return of attention).

In a group context, this cycle intensifies: witnesses remain silent or actively support the leader, other members deny abuse, blame the victim for "insufficient devotion," creating an environment where seeking help feels impossible.

Women may remain in exploitative cults for years after recognizing abuse, out of fear of losing children (who remain in the group) or for lack of external resources for exit.

Isolation intensifies through group mechanisms: members who have invested in the doctrine become active participants in suppression, not merely witnesses. This creates a closed system where exit requires not only physical separation but cognitive reevaluation of the entire belief system.

Recovery and Reintegration of Former Members

Leaving a cult does not end the traumatic experience, but opens a complex period of reconstructing identity, worldview, and social connections. Former members enter a "liminal" state — a period of disorientation between the rejected cult identity and a not-yet-formed new one.

This state can last months or years, requiring specialized support that is rarely available through standard psychological services unfamiliar with the specifics of mind control.

Dismantling and Rebuilding Identity

Former members reevaluate all aspects of identity formed in the cult: values, beliefs, skills, relationships, life goals. Each element requires critical review — what was authentic, what was imposed, what to keep, what to discard.

Recognizing that years of life were spent on an exploitative system triggers shame, anger, and grief that can paralyze forward movement.

Social reintegration is hindered by practical barriers: former members often lack current professional skills, social connections outside the group, and experience navigating the "ordinary" world.

Specialized Support and Long-Term Challenges

Effective assistance requires understanding the specifics of cultic trauma: not only PTSD from specific incidents, but complex trauma from prolonged control, betrayal of trust, and destruction of worldview.

- Restoring Trust: cultic experience undermines the ability to distinguish manipulation from authenticity. Work is needed on betrayal by authority, not just event trauma.

- Processing Lost Time: especially painful for those who spent their youth in the cult and missed opportunities. Work is needed on existential grief, not just depression.

- Family Relationships: navigating contact with family members remaining in the group requires understanding cult control dynamics, not standard family therapy.

- Gender-Based Violence: for women who experienced sexualized violence in cults, approaches to gender-based violence and to cult control need to be integrated.

A trauma-informed approach avoids pathologizing victims, recognizes the rationality of their choices in the context of manipulation, and focuses on restoring agency and autonomy — key elements undermined by cult control.

Frequently Asked Questions

A cult is a high-control group with an authoritarian leader that uses psychological manipulation to exploit members. Key differences: total control over participants' lives, isolation from the outside world, severe consequences for attempting to leave, and exploitative intentions of the leader (Hadding, 2023). Legitimate organizations focus on specific goals without controlling personal life.

No, this is a common myth. Cults exploit normal psychological needs for belonging, purpose, and meaning in life, using sophisticated manipulation techniques. Vulnerability is situational, not characterological—any person under certain circumstances can become a target (Henderson, 2023).

Leaders employ gaslighting, love bombing, fear induction, information control, and identity reformation. Research shows that many possess Cluster-B traits (narcissism, antisocial behavior), which correlates with manipulative behavior (Henderson, 2023). These techniques systematically destroy critical thinking and autonomy.

Cult recruitment methods resemble terrorist organization tactics: targeting vulnerable individuals, exploiting the need for belonging, and creating the illusion of a special mission. Recruiters identify life crises, loneliness, or searches for meaning, offering ready-made answers and instant community (Synergy Between Cults and Terror Groups).

Key differences: intentions (exploitation vs. productivity), scope of control (entire life vs. work processes), and consequences of leaving (punishment vs. career change). Strong corporate culture focuses on work performance with voluntary participation, while cults control all aspects of life with exploitative goals (O'Reilly & Chatman, 1996).

Yes, cults can be based on political ideologies, therapeutic/self-development practices, business models, and other secular foundations. Religious components are not required—the defining factors are control structure, manipulation, and exploitation, not the specific content of ideology (Hadding, 2023).

Watch for isolation from friends/family, control of information and finances, cycles of idealization and devaluation, guilt and fear induction. Cult relationship dynamics mirror patterns of domestic violence: power imbalance, isolation, and cyclical abuse (Grant, 2022). A critical sign is the impossibility of safe exit.

'Brainwashing' is a simplified term for a complex process of psychological influence. Research shows that cult influence involves multiple psychological, social, and situational factors, not one irresistible technique (Jenkins). It's a gradual process using normal mechanisms of social influence under extreme conditions.

Maintain non-judgmental contact, avoid direct confrontation with beliefs, ask open-ended questions that stimulate critical thinking. Provide factual information about manipulative techniques without directly attacking the person or group. Professional help from cult specialists is critically important for safe exit.

Former members experience an 'in-between' state with difficulties reconstructing identity, social reintegration, and processing trauma. Common issues include loss of basic life skills, destroyed relationships, financial problems, and post-traumatic symptoms (Hadding et al., 2023). Recovery requires specialized trauma-informed support.

Exit is blocked by multiple barriers: induced phobias of the outside world, financial dependence, threats of severing all social connections, and a destroyed identity outside the group. Psychological mechanisms include cognitive dissonance, sunk cost fallacy, and traumatic bonding to the leader (O'Reilly & Chatman, 1996).

Research reveals a predominance of Cluster B traits: narcissism, antisocial personality, borderline and histrionic disorders. These characteristics correlate with charisma, grandiosity, lack of empathy, and a tendency to exploit others (Henderson, 2023). However, not all individuals with these traits become cult leaders.

Yes, digital spaces create new opportunities for cultic control through algorithmic isolation, 24/7 monitoring, and global recruitment. Online cults employ the same manipulation mechanisms but with enhanced information control and leader anonymity. Exit barriers may be lower, but the psychological impact remains significant.

Multi-layered control is applied: restricting access to external sources, demonizing criticism, specialized jargon, rewriting personal history, and controlling internal communications. A closed information ecosystem is created where alternative viewpoints are physically and psychologically inaccessible (Henderson, 2023). Critical thinking is systematically suppressed through group pressure.

Yes, cultic relationships demonstrate patterns that mirror those of gender-based violence: power imbalance, victim isolation, abuse cycles (idealization-devaluation-abuse), and resource control. Both dynamics use psychological dominance, dependency induction, and destruction of victim autonomy (Grant, 2022).

Comprehensive trauma-informed therapy is necessary, along with assistance in rebuilding life skills, financial counseling, and social reintegration support. Support groups for former members and specialists who understand the specifics of cultic trauma are critical (Hadding et al., 2023). Recovery is a multi-year process requiring patience and professional guidance.