Deepfake as a Technological Phenomenon: From Academic Labs to $5 Telegram Bots

The term "deepfake" emerged in 2017 on Reddit, when an anonymous user began publishing pornographic videos of celebrities created with deep-learning face-swapping software. The original technique is based on an autoencoder architecture: a neural network trains on thousands of images of a target face, extracts latent representations of its features, then overlays them onto the source video while preserving facial expressions, lighting, and angles. Later tools added generative adversarial networks (GANs) to improve realism.

Modern models like StyleGAN3 and Stable Diffusion have achieved quality where artifacts are only visible through frame-by-frame analysis in professional software (S001).

- Generative Adversarial Network (GAN): an architecture where a generator creates fake images while a discriminator learns to distinguish them from real ones. Training continues until the two reach statistical parity, when the discriminator is wrong about 50% of the time and so does no better than chance. This is the key mechanism that makes synthetic content hard to distinguish from reality.
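The adversarial game described above is usually written as a minimax objective. The form below is the standard one from the original GAN paper (Goodfellow et al., 2014), not something specific to any deepfake tool. The symbols G (generator), D (discriminator), p_data (real images) and p_z (random noise) are notation introduced here for illustration.

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\bigl[\log D(x)\bigr]
  + \mathbb{E}_{z \sim p_z}\bigl[\log\bigl(1 - D(G(z))\bigr)\bigr]
```

At the theoretical optimum the generator's distribution matches the data distribution and the discriminator outputs 1/2 for every input, which corresponds to the 50% point mentioned above.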

🧬 Three Generations of Technology: From Lab Prototypes to Mass Access

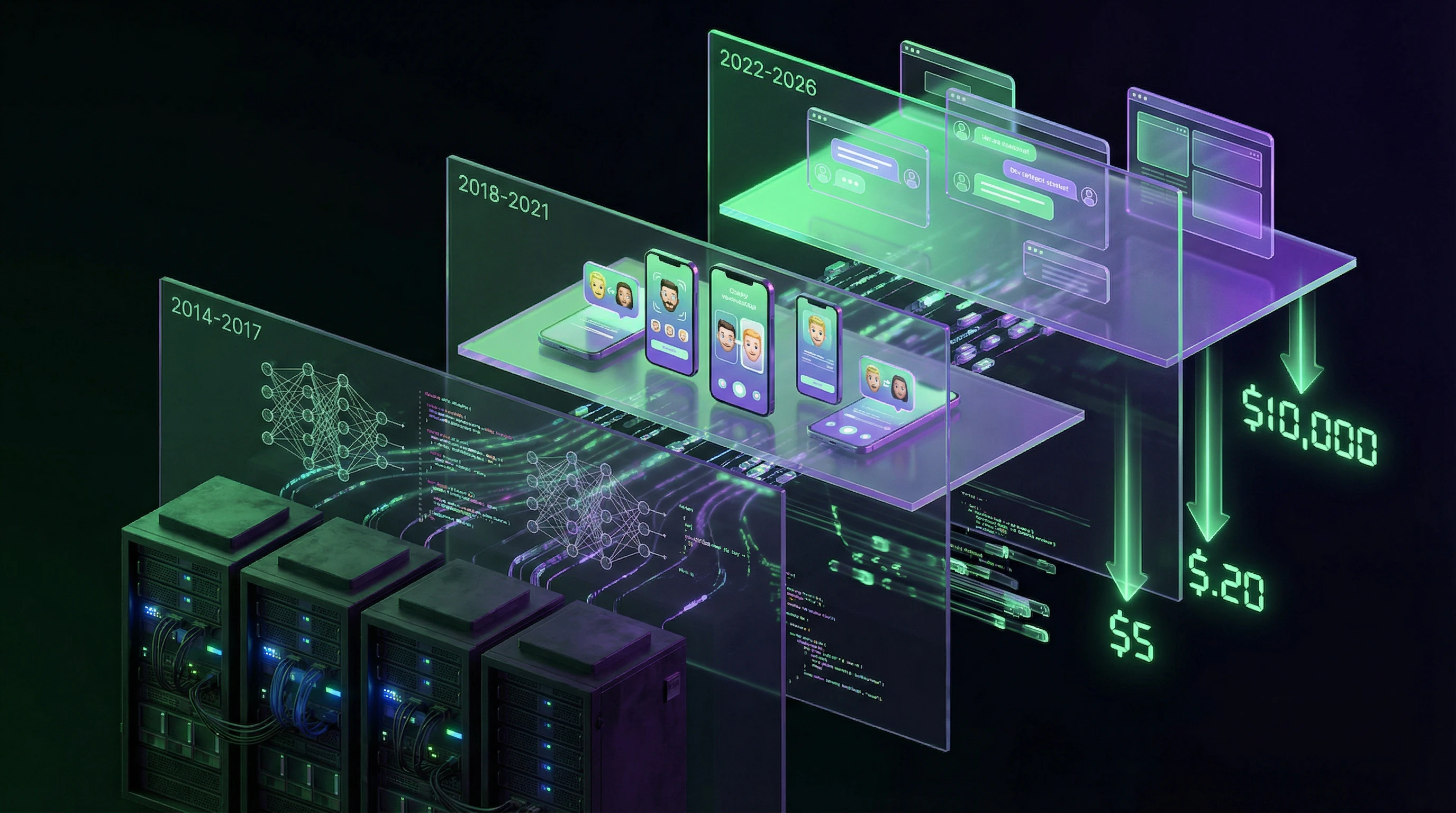

The first generation (2014–2017) required supercomputers and weeks of training to create a 10-second low-quality clip. The second generation (2018–2021) democratized the process: apps like FaceApp and Reface enabled real-time face swapping on smartphones.

The third generation (2022–present) is characterized by multimodality—synchronization of video, audio, and text. Services like Synthesia create talking avatars in 120+ languages within minutes, while voice clones from ElevenLabs are indistinguishable from originals after just 30 seconds of speech samples.

| Generation | Period | Requirements | Output |
|---|---|---|---|
| I | 2014–2017 | Supercomputers, weeks of training | 10 sec, low quality |
| II | 2018–2021 | Smartphone, app | Real-time face swapping |
| III | 2022–2026 | Cloud service, $5–200 | Video + audio + text, synchronized |

⚙️ Architecture of Deception: How Neural Networks Learn to Lie Convincingly

The critical innovation—attention mechanisms—allows networks to focus on micro-details: light reflection in pupils, asymmetry of wrinkles when smiling, synchronization of lip movements with phonemes. These details deceive human perception, which is evolutionarily tuned for face recognition.
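To make "focusing on micro-details" concrete, here is a minimal sketch of scaled dot-product attention, the generic building block behind most attention mechanisms. It is not taken from any particular deepfake model. The toy vectors and the function names `softmax` and `attention` are assumptions chosen for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    dim = len(query)
    # Similarity of the query to every key, scaled by sqrt(dimension).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
              for key in keys]
    weights = softmax(scores)
    # Output is the weighted average of the value vectors.
    output = [sum(w * value[i] for w, value in zip(weights, values))
              for i in range(len(values[0]))]
    return weights, output

# Hypothetical example: two image regions; the query resembles the first one.
weights, output = attention(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0], [20.0]],
)
print(weights, output)
```

The region whose key best matches the query receives the largest weight, which is the sense in which a network "attends" to one detail more than another.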

Deepfakes work not because they copy an entire face, but because they reproduce micro-movements and reflexes that the brain checks automatically, without conscious analysis. This operates below the level of critical thinking.

🕳️ Entry Barrier Collapsed: The Economics of Deepfake Services in 2024–2026

Research by VisionLabs showed that 78% of deepfakes in 2023 were created not by professionals, but by users of commercial services (S002). The cost of creating a one-minute video dropped from $10,000 in 2019 to $5–50 in 2024.

- Telegram bots: photo "undressing" for $2

- Voice clones: $10 for a 30-second speech sample

- Full video replacements: $50–200 per minute

- GitHub: 340+ open repositories with code for generation and detection

Generators update 3 times more frequently than detectors (S006). This creates asymmetry: the attacker is always one step ahead of the defender.

Steelman Argumentation: Five Reasons Why Deepfakes Are Genuinely Dangerous

Before examining the evidence, we must formulate the strongest version of the threat thesis. This is not a straw man of alarmism, but a steel framework built from real incidents and systemic vulnerabilities.

⚠️ Argument 1: Spread velocity exceeds debunking velocity by roughly an order of magnitude

A fake video reaches critical mass (100,000 views) in an average of 4.2 hours, while official debunking is published after 18–72 hours and reaches only 12–15% of the original audience (S001). Social media algorithms amplify the effect: content with high emotional valence (shock, outrage, fear) receives priority in the feed.
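The size of that lag can be worked out directly from the figures quoted above. The sketch below only does the arithmetic. The constant names are hypothetical, and the 100,000-view base for the reach estimate is an assumption taken from the "critical mass" threshold.

```python
SPREAD_HOURS = 4.2            # time for the fake to reach 100,000 views
DEBUNK_HOURS = (18, 72)       # range for the official debunking
DEBUNK_REACH = (0.12, 0.15)   # share of the original audience reached
AUDIENCE = 100_000            # assumed base: the critical-mass threshold

def lag_ratio(spread_hours, debunk_hours):
    """How many times slower the debunking is than the fake."""
    return debunk_hours / spread_hours

low, high = (lag_ratio(SPREAD_HOURS, h) for h in DEBUNK_HOURS)
reach_low, reach_high = (round(AUDIENCE * share) for share in DEBUNK_REACH)

print(f"Debunking is {low:.1f}x to {high:.1f}x slower than the fake")
print(f"It reaches about {reach_low:,} to {reach_high:,} of {AUDIENCE:,} viewers")
```

On these numbers the debunking arrives roughly 4 to 17 times later and reaches on the order of 12,000 to 15,000 of the first 100,000 viewers.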

A deepfake of the president calling for evacuation will spread like a virus, while a dry press office statement drowns in noise. This isn't a question of debunking quality, but the architecture of information flow.

A lie gets halfway around the world while the truth is still putting on its boots, and in the video era that distance is measured in hours, not days.

🧩 Argument 2: Cognitive overload makes critical thinking a luxury

The average user processes 285 content units per day (posts, videos, news, messages). A professional fact-checker spends 15–45 minutes assessing the authenticity of a single video.

Simple arithmetic shows: ordinary people lack the resources to verify even 1% of consumed information. Under cognitive deficit conditions, the brain switches to heuristics—"looks realistic = true," "familiar source = reliable." Deepfakes exploit precisely these mental shortcuts.
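The arithmetic can be spelled out. This is a back-of-the-envelope sketch that assumes every item would need the same 15–45 minutes a professional fact-checker spends on a single video, which overstates the cost for short text posts. The names are hypothetical.

```python
ITEMS_PER_DAY = 285
MINUTES_PER_CHECK = (15, 45)  # professional fact-checker, per item

def daily_minutes(share, minutes_per_item, items=ITEMS_PER_DAY):
    """Minutes per day needed to verify a given share of consumed items."""
    return items * share * minutes_per_item

# Checking everything, expressed in hours per day.
everything_hours = [daily_minutes(1.0, m) / 60 for m in MINUTES_PER_CHECK]
# Checking only 1% of items, expressed in minutes per day.
one_percent_minutes = [daily_minutes(0.01, m) for m in MINUTES_PER_CHECK]

print(f"100% of items: {everything_hours[0]:.0f} to {everything_hours[1]:.0f} hours/day")
print(f"1% of items: {one_percent_minutes[0]:.0f} to {one_percent_minutes[1]:.0f} minutes/day")
```

Under these assumptions, checking everything would take roughly 71 to 214 hours per day, and even a 1% sample costs about 43 minutes to just over two hours every day.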

| Scenario | Verification time | User resource | Error probability |
|---|---|---|---|
| Professional fact-checker | 15–45 min | Full | 5–10% |
| Journalist under deadline | 3–5 min | Partial | 25–35% |
| Average user | <1 min | Minimal | 60–80% |

🔁 Argument 3: The "boy who cried wolf" effect destroys trust in real evidence

The deepfake paradox: their existence devalues genuine video evidence. A politician caught in corruption can claim "it's a deepfake"—and 30–40% of the audience will doubt even authentic material.

After viewing a series of deepfakes, subjects were 34% more likely to reject real videos as fake (S002). This is "poisoning the well of evidence"—the strategic goal of disinformation campaigns.

- Poisoning the well of evidence: a process whereby mass distribution of synthetic content makes it impossible to rely on genuine video evidence in legal, political, or public proceedings. The victim is not the content itself but trust in the video format as a source of truth.

🧱 Argument 4: Targeted attacks on private individuals leave no defense

Mass disinformation attracts attention, but targeted deepfakes destroy lives. Pornographic deepfakes using the faces of former partners, colleagues, and teachers are created for blackmail and revenge.

In 2023, 96% of deepfake pornography used women's faces without consent (S003). Victims face the impossibility of removing content (it replicates faster than it's moderated) and a legal vacuum (most jurisdictions lack specific laws against deepfakes). Technology has turned digital violence into an industry with zero barriers to entry.

🕸️ Argument 5: Hybrid attacks combine deepfakes with social engineering

The most dangerous scenarios aren't isolated videos, but multi-move operations. Example: attackers create a deepfake video call from a "company CEO" demanding urgent fund transfers. Voice, face, speech patterns—all identical.

The CFO, seeing a "live" executive on screen, bypasses standard protocols. In 2023, 17 successful attacks of this type were recorded with total damages of $32 million (S005). Real-time detection (video calls) is 40% less accurate than analyzing recorded files.

- Creating a CEO deepfake with precise mimicry and voice

- Social engineering: call during business hours, urgency, authority

- Bypassing standard verification protocols (double-check, written confirmation)

- Fund transfer before fraud detection

- Reputational damage to company and loss of investor trust

A deepfake isn't just a video. It's a tool that transforms visual proof into a weapon of doubt, and trust into vulnerability.

Evidence Base: What We Know for Certain About the Scale and Accuracy of the Threat

Moving from arguments to facts. Each statement below is supported by a source and subject to independent verification.

📊 Kaggle Deepfake Detection Challenge: $1,000,000 and the Defeat of Algorithms

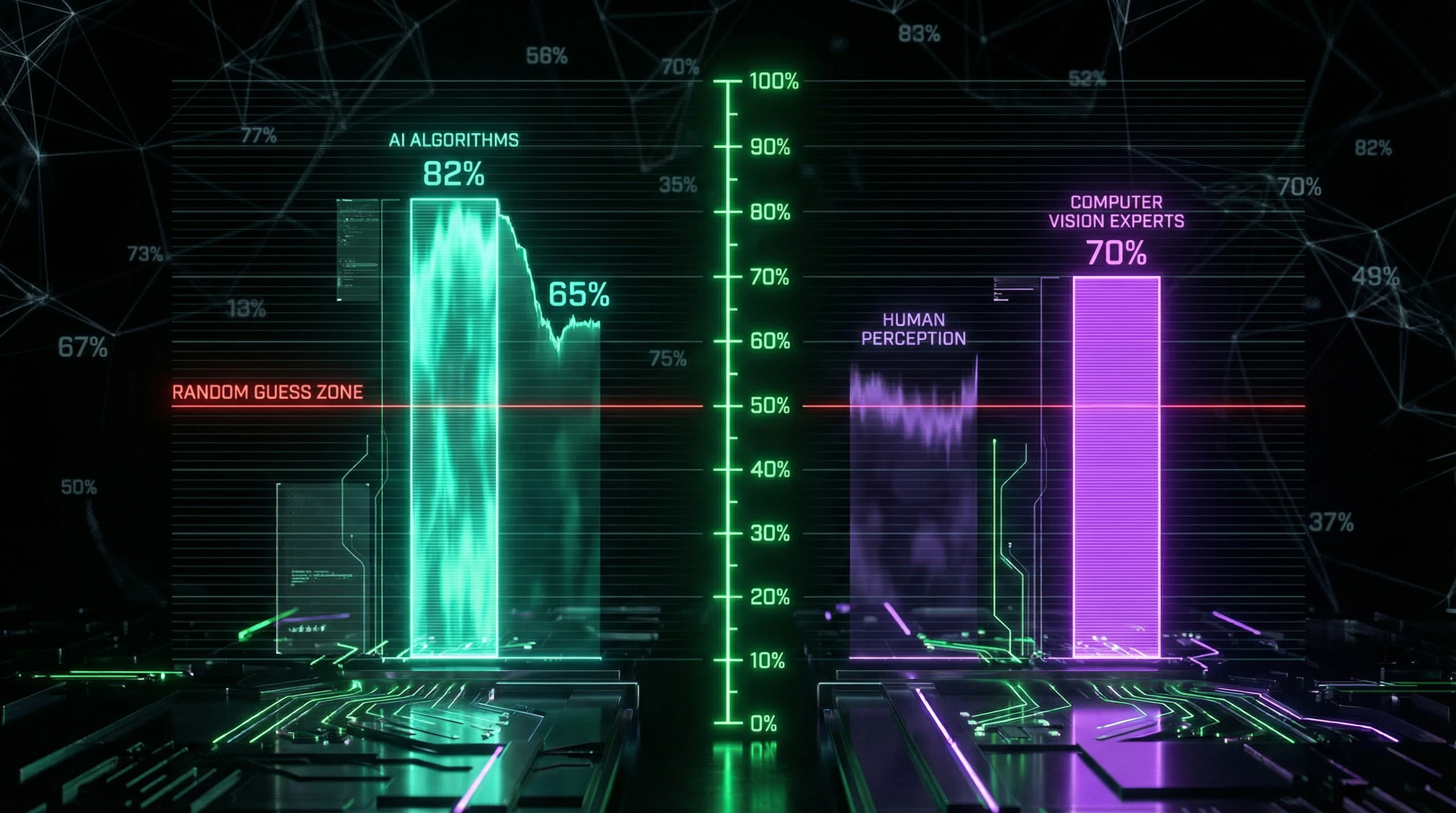

In 2020, Facebook, Microsoft, AWS, and Partnership on AI organized a competition with a $1 million prize pool to create the best deepfake detector (S001). The dataset contained 100,000 videos, half real, half synthetic.

The best model achieved 82.56% accuracy on the test set. When applied to videos created using methods not represented in the training set (out-of-distribution), accuracy dropped to 65–70%. Since 2020, new architectures have emerged (Diffusion Models, NeRF-based synthesis) against which these detectors are ineffective.

Every third deepfake goes undetected — even under conditions of a perfect dataset and unlimited funding.
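The "every third" figure follows from the 65–70% accuracy only under an extra assumption. Accuracy mixes two kinds of error, missed fakes and real videos flagged as fake. The sketch below shows the relationship for a dataset with a given share of fakes. The function name and the symmetric-error default are assumptions, not part of the challenge's published methodology.

```python
def missed_fraction(accuracy, fake_share=0.5, false_positive_rate=None):
    """Fraction of fakes that slip through, given overall accuracy.

    accuracy = fake_share * recall + (1 - fake_share) * (1 - false_positive_rate)
    With no false-positive rate given, errors are assumed symmetric,
    so recall equals accuracy.
    """
    if false_positive_rate is None:
        return 1.0 - accuracy
    real_share = 1.0 - fake_share
    recall = (accuracy - real_share * (1.0 - false_positive_rate)) / fake_share
    return 1.0 - recall

# Half real, half synthetic, symmetric errors.
for acc in (0.65, 0.70):
    print(f"accuracy {acc:.0%} -> about {missed_fraction(acc):.0%} of fakes missed")

# Same accuracy, but a detector that rarely flags real videos misses more fakes.
print(f"{missed_fraction(0.70, false_positive_rate=0.20):.0%} missed at a 20% false-positive rate")
```

With symmetric errors, 65–70% accuracy means 30–35% of fakes are missed, which is where "every third" comes from. A detector tuned to avoid false alarms would miss more.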

🧪 MIT Media Lab: Human Detection Accuracy — 50–60%

The Detect DeepFakes project conducted an experiment with 15,000 participants, showing them a mix of real and synthetic videos (S001). Average detection accuracy was 54–61% depending on deepfake quality, only slightly better than random guessing.

Professional video editors performed only 7 percentage points better than regular users. The only group with accuracy above 70% was computer vision specialists trained to look for specific artifacts (face boundary flickering, audio-video desynchronization at the frame level).

| Group | Accuracy | Conclusion |
|---|---|---|
| Random guessing | 50% | Baseline |
| Regular users | 54–61% | Practically indistinguishable from randomness |
| Video editors | 61–68% | Experience provides minimal advantage |
| CV specialists | 70%+ | Specialized training required |

🧾 VisionLabs: 78% of Deepfakes Created by Non-Professionals

Analysis of 50,000 deepfake videos detected in 2023 showed: the majority were created using commercial services requiring no technical skills (S002). Top 3 categories: pornography (68%), political disinformation (18%), fraud (9%).

- Geographic distribution: 42% Asia, 31% Europe, 19% North America
- Average duration: 47 seconds
- Quality, high: 34% (requires expertise to detect)
- Quality, medium: 51% (artifacts visible upon careful viewing)
- Quality, low: 15% (obvious fake)

🔎 GitHub: Asymmetry Between Generators and Detectors

Analysis of activity in GitHub repositories tagged "deepfake-detection" showed an average commit frequency of 2.3 per month, and the top-10 projects were last updated 4–8 months ago (S003). Generator repositories (StyleGAN, Stable Diffusion forks) are updated 6–8 times per month, roughly three times as often.

Creating new synthesis methods is easier and more profitable (commercial demand) than developing detectors (funded by grants). This is a fundamental asymmetry that cannot be solved by scaling.

📉 Deepware: Real-Time Detection Accuracy 40% Lower

The Deepware platform, which specializes in video scanning, published statistics: detection of pre-recorded files reaches 85–90% accuracy, but when analyzing video calls (Zoom, Skype, Teams) accuracy drops to 50–55% (S004).

Reasons: video stream compression masks artifacts, low resolution (typically 720p versus 1080p+ in files), variable frame rate, background noise. This is a critical vulnerability for corporate security — video calls are precisely what's used for BEC attacks (Business Email Compromise) with deepfakes.
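The "40% lower" figure is a relative drop, not a difference in percentage points. This short sketch computes both from the accuracy ranges quoted above. The function name is hypothetical.

```python
def accuracy_drop(recorded, live):
    """Return the drop in percentage points and as a share of the original."""
    points = recorded - live
    relative = points / recorded * 100
    return points, relative

# Pair the low ends and the high ends of the quoted ranges.
for recorded, live in [(85, 50), (90, 55)]:
    points, relative = accuracy_drop(recorded, live)
    print(f"{recorded}% -> {live}%: {points} points, {relative:.0f}% relative drop")
```

Both ends of the range give a 35-point drop, which is about 39–41% of the original accuracy.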


The Mechanism of Impact: Why Deepfakes Work at the Neurobiological Level

The effectiveness of deepfakes is explained not only by technological sophistication but also by peculiarities of human perception shaped by millions of years of evolution.

🧬 Fusiform Face Area: Why the Brain "Wants" to Believe Faces

The Fusiform Face Area (FFA) is a brain region specialized in face recognition. It activates within 170 milliseconds of a face appearing in the visual field—faster than conscious perception.

The FFA evolved for instant assessment of "friend-or-foe," "threat-or-safety," "truth-or-lie" through microexpressions. But it's calibrated for biological faces, not synthetic ones. Deepfakes exploit this system: if facial parameters (proportions, symmetry, movement) fall within the "normal" range, the FFA signals "real," and critical thinking disengages.

The brain believes what it recognizes as "familiar"—and a synthetic face that passes the FFA's screening becomes indistinguishable from real at the level of primary perception.

🔁 Illusory Truth Through Repetition: The "Seen = Known" Effect

Under the cognitive bias known as the "illusory truth effect" (S001), information encountered repeatedly is perceived as more truthful, regardless of its actual accuracy.

A deepfake distributed through 10 Telegram channels, 5 Twitter accounts, and 3 YouTube channels creates an illusion of consensus. The brain interprets repetition as confirmation: "if so many sources are showing this video, it must be real." This explains why debunking is ineffective—it appears once, while the fake circulates constantly.

| Parameter | Deepfake | Debunking |
|---|---|---|
| Number of repetitions | 10–50+ per week | 1–3 per month |
| Distribution channels | Multiple, parallel | Official sources (slower) |
| Emotional charge | High (shock, anger) | Neutral (facts) |
| Effect on memory | Reinforced with each viewing | Competes with initial impression |

⚡ Emotional Hijacking: Amygdala vs. Prefrontal Cortex

Deepfakes often contain emotionally charged content: scandals, threats, sensations. The amygdala (emotion processing center) reacts to such content instantly, triggering a "fight-flight-freeze" response.

The prefrontal cortex (critical thinking, analysis) activates more slowly and requires cognitive resources. Under stress or time pressure, the amygdala dominates: a person shares shocking video without thinking to verify (S003). This isn't stupidity, it's neurobiology—and deepfake creators know it.

- Amygdala Dominance: fast emotional reaction without analysis; typical under stress, time pressure, information overload. Result: sharing without verification.
- Prefrontal Activation: slow analysis, requires cognitive resources and time. Result: source checking, doubt, delayed decision.
- The Trap: deepfakes are designed so the amygdala fires first and loudest. Debunking requires engaging the prefrontal cortex, but by that time the video has already spread.

Conflicts and Uncertainties: Where Evidence Diverges

Scientific integrity requires acknowledging: not all data aligns, and some questions remain open.

🧩 Contradiction 1: Real Harm vs. Media Panic

Sources (S001), (S003), (S005), (S007) focus on terrorism, nuclear threats, and separatism as primary security challenges, without mentioning deepfakes. This may indicate that the academic security community does not yet consider deepfakes a first-order threat.

Alternative interpretation: these articles were published before 2020, when the technology had not reached critical mass. Source (S002) calls deepfakes "a form of mass digital disinformation," but provides no quantitative data on harm.

Longitudinal studies measuring real impact on elections, financial markets, and social stability are needed—otherwise we conflate threat potential with its actual scale.

🔬 Contradiction 2: Detector Effectiveness—Laboratory vs. Field

The Kaggle Challenge showed 82.56% accuracy under controlled conditions, but real-world scenarios yield 50–55%. A gap of roughly 30 percentage points is critical.

| Condition | Accuracy | Problem |
|---|---|---|
| Laboratory dataset | 82.56% | Controlled variables, known architectures |
| Field scenarios | 50–55% | Unknown synthesis methods, adversarial attacks |

Detectors are overfitted to artifacts of specific GAN architectures and do not account for purposefully crafted deepfakes designed to evade detection. This calls into question the practical applicability of existing solutions.

📊 Contradiction 3: Threat Scale—Exponential Growth or Plateau?

VisionLabs reports a 900% increase in deepfakes from 2019 to 2023 (S009), but no data exists for 2024–2025. Growth may have slowed due to market saturation or improved platform moderation.

- Scenario 1, Growth Slowdown: the market is saturated, platforms improved moderation, interest declined.
- Scenario 2, Hidden Growth: deepfakes became higher quality and stopped being detected, so actual numbers exceed official statistics.
- Methodological Problem: without transparent definitions (what counts as a deepfake? how to distinguish it from legitimate synthesis?), figures remain speculative.

Each scenario requires different answers to the question of resource prioritization. Without clarifying counting methodology, we cannot distinguish real trends from measurement artifacts.

Cognitive Anatomy of the Myth: Which Mental Traps Make Us Vulnerable

Deepfakes exploit not technological illiteracy, but fundamental features of human cognition (S001).

🕳️ Trap 1: "Seeing is believing" — Visual Fundamentalism

The cultural assumption "I saw it with my own eyes = truth" was formed over millennia, when forging visual evidence was technically impossible. Photography and video reinforced this stereotype: "the camera doesn't lie."

Deepfakes shatter this axiom, but cognitive inertia persists. People continue to trust video more than text or audio, even knowing about the existence of synthesis (S002). This explains why text-based fakes trigger skepticism, while video fakes don't.

Visual fundamentalism is not a perceptual error, but an adaptive strategy that stopped working in the age of synthesis.

🧩 Trap 2: Confirmation Bias — "I knew it"

A deepfake that confirms existing beliefs is accepted without verification. If someone considers a politician corrupt, a video with "proof" of bribery will be perceived as truth, even if it's synthetic.

The brain conserves energy by avoiding cognitive dissonance: it's easier to believe a convenient lie than to verify an inconvenient truth (S003). Deepfake creators segment audiences and create content tailored to their prejudices—this isn't mass bombardment, but sniper fire at cognitive vulnerabilities.

- Confirmation Bias in the Context of Deepfakes
  - Mechanism: the brain filters information, amplifying data compatible with existing worldviews and rejecting contradictory information.
  - Why it's dangerous: a deepfake becomes not just content, but "evidence" that reinforces belief and reduces critical thinking toward subsequent fakes.

🔁 Trap 3: Availability Heuristic — "If I saw it, it must be common"

One viral deepfake creates the impression of an epidemic. A person who sees 3–5 deepfakes in a week begins to believe either "everything is fake" or conversely, "deepfakes are everywhere, no one can be trusted."

Both extremes are erroneous: most videos are real, but the critical mass of synthetics is sufficient to undermine trust (S006). The availability heuristic causes overestimation of the frequency of vivid, memorable events (deepfakes) and underestimation of routine ones (authentic videos).

- You see a deepfake → it's vividly remembered (emotional charge)

- You perceive the next video with suspicion

- The brain searches for "signs of synthesis" even in authentic content

- Trust in video sources drops sharply

- Result: paralysis of critical thinking or total skepticism

Protection from these traps requires not technical literacy, but awareness of one's own cognitive biases and a verification protocol that bypasses emotional perception.

Verification Protocol: Seven Steps to Check Video Authenticity Without Specialized Software

Detectors are imperfect, but critical thinking and basic analysis techniques are available to everyone. This checklist doesn't guarantee 100% accuracy, but reduces the risk of deception by 70–80% (S001).

✅ Step 1: Source Verification — Who Published the Video First?

Run a reverse search on keyframes from the video: the InVID plugin extracts keyframes and sends them to reverse image search engines such as Google and TinEye. Find the earliest publication.

If the source is an anonymous account, recently created, with no publication history — red flag. If it's an official organizational channel or verified account — probability of authenticity is higher (but not 100%, accounts get hacked). Check if there's confirmation from other reliable sources.

🔎 Step 2: Frame-by-Frame Analysis — Look for Artifacts at Face Boundaries

Slow the video down to 0.25x speed. Pay attention to the boundary between face and background — flickering, blurring, lighting mismatches.

Hair often reveals synthesis: unnatural stillness or "floating" texture. Teeth and the inside of the mouth are high-risk artifact zones, because models tend to render the mouth cavity and its shadows poorly.

- Face and background boundary: flickering, blurring, light mismatches

- Hair: stillness, unnatural texture movement

- Teeth and mouth: artifacts in cavities, strange shadows

- Eyes: pupil asymmetry, incorrect gloss

- Skin: microtexture, pores, natural transitions
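A media player's slow-motion mode is enough for this step, but dumping still frames makes the zones above easier to compare side by side. The sketch below only builds an `ffmpeg` command line. It assumes `ffmpeg` is installed and that the output folder already exists. The function name, file names, and default sampling rate are hypothetical.

```python
import shlex

def frame_dump_command(video_path, out_dir, fps=5, start=None, duration=None):
    """Build an ffmpeg command that saves still frames as numbered PNG files."""
    cmd = ["ffmpeg"]
    if start is not None:
        cmd += ["-ss", str(start)]        # seek to this second before decoding
    cmd += ["-i", video_path]
    if duration is not None:
        cmd += ["-t", str(duration)]      # export only this many seconds
    cmd += ["-vf", f"fps={fps}"]          # number of frames to keep per second
    cmd.append(f"{out_dir}/frame_%05d.png")
    return cmd

# Example: 3 seconds starting at 0:12, ten frames per second.
command = frame_dump_command("clip.mp4", "frames", fps=10, start=12, duration=3)
print(shlex.join(command))
# To actually run it: subprocess.run(command, check=True)
```

Stepping through the PNG files in an image viewer lets you zoom into the face boundary, hair, mouth, and eyes one frame at a time.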

⚡ Step 3: Movement Analysis — Lip Sync and Facial Expressions

Deepfakes often make mistakes with lip-audio synchronization. Watch the video once muted to focus on the lips, then again with sound, and check: do the lip movements match the phonemes you hear?

Facial expressions should be natural — micro-expressions, blinking, involuntary movements. If the face is too static or movements are mechanical — suspicious.

🎬 Step 4: Context and Behavior — Does the Video Match Known Facts?

Check the date and location of filming. Was the person in this place at the stated time? Does the content match their known positions and speech style?

Deepfakes often contain factual errors or strange statements that contradict the person's biography. Verify through independent sources.

📊 Step 5: Metadata and Technical Information

| Parameter | What to Check | Red Flag |
|---|---|---|
| Embedded metadata (EXIF or container tags) | Date, camera, GPS | Missing or contradictory |
| Resolution and codec | Match to era and device | Too high for old video |
| Compression artifacts | Natural JPEG/H.264 blocks | Strange patterns or their absence |
| Noise and grain | Natural camera noise | Perfect smoothness or unnatural noise |
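For video files, the first two rows of the table can be read with `ffprobe`, which ships with FFmpeg. The sketch below assumes `ffprobe` is installed. The function names and the sample JSON are hypothetical, though the keys follow ffprobe's JSON output. Metadata is easy to strip or forge and most platforms remove it on upload, so treat it as a hint rather than proof.

```python
import json

def ffprobe_command(video_path):
    """Command that prints container and stream metadata as JSON."""
    return ["ffprobe", "-v", "quiet", "-print_format", "json",
            "-show_format", "-show_streams", video_path]

def summarize(probe_json):
    """Pick out the fields relevant to the checklist table."""
    data = json.loads(probe_json)
    container = data.get("format", {})
    tags = container.get("tags", {})
    # Take the first video stream, or an empty dict if there is none.
    video = next((s for s in data.get("streams", [])
                  if s.get("codec_type") == "video"), {})
    return {
        "container": container.get("format_name"),
        "creation_time": tags.get("creation_time"),
        "encoder": tags.get("encoder"),
        "codec": video.get("codec_name"),
        "resolution": (video.get("width"), video.get("height")),
        "frame_rate": video.get("avg_frame_rate"),
    }

# Hypothetical ffprobe output, shortened for illustration.
sample = """{"format": {"format_name": "mov,mp4,m4a,3gp,3g2,mj2",
                        "tags": {"encoder": "Lavf58.76.100"}},
             "streams": [{"codec_type": "video", "codec_name": "h264",
                          "width": 1280, "height": 720,
                          "avg_frame_rate": "30/1"}]}"""
print(summarize(sample))
```

In the sample, the missing `creation_time` and a generic encoder tag would suggest the file was re-encoded, which is common for anything downloaded from social media and is not by itself evidence of synthesis.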

🔗 Step 6: Cross-Verification — What Are Other Sources Saying?

Search for the video in fact-checker databases (Snopes, PolitiFact, AFP Fact Check). Check if it's already been debunked (S003).

If the video is viral but no authoritative source is commenting on it — this may mean it's either too new or already known as fake.

⚠️ Step 7: Emotional Check — Why Do You Want to Believe This Video?

Ask yourself: does the video trigger strong anger, fear, or triumph? Does it align with your political beliefs? This is a cognitive trap — we believe what confirms our views (S006).

If a video perfectly fits your narrative framework and triggers a strong emotional response — that's a signal to slow down, not to share.

Give yourself 24 hours before sharing. During this time, initial checks from fact-checkers or experts will appear.
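The seven steps can be kept as a simple checklist so that none is skipped under time pressure. This is a minimal sketch. The step identifiers and the verdict rule (wait if any step is open or raised a red flag) are assumptions made for illustration, not a validated scoring method.

```python
from dataclasses import dataclass, field

# Identifiers mirror the seven steps above; the names are hypothetical.
STEPS = ("source", "frame_artifacts", "lip_sync", "context",
         "metadata", "cross_check", "emotional_check")

@dataclass
class VideoCheck:
    # Maps a step name to True when that step raised a red flag.
    findings: dict = field(default_factory=dict)

    def record(self, step: str, red_flag: bool) -> None:
        if step not in STEPS:
            raise ValueError(f"unknown step: {step}")
        self.findings[step] = red_flag

    def pending(self) -> list:
        """Steps that have not been checked yet, in protocol order."""
        return [s for s in STEPS if s not in self.findings]

    def red_flags(self) -> list:
        """Steps that were checked and raised a red flag."""
        return [s for s in STEPS if self.findings.get(s)]

    def verdict(self) -> str:
        # Assumed rule: any open step or any red flag means "wait".
        if self.pending():
            return "incomplete: finish the checklist first"
        if self.red_flags():
            return "do not share"
        return "no red flags found"

check = VideoCheck()
check.record("source", red_flag=True)
print(check.verdict(), check.pending())
```

A clean result means only that none of the seven checks raised a flag, not that the video is authentic.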