🔍 Deepfake Detection

Synthetic Media Detection Technologies and Deepfake Protection

Artificial intelligence creates realistic fake videos and audio, but the same technologies help detect them and protect the digital authenticity of content.

Overview

Deepfakes are synthetic media files created by neural networks: they imitate the voice, face, and mannerisms of real people with alarming accuracy. The accessibility of AI tools has turned the problem from a laboratory-scale one into a mass-scale one, ranging from political manipulation to blackmail of private individuals. Detection uses machine learning to identify artifacts such as inconsistencies in lighting, facial micro-movements, and audio signals, but each improvement in defense provokes a new wave of attacks.

Laplace Protocol: Verification of digital content requires a multi-layered approach, combining automated analysis with expert assessment and cryptographic methods for confirming source authenticity.

How Generative Networks Create Synthetic Faces Indistinguishable from Real Ones

Deepfakes are based on deep learning architectures capable of synthesizing photorealistic images and videos by analyzing thousands of examples. Modern methods have reached such a level of quality that the human eye is often unable to detect forgeries without specialized tools.

Understanding generation mechanisms is the first step toward developing effective detection systems. This isn't a matter of technical curiosity: every new synthesis method creates a new attack vector.

Generative Adversarial Networks (GANs)

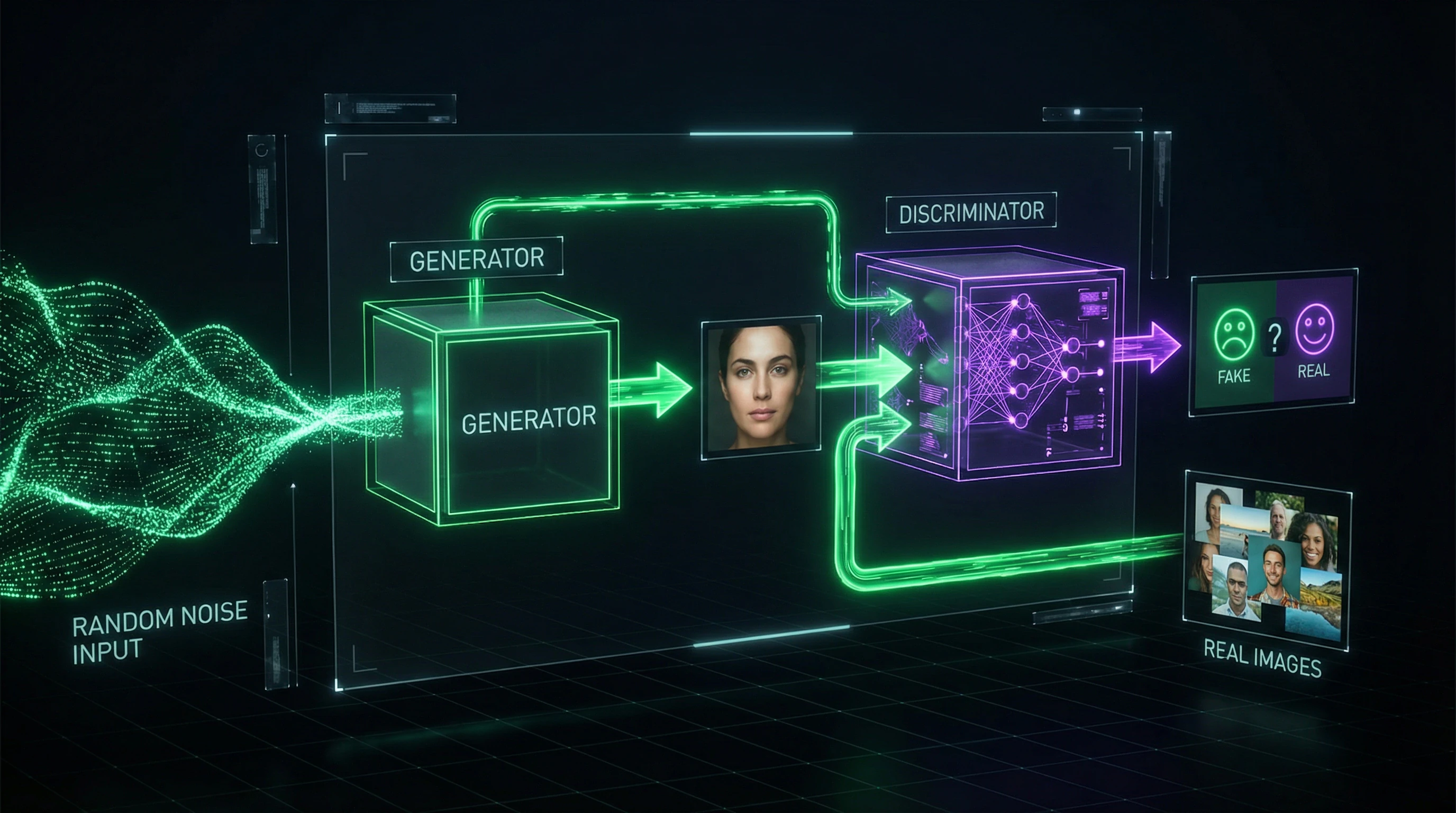

GANs consist of two neural networks: a generator that creates synthetic images, and a discriminator that attempts to distinguish them from real ones. During training, the generator improves until the discriminator can no longer differentiate fakes.
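The training dynamic described above is commonly written as a two-player minimax game. In the standard formulation, with $G$ the generator, $D$ the discriminator, $p_{\text{data}}$ the distribution of real images, and $p_z$ the noise prior (symbols introduced here for illustration, not taken from this article):

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\bigl[\log D(x)\bigr]
  + \mathbb{E}_{z \sim p_z}\bigl[\log\bigl(1 - D(G(z))\bigr)\bigr]
```

At the theoretical optimum the discriminator outputs $1/2$ for every input, which is the formal version of "can no longer differentiate fakes".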

| Architecture | Resolution | Key Vulnerability |
|---|---|---|
| StyleGAN2 | up to 1024×1024 | Artifacts in high-frequency components |
| StyleGAN3 | up to 1024×1024 | Spectral anomalies in texture analysis |

Modern models preserve the finest details of skin texture, hair, and iris, but this very detail creates predictable patterns in spectral analysis.
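As a rough illustration of what "patterns in spectral analysis" can mean, the sketch below measures how much of a signal's energy sits in its upper frequency band. It is a minimal, standard-library example that works on a single row of pixel values; the function names, the one-sided spectrum, and the 0.5 cutoff are assumptions made for illustration, and a real detector would work on 2-D spectra of whole images.

```python
import cmath
import math


def dft_magnitudes(signal):
    """Return |X[k]| for k = 0..N//2 using a direct O(N^2) DFT."""
    n = len(signal)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(
            signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        mags.append(abs(acc))
    return mags


def high_freq_energy_ratio(signal, cutoff_fraction=0.5):
    """Approximate share of non-DC spectral energy above the cutoff.

    cutoff_fraction is expressed relative to the Nyquist bin.
    """
    mags = dft_magnitudes(signal)
    nyquist_bin = len(mags) - 1
    cutoff_bin = cutoff_fraction * nyquist_bin
    energies = [m * m for m in mags]
    total = sum(energies[1:])  # skip the DC bin (mean brightness)
    if total < 1e-12:
        return 0.0  # flat signal: nothing to compare
    high = sum(e for k, e in enumerate(energies) if k >= 1 and k > cutoff_bin)
    return high / total
```

A smooth gradient yields a ratio near 0, while a pixel-to-pixel alternating pattern yields a ratio near 1. Comparing this statistic between known-real and suspect images is one plausible way to surface the anomalies listed in the table.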

Autoencoders and Face-Swap Algorithms

Autoencoders compress a face image into a compact latent representation, then reconstruct it with features from another person. Face-swap technology uses two autoencoders that share a common encoder but have separate decoders: one decoder is trained on the source face, the other on the target face. Feeding the encoding of one face into the other face's decoder produces the swap.

- DeepFaceLab and FaceSwap: require 2,000–10,000 frames for quality results. They allow transferring expressions and movements from one face to another while preserving the target's identity.

- Critical failure point: a mismatch between facial geometry and lighting in the source video often reveals forgeries when shadows and highlights are analyzed.

Speech Synthesis and Voice Cloning

Voice deepfakes are created through models like Tacotron 2 and WaveNet, which convert text to speech with a specific person's intonations. Modern systems require only 5–10 minutes of audio recording to clone a voice with acceptable quality.

- Voice conversion technology changes timbre and accent while preserving linguistic content

- The main vulnerability of synthetic speech is the absence of micro-variations in the frequency spectrum characteristic of natural vocal tracts

- Synthetic voices often demonstrate excessive regularity in formant transitions between phonemes

Every synthesis method leaves a trace: GANs in the spectrum, face-swap in light geometry, voice cloning in micro-rhythm. Detectors don't search for perfection, but for its absence.

Digital Traces of Forgery: What Gives Away Synthetic Video

Visual deepfakes leave characteristic artifacts that can be detected using computer vision and image analysis. Detection methods evolve in parallel with generation technologies, creating an arms race between creators and detectors.

An effective detection system must analyze multiple indicators simultaneously, since no single method provides 100% accuracy.

Analysis of Compression and Pixelation Artifacts

Deepfakes often contain inconsistencies in JPEG and H.264 compression patterns, since synthetic regions are processed differently than original ones. Discrete Cosine Transform (DCT) analysis reveals anomalies in 8×8 pixel blocks characteristic of inserted fragments.
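To make the DCT step concrete, here is a minimal sketch of the orthonormal 2-D DCT-II on a single 8×8 block, written with the standard library only. The helper `ac_energy` and the idea of comparing it between face and background blocks are illustrative assumptions, not a method specified in this article.

```python
import math

N = 8  # JPEG block size


def dct2_block(block):
    """Orthonormal 2-D DCT-II of an 8x8 block given as a list of 8 rows."""
    def alpha(k):
        return math.sqrt(1.0 / N) if k == 0 else math.sqrt(2.0 / N)

    coeffs = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            total = 0.0
            for x in range(N):
                for y in range(N):
                    total += (
                        block[x][y]
                        * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                        * math.cos((2 * y + 1) * v * math.pi / (2 * N))
                    )
            coeffs[u][v] = alpha(u) * alpha(v) * total
    return coeffs


def ac_energy(block):
    """Sum of squared AC coefficients (everything except the DC term)."""
    coeffs = dct2_block(block)
    return sum(c * c for row in coeffs for c in row) - coeffs[0][0] ** 2
```

A flat block has all of its energy in the DC coefficient, while a checkerboard block has none there. A detector could collect such per-block statistics over the face region and over the background and flag the frame when the two distributions diverge.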

Error Level Analysis (ELA) visualizes differences in compression levels across image regions. Detection accuracy based on compression artifacts reaches 87–92% on established datasets such as FaceForensics++.

Detection of Anomalies in Eye Movements and Blinking

Early deepfakes rarely blinked naturally, since training datasets contained predominantly frames with open eyes. Modern algorithms track blink frequency (normal rate 15-20 times per minute), eyelid closure duration (100-400 ms), and synchronicity of both eyes' movements.
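The blink checks above can be sketched as follows, assuming some upstream face tracker already provides a per-frame eye-openness value. The 0 to 1 scale, the `closed_threshold` of 0.2, and both function names are hypothetical; only the 15–20 blinks per minute and 100–400 ms ranges come from the text.

```python
def blink_stats(openness, fps, closed_threshold=0.2):
    """Summarise blinks in a per-frame eye-openness series.

    openness: one value per frame, hypothetical scale 0 (closed) to 1 (open).
    Returns (blinks_per_minute, list_of_blink_durations_ms).
    """
    durations_ms = []
    run = 0  # length of the current run of "closed" frames
    for value in openness:
        if value < closed_threshold:
            run += 1
        elif run:
            durations_ms.append(run * 1000.0 / fps)
            run = 0
    if run:  # series ended while the eye was still closed
        durations_ms.append(run * 1000.0 / fps)
    minutes = len(openness) / fps / 60.0
    rate = len(durations_ms) / minutes if minutes else 0.0
    return rate, durations_ms


def looks_natural(rate, durations_ms):
    """Check against the ranges quoted in the text above."""
    return 15 <= rate <= 20 and all(100 <= d <= 400 for d in durations_ms)
```

A failed check is only a weak signal on its own, since people blink less when concentrating; it is best treated as one feature among several.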

Neural network detectors analyze pupil movement trajectories and corneal reflections, which are difficult to reproduce synthetically. The Eye-tracking Consistency Analysis method achieves 94% accuracy on videos longer than 10 seconds.

Verification of Lighting and Shadow Consistency

Physically correct lighting requires adherence to optical laws: shadow direction must correspond to light source positions, and eye reflections must match the environment.

| Lighting Parameter | What the Detector Checks | Method Accuracy |
|---|---|---|
| Shadow Direction | Correspondence with light sources in scene | 89% |
| Eye Reflections | Match with environment and spectrum | 89% |
| Spectral Composition | Uniformity of color temperature on face and background | 89% |

Algorithms like Lighting Environment Estimation analyze facial normal maps and compare them with the presumed scene lighting. Methods based on spherical harmonics model three-dimensional lighting and identify anomalies.

Acoustic Forensics: How to Recognize Synthetic Voice

Audio deepfakes threaten authentication systems of banks and corporations that rely on voice biometrics. Detection of synthetic speech relies on analysis of acoustic characteristics invisible to the human ear.

The combination of spectral analysis and machine learning identifies synthetic voice with accuracy above 95% under controlled conditions.

Spectral Analysis and Identification of Synthetic Patterns

Natural speech contains microvariations in formant frequencies, jitter (irregularity of fundamental frequency period), and shimmer (amplitude variations) that are difficult to reproduce synthetically.
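Jitter and shimmer have simple "local" definitions: the mean absolute difference between consecutive cycles, divided by the mean value. The sketch below applies that definition to lists of pitch periods and peak amplitudes; extracting those per-cycle values from audio is assumed to happen elsewhere, and the function names are illustrative.

```python
def relative_perturbation(values):
    """Mean absolute difference of consecutive values, divided by their mean."""
    if len(values) < 2:
        raise ValueError("need at least two cycles")
    diffs = [abs(b - a) for a, b in zip(values, values[1:])]
    return (sum(diffs) / len(diffs)) / (sum(values) / len(values))


def jitter(periods_s):
    """Local jitter: cycle-to-cycle variation of the pitch period."""
    return relative_perturbation(periods_s)


def shimmer(peak_amplitudes):
    """Local shimmer: cycle-to-cycle variation of the peak amplitude."""
    return relative_perturbation(peak_amplitudes)
```

Perfectly regular cycles give a value of exactly 0, so unusually low jitter and shimmer over a long voiced segment is one possible indicator of the "excessive regularity" described in the table below.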

| Characteristic | Natural Speech | Synthetic Speech |
|---|---|---|
| Formant Frequencies | Microvariations, organic transitions | Excessive regularity |
| High Frequencies (>8 kHz) | Natural fluctuations | Regular patterns |
| Background Noise | Present (real conditions) | Absent or artificial |

Analysis of mel-frequency cepstral coefficients (MFCC) and linear predictive coding (LPC) coefficients reveals anomalies in the spectral envelope. Methods based on the Constant-Q Transform (CQT) achieve 96% detection accuracy on the ASVspoof 2019 dataset.

Biometric Voice Verification

Speaker verification systems compare acoustic voice prints with reference recordings, analyzing unique characteristics of the vocal tract. They rely on compact voice representations: i-vectors, derived from statistical factor analysis, and x-vectors, extracted by deep neural networks. Both are designed to be robust to variations in transmission channel and noise.

Modern systems achieve Equal Error Rate (EER) of 1–3% on clean recordings, but adaptive attacks with adversarial examples deceive them in 40–60% of cases.
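The Equal Error Rate is the operating point where the false acceptance rate (impostors accepted) equals the false rejection rate (genuine speakers rejected). A minimal sketch of how it can be estimated from two lists of verification scores follows; the threshold sweep and the tie-breaking rule are simplifying assumptions, and evaluation toolkits typically interpolate instead.

```python
def equal_error_rate(genuine_scores, impostor_scores):
    """Approximate EER by sweeping the decision threshold over all scores.

    A trial is accepted when score >= threshold.
    FRR = share of genuine trials rejected, FAR = share of impostors accepted.
    Returns the mean of FAR and FRR at the threshold where they are closest.
    """
    thresholds = sorted(set(genuine_scores) | set(impostor_scores))
    thresholds.append(thresholds[-1] + 1.0)  # a threshold that rejects everything
    best_gap, best_eer = None, None
    for t in thresholds:
        far = sum(s >= t for s in impostor_scores) / len(impostor_scores)
        frr = sum(s < t for s in genuine_scores) / len(genuine_scores)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:
            best_gap, best_eer = gap, (far + frr) / 2
    return best_eer
```

With fully separated score lists the function returns 0.0. Once adversarial samples push impostor scores into the genuine range, the same calculation shows how quickly the error rate climbs.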

This requires multi-factor authentication: voice + PIN code, voice + facial biometrics, or voice + hardware key. No single verification channel should be the sole source of trust.

Machine Learning in Deepfake Detection: From Datasets to Adversarial Robustness

Training Classifiers on Labeled Datasets

The effectiveness of deepfake detectors directly depends on the quality and diversity of training data. The largest public datasets are FaceForensics++ (over 1.8 million frames from 1,000 videos), Celeb-DF (590 real and 5,639 fake celebrity videos), and DFDC (124,000 videos from Facebook). They contain samples created by various generation methods; FaceForensics++, for example, covers DeepFakes, Face2Face, FaceSwap, and NeuralTextures.

Labeling includes not only binary "real/fake" labels, but also masks of manipulated regions, metadata about generation methods, and compression levels. Modern classifiers based on EfficientNet, XceptionNet, and Vision Transformers achieve 95–99% accuracy on test sets from the same datasets.

Performance drops to 60–70% in cross-dataset testing due to overfitting on artifacts from specific generators.

The domain shift problem is a critical bottleneck in detector generalization capability. A model trained on deepfakes at 1080p resolution with minimal compression shows 30–40% accuracy degradation when analyzing videos compressed with H.264 codec at bitrates below 2 Mbps.

Data augmentation techniques — adding Gaussian noise, JPEG artifacts, motion blur, color balance changes — increase robustness by 15–20%. Ensemble methods combining predictions from multiple models with different architectures (CNN + Transformer + frequency analysis) demonstrate 8–12% improvement in AUC-ROC metric.
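The ensemble idea can be illustrated with score averaging and a rank-based AUC-ROC computation. This is a minimal sketch with made-up scores; the function names and numbers are illustrative and do not reproduce any benchmark result quoted above.

```python
def ensemble_mean(per_model_scores):
    """Average fake-probabilities across models, sample by sample."""
    return [sum(col) / len(col) for col in zip(*per_model_scores)]


def roc_auc(labels, scores):
    """AUC as the probability that a fake outranks a real sample (ties = 0.5)."""
    fakes = [s for y, s in zip(labels, scores) if y == 1]
    reals = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(
        1.0 if f > r else 0.5 if f == r else 0.0 for f in fakes for r in reals
    )
    return wins / (len(fakes) * len(reals))


# Toy data: 1 = fake, 0 = real. Each model misranks a different pair.
labels = [1, 1, 0, 0]
model_a = [0.9, 0.4, 0.5, 0.1]
model_b = [0.4, 0.9, 0.1, 0.5]
print(roc_auc(labels, model_a))                            # 0.75
print(roc_auc(labels, model_b))                            # 0.75
print(roc_auc(labels, ensemble_mean([model_a, model_b])))  # 1.0
```

The gain appears because the two toy models make different mistakes, which is the motivation for combining architectures that look at different evidence.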

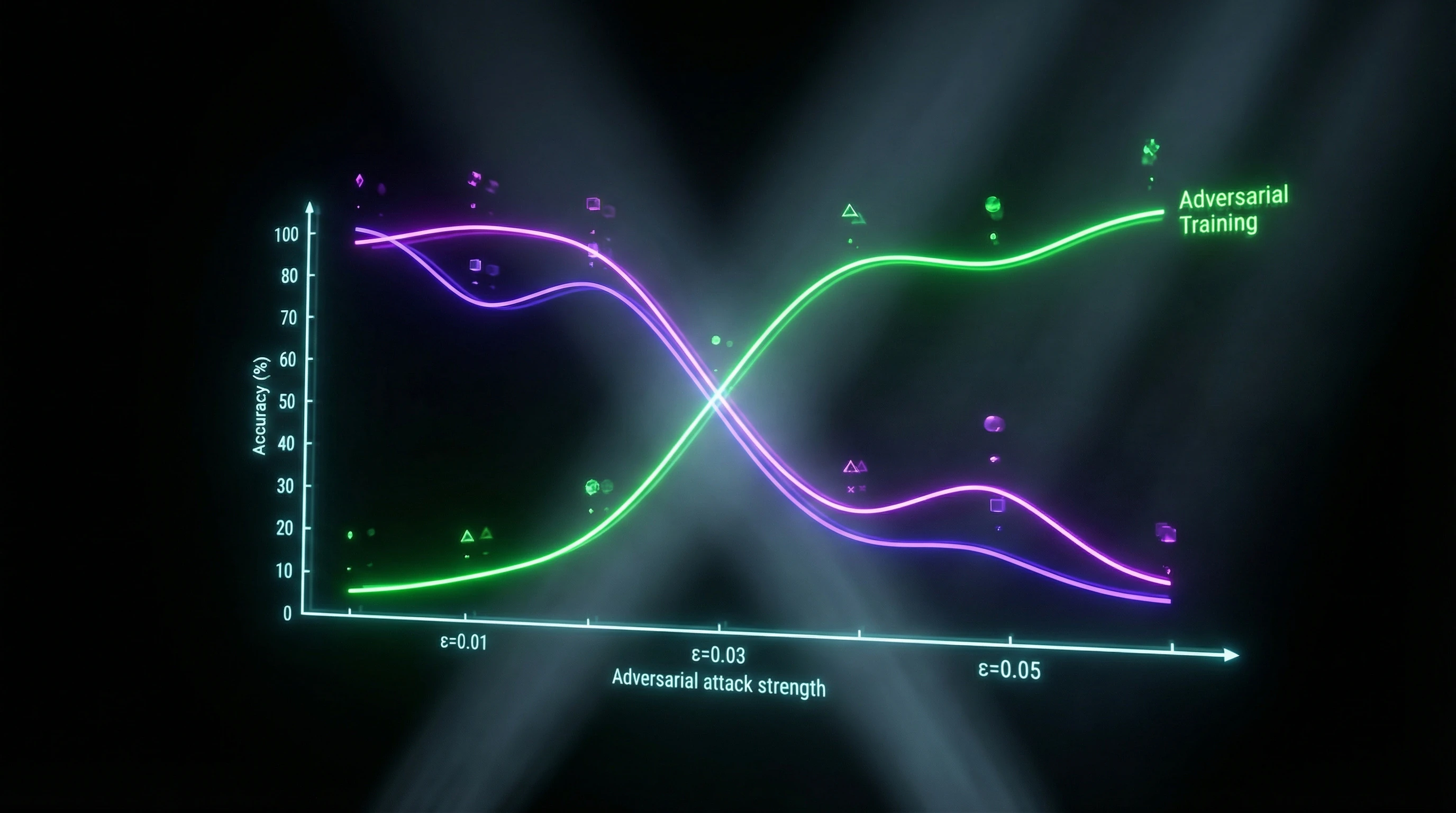

Adversarial Training and Attack Resistance

Adversarial examples — specially constructed input perturbations imperceptible to humans but causing classification errors — pose a serious threat to deepfake detectors.

| Attack Method | Accuracy Reduction | Noise Amplitude |
|---|---|---|
| FGSM (Fast Gradient Sign Method) | from 98% to 20–30% | 8/255 in RGB |
| PGD (Projected Gradient Descent) | from 98% to 20–30% | 8/255 in RGB |
| C&W (Carlini-Wagner) | from 98% to 20–30% | 8/255 in RGB |

Adversarial training — including adversarial examples in the training set — increases robustness by 25–35%, but requires a 2–3x increase in computational resources and training time.
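FGSM, the simplest attack in the table, perturbs each input component by a fixed step in the direction that increases the detector's loss. The sketch below applies it to a toy logistic-regression "detector" so that the input gradient can be written by hand; the weights, feature vector, and step size are hypothetical, and real attacks target deep networks through automatic differentiation and clip pixels to the valid range.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_fake_prob(weights, bias, x):
    """Toy linear detector: probability that feature vector x is fake."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def fgsm(weights, bias, x, label, epsilon):
    """One FGSM step: x + epsilon * sign(d loss / d x).

    For logistic regression with cross-entropy loss the input gradient
    is (p - label) * weights, so no autodiff library is needed here.
    """
    p = predict_fake_prob(weights, bias, x)
    grad = [(p - label) * w for w in weights]
    sign = [(g > 0) - (g < 0) for g in grad]
    return [xi + epsilon * s for xi, s in zip(x, sign)]
```

Adversarial training in this setting would mean generating `fgsm(...)` outputs for training samples at each step and adding them, with their true labels, to the batch.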

Certified defenses based on randomized smoothing provide provable robustness (the certificate holds with high probability) within an L2 ball of radius 0.5–1.0, but at the cost of a 5–8% reduction in baseline accuracy.
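Randomized smoothing classifies many Gaussian-noised copies of the input and takes the majority vote; for a two-class problem the certified L2 radius is then the noise level multiplied by the inverse normal CDF of a lower bound on the majority probability. The sketch below follows that commonly cited form. The function names are illustrative, and computing the confidence bound `p_lower` from the votes is left out.

```python
import random
from statistics import NormalDist


def smoothed_vote(classifier, x, sigma, n_samples=1000, seed=0):
    """Fraction of Gaussian-noised copies of x that the classifier calls fake."""
    rng = random.Random(seed)
    fake_votes = 0
    for _ in range(n_samples):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        fake_votes += 1 if classifier(noisy) else 0
    return fake_votes / n_samples


def certified_l2_radius(sigma, p_lower):
    """Radius sigma * Phi^{-1}(p_lower); zero if the majority is not certified.

    p_lower must be a lower confidence bound on the top-class probability,
    not the raw vote share.
    """
    if p_lower <= 0.5:
        return 0.0
    if p_lower >= 1.0:
        raise ValueError("p_lower must be below 1")
    return sigma * NormalDist().inv_cdf(p_lower)
```

With `sigma = 0.5`, a lower bound of about 0.84 on the majority probability certifies a radius of about 0.5, the low end of the range quoted above. Larger `sigma` certifies larger radii but blurs the input more, which is where the loss in baseline accuracy comes from.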

Transfer Learning and Model Adaptation

Transfer learning enables adapting detectors to new types of deepfakes without complete retraining from scratch. Models pretrained on ImageNet or face recognition tasks (VGGFace2, MS-Celeb-1M) contain universal features of textures, edges, and semantic structures that transfer to manipulation detection tasks.

- Fine-tuning the last 2–3 layers: requires 10–15x less labeled data (500–1,000 samples instead of 10–20k) and reduces training time from several days to 2–4 hours on a GPU.

- Few-shot learning (Siamese and Prototypical Networks): can detect new deepfake types from 5–10 examples with 75–82% accuracy.

- Domain adaptation (DANN, CORAL): minimizes the feature distribution discrepancy between source and target domains and improves accuracy by 18–22% when transferring to videos compressed by social media platforms.
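The few-shot approach with Prototypical Networks reduces, at inference time, to a nearest-prototype rule: average the embeddings of the few labeled examples per class, then assign a new sample to the closest average. The sketch below shows only that rule; the embedding network is assumed to exist already, and the two-dimensional vectors and class names are hypothetical.

```python
import math


def mean_vector(vectors):
    """Component-wise mean of equally sized vectors."""
    return [sum(col) / len(col) for col in zip(*vectors)]


def build_prototypes(support_set):
    """support_set maps a class name to a few embedding vectors."""
    return {label: mean_vector(vecs) for label, vecs in support_set.items()}


def classify(prototypes, embedding):
    """Return the class whose prototype is nearest in Euclidean distance."""
    return min(
        prototypes, key=lambda label: math.dist(prototypes[label], embedding)
    )


# Hypothetical embeddings: two examples per class stand in for the 5-10 shots.
support = {
    "real": [[0.0, 0.0], [0.2, 0.1]],
    "new_fake_type": [[1.0, 1.0], [0.9, 1.1]],
}
prototypes = build_prototypes(support)
print(classify(prototypes, [0.8, 0.9]))  # new_fake_type
```

Adding a new manipulation type then only requires a handful of embedded examples and one more prototype, with no retraining of the embedding network.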

Continual learning strategies with elastic weight consolidation prevent catastrophic forgetting during sequential training on new manipulation types, maintaining performance on old tasks at 90–93% of original levels.
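In its usual formulation, elastic weight consolidation adds a quadratic penalty that anchors the parameters that mattered for earlier tasks. With $\theta^{*}$ the parameters learned on the old manipulation types, $F_i$ the diagonal Fisher information for parameter $i$, and $\lambda$ a weighting hyperparameter (notation introduced here for illustration):

```latex
\mathcal{L}(\theta) = \mathcal{L}_{\text{new}}(\theta)
  + \sum_i \frac{\lambda}{2} \, F_i \, \bigl(\theta_i - \theta^{*}_{i}\bigr)^2
```

Parameters with large $F_i$ are expensive to move, so training on a new manipulation type mostly adjusts weights that the old tasks did not depend on.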

Blockchain and Cryptographic Content Verification: Trust Technologies in the Age of Synthetic Media

Digital Signatures and Watermarks

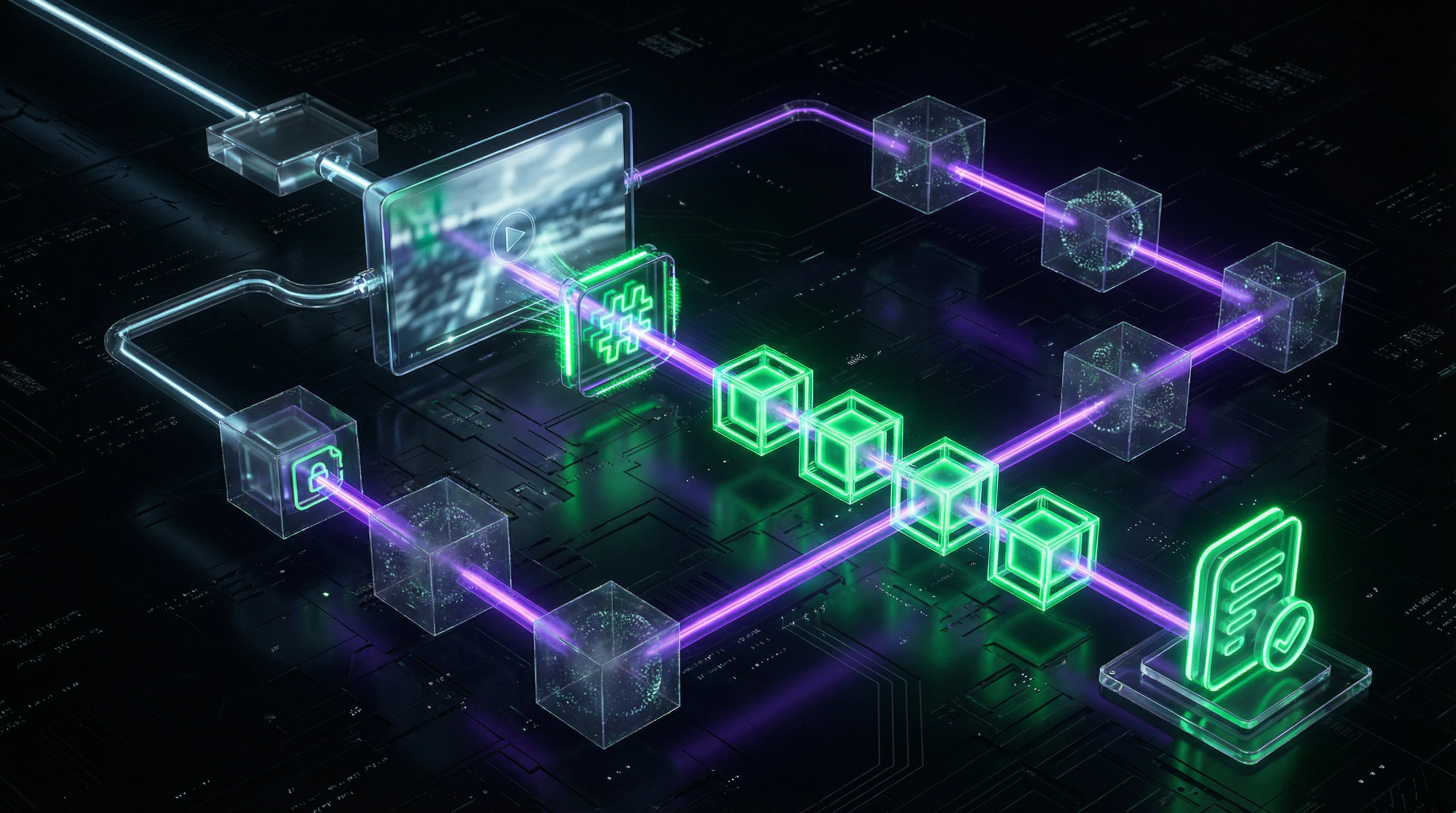

Cryptographic digital signatures provide mathematically provable authenticity and integrity of media content. The C2PA standard embeds a signed manifest in image and video metadata with provenance information: capture device, creation time, editing chain, author identifier.

The signature is created with the camera's or editor's private key and verified with a public key from a trusted certificate. Any change to pixels or metadata invalidates the signature, signaling potential manipulation. Implementation of C2PA in Sony Alpha 1 and Canon EOS R3 cameras since 2023 creates a trust infrastructure for professional photojournalism.
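The "any change invalidates the signature" property can be demonstrated with the standard library alone. The sketch below is not the C2PA format: it uses an HMAC (a shared-secret construction) as a stand-in for the certificate-based public-key signature described above, and the manifest layout and function names are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(media_bytes, metadata, key):
    """Bind metadata to the content hash and sign both.

    HMAC is a symmetric stand-in for the certificate-based signature
    that a real provenance manifest would carry.
    """
    claim = {
        "sha256": hashlib.sha256(media_bytes).hexdigest(),
        "metadata": metadata,
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(media_bytes, manifest, key):
    """True only if the signature is valid and the content hash still matches."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    return manifest["claim"]["sha256"] == hashlib.sha256(media_bytes).hexdigest()
```

Changing a single byte of the media or a single metadata field makes `verify_manifest` return `False`. In a public-key scheme the verifier would need only the signer's certificate rather than the secret key, which is what makes third-party verification possible.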

Robust watermarks — invisible patterns embedded in image pixels, resistant to compression, cropping, and filtering — complement cryptographic methods.

Google DeepMind's SynthID technology embeds the watermark during image generation, directly in the pixels, so the mark persists even after JPEG compression at 75% quality and 50% resizing. (Adjusting token probability distributions is how the SynthID variant for text works, not the image variant.)

The detector extracts the mark with 95–98% probability in the absence of attacks and 70–80% after aggressive transformations. Limitation: watermarks don't protect against targeted removal attacks, reducing detectability to 30–40% when the embedding architecture is known.

Decentralized Media Authenticity Registries

Blockchain platforms create immutable provenance logs where each transaction — media file registration, rights transfer, authenticity verification — is recorded in a distributed ledger.

The Starling Lab project uses Filecoin blockchain and IPFS to archive evidence of human rights violations: photos and videos are hashed, the hash is recorded in the blockchain with a timestamp, creating cryptographic proof of the file's existence at a specific moment. Verification takes seconds and requires no trust in a central authority.
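The hash-and-timestamp pattern described above can be sketched with an in-memory hash chain. This is a simplified stand-in for a distributed ledger: it makes no calls to Filecoin or IPFS, the record layout and function names are hypothetical, and the timestamp is simply whatever the caller supplies.

```python
import hashlib
import json


def file_digest(data):
    return hashlib.sha256(data).hexdigest()


def record_digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(ledger, data, timestamp):
    """Append a record that commits to the file hash and the previous record."""
    prev = ledger[-1]["record_hash"] if ledger else "0" * 64
    body = {"file_hash": file_digest(data), "timestamp": timestamp, "prev": prev}
    ledger.append({**body, "record_hash": record_digest(body)})


def ledger_is_intact(ledger):
    """Recompute every record hash and check the chain of prev pointers."""
    prev = "0" * 64
    for rec in ledger:
        body = {k: rec[k] for k in ("file_hash", "timestamp", "prev")}
        if rec["prev"] != prev or rec["record_hash"] != record_digest(body):
            return False
        prev = rec["record_hash"]
    return True


def existed_at(ledger, data):
    """Return the recorded timestamp for this exact file, or None."""
    digest = file_digest(data)
    return next((r["timestamp"] for r in ledger if r["file_hash"] == digest), None)
```

Only the 32-byte digest is stored, never the file itself, which is also the basis of the hybrid off-chain designs. A public blockchain adds what this sketch lacks: many independent copies of the ledger, so that no single party can rewrite it.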

- Numbers Protocol integrates NFT certificates with C2PA metadata, allowing photographers to monetize authentic images.

- Content usage tracking occurs through smart contracts in a distributed network.

- Each rights transfer or edit is registered with a cryptographic signature.

Scalability remains a challenge: recording each media file in a public blockchain costs $0.01–0.50 depending on the network and creates a 5–60 second delay.

Hybrid solutions use off-chain storage for the files themselves (IPFS, Arweave) and on-chain records only for hashes and metadata, reducing costs 100–1000 times. Consortium blockchains (Hyperledger Fabric) for corporate media archives process 1000–5000 transactions per second with finality under 1 second, but sacrifice decentralization for performance.

Social and Ethical Aspects: Laws, Education, and Balancing Innovation

Legislative Regulation of Deepfakes

Legal frameworks for deepfakes are forming in a fragmented way at the national level. In the US, the proposed federal DEEPFAKES Accountability Act (a bill reintroduced in Congress in 2023 but not enacted) would require labeling of synthetic content with watermarks and would criminalize creating deepfakes with intent to harm, with penalties of up to 5 years' imprisonment and $2,500 fines.

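California (AB 602, AB 730) prohibits non-consensual deepfake pornography and political deepfakes within 60 days of elections. The European Union's AI Act imposes transparency obligations on deepfakes, chiefly mandatory labeling of AI-generated or manipulated content; the stricter "high risk" requirements, such as audits and training data documentation, apply to systems in specified high-risk use areas rather than to deepfake generation as such.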

China (2023) mandates platforms implement deepfake detectors and block unlabeled synthetic content within 24 hours under threat of fines up to 10% of annual revenue.

Enforcement faces technical and jurisdictional barriers. Anonymity of deepfake creators through VPNs and cryptocurrencies complicates identifying violators.

Cross-border content distribution through decentralized platforms (BitTorrent, IPFS) renders blocking ineffective. Determining "intent to harm" requires proving mens rea, which is difficult in cases of satire or artistic use.

The balance between protection from abuse and freedom of expression remains subject to legal disputes: lawsuits against creators of political deepfakes are often dismissed under the First Amendment in the US.

Media Literacy and Critical Thinking

Educational programs on recognizing deepfakes increase societal resilience to manipulation. An MIT Media Lab study (2024) showed that a 15-minute training with artifact examples improved participants' ability to distinguish real from fake videos from 54% to 73% accuracy.

Long-term courses (8-12 weeks) with practice in metadata analysis, reverse image search, and source verification achieve 85-90% accuracy. Critical thinking—the habit of asking "Who created this?", "What's the purpose?", "Do independent sources confirm?"—is more effective than memorizing specific artifacts, which become outdated as generation technologies evolve.

Typical artifacts covered in such training include:

- Inconsistent lighting and shadows on the face

- Unnatural eye and eyelid movements

- Lip-speech desynchronization

- Artifacts at face-background boundaries

- Anomalies in pupil reflections

Integration of deepfake detectors into educational platforms and browser extensions democratizes access to verification. The InVID-WeVerify extension for Chrome and Firefox analyzes videos for manipulation signs, extracts EXIF metadata, and performs reverse searches of key frames across Google, Yandex, Bing databases in 10-15 seconds.

Mobile applications like Truepic Vision embed C2PA verification into smartphone cameras, signing photos at the moment of capture. Mass adoption of such tools in school curricula and corporate training creates "collective immunity" to disinformation.

Balance Between Innovation and Rights Protection

Media synthesis technologies have legitimate applications: film dubbing with lip synchronization, restoration of historical recordings, personalized education with virtual instructors, accessibility tools for people with disabilities.

Excessive regulation risks stifling innovation and creating barriers to research. The "safe harbor" model—exempting platforms from liability if they implement detectors and complaint-based removal procedures—incentivizes voluntary cooperation without suppressing technological development.

Licensing deepfake creation tools with requirements for embedded watermarks and usage logging balances accessibility and accountability.

Ethical frameworks like IEEE P7003 (Algorithmic Bias) and Partnership on AI Guidelines recommend dataset transparency, bias audits (racial, gender, age), consent mechanisms for biometric data use, and the right to explanation of detector decisions.

Stakeholder participation—technology companies, rights advocates, journalists, legislators—in multistakeholder governance bodies (Content Authenticity Initiative, AI Alliance) ensures consideration of diverse interests.

The future of deepfake detection lies not in a technological arms race, but in sociotechnical systems where technology, law, education, and ethical norms work synergistically to protect the information ecosystem.

Frequently Asked Questions

A deepfake is synthetic media content created using artificial intelligence to replace a person's face or voice. The technology is based on generative adversarial networks (GANs) and autoencoders, which are trained on thousands of images of the target face. Modern algorithms can create realistic videos in just a few hours on a standard computer.

High-quality deepfakes are difficult to detect without specialized tools, but there are telltale signs: unnatural blinking, lip-sync misalignment, artifacts at face boundaries. Pay attention to lighting, shadows, and skin texture—algorithms often make mistakes in these details. If you have any doubts, use specialized verification services.

Detection is based on machine learning: neural networks analyze compression artifacts, eye movement anomalies, lighting inconsistencies, and audio spectral patterns. Modern systems use adversarial training for resilience against new forgery methods. Blockchain technologies are also applied for cryptographic verification of original content.

It is a myth that deepfakes are always easy to spot: modern deepfakes have achieved photorealistic quality and can deceive even experts. GAN and autoencoder technologies are constantly improving, creating increasingly convincing forgeries. Quality depends on the volume of training data and computational resources available to the creator.

Use specialized deepfake detection services (Microsoft Video Authenticator, Sensity AI) or upload the video to verification platforms. Analyze file metadata, check the source and publication context. For important content, apply multiple verification methods simultaneously.

GAN (generative adversarial network) is an AI architecture consisting of two neural networks: a generator creates forgeries, while a discriminator attempts to detect them. Through this 'adversarial' process, the generator learns to create increasingly realistic images that the discriminator cannot distinguish from real ones. GANs form the foundation of most modern deepfakes.

Yes, blockchain enables the creation of immutable records of media content origin through digital signatures and timestamps. Decentralized ledgers store hashes of original files, allowing authenticity verification. News agencies and platforms are already implementing such solutions to combat disinformation.

Early deepfake algorithms poorly reproduced natural human blinking—too infrequent or unnatural in dynamics. Detectors analyze blink frequency, duration, and patterns, comparing them to biological norms. Modern deepfakes have learned to imitate blinking, so this method is combined with other techniques.

Adversarial training is a method of training detectors on constantly updated examples of deepfakes, including those specifically created to deceive the system. The model learns to recognize not only existing forgeries but also adapts to new generation techniques. This is an arms race between deepfake creators and detectors.

Synthetic speech has characteristic artifacts in the frequency spectrum that differ from natural human voice. Spectrogram analysis reveals abnormal harmonics, unnatural transitions between phonemes, and absence of micro-variations. Modern systems use deep learning to automatically detect these patterns.

It is an outdated myth that creating a deepfake requires expensive equipment or expert skills: today, a quality deepfake can be created on a gaming PC with a mid-range graphics card in just a few hours. Ready-made applications and online services exist that automate the process. The accessibility of the technology has increased significantly, which amplifies the problem of deepfake proliferation.

In the U.S., deepfakes may fall under laws concerning defamation, fraud, copyright infringement, and distribution of false information. Since 2024, penalties have been strengthened for using AI to create compromising materials. Legislation continues to evolve in response to emerging threats.

Critical thinking and source verification are the first line of defense against manipulation. Learning to recognize signs of forgery, understanding the technologies, and developing habits of information verification reduce the effectiveness of deepfakes. Media literacy is especially important in an era of widespread synthetic content.

A fully undetectable deepfake is theoretically possible, but in practice current forgeries leave micro-artifacts that specialized algorithms can detect. The race between generation and detection continues: each improvement in deepfake creation stimulates the development of detection methods. Complete indistinguishability remains, so far, out of reach.

Digital watermarks are invisible markers embedded in a media file at the creation stage, confirming its authenticity and origin. They are resistant to compression and editing, allowing verification of original content. The technology is being actively implemented by camera manufacturers and news agencies.

Yes, deepfake technology has legitimate uses: it is applied in film production for de-aging actors, in education for creating interactive historical figures, and in medicine for synthesizing speech for people with speech impairments. Deepfakes also help with content localization and personalized learning. The key question is ethical use with the consent of all parties.