🔍 Deepfake Detection

Synthetic Media: Digital Content Created by Artificial Intelligence

Images, videos, audio, and text generated or modified using machine learning and neural networks to create realistic content

Overview

Synthetic media is content that algorithms create or radically transform: images, video, voice, and text. The technology is used in medical imaging (recognizing structures during surgery), entertainment, marketing, and science, but it also opens pathways to disinformation and manipulation. The key question is how to verify authenticity when machine-generated imitations of reality exceed what human perception can reliably distinguish.


Laplace Protocol: When evaluating synthetic media, it's critically important to distinguish legitimate applications (medical diagnostics, scientific research) from potentially harmful ones (deepfakes, disinformation). Always verify the content source, look for signs of AI generation, and demand transparency regarding the methods used to create digital materials.


Synthetic Media Creation Technologies: From Adversarial Networks to Diffusion Models

Synthetic media is digital content (images, video, audio, text) created or modified by machine learning algorithms. Neural networks generate this content after training on large datasets of real examples, unlike traditional computer graphics, where each element is built by hand.

The technology has radically lowered barriers to content creation and opened new possibilities for research, commerce, and creativity.

Generative Adversarial Networks (GAN)

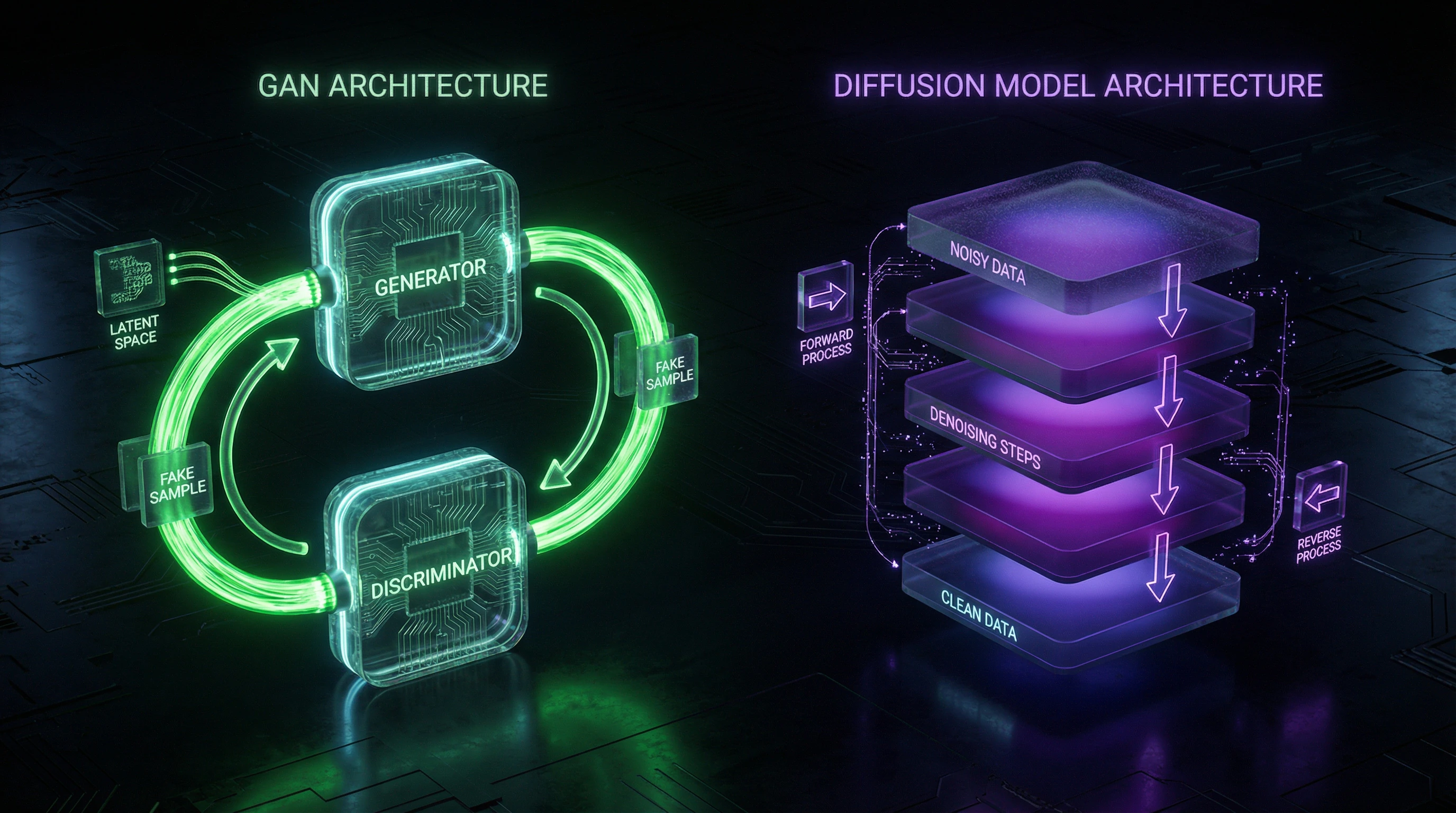

Generative adversarial networks, proposed in 2014, work through competition between two components: a generator creates synthetic samples, while a discriminator distinguishes real data from fake.

| Component | Function | Dynamics |
|---|---|---|
| Generator | Creates synthetic samples | Improves by attempting to fool the discriminator |
| Discriminator | Distinguishes real data from fake | Enhances ability to detect fakes |

The adversarial dynamics lead to the creation of highly realistic images that the human eye often cannot distinguish from photographs. The StyleGAN and BigGAN architectures have achieved a quality in generating faces and objects that surpasses the capabilities of traditional computer graphics methods.
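The competition between the two components is usually written as a minimax objective. The formulation below follows the original 2014 GAN paper; the symbols $G$, $D$, $p_{\text{data}}$, and $p_z$ are standard notation rather than anything defined in this article.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

Here $D(x)$ is the discriminator's estimated probability that $x$ is real, and $G(z)$ is the sample the generator produces from random noise $z$. The discriminator raises $V$ by scoring real samples high and generated ones low; the generator lowers $V$ by producing samples that the discriminator scores high.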

Applications of GANs range from creating training data for medical algorithms to generating photorealistic textures in the gaming industry. However, the technology has limitations: training is complex and unstable, and models tend to produce artifacts at high resolutions.

Diffusion Models and Transformers

Diffusion models, which have been widely adopted since 2020, generate an image by gradually removing noise, starting from pure random noise. During training, the model learns to reverse a data degradation process in which noise is added step by step, recovering the original content from the noisy version.

Unlike GANs, diffusion models train more stably and can generate diverse content without mode collapse.
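The noising half of this process has a simple closed form in the commonly used DDPM formulation, which makes it easy to sketch. The example below is a minimal illustration on a toy three-pixel "image", not a real model: the linear schedule, its start and end values, and all function names are assumptions, and the "denoiser" is handed the true noise where a trained network would supply a prediction.

```python
import math
import random


def linear_beta_schedule(steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, linearly spaced (assumed schedule and values)."""
    if steps < 2:
        return [beta_start] * steps
    stride = (beta_end - beta_start) / (steps - 1)
    return [beta_start + i * stride for i in range(steps)]


def cumulative_alphas(betas):
    """alpha_bar[t]: share of the original signal variance left after step t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Jump straight to step t: x_t = sqrt(a_bar) * x0 + sqrt(1 - a_bar) * noise."""
    keep = math.sqrt(alpha_bars[t])
    spread = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [keep * x + spread * n for x, n in zip(x0, noise)]
    return xt, noise


def remove_noise(xt, predicted_noise, t, alpha_bars):
    """Estimate x0 from x_t given a noise prediction (a trained network would supply it)."""
    keep = math.sqrt(alpha_bars[t])
    spread = math.sqrt(1.0 - alpha_bars[t])
    return [(x - spread * n) / keep for x, n in zip(xt, predicted_noise)]


rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
pixels = [0.1, 0.5, 0.9]  # toy "image" of three pixel values
noisy, true_noise = add_noise(pixels, 500, alpha_bars, rng)
restored = remove_noise(noisy, true_noise, 500, alpha_bars)  # perfect prediction
```

With a perfect noise prediction the original pixels are recovered exactly (up to floating-point error). In a real system the quality of the output depends entirely on how well the network predicts the noise at each step.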

- DALL-E 2, Midjourney, Stable Diffusion: use diffusion processes combined with transformer architectures to convert text descriptions into images.

- Transformers: provide an attention mechanism that allows the model to focus on relevant parts of the input data and link text prompts with visual representations.
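The attention mechanism mentioned above can be sketched in a few lines. This is a minimal, single-query version of scaled dot-product attention in plain Python; the two-dimensional embeddings and the variable names are hypothetical and stand in for the learned, high-dimensional vectors a real model would use.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return output, weights


# Hypothetical 2-d embeddings: three prompt tokens and one image-region query.
token_keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
token_values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
region_query = [4.0, 0.0]

mixed, weights = attention(region_query, token_keys, token_values)
```

The query aligns with the first and third keys, so those tokens receive most of the weight and the second token contributes very little to the mixed output. This weighting is how an image region can be tied to the parts of a prompt that are relevant to it.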

The combination of diffusion models and transformers provides unprecedented control over the generation process, allowing users to precisely specify desired content characteristics through natural language.

Diffusion models require more computational resources during generation than GANs, but provide a more predictable and controllable training process. This has made them the foundation for commercial applications where reliability is critical.

Applications of Synthetic Media in Medicine and Scientific Research

The medical field has become one of the most promising areas for synthetic media technologies, where generative models address critical issues of data scarcity, patient confidentiality, and clinical case variability. Synthetic data enables algorithm training without risk of personal information leakage, complying with strict medical legislation requirements.

AI-Assisted Surgical Visualization and Operation Planning

Generative models create three-dimensional reconstructions of anatomical structures from two-dimensional medical images, improving preoperative planning and reducing surgical intervention risks. Algorithms synthesize missing projections in computed tomography, restore details in low-quality MRI images, and generate virtual organ models for surgical simulations.

AI systems achieve parathyroid gland identification accuracy comparable to experienced surgeons—critical for preventing complications during thyroid operations. In ophthalmology, synthetic media is used to compare the effectiveness of anti-VEGF therapies for neovascular age-related macular degeneration, generating visualizations of disease progression and predicting treatment response.

- Personalized fundus models account for individual anatomical features for accurate disease progression forecasting.

- Medical history analysis determines therapeutic strategy selection based on patient data and predictive models.

- Efficacy monitoring tracks treatment response over time using synthetic visualizations.

Synthetic Data for Medical Research and Algorithm Training

The shortage of labeled medical data is the main obstacle to developing diagnostic AI systems, especially for rare diseases and atypical clinical cases. Generative models create synthetic datasets that are designed to closely match the statistics of real ones without containing records of specific patients.

Models trained on combinations of real and synthetic data demonstrate better generalization ability and robustness to variations in image quality.

In oncology, synthetic tumor images generated from real biopsies effectively supplement training samples for breast cancer subtype classification algorithms. Synthetic data is used for rare pathology augmentation, balancing imbalanced datasets, and creating controlled scenarios for algorithm validation.
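Balancing an imbalanced dataset comes down to simple arithmetic: decide on a target size per class and generate the difference. The sketch below assumes a policy of topping every class up to the size of the largest one; the function name and the label counts are hypothetical.

```python
def synthetic_quota(class_counts, target=None):
    """Number of synthetic samples to add per class so each reaches `target`.

    `target` defaults to the size of the largest class (an assumed policy).
    """
    if target is None:
        target = max(class_counts.values())
    return {label: max(0, target - count) for label, count in class_counts.items()}


# Hypothetical label counts for an imbalanced imaging dataset.
real_counts = {"benign": 900, "subtype_a": 250, "subtype_b": 40}
quota = synthetic_quota(real_counts)

# Share of the balanced dataset that would be synthetic.
total_synthetic = sum(quota.values())
synthetic_share = total_synthetic / (sum(real_counts.values()) + total_synthetic)
```

In this example more than half of the balanced dataset would be synthetic, which is a reason to evaluate the resulting model on a held-out set of real images only.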

Meta-analyses show that including synthetic samples in training sets increases diagnostic system accuracy by 8–15% compared to training solely on limited real data.

Synthetic Media in Commerce and Entertainment Industries

The commercial sector has adapted synthetic media technologies to create marketing content, personalize user experiences, and reduce production costs. Generative models enable brands to create thousands of variations of advertising materials for different audiences and platforms without expensive photo shoots.

The entertainment industry uses synthetic media to create virtual characters, digital doubles of actors, and procedurally generated game worlds.

Content Generation for Marketing and Personalized Advertising

Marketing platforms integrate generative models to automatically create visual content based on text briefs, accelerating campaign production cycles. Algorithms generate product images in various contexts, adapt style to target audience preferences, and create design variations for A/B testing.

The technology is particularly effective in e-commerce: synthetic models showcase clothing and accessories, eliminating the need for physical photo shoots and enabling instant catalog updates.

Personalization of advertising content reaches a new level through generative systems' ability to adapt visual elements to users' demographic characteristics, cultural context, and behavioral patterns.

- Scalability: unique creatives for audience microsegments. Risk: hyperpersonalization can become manipulative.

- Speed: instant catalog updates without photo shoots. Requirement: transparency about the use of AI-generated content.

Virtual Influencers and Digital Characters in Media

Virtual influencers — fully synthetic characters with detailed biographies, visual style, and personality traits — are gaining millions of followers on social networks and signing advertising contracts with major brands.

They are created through a combination of 3D modeling, generative neural networks, and animation technologies. They give brands complete control over the character's image and eliminate the reputational risks associated with real ambassadors. They don't age, don't tire, and can appear at multiple events simultaneously.

In the gaming industry and cinema, synthetic media enable the creation of photorealistic digital doubles of actors, filming scenes without performers' physical presence, or recreating images of deceased artists.

- Copyright: unclear status of rights to synthetic images and their commercial use.

- Consent for Likeness Use: ethical boundary between the right to one's own image and the ability to reproduce it without consent.

- Posthumous Use: question of the permissibility of recreating images of deceased artists and of control over their legacy.

Synthetic characters are becoming an integral part of the modern media landscape, blurring the boundaries between real and artificial in popular culture. Regulatory issues in this area are examined in the section on AI ethics and safety.

Deepfakes and the Disinformation Problem: How Technology Blurs the Boundaries of Reality

Fake Video Creation Technologies and Their Evolution

Deepfakes are synthetic media created by deep neural networks that replace faces and voices in videos with high realism. Many deepfake pipelines rely on deep generative models such as autoencoders and generative adversarial networks (GANs); in a GAN, a generator creates synthetic content, a discriminator critiques it, and both gradually improve.

Modern algorithms require only a few minutes of video footage to create a convincing fake. This has made the technology accessible not only to professionals but also to ordinary users through specialized apps and online services.

Early deepfakes gave themselves away with artifacts around the eyes and unnatural movements. Modern models have learned to correctly process facial expressions, synchronize lip movements with speech, and adapt lighting—the boundary between fake and real is disappearing.

Audio deepfakes, synthetic voice recordings that can be produced from just a few minutes of original speech, pose a particular danger. They are used for phone scams and manipulation in situations where voice is the only marker of identity.

Synthetic Content Detection Methods and Their Limitations

Detecting deepfakes is an arms race between creators of synthetic content and developers of detection systems. Modern methods analyze biological inconsistencies: unnatural blinking frequency, absence of micro-movements in facial muscles, anomalies in blood vessel pulsation.

Technical approaches include analyzing compression artifacts, metadata inconsistencies, and statistical anomalies in pixel distribution characteristic of generative models.
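One of the biological checks mentioned above, blinking frequency, can be sketched as follows. The example assumes that an upstream step (for instance a facial-landmark detector) has already produced one eye-openness value per video frame; the closed-eye threshold and the plausible blink-rate range are illustrative assumptions, not validated parameters.

```python
def count_blinks(eye_openness, closed_below=0.2):
    """Count open-to-closed transitions in a per-frame eye-openness series."""
    blinks, was_closed = 0, False
    for value in eye_openness:
        is_closed = value < closed_below
        if is_closed and not was_closed:
            blinks += 1
        was_closed = is_closed
    return blinks


def blinks_per_minute(eye_openness, fps, closed_below=0.2):
    """Blink rate normalized to one minute of video."""
    if not eye_openness:
        return 0.0
    minutes = len(eye_openness) / fps / 60.0
    return count_blinks(eye_openness, closed_below) / minutes


def is_suspicious(rate, expected_range=(8.0, 30.0)):
    """Flag rates outside an assumed plausible range; a weak signal, not proof."""
    low, high = expected_range
    return rate < low or rate > high


# Hypothetical 10-second clip at 30 fps with two short blinks.
frames = [0.3] * 300
for start in (60, 200):
    frames[start:start + 4] = [0.1] * 4
rate = blinks_per_minute(frames, fps=30)
```

A clip with no blinks at all would be flagged, while the two-blink clip above falls inside the assumed range. A single heuristic like this is easy for newer generators to defeat, so in practice it would be only one of several signals.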

| Verification Level | Method | Reliability |
|---|---|---|
| Automated (known types) | Analysis of biological markers, compression artifacts | ~95% |
| Automated (new variants) | Same methods on unknown models | ~65% |
| Human perception | Visual and auditory assessment | 50–60% (close to random guessing) |

Each improvement in detection algorithms stimulates the development of more sophisticated generative models capable of bypassing existing verification methods. Accuracy drops with the emergence of new architectures that detection developers haven't yet encountered.

The human eye and ear are becoming unreliable verification tools in the era of synthetic media. Technology is developing faster than methods for detecting it, creating a fundamental asymmetry in favor of creators of fake content.

Ethical and Legal Aspects of Synthetic Media in the Digital Age

Copyright on AI-Generated Content and Legal Uncertainty

The legal status of content created by artificial intelligence remains a subject of intense debate in the legal community. Traditional copyright assumes a human author whose creative expression is protected by law, but synthetic media is created by algorithms with minimal or no human involvement.

Different jurisdictions are developing contradictory approaches: some countries deny protection to AI-generated works, while others recognize copyright for the system operator or algorithm developer.

When AI is trained on copyrighted works and creates derivative works, a conflict of interest arises: artists and photographers file class-action lawsuits, claiming that training on their works without consent violates copyright. The legal system has not yet developed a unified approach to whether using works for AI training constitutes fair use or requires licensing and compensation.

Regulation of Synthetic Media and Labeling Requirements

Legislative initiatives to regulate synthetic media are developing in several directions—from mandatory labeling of AI-generated content to criminal liability for creating malicious deepfakes.

| Jurisdiction / Initiative | Approach | Mechanism |
|---|---|---|
| European Union (AI Act) | Mandatory labeling | Explicit indication of synthetic nature of content, especially when it could be perceived as real |
| California | Criminalization | Criminal liability for deepfakes of politicians before elections and non-consensual pornographic deepfakes |
| Technical standards | Provenance verification | Digital watermarks, cryptographic signatures, metadata at generation stage |

The Content Authenticity Initiative brings together technology companies to develop standards for digital content provenance, enabling tracking of the creation and modification history of media files.
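The idea behind signed provenance metadata can be illustrated with a small sketch. Real provenance standards rely on public-key signatures and certificate chains; the example below swaps in an HMAC with a shared secret so that it runs with the Python standard library alone. The manifest fields, function names, and key are all hypothetical.

```python
import hashlib
import hmac
import json


def build_manifest(media_bytes, generator_name, secret_key):
    """Create a provenance record bound to the exact bytes of the media file."""
    claims = {
        "sha256": hashlib.sha256(media_bytes).hexdigest(),
        "generator": generator_name,
        "synthetic": True,
    }
    payload = json.dumps(claims, sort_keys=True).encode("utf-8")
    signature = hmac.new(secret_key, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}


def verify_manifest(media_bytes, manifest, secret_key):
    """Check that the claims are untampered and still match the media bytes."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode("utf-8")
    expected = hmac.new(secret_key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    return manifest["claims"]["sha256"] == hashlib.sha256(media_bytes).hexdigest()


key = b"demo-key-not-for-production"        # hypothetical shared secret
image = b"\x89PNG...synthetic image bytes"   # placeholder content
manifest = build_manifest(image, "example-generator", key)

# Simulate someone editing the claims to hide the synthetic origin.
forged = {"claims": dict(manifest["claims"], synthetic=False),
          "signature": manifest["signature"]}
```

Verification succeeds for the untouched file and fails if either the media bytes or the claims change. It cannot help when the manifest is simply stripped from the file, which is why provenance metadata works best alongside detection and platform-level labeling.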

The effectiveness of labeling is limited by technical capabilities to remove metadata and social factors—users often ignore warnings about synthetic content or don't understand their significance. This creates a gap between technical protection and actual audience behavior.

The Future of Synthetic Media: From Multimodality to Hyperreality

Multimodal Content Generation and Technology Convergence

The next generation of synthetic media is characterized by multimodality: the ability to generate coherent content across multiple formats simultaneously. Current models can already produce text, images, and code in response to a single prompt.

Future systems will generate complete multimedia projects: videos with synchronized audio, music, and textual accompaniment. Text-to-video will evolve from short clips to feature-length films, where screenplay, visuals, voiceover, and editing are created automatically based on textual description.

Technology convergence leads to the emergence of personalized synthetic media that adapts to individual user preferences.

Advertising campaigns will generate unique video variants for each viewer. Educational platforms will create individualized learning materials, while entertainment services will offer interactive narratives where plot and characters adapt to user choices in real time.

Integration of Synthetic Media with Augmented Reality

The fusion of synthetic media with augmented and virtual reality technologies creates a new class of immersive experiences where boundaries between physical and digital blur. Future AR glasses will overlay synthetic objects and characters onto real environments with photorealistic quality.

Spatial computing combined with generative models will enable the creation of persistent virtual worlds where environments and objects are generated procedurally in response to user actions.

| Opportunities | Risks |
|---|---|
| Unprecedented possibilities for creativity and communication | Deepening of information bubbles |
| Personalized experiences | Detachment from objective reality |

Psychological research documents the phenomenon of "synthetic nostalgia"—emotional attachment to events and places that exist only as AI-generated content.

The future of synthetic media will require not only technological solutions for ensuring safety and authenticity, but also new cultural practices of critical media perception in an era when any image, sound, or video can be synthetic.

Frequently Asked Questions

Synthetic media are digital content (images, video, audio, text) created or modified using artificial intelligence and machine learning. Technologies include generative adversarial networks (GANs), diffusion models, and transformers, which enable the creation of realistic content without human involvement. They are applied in medicine, marketing, entertainment, and scientific research.

GANs consist of two neural networks: a generator that creates content, and a discriminator that evaluates its realism. They compete against each other—the generator learns to create increasingly convincing images, while the discriminator improves its ability to distinguish fakes from originals. The result is high-quality synthetic content indistinguishable from real content.

In medicine, AI creates synthetic data for training diagnostic systems, supports surgical visualization, and helps identify anatomical structures such as parathyroid glands. Synthetic images supplement real medical data while preserving patient confidentiality. AI-assisted image analysis is also used to compare the effectiveness of anti-VEGF therapies.

It is a myth that deepfake technology is only harmful. While deepfakes are indeed used to create disinformation and fake videos, the technology has legitimate applications: dubbing films, creating virtual assistants, and restoring historical recordings. The problem lies not in the technology itself but in the intentions of those using it and the lack of regulation.

Modern detection methods analyze artifacts, inconsistencies in lighting, unnatural eye movements, and facial micro-expressions. Specialized algorithms check for digital traces and patterns characteristic of AI generation. However, as technologies advance, the quality of synthetic content improves, making detection difficult even for expert systems.

Use available platforms: Midjourney, DALL-E, Stable Diffusion, or alternatives like Kandinsky. Describe the desired image with a text prompt, specifying details of composition, style, and mood. Systems generate results in seconds, allowing iterative improvement through prompt refinement.

Virtual influencers are fully synthetic characters created using AI and 3D modeling who maintain social media accounts. They promote brands, interact with audiences, and create content like real bloggers. Examples include Lil Miquela and Shudu, who have large social media followings and commercial contracts.

The legal status is ambiguous and varies by jurisdiction. In most countries, copyright requires human creativity, so fully AI-generated content may not be protected. If a human substantially participated in creation (prompt engineering, editing), rights may be recognized, but case law is still developing.

Requirements vary by country, but the trend is moving toward mandatory labeling. The EU and some U.S. states are introducing laws requiring disclosure of AI-generated content, especially in political advertising and news. Regulation is under discussion in other regions, but platforms are already implementing voluntary labels.

The claim that AI will replace creative professionals is an oversimplification. AI automates routine tasks and expands creative possibilities, but it doesn't replace human vision, conceptual thinking, and emotional intelligence. Professionals are adapting by using AI as a tool to accelerate work and experimentation, while retaining their roles as art directors and concept developers.

Use multi-factor authentication and verification through alternative communication channels when receiving unusual requests. Establish code words with colleagues for critical operations and check video calls for artifacts (unnatural movements, lip-sync issues). Train employees to recognize signs of synthetic content.

During training, diffusion models gradually add noise to an image and learn to remove it, restoring details. The reverse diffusion process then generates new images from random noise, with text descriptions steering the result. The technology delivers high quality and diversity and is used in Stable Diffusion and DALL-E.

Yes, synthetic data solves the problem of scarce real datasets, especially in medicine and confidential domains. AI generates diverse examples while preserving statistical properties of original data without revealing personal information. The method accelerates model development and reduces data collection costs.

Multimodal systems simultaneously work with multiple data types: text, images, audio, and video, creating coherent content. For example, generating video with synchronized speech and music from text descriptions. Technologies like GPT-4V and Gemini combine modalities for more natural and contextual interaction.

AI generates virtual objects and characters that overlay the real world through AR devices in real time. Applications include virtual assistants, interactive advertising, and training simulations. The combination of synthetic content and AR creates immersive hybrid environments for work, education, and entertainment.

Yes, AI automatically creates subtitles, audio descriptions, translations, and adapts content for people with disabilities. Synthetic voices narrate text, while image generation helps visualize concepts for different audiences. Technologies reduce barriers to accessing information and cultural content.