What a neural network actually is — and why the term "deep learning" is most often used inaccurately

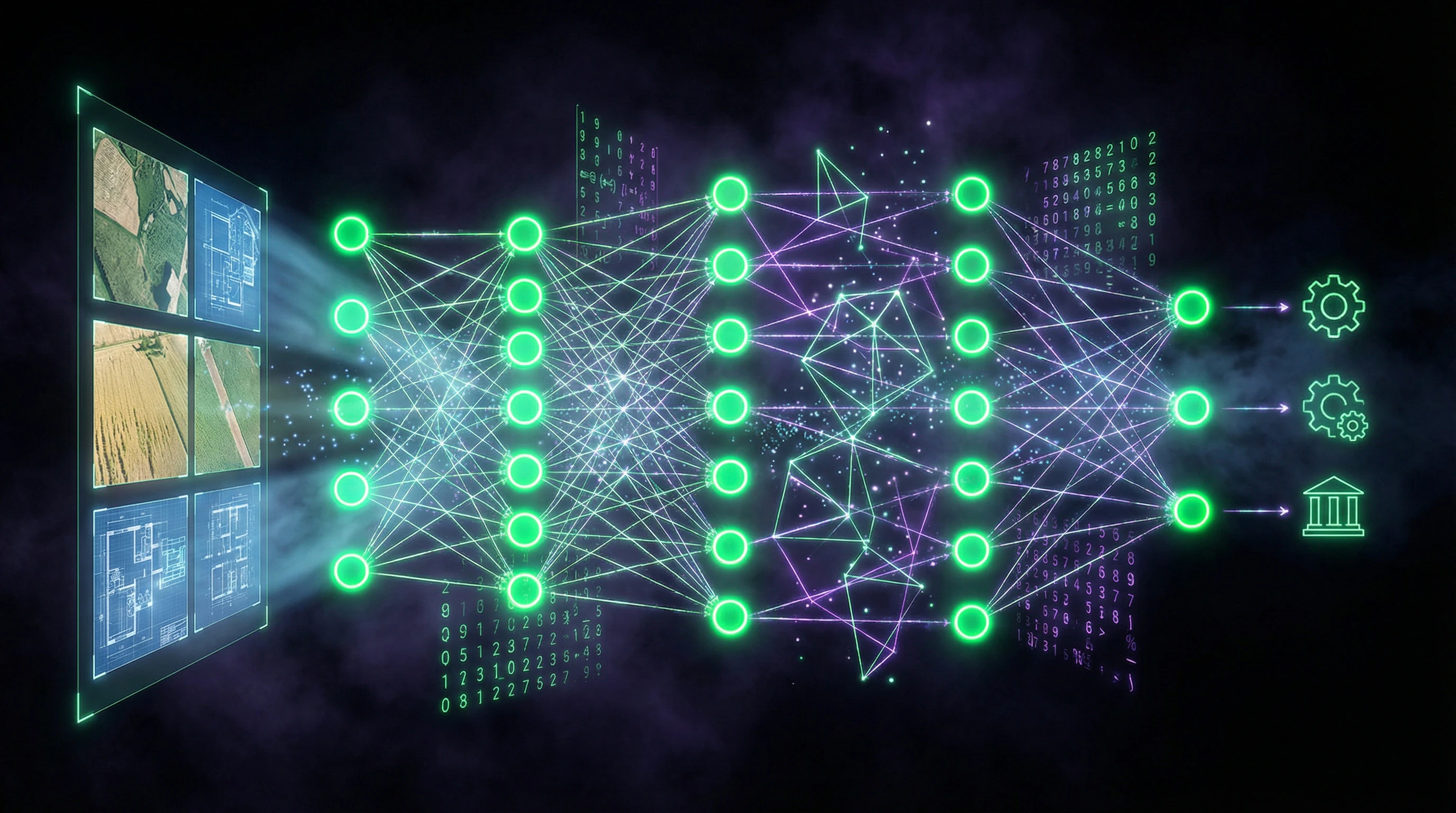

A neural network doesn't think or understand. It's a mathematical model: layers of weighted functions that transform input data into output through matrix operations and nonlinear activations. More details in the Artificial Intelligence Ethics section.

The term "neural" is a historical metaphor, referencing a simplified model of the biological neuron from the 1940s. Modern architectures have as much in common with the brain as an airplane has with a bird: the principle of inspiration exists, but the mechanism of operation is completely different (S012).

🔎 Architectural anatomy: from perceptron to transformers

The basic unit is an artificial neuron: it receives inputs, multiplies each by a weight, sums them, adds a bias, and passes the result through an activation function (sigmoid, ReLU, tanh).
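The computation of a single neuron described above can be sketched in a few lines of plain Python. The function names and the input values are illustrative assumptions, not taken from any particular library.

```python
import math

def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z: float) -> float:
    """Pass positive values through, clamp negatives to zero."""
    return max(0.0, z)

def neuron(inputs, weights, bias, activation=sigmoid):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)

# Illustrative values: 0.5*0.4 + (-1.0)*0.2 = 0.0 before the bias is added.
print(neuron([0.5, -1.0], [0.4, 0.2], bias=0.0))                    # sigmoid(0) = 0.5
print(neuron([0.5, -1.0], [0.4, 0.2], bias=-1.0, activation=relu))  # relu(-1) = 0.0
```

A layer is many such neurons sharing the same inputs, which is why the whole thing reduces to matrix operations.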

- Perceptron (single layer): solves only linearly separable problems. It is among the earliest trainable architectures, but on its own it is rarely adequate for real data.

- Multilayer perceptron (MLP): adding a hidden layer allows approximation of any continuous function on a bounded domain to arbitrary accuracy, given enough neurons (the universal approximation theorem). But the theorem says nothing about how many neurons will be required or how to train them.
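The gap between the two architectures shows up on XOR, the standard example of a problem that is not linearly separable. The sketch below uses hand-picked weights and a coarse search grid, both of which are assumptions chosen for illustration. The empty search result is consistent with the known fact that no single-layer weights solve XOR, but it is not a proof of it.

```python
from itertools import product

XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

def step(z: float) -> int:
    """Threshold activation: fire (1) when the weighted sum is positive."""
    return 1 if z > 0 else 0

def perceptron(x1, x2, w1, w2, b) -> int:
    """Single-layer perceptron with two inputs."""
    return step(w1 * x1 + w2 * x2 + b)

def xor_mlp(x1: int, x2: int) -> int:
    """Two-layer network with hand-picked weights that computes XOR."""
    h_or = step(x1 + x2 - 0.5)        # hidden unit 1 acts as OR
    h_and = step(x1 + x2 - 1.5)       # hidden unit 2 acts as AND
    return step(h_or - h_and - 0.5)   # output: OR but not AND

# Try every weight/bias combination on a grid from -2.0 to 2.0 in steps of 0.5.
grid = [i * 0.5 for i in range(-4, 5)]
solutions = [
    (w1, w2, b)
    for w1, w2, b in product(grid, repeat=3)
    if all(perceptron(x1, x2, w1, w2, b) == y for (x1, x2), y in XOR.items())
]

print(len(solutions))              # 0: no single-layer setting on the grid works
print([xor_mlp(*x) for x in XOR])  # [0, 1, 1, 0]
```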

"Deep learning" is a subclass of machine learning that uses neural networks with many hidden layers (typically more than three). The key distinction: deep networks automatically extract features from raw data, whereas traditional algorithms require manual feature engineering (S012).

In marketing materials, the term "deep learning" is often applied to any neural network, even a two-layer perceptron. This creates an illusion of technological complexity where none exists.

🧱 Boundaries of applicability: when neural networks are excessive

Critical error: the assumption that neural networks universally outperform other methods. In practice, this is not the case.

| Condition | Neural Network | Classical Methods |
|---|---|---|
| Small data volume (<1000 examples) | Overfitting, instability | Logistic regression, random forest: better |
| Clear linear dependencies | Excessive | Linear models: more efficient |
| Interpretability required | "Black box" | Gradient boosting, trees: more transparent |
| Big data + complex patterns | Optimal | Require manual feature engineering |

A study on neural network applications in agriculture (2021) analyzed 147 papers: only 23% used architectures deeper than five layers, and 41% applied neural networks to tasks where traditional computer vision methods (threshold segmentation, morphological operations) produced comparable results with significantly lower computational complexity (S012).

Tool selection is often determined not by technical requirements, but by technology trends. This is a systemic problem in the industry.

Five Strongest Arguments for Neural Networks — and Why They Only Work Under Specific Conditions

To avoid a straw man fallacy, we must examine the most compelling arguments from proponents of widespread neural network adoption. These arguments have a real foundation, but their validity is strictly limited by context of application. More details in the Synthetic Media section.

🔬 First Argument: Automatic Feature Extraction from Raw Data

Traditional machine learning algorithms require an expert to manually define which data characteristics are important for the task. For images, these might be edges, textures, color histograms; for text — word frequencies, n-grams, syntactic structures.

Deep neural networks, especially convolutional (CNN) for images and recurrent (RNN) or transformers for sequences, automatically learn to extract hierarchical features: first layers detect simple patterns (edges, corners), middle layers — pattern combinations (textures, object parts), final layers — complex concepts (whole objects, semantic relationships) (S008).

This advantage is critically important in domains where the feature space is enormous and non-obvious. Instead of manually programming rules, the network learns from labeled examples and identifies patterns that a human expert might miss.

In agriculture, neural networks are successfully applied for detecting plant diseases from leaf photographs. Research shows 94–98% accuracy for classifying 12 types of tomato diseases using ResNet-50, while traditional methods with manual features achieved only 78–85%.

📊 Second Argument: Scalability with Data Growth

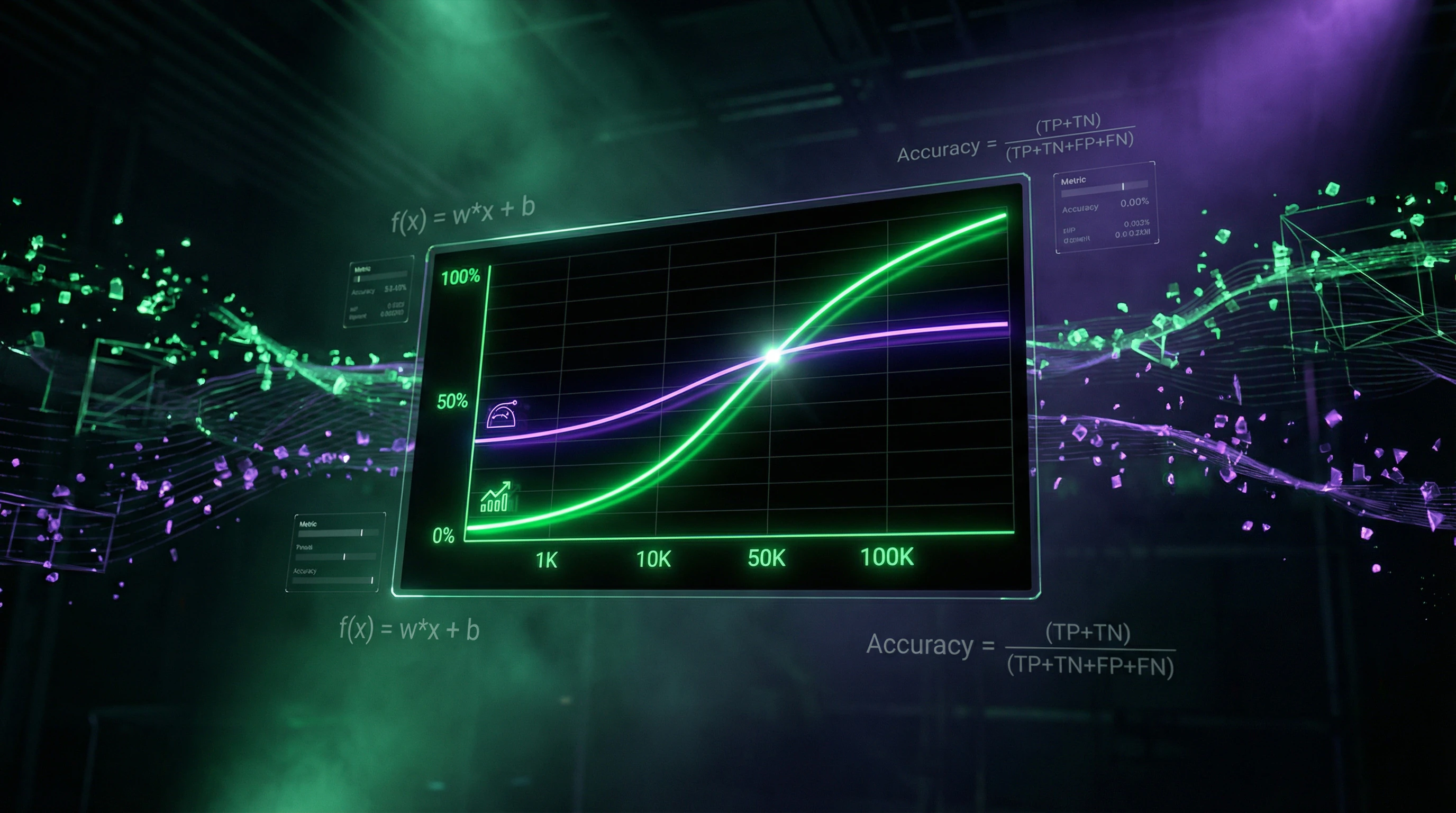

Classical algorithms often reach a performance plateau: after a certain volume of training data, additional examples don't improve model quality. Deep neural networks demonstrate power-law scaling: quality continues to improve with increasing data, albeit at a diminishing rate (S008).

This makes them the preferred choice for tasks where millions of examples are available — speech recognition (S001), machine translation, image generation.

However, this argument has a critical limitation: for most applied tasks in business and science, hundreds or thousands of examples are available, not millions. Under such conditions, neural networks are prone to overfitting: memorizing training examples instead of identifying general patterns. Regularization methods (dropout, L2 penalty, data augmentation) partially solve the problem, but don't eliminate the fundamental fact: deep networks require large data to realize their potential.
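To make "improves at a diminishing rate" concrete, here is a small sketch of a power-law error curve. The functional form `a * n ** (-b)` and the constants `a = 1.0`, `b = 0.3` are assumptions chosen only for illustration. They are not fitted to any dataset or taken from the cited sources.

```python
def power_law_error(n_examples: int, a: float = 1.0, b: float = 0.3) -> float:
    """Hypothetical test error that decays as a * n^(-b)."""
    return a * n_examples ** (-b)

sizes = [1_000, 10_000, 100_000, 1_000_000]
errors = [power_law_error(n) for n in sizes]

# Each tenfold increase in data still lowers the error, but by less than the previous one.
for n, prev, cur in zip(sizes[1:], errors, errors[1:]):
    print(f"{n:>9,} examples: error {cur:.3f} (gain {prev - cur:.3f} from 10x more data)")
```

Under these assumed constants, the absolute gain from each tenfold increase in data is roughly half of the previous gain. This is why the argument only pays off when very large datasets are available.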

🧬 Third Argument: Transfer Learning and Pretrained Models

A major development in recent years is the ability to take neural networks pretrained on massive datasets (ImageNet with 14 million images, Common Crawl with terabytes of text) and fine-tune them on specific tasks with small amounts of data.

Transfer learning works like this: lower layers of the network, which learned to recognize universal features (edges, textures, basic language patterns), are frozen, while upper layers are retrained on the target task (S008).

In real estate, this approach is applied for automatic property valuation from photographs: a model pretrained on ImageNet is fine-tuned on several thousand apartment photos with known prices and achieves a mean absolute percentage error of 8–12%, comparable to professional appraisers' estimates, but requiring seconds instead of hours.
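The freeze-then-retrain mechanism described above can be illustrated without any deep learning framework. In this toy sketch a fixed two-unit ReLU layer stands in for the pretrained lower layers and a small logistic regression stands in for the retrained upper layer. `FROZEN_W`, the dataset, and the hyperparameters are all made up for the example. A real fine-tuning setup would use a framework and a genuinely pretrained model.

```python
import math

# Stand-in for pretrained lower layers. These weights are frozen:
# nothing in the training loop below ever modifies them.
FROZEN_W = [[1.0, -1.0], [0.5, 0.5]]

def frozen_features(x):
    """Frozen lower layers: a fixed linear map followed by ReLU."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]

def head_probability(x, w, b):
    """New upper layer: logistic regression on top of the frozen features."""
    z = sum(wi * fi for wi, fi in zip(w, frozen_features(x))) + b
    return 1.0 / (1.0 + math.exp(-z))

def train_head(data, epochs=200, lr=0.5):
    """Update only the head's weights with per-example gradient steps on log loss."""
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            err = head_probability(x, w, b) - y  # gradient of log loss w.r.t. z
            f = frozen_features(x)
            w = [wi - lr * err * fi for wi, fi in zip(w, f)]
            b -= lr * err
    return w, b

def predict(x, w, b):
    """Hard 0/1 decision from the head's probability."""
    return 1 if head_probability(x, w, b) > 0.5 else 0

# Tiny made-up target task: the label is 1 when the first input exceeds the second.
data = [([2.0, 0.0], 1), ([0.0, 2.0], 0), ([3.0, 1.0], 1),
        ([1.0, 3.0], 0), ([2.5, 0.5], 1), ([0.5, 2.5], 0)]
w, b = train_head(data)
print([predict(x, w, b) for x, _ in data])
```

Only three numbers are learned here (two head weights and a bias), which is why a handful of examples suffices. The same logic explains why fine-tuning can work with thousands of examples rather than millions.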

🔁 Fourth Argument: Ability to Model Complex Nonlinear Dependencies

Many real-world processes are characterized by nonlinear, multifactorial dependencies with high-order interactions. For example, crop yield depends not simply on temperature, humidity, and light individually, but on their complex combinations: high temperature may be favorable with sufficient moisture, but devastating during drought.

Neural networks naturally model such interactions through nonlinear activations and multiple layers.

- Where the argument is overestimated: gradient boosting (XGBoost, LightGBM) also effectively models nonlinear interactions and often outperforms neural networks on tabular data with lower computational costs and better interpretability.

- Where neural networks actually win: on data with spatial (images) or temporal (sequences) structure, where their architectural features (convolutions, recurrent connections, attention mechanisms) naturally correspond to data structure.

✅ Fifth Argument: End-to-End Learning and Target Metric Optimization

Traditional systems often consist of a sequence of modules, each optimized independently: data preprocessing → feature extraction → classification → postprocessing. Errors accumulate at each stage, and optimizing one module doesn't guarantee improvement of the final result.

Neural networks allow training the entire system as a whole (end-to-end), directly optimizing the target metric (classification accuracy, translation quality, recommendation profit).

| Condition | End-to-End Advantage | Risk |
|---|---|---|
| Clearly defined quality metric | Direct optimization of target outcome | System may exploit data artifacts |
| Differentiability of all operations | Error gradient propagates to all stages | Unexpected solutions instead of solving the real task |

Example (pneumonia classification): a neural network learned to recognize not the pathology but the type of X-ray machine, because images from different hospitals correlated with diagnoses.

This approach requires additional verification: validation on independent data, analysis of which features the network uses for decisions, and convincing proof that the system solves precisely the target task, not a spurious artifact.

Evidence Base: What Works in Real Applications vs. What Stays in Presentations

The transition from theoretical arguments to empirical data reveals a significant gap between promises and results. Systematic analysis of neural network applications in two specific domains—agriculture and real estate—allows us to identify patterns of success and failure. More details in the AI Ethics and Safety section.

🧪 Agriculture: From Disease Detection to Yield Prediction

A review of 147 studies on neural networks, deep learning, and computer vision applications in agriculture from 2021 reveals the following picture: 68% of works focus on classification tasks (plant diseases, crop types, fruit ripeness), 22% on object detection (weeds, pests, individual plants), and 10% on segmentation and prediction.

| Task | Share of Studies | Average Accuracy |
|---|---|---|
| Classification | 68% | 91–96% |
| Object detection | 22% | 82–89% |
| Segmentation and prediction (including yield) | 10% | 76–84% |

Critical analysis of methodology reveals systemic problems. 73% of works use public datasets (PlantVillage, ImageNet subset) collected under controlled conditions: leaf photographs against uniform backgrounds, ideal lighting, absence of occlusions (S012).

Performance on controlled data does not transfer to real field conditions. Studies that tested models in the field report accuracy drops of 15–30 percentage points.

Only 12% of works compare neural networks with traditional computer vision methods on the same data. Of these works, 45% show that neural networks outperform traditional methods by less than 5 percentage points—a difference that may not justify the orders-of-magnitude higher computational costs (S012).

Tomato ripeness detection by color is effectively solved with simple threshold segmentation in HSV color space without the need to train a deep network. This is a typical pattern: where visual features are clear-cut, neural networks add complexity without gain.
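As a sketch of how little machinery the threshold approach needs, the following uses only Python's standard `colorsys` module. The hue, saturation, and value thresholds and the sample pixels are assumptions for illustration. Real thresholds would have to be calibrated for a specific camera and lighting setup.

```python
import colorsys

def is_ripe_red(r: int, g: int, b: int,
                max_hue_deg: float = 20.0,
                min_sat: float = 0.5,
                min_val: float = 0.3) -> bool:
    """Return True if an 8-bit RGB pixel falls in the assumed 'ripe red' HSV range."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    hue_deg = h * 360.0
    # Red wraps around the hue circle, so accept hues near 0 and near 360 degrees.
    red_hue = hue_deg <= max_hue_deg or hue_deg >= 360.0 - max_hue_deg
    return red_hue and s >= min_sat and v >= min_val

def ripe_fraction(pixels) -> float:
    """Share of pixels classified as ripe."""
    pixels = list(pixels)
    if not pixels:
        return 0.0
    return sum(is_ripe_red(*p) for p in pixels) / len(pixels)

# Two reddish and two greenish made-up pixels.
sample = [(200, 30, 30), (210, 40, 35), (60, 160, 50), (70, 170, 60)]
print(ripe_fraction(sample))  # 0.5
```

There is nothing to train and every decision can be traced to three thresholds, which is the trade-off the paragraph above describes.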

🏢 Real Estate: Property Valuation and Demand Forecasting

A systematic review of digital transformation in the real estate industry identifies three main directions for neural network applications: Automated Valuation Models (AVM), demand and price forecasting, and image analysis for property classification and description (S009).

Of 89 analyzed works, 52% use neural networks for AVM, 31% for time series price forecasting, and 17% for image analysis.

- AVM results (neural networks vs. traditional models): Mean Absolute Percentage Error (MAPE) of 8–15% for neural networks versus 10–18% for traditional models. The advantage manifests only on large samples (over 50,000 properties) and when including unstructured data (text descriptions, images) (S009).

- On small samples (fewer than 5,000 properties): gradient boosting shows comparable or better results with significantly less training time.

- Critical problem, valuation opacity: regulators and courts require explanations for why a model valued a property at a specific amount. Neural networks as "black boxes" cannot provide such explanations, limiting their application in legally significant contexts (S009).
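For reference, the MAPE figures quoted above are computed as the average of the absolute errors expressed as a share of the true price. The function below is a minimal sketch, and the prices are made up for the example.

```python
def mape(actual, predicted) -> float:
    """Mean absolute percentage error, in percent."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    total = sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted))
    return 100.0 * total / len(actual)

# Made-up sale prices and model valuations.
actual = [200_000, 400_000, 500_000]
predicted = [220_000, 360_000, 500_000]
print(round(mape(actual, predicted), 2))  # 6.67
```

Because each error is divided by the true price, a miss of 20,000 counts for more on a cheap property than on an expensive one.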

Interpretability methods (SHAP, LIME) provide only approximate explanations and do not fully solve the problem. This is a fundamental limitation, not a technical detail.

🧾 Meta-Analysis: Patterns of Success and Failure

Synthesizing data from both reviews allows us to identify conditions under which neural networks demonstrate real advantages over alternatives (S009, S012).

- Large data volumes: more than 10,000 labeled examples for classification tasks, more than 50,000 for regression. With smaller volumes, traditional methods with manual feature engineering are often more effective.

- Complex data structure: images, video, audio, text—data where spatial or temporal structure carries critical information. On tabular data, neural network advantages are minimal.

- Availability of computational resources: training deep networks requires GPUs or TPUs. For real-time tasks on edge devices (drones, mobile applications), model optimization is required (quantization, pruning, distillation), adding complexity.

- Error tolerance: in tasks where errors are not critical (recommendation systems, search result ranking), neural networks are effective. In tasks with high error costs (medical diagnosis, autonomous driving), additional validation and safety mechanisms are required.
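Of the optimization techniques named in the list above, quantization is the easiest to show in miniature. The sketch below implements one simple variant, symmetric per-tensor quantization to 8-bit integers. The weights are made up, and production toolchains use more elaborate schemes.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map the stored integers back to approximate float weights."""
    return [qi * scale for qi in q]

weights = [0.5, -1.27, 0.003, 1.0]   # made-up float weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

print(q)                       # [50, -127, 0, 100]
print(max_err <= scale / 2)    # rounding error is bounded by half a quantization step
```

The small weight 0.003 is rounded to zero. The model becomes smaller and faster, but the lost precision has to be validated against accuracy, and that extra step is the added complexity the list mentions.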

Failure conditions: small data, interpretability requirements, limited computational resources, need for rapid adaptation to changes (concept drift), tasks with clear rules and logic.

The real choice between neural networks and alternatives is not a choice between "magic" and "ordinary." It's an engineering decision dependent on specific task constraints. Marketing noise arises when these constraints are ignored.

Mechanisms and Causality: Why Neural Networks Work — and Why It's Not Magic

Understanding the mechanisms underlying neural network success is critical for separating real capabilities from mythologized perceptions. More details in the Reality Check section.

🧬 Hierarchical Feature Representation: From Pixels to Concepts

A fundamental property of deep networks is their ability to build hierarchical data representations. In convolutional networks for images, the first layer learns to detect simple patterns (edges at different angles, color gradients), the second layer combines these patterns into more complex ones (corners, arcs, simple textures), the third into even more complex ones (object parts: wheels, windows, leaves), and so on up to the final layers, which represent entire objects and scenes (S008).

This isn't magic, but a consequence of optimization: each layer learns to transform input data so that the next layer can more easily solve its subtask. Gradient descent with backpropagation automatically finds such transformations by minimizing the loss function on training data. Critically important: the network doesn't "understand" concepts—it finds statistical patterns in data that correlate with class labels.

The network learns not universal features but patterns specific to the training dataset. Mistaking those learned correlations for causal structure is the main trap that turns high accuracy into an illusion.
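The optimization loop behind all of this is mundane. The sketch below fits a single weight by gradient descent on mean squared error. With one parameter the gradient can be written out directly, so the layer-by-layer chain rule of backpropagation is not needed. The data, learning rate, and step count are assumptions for illustration.

```python
def fit_slope(xs, ys, lr=0.05, steps=50):
    """Fit y = w * x by gradient descent on mean squared error."""
    w = 0.0
    n = len(xs)
    for _ in range(steps):
        # Derivative of mean((w*x - y)^2) with respect to w.
        grad = sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n
        w -= lr * grad   # move against the gradient to reduce the loss
    return w

xs = [1.0, 2.0, 3.0]
ys = [3.0, 6.0, 9.0]   # generated with slope 3
w = fit_slope(xs, ys)
print(round(w, 6))     # 3.0
```

The loop recovers the slope because the slope minimizes the loss on these points, not because it "knows" the rule that generated them. A deep network does the same thing with millions of weights.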

🔁 Correlation vs. Causality: A Fundamental Limitation

Neural networks learn to find correlations, not causal relationships. If all cow photos in the training data are taken against grass backgrounds, the network may learn to recognize grass instead of cows—and will classify any image with grass as "cow," even if there's no cow present. This is called spurious correlation, and it's a systemic problem for all machine learning methods, not just neural networks (S004).

In agriculture, this manifests as models' inability to generalize to new conditions: a network trained to recognize tomato diseases in one region may show low accuracy in another region with different climate, plant varieties, and growing methods. The solution requires either collecting data from all target conditions or using domain adaptation methods, which themselves are an active research area without guaranteed solutions.

- Verify that the training data cover all target application conditions.

- Identify variables that correlate with the target variable but aren't causally related to it.

- Test the model on data from new conditions that weren't in training.

- Document the model's applicability boundaries and the conditions under which it may fail.
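The cow-and-grass failure, and the value of testing on new conditions, can be reproduced with a deliberately tiny learner. Everything here is a made-up toy: each image is reduced to two binary features, and the "model" simply picks the one feature that best matches the labels on the training set.

```python
# Each row: ((has_cow_shape, has_grass), label), where label 1 means "cow".
train_rows = [
    ((1, 1), 1), ((1, 1), 1), ((0, 1), 1),   # cows on grass, one partly occluded
    ((0, 0), 0), ((0, 0), 0), ((0, 0), 0),   # non-cow images without grass
]
test_rows = [
    ((1, 0), 1),   # cow on a beach
    ((0, 1), 0),   # empty meadow
    ((1, 1), 1),   # cow on grass
    ((0, 0), 0),   # city street
]

def accuracy(feature_index, rows):
    """Accuracy of the rule 'predict label = value of the chosen feature'."""
    return sum(features[feature_index] == label for features, label in rows) / len(rows)

def fit_single_feature_rule(rows):
    """Pick the feature whose value best matches the label on the given rows."""
    n_features = len(rows[0][0])
    return max(range(n_features), key=lambda i: accuracy(i, rows))

chosen = fit_single_feature_rule(train_rows)
print(chosen)                         # 1: the learner picked "has_grass"
print(accuracy(chosen, train_rows))   # 1.0
print(accuracy(chosen, test_rows))    # 0.5
```

In the training data the grass feature predicts the label better than the cow-shape feature, so an accuracy-maximizing learner prefers it. The failure only becomes visible on data collected under different conditions.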

🧷 Confounders and Hidden Variables: Why High Accuracy Can Be an Illusion

A confounder is a variable that affects both input data and the target variable, creating a spurious correlation between them. A classic medical example: a neural network for diagnosing pneumonia from X-rays showed 95% accuracy on test data, but when deployed in clinical practice, accuracy dropped to 70%. The reason: in training data, images of pneumonia patients were more often taken with portable X-ray machines (because severely ill patients couldn't come to the radiology department), and the network learned to recognize the machine type, not the pathology (S003).

In real estate, there's a similar problem: a property valuation model may learn the correlation between photo quality and price (expensive properties are photographed by professionals) and overestimate any property with professional photos, regardless of actual characteristics. Identifying and controlling confounders requires domain expertise and cannot be fully automated.

- Hidden confounder: a variable not measured in the dataset but affecting the relationship between input and output. Example: a patient's socioeconomic status affects both access to quality diagnostics and treatment outcomes, but may not be recorded in medical data.

- Detection method: compare model performance on data subgroups (by gender, age, geography, collection time). If accuracy varies significantly, a confounder is likely present.

- Why this is critical: a model may work perfectly on test data but fail in real-world application if the distribution of confounders has changed.
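The subgroup comparison from the detection method above takes only a few lines. The record format, the group names, and the 10-point gap threshold are assumptions for illustration. A large gap is a prompt to investigate, not proof of a confounder.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: iterable of (group, y_true, y_pred). Returns {group: accuracy}."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, y_true, y_pred in records:
        totals[group] += 1
        hits[group] += int(y_true == y_pred)
    return {g: hits[g] / totals[g] for g in totals}

def flag_gap(acc_by_group, max_gap=0.10):
    """True when the best and worst subgroup accuracy differ by more than max_gap."""
    values = list(acc_by_group.values())
    return max(values) - min(values) > max_gap

# Made-up predictions from two data sources.
records = [
    ("hospital_A", 1, 1), ("hospital_A", 0, 0), ("hospital_A", 1, 1), ("hospital_A", 0, 0),
    ("hospital_B", 1, 0), ("hospital_B", 0, 0), ("hospital_B", 1, 1), ("hospital_B", 0, 1),
]
acc = accuracy_by_group(records)
print(acc)             # {'hospital_A': 1.0, 'hospital_B': 0.5}
print(flag_gap(acc))   # True
```

The overall accuracy here is 75%, a figure that hides the fact that the model is no better than a coin flip on one of the two sources.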

High accuracy on a test set is not a guarantee of real-world performance. It only guarantees that the model has learned patterns in a specific dataset well, including its artifacts and biases.

Cognitive Anatomy of the Myth: Which Mental Traps Make Us Believe in "AI Magic"

The mythologization of neural networks is no accident. It's the result of four cognitive traps reinforcing one another: anthropomorphism, selective attention, social proof, and the illusion of understanding. Learn more in the Epistemology Basics section.

When a system produces human-like text, the brain automatically attributes understanding to it. This isn't a perceptual error but an evolutionarily efficient heuristic: if something talks like a human, assume it thinks like one.

- Anthropomorphism: we attribute consciousness and intention to any complex behavior

- Selective attention: we notice successes, ignore failures and edge cases

- Social proof: if everyone talks about "AI magic," it must be real

- Illusion of understanding: algorithmic complexity seems synonymous with consciousness

Selective attention amplifies the effect. When ChatGPT writes a poem, it becomes news. When it hallucinates facts or confuses logic—that stays in the shadows.

The myth of AI magic isn't sustained by facts, but by information asymmetry: we see the output, but not the mechanism. Uncertainty gets filled with mysticism.

Social proof closes the loop. Media, investors, even scientists use the language of magic—not because they believe it, but because it works. Language creates the reality of perception.

Protection from these traps is simple: test your assumptions, demand mechanism over results, and remember—complexity ≠ consciousness.