📊 Machine Learning Fundamentals

Fundamental Principles and Concepts of Machine Learning for Beginners

Explore fundamental algorithms, mathematical foundations, and practical machine learning methods that form the backbone of modern artificial intelligence and data analysis

Overview

Machine learning is the ability of a system to find patterns in data and apply them to new tasks without hard-coded rules. Mathematics here is not decoration: linear algebra describes feature spaces, probability theory handles uncertainty, and optimization searches for the best parameters. Master these three blocks, and you'll have the toolkit to understand most algorithms, from linear regression to transformers.


Laplace Protocol: Machine learning fundamentals are not just a collection of algorithms, but a systematic approach to extracting knowledge from data, requiring understanding of statistics, linear algebra, and optimization to build reliable predictive models.


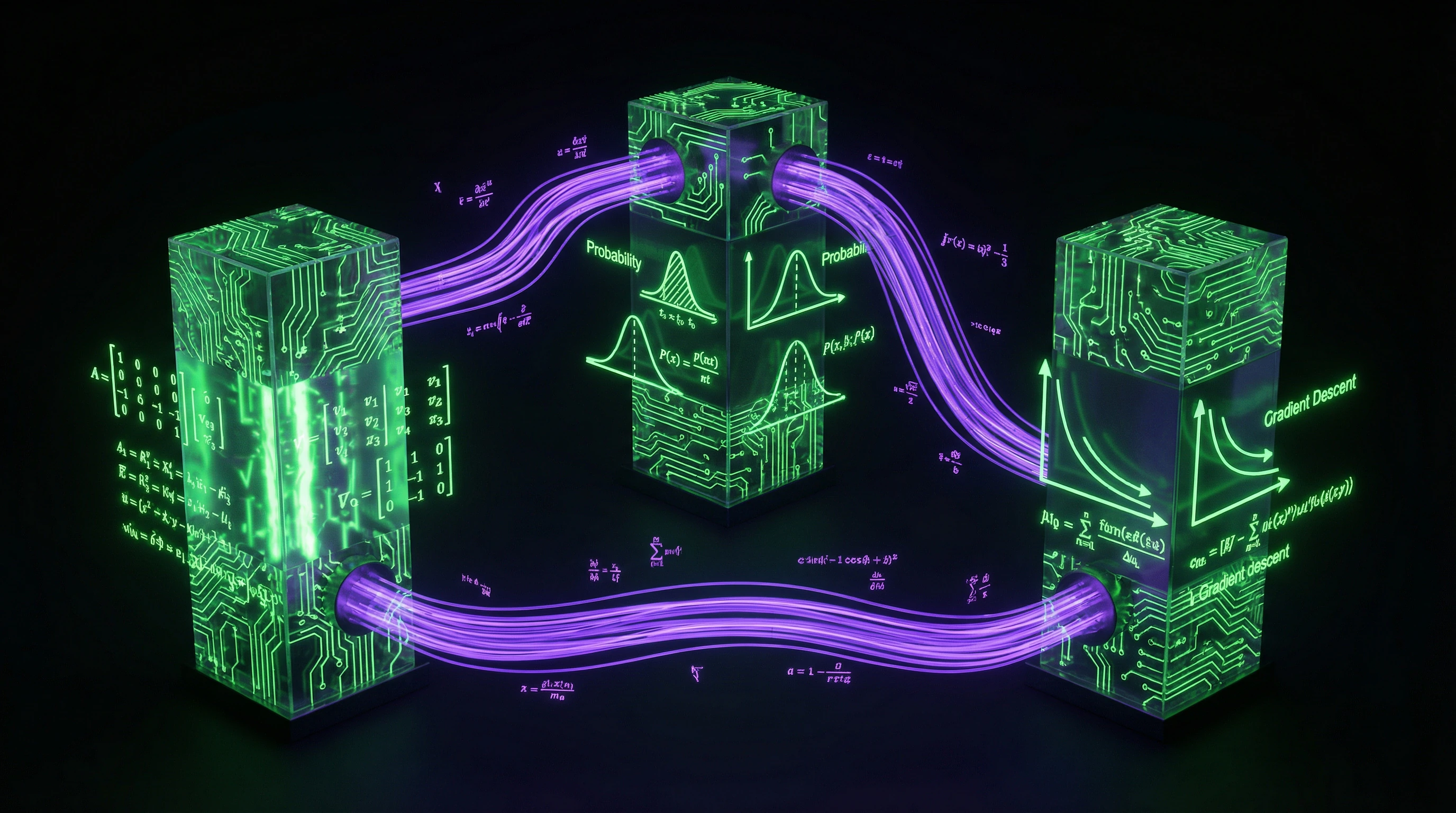

Mathematical Foundations of Machine Learning: Three Pillars Without Which Algorithms Don't Work

Machine learning is built on three mathematical disciplines. Each solves a specific task: linear algebra represents data in multidimensional structures, probability theory handles uncertainty, and optimization methods search for the best model parameters.

Without understanding these foundations, it's impossible to either create new algorithms or competently apply existing ones.

Linear Algebra and Vector Spaces

Every data object in machine learning is a vector in multidimensional space, where each dimension corresponds to one feature. Matrix operations (multiplication, transposition, eigenvectors) form the foundation of neural networks: each layer performs a linear transformation of input data followed by application of a nonlinear activation function.

- Dot product: measures similarity between vectors, which is critical for classification and clustering.

- Matrix decomposition (SVD, PCA): reduces data dimensionality and extracts the most significant features.
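
As a rough illustration of both ideas, the sketch below computes a normalized dot product (cosine similarity) and a rank-1 approximation from an SVD using NumPy. The vectors, the matrix, and the helper name `cosine_similarity` are made up for this example.

```python
import numpy as np

def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Dot product of a and b, normalized by their lengths."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

# Three toy feature vectors (illustrative values only).
u = np.array([1.0, 2.0, 3.0])
v = np.array([2.0, 4.0, 6.0])    # same direction as u
w = np.array([-3.0, 0.0, 1.0])   # orthogonal to u

sim_uv = cosine_similarity(u, v)  # close to 1: same direction
sim_uw = cosine_similarity(u, w)  # close to 0: unrelated directions

# SVD of a small data matrix: rows are objects, columns are features.
X = np.array([[1.0, 2.0], [2.0, 4.0], [3.0, 6.0]])
U, S, Vt = np.linalg.svd(X, full_matrices=False)

# Keep only the largest singular value to get a rank-1 approximation.
X_rank1 = S[0] * np.outer(U[:, 0], Vt[0])
```

Because the second column of `X` is exactly twice the first, one singular direction is enough to reconstruct it; real data is rarely this clean, so the approximation would lose some information.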

Probability Theory and Statistics

Probabilistic models underlie Bayesian methods, where each prediction is accompanied by an estimate of the model's confidence. Conditional probability, Bayes' theorem, and probability distributions are necessary for building generative models and working with incomplete data.

| Tool | Application |
|---|---|
| Statistical tests (t-test, chi-square) | Evaluating feature significance and model quality |
| Maximum likelihood | Training parametric models (logistic regression, naive Bayes classifier) |
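
Bayes' theorem can be worked through with a few lines of arithmetic. The sketch below uses a hypothetical spam filter; the function name `posterior` and all of the probabilities are assumptions chosen for illustration.

```python
def posterior(prior: float, likelihood: float, false_positive_rate: float) -> float:
    """P(class | evidence) by Bayes' theorem for a binary class."""
    # Total probability of seeing the evidence at all.
    evidence = likelihood * prior + false_positive_rate * (1.0 - prior)
    return likelihood * prior / evidence

# Hypothetical numbers: 20% of mail is spam, and a trigger word appears
# in 60% of spam and in 5% of legitimate mail.
p_spam_given_word = posterior(prior=0.2, likelihood=0.6, false_positive_rate=0.05)
print(round(p_spam_given_word, 3))  # 0.75
```

Seeing the word raises the estimated probability of spam from the 20% prior to 75%, which is the kind of confidence estimate a probabilistic model attaches to a prediction.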

Optimization Methods and Gradient Descent

Model training reduces to finding parameters that minimize the loss function on training data. Gradient descent is an iterative algorithm that moves in the direction of steepest function decrease, computing partial derivatives with respect to each parameter.

- Stochastic Gradient Descent (SGD): updates parameters after each example (or, in practice, each mini-batch), which is faster per step but noisier.

- Adam — adaptive learning rate with momentum, often converges faster.

- RMSprop — adapts learning rate for each parameter separately.

Understanding function convexity and convergence conditions is critical for choosing optimization strategy and training hyperparameters.
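
To make the update rule concrete, here is a minimal sketch of plain (full-batch) gradient descent fitting a one-feature linear model by minimizing mean squared error. The data, learning rate, and step count are illustrative assumptions, not recommended settings.

```python
def gradient_descent(xs, ys, lr=0.05, steps=2000):
    """Fit y = w * x + b by minimizing mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Partial derivatives of the MSE with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient, the direction of steepest decrease.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated from y = 2x + 1
w, b = gradient_descent(xs, ys)
```

This loss is convex, so the iterates approach the single global minimum (w near 2, b near 1). With a learning rate that is too large the same loop would diverge, which is why convergence conditions matter when choosing hyperparameters.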

Types of Tasks and Learning Algorithms: Choosing the Right Approach

Machine learning is divided by data type and task nature. Supervised learning uses labeled data — for each example, the correct answer is known. Unsupervised learning works with unlabeled data and searches for hidden patterns.

The choice of approach depends not only on the availability of labels, but also on the business task, data volume, and interpretability requirements.

Supervised Learning: Classification and Regression

Classification predicts a discrete label — spam or not spam, cat or dog. Regression predicts a continuous number — price, temperature, sales volume.

| Task Type | Algorithm Examples | Quality Metrics |
|---|---|---|
| Classification | Logistic regression, decision trees, SVM, neural networks | Accuracy, precision, recall, F1-score |
| Regression | Linear regression, polynomial models, gradient boosting | MSE, RMSE, MAE |

Each algorithm has its advantages depending on data size and the complexity of the boundary between classes or the nature of value distribution.

Unsupervised Learning: Clustering and Dimensionality Reduction

Clustering groups objects by similarity without predefined categories — segments customers, detects anomalies, organizes large data arrays. K-means, hierarchical clustering, and DBSCAN differ in how they define similarity and cluster shape.

Dimensionality reduction methods — PCA, t-SNE, UMAP — project data from high-dimensional space into lower-dimensional space, preserving important information and enabling structure visualization.

These techniques are critically important for working with data containing hundreds or thousands of features, where direct analysis is impossible.
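
A short sketch of both unsupervised techniques with scikit-learn follows. The two synthetic blobs are made-up data, and the choice of two clusters and two components is an assumption that happens to suit this toy example.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)

# Two synthetic, well-separated groups in 5 dimensions (no labels used).
blob_a = rng.normal(loc=0.0, scale=0.5, size=(50, 5))
blob_b = rng.normal(loc=5.0, scale=0.5, size=(50, 5))
X = np.vstack([blob_a, blob_b])

# Clustering: assign each object to one of two groups by similarity.
labels = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(X)

# Dimensionality reduction: project 5 features onto 2 principal components.
pca = PCA(n_components=2)
X_2d = pca.fit_transform(X)
explained = pca.explained_variance_ratio_.sum()
```

`X_2d` can be passed to a scatter plot to inspect structure visually, and `explained` reports how much of the original variance the two components retain.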

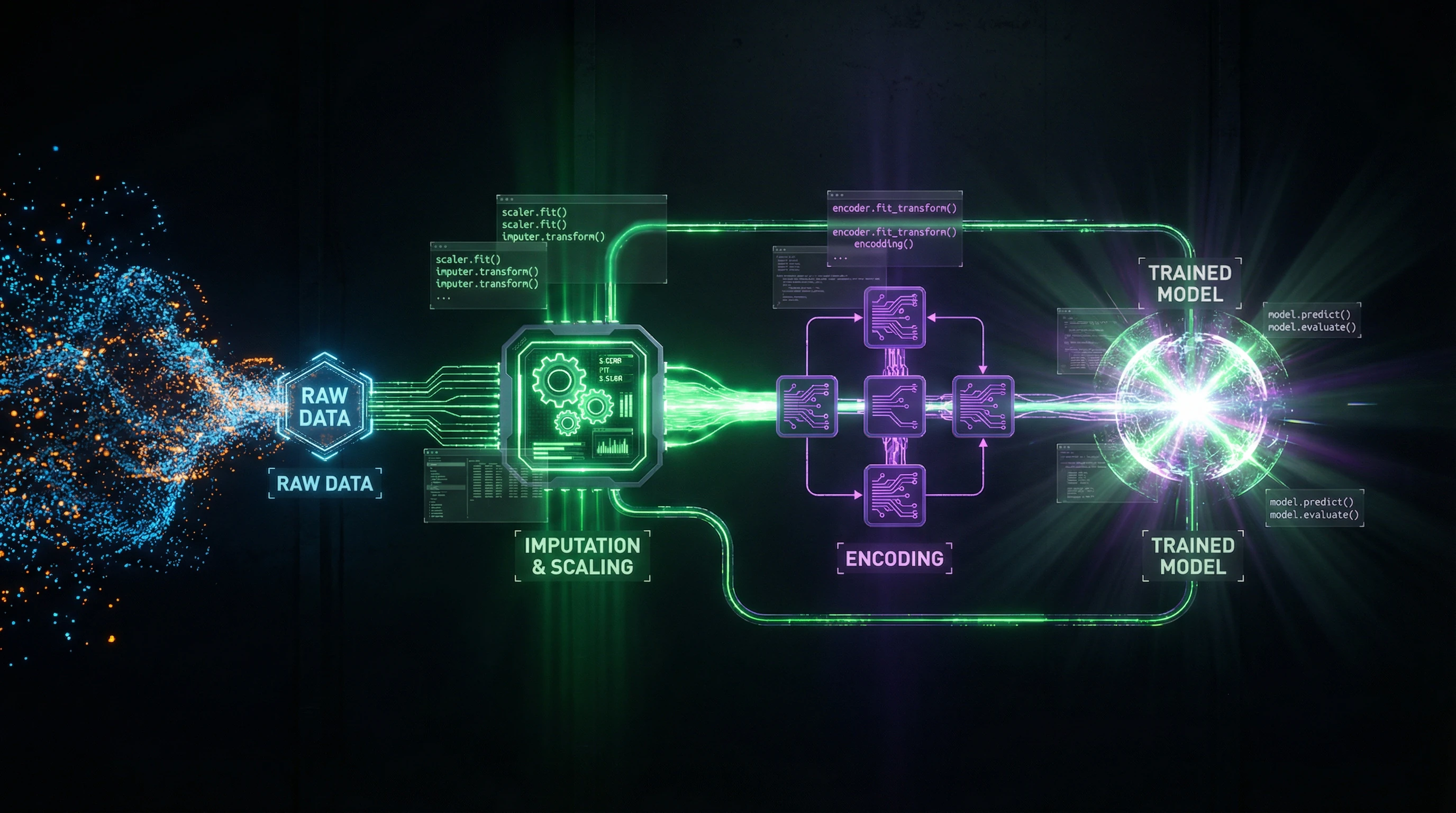

Data Preparation and Processing: 80% of the Work That Determines Model Success

Data quality directly determines the quality of the trained model — even the most sophisticated algorithm cannot extract useful patterns from noisy, incomplete, or improperly prepared data. This work takes up most of the time in real machine learning projects, but it's what determines whether the model will work in production.

Data Cleaning and Normalization

Real data contains missing values, duplicates, outliers, and input errors that must be handled before training begins. Strategies for dealing with missing values include removing rows, filling with mean or median values, or using more sophisticated imputation methods based on other features.

| Normalization Method | Formula/Range | When to Use |
|---|---|---|
| Min-Max scaling | [0, 1] | When a fixed range is needed |
| Standardization (Z-score) | Mean 0, variance 1 | For scale-sensitive algorithms (gradient descent, KNN) |
| Logarithmic transformation | log(x), for x > 0 | For power-law distributions and large value ranges |

Handling outliers requires balancing the removal of anomalous values with preserving rare but important cases.
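
The three transformations in the table can be sketched with the standard library alone. The income values below are invented, with one deliberate outlier, and the helper names are assumptions for this example.

```python
import math
from statistics import mean, pstdev

def min_max_scale(values):
    """Rescale values linearly so the minimum maps to 0 and the maximum to 1."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Shift to mean 0 and scale to unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

incomes = [20_000.0, 35_000.0, 50_000.0, 1_000_000.0]  # made-up data

scaled = min_max_scale(incomes)
z_scores = standardize(incomes)
logged = [math.log(v) for v in incomes]  # requires strictly positive values
```

Note how the single outlier squeezes the first three min-max values close to 0, while the logarithm keeps them distinguishable; this is one reason outlier handling and the choice of scaling interact.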

Feature Extraction and Engineering

Feature engineering is the process of creating new informative features from existing data based on domain knowledge and data analysis. Good feature engineering often improves a model more than switching to a more complex algorithm.

For time series, this may be extracting trends, seasonality, and lags; for text — creating n-grams, TF-IDF weights, or embeddings; for images — extracting textures, edges, and shapes.

Feature transformations include polynomial features to capture nonlinear dependencies and one-hot encoding for categorical variables.
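
A few of these transformations are small enough to write by hand. The sketch below is a simplified, library-free illustration; the helper names and sample values are assumptions, and in practice tools such as scikit-learn's encoders would usually be used instead.

```python
from datetime import date

def one_hot(value, categories):
    """Encode a categorical value as a 0/1 vector over known categories."""
    return [1 if value == c else 0 for c in categories]

def polynomial_features(x1, x2):
    """Degree-2 expansion of two features: originals, squares, and product."""
    return [x1, x2, x1 * x1, x1 * x2, x2 * x2]

def lag_features(series, lag):
    """Pair each value with the value `lag` steps earlier (time series)."""
    return [(series[i - lag], series[i]) for i in range(lag, len(series))]

colors = ["red", "green", "blue"]
encoded = one_hot("green", colors)
poly = polynomial_features(2.0, 3.0)
lags = lag_features([10, 12, 15, 11], lag=1)
weekday = date(2024, 1, 1).weekday()  # calendar feature; Monday is 0
```

Each helper turns raw input into columns a linear model can use directly, for example letting it capture an interaction through the `x1 * x2` term.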

Data Splitting and Cross-Validation

Proper splitting of data into training, validation, and test sets prevents overfitting and provides an honest assessment of model quality on new data.

- Standard ratio: 60–70% for training, 15–20% for validation, 15–20% for final testing (performed once)

- Cross-validation splits data into k parts and trains k models, each time using different parts for training and validation

- For time series, a special strategy is used that preserves temporal order

- For imbalanced classes, stratified splitting is applied, preserving class proportions across all sets

Cross-validation provides a more reliable quality assessment with limited data volume.
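
The splitting strategy described above might look like this with scikit-learn. The Iris dataset, the 80/20 split, and the logistic regression model are assumptions picked only to keep the example small.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score, train_test_split

X, y = load_iris(return_X_y=True)

# Hold out a final test set once; stratify keeps class proportions equal.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# 5-fold stratified cross-validation on the training portion only.
model = LogisticRegression(max_iter=1000)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(model, X_train, y_train, cv=cv)

# Fit on all training data, then evaluate once on the untouched test set.
model.fit(X_train, y_train)
test_accuracy = model.score(X_test, y_test)
```

Here cross-validation plays the role of the validation set. For time series, `TimeSeriesSplit` from the same module is one way to keep the temporal order intact.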

Core Machine Learning Algorithms: From Regression to Ensembles

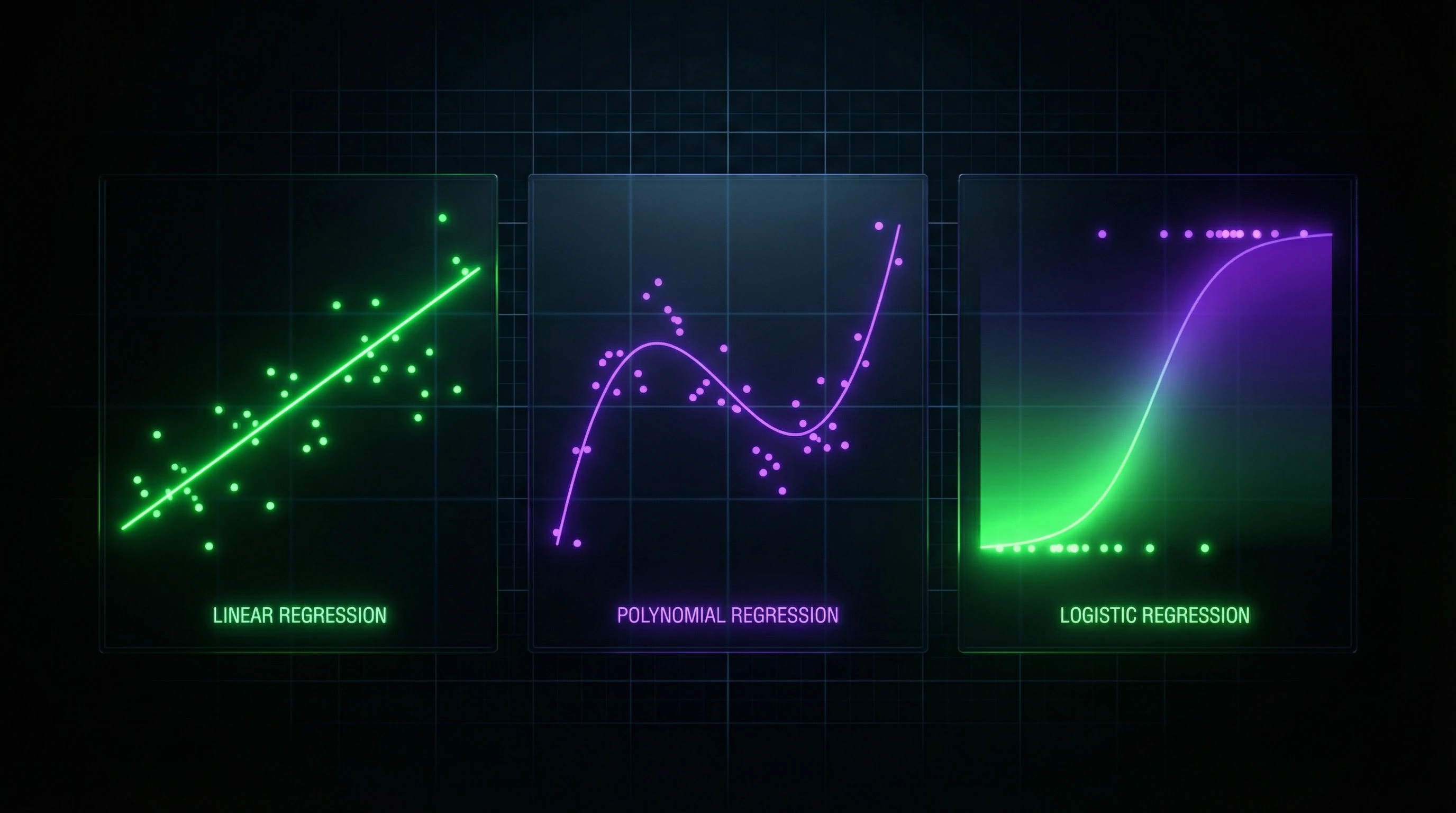

Linear and Logistic Regression

Linear regression models the target variable as a weighted sum of features plus an intercept term, choosing the weights that minimize the mean squared error between predictions and actual values.

The least squares method finds the optimal weights analytically through the pseudoinverse matrix, which makes training fast but assumes a linear relationship between features and target. Logistic regression applies a sigmoid function to a linear combination of features, transforming the result into a probability of class membership.

Despite its name, logistic regression solves classification problems rather than regression, and is particularly effective for binary classification when the classes are approximately linearly separable.

Regularization adds a penalty for large weight values to the loss function. L1 regularization (Lasso) uses the sum of absolute weight values and leads to sparse solutions with zero coefficients, effectively performing feature selection.

L2 regularization (Ridge) uses the sum of squared weights and shrinks all coefficients toward zero without setting any of them exactly to zero. Elastic Net combines both approaches, balancing between feature selection and solution stability through two hyperparameters.

- Polynomial regression: extends the linear model by creating new features as powers and products of the original features. It allows modeling nonlinear relationships but dramatically increases overfitting risk at high degrees.

- Feature standardization: critically important for regularized models, since weight penalties must be comparable in scale.
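
The analytical least-squares solution and the effect of an L2 penalty can be sketched in NumPy. The data is synthetic and noiseless, and `alpha = 10.0` is an arbitrary penalty strength chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
true_w = np.array([2.0, -1.0, 0.5])
y = X @ true_w + 4.0  # noiseless target with intercept 4

# Append a column of ones so the intercept is learned as a weight.
X_b = np.hstack([X, np.ones((100, 1))])

# Ordinary least squares through the pseudoinverse.
w_ols = np.linalg.pinv(X_b) @ y

# Ridge (L2) closed form: solve (X^T X + alpha * I) w = X^T y.
alpha = 10.0
penalty = alpha * np.eye(4)
penalty[-1, -1] = 0.0  # leave the intercept unpenalized
w_ridge = np.linalg.solve(X_b.T @ X_b + penalty, X_b.T @ y)
```

Without noise, `w_ols` recovers the generating weights, while `w_ridge` has feature weights of smaller norm. An L1 penalty has no closed form like this and is usually fitted iteratively, for example with scikit-learn's `Lasso`.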

Decision Trees and Random Forests

A decision tree recursively partitions the feature space into rectangular regions, choosing at each step the feature and threshold that maximally reduce uncertainty in child nodes, measured through entropy for classification or variance for regression.

The algorithm greedily builds the tree top-down, stopping when it reaches the maximum depth, the minimum number of samples in a leaf, or a point where further splitting doesn't improve quality. Trees are interpretable, can in principle work with categorical features without encoding (although some implementations, including scikit-learn's, expect numeric input), and automatically perform feature selection.

Trees are prone to overfitting and instability — small changes in data can radically alter tree structure.
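
The split criterion can be illustrated for a single node. This is a simplified sketch of entropy-based threshold selection on one numeric feature, not a full tree builder; the data and function names are assumptions.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy in bits of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def information_gain(values, labels, threshold):
    """Entropy reduction from splitting on values <= threshold."""
    left = [l for v, l in zip(values, labels) if v <= threshold]
    right = [l for v, l in zip(values, labels) if v > threshold]
    if not left or not right:
        return 0.0  # a split that leaves one side empty separates nothing
    n = len(labels)
    children = len(left) / n * entropy(left) + len(right) / n * entropy(right)
    return entropy(labels) - children

def best_threshold(values, labels):
    """Greedy choice: try each observed value and keep the best gain."""
    return max(sorted(set(values)), key=lambda t: information_gain(values, labels, t))

ages = [22, 25, 30, 41, 47, 52]
bought = ["no", "no", "no", "yes", "yes", "yes"]
split = best_threshold(ages, bought)
gain = information_gain(ages, bought, split)
```

A real tree repeats this search over every feature at every node and then recurses into the two child regions until a stopping rule applies.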

Random forest trains multiple trees on random subsamples of data (bagging) with additional randomization through selecting a random subset of features at each node. Final predictions are obtained by averaging for regression or voting for classification.

This strategy substantially reduces overfitting and prediction variance while maintaining the low bias of trees, making random forests one of the most reliable general-purpose algorithms.

Gradient boosting builds trees sequentially, with each subsequent tree correcting the errors of the previous ones by training on gradients of the loss function. Gradient boosting:

- Often achieves the highest accuracy among tree-based methods on tabular data

- Requires careful tuning of learning rate and number of trees

- Needs protection from overfitting through early stopping
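
One way to compare a single tree, a random forest, and gradient boosting is to cross-validate all three on the same data. The dataset and every hyperparameter value below are assumptions for illustration; `n_iter_no_change` enables scikit-learn's built-in early stopping for boosting.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)

models = {
    "single tree": DecisionTreeClassifier(random_state=0),
    "random forest": RandomForestClassifier(n_estimators=100, random_state=0),
    "gradient boosting": GradientBoostingClassifier(
        n_estimators=200,
        learning_rate=0.1,
        n_iter_no_change=10,      # stop when validation loss stops improving
        validation_fraction=0.1,  # share of training data held out for that check
        random_state=0,
    ),
}

# Mean 5-fold cross-validated accuracy for each model.
results = {
    name: cross_val_score(model, X, y, cv=5).mean()
    for name, model in models.items()
}
```

The ranking in `results` depends on the dataset and settings, so it is worth rerunning such a comparison on your own data rather than assuming one method always wins.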

Support Vector Machines

SVM seeks a hyperplane that maximizes the margin — the distance to the nearest objects of different classes, called support vectors. This ensures better generalization ability compared to simple class separation.

For linearly non-separable data, a slack variable is introduced, allowing some objects to violate the margin with a controlled penalty through parameter C, balancing between margin width and number of errors.

The kernel trick implicitly maps data to a high-dimensional space through kernel functions, where classes become linearly separable, without explicitly computing coordinates in the new space.

The RBF kernel creates an infinite-dimensional feature space and is particularly effective for complex nonlinear boundaries, but requires tuning the gamma parameter, which controls the radius of influence of each object.
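
The effect of the kernel trick is easiest to see on data that no straight line can separate. The sketch below uses scikit-learn's `SVC` on synthetic concentric rings; the values of `C` and `gamma` are assumptions, and the scores are training accuracy, used here only to show separability.

```python
from sklearn.datasets import make_circles
from sklearn.svm import SVC

# Two concentric rings: not separable by any straight line.
X, y = make_circles(n_samples=200, factor=0.3, noise=0.05, random_state=0)

linear_svm = SVC(kernel="linear", C=1.0).fit(X, y)
rbf_svm = SVC(kernel="rbf", C=1.0, gamma=1.0).fit(X, y)

linear_acc = linear_svm.score(X, y)  # accuracy on the training data
rbf_acc = rbf_svm.score(X, y)

# Only the support vectors define the decision boundary.
n_support = rbf_svm.support_vectors_.shape[0]
```

Raising `gamma` shrinks each point's radius of influence and makes the boundary more flexible, which can overfit; in practice `C` and `gamma` are tuned together with cross-validation.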

Evaluating Model Quality: Metrics and Validation Strategies

Classification and Regression Metrics

For classification, accuracy measures the proportion of correct predictions, but it is misleading with imbalanced classes: a model that predicts only the majority class achieves high accuracy without any real utility.

Precision is the proportion of true positives among all predicted positives, which matters most when false positives are costly. Recall is the proportion of actual positives that the model finds, which matters most when misses are dangerous. The F1-score is their harmonic mean and balances both metrics.

| Metric | What It Measures | When to Use |
|---|---|---|
| ROC-AUC | Ability to rank positives above negatives across all thresholds (0.5 = random, 1.0 = ideal) | When the classification threshold is not known in advance |
| MAE | Average absolute deviation in original units; less sensitive to outliers than MSE | Regression where errors must be easy to interpret |
| MSE / RMSE | Penalizes large errors quadratically; RMSE returns to the original scale | Regression where large errors are especially costly |
| R² | Proportion of explained variance (1 = ideal, 0 = no better than predicting the mean, <0 = worse than predicting the mean) | Regression, model comparison |
| MAPE | Error as a percentage of actual values | When relative error is easier to interpret; undefined when actual values are zero |
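
The definitions above reduce to simple counting and averaging. The following sketch computes them by hand on made-up predictions to show why accuracy alone can mislead on imbalanced classes; the function names are assumptions for this example.

```python
import math

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

# Imbalanced toy labels: 8 negatives, 2 positives.
y_true = [0] * 8 + [1] * 2
always_negative = classification_metrics(y_true, [0] * 10)
some_positives = classification_metrics(y_true, [0] * 7 + [1] + [1, 0])

reg_mae = mae([3.0, 5.0, 7.0], [2.0, 5.0, 10.0])
reg_rmse = rmse([3.0, 5.0, 7.0], [2.0, 5.0, 10.0])
```

Both classifiers reach 0.8 accuracy, yet the first never finds a positive (recall 0.0) while the second finds half of them. In the regression example the single large error pulls RMSE above MAE.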

Overfitting and Underfitting

Overfitting occurs when a model memorizes noise and random patterns in the training set instead of true dependencies. Result: excellent performance on train, poor on test — a sign of high variance.

Underfitting manifests in low quality on both sets due to insufficient model complexity. The model cannot capture true patterns — a problem of high bias.

Learning curves (metrics on train and validation as a function of sample size) provide diagnosis: with overfitting, curves diverge with a large gap; with underfitting, both remain at low levels; with optimal complexity, they converge at high quality.
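
A related diagnostic can be sketched by varying model complexity instead of sample size. The example below fits polynomials of increasing degree to a small noisy sample and records training and validation error; the sine target, noise level, and degrees are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def noisy_target(x):
    """Synthetic ground truth: one sine period plus Gaussian noise."""
    return np.sin(2 * np.pi * x) + rng.normal(scale=0.2, size=x.shape)

x_train = np.linspace(0.0, 1.0, 15)
x_val = np.linspace(0.03, 0.97, 15)
y_train = noisy_target(x_train)
y_val = noisy_target(x_val)

def mse(coeffs, x, y):
    return float(np.mean((np.polyval(coeffs, x) - y) ** 2))

# For each degree, store (training error, validation error).
errors = {}
for degree in (1, 3, 9):
    coeffs = np.polyfit(x_train, y_train, degree)
    errors[degree] = (mse(coeffs, x_train, y_train), mse(coeffs, x_val, y_val))
```

Training error can only fall as the degree grows, so it says little on its own. The signal to watch is the gap between the two numbers: high error on both sets points to underfitting, and a low training error with a much higher validation error points to overfitting.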

Regularization and Hyperparameter Selection

Regularization controls model complexity through penalties in the loss function (L1/L2), constraints on tree depth, dropout in neural networks, or early stopping when monitoring validation.

- Grid search: enumerates all combinations of hyperparameters from a defined grid. It is guaranteed to find the best combination on that grid, but the number of training runs grows exponentially with the number of hyperparameters.

- Random search: randomly samples combinations from distributions, often finding good solutions faster than grid search, especially when some parameters are more important than others.

- Bayesian optimization: builds a probabilistic model of quality dependence on hyperparameters and iteratively selects the next point, balancing exploration and exploitation, minimizing the number of costly training runs.
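
Grid search and random search share one interface in scikit-learn. This sketch tunes a k-NN classifier on Iris; the grid values and `n_iter=4` are assumptions chosen to keep the run short. Bayesian optimization is not part of scikit-learn and is omitted here.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV
from sklearn.neighbors import KNeighborsClassifier

X, y = load_iris(return_X_y=True)

param_grid = {
    "n_neighbors": [1, 3, 5, 7, 9],
    "weights": ["uniform", "distance"],
}

# Grid search: evaluates all 5 * 2 = 10 combinations with 5-fold CV.
grid = GridSearchCV(KNeighborsClassifier(), param_grid, cv=5).fit(X, y)
n_grid_candidates = len(grid.cv_results_["params"])

# Random search: evaluates only 4 sampled combinations from the same space.
random_search = RandomizedSearchCV(
    KNeighborsClassifier(), param_grid, n_iter=4, cv=5, random_state=0
).fit(X, y)
n_random_candidates = len(random_search.cv_results_["params"])
```

`grid.best_params_` and `random_search.best_params_` hold the winning settings. Adding a third hyperparameter with five values would raise the grid to 50 combinations, while the random search budget would stay at whatever `n_iter` specifies.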

Practical Applications and Machine Learning Tools

Python Libraries for ML: scikit-learn, pandas, numpy

NumPy provides multidimensional arrays and vectorized operations that execute orders of magnitude faster than Python loops thanks to C implementation. Pandas builds on top of NumPy with DataFrame structures for tabular data with named columns, indexes, and tools for filtering, grouping, and merging.

Scikit-learn implements dozens of machine learning algorithms with a uniform API: supervised models expose fit() for training and predict() for predictions, so you can often experiment with a different algorithm by changing a single line of code.

- Matplotlib and seaborn visualize data and model results

- Jupyter Notebook combines code, visualizations, and text in a single document

- Scikit-learn modules: preprocessing for scaling, model_selection for cross-validation, metrics for quality assessment, pipeline for combining steps
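
The uniform API means the same loop can train and evaluate quite different models. The three classifiers and the Iris dataset below are arbitrary choices for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

candidates = (
    LogisticRegression(max_iter=1000),
    DecisionTreeClassifier(random_state=0),
    KNeighborsClassifier(),
)

accuracies = {}
for model in candidates:
    model.fit(X_train, y_train)          # same call for every algorithm
    predictions = model.predict(X_test)  # same call for every algorithm
    accuracies[type(model).__name__] = accuracy_score(y_test, predictions)
```

Swapping in another classifier only requires adding it to `candidates`; the training and evaluation code stays unchanged.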

Building Data Processing Pipelines

Pipeline sequentially applies data transformations and the final model, guaranteeing identical processing of train and test data and preventing information leakage.

ColumnTransformer applies different transformations to different feature subsets: numerical features are scaled with StandardScaler, categorical features are encoded with OneHotEncoder, text features are vectorized with TfidfVectorizer — all in one object.

Pipeline integrates with GridSearchCV, allowing hyperparameter tuning for both transformations and the model through a unified interface with automatic cross-validation on each parameter combination.

FeatureUnion applies multiple transformations to the same data in parallel and concatenates the results, allowing you to combine different feature representations.

Custom transformers are created by inheriting from BaseEstimator and TransformerMixin, implementing fit() and transform() methods. Serialization via joblib or pickle saves the entire trained pipeline, including all transformation parameters and model weights, ensuring reproducibility and simple production deployment.
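
A minimal sketch of these pieces working together is shown below. The tiny DataFrame, its column names, and the target values are invented for the example, and `pickle` is used for the round trip simply because it needs no extra dependency.

```python
import pickle

import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Made-up data: one numeric and one categorical feature.
data = pd.DataFrame({
    "age": [22, 35, 47, 51, 29, 63, 38, 44],
    "city": ["A", "B", "A", "C", "B", "C", "A", "B"],
})
target = [0, 0, 1, 1, 0, 1, 0, 1]

# Different transformations for different column subsets.
preprocess = ColumnTransformer([
    ("num", StandardScaler(), ["age"]),
    ("cat", OneHotEncoder(handle_unknown="ignore"), ["city"]),
])

# Preprocessing and model are fitted together on training data only.
pipeline = Pipeline([("preprocess", preprocess), ("model", LogisticRegression())])
pipeline.fit(data, target)

# New rows pass through the same fitted transformations; "D" was never seen.
new_rows = pd.DataFrame({"age": [30, 60], "city": ["A", "D"]})
predictions = pipeline.predict(new_rows)

# Serialize and restore the whole fitted pipeline.
restored = pickle.loads(pickle.dumps(pipeline))
```

Because the scaler statistics and encoder categories are learned inside `fit`, nothing from the new rows leaks into preprocessing. Only unpickle pipelines from sources you trust, and keep the library version consistent between saving and loading.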

Frequently Asked Questions

Machine learning is a branch of artificial intelligence where computers learn to solve problems based on data, without explicitly programming every step. Algorithms find patterns in examples and use them to make predictions on new data. For instance, a facial recognition system learns from thousands of photos and then identifies people in new images.

The foundation is linear algebra (matrices, vectors), probability theory, and mathematical statistics. Optimization methods are also important, especially gradient descent for training models. Beginners need to understand basic concepts; deep knowledge is required for developing new algorithms.

In supervised learning, the model learns from labeled data with known answers (for example, photos labeled 'cat' or 'dog'). Unsupervised learning works with unlabeled data, finding hidden structures—clusters of similar objects or patterns. The first type is used for classification and regression, the second for segmentation and dimensionality reduction.

It is a myth that machine learning always needs huge datasets: the required data volume depends on the task and algorithm. Simple models (linear regression, decision trees) can work effectively on hundreds of examples. Deep neural networks trained from scratch typically need far more data, but techniques like transfer learning and data augmentation allow training models on smaller datasets with good quality.

Overfitting occurs when a model performs excellently on training data but poorly on new data, having 'memorized' noise instead of patterns. Prevention methods include regularization (L1/L2), cross-validation, increasing data volume, and simplifying the model. Proper data splitting into train/validation/test sets is essential for controlling overfitting.

Start with Python and libraries like numpy and pandas for data manipulation. Then learn scikit-learn—it contains ready-made algorithms and clear documentation. Practice on simple datasets (Iris, Titanic), gradually studying the theory of linear regression, decision trees, and quality metrics.

Main metrics include accuracy (proportion of correct answers), precision, recall, and F1-score (their harmonic mean). For imbalanced classes, accuracy is misleading—it's better to look at precision/recall. ROC-AUC shows the quality of class separation at different probability thresholds.

Feature engineering is creating new features from raw data to improve model quality. For example, from a date you can extract day of week, month, or holiday status. Proper feature engineering is often more important than algorithm selection, especially for classical ML methods that don't automatically extract complex dependencies.

Regression predicts continuous numerical values (apartment price, temperature), while classification predicts categories or classes (spam/not spam, animal species). Regression uses metrics like MSE, RMSE, and MAE; classification uses accuracy and F1-score. Some algorithms (decision trees, neural networks) solve both tasks with minor modifications.

A random forest outperforms a single decision tree in most cases, but not always. Random forest averages predictions from multiple trees, reducing overfitting and variance. However, it's harder to interpret, slower to run, and requires more memory. For simple tasks with small amounts of data, a single tree may be sufficient and more understandable.

Cross-validation divides data into K parts (folds), trains the model on K-1 parts and tests on the remaining one, repeating the process K times. The final score is the average across all folds, providing a reliable quality estimate. Standard choice is 5 or 10 folds, balancing estimation accuracy and computational cost.

Normalization brings features to a common scale, which is critical for scale-sensitive algorithms (SVM, neural networks, k-NN). Without it, features with large values (e.g., income in dollars) will dominate over small ones (age). Standardization (z-score) and min-max scaling are the most popular normalization methods.

No-code platforms exist (Google AutoML, Azure ML Studio) that allow building models through an interface. However, for serious work programming is necessary—you need to process data, tune models, and integrate solutions. Python with scikit-learn libraries is the minimum entry threshold; basics can be learned in a few weeks.

Hyperparameters are algorithm settings defined before training (tree depth, learning rate, number of neighbors in k-NN). They're tuned through Grid Search (exhaustive grid search) or Random Search (random sampling). It's important to use cross-validation during tuning to avoid overfitting on the validation set.

Machine learning can work with small datasets: classical algorithms (logistic regression, SVM, trees) are effective on datasets of roughly 100 to 1,000 examples. The key is proper model selection and regularization to prevent overfitting. For very small data (dozens of examples), it's better to use simple interpretable models or expert rule-based methods.

SVM finds the optimal separating hyperplane with maximum margin between classes, while logistic regression maximizes the probability of correct classification. SVM works better in high-dimensional spaces and with the kernel trick solves nonlinear problems. Logistic regression is easier to interpret and provides calibrated class membership probabilities.