Systematic Errors and Bias in Artificial Intelligence in Medicine

How to recognize and minimize the risks of algorithmic errors in diagnosis, surgery, and clinical research

Overview

Artificial intelligence in medicine promises to revolutionize diagnosis and treatment, but carries risks of systematic errors and bias. In applications ranging from AI-assisted intraoperative imaging of parathyroid glands to meta-analysis of therapy effectiveness, algorithms can reproduce human prejudices or create new types of errors. Understanding the nature of these errors is critically important for safe implementation of AI in clinical practice.

🛡️ Laplace Protocol: Systematic verification of AI systems for bias includes validation on diverse populations, assessment of sensitivity and specificity by subgroups, analysis of false-positive and false-negative results, and comparison with the gold standard of diagnosis.

Types of Systematic Errors in Medical AI Systems: From Data to Diagnosis

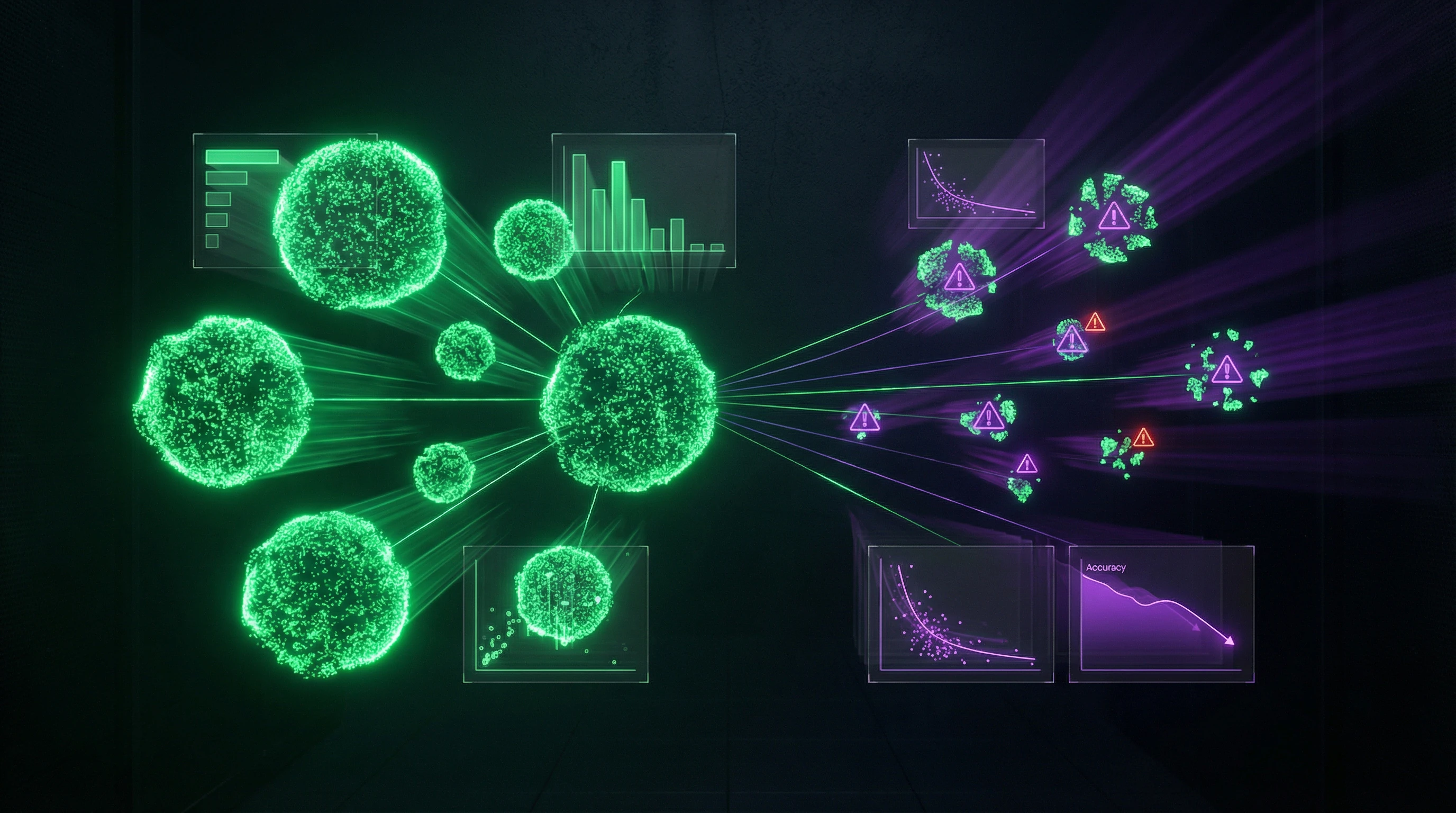

Medical AI systems often demonstrate high accuracy in laboratory settings, but in clinical practice they face a fundamental problem: systematic errors embedded during development lead to incorrect diagnoses and treatment decisions. Many failures stem less from defects in the algorithm itself than from the quality and representativeness of the training data.

An error in data is an error in diagnosis. The algorithm merely reproduces what it was trained on.

Training Data and Sampling Errors

Systematic sampling error occurs when the training dataset does not reflect the actual distribution of patients in clinical practice. If an AI system for breast cancer diagnosis was trained predominantly on data from postmenopausal women, its accuracy for premenopausal patients is likely to be lower, because the relationship between risk factors and cancer subtypes differs with menopausal status.

The problem of class imbalance exacerbates the situation: rare diseases or atypical presentations are underrepresented in training samples, leading to systematic underdetection. Study heterogeneity—differences in populations, diagnostic methods, and inclusion criteria—creates an additional layer of uncertainty when assessing diagnostic accuracy.

- Publication Bias: Studies with positive results are published more frequently, distorting the perception of actual AI technology effectiveness and creating an illusion of reliability for systems that perform less consistently in practice.

Algorithmic Biases and Overfitting

Algorithmic bias occurs when a model learns not true clinical patterns, but data artifacts or social stereotypes encoded in historical medical records. Overfitting—when a model performs perfectly on training data but shows low accuracy on new patients—is particularly dangerous in medicine, where the cost of error is measured in human lives.

| Error Type | Mechanism | Clinical Risk |
|---|---|---|
| Overfitting | Model memorizes noise instead of patterns | Excellent laboratory results, failure in clinic |
| Feedback Loops | Risk underestimation → fewer examinations → more underdetection | Systematic missed diagnoses in certain groups |
| Data Artifacts | Model captures technical features, not clinical ones | System works only in one hospital, fails in another |

Feedback loops create self-reinforcing biases: if an AI system systematically underestimates risk for a particular patient group, these patients receive additional examinations less frequently, leading to insufficient data about their true condition, further amplifying the original error.

Many AI systems demonstrate excellent results in controlled conditions, but their diagnostic performance requires thorough validation before clinical implementation. Even when targeting a single biological pathway, different approaches demonstrate varying efficacy and safety profiles, requiring consideration of multiple factors when developing AI-based decision support systems.

AI in Intraoperative Diagnosis: The Case of Parathyroid Glands and the Cost of Error

Intraoperative identification of parathyroid glands is a critical task in endocrine surgery. An error means inadvertent removal of, or damage to, the glands that regulate calcium metabolism.

Studies of AI-assisted computer vision systems indicate that misidentification remains a primary source of postoperative complications such as hypocalcemia and nerve damage. The technology requires rigorous validation protocols before implementation.

Diagnostic Accuracy of Computer Vision

AI systems use deep learning to analyze intraoperative images in real time. They recognize parathyroid glands by visual characteristics: size, color, vascularization, anatomical location.

Meta-analyses evaluate sensitivity, specificity, and area under the ROC curve, but encounter substantial heterogeneity: differences in surgical techniques, imaging modalities, gold standard criteria. Systematic reviews emphasize the need for standardized evaluation protocols.

- Parathyroid glands are anatomically variable: number (typically four, but ranging from two to six), location, and appearance differ between patients.

- Training datasets must cover this spectrum of variability, otherwise AI systematically misses atypical cases—precisely those where assistance to the surgeon is critical.

- AI performance depends on the quality of intraoperative visualization, lighting, and the presence of pathological tissue changes.

Limitations and Risks of Misidentification

False-positive identification (AI marks another structure as a parathyroid gland) leads to unnecessary manipulation and damage to surrounding tissues, including the recurrent laryngeal nerve.

A false-negative error (missing an actual parathyroid gland) increases the risk of its inadvertent removal or damage, which can cause postoperative hypocalcemia and, when the deficit is permanent, require lifelong replacement therapy.

AI systems should be considered assistive tools that complement, but do not replace, the surgeon's clinical judgment.

Many AI studies in surgery are conducted in single-center settings with limited external validation. This calls into question the generalizability of results.

- Contextual Variability: Differences in surgical protocols, patient populations (primary surgery versus reoperation), and comorbid pathologies (thyroiditis, cancer) create conditions that AI models must account for.

- Pre-Implementation Requirement: Multicenter prospective studies with clear protocols for evaluating diagnostic accuracy and subgroup analyses to identify situations where the system is most and least reliable.

Bias in Systematic Reviews and Meta-Analyses: When Evidence Synthesis Distorts Reality

Systematic reviews and meta-analyses are considered the pinnacle of the evidence hierarchy in medicine, but are themselves subject to multiple sources of systematic error that can distort conclusions and clinical recommendations. Tools designed for objective synthesis of scientific data can amplify bias from primary studies and introduce additional distortions during selection, analysis, and interpretation.

The synthesis paradox: the more studies combined, the higher the risk of amplifying systematic error if it's present across all sources simultaneously.

Publication Bias and Study Heterogeneity

Publication bias occurs when studies with positive or statistically significant results are published more frequently than work with negative or null findings. This creates a distorted picture of intervention effectiveness.

Meta-analyses of anti-VEGF therapies for neovascular age-related macular degeneration face this problem: comparative effectiveness and safety of different agents (aflibercept, ranibizumab, bevacizumab, brolucizumab, faricimab) remains uncertain due to heterogeneity in study designs and selective publication of results. Funnel plots and statistical tests (Egger, Begg) are used to detect publication bias, but their sensitivity is limited with small numbers of studies.

- Check funnel plot asymmetry — a sign of selective publication

- Apply statistical tests (Egger, Begg) accounting for their limitations

- Conduct sensitivity analysis excluding studies with the largest effects

- Assess whether conclusions change when potentially biased studies are excluded
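
The asymmetry check in this list can be sketched with Egger's regression, which regresses each study's standardized effect (effect divided by its standard error) on its precision (one over the standard error); an intercept far from zero suggests small-study effects. The function name `egger_intercept` and the input values are illustrative assumptions, not data from any review discussed here, and the sketch reports only the intercept, its standard error, and a t statistic with k − 2 degrees of freedom.

```python
import math

def egger_intercept(effects, std_errors):
    """Egger's regression: standardized effect ~ intercept + slope * precision.

    Returns (intercept, standard error of intercept, t statistic).
    Hypothetical helper; inputs are per-study effects and standard errors.
    """
    k = len(effects)
    if k < 3 or k != len(std_errors):
        raise ValueError("need at least 3 studies with matching standard errors")
    x = [1.0 / se for se in std_errors]                   # precision
    y = [e / se for e, se in zip(effects, std_errors)]    # standardized effect
    mean_x, mean_y = sum(x) / k, sum(y) / k
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all studies have the same precision")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual variance with k - 2 degrees of freedom.
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    se_intercept = math.sqrt(rss / (k - 2) * (1.0 / k + mean_x ** 2 / sxx))
    t_stat = intercept / se_intercept if se_intercept > 0 else float("nan")
    return intercept, se_intercept, t_stat

# Made-up studies whose effect grows with the standard error,
# i.e. smaller studies report larger effects.
ses = [0.1, 0.2, 0.3, 0.4]
biased_effects = [0.2 + 1.0 * se for se in ses]
intercept, se_intercept, t_stat = egger_intercept(biased_effects, ses)
```

With few studies the intercept is estimated imprecisely, which is the low-power limitation the list already warns about.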

Heterogeneity between studies — differences in patient populations, outcome definitions, measurement methods, and follow-up duration — creates a fundamental problem for meta-analysis. Studies of the association between body mass index and breast cancer risk demonstrate that the effect varies depending on menopausal status and tumor molecular subtype, requiring stratified analysis and cautious interpretation of pooled estimates.

High statistical heterogeneity (I² > 75%) indicates that pooling results may be inappropriate, but many meta-analyses ignore this warning.
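
For reference, I² is derived from Cochran's Q as I² = max(0, (Q − df) / Q), where df is the number of studies minus one. The following is a minimal sketch under a fixed-effect, inverse-variance weighting assumption; the helper name `i_squared` and the sample inputs are hypothetical.

```python
def i_squared(effects, std_errors):
    """Return (Cochran's Q, I² in percent) using inverse-variance weights."""
    if len(effects) < 2 or len(effects) != len(std_errors):
        raise ValueError("need at least 2 studies with matching standard errors")
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return q, i2

# Two made-up studies with very different effects and equal precision.
q, i2 = i_squared([0.1, 0.9], [0.1, 0.1])   # q is about 32, i2 about 96.9
```

A value this far above 75% would argue against reporting a single pooled estimate without exploring the sources of heterogeneity.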

Statistical Methods for Detecting Systematic Errors

Modern meta-analyses use network methods (network meta-analysis) for simultaneous comparison of multiple interventions, but these approaches require the assumption of transitivity — that comparisons through a common comparator are valid. Violation of transitivity, when studies differ in effect modifiers (age, disease severity, concomitant therapies), can lead to systematically biased conclusions about comparative effectiveness.

Sensitivity analysis and meta-regression are used to investigate sources of heterogeneity, but their interpretation requires caution with limited numbers of studies.

| Error Detection Method | What It Checks | Limitation |
|---|---|---|
| Funnel plot | Asymmetry in effect distribution | Non-specific; asymmetry may be caused by heterogeneity rather than publication bias |
| Egger test | Small-study bias | Low power with < 10 studies |
| Meta-regression | Association of study characteristics with effect | Requires sufficient number of studies; results depend on variable selection |
| ROBIS, QUADAS-2 | Risk of bias in primary studies | Subjective; low inter-rater agreement |

Risk of bias assessment in primary studies is a mandatory component of quality systematic reviews, but is itself subject to subjectivity. Studies show low inter-rater agreement in bias risk assessment, especially in domains requiring clinical judgment.

Systematic reviews of AI technologies must explicitly state limitations of included studies, areas of uncertainty, and the need for additional research, avoiding premature conclusions about clinical readiness of technologies based on limited or biased data.

AI System Validation: Methodology and Accuracy Standards in Medical Diagnostics

Evaluating diagnostic performance of AI requires rigorous metrics: sensitivity (the proportion of actual positive cases the system correctly identifies), specificity (the proportion of actual negative cases it correctly rules out), and positive and negative predictive value. A systematic review of AI-assisted intraoperative parathyroid gland imaging demonstrates the necessity of standardized assessment of these parameters to determine clinical applicability.

Critically important: predictive value depends on the prevalence of the condition in the population. Even a highly sensitive and specific test has a low positive predictive value when disease prevalence is low, because false positives from the large healthy group outnumber true positives.

AI validation studies must report the complete confusion matrix and confidence intervals for all metrics, not just overall accuracy, which can be misleading with imbalanced datasets.
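
A minimal sketch of such a report, assuming the four confusion-matrix counts are already available: it computes each metric with a Wilson score interval, one common choice for binomial proportions. The function names and the counts in the example are illustrative assumptions, not figures from any study cited here.

```python
import math

def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return max(0.0, center - half), min(1.0, center + half)

def diagnostic_report(tp, fp, fn, tn):
    """Return {metric: (estimate, (low, high))} from confusion-matrix counts."""
    pairs = {
        "sensitivity": (tp, tp + fn),
        "specificity": (tn, tn + fp),
        "ppv": (tp, tp + fp),
        "npv": (tn, tn + fn),
        "accuracy": (tp + tn, tp + fp + fn + tn),
    }
    return {name: (k / n, wilson_interval(k, n)) for name, (k, n) in pairs.items()}

# Made-up imbalanced dataset: 10 positives among 1000 cases.
report = diagnostic_report(tp=5, fp=10, fn=5, tn=980)
for name, (estimate, (low, high)) in report.items():
    print(f"{name}: {estimate:.3f} (95% CI {low:.3f} to {high:.3f})")
```

In this example accuracy is 0.985 while sensitivity is only 0.5 with a wide interval, which illustrates why overall accuracy alone can mislead on imbalanced data.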

Sensitivity, Specificity, and Predictive Value

An AI system's sensitivity determines its ability to identify the target structure (e.g., parathyroid gland), minimizing the risk of missed detection and subsequent complications such as hypocalcemia. Specificity controls the rate of false alarms, which can lead to unnecessary surgical interventions and increased operative time.

- Positive Predictive Value (PPV): Indicates what proportion of AI's positive predictions are actually correct. When identifying rare anatomical variants, even a system with 95% specificity may yield PPV below 50%.

- ROC Curves and AUC: Demonstrate the tradeoff between sensitivity and specificity at various decision thresholds. Mandatory in validation study reporting.
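
The dependence of PPV on prevalence follows from Bayes' rule: PPV = (sensitivity × prevalence) / (sensitivity × prevalence + (1 − specificity) × (1 − prevalence)). A short sketch makes the effect concrete; the function name `ppv` and the prevalence values are assumptions chosen for illustration.

```python
def ppv(sensitivity, specificity, prevalence):
    """Positive predictive value from sensitivity, specificity, and prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    if true_pos + false_pos == 0:
        raise ValueError("no positive predictions are possible with these inputs")
    return true_pos / (true_pos + false_pos)

# Same test (95% sensitivity, 95% specificity) at three hypothetical prevalences.
for prevalence in (0.02, 0.10, 0.50):
    print(f"prevalence {prevalence:.0%}: PPV {ppv(0.95, 0.95, prevalence):.2f}")
```

At 2% prevalence the PPV is roughly 0.28, so most positive calls are false alarms even though sensitivity and specificity are both 95%.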

Comparison with Gold Standard and Expert Assessment

AI validation requires comparison with an established gold standard: for intraoperative parathyroid gland identification, this may be histopathological confirmation or expert surgeon consensus. The challenge is that the gold standard itself is often imperfect—inter-expert agreement in visual identification of anatomical structures can be moderate (Cohen's kappa 0.4–0.6), creating a performance ceiling for AI.

- Assess not only AI agreement with individual experts, but compare AI performance against inter-expert variability.

- Test on independent datasets from other medical centers—AI systems can overfit to artifacts of specific equipment or imaging protocols.

- Check for sharp performance drops during external validation, which reveal deceptively high accuracy on internal data.
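
To compare AI-versus-expert agreement with expert-versus-expert agreement, both can be put on the same scale with Cohen's kappa, κ = (p_o − p_e) / (1 − p_e), where p_o is observed agreement and p_e is agreement expected by chance. The sketch below assumes two equal-length label sequences; the function name and the labels are made up for illustration.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical labels: 1 = parathyroid gland, 0 = other tissue.
expert_1 = [1, 1, 0, 0]
expert_2 = [1, 0, 0, 0]
print(cohens_kappa(expert_1, expert_2))  # 0.5
```

Running the same function on AI output against each expert gives values that can be read against the inter-expert kappa rather than against an assumed perfect reference.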

AI Diagnostic Validation Metrics

| Metric | Value | Meaning |
|---|---|---|
| Sensitivity | 85-95% | Target structure detection |
| Specificity | 90-98% | False alarm minimization |
| External Validation | -15% to -30% | Accuracy drop on new data |
| Inter-Expert Agreement | κ 0.4-0.6 | Performance ceiling for AI |

Ethical Aspects of Algorithmic Bias: Fairness and Transparency in AI Decisions

Algorithmic bias occurs when training data disproportionately represents certain demographic groups, leading to systematically worse AI performance on underrepresented populations. AI systems for breast cancer diagnosis trained predominantly on data from Caucasian women can demonstrate reduced sensitivity for African American and Asian women.

The problem is compounded by the fact that different breast cancer subtypes have varying prevalence across ethnic groups, and associations with risk factors vary depending on menopausal status and molecular subtype. Ethical validation of AI requires stratified performance analysis across demographic subgroups and explicit specification of the system's applicability limitations.

- Verify representativeness of training data across all demographic groups in the target population

- Conduct stratified analysis of accuracy metrics (sensitivity, specificity) for each subgroup

- Document performance thresholds below which the system is not recommended for clinical use

- Specify explicit applicability limitations in the instructions for use

Fairness and Equal Access to AI Diagnostics

Fairness of AI systems is evaluated through metrics such as equalized odds, which requires comparable type I and type II error rates across all groups, and demographic parity, which requires comparable rates of positive predictions. Systematic reviews of therapy effectiveness must account for the fact that access to different drugs and technologies varies by geographic region and healthcare system.
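
A stratified fairness check can be sketched by computing, per demographic group, the true-positive rate, false-positive rate, and positive-prediction rate, then reporting the largest gap between groups. The record layout `(group, y_true, y_pred)`, the function names, and the sample data are assumptions for illustration; the sketch also assumes every group contains both positive and negative cases.

```python
from collections import defaultdict

def group_rates(records):
    """records: iterable of (group, y_true, y_pred) with 0/1 labels."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0, "tn": 0})
    for group, y_true, y_pred in records:
        # "t"/"f" = prediction correct or not, "p"/"n" = predicted class.
        key = ("t" if y_true == y_pred else "f") + ("p" if y_pred == 1 else "n")
        counts[group][key] += 1
    rates = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["fp"] + c["tn"]
        rates[group] = {
            "tpr": c["tp"] / positives if positives else float("nan"),
            "fpr": c["fp"] / negatives if negatives else float("nan"),
            "positive_rate": (c["tp"] + c["fp"]) / (positives + negatives),
        }
    return rates

def max_gap(rates, metric):
    """Largest between-group difference for one metric."""
    values = [r[metric] for r in rates.values()]
    return max(values) - min(values)

# Made-up predictions for two groups, A and B.
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 0, 0), ("B", 0, 1),
]
rates = group_rates(records)
print(max_gap(rates, "tpr"), max_gap(rates, "fpr"), max_gap(rates, "positive_rate"))
```

In this toy data both groups receive positive predictions at the same rate, so the demographic parity gap is zero, yet the error rates differ by 0.5; the two criteria answer different questions and need to be reported separately.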

AI systems optimized for expensive equipment or protocols unavailable in resource-limited settings create a new dimension of healthcare inequality.

Development should include testing on data from diverse clinical settings and explicit documentation of minimum technical requirements for reliable system operation.

Transparency and Explainability of AI Decisions

Transparency of AI systems requires explainability: the ability to provide clinically interpretable justification for each decision, not just the final verdict. Techniques such as gradient-weighted class activation mapping (Grad-CAM) visualize the image regions influencing the neural network's decision, allowing clinicians to assess whether predictions are based on relevant anatomical features or artifacts.

- Post-hoc explanations: Generated after decision-making; can be deceptive, creating an illusion of understanding without real insight into the algorithm's logic.

- Inherently interpretable models: Provide direct access to decision-making logic; require more computational resources but are more reliable for high-risk applications.

Regulatory requirements (e.g., EU AI Act) increasingly mandate documentation of decision-making logic for high-risk medical AI systems, but standards for explanation adequacy remain subject to debate among developers, clinicians, and regulators.

Error Minimization Protocols for AI Implementation: From Validation to Clinical Integration

Minimizing AI errors requires a multi-layered approach: technical validation on diverse datasets, clinical validation in real-world use conditions, and post-market performance monitoring.

Implementation protocols must include pilot testing with end users, assessment of impact on clinical workflow, and feedback mechanisms to identify edge cases—rare scenarios where AI systematically fails.

It's critically important to establish clear criteria for overriding AI recommendations and escalation protocols when systematic errors are detected.

Multi-Center Validation and Performance Monitoring

Multi-center validation tests AI on data from different healthcare facilities with varying equipment, protocols, and patient demographics, identifying generalizability issues before widespread deployment.

Post-market monitoring should track not only overall accuracy but also performance drift—gradual degradation due to changes in patient populations, equipment updates, or clinical protocols.

- Automated monitoring systems detect statistically significant deviations from baseline performance

- Deviations trigger revalidation or temporary system shutdown

- Problem resolution precedes resumption of use
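
One simple way to implement the first step is a one-sided test of recent accuracy against the validated baseline, using the normal approximation to the binomial. This is a sketch under stated assumptions: the function name `drift_alert`, the threshold of 2.33 (about a 1% one-sided false-alarm rate), and the counts are hypothetical, and a production monitor would also need to handle repeated testing over time.

```python
import math

def drift_alert(baseline_accuracy, recent_correct, recent_total, z_threshold=2.33):
    """Return True when recent accuracy is significantly below the baseline."""
    if recent_total <= 0:
        raise ValueError("recent_total must be positive")
    observed = recent_correct / recent_total
    std_error = math.sqrt(baseline_accuracy * (1 - baseline_accuracy) / recent_total)
    if std_error == 0:
        return observed < baseline_accuracy
    z = (observed - baseline_accuracy) / std_error
    return z < -z_threshold

# Baseline accuracy of 92% established during validation (made-up figure).
print(drift_alert(0.92, recent_correct=182, recent_total=200))  # False: within noise
print(drift_alert(0.92, recent_correct=170, recent_total=200))  # True: trigger revalidation
```

The same check can be run per subgroup or per site, since drift that affects only one population may be invisible in the overall figure.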

Integrating AI as a Support Tool

AI systems should be positioned as decision support tools, not replacements for clinical judgment.

The interface must explicitly communicate the system's confidence level and provide mechanisms for rapid clinician override without bureaucratic barriers.

- User Training: Includes technical aspects of working with the system and understanding typical AI failure modes, that is, situations where the algorithm systematically errs.

- Documenting Discrepancies: Cases where AI recommendations differ from clinical decisions create valuable datasets for iterative system improvement and identification of algorithm blind spots invisible during standard testing on static datasets.

AI System Validation and Monitoring Cycle

1. Internal Validation: Testing on developer data (overfitting risk)

2. Multi-Center Validation: Independent datasets, different equipment and protocols

3. Clinical Piloting: Real-world conditions, workflow integration

4. Post-Market Monitoring: Continuous tracking of performance drift

Frequently Asked Questions

Systematic errors are predictable deviations in AI performance arising from imbalanced data, algorithmic biases, or improper validation. They lead to inaccurate diagnoses for certain patient groups or clinical situations. Detection requires rigorous testing on diverse samples.

Computer vision AI systems analyze intraoperative images in real time, helping surgeons recognize parathyroid glands and avoid damaging them. This can reduce the risk of postoperative hypocalcemia and other complications. The technology functions as an assistive tool, not a replacement for the surgeon's clinical experience.

Training a reliable medical AI system requires balanced datasets representing different age groups, genders, ethnicities, and disease stages. Data must be collected from multiple medical centers with varying equipment. Careful expert annotation and checking for hidden correlations that create biases are critically important.

The claim that AI is simply more accurate than doctors is an oversimplification. AI outperforms doctors in narrow tasks under ideal conditions but falls short in comprehensive assessment and atypical cases. Best results are achieved through collaborative work between AI and specialists. Systematic reviews show high variability in AI accuracy depending on application context.

Publication bias occurs when studies with positive results are published more frequently than those with negative results, distorting the overall picture of AI effectiveness. This inflates diagnostic accuracy estimates in systematic reviews. Detection uses statistical methods like funnel plots and asymmetry tests.

Metrics used include: sensitivity (proportion of detected cases), specificity (proportion of correctly identified healthy individuals), predictive value, and area under the ROC curve. Validation is conducted on independent samples not involved in training. Comparison with gold standards (histology, expert assessment) is mandatory for clinical application.

AI trains on available data, which often underrepresents minorities, rare diseases, or specific demographic groups. The algorithm memorizes patterns from the majority and performs worse on underrepresented cases. This creates inequality in healthcare quality and requires targeted dataset correction.

Complete elimination of bias is impossible, but bias can be significantly reduced through diverse training data, regular auditing, and multicenter validation. Bias often reflects real inequalities in healthcare and data quality. Continuous monitoring of AI performance in clinical practice is critically important for identifying new sources of error.

Multi-stage validation is required: internal (on a portion of original data), external (on data from other centers), and prospective (in real practice). Testing across different patient subgroups, equipment, and clinical scenarios is necessary. Comparison with expert assessments and analysis of error cases are mandatory before registration.

Overfitting occurs when AI performs excellently on training data but poorly on new cases, memorizing noise instead of patterns. In medicine, this leads to false diagnoses with the slightest deviations from the training sample. It's prevented through data splitting, regularization, and validation on independent datasets from other clinics.

Explainability is critically important for clinical trust and legal accountability. Physicians must understand which features the AI based its decision on to assess its validity. Methods for visualizing algorithm attention and highlighting significant image regions increase transparency, but many modern AI systems remain "black boxes."

The idea that AI can replace the surgeon is a myth. Modern AI systems in surgery perform narrow tasks like recognizing anatomical structures or warning about risks, but do not replace human participation. They function as assistive tools requiring surgeon oversight. Full AI autonomy in the operating room has not yet been achieved and raises serious ethical questions.

Differences in study design, patient populations, AI versions, and evaluation methods create heterogeneity that complicates pooling results. High heterogeneity reduces the reliability of generalized conclusions about AI accuracy. Statistical methods (I² statistic, meta-regression) help assess and explain variability between studies.

Biased AI exacerbates healthcare inequality by providing inferior diagnostics to vulnerable population groups. This violates principles of fairness and equal access to quality medical care. Developers and regulators must require demonstration of equal AI performance across all demographic groups before approval.

Continuous monitoring after deployment is essential. AI performance can decline due to changes in patient populations, equipment updates, or "data drift." Regular accuracy audits, error analysis, and feedback from clinicians enable timely problem detection. Retraining on new data may be required to maintain clinical effectiveness.

Working with rare diseases is a challenging task due to limited training examples, which increases the risk of overfitting and errors. Transfer learning methods, synthetic data generation, and federated learning help partially address the problem. However, validation remains difficult, and AI for rare pathologies requires particularly thorough expert review.