How Artificial Intelligence Works: From Data to Decisions

Understanding how AI works — from learning on data to practical applications in medicine, business, and everyday life

Overview

Artificial intelligence learns from data 🧠 — it finds patterns, builds predictions, makes decisions. Neural networks, machine learning algorithms, and natural language processing enable machines to solve tasks that previously required human thinking. No magic: just mathematics, statistics, and computational power.

Laplace Protocol: AI is not a replacement for human intelligence, but a tool to amplify it. Understanding the basic principles of how AI works is accessible to everyone and doesn't require technical education for practical application.

AI Fundamentals: From Data to Decisions

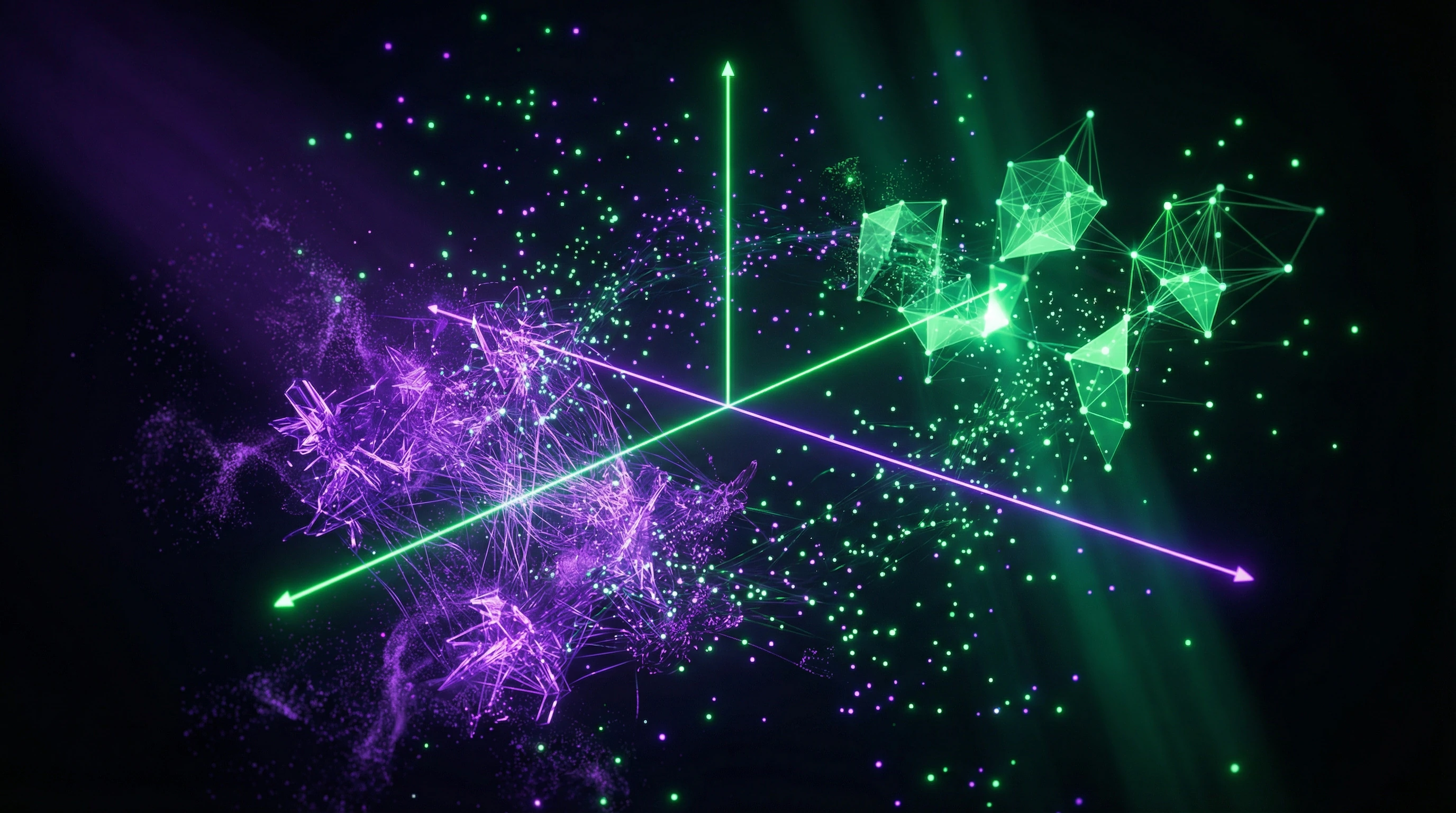

Artificial intelligence works as a mathematical system for recognizing patterns in large volumes of data. The algorithm receives examples, identifies statistical relationships between input and output, then applies the discovered patterns to new information.

This fundamentally differs from traditional programming: there, a developer manually codes each rule; here, AI forms rules independently based on experience.
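
The contrast can be sketched with a toy example. The spam scenario, the function names, and the hand-picked threshold below are illustrative assumptions, not part of any real system: one function encodes a rule a developer chose, the other derives its rule from labeled examples.

```python
def is_spam_rule(num_links: int) -> bool:
    """Traditional programming: the developer hard-codes the rule."""
    return num_links > 5  # hypothetical hand-picked threshold


def learn_threshold(examples: list[tuple[int, bool]]) -> float:
    """Machine learning in miniature: pick the threshold that
    misclassifies the fewest labeled examples."""
    values = sorted({x for x, _ in examples})
    # Candidate thresholds: midpoints between neighbouring observed values.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(t: float) -> int:
        return sum((x > t) != label for x, label in examples)

    return min(candidates, key=errors)


# Toy labeled data: (number of links in a message, is it spam?)
data = [(0, False), (1, False), (2, False), (3, True), (4, True), (7, True)]
threshold = learn_threshold(data)
print(threshold)  # the rule now comes from the data, not the developer
```

With this data the learned rule flags a message with three links, which the hand-written rule would miss.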

What is Machine Learning

Machine learning is a subset of AI where systems learn from data without explicit programming of each step.

| Learning Type | Principle | Typical Tasks |
|---|---|---|
| Supervised | Algorithm receives labeled examples with correct answers | Classification, prediction |
| Unsupervised | System independently finds structure in unlabeled data | Clustering, pattern detection |
| Reinforcement | Model learns through a system of rewards and penalties | Optimizing action sequences |

The Role of Data in AI Training

Data is fuel for AI: the quality and volume of the training dataset strongly influence model accuracy.

- Collecting relevant information: the first stage is selecting sources that genuinely reflect the task.

- Cleaning and normalization: removing errors and outliers, standardizing formats.

- Labeling (for supervised learning): adding correct answers when required.

- Representativeness: a critical requirement. Training data must reflect the real-world diversity of situations; without this, the model produces biased results. For example, a facial recognition system trained predominantly on photos of people with European features performs worse on other ethnic groups, a direct consequence of unrepresentative training data.
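
The cleaning and normalization step can be sketched as follows. This is a minimal illustration under stated assumptions: the z-score cutoff of 2.0, the min-max rescaling, and the sample ages are all choices made for the example, and real pipelines usually need domain-specific rules.

```python
from statistics import mean, stdev


def clean_and_normalize(values, z_cutoff=2.0):
    """Drop missing values and outliers, then rescale to the range [0, 1]."""
    present = [v for v in values if v is not None]   # remove missing entries
    mu, sigma = mean(present), stdev(present)
    # Keep only values within z_cutoff standard deviations of the mean.
    kept = [v for v in present if abs(v - mu) <= z_cutoff * sigma]
    lo, hi = min(kept), max(kept)
    span = (hi - lo) or 1.0                          # avoid division by zero
    return [(v - lo) / span for v in kept]           # min-max normalization


raw_ages = [23, 25, None, 31, 29, 27, 250, 24]  # 250 is a data-entry error
normalized = clean_and_normalize(raw_ages)
print(normalized)
```

The missing entry and the implausible value are dropped before scaling, so the remaining ages spread across the full 0 to 1 range instead of being squeezed together by the outlier.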

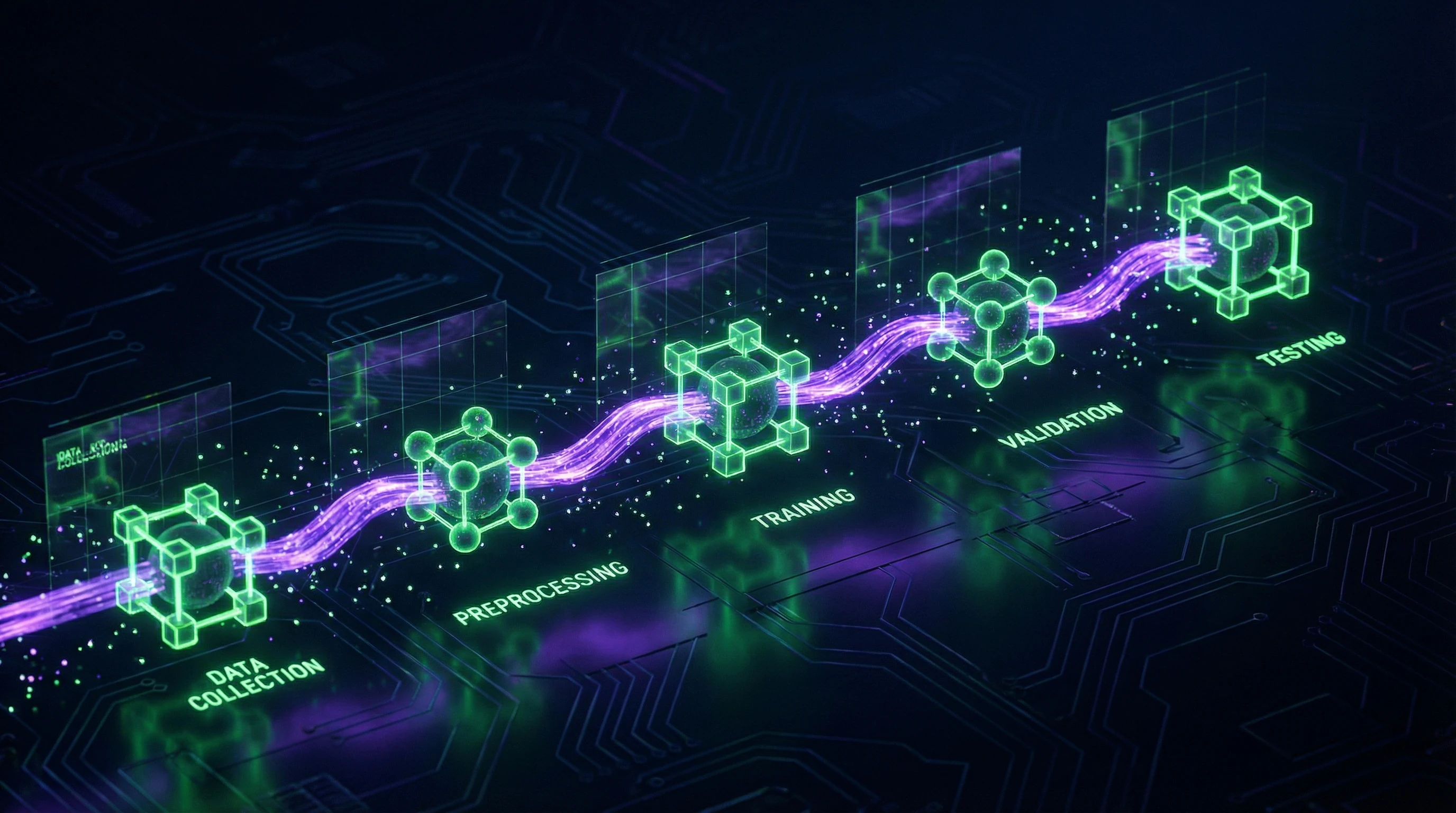

Model Training and Testing Process

AI training proceeds in several stages with data divided into three sets.

- Training set (70–80%) — the model adjusts internal parameters, minimizing error between predictions and actual values through optimization.

- Validation set (10–15%) — used for tuning hyperparameters and preventing overfitting (when the model performs excellently on familiar data but generalizes poorly to new data).

- Test set (10–15%) — final verification shows real system performance under conditions closest to production.
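
A three-way split along these lines might look like the sketch below. The 80/10/10 proportions, the fixed seed, and the helper name `split_dataset` are assumptions for illustration.

```python
import random


def split_dataset(items, train=0.8, val=0.1, seed=42):
    """Shuffle once, then cut into train / validation / test partitions."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = int(len(shuffled) * train)
    n_val = int(len(shuffled) * val)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],          # whatever remains is the test set
    )


train_set, val_set, test_set = split_dataset(range(100))
print(len(train_set), len(val_set), len(test_set))
```

Shuffling before cutting matters when the source data is ordered (for example by date or class); for time series a chronological split is usually more appropriate.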

Data quality often matters more than quantity: a small, well-prepared dataset can yield better results than gigabytes of dirty, imbalanced data.

Neural Networks: Architecture and Principles

Neural networks are computational models inspired by the structure of biological neurons, but operating on entirely different principles. An artificial neural network consists of nodes (neurons) organized in layers and connected by weighted links that transmit and transform information.

Each neuron receives input signals, applies a mathematical function to them (typically a weighted sum with nonlinear transformation), and passes the result to the next layer. It is this multi-layered architecture that allows the network to identify complex, hierarchical patterns in data—from simple features in the first layers to abstract concepts at deeper levels.

Structure of an Artificial Neuron

An artificial neuron is a mathematical function that takes multiple inputs, multiplies each by a corresponding weight, sums the results, adds a bias, and passes it through an activation function.

- Weights: determine the importance of each input signal. They are adjusted during training by an optimizer, using gradients computed with the backpropagation algorithm.

- Activation function: introduces nonlinearity, without which even a multi-layer network would behave like a simple linear model. Popular variants: ReLU, sigmoid, tanh.

- Bias: allows a neuron to activate even with zero inputs, increasing the model's flexibility.
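
The description above translates almost directly into code. The following is a minimal sketch; the input values, weights, and bias are arbitrary numbers chosen for the example.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def relu(z: float) -> float:
    return max(0.0, z)


def neuron(inputs, weights, bias, activation=relu):
    """Weighted sum of the inputs, plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)


out = neuron([1.0, 2.0], [0.5, -0.25], bias=0.1)      # 0.5 - 0.5 + 0.1
zero_in = neuron([0.0, 0.0], [0.5, -0.25], bias=0.1)  # only the bias contributes
print(out, zero_in)
```

The second call shows the role of the bias: with all inputs at zero, the neuron still produces a non-zero output.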

Layers and Specialized Architectures

A typical neural network contains an input layer (receives raw data), one or more hidden layers (perform transformations), and an output layer (generates the final result).
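
As a rough sketch of how data flows through these layers, the network below has two inputs, one hidden layer, and one output. The weights are hand-picked for illustration rather than learned; they make the network compute XOR, a function no single linear layer can represent.

```python
def relu(z):
    return max(0.0, z)


def identity(z):
    return z


def dense(inputs, weights, biases, activation):
    """One fully connected layer: every output neuron sees every input."""
    return [
        activation(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(x):
    # Hidden layer: two ReLU neurons (weights chosen by hand for this example).
    hidden = dense(x, [[1.0, 1.0], [1.0, 1.0]], [0.0, -1.0], relu)
    # Output layer: one linear neuron combining the hidden activations.
    return dense(hidden, [[1.0, -2.0]], [0.0], identity)[0]


xor_outputs = [forward([a, b]) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(xor_outputs)
```

Replacing `relu` with `identity` in the hidden layer collapses the network into a single linear function, and the XOR pattern can no longer be reproduced.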

| Architecture Type | Connection Structure | Application |
|---|---|---|
| Fully Connected (Dense) | Each neuron connected to all neurons in the next layer | Classification, regression |
| Convolutional (CNN) | Local connections and shared weights | Image processing |
| Recurrent (RNN) | Feedback connections for sequence processing | Text analysis, time series |

Network depth (number of layers) and width (number of neurons per layer) determine its expressive capacity, but excessive complexity leads to overfitting and requires more data.

Differences from the Biological Brain

Despite the name, artificial neural networks differ radically from biological ones: they use simplified mathematical models instead of complex electrochemical processes, learn through gradient descent instead of synaptic plasticity, and operate synchronously layer by layer rather than asynchronously like real neurons.

The biological brain contains approximately 86 billion neurons with trillions of connections, each of which can have dozens of neurotransmitter types and complex temporal dynamics—modern AI doesn't even come close to this complexity.

The brain is energy-efficient (consuming about 20 watts), while training large neural networks requires megawatts of electricity. This fundamental difference is often overlooked in popular AI descriptions, creating a false impression of similarity between artificial and biological systems.

Key Technologies of Modern AI

Modern artificial intelligence relies on three complementary technologies. Deep learning uses multi-layered neural networks for automatic feature extraction, natural language processing enables machines to understand and generate human language, and computer vision interprets visual information.

These directions are often combined: image captioning systems unite computer vision and NLP, and multimodal models like GPT-4 work with text and images simultaneously.

Deep Learning

Deep learning is a subset of machine learning that uses neural networks with multiple hidden layers (from a handful to hundreds) to identify hierarchical data representations.

The breakthrough occurred in 2012: the convolutional network AlexNet won the ImageNet image recognition competition by a huge margin, demonstrating the advantage of deep architectures.

Key success factors: availability of large datasets, growth in GPU computational power, and improved training methods (dropout, batch normalization, residual connections).

Today, deep learning dominates computer vision, speech recognition, machine translation, and generative models.

Natural Language Processing (NLP)

NLP enables computers to analyze, understand, and generate human language through a combination of linguistic rules and statistical models.

Modern systems use transformers—an architecture based on the attention mechanism that efficiently processes long text sequences and captures contextual dependencies.
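
The core of the attention mechanism can be sketched in a few lines. This is a simplified, single-query version of scaled dot-product attention with made-up vectors; real transformers apply it to learned projections, many queries at once, and multiple heads.

```python
import math


def softmax(scores):
    m = max(scores)                              # subtract max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    # Similarity of the query to each key, scaled by sqrt(dimension).
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # Output: weighted average of the value vectors.
    output = [
        sum(w * v[i] for w, v in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return output, weights


keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
output, weights = attention([4.0, 0.0], keys, values)
print(weights)
```

The query points along the first axis, so the first and third keys receive most of the weight and the second key is largely ignored. This selective weighting is what lets the model focus on the relevant parts of the context.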

| Component | Function | Result |
|---|---|---|
| Large Language Models (LLM) | Trained on billions to trillions of words; predict the next word or reconstruct missing fragments | Absorb grammar, facts, and elements of reasoning |
| Applications | Machine translation, chatbots, summarization, sentiment analysis, content generation | Practical use in products and services |
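
Next-word prediction can be illustrated with a far simpler model than an LLM. The bigram counter below is a deliberately tiny stand-in, an assumption made for illustration: it predicts the word that most often followed the current one in a toy corpus, whereas real language models condition on long contexts with neural networks.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    return followers.most_common(1)[0][0] if followers else None


corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram(corpus)
next_word = predict_next(model, "the")
print(next_word)
```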

Computer Vision

Computer vision gives machines the ability to extract information from images and video: classify (what is depicted), detect (where objects are located), segment (delineate boundaries), and generate images.

Convolutional neural networks (CNN) became the standard thanks to their ability to automatically learn a hierarchy of visual features: first layers identify edges and textures, middle layers—object parts, deep layers—entire objects and scenes.
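
To make the idea of an edge-detecting first layer concrete, the sketch below slides a small kernel over a toy image. The 2x2 kernel is hand-written for illustration; in a trained CNN the kernel values are learned from data.

```python
def convolve2d(image, kernel):
    """Valid (no padding) 2-D cross-correlation, the operation CNN layers apply."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Toy 4x4 image: dark left half (0), bright right half (1).
image = [[0, 0, 1, 1] for _ in range(4)]
# Kernel that responds to a dark-to-bright transition from left to right.
vertical_edge = [[-1, 1],
                 [-1, 1]]
feature_map = convolve2d(image, vertical_edge)
print(feature_map)
```

The same four weights are reused at every position, which is the weight sharing that makes convolutional layers far cheaper than fully connected ones. The response is non-zero only in the column where brightness changes.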

Modern architectures like ResNet, EfficientNet, and Vision Transformers match or exceed human accuracy on some narrow benchmark tasks, such as traffic sign recognition and certain X-ray diagnostic tasks.

Applications span autonomous vehicles, medical diagnostics, security systems, augmented reality, and quality control in manufacturing.

Stages of Building an AI System: From Concept to Production

Defining Goals and Choosing Architecture

AI system development begins with clearly formulating the business problem and translating it into a technical specification: classification, regression, clustering, or generation.

At this stage, the team defines success metrics (accuracy, F1-score, BLEU for machine translation) and establishes the available computational resources and latency requirements. Architecture choice depends on data type: CNNs are used for images, RNN/LSTM or transformers for sequences, and gradient boosting or other classical ML algorithms for tabular data.
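
For binary classification, the metrics mentioned above can be computed from four counts. The sketch below assumes labels encoded as 0 and 1 and uses made-up predictions; the helper name is hypothetical.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 0, 0, 0, 1, 0, 1]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Accuracy alone can look acceptable here while recall shows that half of the positive cases were missed, which is why the choice of metric should follow from the cost of each error type.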

It's critically important to assess whether there's enough data to train a deep model or whether to start with transfer learning on pre-trained weights.

Data Collection, Cleaning, and Preparation

Data quality is often said to determine 80% of project success. The figure is a rule of thumb rather than a measurement, but the point stands: a model cannot learn what isn't in the training set.

- Collecting representative examples and labeling (for supervised learning)

- Removing duplicates, handling missing values and outliers

- Feature normalization; for images — augmentation (rotations, crops, brightness adjustments)

- For text — tokenization and vectorization through embeddings

- Splitting into training (70–80%), validation (10–15%), and test (10–15%) sets

- Correcting class imbalance through oversampling, undersampling, or loss function weighting

It is critical to verify that no information leaks between the sets, for example through the same record appearing in both training and test data.
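
Two of the checks above can be sketched briefly: inverse-frequency class weights for loss weighting, and a simple overlap test for leakage. Both helpers are hypothetical names, and the overlap test assumes each example has a hashable identifier; it will not catch subtler leaks such as near-duplicates or features derived from the target.

```python
from collections import Counter


def class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * count) for c, count in counts.items()}


def leaked_examples(train_ids, test_ids):
    """Identifiers that appear in both the training and the test set."""
    return set(train_ids) & set(test_ids)


labels = ["ok"] * 90 + ["fraud"] * 10
weights = class_weights(labels)          # rare class gets the larger weight
leaks = leaked_examples(["a", "b", "c"], ["c", "d"])
print(weights, leaks)
```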

Training, Validation, and Deployment

Training involves iterative optimization of model weights through minimizing the loss function on the training set using algorithms like SGD, Adam, or AdamW.
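
The optimization loop can be illustrated on the smallest possible model, a line with one weight and one bias. Note the swap: this sketch uses plain full-batch gradient descent rather than SGD or Adam, and the learning rate, epoch count, and data are assumptions chosen so that the example converges.

```python
def train_linear(xs, ys, lr=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of MSE = mean((w*x + b - y)^2) with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the loss.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]   # generated from y = 2x + 1
w, b = train_linear(xs, ys)
print(round(w, 4), round(b, 4))
```

SGD follows the same update rule but estimates the gradient from a random mini-batch at each step; Adam and AdamW additionally adapt the step size per parameter.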

The validation set is used for hyperparameter tuning (learning rate, batch size, architecture) and early stopping when overfitting occurs. After achieving target metrics, the model is tested on held-out data, checked on edge cases and adversarial examples, then packaged into an API or embedded in an application.
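
Early stopping can be sketched as a rule applied to the sequence of validation losses. The `patience` value and the loss curve below are illustrative assumptions; in practice the check runs inside the training loop and the best checkpoint is restored.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the best epoch, stopping once validation loss
    has failed to improve for `patience` consecutive epochs."""
    best_loss, best_epoch, waited = float("inf"), -1, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                break                    # stop training here
    return best_epoch


# Validation loss falls, then starts rising as the model overfits.
val_curve = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.40]
best = early_stopping_epoch(val_curve)
print(best)
```

Training halts after two epochs without improvement, so the final value in the list is never reached. That is the trade-off of a small patience: it saves compute but can stop before a late improvement.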

In production, monitoring is critical: tracking input data distribution drift, metric degradation, latency, and resource consumption.
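
A very crude drift check compares the mean of a live feature against a reference sample from training time. The function name, the threshold of 3 standard errors, and the sample values are assumptions for illustration; production systems typically use richer tests over the full distribution.

```python
from statistics import mean, stdev


def mean_shift_alert(reference, live, threshold=3.0):
    """Flag drift when the live mean lies more than `threshold` standard
    errors away from the reference mean."""
    standard_error = stdev(reference) / len(live) ** 0.5
    z = abs(mean(live) - mean(reference)) / standard_error
    return z > threshold


reference = [10, 11, 9, 10, 12, 8, 10, 11, 9, 10]   # feature values seen in training
stable = mean_shift_alert(reference, [10, 9, 11, 10])
shifted = mean_shift_alert(reference, [14, 15, 13, 16])
print(stable, shifted)
```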

Modern MLOps practices include model versioning, A/B testing, automatic retraining when quality drops, and explainability tools for decision auditing.

Practical Applications of AI: From Medicine to Everyday Life

AI in Medicine and Diagnostics

Medical AI analyzes X-rays, MRIs, and CT scans with accuracy comparable to or exceeding radiologists in narrow tasks like detecting pneumonia, tumors, or fractures. Algorithms process histological samples to identify cancer cells and predict cardiovascular disease risk from ECGs.

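In drug discovery, AI accelerates the search for candidate molecules by predicting their properties and interactions with target proteins. Proponents suggest this could shorten drug development from 10–15 years to 3–5, though such figures are projections, and clinical trials still account for much of the timeline.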

Virtual assistants help patients with symptom tracking, medication reminders, and initial consultations through chatbots—shifting part of the burden from physicians to algorithms.

Business Process Automation

In the corporate sector, AI automates routine tasks: document processing through OCR and NLP, customer inquiry routing, demand forecasting, and logistics optimization. Recommendation systems can increase e-commerce conversion, by a reported 20–30% in some deployments, by analyzing purchase history and website behavior.

| Application | Reported effect (varies by deployment) |
|---|---|
| Support chatbots | Can handle up to 80% of standard inquiries |
| Fraud detection in banks | Can reduce fraud losses by 40–60% |
| Predictive maintenance | Reduces equipment downtime and repair costs |

AI in Education and Daily Life

Adaptive educational platforms adjust the pace and difficulty of material to the student's level by analyzing error patterns. Automated essay and code grading systems provide instant feedback, saving instructors time.

At home, voice assistants control smart homes, answer questions, and perform tasks through NLP. Music, movie, and content recommendations are personalized through collaborative filtering and deep learning.
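
Collaborative filtering can be sketched in its simplest user-based form: find the most similar user, then suggest something they rated highly that the target has not seen. The users, genres, and ratings are invented, and recommending from a single nearest neighbour is a simplification of what real systems do.

```python
import math


def cosine(u, v):
    """Cosine similarity between two sparse rating dictionaries."""
    shared = set(u) & set(v)
    dot = sum(u[item] * v[item] for item in shared)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def recommend(target, ratings):
    """Suggest the unseen item rated highest by the most similar user."""
    others = {name: r for name, r in ratings.items() if name != target}
    nearest = max(others, key=lambda name: cosine(ratings[target], others[name]))
    unseen = {item: score for item, score in ratings[nearest].items()
              if item not in ratings[target]}
    return max(unseen, key=unseen.get) if unseen else None


ratings = {
    "ana": {"jazz": 5, "rock": 1, "folk": 4},
    "ben": {"jazz": 4, "folk": 5, "blues": 5, "pop": 2},
    "cy": {"rock": 5, "pop": 4, "metal": 5},
}
suggestion = recommend("ana", ratings)
print(suggestion)
```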

Smartphone cameras use AI for scene recognition, portrait mode with background blur, and night photography through multi-frame processing. Navigation apps predict traffic and optimize routes by processing data from millions of users in real time.

Myths and Reality About Artificial Intelligence

AI is not equal to neural networks

A common misconception equates all AI with neural networks, although the latter are just one tool in the arsenal.

| Method | Strengths | When to apply |
|---|---|---|
| Classical ML (trees, SVM, logistic regression) | High interpretability, low computation | Small-volume tabular data |
| Rule-based expert systems | Complete logic transparency | Medical diagnosis, financial analysis |
| Evolutionary algorithms, reinforcement learning | Solve problems without labeled data | Optimization, control, games |
| Deep learning | Scalability, handling unstructured data | Images, text, audio at large volumes |

Method selection depends on data volume, accuracy requirements, interpretability, and computational resources—no universal solution exists.

Limitations of modern AI

Modern AI systems do not possess understanding in the human sense: they find statistical correlations in data without grasping causal relationships.

Models are fragile to adversarial attacks—minimal, imperceptible input changes can cause catastrophic errors. Generalization beyond the training distribution remains an unsolved problem: a model trained on summer photos may fail on winter ones.
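
The fragility can be demonstrated even on a linear classifier. The sketch below follows the idea behind the fast gradient sign method: nudge every input feature by a small amount in the direction that hurts the model most. The weights, input, and step size are made-up values; for a linear score the gradient with respect to the input is simply the weight vector.

```python
def score(x, w, b):
    """Linear classifier: positive score means class 1, negative means class 0."""
    return sum(xi * wi for xi, wi in zip(x, w)) + b


def sign(v):
    return (v > 0) - (v < 0)


def perturb(x, w, epsilon):
    """Shift each feature by epsilon against the sign of its weight,
    which lowers the score as much as possible per unit of change."""
    return [xi - epsilon * sign(wi) for xi, wi in zip(x, w)]


w, b = [0.5, -0.5, 0.5, -0.5], 0.0
x = [0.6, 0.4, 0.6, 0.4]
x_adv = perturb(x, w, epsilon=0.15)

original_score = score(x, w, b)
adversarial_score = score(x_adv, w, b)
print(original_score, adversarial_score)
```

No feature moves by more than 0.15, yet the predicted class flips. In high-dimensional inputs such as images, many tiny changes add up in the same way while remaining invisible to a human.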

Data requirements are enormous: GPT-3 training used hundreds of billions of tokens, and ImageNet contains about 14 million labeled images. The carbon footprint of training a large model has been estimated to be comparable to the lifetime emissions of several cars, raising questions of environmental sustainability.

Ethical aspects and responsible use

AI systems can inherit and amplify biases present in training data: hiring algorithms have been found to discriminate by gender, facial recognition systems tend to perform worse on darker skin, and credit scoring can be unfair to minorities.

The opacity of deep learning models complicates auditing and explaining decisions, which is critical in medicine, law, and finance. Mass AI adoption threatens jobs in transportation, manufacturing, and customer service, requiring retraining programs.

Deepfakes and generative models create risks of disinformation and public opinion manipulation.

- Audit data for bias and representativeness

- Apply explainable AI methods for decision transparency

- Keep human oversight in critical decisions (medicine, law, finance)

- Document system limitations and failure scenarios

- Monitor social impact and side effects regularly
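
A first step in a bias audit is to break a metric down by group instead of reporting one overall number. The sketch below assumes evaluation records that carry a group attribute; the group names and outcomes are invented for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label) tuples."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, truth, prediction in records:
        totals[group] += 1
        hits[group] += int(truth == prediction)
    return {group: hits[group] / totals[group] for group in totals}


records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 0), ("group_b", 0, 0),
]
per_group = accuracy_by_group(records)
gap = max(per_group.values()) - min(per_group.values())
print(per_group, gap)
```

Overall accuracy here is 75%, which hides the fact that the model misses every positive case in one group. A large gap between groups is a signal to revisit the training data and the labeling process.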

Frequently Asked Questions

What is artificial intelligence?

Artificial intelligence is technology that enables computers to perform tasks requiring human thinking: pattern recognition, decision-making, language understanding. AI learns from data through algorithms, identifies patterns, and applies them to solve new problems. Examples: voice assistants, social media recommendations, medical diagnostics.

How does a neural network learn?

A neural network receives a large set of examples with correct answers and gradually adjusts connections between neurons, minimizing errors. The process resembles teaching a child: we show thousands of cat photos until the system learns to recognize them. After training, the model is tested on new data to verify accuracy.

How does machine learning differ from regular programming?

In regular programming, humans write all rules manually, while in machine learning the system finds patterns in data itself. The programmer only sets the goal and provides examples; the algorithm independently builds a solution model. This enables solving tasks where rules are too complex for explicit description.

Do neural networks work like the human brain?

No, this is a common myth. Artificial neural networks are merely inspired by biology but operate through mathematical operations and statistical methods, not biological processes. The human brain uses chemical signals, emotions, and consciousness, which AI lacks.

Can AI work without data?

No, data is the foundation of modern AI. Without quality training data, the system cannot identify patterns and make accurate predictions. In general, the more abundant and diverse the data, the better the model performs.

What are the stages of building an AI system?

The process includes six key stages: defining objectives, collecting data, preparing and cleaning it, training the model, testing on new data, and deploying to production. After launch, continuous monitoring and improvement based on real-world performance is required.

What is deep learning?

Deep learning is a subset of machine learning that uses multi-layered neural networks to analyze complex data. The technology automatically extracts features from images, text, and sound without manual configuration. It's applied in facial recognition, voice assistants, and autonomous vehicles.

How can a beginner start using AI?

Start with ready-made tools: ChatGPT for text, Midjourney for images, voice assistants for task automation. Many services have simple interfaces and require no programming. Learn basic concepts through free online courses.

How is AI used in medicine?

AI assists in diagnosing diseases from scans, predicts complication risks, accelerates drug development, and personalizes treatment plans. Systems can analyze medical data faster than doctors and identify patterns that are hard for humans to see. The technology is already used in oncology, radiology, and cardiology.

How is model quality evaluated?

The model is tested on a separate dataset not used during training, measuring accuracy, precision, recall, and F1-score. It's important to verify performance on edge cases and real-world usage scenarios. Professionals also analyze confusion matrices and conduct A/B testing.

Is all AI based on neural networks?

No, that's a misconception. AI encompasses many technologies: expert systems, genetic algorithms, logical inference, decision trees. Neural networks are just one tool, though a very popular one in recent years.

Can AI fully replace humans?

Modern AI is not fully autonomous and requires human oversight, goal-setting, and interpretation of results. The technology works best as a tool that augments human capabilities rather than replacing them. Creativity, empathy, and strategic thinking remain uniquely human.

What is NLP?

NLP is a branch of AI that enables computers to understand, interpret, and generate human language. The technology is used in chatbots, translators, sentiment analysis, and voice assistants. Modern models like GPT understand context and create coherent text.

What data is needed to train AI?

Large volumes of high-quality, labeled data relevant to the task at hand are required. For image classification, thousands of labeled photos are needed; for NLP, text corpora. Data must be diverse, balanced, and cleaned of errors.

What are the main ethical issues of AI?

Key issues include: algorithmic bias from skewed data, privacy of personal information, transparency in decision-making, and accountability for errors. It's important to develop AI with ethical principles in mind, ensuring oversight and explainability of systems. Regulators in different countries are creating legal frameworks for responsible use.

Can AI be creative?

Modern AI combines and transforms patterns from training data but doesn't possess true creativity or understanding. Systems generate new images, texts, and music based on learned examples, but don't create conceptually novel ideas. Breakthrough innovations remain the domain of human intelligence.