What exactly the singularity myth promises — and why the definition blurs every time someone tries to pin it down

The concept of technological singularity traces back to the work of mathematician Vernor Vinge (1993) and futurist Ray Kurzweil, but over three decades the term has undergone numerous transformations. In the strict sense, singularity describes the moment when artificial intelligence achieves the capacity for recursive self-improvement — creating smarter versions of itself, which in turn create even smarter versions, triggering an uncontrolled chain reaction of intelligence growth (S004). The mathematical metaphor is borrowed from black hole physics, where singularity denotes a point at which known laws cease to function.

The problem begins with operationalization. Research demonstrates how the term gets applied to any rapid changes in technological systems, losing specificity (S003). Authors use "singularity" to describe the moment when digital educational platforms reach critical mass adoption — a definition radically different from the original concept of recursive AI self-improvement.

This isn't an isolated case: in academic literature, the term gets applied to humanitarian-technological revolution (S002), to any "points of no return" in social systems, to moments of rapid digitization. If singularity can mean explosive growth of superhuman AI, rapid adoption of new technologies, and a turning point in education alike, then the term loses predictive and analytical power.

⚠️ Three incompatible definitions coexisting in a single discourse

- Hard Takeoff: The moment when AI reaches human-level intelligence (AGI) and then within a short period (days, hours) transitions to superhuman intelligence (ASI) through recursive self-improvement. The classic Vinge-Kurzweil version, assuming a discontinuity and loss of human control.

- Soft Takeoff: Gradual acceleration of technological progress, where AI becomes increasingly capable but without a sharp jump. The transition to superhuman intelligence takes years or decades, leaving time for adaptation and regulation. Closer to observed reality, but loses the drama of a "point of no return."

- Metaphorical Singularity: Any moment of rapid, irreversible changes in technological or social systems, without connection to AI or self-improvement. This version dominates in sources (S002), (S003), (S004), where singularity is used as a synonym for "revolution," "transformation," or "turning point."

The blurriness of definition isn't a flaw in the singularity concept — it's its key feature, ensuring survival. The question "another planetary revolution or unique singularity?" remains open precisely because the criteria for distinction haven't been established.

If singularity had clear, measurable parameters, it could be tested and potentially falsified. But the term's flexibility allows proponents to redefine it every time predictions fail: if hard takeoff doesn't happen, they can switch to soft takeoff; if that's not observed either, they can declare any acceleration of innovation a singularity.

This is a mechanism familiar from the history of other myths: a concept remains convincing exactly as long as its definition remains blurred. Once verification becomes possible, the myth either transforms or loses its audience. Singularity chose the first path.

Seven Most Compelling Arguments for the Inevitability of the Singularity — and Why They Work on an Intuitive Level

Before examining the evidence base, it's necessary to honestly present the strongest arguments from proponents of the singularity concept. This is not a straw man but a steel-man version of the position, one that explains why the idea resonates with serious researchers, engineers, and investors.

📊 Argument 1: Empirical Trajectory of Exponential Growth in Computational Power

Moore's Law, usually stated as a doubling of transistors per chip every 18–24 months, broadly held from 1965 through the early 2020s. Singularity proponents point out that if exponential growth in computational power continues (through new architectures, quantum computing, neuromorphic chips), then reaching computational power equivalent to the human brain (by one frequently cited estimate, ~10^16 operations per second) becomes a matter of time.

If you add algorithmic improvements, which also demonstrate exponential growth in efficiency, then the emergence of AGI appears inevitable in the foreseeable future. This argument is strong because it relies on observable historical trends: computational power has indeed grown exponentially for decades, and many AI breakthroughs (from image recognition to language models) became possible precisely through scaling computation.

| Period | Source of Growth | Intuitive Appeal |
|---|---|---|
| 1965–2000 | Moore's Law (transistors) | Historical fact, easy to extrapolate |
| 2000–2020 | Parallel computing, GPUs | Visible AI progress coincides with power growth |
| 2020+ | Quantum, neuromorphic architectures | New technologies promise even greater leaps |
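The extrapolation behind this argument is plain doubling arithmetic, and a minimal sketch makes its assumptions visible. The starting figure of 10^13 operations per second is a hypothetical placeholder, not a measurement, and the function name is illustrative; only the 18–24 month doubling period and the ~10^16 target come from the text above.

```python
import math


def years_to_reach(current_ops: float, target_ops: float, doubling_months: float) -> float:
    """Years until current_ops reaches target_ops, assuming a fixed doubling period."""
    if current_ops >= target_ops:
        return 0.0
    doublings_needed = math.log2(target_ops / current_ops)
    return doublings_needed * doubling_months / 12.0


BRAIN_EQUIVALENT_OPS = 1e16   # figure quoted in the argument
HYPOTHETICAL_START_OPS = 1e13  # assumption for illustration only

for doubling_months in (18, 24):
    years = years_to_reach(HYPOTHETICAL_START_OPS, BRAIN_EQUIVALENT_OPS, doubling_months)
    print(f"doubling every {doubling_months} months: about {years:.1f} years")
```

The result depends entirely on the assumption that the doubling period stays constant, which is exactly the premise the later sections question.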

🧠 Argument 2: Fundamental Possibility of Recursive Self-Improvement

If AI reaches a level where it can understand and improve its own code (or develop more efficient machine learning algorithms), then positive feedback emerges: each improvement makes the system more capable of the next improvement. Human intelligence is limited by biological constants (neural transmission speed, working memory capacity, lifespan), but AI has no such constraints.

Theoretically, a system can operate 24/7, scale horizontally (copy itself across multiple servers), exchange knowledge instantaneously. The argument appeals to logic: if self-improvement is possible in principle, and if AI lacks human biological limitations, then the recursive process can accelerate until it hits physical limits of computation (thermodynamics, speed of light).

The counterargument requires proving that self-improvement is impossible or that non-obvious barriers exist — a more complex position to defend than simply extrapolating feedback loop logic.
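A toy model shows how much the feedback-loop argument hinges on one assumption: how strongly each gain in capability feeds the next. In this sketch every name and number is hypothetical (`returns_exponent`, `gain`, the cap of 10^12); it is not a model of any real AI system, only an illustration of the loop logic.

```python
from typing import List


def simulate(returns_exponent: float, gain: float = 0.1, steps: int = 50,
             start: float = 1.0, cap: float = 1e12) -> List[float]:
    """Iterate capability += gain * capability ** returns_exponent.

    returns_exponent > 1: each improvement helps the next one more (runaway growth)
    returns_exponent == 1: ordinary exponential growth
    returns_exponent < 1: diminishing returns (growth slower than exponential)
    The cap stands in for physical limits and keeps the numbers finite.
    """
    capability = start
    history = [capability]
    for _ in range(steps):
        capability = min(cap, capability + gain * capability ** returns_exponent)
        history.append(capability)
    return history


for exponent in (0.5, 1.0, 1.5):
    final = simulate(exponent)[-1]
    print(f"returns exponent {exponent}: capability after 50 steps = {final:.3g}")
```

The same loop yields a runaway, a steady exponential, or a slow climb depending on a single parameter, so the debate is really about which regime applies.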

🔬 Argument 3: Precedents of Explosive Growth in Evolution and History

Singularity proponents point to historical examples of phase transitions: the emergence of language in Homo sapiens, the Neolithic Revolution, the Industrial Revolution, the Digital Revolution. Each transition was accompanied by accelerating rates of change.

If you plot "time between revolutions," it demonstrates shrinking intervals — from millions of years between biological transitions to decades between technological ones. Extrapolation of this trend suggests the next transition (emergence of superhuman AI) could occur within years or even months. Induction doesn't guarantee future results, but intuitively the pattern looks compelling.

⚙️ Argument 4: Economic Incentives to Create Ever More Powerful AI

The global economy invests hundreds of billions of dollars in AI development. Companies that first create AGI will gain enormous competitive advantage — the ability to automate intellectual labor, accelerate scientific research, dominate any industry.

This race creates a powerful economic imperative to continue scaling models, increasing datasets, improving architectures. Even if individual researchers recognize the risks, market logic pushes the industry forward. AI investments are indeed growing exponentially, and competition between labs (OpenAI, DeepMind, Anthropic, Chinese companies) is intensifying.

Economic incentives are a powerful predictor of behavior, and it's hard to imagine the race stopping voluntarily.

🧬 Argument 5: Absence of Fundamental Barriers to AGI

The human brain is a physical system obeying the laws of physics and chemistry. If intelligence emerges from material processes (rather than an immaterial soul), then in principle it can be reproduced in another substrate.

We already know that neural networks are capable of learning, generalization, problem-solving. Modern language models demonstrate emergent abilities — capabilities that weren't explicitly programmed but arose through scaling. If there's no fundamental barrier, then AGI is a question of engineering and resources, not fundamental impossibility.

- Materialism: brain = machine, machines can be copied

- Emergent abilities: capabilities arise through scaling, not explicitly programmed

- Counterargument requires postulating immateriality or unknown barriers

🕳️ Argument 6: Risk Asymmetry — the Cost of Error Is Too High

Even if the probability of a hard singularity takeoff is low (say, 5–10%), the potential consequences are so catastrophic (existential risk to humanity) that ignoring the threat is irrational. This is an application of the precautionary principle: with high stakes, even low probability demands serious attention.

Proponents point out that we cannot afford to be wrong — if the singularity occurs and we're unprepared, the consequences are irreversible. Insurance logic: we pay premiums even if the probability of catastrophe is small. Emotionally, the argument is strengthened by fear of losing control and existential threat.
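The insurance logic reduces to an expected-value comparison, sketched below. All numbers are hypothetical units chosen for illustration; the 5–10% range is the only figure taken from the argument itself.

```python
def expected_loss(probability: float, loss: float) -> float:
    """Expected loss of an event: probability times the size of the loss."""
    return probability * loss


def worth_insuring(probability: float, loss: float, premium: float) -> bool:
    """True when the premium costs less than the expected loss it covers."""
    return premium < expected_loss(probability, loss)


CATASTROPHE_LOSS = 1_000_000.0  # hypothetical units, not an estimate

for probability in (0.05, 0.10):
    loss = expected_loss(probability, CATASTROPHE_LOSS)
    print(f"p = {probability:.2f}: expected loss = {loss:,.0f} units")
```

The comparison is only as good as its inputs: the argument's force comes from the assumed size of the loss, while the probability itself remains an unverified estimate.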

👁️ Argument 7: Expert Consensus on the Possibility of AGI in the Foreseeable Future

Surveys of AI researchers show that a significant portion of experts consider AGI emergence likely within the next 20–50 years. While estimates vary, the median forecast points to 2040–2060.

If experts working in the field consider AGI achievable, this is weighty evidence. Singularity proponents point out that skepticism often comes from people distant from cutting-edge research, while those who see progress from the inside are more optimistic (or pessimistic, depending on perspective) about the speed of development. The argument appeals to expert authority — a heuristic that usually works well.

The problem is that expert predictions about future technologies are historically unreliable, but intuitively we tend to trust professional opinion.

All seven arguments work on an intuitive level because each appeals to different cognitive mechanisms: trend extrapolation, feedback loop logic, historical patterns, economic incentives, materialism, risk management, expert authority. Together they create a compelling narrative that explains why the idea of singularity resonates even with skeptics.

What the 2024–2025 Data Shows: Three Charts That Don't Confirm an Exponential Takeoff

Moving from theoretical arguments to empirical verification, it's necessary to analyze what's actually happening in AI development. If the singularity is approaching, we should observe certain indicators: accelerating rates of progress, emergence of qualitatively new capabilities, signs of recursive self-improvement.

Data from the past two years paints a more complex picture.

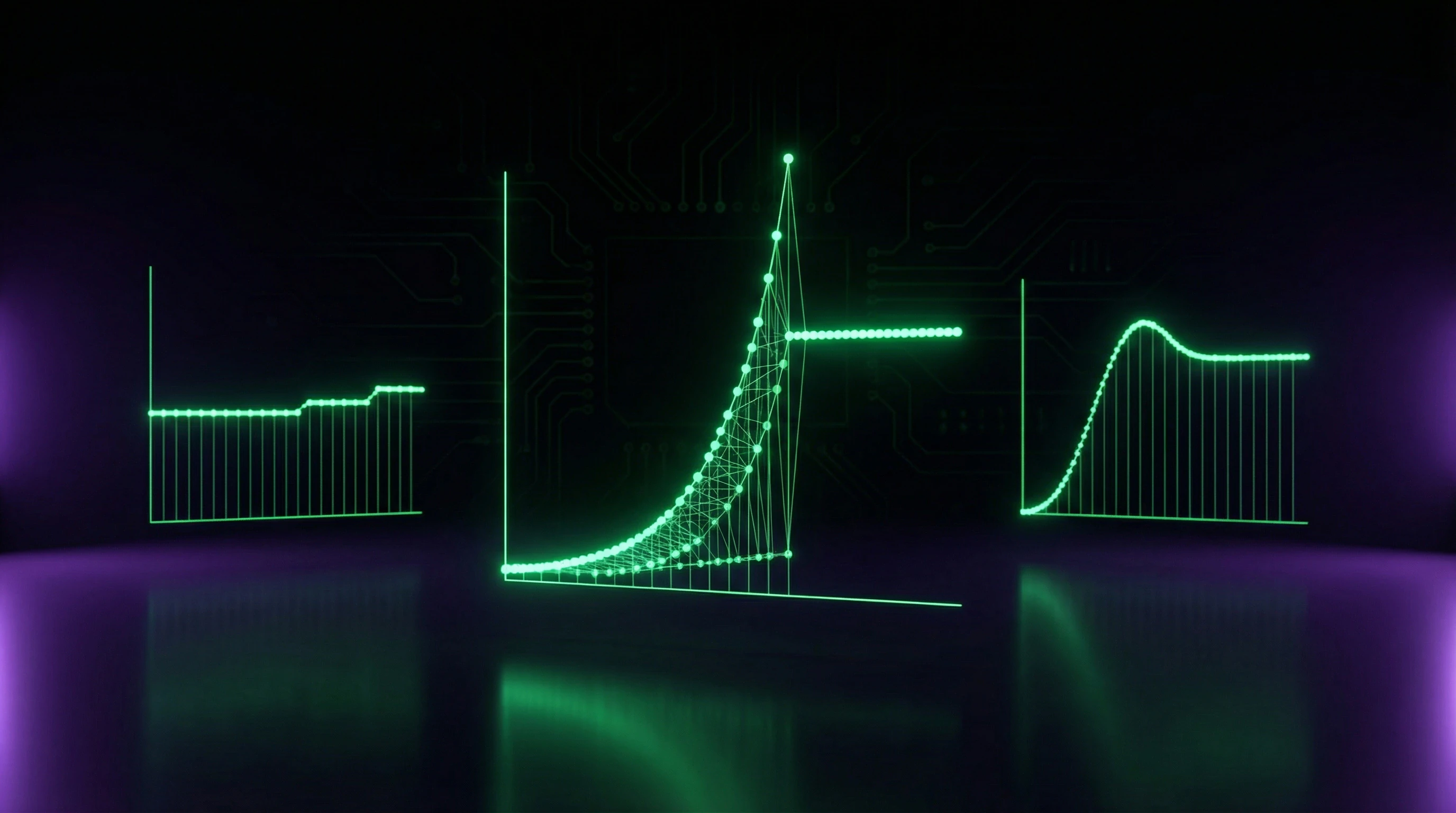

📊 Indicator 1: Slowing Performance Gains in Language Models at Scale

From 2020 to 2023, we witnessed impressive progress: from GPT-3 (175 billion parameters) to GPT-4 and competitors, reportedly with trillions of parameters. However, benchmark analysis shows that performance gains per unit of increased computation have begun to decline.

Models are getting larger and more expensive to train, but output quality improvements no longer follow the previous exponential trajectory. This phenomenon, known as diminishing returns from scaling, suggests that simply increasing model size and dataset doesn't guarantee proportional capability growth (S010).

| Period | Progress Characteristics | Indicator |
|---|---|---|
| 2020–2022 | Exponential performance growth | Each parameter doubling → significant quality leap |
| 2023–2025 | Diminishing returns | Trillions of parameters → marginal improvements |

A systematic review of contemporary approaches demonstrates that even the most advanced AI systems face fundamental limitations in understanding context, causal relationships, and transferring knowledge to new domains (S010). If we were approaching AGI, we should expect improvements precisely in these areas.

🧪 Indicator 2: Absence of Recursive Self-Improvement Signs in Existing Systems

A key element of the hard takeoff scenario is AI's ability to improve its own algorithms. In practice, modern machine learning systems don't demonstrate such capability.

Language models can generate code, but cannot independently develop new neural network architectures, optimize training processes, or improve their own weights without human intervention. All breakthroughs of recent years (transformers, reinforcement learning from human feedback, chain-of-thought prompting) were made by human researchers, not by the models themselves.

Attempts to create systems capable of automatic algorithm improvement (AutoML, neural architecture search) have shown limited success. The gap between "optimization within a paradigm" and "creating a new paradigm" remains enormous.

These systems can optimize hyperparameters or search for efficient architectures within a defined search space, but are incapable of conceptual breakthroughs requiring fundamental rethinking of approaches.

🧾 Indicator 3: Investment Stabilization and Shift from Hype to Practical Implementation

After the peak hype around generative AI in 2023, the market began to sober up. Investments continue to grow, but growth rates have slowed, and focus has shifted from creating ever-larger models to practical implementation and monetization of existing technologies.

- High inference costs: Economic barrier to mass adoption; companies are seeking ways to reduce costs rather than scale capacity.

- Integration complexity: AI requires reworking existing processes; this is not a revolution but an engineering challenge.

- Reliability issues: Hallucinations and unpredictable behavior, signs that systems remain tools, not agents.

This pattern is typical of technology cycles: after initial enthusiasm comes the "trough of disillusionment" phase (per the Gartner Hype Cycle model). If the singularity were near, we'd observe the opposite dynamic—accelerating investments, panic statements about loss of control, emergency regulatory measures.

Instead, the industry is transitioning to normalization mode: AI is becoming an ordinary tool rather than a revolutionary threat. This aligns with the logic of gradual transformation, not discontinuous transition.

🔎 Qualitative Analysis: Why "Emergent Abilities" Are Not Evidence of Approaching AGI

Singularity proponents often point to emergent abilities—capabilities that suddenly appear in large language models upon reaching a certain scale but are absent in smaller versions. Examples include arithmetic ability, logical reasoning, translation into languages not represented in training data.

This is interpreted as a sign of qualitative leap, a harbinger of more dramatic transitions. However, detailed analysis shows that many "emergent abilities" are artifacts of metric choice and evaluation thresholds.

- Researchers use binary metrics (pass/fail tests) instead of continuous ones.

- When recalculated on continuous metrics, "sudden" emergence turns out to be gradual improvement.

- Capabilities cross arbitrary thresholds, creating the illusion of a leap.

- Capabilities improve smoothly with scale rather than appearing from nowhere.

This doesn't negate impressive progress, but questions the interpretation as a qualitative leap. The pattern is more consistent with incremental progress than with approaching singularity.
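The metric-artifact point can be reproduced with a few lines of arithmetic. The sketch below uses an invented accuracy curve (the numbers are hypothetical, not benchmark data): per-token accuracy rises by the same amount with each tenfold increase in parameters, yet the all-or-nothing score on a 10-token answer appears to switch on suddenly.

```python
def per_token_accuracy(log10_params: float) -> float:
    """Hypothetical smooth curve: +0.10 accuracy per 10x parameters, capped at 0.99."""
    return min(0.99, 0.50 + 0.10 * (log10_params - 7))


def exact_match(log10_params: float, answer_length: int = 10) -> float:
    """Binary-style metric: probability that every token of the answer is correct."""
    return per_token_accuracy(log10_params) ** answer_length


for log10_params in range(7, 13):
    print(f"10^{log10_params} params: "
          f"per-token = {per_token_accuracy(log10_params):.2f}, "
          f"exact match = {exact_match(log10_params):.3f}")
```

On the continuous metric the curve is a straight line; on the pass/fail metric the same model family looks almost useless until the largest sizes and then appears to leap.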

Mechanisms of Causality: Why the Correlation Between Computational Power and Intelligence Is Not Linear

One of the central arguments of singularity proponents is based on extrapolation: if computational power grows exponentially, and if intelligence correlates with computational power, then AI intelligence should also grow exponentially. This logic contains several hidden assumptions that do not withstand scrutiny.

🧬 Problem 1: Intelligence Is Not Reducible to Computational Power — The Role of Architecture and Algorithms

Neurons in the human brain fire at rates of up to roughly 200 Hz, orders of magnitude slower than the gigahertz clock rates of modern processors. Nevertheless, the brain solves tasks that AI handles poorly or cannot handle at all: understanding the physical world, social intelligence, creativity, knowledge transfer.

The efficiency of intelligence is determined not by computational speed, but by the architecture of information processing. Doubling processors without changing the algorithm often yields sublinear performance gains.

This points to a fundamental difference: biological intelligence is optimized for energy efficiency and adaptability, not absolute computational power. Modern neural networks require exponential growth in parameters for roughly linear quality improvements, a pattern sometimes described as a scaling plateau.
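The claim that doubling processors yields sublinear gains has a standard textbook illustration in Amdahl's law, used here as a stand-in (the source does not name it). The 90% parallel fraction is an assumed value for the example.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Amdahl's law: speedup = 1 / ((1 - p) + p / n).

    parallel_fraction (p) is the share of the work that can be spread
    across processors; the remaining (1 - p) always runs serially.
    """
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


PARALLEL_FRACTION = 0.9  # assumption for illustration

for processors in (1, 2, 4, 8, 16, 1024):
    speedup = amdahl_speedup(PARALLEL_FRACTION, processors)
    print(f"{processors:>4} processors: speedup {speedup:.2f}x")
```

With 10% of the work stuck in serial form, no amount of hardware pushes the speedup past 10x, which is why more compute without a better algorithm runs into a ceiling.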

🔄 Problem 2: The Law of Diminishing Returns in Scaling

Data from 2023–2024 shows that increasing model size yields progressively smaller performance gains for each doubling of compute. This is not coincidental: the supply of high-quality training data is finite, and the architectural limitations of transformers become visible at scale.

- First 10 billion parameters: significant capability gains

- 100 billion parameters: noticeable but decelerating gains

- 1 trillion parameters: marginal improvements on most tasks

- Further scaling: requires qualitatively new architectures, not just more compute

This means that exponential growth in computational power does not transform into exponential growth in intelligence. The curve flattens.
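One way to see the flattening is to assume that loss follows a power law in parameter count, a common modeling choice that is an assumption here rather than something the cited data establishes. The exponent 0.08 and the scale constant are hypothetical.

```python
def loss(params: float, alpha: float = 0.08, scale: float = 1e13) -> float:
    """Hypothetical power-law loss curve: loss = (scale / params) ** alpha."""
    return (scale / params) ** alpha


def gain_from_doubling(params: float) -> float:
    """Absolute loss reduction bought by doubling the parameter count."""
    return loss(params) - loss(2 * params)


for params in (1e10, 1e11, 1e12):
    print(f"{params:.0e} params: loss {loss(params):.3f}, "
          f"gain from doubling {gain_from_doubling(params):.4f}")
```

Under this assumption each doubling costs twice as much as the previous one but buys a smaller absolute improvement, which matches the qualitative pattern in the list above.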

⚙️ Problem 3: Intelligence Is Multidimensional, Computational Power Is One-Dimensional

Intelligence includes: logic, intuition, social understanding, planning, few-shot learning, knowledge transfer, metacognition. Computational power is simply the number of operations per second. A correlation exists between them, but it is neither causal nor monotonic.

- Logical Intelligence: Can improve with greater computational power, but hits algorithmic limitations (NP-completeness, undecidability).

- Social Intelligence: Requires not computation, but models of human behavior that cannot be obtained simply by increasing parameters.

- Creativity: Depends on the architecture of search in solution space, not on the speed of enumerating options.

Attempting to solve all AI problems through scaling is a category error. It's like trying to improve music by increasing the volume.

🎯 Why the Myth of Linear Correlation Is So Convincing

Extrapolation works on an intuitive level: we see computational power growing, we see AI getting better, and we assume this is a causal relationship. But inferring causation from two trends that merely rise together is cum hoc ergo propter hoc, a logical fallacy.

The correlation between computational power and AI performance exists, but it masks a deeper cause: improvements in architectures, algorithms, and data. When these factors stabilize, computational power loses its magical force.

This explains why predictions about singularity in 2030, 2040, or 2050 are constantly postponed. Each time scaling stops working, singularity proponents search for a new source of exponential growth: quantum computers, neuromorphic chips, new architectures. But this is no longer extrapolation; it is a search for a way to rescue the myth.

Reality: AI will develop, but through qualitative leaps in architecture and understanding, not through infinite scaling. This is slower, less dramatic, but far more likely. For more on how predictions have failed, see the analysis of Kurzweil's predictions.