The simulation hypothesis claims that our reality may be a computer program. Despite its popularity in mass culture and among tech enthusiasts, this idea faces a fundamental problem: it is unfalsifiable and untestable. Philosophers and scientists point out that the simulation hypothesis offers no testing mechanism, makes no predictions, and cannot be distinguished from alternative explanations of reality. This makes it an interesting thought experiment, but not a scientific theory.

👁️ Imagine an idea that simultaneously captures the imagination of millions, inspires blockbusters and philosophical discussions—yet is absolutely useless for science. The simulation hypothesis has become a cultural phenomenon of the 21st century, penetrating from academic circles into mass consciousness through "The Matrix," Elon Musk's talks, and countless podcasts. However, behind the flashy exterior lies a fundamental problem: this idea can neither be proven nor disproven. It exists in a special category of claims that philosophers call "unfalsifiable"—and this is precisely what makes it scientifically sterile, despite all its intellectual appeal.

🧩 What exactly the simulation hypothesis claims — and why the boundaries of this claim are blurred beyond recognition

In its basic form, the simulation hypothesis proposes that the reality we observe is not a fundamental physical universe, but a computational simulation created by a more advanced civilization or entity. Nick Bostrom (2003) proposed a trilemma: either civilizations go extinct before reaching technological maturity, or advanced civilizations are not interested in creating simulations, or we are living in a simulation (S001).

Three versions of the hypothesis with radically different implications

Critical problem: the "simulation hypothesis" is not a single claim. There are at least three distinct versions that are often conflated in popular discussions.

- Weak version: It is technically possible to create a simulation of conscious beings. This is a claim about fundamental feasibility, not about probability.

- Medium version: Such simulations will be created in large quantities. Adds assumptions about motivation and scale.

- Strong version: We are very likely living in one of these simulations right now. This is a specific claim about our reality (S001).

David Chalmers attempted to give the hypothesis a more rigorous form through the concept of "digital ontology of consciousness." But even this attempt faces the problem of operationalization: how exactly do we define "simulation"?

If a simulation is indistinguishable from "base reality" by all observable parameters, what is the meaningful difference between these concepts?

The demarcation problem: where physics ends and metaphysics begins

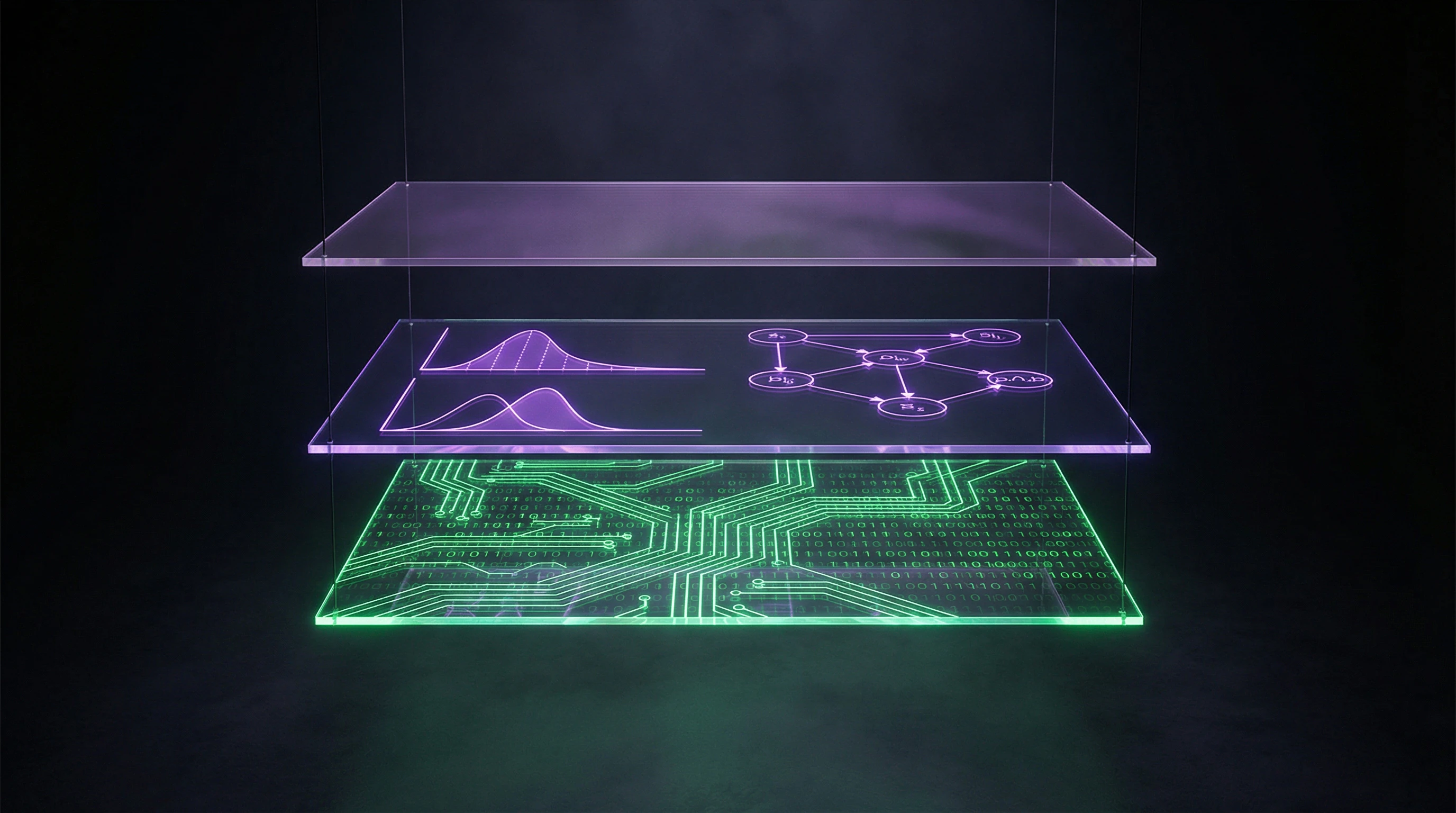

The simulation hypothesis balances on the boundary between empirical claim and metaphysical speculation. Unlike scientific theories that make specific predictions about observable phenomena, the simulation hypothesis offers no mechanism that would allow us to distinguish a "simulated" universe from a "real" one. This places it in the same category as Descartes' classical skeptical scenario of the evil demon or the modern "brain in a vat" version (S003).

For a hypothesis to have scientific value, it must be falsifiable — there must be potential observations that could refute it. The simulation hypothesis is typically formulated in such a way that any observation can be explained within its framework.

| Observation | Explanation within the hypothesis |
|---|---|
| Discovered anomaly in physics | It's a bug in the simulation |
| Physical laws work perfectly | The simulation is running correctly |
| Found discrete structure of space | These are the simulation's pixels |
| Space is continuous | The simulation uses continuous coordinates |

This structure makes the hypothesis immune to refutation — any result can be interpreted as confirmation (S003).
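The pattern in the table above can be sketched in a few lines of Python. This is only an illustration under simple assumptions: a hypothesis is modeled as a function that says whether it is compatible with an observation, and the names `excluded_observations`, `simulation_hypothesis`, and `discrete_spacetime_hypothesis` are hypothetical helpers, not anything from the cited sources. The contrast case is included only to show what a claim that forbids something looks like.

```python
from typing import Callable, List

# The four observations from the table above, as plain labels.
OBSERVATIONS: List[str] = [
    "anomaly in physics",
    "laws work perfectly",
    "space is discrete",
    "space is continuous",
]


def excluded_observations(
    hypothesis: Callable[[str], bool], observations: List[str]
) -> List[str]:
    """Return the observations the hypothesis is incompatible with."""
    return [obs for obs in observations if not hypothesis(obs)]


def simulation_hypothesis(obs: str) -> bool:
    # Per the table, every observation has a ready-made explanation,
    # so the hypothesis is compatible with all of them.
    return True


def discrete_spacetime_hypothesis(obs: str) -> bool:
    # Hypothetical contrast case: a claim that space is discrete
    # cannot accommodate a finding that space is continuous.
    return obs != "space is continuous"


if __name__ == "__main__":
    print(excluded_observations(simulation_hypothesis, OBSERVATIONS))
    print(excluded_observations(discrete_spacetime_hypothesis, OBSERVATIONS))
```

A hypothesis whose list of excluded observations is empty cannot be refuted by any row of the table, which is the sense in which it is unfalsifiable.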

Why the popular version of the hypothesis is not what philosophers discuss

There is a significant gap between the academic discussion of the simulation hypothesis and its popular version. In mass culture, the hypothesis is often presented as a concrete claim about the nature of reality with potentially testable implications — searching for "glitches" in physical laws or discrete structure of spacetime.

Academic philosophers, such as Chalmers, discuss the hypothesis in the context of the problem of consciousness and computational theory of mind — a completely different level of abstraction (S001). Technology entrepreneurs and popularizers often present the hypothesis as having practical implications or even probabilistic estimates ("50% chance we're in a simulation"), without specifying what premises these estimates are based on and what alternative hypotheses are being considered.

The popular version of the simulation hypothesis is not a philosophical claim about the nature of consciousness, but a technological myth that borrows terminology from academic discussion while losing its logical structure.

Five Strongest Arguments for the Simulation Hypothesis — and Why They Don't Make It a Scientific Theory

Before critiquing the simulation hypothesis, we need to present it in its most convincing form — this is called "steelmanning." Proponents advance several intellectually serious arguments that deserve careful consideration.

🔬 The Argument from Computational Power and Exponential Technological Growth

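For more than half a century, computational power has grown roughly exponentially, a trend commonly summarized as Moore's Law (the observation that the number of transistors on a chip doubles about every two years). If we extrapolate this trend centuries or millennia forward, future civilizations will possess resources exceeding current ones by many orders of magnitude.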

With sufficient power, simulating entire universes with all physical processes becomes technically feasible. The development of virtual reality and computer games demonstrates that the gap between simulation and reality is constantly narrowing.

📊 Bostrom's Probabilistic Argument: If Simulations Are Possible, There Must Be Many

The central argument is based on probabilistic reasoning. If technologically mature civilizations are capable of creating simulations of conscious beings and are interested in creating many such simulations, then simple counting shows that the vast majority of conscious beings must exist in simulations rather than in base reality.

Mathematically: if one base civilization of B conscious beings creates N simulations, and each contains M conscious beings, then the ratio of simulated beings to "real" ones is N×M to B. The probability that a randomly selected being exists in base reality is B / (B + N×M), which approaches zero for large values of N and M.
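The counting step of this argument can be written out as a short Python sketch. It assumes, as the argument itself does, that every conscious being is equally likely to be "us"; the function name and the `base_population` parameter are illustrative choices, with the default of 1 matching the simplest "N×M to 1" form of the ratio.

```python
def base_reality_probability(
    n_simulations: int,
    beings_per_simulation: int,
    base_population: int = 1,
) -> float:
    """Chance that a uniformly chosen being lives in base reality.

    Assumes every being, simulated or not, is equally likely to be picked.
    """
    simulated = n_simulations * beings_per_simulation
    return base_population / (base_population + simulated)


if __name__ == "__main__":
    # With no simulations, everyone is in base reality.
    print(base_reality_probability(0, 10))
    # One simulation holding one being, against one base being: even odds.
    print(base_reality_probability(1, 1))
    # A thousand simulations of a thousand beings each.
    print(base_reality_probability(1000, 1000))
```

The arithmetic is trivial; everything contentious sits in the inputs and in the assumption of uniform selection, which the function takes for granted rather than justifies.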

🧠 The Argument from Computational Theory of Consciousness and Functionalism

The third argument relies on functionalism — the position that consciousness is defined not by substrate (biological neurons) but by functional organization and computational processes (S001). If this is true, consciousness can be realized on any sufficiently complex computational substrate, including digital computers.

If consciousness is a computational process, then simulated consciousness would be genuine consciousness, not imitation. Simulated beings would have authentic subjective experience, indistinguishable from the experience of beings in base reality.

🕳️ The Argument from Quantum Mechanics and the Discrete Nature of Reality

Some proponents point to features of quantum mechanics as potential signs of reality's simulated nature. Quantum uncertainty, the superposition principle, and wavefunction collapse upon observation are interpreted as computational resource optimizations: the system doesn't calculate a particle's exact state until it becomes necessary.

Theoretical approaches to quantum gravity suggest a discrete structure of spacetime at Planck scales — analogous to the pixel structure of a computer screen. While these ideas remain speculative, they're used to support intuitions about the "digital" nature of physics.

⚙️ The Argument from Fine-Tuning of Physical Constants

The final argument relates to the fine-tuning problem of fundamental physical constants. The observed values of the cosmological constant, elementary particle mass ratios, and interaction constants fall within a very narrow range that permits the existence of complex structures and life.

The slightest change in these values would make the universe unsuitable for life. The simulation hypothesis is proposed as an explanation: our universe's parameters were chosen by creators specifically to ensure interesting phenomena, including life and consciousness.

- The argument from computational power relies on extrapolating current trends (computational growth, VR development) into an uncertain future.

- Bostrom's probabilistic argument requires accepting three unproven premises simultaneously.

- Functionalism is a philosophical position, not an established fact about the nature of consciousness.

- Quantum mechanics is interpreted through the lens of computational optimization, but this isn't the only interpretation.

- Fine-tuning is explained by multiple alternative hypotheses (multiverse, anthropic principle).

The strength of these arguments is that they're logically coherent and grounded in real phenomena. The weakness is that none of them offers a way to distinguish simulation from reality — and that's a fundamental requirement for a scientific hypothesis.

Each argument is convincing in isolation, but together they create an illusion of explanatory power. In reality, they describe possibility, not probability, and offer no mechanism for testing.

This distinguishes the simulation hypothesis from scientific theories, which not only explain phenomena but also predict observable consequences that allow them to be falsified. Myths about conscious AI are often built on the same logic: a convincing description of possibility without a mechanism for verification.

Why None of These Arguments Transform the Hypothesis into a Testable Scientific Claim

Despite the intellectual sophistication of the arguments presented above, all of them suffer from a fundamental problem: they offer no method for empirically testing the simulation hypothesis. Each argument either relies on unproven philosophical premises, makes logical leaps that don't follow from the presented propositions, or simply reformulates the problem without solving it.

📊 Extrapolation of Technological Progress Is Not Evidence

The argument from computational power assumes that current trends in technological development will continue indefinitely. However, the history of science is full of examples of technologies that reached fundamental limits.

Moore's Law is already slowing due to quantum effects at small scales. There are theoretical limits to computation related to thermodynamics and quantum mechanics (Bremermann's limit, Bekenstein bound).
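To give a sense of scale for one of these limits, here is a minimal sketch that evaluates Bremermann's limit in its usual form, c²/h bits per second per kilogram of matter. The constants are the exact SI values; the function name is an illustrative choice, and the sketch says nothing about how many operations simulating a universe or a mind would actually require, since that number is unknown.

```python
SPEED_OF_LIGHT = 299_792_458.0      # m/s, exact by SI definition
PLANCK_CONSTANT = 6.62607015e-34    # J*s, exact by SI definition


def bremermann_limit(mass_kg: float) -> float:
    """Upper bound on bits processed per second by mass_kg of matter.

    Uses the common statement of the limit: c**2 / h per kilogram.
    """
    return mass_kg * SPEED_OF_LIGHT**2 / PLANCK_CONSTANT


if __name__ == "__main__":
    # Roughly 1.36e50 bits per second for one kilogram.
    print(f"{bremermann_limit(1.0):.3e}")
```

The bound is enormous but finite, which is the point: extrapolating exponential growth indefinitely eventually runs into physics rather than engineering.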

Even assuming unlimited growth in computational power, this does not prove that simulating conscious beings is technically possible. We don't know whether consciousness is a computable function, and if so, what computational resources are required to simulate it.

There may be fundamental obstacles to simulating consciousness that cannot be overcome by simply increasing computational power (S001).

🧩 Bostrom's Probabilistic Argument Contains Hidden Premises

Bostrom's trilemma appears to be rigorous logical reasoning, but it rests on several implicit premises, each of which can be challenged.

- First premise: probability space of realities. The argument assumes we can meaningfully apply probabilistic reasoning to the question of which "reality" we inhabit. This requires the existence of some probability space in which a measure can be defined over different types of realities, an assumption that is itself metaphysical and lacks obvious justification (S003).

- Second premise: principle of indifference. The argument assumes we should consider ourselves randomly selected from the set of all conscious beings. This principle is problematic in the context of anthropic reasoning and leads to well-known paradoxes such as the Doomsday Argument. Philosophers point out that applying probabilistic reasoning to questions about the fundamental nature of reality requires additional justification that Bostrom does not provide (S003).

🧠 Functionalism About Consciousness Remains an Unproven Philosophical Position

The argument from computational theory of consciousness depends entirely on the truth of functionalism—a philosophical theory about the nature of consciousness. However, functionalism is merely one of many competing theories of consciousness, and it faces serious objections.

John Searle's famous thought experiment, the "Chinese Room," is directed precisely against functionalism, arguing that syntactic symbol processing (computation) cannot generate semantic understanding and subjective experience (S001).

Even if functionalism is correct, this doesn't resolve the question of whether we can determine if we're in a simulation. If simulated consciousness is indistinguishable from "real" consciousness by all internal characteristics, then the distinction between them becomes purely external and possibly devoid of practical significance.

Chalmers acknowledges this problem, suggesting that in a certain sense, simulated reality is "real" for its inhabitants (S001). For more on the philosophical traps of consciousness, see the analysis of myths about conscious AI.

⚠️ Quantum Mechanics Provides No Evidence of Simulation

Interpreting quantum mechanics as a sign of simulation is a classic example of apophenia—perceiving patterns where none exist. Quantum uncertainty and wave function collapse have multiple interpretations within standard physics (Copenhagen interpretation, many-worlds interpretation, de Broglie-Bohm, etc.), none of which require the assumption of simulation.

As for the discreteness of spacetime, this remains an open question in quantum gravity. Some approaches (loop quantum gravity) suggest discreteness, others (string theory) do not. But even if spacetime is discrete at Planck scales, this is not proof of simulation—it's simply a fundamental property of physics.

The analogy with computer screen pixels is superficial and has no explanatory power. The discreteness of physics doesn't require explanation through simulation, just as the wave properties of matter don't require explanation through waves in a medium.

For more detail on quantum mysticism and its logical fallacies, see the analysis of quantum woo.

The Fundamental Problem of Unfalsifiability — and Why It Kills the Hypothesis's Scientific Value

The central problem with the simulation hypothesis isn't that it's wrong, but that it's unfalsifiable. This places it in a special category of claims that philosophers of science consider scientifically sterile. Karl Popper formulated the falsifiability criterion as the demarcation line between science and non-science: a scientific theory must make predictions that can be refuted by observations.

🔎 What Makes a Claim Unfalsifiable and Why That's a Problem

An unfalsifiable claim is one that's compatible with any possible observation. A classic example is the claim "God exists and acts in the world, but his actions are indistinguishable from natural processes." Such a claim is impossible to refute because any observation can be interpreted as compatible with it.

The simulation hypothesis has the same structure: it asserts the existence of an "external" reality (the simulation's creators) that by definition is inaccessible to observation from within the simulation (S003).

If a theory is compatible with any observation, it explains nothing. Explanation requires excluding alternatives — a theory must speak not only about what we observe, but also about what we should not observe if the theory is correct.

The simulation hypothesis makes no such predictions (S003). The problem with unfalsifiability isn't that such claims are necessarily false, but that they have no explanatory power.

📊 The Simulation Hypothesis and Classical Skepticism: The Same Problem

Philosophers point out that the simulation hypothesis is a modern version of classical skeptical scenarios, such as Descartes' evil demon or the "brain in a vat." Descartes proposed a thought experiment: what if everything we perceive is an illusion created by an evil demon deceiving our senses?

The modern version: what if we're brains in vats, connected to a computer that generates all our experiences? (S003)

| Scenario | Problem Structure | Why It's Not Scientific |
|---|---|---|
| Descartes' Evil Demon | External agent creates perfect illusion | No way to distinguish deception from reality |
| Brain in a Vat | Computer generates all experiences | Perfect illusion indistinguishable from reality |
| Simulation Hypothesis | Creators launched our reality as a program | External reality inaccessible to observation |

These scenarios are philosophically interesting because they force us to think about the nature of knowledge and justification of beliefs. But they aren't scientific hypotheses because they offer no way to distinguish the "deceived" state from the "undeceived" one (S003).

🧬 Why "Searching for Glitches" Isn't a Scientific Program

Some simulation hypothesis enthusiasts propose searching for "glitches" or anomalies in physical laws as proof of reality's simulated nature. This idea is appealing, but it faces several problems.

First, what exactly counts as a "glitch"? Any anomaly in the data can be explained as a measurement error, as an indication of new physics, or as a "simulation glitch." Without an independent criterion for distinguishing these interpretations, searching for glitches isn't a test of the hypothesis.

- Measurement Error: Problem with the instrument or methodology, requires repeating the experiment

- New Physics: Phenomenon requiring expansion of existing theories, tested through predictions

- Simulation Glitch: Interpretation that makes no new predictions and doesn't exclude alternatives

Second, even if we discover an unexplained anomaly, it wouldn't be proof of simulation — it would just be an unexplained anomaly. The history of science is full of examples of anomalies that eventually received explanations within expanded or new theories (Mercury's perihelion precession, the electron's anomalous magnetic moment, etc.). Interpreting an anomaly as a "simulation glitch" is an additional assumption that itself requires justification.

⚙️ The Problem of Underdetermination of Theory by Data

Philosophers of science have long known about the underdetermination problem: the same data can be explained by multiple competing theories. The simulation hypothesis is an extreme case of this problem.

Any observation we can make inside a simulation is compatible with both the simulation hypothesis and the hypothesis that we live in base reality. There's no logical way to choose between them based on data.

This means the simulation hypothesis can be neither confirmed nor refuted empirically. It remains in the realm of metaphysics, not science. Science requires not just the logical possibility of a theory, but its ability to exclude alternatives through predictions and observations.
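The same point can be stated in Bayesian terms, offered here as one possible formalization rather than the argument the cited philosophers make. If an observation is exactly as probable under the simulation hypothesis as under base reality, Bayes' rule returns the prior unchanged. The function name and the numbers below are illustrative assumptions.

```python
def posterior(prior: float, likelihood_h: float, likelihood_alt: float) -> float:
    """Bayes' rule for a hypothesis H against a single alternative.

    prior          -- P(H) before the observation
    likelihood_h   -- P(observation | H)
    likelihood_alt -- P(observation | not H)
    """
    evidence = prior * likelihood_h + (1.0 - prior) * likelihood_alt
    return prior * likelihood_h / evidence


if __name__ == "__main__":
    # Equal likelihoods: the observation cannot move the prior.
    print(posterior(0.3, 0.8, 0.8))
    # Unequal likelihoods: the observation counts as evidence.
    print(posterior(0.3, 0.9, 0.1))
```

Whatever prior one starts with, data that both hypotheses predict equally well leaves it where it was, so no amount of observation from inside can settle the question.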

A related problem appears in myths about conscious AI, which often rely on similar logic: if we can't distinguish consciousness from its imitation, then the distinction becomes philosophical rather than scientific. Similarly, if we can't distinguish simulation from reality, this distinction falls outside the bounds of science.

🔬 Why This Kills Scientific Value

A theory's scientific value lies in its ability to guide research, make new predictions, and exclude alternatives. The simulation hypothesis does none of this. It predicts no phenomena that wouldn't be predicted by alternative theories. It excludes no observations that would be excluded by alternative theories.

Moreover, the simulation hypothesis doesn't guide research in new directions. Physicists, biologists, and other scientists don't use it to plan experiments or develop new methods. It remains in the realm of popular philosophy and science fiction, not in the realm of active scientific work.

This doesn't mean the simulation hypothesis is useless as a philosophical idea. It can be useful for thinking about the nature of reality, consciousness, and knowledge. But as a scientific hypothesis, it's dead on arrival — not because it's wrong, but because it's unfalsifiable and makes no predictions that can be tested.