Anatomy of a myth: what makes AI misconceptions so resilient and why rational arguments against them fail

An AI myth is not simply a false statement. It's a self-sustaining cognitive construct that exploits fundamental features of human thinking: fear of the unknown, tendency to oversimplify complex systems, and the need for narratives that explain rapid technological change (S005).

AI myths spread faster than factual information precisely because they offer simple emotional answers to complex technical questions (S005).

⚠️ Three components of a persistent myth

- Emotional anchor: fear of job loss, anxiety about uncontrolled technology, or euphoria from promises of instant business transformation. Emotion is the primary filter for information perception.

- Simplified mental model: reducing a complex system to a single characteristic: "AI replaces people," "AI is always objective," "AI is magic." Simplification reduces cognitive load but distorts reality (S001).

- Social reinforcement: repetition of the myth through media, corporate marketing, and expert opinions without verification. Source authority replaces fact-checking (S003).

🧩 Why business leaders are particularly vulnerable

Company executives are at the highest risk: they must make strategic decisions about AI investments without deep technical training, under pressure from competitors and shareholder expectations (S003).

Lack of time for information verification, dependence on secondary sources, and cognitive load force reliance on heuristics instead of analysis. 67% of business leaders admit they made AI decisions based on incomplete or distorted information (S004).

🔁 Confirmation loop: how myths create reality

The most dangerous aspect of AI myths is their ability to create self-fulfilling prophecies. A company believing the myth "AI is too expensive for us" doesn't invest in the technology, falls behind competitors, and receives confirmation: "See, we can't afford this" (S004).

| Myth | Company action | Result | "Confirmation" |
|---|---|---|---|
| "AI is too expensive" | Refusal to invest | Falling behind competitors | "We can't afford this" |
| "AI will replace everyone" | Atmosphere of fear, sabotage | Project failure | "AI is dangerous for us" |

This feedback loop makes the myth practically invulnerable to external criticism: it is confirmed by its own consequences. Rational arguments don't work because they don't address the emotional anchor and don't offer an alternative narrative.

Debunking myths requires not refutation but reconstruction: replacing the simplified model with a more accurate one, the emotional anchor with concrete mechanisms, social reinforcement with verifiable facts. This is the work of cognitive immunology, not rhetoric.

Myth One: "AI is a magic wand that will instantly solve all business problems without effort on our part"

This misconception is the most expensive for business. Launch Consulting calls it the "magical transformation myth" and notes that it leads to the highest number of AI project failures (S003). Companies expect that implementing AI will automatically optimize processes, increase profits, and solve structural problems without requiring changes to the organization, data, or strategy.

🔬 Reality: AI is an amplifier of existing processes, not their replacement

Blue Prism emphasizes: AI effectiveness directly depends on the quality of integration into existing workflows and alignment with the organization's strategic goals (S001). AI doesn't create strategy—it executes it faster and at greater scale.

Launch Consulting presents a critical fact: AI thrives on data, and without quality, structured, relevant data, any system will produce useless or harmful results (S003). Research shows that 70% of failed AI projects failed not because of the technology, but due to poor data preparation and lack of clear business objectives.

- Data quality determines result quality—garbage in = garbage out.

- Strategic objectives must be formulated BEFORE choosing technology, not after.

- Integration with existing processes requires workflow redesign, not simply adding a new tool.

📊 The cost of illusion: three categories of losses from believing in magical solutions

The first category is direct financial losses from unjustified investments. Companies spend resources implementing AI solutions without prior process audits, end up with a system that automates inefficient operations, and are surprised by the lack of ROI (S001).

The second is missed opportunities: while the organization waits for "magic," competitors methodically implement AI into specific, well-prepared processes and gain real advantage (S003). The third is reputational damage: the failure of a high-profile AI project creates internal resistance to the technology for years to come (S004).

An AI project failure isn't just wasted money. It's a loss of trust in the technology within the organization and a freeze on all subsequent initiatives, even if they're well-founded.

⚙️ The mechanism of delusion: why AI vendor marketing reinforces the myth

Arion Research points to the role of AI solution providers in perpetuating this myth (S004). Marketing materials often promise "transformation in 90 days," "automation without programming," and "immediate ROI," while remaining silent about the need for data preparation, staff training, iterative model tuning, and integration with legacy systems.

This isn't deception in a legal sense, but the creation of unrealistic expectations that guarantee disappointment. ProfileTree notes: companies that successfully implemented AI spent an average of 6–18 months preparing infrastructure before launching their first productive algorithm (S008).

Myth Two: "AI Will Completely Replace Human Jobs and Make Most Professions Obsolete"

This myth is the most emotionally charged and politically exploited. Blue Prism calls it the "total replacement myth" and notes that it blocks AI adoption more effectively than any technical limitations (S001). Fear of job loss creates resistance at all organizational levels, from frontline employees to unions and regulators.

🧪 Data Against Fear: What Research Shows About AI's Real Impact on Employment

The World Economic Forum projects the creation of 97 million new jobs by 2025 (S011). This doesn't mean zero losses: the same forecast estimates roughly 85 million roles displaced over that period, so some positions will indeed disappear, but the overall balance is positive.

AI's primary impact is the transformation of existing roles, not their elimination (S004). Routine operations become automated, freeing time for analysis, decision-making, and creative work.

AI doesn't replace people—it amplifies human potential. AI excels at processing large data volumes and executing repetitive operations, but critically depends on human context, ethical judgment, and strategic thinking (S003).

🧬 New Roles: What Professions AI Creates and Why There Are More Than You Think

Direct roles created by the AI industry: AI specialists, data scientists, machine learning engineers, AI ethics specialists, algorithm auditors (S011).

Indirect roles expand the spectrum: process transformation managers, data preparation specialists, AI model trainers, business results interpreters, human-machine interface designers (S010).

- AI regulation attorneys

- Psychologists studying human-AI interaction

- Algorithmic bias detection and mitigation specialists (S008)

⚠️ The Real Threat: Not Replacement, But Unpreparedness for Transformation

The danger isn't that AI will take jobs, but that workers and organizations won't prepare for change (S009). Companies that don't invest in workforce reskilling will face an employment crisis—not because AI replaced people, but because people didn't acquire the skills to work with AI.

Successful organizations use AI for employee upskilling and creating new roles focused on collaboration with AI technologies (S004). This is a strategic choice, not a technological inevitability.

Myth Three: "All AI Technologies Are the Same — Just Different Names for One Thing"

This misconception is particularly dangerous for business leaders making investment decisions. Arion Research calls it the "AI monolith myth" and notes that it leads to choosing inappropriate technologies for specific tasks (S004). Executives think of AI as a single technology, but it's actually an umbrella term covering a wide spectrum of methods, tools, and capabilities with different applications and limitations.

🔎 Taxonomy of Reality: Six Core AI Categories

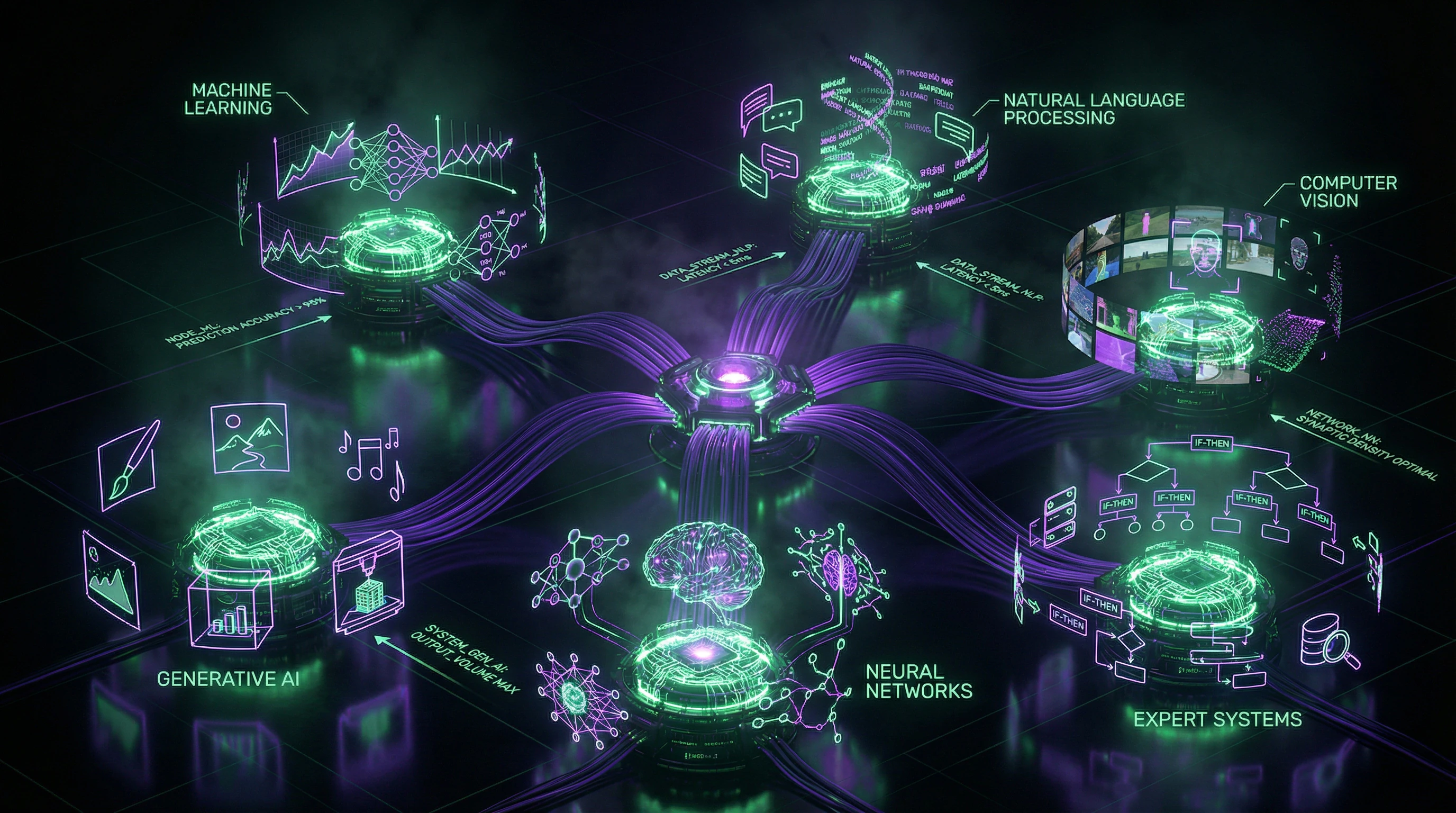

Arion Research identifies key categories: machine learning (ML), natural language processing (NLP), computer vision, generative AI, neural networks, and expert systems (S004). Each category solves specific problems and requires different data, infrastructure, and expertise.

| Category | Primary Task | Input Data | Output |
|---|---|---|---|
| Machine Learning (ML) | Predictive analytics, classification | Structured numerical data | Probability, class, score |
| NLP | Text and speech processing | Text, audio | Meaning, classification, translation |
| Computer Vision | Image and video analysis | Images, video streams | Objects, coordinates, anomalies |
| Generative AI | Content creation | Text prompts, examples | Text, images, code |
| Neural Networks | Complex pattern recognition | Multidimensional data | Hidden representations, predictions |
| Expert Systems | Formalized decision-making | Facts, domain rules | Decision with explanation |

📊 Practical Consequences: How Wrong Choices Kill Projects

Typical mistake: a company chooses generative AI for a task requiring precise classification and gets creative but inaccurate results (S012). An organization implements deep learning for simple regression, overpaying for computational resources and getting a model that's impossible to interpret.

Another scenario: a business tries to use an NLP model trained on English to analyze specialized technical documentation in another language and is surprised by the poor quality (S012). Blue Prism emphasizes: generative AI is well suited to substantial tasks, especially automation and improving the efficiency of legacy and modern systems, but it is not a universal solution (S001).

Choosing the wrong AI category isn't a technical error — it's strategic. It leads to lost investments, team frustration, and discrediting AI within the organization.

🧰 Selection Criteria: Five Questions to Determine the Right Category

- Nature of input data: structured numbers, text, images, time series?

- Type of output: classification, prediction, generation, optimization?

- Interpretability requirements: do you need to explain every decision or is overall accuracy sufficient?

- Resource constraints: what computational power and training time are available?

- Data availability: how many labeled examples exist for training?

Launch Consulting notes: answers to these five questions determine the appropriate AI category with 80–90% accuracy (S003). Skipping this analysis is the primary cause of implementation failures.
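
As a rough illustration of how the answers can narrow the choice, the sketch below maps three of the five questions (input data, output type, interpretability) to the categories in the table above. The `TaskProfile` fields and the rules in `suggest_category` are hypothetical simplifications written for this example, not a method taken from any of the cited sources; resource and data-availability constraints are left out entirely.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    # All field names and allowed values are assumptions made for this sketch.
    input_kind: str    # "tabular", "text", "audio", "image", "video", "rules"
    output_kind: str   # "classification", "prediction", "generation", "decision"
    must_explain: bool  # True if every individual decision must be explainable


def suggest_category(task: TaskProfile) -> str:
    """Return a first-guess category from the taxonomy table (illustrative rules only)."""
    if task.output_kind == "generation":
        return "Generative AI"
    if task.input_kind == "rules" or (task.must_explain and task.output_kind == "decision"):
        return "Expert Systems"
    if task.input_kind in ("image", "video"):
        return "Computer Vision"
    if task.input_kind in ("text", "audio"):
        return "NLP"
    # Structured numerical data with a class, score, or probability as output.
    return "Machine Learning (ML)"


# Example: classifying support tickets written in free text.
print(suggest_category(TaskProfile("text", "classification", False)))
```

A real selection process would treat this output as a starting hypothesis to check against the remaining two questions, not as a final answer.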

Myth Four: "AI Implementation Is Too Expensive and Complex for Our Organization"

This myth blocks AI adoption in small and medium-sized businesses, creating a competitive advantage for large corporations. Arion Research calls it the "inaccessibility myth" and notes that it's based on outdated perceptions about the cost and complexity of AI technologies (S004).

Reality has changed over the past five years: advances in cloud computing and the availability of pre-trained models have made AI more accessible than ever (S004).

🔬 The Accessibility Revolution: How Cloud Platforms Changed AI Economics

Many cloud-based AI tools offer low barriers to entry and scalability suitable for both small and large businesses (S004). Launch Consulting emphasizes: thanks to cloud computing and open-source AI frameworks, organizations of any size can access AI solutions for a fraction of the historical cost (S003).

Blue Prism adds: most organizations can now benefit from AI without high costs (S001).

📊 Real Costs: Three Pricing Models and Their Applicability

ProfileTree identifies three main models for accessing AI (S008).

- Cloud APIs with pay-per-use: companies pay only for actual requests to the model, with no infrastructure investment. Costs start at cents per thousand requests for basic models.

- Pre-trained models with fine-tuning: organizations take a ready-made model (often free or inexpensive) and train it further on their own data. Costs are mainly for computational resources for training, which can be rented temporarily.

- Fully custom solutions: development from scratch, requiring a team of specialists and significant investment. This model is only needed for unique tasks where ready-made solutions don't work.

Most business tasks are solved by the first two models (S008).
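
To make the pay-per-use arithmetic concrete, here is a minimal sketch of the first model. The function name `monthly_api_cost` and both figures (200,000 requests, $0.05 per thousand) are hypothetical values chosen for illustration; real prices vary by provider and model.

```python
def monthly_api_cost(requests_per_month: int, price_per_1k_requests: float) -> float:
    """Pay-per-use cost: billed in proportion to request volume, with no fixed fee."""
    return requests_per_month / 1000 * price_per_1k_requests


# Hypothetical figures for illustration only.
cost = monthly_api_cost(requests_per_month=200_000, price_per_1k_requests=0.05)
print(f"${cost:.2f} per month")  # $10.00 per month
```

The same function can be rerun with a provider's published rates to compare the pay-per-use model against the rental cost of fine-tuning compute.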

⚙️ Hidden Complexity: Where Problems Actually Arise

Bernard Marr (Forbes) points to the real sources of complexity: not the AI technology itself, but integration with existing systems, organizational change management, and ensuring data quality (S010). Launch Consulting confirms: the cost barrier isn't as high as before, but the organizational readiness barrier remains significant (S003).

Companies underestimate the need for staff training, process reengineering, and creating a culture that supports AI experimentation (S003). This is not a technical challenge but an organizational one—and this is where the real cost of implementation lies.

| Barrier | Nature of Problem | Strategy to Overcome |
|---|---|---|
| Technology cost | Overestimated; cloud solutions are cheap | Start with cloud APIs, pay per use |
| System integration | Requires process redesign | Pilot projects on isolated tasks |
| Team competencies | Lack of knowledge, not shortage of people | Train existing employees, don't hire experts |
| Organizational culture | Fear of failure, conservatism | Support experimentation, transparency of results |

Arion Research recommends: start with small-scale pilot projects, use ready-made cloud solutions, and invest in building AI competencies among existing employees rather than hiring expensive external experts (S004).

Myth Five: "AI is completely objective and free from bias, unlike humans"

This is one of the most dangerous myths because it creates a false sense of security. Blue Prism calls it the "myth of machine objectivity" and warns: no system can be completely objective, just as no person can be unaffected by the surrounding world (S001). AI inherits and often amplifies biases present in training data (S001).

An algorithm is not a judge, but a mirror. If a biased society looks into the mirror, the reflection will be biased.

🧬 The mechanism of bias: how human distortions are encoded in algorithms

The fundamental problem is simple: AI models are trained on data, and human biases can inherently exist in that data (S004). If historical hiring data shows preference for certain demographic groups, an AI system trained on that data will reproduce and amplify that bias (S004).

Blue Prism adds: unfair AI biases arise when AI applications are developed with inherent human prejudices (S001). AI algorithms must be regularly updated and audited to avoid biases and ensure accuracy (S011).

🔬 Documented cases: three categories of algorithmic discrimination

ProfileTree classifies types of AI bias (S008):

- Data bias: the training set is not representative of the real population. A facial recognition system trained predominantly on images of one racial group shows low accuracy for other groups (S008).

- Algorithm design bias: the choice of optimization metric or model architecture implicitly favors certain outcomes. A credit scoring system optimized only to minimize defaults may systematically deny groups with less credit history, even if they are creditworthy (S008).

- Interpretation bias: AI results are used without considering context. For example, the risk scores of a recidivism prediction system are applied mechanically, without accounting for social factors (S008).

Each category requires different approaches to detection and correction. Ignoring any of them leads to systematic discrimination disguised as "objectivity."

🛡️ Protection protocol: five mandatory practices for minimizing bias

| Practice | What it does | Why it's critical |
|---|---|---|
| Diverse training data | Active search for and inclusion of data from underrepresented groups (S004) | Without this, the model is blind to entire segments of reality |
| Regular bias monitoring | Automated tests checking for performance differences across demographic groups (S004) | Bias is invisible until you measure it |
| Adaptability | Willingness to adjust the system when problems are detected (S004) | A static algorithm degrades as reality changes |
| Guardrails | Preventing problems; flagging unfair behavior (S001) | The system must be able to say "no" to itself |
| Decision transparency | Auditors and users understand why the system made a specific decision (S011) | A black box is not objectivity, it's irresponsibility |

These practices don't guarantee perfection, but they transform AI from a black box into a system that can be audited, criticized, and improved. This is the only path to responsible use.
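
The "regular bias monitoring" practice can be sketched as a small automated check that compares accuracy across groups. Everything here is an assumption made for illustration: the record format, the group labels, the helper names, and the 0.10 tolerance. Accuracy is also only one of several possible fairness measures.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, y_true, y_pred). Returns {group: accuracy}."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {g: correct[g] / total[g] for g in total}


def max_accuracy_gap(records):
    """Largest difference in accuracy between any two groups."""
    acc = accuracy_by_group(records)
    return max(acc.values()) - min(acc.values())


# Hypothetical evaluation records: (group, true label, predicted label).
records = [
    ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 1),
    ("B", 1, 0), ("B", 0, 0), ("B", 1, 0), ("B", 0, 1),
]
GAP_THRESHOLD = 0.10  # assumed tolerance; choose per application
gap = max_accuracy_gap(records)
if gap > GAP_THRESHOLD:
    print(f"Bias alert: accuracy gap of {gap:.2f} between groups")
```

Running a check like this on every model update turns the monitoring practice into a repeatable test rather than a one-off review.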

Myth Six: "AI requires nothing but data — just upload information and get results"

This oversimplification ignores the critical importance of data quality, relevance, and management (S001). Dirty data means garbage in, garbage out.

An AI system doesn't work with information in general, but with specific patterns in specific datasets. If the data contains errors, gaps, outdated records, or sampling bias, the model will learn to reproduce these defects with mathematical precision.

Data quality determines the ceiling of result quality. No algorithm can extract signal from noise if noise is all you've given it.

Second layer: data needs preparation. This means normalization, encoding categories, handling missing values, removing outliers, balancing classes (if needed). Each step is a decision that affects the outcome.
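
Three of these preparation steps can be sketched in plain Python. The helper names and sample values are hypothetical, and each helper embodies one choice among many (mean imputation, min-max scaling, one-hot encoding); a different choice at any step would change what the model sees.

```python
from statistics import mean


def impute_missing(values):
    """Replace None with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in values]


def min_max_normalize(values):
    """Rescale values to the [0, 1] range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def one_hot(categories):
    """Encode category labels as 0/1 vectors (columns in sorted label order)."""
    labels = sorted(set(categories))
    return [[int(c == label) for label in labels] for c in categories]


# Hypothetical sample data.
ages = [20, None, 40, 60]
clean_ages = min_max_normalize(impute_missing(ages))
regions = one_hot(["north", "south", "north"])
print(clean_ages)  # [0.0, 0.5, 0.5, 1.0]
print(regions)     # [[1, 0], [0, 1], [1, 0]]
```

Note that the imputed value (the mean, 40) lands exactly in the middle of the scaled range, which is one way a preparation decision quietly shapes the result.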

Third layer: relevance. Coffee sales data won't help predict electricity demand. You need to understand which variables are actually connected to the target phenomenon, and which are noise or correlation artifacts.

- Audit data sources: where they come from, how they were collected, who verified them

- Check completeness: are there gaps, how are they distributed, why did they occur

- Analyze biases: is the sample representative, or does it reflect only part of reality

- Validate relevance: are features connected to the goal or is this spurious correlation

- Monitor drift: do patterns in the data change over time
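
Two items from the checklist above, completeness and drift, lend themselves to simple automated checks. The sketch below is a minimal version under stated assumptions: rows are dictionaries, drift is approximated by how far the mean has moved in units of the reference standard deviation, and the cutoff of 2.0 is an arbitrary illustrative value, not a standard.

```python
from statistics import mean, stdev


def missing_rate(rows, field):
    """Share of rows where `field` is absent or None."""
    missing = sum(1 for row in rows if row.get(field) is None)
    return missing / len(rows)


def mean_shift(reference, current):
    """Shift of the current mean from the reference mean, in reference standard deviations."""
    return abs(mean(current) - mean(reference)) / stdev(reference)


# Hypothetical records: two of four rows lack a usable "income" value.
rows = [{"income": 50}, {"income": None}, {"income": 70}, {}]
print(missing_rate(rows, "income"))  # 0.5

# Hypothetical feature values at training time and in production.
train_values = [10, 12, 11, 9, 13]
live_values = [15, 16, 14, 17, 15]
DRIFT_THRESHOLD = 2.0  # assumed cutoff, not a standard
if mean_shift(train_values, live_values) > DRIFT_THRESHOLD:
    print("Possible drift: review or retrain the model")
```

Source auditing, representativeness, and relevance checks still require domain judgment and are not captured by these two functions.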

Fourth layer: management. Data needs versioning, documentation, access control, updates. If you uploaded a dataset a year ago and forgot about it — the model is running on dead data.

Fifth layer: ethics and regulation. If data contains information about protected categories (race, gender, health), you need to understand how this affects fairness of results (S005). This isn't a technical problem — it's a problem of responsibility.

"Just upload the data" is like telling a surgeon: "Just pick up the scalpel." The tool exists, but without diagnosis, preparation, and experience, the result will be catastrophic.

Real cost: data preparation often takes 60–80% of project time. This isn't a bug, it's a feature. Organizations that understand this get working systems. Those that ignore it get expensive failures.