What Exactly We Mean by "AI Breakthrough" — and Why This Definition Is Critical for Analysis

Before evaluating ChatGPT, we need to establish clear criteria. The term "breakthrough" has lost its operational meaning in the AI context: some apply it to improvements in benchmark metrics, others reserve it for fundamental architectural innovations, and still others use it for mass adoption in everyday life.

Without a definition, we end up comparing incomparables. One expert sees a revolution, another an overhyped chatbot, and both can be right: they are simply talking about different things.

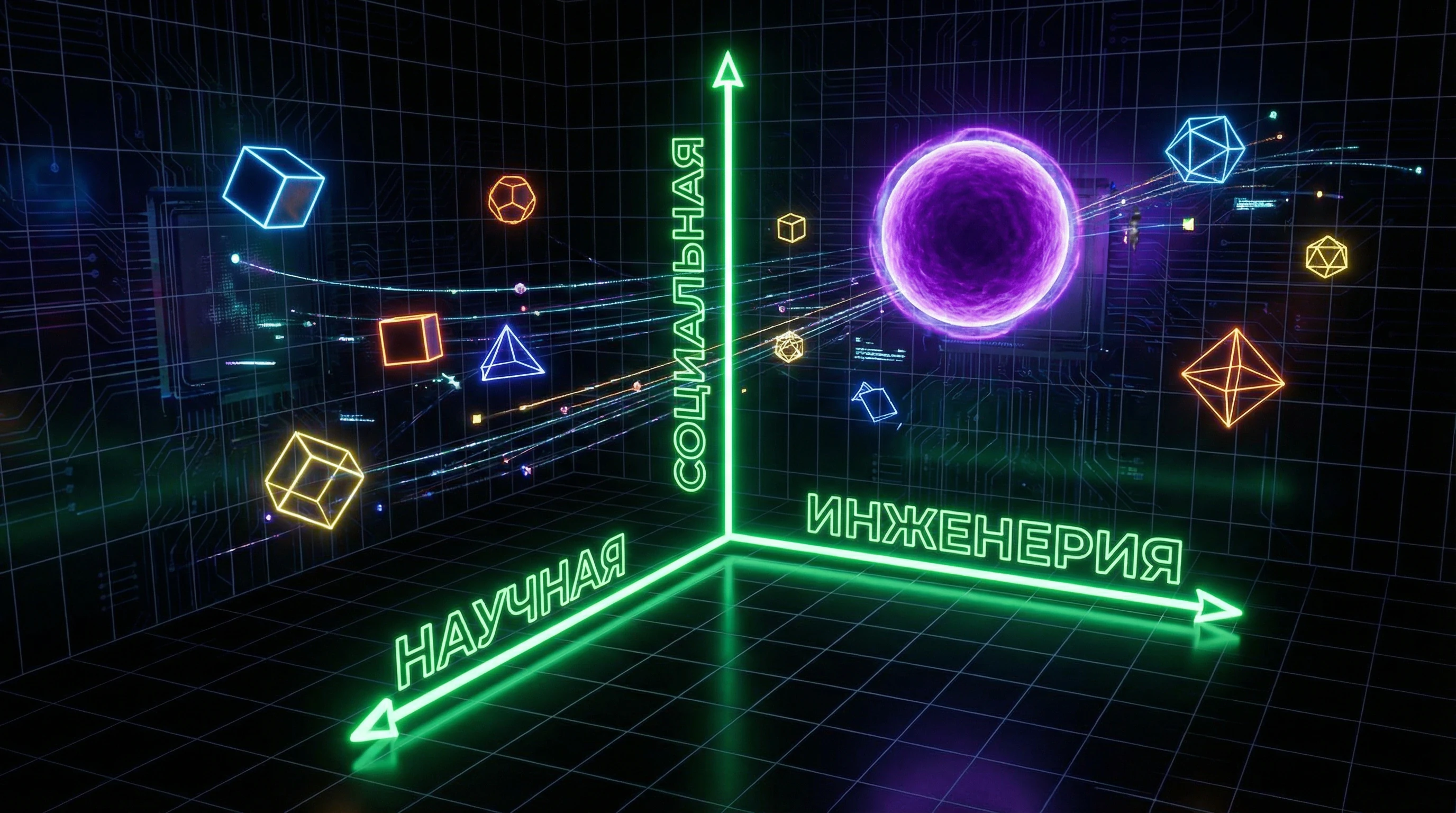

🔎 Three Dimensions of Technological Breakthrough

- Scientific Breakthrough

- Fundamental expansion of theoretical understanding: a new algorithm, architecture, or learning principle that unlocks previously unattainable capabilities. Criteria: publication in top-tier peer-reviewed venues (in AI, often conferences rather than journals), reproducibility by independent groups, expansion of theoretical boundaries.

- Engineering Breakthrough

- Qualitative leap in practical implementation — scaling, efficiency, reliability, accessibility of existing approaches. Criteria: order-of-magnitude improvement in key metrics, multiple-fold reduction in cost or energy consumption, new levels of scalability.

- Social Breakthrough

- Technology's transition from laboratories to mass use, changing the behavior of millions of people, creating new markets (S001). Criteria: exponential user base growth, transformation of established practices, emergence of new professions, regulatory response.

⚠️ ChatGPT's Asymmetry: Where It's a Breakthrough and Where It Isn't

ChatGPT demonstrates an interesting asymmetry. From a scientific standpoint, it builds on established work: the transformer architecture was introduced in 2017, and GPT-3 appeared in 2020. ChatGPT contains no fundamentally new algorithmic principles.

The engineering breakthrough is evident: OpenAI created a system processing millions of simultaneous requests with acceptable latency and cost. The social breakthrough is undeniable — for the first time, generative AI became a mass tool accessible to anyone with a browser (S001).

Popularity is not proof of scientific innovation. The iPhone was a social and engineering breakthrough but contained no fundamentally new scientific principles. Similarly, ChatGPT can be an engineering and social breakthrough without a scientific revolution.

🎯 Why This Confusion Has Practical Consequences

Journalists and marketers systematically conflate the three dimensions, using social success (user numbers, media attention) as proof of a scientific breakthrough. This is a classic category error.

- Investors making decisions based on media hype overestimate short-term potential and underestimate long-term challenges.

- Researchers whose grants depend on "breakthrough" rhetoric face pressure to exaggerate the novelty of their work.

- Educational institutions rushing to implement AI risk investing in tools that don't solve real pedagogical problems (S006).

📊 Applying Criteria to ChatGPT

| Dimension | Status | Justification |
|---|---|---|
| Scientific | Absent | Basic principles known for years; fundamental problems (hallucinations, lack of true understanding, inability to learn in real-time) remain unsolved |
| Engineering | Partial | Scaling is impressive, but architectural limitations remain unresolved |
| Social | Unequivocal | Technology changed public discourse about AI and created a new class of applications (S001) |

This asymmetry explains why experts give opposite assessments: they focus on different dimensions. For the remaining sections of this article, keep in mind that ChatGPT is a social and engineering success, not a scientific revolution. This changes all subsequent conclusions about its impact and potential.

Steel Man Version of the Argument: Five Strongest Cases for ChatGPT's Revolutionary Nature

Intellectual honesty requires starting with the strongest version of the opposing position. Before criticizing the hype around ChatGPT, we must formulate the most compelling arguments that this is indeed a revolutionary technology. The "steel man" principle (opposite of "straw man") involves constructing the strongest version of the opponent's position, not a weak caricature of it.

🔬 First Argument: Unprecedented Speed of Mass Adoption as an Indicator of Real Value

ChatGPT reached an estimated 100 million monthly active users within 2 months of launch, by widely cited estimates faster than any consumer application before it. For comparison: TikTok took 9 months, Instagram 2.5 years, and Facebook 4.5 years.

This exponential growth cannot be explained by marketing or curiosity alone. Millions of people continue using ChatGPT daily to solve real problems: writing code, drafting documents, learning, creating. If the technology didn't provide real value, user retention rates would be low. Instead, we observe sustained growth and integration into workflows (S001).

The speed of ChatGPT adoption in the corporate sector is unprecedented. The world's largest companies — from Microsoft to Salesforce — are integrating GPT technologies into their products. These aren't speculative investments, but strategic decisions based on measurable productivity gains.
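To make the adoption comparison at the start of this argument concrete, the sketch below converts the months-to-100-million figures into average users gained per month and a relative speed factor. It uses only the estimates quoted above (not independently verified data) and assumes, unrealistically, linear growth over each period; the function names are illustrative.

```python
# Months each product took to reach roughly 100 million users, as cited in the text.
# These are widely reported estimates, not independently verified data.
MONTHS_TO_100M = {
    "ChatGPT": 2,
    "TikTok": 9,
    "Instagram": 30,  # 2.5 years
    "Facebook": 54,   # 4.5 years
}
TARGET_USERS = 100_000_000


def average_monthly_gain(months: float, target: int = TARGET_USERS) -> float:
    """Average users added per month, assuming (unrealistically) linear growth."""
    if months <= 0:
        raise ValueError("months must be positive")
    return target / months


def times_faster(baseline: str, reference: str = "ChatGPT") -> float:
    """How many times faster `reference` reached the target than `baseline`."""
    return MONTHS_TO_100M[baseline] / MONTHS_TO_100M[reference]


if __name__ == "__main__":
    for app, months in MONTHS_TO_100M.items():
        gain_millions = average_monthly_gain(months) / 1e6
        print(f"{app:<10} {months:>3} months  ~{gain_millions:5.1f}M users/month")
    for app in ("TikTok", "Instagram", "Facebook"):
        print(f"ChatGPT reached the mark {times_faster(app):.1f}x faster than {app}")
```

On these figures ChatGPT's pace works out to 4.5 times TikTok's, 15 times Instagram's, and 27 times Facebook's. A linear average ignores the shape of each growth curve and says nothing about retention; it only restates the speed gap in comparable units.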

📊 Second Argument: Qualitative Leap in AI Accessibility for Non-Programmers

Before ChatGPT, using advanced machine learning models required technical expertise: knowledge of Python, understanding of APIs, prompt engineering skills. ChatGPT democratized access to AI, making it available through natural language.

This isn't an incremental improvement — it's a qualitative leap, analogous to the transition from command line to graphical interface in the 1980s. Millions of people who have never written code can now use the capabilities of large language models to automate tasks, analyze information, generate content (S001).

- AI-Assisted Learning

- Students use ChatGPT not just for cheating, but for in-depth study of complex concepts, obtaining personalized explanations, practicing languages (S006). The technology has created a new category of educational practices that could potentially transform the approach to learning.

🧬 Third Argument: Emergent Abilities as a Sign of Qualitative Transition

Large language models demonstrate emergent abilities — skills that weren't explicitly programmed and arise only when reaching a certain scale. GPT-3 and GPT-4 show capability for multi-step reasoning, solving mathematical problems, writing functional code, understanding context at a level unattainable for previous generations of models.

This isn't just quantitative improvement of metrics — it's a qualitative transition where the system begins demonstrating behavior resembling human intelligence in narrow domains. Critics object that this is still statistical prediction of the next token, not true understanding. But functionally the difference becomes immaterial if the system solves problems that previously required human intelligence.

The philosophical question of "real understanding" may be less important than the practical fact: ChatGPT passes many tests we traditionally used to evaluate intelligence.

💎 Fourth Argument: Catalyst for the Entire AI Innovation Ecosystem

Even if ChatGPT itself isn't a fundamental scientific breakthrough, it catalyzed a wave of innovation in adjacent areas. Hundreds of startups have emerged building specialized applications on the GPT API. Competitors (Google Bard, Anthropic Claude, Meta LLaMA) accelerated development of their own models.

The research community intensified work on solving fundamental problems: hallucinations, interpretability, alignment with human values. ChatGPT created a "Sputnik moment" for AI — an event that mobilized resources and attention across the entire industry (S001).

- Governments are developing regulatory frameworks for AI

- Educational institutions are revising curricula

- The legal community is discussing copyright and liability issues

- Philosophers are returning to fundamental questions about the nature of intelligence and consciousness

Regardless of whether ChatGPT itself is a breakthrough, it has undoubtedly become a trigger for systemic changes in society.

⚙️ Fifth Argument: Economic Transformation and New Business Models

ChatGPT created a new economic category: "AI as a service" for the mass market. OpenAI demonstrates that large language models can be monetized through subscriptions ($20/month for ChatGPT Plus) and API access, creating a sustainable business model.

This solves a critical problem that plagued the AI industry for decades: how to turn research breakthroughs into profitable products. OpenAI's valuation of $80+ billion isn't pure speculation — it's based on real revenue and measurable impact on productivity in the corporate sector.

| Business Model | Advantage | Scalability |
|---|---|---|
| Subscription ($20/month) | Predictable revenue, direct user connection | Limited by purchasing power |
| API Access | Embedding in corporate systems, network effects | Exponential with ecosystem growth |
| Foundation Model | Universal base for thousands of applications | Dominance of several major players |

ChatGPT proved the viability of the "foundation model" — a universal base model that can be adapted for thousands of specialized applications. This creates network effects and economies of scale that could lead to dominance by several major players in AI infrastructure, similar to how AWS dominates cloud computing. The economic implications of this shift may be more significant than the technical details of the models themselves.

Evidence Base: What Data Says About ChatGPT's Real Capabilities and Limitations

Empirical research paints a picture more complex than marketing narratives.

📊 Benchmarks and Metrics: What Standard AI Tests Actually Measure

OpenAI reports impressive results: GPT-4 scores around the 90th percentile on the Uniform Bar Exam and the 89th percentile on SAT Math. But critical analysis reveals three significant problems (S001).

First — "data contamination": test examples may have been present in the training corpus, inflating results. Second — benchmarks measure narrow pattern recognition skills, not deep understanding. The model can correctly answer a physics question by simply recognizing statistical patterns in the wording, without conceptual understanding of the laws.

The third problem is critical: standard tests don't reflect real-world conditions — no time constraints, no consequences for errors, no contextual pressure. This creates systematic bias toward overestimation.

🧪 Performance Research in Real-World Tasks

A 2023 MIT and Stanford study reported that programmers using GPT-4 worked 55% faster and that their code quality, as rated by experts, improved by 40%. But results vary radically by task type.

| Task Type | Performance Improvement | Result Reliability |
|---|---|---|
| Routine operations (CRUD, basic algorithms) | +80% | High |
| Medium complexity (integration, optimization) | +40% | Medium |
| Architectural decisions | +10% | Low |
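Because the gains in the table differ so much by task type, the overall effect for a given person depends on how their time is split. The sketch below assumes that "+80%" means the task type is completed 1.8 times as fast, and combines the per-task speedups with a time-weighted harmonic mean (the same reasoning as Amdahl's law), rather than a simple average of percentages. The task mixes are hypothetical illustrations, not measured data.

```python
from typing import Mapping

# Gains from the table above, read (as an assumption) as throughput multipliers:
# "+80%" means that task type is completed 1.8x as fast as without AI.
GAINS = {"routine": 0.80, "medium": 0.40, "architecture": 0.10}


def blended_speedup(time_shares: Mapping[str, float],
                    gains: Mapping[str, float] = GAINS) -> float:
    """Overall speedup when each task type speeds up independently.

    time_shares: fraction of the original working time spent on each task type
    (must sum to 1). New time = sum(share / (1 + gain)); speedup = 1 / new time.
    This is a weighted harmonic (Amdahl-style) combination, not a plain average.
    """
    total = sum(time_shares.values())
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"time shares must sum to 1, got {total}")
    new_time = sum(share / (1.0 + gains[task]) for task, share in time_shares.items())
    return 1.0 / new_time


if __name__ == "__main__":
    # Hypothetical work mixes, not measured data.
    routine_heavy = {"routine": 0.7, "medium": 0.2, "architecture": 0.1}
    architecture_heavy = {"routine": 0.2, "medium": 0.3, "architecture": 0.5}
    for name, mix in (("routine-heavy", routine_heavy),
                      ("architecture-heavy", architecture_heavy)):
        print(f"{name}: overall gain {blended_speedup(mix) - 1:+.0%}")
```

Under these assumptions a routine-heavy mix gains about 61% overall, while an architecture-heavy mix gains about 28%, which is one way to read the claim that results "vary radically."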

In academic writing, a paradox emerges: students write faster and make fewer grammatical errors, but demonstrate more superficial understanding and less originality in argumentation (S006). The technology is simultaneously a breakthrough in efficiency and a source of degradation in learning depth.

⚠️ Systematic Errors and Hallucinations

Hallucinations — generating plausible but factually incorrect information — are a critical problem. GPT-4 hallucinates in 15–20% of responses to factual questions (S001).

- Source Fabrication

- The model "cites" scientific papers that don't exist. Dangerous in medicine and law, where errors have consequences.

- Fact Distortion

- Mixing details from different events, creating hybrid narratives that sound convincing.

- Logical Inconsistencies

- Contradictory statements within a single response that users may miss during casual reading.

- Temporal Errors

- Outdated information presented as current. Especially dangerous in rapidly changing fields.

Critically: hallucinations aren't random — they systematically occur more frequently in areas where training data was lower quality or contradictory. In medicine and law, the rate reaches 30%. The model outputs incorrect information with high confidence, without uncertainty indicators.
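A per-answer error rate understates the risk of a working session that involves many factual questions. The sketch below uses the rates cited above (15 to 20% in general, up to 30% in medicine and law) and assumes, as a simplification, that each answer errs independently; the text itself notes that errors cluster by domain, so real sessions may behave differently.

```python
def p_at_least_one_error(per_answer_rate: float, n_answers: int) -> float:
    """Probability that at least one of n answers contains a hallucination.

    Assumes each answer errs independently with the same probability, which is a
    simplification: errors cluster in domains with weaker training data.
    """
    if not 0.0 <= per_answer_rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    if n_answers < 0:
        raise ValueError("n_answers must be non-negative")
    return 1.0 - (1.0 - per_answer_rate) ** n_answers


def expected_errors(per_answer_rate: float, n_answers: int) -> float:
    """Expected number of hallucinated answers among n.

    Holds by linearity of expectation even without the independence assumption.
    """
    return per_answer_rate * n_answers


if __name__ == "__main__":
    # Rates cited in the text: 15-20% for factual questions, up to 30% in medicine/law.
    for rate in (0.15, 0.20, 0.30):
        p10 = p_at_least_one_error(rate, 10)
        print(f"rate {rate:.0%}: P(>=1 error in 10 answers) = {p10:.0%}, "
              f"expected errors = {expected_errors(rate, 10):.1f}")
```

Under the independence assumption, ten factual answers contain at least one hallucination with probability of roughly 80% at a 15% rate, 89% at 20%, and 97% at 30%, which is why unverified use in high-stakes fields is risky.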

🧾 Comparative Analysis: ChatGPT Versus Alternatives

Objective evaluation requires comparison not with an abstract ideal, but with real alternatives. In programming, GitHub Copilot outperforms traditional IDE autocompletion but falls short of experienced programmers in architectural decisions. In medical diagnosis, GPT-4 shows results at the level of medical students, significantly trailing practicing physicians in rare cases.

The competence paradox: ChatGPT is most effective as an amplifier for mid-level specialists. For beginners it's dangerous — they can't recognize hallucinations. For experts it's often redundant — they solve tasks faster independently than formulating prompts and verifying results (S001).

🔎 Long-Term Research: Effect Sustainability and Adaptation

Most research focuses on short-term effects. Long-term data reveals a more complex picture: initial enthusiasm often gives way to disappointment when users encounter limitations.

A study of student adaptation to AI assistants showed that after 6 months, three groups form (S006):

- Dependent (30%) — stop developing their own skills, rely on AI even for simple tasks.

- Integrators (50%) — use AI strategically to accelerate routine work while maintaining focus on complex tasks.

- Abandoners (20%) — discontinue use due to disappointment in quality or ethical concerns.

ChatGPT's long-term impact will be more differentiated than optimists and pessimists predict. The technology isn't universal — its effect depends on context, user competence, and task type. This requires systematic reality checking instead of abstract predictions.

Mechanisms of Influence: How ChatGPT Changes Cognitive Processes and Work Practices

Beyond direct productivity metrics lies a more fundamental question: how does using ChatGPT change the ways we think, solve problems, and organize work? Understanding these mechanisms is critically important for assessing the long-term consequences of the technology.

🧬 Cognitive Offloading versus Skill Atrophy: Where the Boundary Lies

Using ChatGPT for routine tasks frees up cognitive resources for more complex problems—this is the classic cognitive offloading effect, analogous to using a calculator for arithmetic. However, there's a risk of atrophying basic skills that serve as the foundation for higher-level expertise.

A programmer who has never written loops manually may not understand the nuances of algorithmic complexity. A writer who relies on AI to structure arguments may not develop critical thinking skills.

- For experts who already possess deep understanding, cognitive offloading of routine tasks increases productivity without loss of quality.

- For novices, premature offloading prevents the formation of mental models necessary for expertise.

- Critical point: a skill must be automated through practice before it can be delegated to a tool.

This creates a pedagogical dilemma: a system that accelerates the work of experienced professionals may slow the development of novices. (S001) shows that organizations that implemented ChatGPT without rethinking training faced a paradox: productivity increased, but the quality of new employees' decisions declined.

🔄 Responsibility Shift and the Illusion of Competence

When AI generates an answer, users often shift into verification mode instead of creation mode. This is a fundamental change in cognitive stance.

Verification requires fewer mental resources than generation and creates an illusion of understanding. A person sees plausible text, agrees with it, and assumes they understand the problem. In reality, they've only validated a superficial match with their expectations.

| Mode | Cognitive Load | Error Risk | Long-term Effect |
|---|---|---|---|
| Creation (without AI) | High | Visible errors | Expertise development |
| Verification (with AI) | Low | Hidden errors | Illusion of competence |

(S003) notes that students who use ChatGPT to write essays often cannot explain their own arguments. They went through the text, but not through the thinking.

⚙️ Transformation of Work Practices: From Mastery to Flow Management

In professions where ChatGPT becomes a standard tool, there's a shift in the definition of competence. Instead of the ability to write code or text, what's valued is the ability to formulate queries, interpret results, and integrate them into a larger context.

This isn't inherently bad; it's a redefinition of skill. But it raises a new verification problem: how do you ensure that a person truly understands the subject domain if their primary work is managing AI?

The danger isn't that AI will replace experts, but that expertise will shift from the subject domain to tool management—and no one will notice when the substitution occurs.

(S007) documents that this transformation has already occurred in HR practices: a recruiter now spends time optimizing prompts instead of developing intuition about candidates. Productivity increased, but depth of judgment declined.

🎯 Social Dynamics: From Individual Mastery to Collective Dependence

When ChatGPT becomes the standard, not using it begins to seem irrational. This creates a social effect similar to network effects: the tool's value grows with the number of users, but simultaneously pressure grows on those who want to remain independent.

Organizations where everyone uses ChatGPT begin to structure work around this tool. Those who refuse become outsiders. This isn't a conspiracy—it's the natural dynamics of adapting to a new standard.

- Network Effect

- The tool's value grows with the number of users, but creates pressure on the minority that doesn't use it.

- Path Dependence

- An organization that has invested in ChatGPT-oriented processes cannot easily return to alternatives, even if they prove better.

- Loss of Alternatives

- When one tool dominates, incentives to develop competing approaches disappear—and with them disappears insurance against its failure.

(S004) shows that students who start using ChatGPT rarely return to traditional methods, even when it would be more beneficial. This isn't laziness—it's a rational choice under social pressure.

Long-term risk: if the entire ecosystem of education and work is optimized for ChatGPT, then any disruption in its availability or quality will create a systemic crisis, not a local inconvenience.