Artificial intelligence in medicine has become the subject of mass hype, from cancer diagnostics to personalized therapy. But behind the bold headlines lies a complex reality: most systems work only under narrow conditions, the evidence is contradictory, and regulatory barriers are high. This article dissects the mechanism of medical AI hype, assesses the actual level of evidence behind these technologies, and provides a protocol for verifying claims of a "healthcare revolution."

Every week a new startup emerges promising a "diagnostic revolution" or "personalized medicine of the future." Investors pour in billions, media amplify headlines about "breakthroughs," and patients wait for miracles. But between the marketing narrative and clinical reality lies a chasm that few attempt to measure. This article is not a manifesto against technology, but a navigation guide for a world where every promise requires verification and every number needs context. We'll dissect the hype mechanism, show where science ends and speculation begins, and give you a protocol that works regardless of how convincing the pitch sounds.

What Exactly They Promise: Anatomy of Medical AI Claims and the Boundaries of Their Applicability

The first problem begins with definitions. The term "artificial intelligence in medicine" is used so broadly that it has lost specificity: it encompasses simple image classification algorithms, complex clinical decision support systems, and hypothetical AGI capable of replacing physicians.

When a startup claims a "revolution," it's critically important to understand exactly what class of systems is being discussed—and under what conditions they operate.

Three Categories of Medical AI Systems

- Narrow Classifiers: solve a single task under strictly controlled conditions, such as detecting diabetic retinopathy in fundus photographs or identifying pneumonia on chest X-rays. They are trained on large datasets, but their applicability is limited by input data quality and by the training population (S001).

- Clinical Decision Support Systems (CDSS): integrate into clinical workflows and offer recommendations based on electronic medical records, laboratory data, and the literature. They depend on how well the data are structured, how current the protocols are, and the physician's ability to critically evaluate recommendations (S004).

- Integrated Platforms: promise to combine diagnostics, prediction, and therapy personalization. This is where hype is greatest and the evidence base thinnest: most are still at the pilot stage (S002).

Applicability Boundaries: Laboratory vs Clinic

The key error is ignoring the gap between laboratory validation and clinical practice. A system may show 95% accuracy on a test dataset but fail in a real hospital due to differences in equipment, imaging protocols, or patient demographics.

This phenomenon, known as dataset shift, is systematically underestimated in marketing materials.
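The mechanism can be made concrete with a toy Python simulation using invented numbers. A one-feature threshold classifier is fitted at a hypothetical site A and then applied unchanged at a site B whose scanner adds a constant offset to every measurement. The helper names, prevalence, and offset are illustrative assumptions, not a model of any real system.

```python
import random

def simulate_site(n, prevalence, intensity_offset, rng):
    """Return (score, label) pairs for a hypothetical imaging site.

    The score stands in for a single image-derived feature; diseased cases
    have a higher mean. intensity_offset models a scanner or protocol
    difference that shifts every measurement taken at this site.
    """
    cases = []
    for _ in range(n):
        label = 1 if rng.random() < prevalence else 0
        mean = 2.0 if label else 0.0
        cases.append((rng.gauss(mean, 1.0) + intensity_offset, label))
    return cases

def fit_threshold(cases):
    """Nearest-class-mean rule: cut halfway between the two class means."""
    pos = [x for x, y in cases if y == 1]
    neg = [x for x, y in cases if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def accuracy(cases, threshold):
    """Share of cases where 'score above threshold' matches the label."""
    return sum((x > threshold) == (y == 1) for x, y in cases) / len(cases)

rng = random.Random(0)
threshold = fit_threshold(simulate_site(5000, 0.3, 0.0, rng))          # trained at site A
internal_acc = accuracy(simulate_site(5000, 0.3, 0.0, rng), threshold)  # site A again
external_acc = accuracy(simulate_site(5000, 0.3, 1.5, rng), threshold)  # site B, new scanner
```

Because the offset shifts every case equally, the ranking of patients is untouched; it is the fixed decision threshold, tuned at site A, that no longer fits site B. Under these assumptions accuracy falls from roughly 84% to roughly 51%.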

Most studies are conducted retrospectively: the algorithm analyzes already collected data where diagnoses are known. In prospective studies, where the system operates in real-time, results are often more modest. The transition from retrospective validation to prospective implementation reduces performance metrics by an average of 15–30% (S001).

Regulatory Barriers and Their Limitations

| Evaluation Criterion | What Regulators Check | What It Does NOT Guarantee |
|---|---|---|
| Safety | Absence of harm during use | Improved patient outcomes |
| Analytical Validity | Correct data processing | Clinical utility in real-world conditions |
| Scope of Application | Narrow scenario (e.g., retinopathy screening) | Extrapolation to broader applications |

Obtaining regulatory approval (FDA in the US, CE Mark in Europe) is an important but insufficient criterion. Regulators assess safety and analytical validity, but don't always require evidence of clinical utility—improved patient outcomes (S004).

Approval is often granted for narrow applications, but marketing extrapolates it to broader scenarios. An algorithm approved for diabetic retinopathy screening in type 2 diabetes patients may be promoted as a "universal eye disease diagnostic system"—which exceeds the validated application scope.

Steel Man Version of the Argument: Five Strongest Cases for the Revolutionary Potential of Medical AI

Before examining weaknesses, we must honestly present the strongest arguments from medical AI proponents. This is not a straw man, but a steel man version of the position: if we cannot refute the best arguments, criticism is meaningless.

Argument 1: Superiority in Narrow Pattern Recognition Tasks Is Already Proven

In strictly defined visual diagnostic tasks, AI systems genuinely achieve or exceed expert-level performance. Algorithms for detecting diabetic retinopathy, melanoma in dermatoscopic images, and certain types of lung cancer on CT scans demonstrate sensitivity and specificity comparable to experienced specialists (S001).

In conditions of specialist shortage (especially in developing countries and rural regions), even a system with 85–90% accuracy can be clinically useful if the alternative is no diagnosis at all. The "imperfection" argument loses force when the comparison is not with an ideal physician, but with the real availability of medical care.

- Validation studies, including a small number of randomized controlled trials, report equivalence or superiority in narrow tasks

- 85–90% accuracy is clinically useful when no alternative exists

- Scaling in regions with specialist shortages addresses accessibility, not quality

Argument 2: Ability to Process Multimodal Data Opens New Diagnostic Possibilities

Human physicians are limited in their ability to simultaneously analyze dozens of data sources: genomic profiles, proteomics, medical history, imaging, laboratory values, and literature. AI systems can integrate these heterogeneous data and identify patterns inaccessible to traditional analysis (S002), (S006).

Systems analyzing combinations of genetic markers and imaging data can potentially predict therapy response more accurately than each data source individually. This is not physician replacement, but expansion of cognitive capabilities—an argument for "intelligence augmentation" rather than substitution.

Argument 3: Scalability and Standardization Reduce Variability in Healthcare Quality

Healthcare quality varies significantly depending on physician experience, fatigue, cognitive biases, and access to current information. AI systems, once validated, provide stable quality regardless of time of day, workload, or geography (S004).

This argument is particularly strong in the context of rare diseases: a general practitioner may encounter a specific pathology once in a career, while an algorithm trained on thousands of cases maintains expertise. Standardization through AI is a mechanism for disseminating best practices.


Argument 4: Economic Efficiency of Screening Programs Can Increase Radically

Mass screening programs (breast cancer, colorectal cancer, diabetic retinopathy) require enormous resources for image analysis, most of which contain no pathology. AI systems can perform initial triage, directing only suspicious cases for expert evaluation, reducing specialist burden and program costs (S005).

Systematic reviews of screening programs show that implementing AI triage can reduce cases requiring expert evaluation by 50–70% while maintaining sensitivity above 95%. If these figures are confirmed in prospective studies, the economic argument becomes irrefutable.
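The trade-off inside that claim can be sketched in code. Assuming hypothetical score distributions (pathology cases scoring about two standard deviations higher than normal images, 5% prevalence), the sketch picks the triage cut-off that keeps sensitivity at or above 95% on a tuning set, then measures how many images an expert would still read on a fresh set. All function names and numbers are illustrative.

```python
import math
import random

def simulate_screening(n, prevalence, rng):
    """Hypothetical AI risk scores: pathology cases score higher on average."""
    cases = []
    for _ in range(n):
        label = 1 if rng.random() < prevalence else 0
        cases.append((rng.gauss(2.0 if label else 0.0, 1.0), label))
    return cases

def threshold_for_sensitivity(cases, target):
    """Highest cut-off that still refers at least `target` of the positives."""
    pos_scores = sorted((s for s, y in cases if y == 1), reverse=True)
    k = math.ceil(target * len(pos_scores))  # positives that must be referred
    return pos_scores[k - 1]

def triage_stats(cases, threshold):
    """Return (sensitivity, share of all images sent to an expert)."""
    referred = [y for s, y in cases if s >= threshold]
    positives = sum(y for _, y in cases)
    return sum(referred) / positives, len(referred) / len(cases)

rng = random.Random(42)
tuning = simulate_screening(40000, 0.05, rng)
cutoff = threshold_for_sensitivity(tuning, 0.95)
sensitivity, referral_rate = triage_stats(simulate_screening(40000, 0.05, rng), cutoff)
workload_reduction = 1 - referral_rate
```

Under these assumptions roughly 60% of images skip expert review. The figure depends entirely on how well the scores separate the two classes, which is exactly what a prospective study has to establish.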

Argument 5: Continuous Learning Allows Systems to Adapt to New Data Faster Than Clinical Protocols Update

Medical knowledge updates faster than educational programs and clinical guidelines can change. AI systems using continuous learning mechanisms can theoretically integrate new data from literature and clinical practice in real time, ensuring recommendation currency (S004).

This argument is especially relevant in rapidly evolving fields such as oncology and infectious diseases, where new drugs and protocols emerge monthly. However, this is also where the main danger lies: continuous learning without strict control can lead to error accumulation and model drift.

- Continuous learning: real-time integration of new data. Advantage: recommendations stay current. Risk: model drift and error accumulation without oversight.

- Clinical protocols: updated over years. Advantage: conservatism and verification. Disadvantage: they lag behind new data.
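One form of the oversight that continuous learning needs is ongoing performance monitoring against adjudicated outcomes. A minimal sketch, assuming confirmed diagnoses eventually arrive for each case; the class name, the 200-case window, and the 90% floor are hypothetical choices, not a regulatory standard.

```python
from collections import deque

class SensitivityMonitor:
    """Rolling sensitivity over the most recent adjudicated positive cases."""

    def __init__(self, window=200, floor=0.90):
        self.hits = deque(maxlen=window)  # 1 = model flagged a confirmed case
        self.floor = floor

    def record(self, predicted_positive, truly_positive):
        """Log one case once ground truth is known; negatives are ignored here."""
        if truly_positive:
            self.hits.append(1 if predicted_positive else 0)

    def sensitivity(self):
        return sum(self.hits) / len(self.hits) if self.hits else None

    def alert(self):
        """True once the window is full and sensitivity has fallen below the floor."""
        return len(self.hits) == self.hits.maxlen and self.sensitivity() < self.floor

monitor = SensitivityMonitor(window=200, floor=0.90)
for i in range(200):                 # stable period: 190 of 200 cases caught
    monitor.record(i % 20 != 0, True)
alert_before = monitor.alert()
for i in range(200):                 # degraded period: 160 of 200 cases caught
    monitor.record(i % 5 != 0, True)
alert_after = monitor.alert()
```

A monitor like this doesn't fix drift; it only makes a silent decline visible, so that people decide whether to retrain, revalidate, or withdraw the model.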

Evidence Base Under the Microscope: What Systematic Reviews and Meta-Analyses Say About Real-World Effectiveness

Having presented the strongest arguments, let's move to a critical analysis of the evidence base.

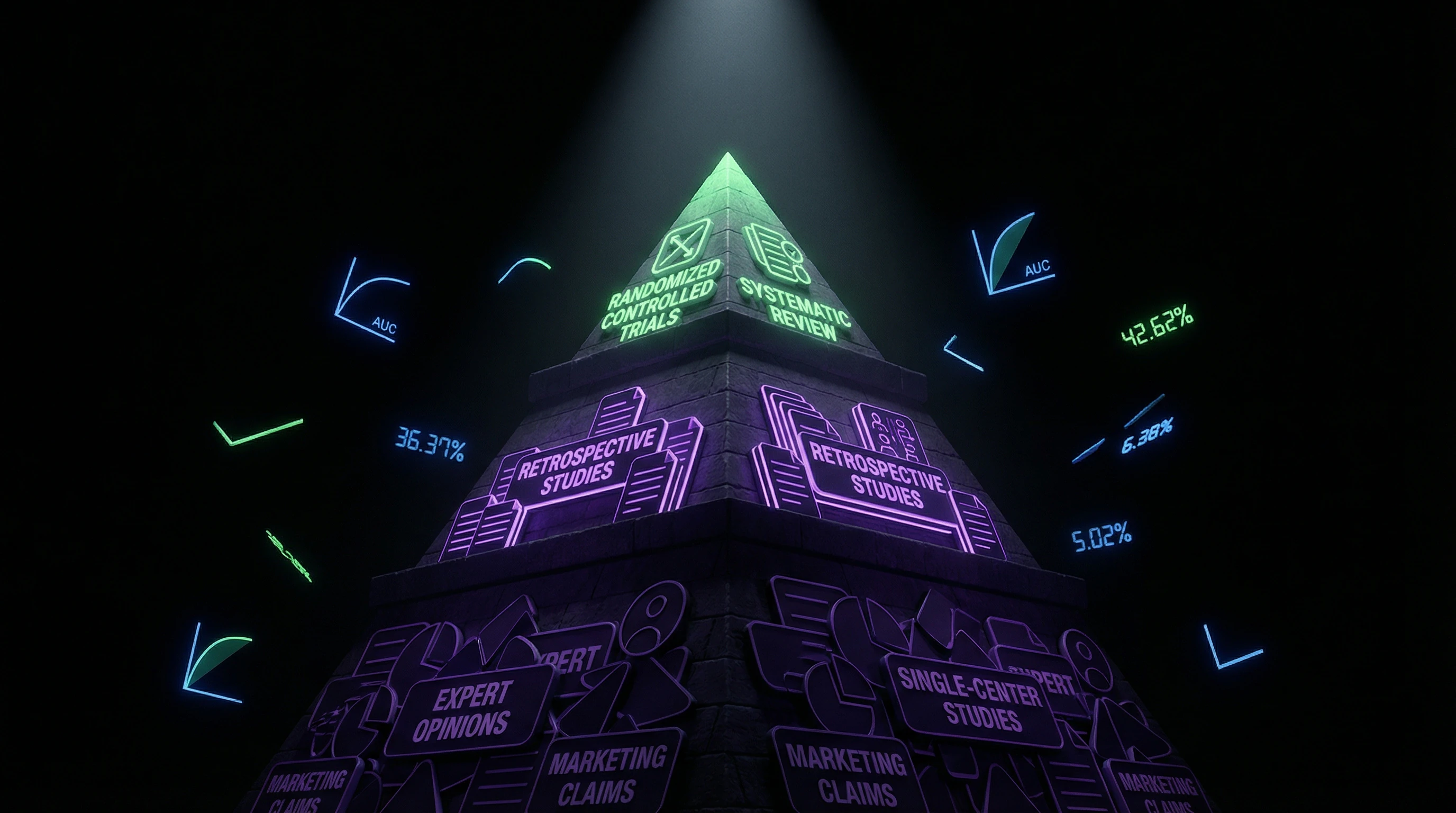

Research Quality: Predominance of Retrospective Single-Center Studies Over Prospective RCTs

A systematic review of medical AI research reveals a critical problem: the vast majority of publications are retrospective studies using data from a single medical center. Such studies carry a high risk of overfitting and don't allow assessment of result generalizability (S001).

Prospective RCTs, where an AI system is implemented in real practice and its impact on clinical outcomes (mortality, quality of life, complication rates) is measured, are critically scarce. A review of screening programs shows that fewer than 15% of medical AI studies meet high methodological quality criteria (S001). This doesn't mean the technologies don't work, but it does mean the level of evidence is lower than for most pharmaceutical drugs.

High accuracy on a test dataset from one center is not proof of effectiveness. It's proof that the algorithm memorized that specific data well.

Publication Bias Problem: Negative Results Stay in Desk Drawers

As in other areas of medicine, medical AI research suffers from publication bias: studies with positive results are published more often than those with negative or null results. This distorts perceptions of technology effectiveness (S004).

Commercial developers often publish only the most impressive results, remaining silent about failed implementation attempts or system limitations. The absence of mandatory registration for medical AI studies (unlike clinical trials for drugs) exacerbates the problem.

- Study with positive result: published in a journal, cited in press releases.

- Study with null result: remains in archives, doesn't influence technology perception.

- Result: biased picture of effectiveness in scientific literature and media.
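A short simulation shows how the publication filter alone manufactures an effect. It assumes (hypothetically) 2,000 small studies of an AI tool whose true benefit is zero, each with a standard error of 0.05, and a literature that publishes only results significantly above zero.

```python
import random
import statistics

SE = 0.05  # assumed standard error of each small study's estimate

def run_studies(n_studies, true_effect, rng):
    """Each hypothetical study reports the true effect plus sampling noise."""
    return [rng.gauss(true_effect, SE) for _ in range(n_studies)]

def publish(estimates, z_crit=1.96):
    """Assumed filter: only significantly positive results reach a journal."""
    return [e for e in estimates if e / SE > z_crit]

rng = random.Random(7)
all_results = run_studies(2000, true_effect=0.0, rng=rng)
published = publish(all_results)
print(f"mean of all studies:       {statistics.mean(all_results):+.3f}")
print(f"mean of published studies: {statistics.mean(published):+.3f}")
```

Every simulated study is honest; the bias comes entirely from which ones are seen. Under these assumptions the published average always exceeds about 0.1 even though the true effect is zero, the kind of distortion that mandatory study registration is meant to expose.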

Metric Heterogeneity: Why High Accuracy Doesn't Always Mean Clinical Utility

Medical AI studies use heterogeneous evaluation metrics: accuracy, sensitivity, specificity, area under the ROC curve (AUC), F1-score. But none of these metrics directly measures what matters to patients: improved outcomes (S001).

A system can have an AUC of 0.95 (an excellent value), but if its implementation doesn't change treatment tactics or improve prognosis, its clinical utility is zero. Systematic reviews show that the correlation between analytical metrics and clinical outcomes is weak and unpredictable (S001).

| Metric | What It Measures | Link to Clinical Outcome |
|---|---|---|
| Accuracy | Proportion of correct predictions | Weak: depends on class distribution |
| Sensitivity | Proportion of true cases detected | Moderate: important for screening, but doesn't guarantee improvement |
| AUC (area under the ROC curve) | Ability to distinguish classes | Weak: doesn't account for decision thresholds or the clinical costs of errors |
| Mortality, quality of life | Real outcomes for patients | Strong, but rarely measured in AI studies |
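The first row of the table is easy to demonstrate. A sketch with hypothetical counts: at 1% prevalence, a "model" that never flags anyone beats a genuinely useful screen on accuracy.

```python
def metrics(tp, fp, fn, tn):
    """Standard confusion-matrix metrics; PPV is None when nothing is flagged."""
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp) if tp + fp else None,
    }

# Hypothetical screening of 10,000 people with 1% prevalence (100 diseased).
always_negative = metrics(tp=0, fp=0, fn=100, tn=9900)   # never flags anyone
useful_screen = metrics(tp=90, fp=990, fn=10, tn=8910)   # 90% sensitive and specific
```

The trivial model scores 99% accuracy with 0% sensitivity; the useful screen scores 90% on accuracy, sensitivity, and specificity, yet only about 8% of its positive flags are true cases. None of these numbers says whether patients end up better off.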

External Validation: Why Algorithms Fail When Tested on Independent Datasets

The gold standard for evaluating medical AI is external validation: testing on data from other medical centers, collected independently from the training set. Systematic reviews show that with external validation, algorithm performance drops by an average of 10–25% compared to internal validation (S001).

Reasons vary: differences in equipment (different MRI, CT, X-ray machine models), imaging protocols, patient demographics, disease prevalence. An algorithm trained on data from a U.S. university hospital may show low accuracy in a district hospital in India—not due to technical flaws, but because of fundamental differences in populations and conditions (S002), (S006).
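The 10–25% figure is a relative drop between two validation runs. A toy sketch of how such a drop is computed, assuming (hypothetically) that the external site's equipment yields a weaker disease signal; AUC is estimated with the pairwise Mann-Whitney formula on internal and external score samples.

```python
import random

def auc(pos_scores, neg_scores):
    """Mann-Whitney estimate of AUC: P(random positive outscores random negative)."""
    wins = 0.0
    for pos in pos_scores:
        for neg in neg_scores:
            wins += 1.0 if pos > neg else 0.5 if pos == neg else 0.0
    return wins / (len(pos_scores) * len(neg_scores))

def scores(n, mean, rng):
    """Hypothetical model outputs for n cases from one class."""
    return [rng.gauss(mean, 1.0) for _ in range(n)]

rng = random.Random(1)
# Hypothetical: positives separate less from negatives at the external site.
internal_auc = auc(scores(600, 2.0, rng), scores(600, 0.0, rng))
external_auc = auc(scores(600, 1.0, rng), scores(600, 0.0, rng))
relative_drop = (internal_auc - external_auc) / internal_auc
```

Under these assumptions the relative drop comes out at roughly 17%. Real drops depend on how different the external population and equipment are, which no internal test set can reveal.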

Overfitting isn't a developer error. It's a natural consequence of algorithms seeking patterns in specific data. The problem is that these patterns often don't transfer to new data.

Clinical Workflow Integration: Why a Technically Working System May Not Be Used by Physicians

Even a validated system can fail at the implementation stage if it doesn't integrate into the existing clinical process. Studies show that physicians ignore AI system recommendations in 30–50% of cases if the system requires additional actions, slows down work, or provides recommendations without explanations (S004).

The "black box" problem is particularly acute: if a system can't explain why it suggests a particular diagnosis or tactic, physicians don't trust it. Trust in a tool depends not only on its accuracy but also on the transparency of its decision-making mechanism (S003). This isn't physician irrationality, but rational caution under conditions of legal liability.

- Clinical workflow: the sequence of physician actions during diagnosis and treatment. An AI system must fit into this process rather than require its redesign.

- Explainability: a system's ability to justify its decision. Without it, a physician can't verify the logic or take responsibility for the result.

- Legal liability: if the system makes an error, the physician answers to the patient and the court. The physician therefore needs to understand and control every decision.

Mechanism or Correlation: Why AI Finds Patterns But Doesn't Understand Causal Relationships

A fundamental limitation of modern medical AI systems is that they're optimized to find correlations, not to understand causal mechanisms. This creates risks of false discoveries and fragile predictions.

The Confounder Problem: When Algorithms Learn the Wrong Thing

Classic example: an algorithm trained to detect pneumonia on chest X-rays may actually learn to recognize portable X-ray machines (which are more often used for severely ill patients) instead of the pneumonia itself.

This is a confounder—a hidden variable that correlates with the target feature. The problem is compounded by the fact that deep neural networks find patterns invisible to humans—but this doesn't guarantee the patterns are clinically meaningful.

An algorithm can achieve high accuracy by using data artifacts (image labels, file compression characteristics, equipment features) rather than biological disease markers. This isn't a model error—it's an error in understanding what the model actually learned.
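The portable-machine example can be reproduced with a toy simulation. All probabilities are invented: at the training hospital, 90% of pneumonia patients (and 10% of the others) are imaged on a portable machine, while a weaker but genuine lung-signal marker is available for everyone. At a second hospital, machine choice no longer tracks diagnosis.

```python
import random

def make_hospital(n, p_portable_if_sick, p_portable_if_well, rng):
    """Hypothetical X-ray records: (portable_machine, lung_signal, pneumonia)."""
    rows = []
    for _ in range(n):
        sick = rng.random() < 0.5
        portable = rng.random() < (p_portable_if_sick if sick else p_portable_if_well)
        lung_signal = rng.gauss(1.0 if sick else 0.0, 0.6)  # noisy biological marker
        rows.append((portable, lung_signal, sick))
    return rows

def shortcut_model(row):
    """Predicts pneumonia from the machine type alone (the confounder)."""
    return row[0]

def signal_model(row):
    """Predicts pneumonia from the lung-signal marker."""
    return row[1] > 0.5

def accuracy(rows, model):
    return sum(model(r) == r[2] for r in rows) / len(rows)

rng = random.Random(5)
train_site = make_hospital(5000, 0.9, 0.1, rng)  # machine type tracks diagnosis
new_site = make_hospital(5000, 0.5, 0.5, rng)    # machine type is uninformative
```

The shortcut model looks better at home (about 90% versus about 80% accuracy) and collapses to a coin flip elsewhere, while the signal-based model holds steady. Nothing in the training-site metrics warns of this.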

Absence of Causal Models: Why Correlation Doesn't Predict Intervention Effects

Medical decisions require causal thinking: "If I prescribe this treatment, what will happen?" But most AI systems are trained on observational data, which doesn't allow causal inference (S004).

A system can predict that a patient has a high probability of death, but cannot say whether a specific intervention will change that outcome. This distinction between prediction and action is critical for clinical practice.

- Prediction (correlation): "This patient has a high risk of death." Based on patterns in the data, but it doesn't explain the cause.

- Causal knowledge (mechanism): "If drug X is prescribed, risk will decrease by Y%." This requires understanding the biological mechanism and verification through randomized trials (S004).

- Why this is critical: a physician must choose between multiple interventions. Prediction without mechanism leaves them without a tool for making that choice.
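Confounding by indication shows why prediction and intervention effects come apart. In this hypothetical cohort (all counts and risks invented), sicker patients are far more likely to receive a drug that lowers death risk in every severity group. A model learning from crude associations would conclude the drug is dangerous; standardizing by severity recovers the benefit.

```python
# Hypothetical cohort, stratified by illness severity:
# stratum -> (n_treated, n_untreated, death_risk_if_treated, death_risk_if_untreated)
COHORT = {
    "mild": (100, 900, 0.03, 0.05),
    "severe": (900, 100, 0.30, 0.40),
}

def crude_mortality(cohort):
    """Death rates by arm, ignoring severity: what a pattern-finder sees."""
    deaths_t = sum(nt * rt for nt, _, rt, _ in cohort.values())
    deaths_u = sum(nu * ru for _, nu, _, ru in cohort.values())
    n_t = sum(nt for nt, _, _, _ in cohort.values())
    n_u = sum(nu for _, nu, _, _ in cohort.values())
    return deaths_t / n_t, deaths_u / n_u

def standardized_mortality(cohort):
    """Apply each arm's stratum-specific risk to the whole cohort's severity mix."""
    total = sum(nt + nu for nt, nu, _, _ in cohort.values())
    treated = sum((nt + nu) * rt for nt, nu, rt, _ in cohort.values()) / total
    untreated = sum((nt + nu) * ru for nt, nu, _, ru in cohort.values()) / total
    return treated, untreated

crude_t, crude_u = crude_mortality(COHORT)     # 27.3% vs 8.5%: drug looks harmful
std_t, std_u = standardized_mortality(COHORT)  # 16.5% vs 22.5%: drug helps
```

The adjustment works here only because severity is measured and is, by construction, the sole confounder. Real observational records rarely guarantee that, which is why the causal claim ultimately needs randomized trials.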

Epistemological analysis of clinical medicine emphasizes that knowledge of disease mechanisms is critically important for choosing therapy. AI systems operating as "black boxes" don't provide this knowledge—they give predictions without explanations, which limits their applicability in complex clinical scenarios (S003).

Data Drift: Why Models Become Obsolete Faster Than We Think

Medical practice constantly changes: new drugs appear, protocols evolve, pathogens mutate. A model trained on 2020 data may be inaccurate in 2026—not due to technical problems, but because reality itself has changed.

| Drift Factor | Example | Consequence for Model |
|---|---|---|
| Pathogen evolution | New COVID-19 variants, antibiotic resistance | Model trained on old strains loses accuracy |
| Treatment protocol changes | Transition to new therapy standard | Distribution of outcomes in data shifts |
| Demographic shifts | Population aging, migration | Patient characteristics differ from training sample |

Machine learning models require regular retraining to maintain accuracy, but in medicine this is more complex: each model update requires revalidation and regulatory approval (S001). This creates a paradox: systems must adapt, but the adaptation process is slow and expensive.

The result: an AI system that was accurate at launch may become unreliable after several years, not because the algorithm broke, but because the world changed. This requires constant monitoring and retraining—costs that are often underestimated when planning implementation.
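Input drift such as an aging population can be watched for before outcome data arrive. One simple statistic is the Population Stability Index (PSI), borrowed here from credit-risk practice; its often-quoted reading (below 0.1 stable, above 0.25 a major shift) is an industry rule of thumb, not a clinical standard. The patient ages below are simulated and hypothetical.

```python
import bisect
import math
import random

def psi(baseline, current, n_bins=10, eps=1e-6):
    """Population Stability Index of `current` relative to `baseline`.

    Bins are the baseline's deciles; eps keeps empty bins from producing log(0).
    """
    ref = sorted(baseline)
    edges = [ref[len(ref) * k // n_bins] for k in range(1, n_bins)]

    def shares(sample):
        counts = [0] * n_bins
        for x in sample:
            counts[bisect.bisect_right(edges, x)] += 1
        return [max(c / len(sample), eps) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))

rng = random.Random(3)
ages_training = [rng.gauss(55, 12) for _ in range(5000)]  # hypothetical training cohort
ages_same = [rng.gauss(55, 12) for _ in range(5000)]      # no demographic change
ages_older = [rng.gauss(62, 12) for _ in range(5000)]     # population has aged
```

In this setup the aged cohort's PSI lands around 0.3 while the unchanged cohort's stays near zero. A high PSI says only that the inputs moved; whether accuracy moved with them still has to be checked against outcomes, and any retraining it triggers brings the revalidation burden described above.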

Conflicts and Uncertainties: Where Sources Diverge and Why There's No Consensus

Literature analysis reveals several areas where data conflicts and expert opinions diverge. This isn't a sign of scientific weakness, but an indicator of the problem's complexity.

The Substitution Debate: Intelligence Augmentation vs. Automation

One central conflict is whether AI systems will augment physicians' capabilities or replace them entirely. Optimists argue that AI will free doctors from routine tasks, allowing them to focus on complex cases and patient communication.

Skeptics point out that economic pressure will drive medical staff reductions, lowering care quality (S007). Systematic analysis of AI's impact on employment shows that in other sectors, automation often leads to polarization: highly skilled specialists benefit, while mid-level workers lose ground.

Whether this applies to medicine remains an open question, dependent on regulatory decisions and healthcare economic models.

Uncertainty in Economic Efficiency Assessment: Who Pays, Who Wins?

Claims about reducing healthcare costs through AI often ignore full expenses: development, validation, implementation, staff training, infrastructure support. AI triage's economic efficiency heavily depends on context: in countries with physician shortages, the benefit is higher; in countries with surplus diagnosticians, lower.

Moreover, benefits are distributed unevenly: software manufacturers and large hospitals profit, while outpatient clinics and rural centers may lack access (S001).

- Total cost of ownership (TCO) includes not just licenses, but integration, validation on local data, and staff retraining.

- ROI depends on patient volume and facility type: large centers recoup investments faster.

- Access equity remains unresolved: AI may deepen healthcare inequality.
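The TCO and ROI points reduce to simple arithmetic. A sketch with purely hypothetical figures: one-off integration and local-validation costs plus yearly license and training fees over five years, set against an assumed saving per study read. The function names and all amounts are illustrative.

```python
def total_cost_of_ownership(license_per_year, integration, local_validation,
                            training_per_year, years):
    """One-off costs plus recurring costs over the planning horizon."""
    one_off = integration + local_validation
    recurring = years * (license_per_year + training_per_year)
    return one_off + recurring

def break_even_volume(tco, years, saving_per_study):
    """Studies per year needed for reader-time savings to cover the TCO."""
    return tco / (years * saving_per_study)

# All figures are hypothetical, in arbitrary currency units.
tco = total_cost_of_ownership(license_per_year=50_000, integration=120_000,
                              local_validation=80_000, training_per_year=20_000,
                              years=5)
needed = break_even_volume(tco, years=5, saving_per_study=4)
for site, volume in {"regional center": 60_000, "rural clinic": 8_000}.items():
    status = "recoups" if volume >= needed else "does not recoup"
    print(f"{site}: {volume} studies/year, {status} the investment")
```

With these made-up inputs the break-even point is 27,500 studies a year: the high-volume center clears it and the rural clinic does not, even though both pay the same fixed costs.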

Black Box vs. Transparency: When Explainability Conflicts with Accuracy

Deep neural networks often show better accuracy but explain their decisions poorly. Physicians and regulators demand transparency: why does the system recommend this specific diagnosis? But adding interpretability can reduce accuracy (S003).

This creates a dilemma: a highly accurate black box or a less accurate but explainable system? Different countries and institutions choose differently, complicating standardization.

| Parameter | Black Box (deep learning) | Interpretable Model |
|---|---|---|
| Accuracy | Often higher | Often lower |
| Explainability | Low | High |
| Regulatory approval | More difficult | Easier |
| Physician trust | Lower | Higher |
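What the interpretable column buys can be shown with a small logistic score whose every term is visible. The features, weights, and intercept below are invented for illustration and are not a validated clinical score; the point is the shape of the output, not the numbers.

```python
import math

# Hypothetical log-odds weights, chosen for illustration only.
INTERCEPT = -3.0
WEIGHTS = {"age_over_65": 0.8, "prior_admissions": 0.5, "abnormal_lactate": 1.2}

def predict_with_explanation(patient):
    """Logistic risk plus each feature's additive contribution to the log-odds."""
    contributions = {name: w * patient.get(name, 0) for name, w in WEIGHTS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    risk = 1 / (1 + math.exp(-log_odds))
    return risk, contributions

risk, why = predict_with_explanation(
    {"age_over_65": 1, "prior_admissions": 2, "abnormal_lactate": 1}
)
for name, value in sorted(why.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:>18}: {value:+.1f} log-odds")
print(f"estimated risk: {risk:.2f}")
```

A physician can check each line against the chart and dispute a specific term. A deep network offers no such built-in decomposition, which is the explainability gap the table describes.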

Generalization and Context: Does AI Work Beyond Training Data?

A system trained on U.S. hospital data may perform poorly in Europe or Asia due to differences in population, equipment, and protocols. This isn't a bug, but a fundamental machine learning problem (S002).

Some researchers argue that local validation solves the problem. Others point out this requires significant resources and slows deployment. There's no consensus: validation standards differ between countries and regulators.
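Part of why local validation is costly is statistical: a sensitivity estimate is only as precise as the number of confirmed positive cases behind it. A sketch using the Wilson score interval, a standard 95% interval for a proportion; the counts are hypothetical.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion such as sensitivity."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

# Same observed sensitivity (95%), very different local sample sizes.
small = wilson_interval(19, 20)     # 20 confirmed positive cases
large = wilson_interval(380, 400)   # 400 confirmed positive cases
```

Nineteen of twenty looks like 95%, but the interval runs from roughly 76% to 99%. Narrowing it to a few percentage points takes hundreds of confirmed positives, each of which must be found and adjudicated at the local site.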

The paradox: the more specialized the system, the higher its accuracy in narrow contexts, but the lower its universality and scalability.

⚖️ Liability and Regulation: Who Bears the Risk?

If an AI system makes an error, who's at fault: the developer, the hospital, the physician who used it? Different countries' legislation provides different answers (S004). In the U.S., the focus is on the manufacturer; in the EU, on the user; in other countries, on the state.

This uncertainty freezes investment and slows adoption. Startups fear lawsuits, hospitals fear liability, physicians fear license loss. Result: AI remains in pilot projects, not transitioning to routine practice.

- Liability model (U.S.): the manufacturer bears primary responsibility for software quality and validation. The physician is responsible for choosing to use the system and for interpreting its results.

- Liability model (EU): the user (hospital or physician) bears responsibility for implementation and monitoring. The manufacturer is responsible for disclosing limitations.

- Practical outcome: differing standards stall global deployment and fragment the market.

Why There's No Consensus and Why That's Normal

Medical AI sits at the intersection of technology, economics, ethics, and politics. Each stakeholder sees the problem differently: manufacturers as opportunity, physicians as threat, patients as hope, regulators as risk.

Lack of consensus doesn't mean AI doesn't work. It means its role in medicine remains an open question, dependent on how we choose to regulate, fund, and implement it. This isn't a technical problem—it's a problem of choice.

Counter-Position Analysis

⚖️ Critical Counterpoint

The article takes a cautious position, but it may underestimate both the pace of progress and real implementation successes. Here is where its argument needs qualification.

Underestimating the Speed of Progress

The last 2–3 years have shown exponential growth in the capabilities of large language models and multimodal systems (GPT-4, Med-PaLM 2), which demonstrate a qualitatively new level of understanding medical context. Perhaps we are on the threshold of truly transformational changes, and the article's skepticism reflects outdated notions about AI capabilities.

Ignoring Successful Implementation Cases

The article focuses on problems and limitations but may underestimate real successful implementations of AI in clinical practice. Diabetic retinopathy analysis systems (IDx-DR) have received regulatory approval and are being used in real practice, showing measurable benefits. The criticism may be overly generalizing.

Methodological Bias in Sources

The sources used are not specialized reviews of medical AI—these are fragmented works on nanotechnology, epistemology, and software requirements. The absence of direct systematic reviews of AI effectiveness in medicine (for example, from Nature Medicine, Lancet Digital Health) makes the article's conclusions potentially biased. More recent and specialized sources could provide a different picture.

Underestimating Economic Pressure

The article does not account for the fact that economic factors (physician shortage, rising healthcare costs, pressure for efficiency) may accelerate AI implementation even with an incomplete evidence base. Regulators may make compromises, creating "fast-track" approval pathways for AI systems in healthcare crisis conditions. Reality may prove more pragmatic than the article assumes.

Risk of Conclusions Becoming Outdated

Medical AI is developing so rapidly that conclusions may become outdated within 6–12 months. Breakthroughs in algorithm interpretability, federated learning, or new architectures could radically change the situation. The article risks becoming an example of premature skepticism, as was the case with early criticism of deep learning in the 2000s.

FAQ
